Causes of Downtime in Production MySQL Servers

A Percona White Paper

Baron Schwartz

Abstract

Everyone wants to prevent database downtime by being proactive, but are measures such as inspecting logs and analyzing SQL really proactive? Although these are worthwhile, they almost always identify problems that already exist. To be truly proactive, one must prevent problems, which requires studying and understanding the reasons for downtime.

This paper presents the results of a study of emergency downtime issues that Percona was asked to resolve. It sheds light on what types of problems can occur in production environments. The results might be surprising. Conventional wisdom holds that bad SQL is the leading cause of downtime, but the outcome of this research was quite different.

In this paper, downtime is defined as time that the database server is not providing correct responses with acceptable performance. Downtime could be because a server has just been rebooted and is running on cold caches with increased response times, or data has been destroyed so the server is returning wrong answers, or replication is delayed, or any of a number of other circumstances besides complete unavailability.

This paper explains how we categorized the incidents, presents take-aways about downtime and its causes, and concludes with some recommendations that seem likely to help prevent downtime. Our goal is that MySQL users and support professionals can advance their understanding of what it means to be proactive, increasing the quality of service to end customers.

The sample data is biased and might not represent

the overall population of MySQL users. There might

be a high number of website emergencies compared

to the general population. Web application developers and startup companies often work in a less disciplined fashion than, for example, banks or pharmaceutical research companies. (Perconas customer

list does include those industries, and the sample includes emergency downtime issues from those types

of customers.) Therefore, this study might overrepresent mistakes made by people building applications in a hectic startup environment without

proper resources or planning. Furthermore, many

emergencies are resolved without calling Percona;

we are often called only for perplexing problems.

For example, although the data shows that SAN failures are a significant cause of storage problems, that

could be because SAN failures are difficult to diagnose.

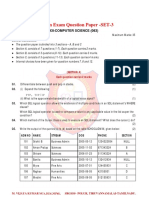

We found that downtime incidents can be grouped

into four major categories. Figure 1 shows the categories and how frequently they occurred in the sample.

Research Method

We drew sample incidents from 200 emergency issues in Perconas support database. We categorized

each incident by its nature, causes, resolution, and

measures that in our professional judgment could

have prevented the problem from happening. Our

conclusions are based on reading each issues emails, technician notes, chat transcripts, and when

appropriate, terminal session transcripts. Some isFigure 1: Categories of Downtime

sues were false-positives or did not have detailed

enough notes to categorize and codify them, so the Lets look at each of these categories in a bit more

final sample was 154 incidents.

detail.

The Operating Environment

More than a third of the emergencies were caused by problems in the database's operating environment, such as the storage system, network, or operating system. Table 1 shows the proportion of problems in each sub-category:

| Item | Incidents | % of Total |
| --- | --- | --- |
| Storage System | 24 | 44.4% |
| Network | 9 | 16.7% |
| Operating System | 8 | 14.8% |
| External Software | 5 | 9.3% |
| Init Scripts | 4 | 7.4% |
| Power Loss | 2 | 3.7% |

Table 1: Failures in the Operating Environment
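The percentages in Table 1 can be checked with a few lines of arithmetic. The sketch below assumes that the category total is the 154-incident sample minus the 53, 32, and 15 incidents reported for the other three categories, which gives 54. Under that assumption every percentage in the table is reproduced, although the rows as listed sum to 52, so two incidents in this category appear not to be itemized. The variable names are illustrative only.

```python
# Incident counts copied from Table 1.
table1 = {
    "Storage System": 24,
    "Network": 9,
    "Operating System": 8,
    "External Software": 5,
    "Init Scripts": 4,
    "Power Loss": 2,
}

# Assumption: the category total is what remains of the 154-incident sample
# after the 53, 32 and 15 incidents reported for the other three categories.
category_total = 154 - 53 - 32 - 15

# Percentage of the category total for each row, rounded as in the table.
shares = {item: round(100 * count / category_total, 1)
          for item, count in table1.items()}

# Sum of the rows that are actually itemized in the table.
listed_total = sum(table1.values())

for item, share in shares.items():
    print(f"{item}: {share}%")
print("category total:", category_total, "listed rows:", listed_total)
```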

Storage systems were the leading component that failed in the operating environment; nearly half of the incidents were due to storage failures. Of the remaining incidents, the most common was network failure, including DNS failure; MySQL is vulnerable to DNS failures in its default configuration. This was followed by operating system configuration, such as kernel bugs. The category of external software includes malfunctioning high-availability systems, connection pools, and cache servers.

There were four instances of system init scripts breaking the database in a variety of ways. For example, one system crashed and had to run recovery upon MySQL startup, but the init script would not wait long enough for recovery to complete, so the script repeatedly killed the server and started it again in a loop. Other systems started multiple instances of the MySQL server, or did not shut the server down cleanly. One control panel automatically uninstalled MySQL and reverted it to a buggy and poorly performing earlier version, but the downgrade was unclean, and the system entered a loop of crashing and restarting.
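The restart loop in the init script example comes from a startup script giving up on crash recovery too early. A minimal sketch of the alternative, waiting against a generous deadline and leaving the decision on timeout to the operator, might look like the following. The function name `wait_until_ready`, its parameters, the one-hour deadline, and the fake probe are all hypothetical; a real probe could be any check that the server accepts connections.

```python
import time
from typing import Callable


def wait_until_ready(
    is_ready: Callable[[], bool],
    timeout_s: float,
    poll_interval_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Poll is_ready() until it returns True or timeout_s elapses.

    Returns True once the server is ready and False on timeout. It never
    kills or restarts anything; that decision is left to the caller.
    """
    deadline = clock() + timeout_s
    while True:
        if is_ready():
            return True
        if clock() >= deadline:
            return False
        sleep(poll_interval_s)


# Hypothetical probe for illustration: recovery "finishes" on the 4th check.
checks = {"count": 0}


def fake_probe() -> bool:
    checks["count"] += 1
    return checks["count"] >= 4


# A deadline sized for crash recovery (an hour here, an arbitrary figure)
# rather than the few seconds a naive script might allow.
recovered = wait_until_ready(fake_probe, timeout_s=3600, poll_interval_s=0,
                             sleep=lambda seconds: None)
print(recovered, checks["count"])
```

The point of the design is the separation of concerns: the helper only reports whether the server came up in time, so a script built on it cannot fall into a kill-and-restart loop by itself.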

Storage failures are so common that they are worth drilling into. Table 2 is a breakdown of storage failure incidents.

| Item | Incidents | % of Total |
| --- | --- | --- |
| Disk Full | 9 | 37.5% |
| SAN | 5 | 20.8% |
| RAID Controller | 4 | 16.7% |
| Other Storage | 3 | 12.5% |
| Filesystem | 2 | 8.3% |
| Permissions | 1 | 4.2% |

Table 2: Storage System Failures

SAN failures tended to be harder to diagnose than RAID controller failures; in one instance a SAN control panel showed nothing wrong with the system even though it had degraded performance due to a failed disk. Disk failures themselves are not included in this table because unless multiple disks fail, which did not occur in this sample of incidents, a functioning SAN or RAID controller does not fail completely. The "Other Storage" category includes NFS servers and Amazon EBS volumes.

Performance Problems

Performance problems of various sorts caused 53 downtime incidents, nearly as many as problems in the operating environment. This is probably no surprise to readers, and neither is the leading cause of performance problems: badly optimized SQL, followed by badly designed schema. Table 3 shows the relative proportions:

| Item | Incidents | % of Total |
| --- | --- | --- |
| SQL | 20 | 37.8% |
| Schema and Indexing | 8 | 15.1% |
| InnoDB | 8 | 15.1% |
| Configuration | 7 | 13.2% |
| Idle Transactions | 4 | 7.6% |
| Other | 4 | 7.6% |
| Query Cache | 2 | 3.8% |

Table 3: Causes of Bad Performance

In most cases, the InnoDB problems were caused by old versions of MySQL, and could be solved by upgrading the server. Some of them, however, required custom patches to the server.

Most configuration problems were caused by gross user error such as complete lack of configuration, and fine-tuning the server was not a solution for any of the incidents we looked at. For example, one powerful server with dozens of gigabytes of memory was used for a large InnoDB database, but the InnoDB buffer pool was unconfigured and defaulted to 8MB. Another frequent error was failing to set the InnoDB log file size, so the server was running with the default 10MB of redo logs instead of hundreds of megabytes.

Idle transactions (transactions that had modified data and then never committed) caused problems by blocking other transactions, which timed out and failed. The query cache problems were all solved by simply disabling the query cache; it is not a scalable component of the MySQL server. The problems in the "Other" category included server bugs, running a broken server build, badly designed backups, and long-running ALTER TABLE statements.
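The two configuration mistakes described in this section, an unset InnoDB buffer pool size and an unset InnoDB log file size, are easy to detect mechanically. The sketch below is one possible check, not a complete configuration audit. It assumes the option file is supplied as text and relies on Python's `configparser`, which copes with simple option files but not with directives such as `!includedir`. The helper name `missing_innodb_settings` is hypothetical.

```python
import configparser
from typing import List

# Settings the paper names as commonly left at their small defaults.
REQUIRED_INNODB_SETTINGS = ("innodb_buffer_pool_size", "innodb_log_file_size")


def missing_innodb_settings(my_cnf_text: str) -> List[str]:
    """Return the required settings that the [mysqld] section does not set."""
    parser = configparser.ConfigParser(
        allow_no_value=True,   # options such as skip-name-resolve have no value
        strict=False,          # tolerate repeated sections and options
        interpolation=None,    # treat '%' in values literally
    )
    parser.read_string(my_cnf_text)
    if not parser.has_section("mysqld"):
        return list(REQUIRED_INNODB_SETTINGS)
    # MySQL accepts dashes and underscores interchangeably in option names.
    configured = {name.replace("-", "_") for name in parser.options("mysqld")}
    return [name for name in REQUIRED_INNODB_SETTINGS if name not in configured]


# Illustrative option file: the buffer pool is set, the redo log size is not.
example_cnf = """
[mysqld]
datadir = /var/lib/mysql
innodb-buffer-pool-size = 16G
"""

print(missing_innodb_settings(example_cnf))
```

A check like this only reports that a setting is absent; choosing sensible values still depends on the server's memory and workload.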

Replication Problems

Replication is a key strategy for users to scale their applications, provide redundancy, take backups, run reporting queries, and many other uses. Unfortunately, MySQL's built-in replication is somewhat fragile. It is not hard to configure and use, but it is also not hard to break, and there were 32 incidents of replication downtime. Table 4 shows the types of replication problems:

| Item | Incidents | % of Total |
| --- | --- | --- |
| Data Differences | 14 | 43.8% |
| Configuration | 6 | 18.8% |
| Other | 5 | 15.6% |
| Delays | 4 | 12.5% |
| Bugs | 4 | 12.5% |

Table 4: Replication Problems

The leading cause of replication problems is replication stopping due to the master and replica datasets diverging. The usual culprit is a careless user or misbehaving application that connects to the replica and modifies data there, causing an update from the master to fail. The second leading cause of failures is a configuration problem, such as the infamous max_allowed_packet configuration variable not being set large enough.

Replication delays do not cause replication to stop, but the data on the replica can become so stale that it is unusable, and read-write splitting systems such as the MMM replication manager will remove the replica from the pool of read-only servers, effectively causing downtime for that replica. Most replication delays are actually performance problems at root, but they are often caused by bugs in replication, such as a bug causing the replica not to use an index for an update.

Another portion of bugs can cause replication to fail entirely. Most of these occur in very old server versions, and are easy to solve by upgrading to a sensible server version. A surprising number of users install MySQL from old installation CDs, such as the default version of MySQL 5.0.22 that shipped with a popular enterprise Linux distribution for a number of years. This version is very buggy and was obsoleted almost immediately, but what is on the CD is what gets installed in many cases.

The catch-all "Other" category includes badly executed upgrades, user errors, and attempts to run replication in brittle configurations such as a ring topology.

Data Loss and Corruption

The sample contained 15 cases of data loss or corruption. These are usually caused by a mistake or hardware failure. Rarely is arbitrary corruption found in the database; it is generally introduced from the outside, and InnoDB detects it and refuses to operate. In most cases, lack of recent restorable backups is the only reason the problem becomes an emergency. Table 5 shows the causes of data loss and corruption in the cases we studied:

| Item | Incidents | % of Total |
| --- | --- | --- |
| DROP TABLE | 9 | 60.0% |
| Data Corruption | 3 | 20.0% |
| Server Bug | 2 | 13.3% |
| Malicious Attack | 1 | 6.7% |

Table 5: Causes of Data Loss and Corruption

This data confirmed our belief that it is more common for users to destroy their own data than for a system component to fail. The practice of using a replica as a backup does not protect against this type of problem.

Root Cause Analysis

Root cause analysis is something of a mythical beast. It sounds simple and easy to do, but there are always multiple root causes, and if you follow the trail far enough, you will end up at the CEO of the organization most of the time. Still, we were able to make some generalizations, and assigned blame for the incidents to five broad categories: failures in change control, configuration, capacity planning, backups, and monitoring. Most of the issues could have been prevented by several different measures. For example, lack of disk space could have been detected before it became an emergency with a monitoring and alerting system, or it could have been predicted by capacity planning, or prevented by fixing a buggy logrotate script. Configuration errors could have been detected early by performance analysis required for capacity planning, or prevented by doing the configuration properly in the first place, or detected by a monitoring system. And although most data loss could have been prevented by backups, having backups doesn't prevent the problem that leads to the data loss in the first place. This plurality of root causes makes it hard to say which are the most prevalent.
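Of the preventive measures mentioned in this section, the disk-space check is the simplest to automate. As a sketch only, assuming a hypothetical 80% warning threshold and a hypothetical helper name, a monitoring job could use Python's `shutil.disk_usage` as follows. The `usage` parameter exists purely so the check can be exercised with made-up numbers.

```python
import shutil
from typing import Callable, Optional, Tuple


def disk_usage_alert(
    path: str,
    warn_fraction: float = 0.80,
    usage: Callable[[str], Tuple[int, int, int]] = shutil.disk_usage,
) -> Optional[str]:
    """Return a warning if the filesystem holding `path` is at or above
    warn_fraction full, otherwise None."""
    total, used, _free = usage(path)
    fraction_used = used / total
    if fraction_used >= warn_fraction:
        return f"{path}: {fraction_used:.0%} full (threshold {warn_fraction:.0%})"
    return None


# Real call against the current directory's filesystem; prints a warning
# string or None depending on how full that filesystem is.
print(disk_usage_alert("."))

# Made-up figures (total, used, free) showing what an alert looks like.
sample_alert = disk_usage_alert("/data", usage=lambda path: (100, 90, 10))
print(sample_alert)
```

A threshold check of this kind covers only the monitoring measure; predicting when the disk will fill is a capacity planning task that needs historical data.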

In our opinion, though, lack of change control is easily the most common cause of emergencies. By change control we mean that when someone changes the database server or application, they should test and verify that nothing bad happens. Similarly, many changes to the server and application should be made, but are not, and that causes problems. Here are two representative examples:

Upgrading carelessly. Server upgrades should be tested before they are performed in production environments. Many of the issues were caused by changes in behavior with new server versions. These included queries no longer using indexes, differences in query results, bugs, and instability.

Not upgrading. New versions of the server are released for a reason. The longer an old version of the server runs, the more likely it is that someone will trigger one of its many bugs. For example, many replication problems in 5.0 server versions are caused by bugs that have been fixed for years, but nobody upgraded the server to avoid them.

Notice the contradiction: without the resources and time to upgrade properly, users can cause problems both by upgrading and by not upgrading. Poor change control might be a large cause of problems in part because it is difficult to do well.

We group several other types of problems under the heading of change control. For example, SQL queries that perform badly do not usually get past good pre-production testing. Without such testing, which is a form of change control, bad SQL in production is inevitable.

Preventing Emergencies

In most cases, the 154 emergencies we analyzed could have been prevented best by a systematic, organization-wide effort to find and remove latent problems. Many of the activities involved in this effort could appear to be unproductive, and might be unrewarding for people to do.

Along with the findings presented earlier in this paper, we created a list of detailed preventive measures for every issue we investigated. This is published separately as a white paper on Percona's web site.

Conclusion

Our study of a subset of emergency issues for Percona's customers confirmed what we believed, while revealing patterns we had not noticed before. For example, we have long believed that many organizations over-invest in database tuning, but do not dedicate enough continuing resources for backups, monitoring, and gathering historical performance data for analysis and capacity planning. This research showed that database tuning is seldom the resolution or prevention for emergencies, but these "second quadrant" activities surely are.

At the same time, we had never questioned the old saw that bad SQL is the leading cause of downtime in databases. The numbers tell a different story: bad SQL is responsible for only 20 of the issues we saw, and even if we group bad SQL and improper schema design together, it is still only 28 issues out of 154. Nearly twice as many issues arose in the operating environment.

Another eye-opening moment came when we realized how much trouble is caused by poor-quality external tools, such as init scripts, tuning tools, and high-availability systems. It is ironic but true that high-availability tools can cause downtime. Solving problems in third-party software will require a significant engineering effort in most cases.

It can be difficult to convince organizations to focus on tasks such as testing deployments and upgrades more carefully. For those who are interested in preventing downtime in their MySQL instances, we hope that this paper, along with the concrete steps in the companion white paper on Percona's website, will prove useful.

About Percona, Inc.

Percona is the oldest and largest independent MySQL services company, with an international team of over 40 MySQL experts providing 24x7 support, consulting, training, and engineering services for MySQL to over 1000 customers since 2006. Customers include Cisco Systems, Alcatel-Lucent, Groupon, the BBC, and StumbleUpon.

Percona's founders are world-famous for their expertise in MySQL performance and scaling. They are the authors of High Performance MySQL, 2nd Edition. Percona also develops software for MySQL users, including Percona Server and Percona XtraBackup. Percona Server is an enhanced version of the MySQL database server. With Percona Server, users achieve faster and more consistent query execution, better insight into server behavior, and the ability to consolidate servers with commodity hardware and flash storage. Percona XtraBackup is the world's only open-source hot backup utility for MySQL's default transactional storage engine InnoDB, and is praised by social networking giant Facebook. Both Percona Server and Percona XtraBackup are free and open-source.

If you are interested in Percona's products or services, we invite you to contact us through our website at http://www.percona.com/, or to call us. In the USA, you can reach us during business hours in Pacific (California) Time, toll-free at 1-888-316-9775. Outside the USA, please dial +1-208-473-2904. You can reach us during business hours in the UK at +44-208-133-0309.

Percona, XtraDB, and XtraBackup are trademarks of Percona Inc. InnoDB and MySQL are trademarks of Oracle Corp.

This white paper originally appeared as an article in IOUG's SELECT magazine, Q1 2011.

Copyright © 2011 Percona Inc.

http://www.percona.com/
