
Comparison of Speech Activity Detection Techniques for Speaker Recognition

arXiv:1210.0297v2 [cs.MM] 6 Oct 2012

Md Sahidullah, Student Member, IEEE, Goutam Saha, Member, IEEE

Abstract: Speech activity detection (SAD) is an essential component of a variety of speech processing applications. It has been observed that the performance of various speech based tasks depends heavily on the efficiency of the SAD. In this paper, we systematically review some popular SAD techniques and their applications in speaker recognition. Speaker verification systems using different SAD techniques are experimentally evaluated on NIST speech corpora using a Gaussian mixture model-universal background model (GMM-UBM) based classifier for clean and noisy conditions. It has been found that two-Gaussian modeling based SAD is comparatively better than the other SAD techniques for different types of noise.

Index Terms: Voice activity detection (VAD), Speech Activity Detection (SAD), G.729B, Bi-Gaussian Modeling, NOISEX-92.

I. INTRODUCTION

Speech activity detection (SAD) is an important task in most speech processing applications. The function of a SAD is to separate silence and other non-speech frames from the speech frames of a signal. It has been found that the presence of non-speech frames considerably affects system performance. Speech activity detection is also called voice activity detection. However, as voice and speech are not the same in speech processing terminology, we call the task of identifying speech frames SAD throughout this work. SAD techniques are designed using various methods. Most of them use heuristically chosen statistical properties of speech parameters such as energy, pitch and entropy. Therefore, the performance of different SADs differs and varies with the type of noise and the signal-to-noise ratio (SNR). As a result, the performance of speech based systems is significantly sensitive to the employed SAD technique, and the SAD should be chosen carefully while designing such a system. Speech activity detection has been rigorously studied for speech recognition, speech coding, etc. However, it has not so far been thoroughly studied for speaker recognition applications. Very recently, it has drawn the attention of researchers in this field [1]-[3].

In current speaker recognition systems, energy based SADs are predominantly used. For example, Kinnunen et al. have employed an energy based SAD which is found useful for NIST speech data [4]. The baseline speaker recognition system developed in [5] also uses a different energy based SAD. A bi-Gaussian model of the log-energy distribution of speech frames is suggested in [6]. Recently, different SADs have been used for different qualities of speech signal. For example, in [7], the Hungarian phoneme recognizer developed at BUT is used for speech frame selection in telephone quality speech, whereas a GMM based approach is used for microphone quality speech [8]. Other than this, the voice activity detector used in G.729B and the statistical voice activity detector proposed by Sohn et al. are also used for speech activity detection in speaker recognition. In this paper, we briefly review all those techniques. Then, their performances are compared on two popular NIST speech corpora for clean and noisy environmental conditions. The performance is also evaluated in a simulated real-time situation where the training and testing utterances are distorted with various noises at different SNRs. In most of the cases, we have found that speaker recognition systems with two-Gaussian modeling based SADs are significantly better than those using the other techniques.

The authors are with the Department of Electronics and Electrical Communication Engineering, Indian Institute of Technology Kharagpur, Kharagpur, West Bengal, 721302, India. e-mail: sahidullahmd@gmail.com; gsaha@ece.iitkgp.ernet.in

The rest of the paper is organized as follows. In Section II,

the existing speech activity detectors are briefly reviewed.

The experimental setup used in this paper is discussed in

Section III. The results obtained in different experiments are

discussed in Section IV. Finally, the paper is concluded in

Section V.

II. REVIEW OF SOME POPULAR SAD TECHNIQUES

Most speech activity detectors are based on either a time domain or a frequency domain approach. In the time domain, features such as short-time average energy (STAE), short-time average magnitude (STAM) and zero-crossing rate (ZCR) are used. In the frequency domain, on the other hand, various kinds of spectral information are used for designing a SAD. There are numerous examples where this time and frequency domain information and its statistical properties are used to develop robust speech activity detectors [9]-[14]. A speech activity detector based on a periodicity measure of the speech signal is used in [15]. A cepstral information based SAD is proposed in [16]. In [17], a SAD based on long-term speech information is proposed for automatic speech recognition. Transformed domain characteristics of the speech signal are used to design a SAD in [18]. An entropy based SAD is proposed in [19]. Divergence of subband information is utilized in [20]. Recently, modulation spectrum information in terms of the delta-phase spectrum has been used to design a robust voice activity detector for speaker recognition [21].

A concise and updated review of the existing SAD techniques are not available yet. However, some older survey exists

in this domain. For example, a class of frequency domain

voice activity detector used for VoIP speech compression is

compared in [22]. In [23], three popular voice activity detectors in speech coding domain are experimentally evaluated

and assessed using different subjective and objective indices.

0.7

A. SAD Used in G.729B [9]

Voice activity detection used in G.729 codec is widely used

in different speech processing applications. This technique

classifies active and inactive voice frames with the help of

multiple speech parameters in the following steps:

First, four parameters: line spectral pairs (LSFs), fullband energy, low-band energy and the zero-crossing rate

are computed for different speech frames of the given

speech signal.

Parameters of initial frames of the signals are considered

as background noise information and distortion is measured with respect to those background parameters.

Initial VAD decisions are made using multiboundary of

four parameter set.

Initial VAD decisions are smoothed in four steps in order

to reflect stationary nature of speech and background

signal.

The VAD algorithm is terminated after the estimated background noise information crosses a pre-defined threshold.

Silence/Background Noise

Component

0.6

Speech

Component

0.5

Likelihood

VADs are compared in wireless application domain in [24]. As

there are enormous amount of work in this domain it is quite

difficult to evaluate all of them in a single work. In this paper,

we review five popular SAD techniques which are commonly

used in state-of-the art speaker recognition system.

0.4

0.3

0.2

0.1

0

9

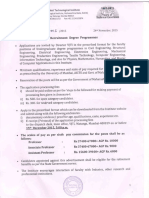

Fig. 1. Bi Gaussian model of log-energy values of speech frames. Gaussian

distribution function with Red color represents speech component and Blue

color represents noise/background component. Here, is the threshold.

tool identifies speech segments as speech or non-speech

segments. The speech segments are assigned to different

phonemes. On the other hand, non speech segments are

of four kinds2 : (i)pau: pause within speech signal, (ii)spk:

speaker related noises, (iii)sta: stationary noise, and (iv)int:

intermittent noise. This tool uses a neural network and hidden

Markov model based technique to identify phonemes. Neural

network is used to train speech frames according to their target

labels which is usually obtained from a standard database.

B. Statistical SAD [10]

E. Bi Gaussian Modeling of log-Energies Based SAD [6]

In this SAD technique, a statistical model is used where

the decision are taken based on likelihood ratio test (LRT).

In addition to that, a decision-directed (DD) method is used

to estimate various parameters. Finally, it also introduces an

improved hang-over scheme based on hidden Markov model

(HMM). The purpose of hang-over scheme is to detect the

speech frames which almost buried in noise. It has been shown

that this approach performs significantly better than G.729B

based SAD in low SNR for speech frame detection.

Bi-Gaussian modeling for speech frame selection is briefly

explained in [6], [28]. In this method, first the log-energies

of each speech frames of a speech utterance are computed.

Then the distribution of log-energy coefficients is estimated

using Gaussian mixture model of two mixtures. The cluster

corresponding to smaller value of center is treated as noise

or non-speech class, and the cluster corresponding to larger

value of center is considered as speech (Fig. 1). A threshold is

computed to determine the decision making boundary between

speech and non-speech class. Usually, it is chosen as the point

between the two centers where the probabilities are equal.

C. Energy Based SAD [4], [5]

Energy based SAD techniques are very straightforward, and

they are widely used in speech and speaker recognition application. First, energy of all the speech frames are computed

for a given speech utterance. Then, an empirical threshold

is selected from the frame energies. In [4], the threshold is

determined from the maximum energy of the speech frames.

In [5], the threshold is selected as 0.06 Eavg , where Eavg

is the average energy of the frames a speech utterance. These

kinds of techniques are somewhat suitable for clean condition.

But, the performance of the system degrades significantly in

low SNR.

D. Phoneme Recognizer Based SAD [25]

State-of-the art speaker recognition system uses Hungarian

phoneme recognizer tool1 to mark speech and non-speech

frames for telephone quality speech signals [26], [27]. This

Log Energy
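The two energy based rules of Section II-C can be sketched as follows. This is only a minimal illustration under stated assumptions: the function names are hypothetical, the 0.06 factor is the one quoted from [5], and the 30 dB offset below the maximum used for the rule in the spirit of [4] is an assumed value, since the text only says that the threshold is derived from the maximum frame energy.

```python
import math

def frame_energies(signal, frame_len, hop):
    """Return the average energy of each frame of `signal`."""
    energies = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        frame = signal[start:start + frame_len]
        energies.append(sum(x * x for x in frame) / frame_len)
    return energies

def aebts_mask(energies, factor=0.06):
    """Average-energy rule of [5]: keep frames above factor * E_avg."""
    threshold = factor * sum(energies) / len(energies)
    return [e > threshold for e in energies]

def mebts_mask(energies, offset_db=30.0):
    """Maximum-energy rule in the spirit of [4].

    offset_db is an assumed value: frames more than offset_db below
    the loudest frame are dropped.
    """
    floor = 1e-12  # avoids log(0) on digital silence
    log_e = [10.0 * math.log10(e + floor) for e in energies]
    threshold = max(log_e) - offset_db
    return [v > threshold for v in log_e]

# Toy signal: 4 quiet frames followed by 4 loud frames (frame_len = hop = 4).
quiet = [0.001, -0.001] * 8
loud = [0.5, -0.5] * 8
energies = frame_energies(quiet + loud, frame_len=4, hop=4)
print(aebts_mask(energies))
print(mebts_mask(energies))
```

Both rules depend only on utterance-level energy statistics, which is why additive noise that raises the energy of every frame can make them unreliable.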

III. EXPERIMENTAL SETUP

A. Database Description

Speaker recognition experiments have been conducted on NIST SRE 2001 [29] and NIST SRE 2002 [30]. In the literature, noise related experiments are mostly performed on these databases. These two datasets have less channel and handset variability than the latest NIST corpora. Therefore, synthetic addition of noise to these datasets is better justified for observing the effect of adverse environmental conditions. We have performed all the experiments on the core task condition of the evaluation plan. A detailed description of the databases is shown in Table I. In both cases, we have used the development data of NIST SRE 2001 for UBM preparation.

1 http://speech.fit.vutbr.cz/software/phoneme-recognizer-based-long-temporal-context

2 http://www.fee.vutbr.cz/SPEECHDAT-E/public/Deliver/WP1/ED141v11-fin.doc

TABLE I
DATABASE DESCRIPTION (CORE TEST SECTION) FOR THE PERFORMANCE EVALUATION OF VARIOUS SPEECH ACTIVITY DETECTORS.

| | SRE 2001 | SRE 2002 |
|---|---|---|
| Target Models (male, female) | 74, 100 | 139, 191 |
| Test Segments | 2038 | 3570 |
| Total Trial | 22418 | 39270 |
| True Trial | 2038 | 2983 |
| Impostor Trial | 20380 | 36287 |

B. Feature Extraction

MFCC features have been extracted using 20 filters linearly spaced on the mel scale from speech frames of 20 ms with 50% overlap between adjacent frames. The details of our feature extraction process can be found in [31]. We have also shown the performance of our previously proposed overlapped block transform coefficient (OBTC) feature, termed OBT-9-13, which is extracted by performing a distributed DCT on the mel filterbank output [31]. The dimensions of the MFCC and OBTC features are 38 and 40, respectively, after the static features are augmented with their velocity coefficients.
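As a rough illustration of the framing and of the velocity augmentation mentioned above, the sketch below splits a signal into 20 ms frames with 50% overlap and appends delta coefficients to a static feature matrix. The 8 kHz sampling rate, the regression window of two frames, the split of the 38 MFCC dimensions into 19 static and 19 velocity coefficients, and the function names are all assumptions made for this example; the actual front end is described in [31].

```python
def frame_signal(signal, sample_rate=8000, frame_ms=20, overlap=0.5):
    """Split `signal` into overlapping frames (sample_rate is assumed)."""
    frame_len = int(sample_rate * frame_ms / 1000)   # 160 samples at 8 kHz
    hop = int(frame_len * (1.0 - overlap))           # 80 samples for 50% overlap
    return [signal[s:s + frame_len]
            for s in range(0, len(signal) - frame_len + 1, hop)]

def add_velocity(static, width=2):
    """Append delta (velocity) coefficients using a regression window."""
    n = len(static)
    denom = 2 * sum(k * k for k in range(1, width + 1))
    out = []
    for t in range(n):
        delta = []
        for d in range(len(static[t])):
            num = 0.0
            for k in range(1, width + 1):
                nxt = static[min(t + k, n - 1)][d]   # edges repeat the last frame
                prv = static[max(t - k, 0)][d]       # edges repeat the first frame
                num += k * (nxt - prv)
            delta.append(num / denom)
        out.append(list(static[t]) + delta)
    return out

frames = frame_signal([0.0] * 8000)              # one second of audio
print(len(frames), len(frames[0]))

# 19 static coefficients per frame (assumed) become 38 after augmentation.
static = [[float(t)] * 19 for t in range(10)]
feats = add_velocity(static)
print(len(feats[0]))
```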

C. Classifier Description

In this paper, all results are obtained with a GMM-UBM based classifier where the target models are created by adapting the UBM parameters [32]. Initially, a gender dependent UBM with 256 mixtures is trained using the expectation-maximization algorithm, after initialization by split vector quantization, with data from the development section of NIST SRE 2001. Target models are created by adapting the centres of the UBM with a relevance factor of 14. Finally, during score computation, only the top-5 Gaussians of the UBM per frame are considered.
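The adaptation of the UBM centres can be sketched along the lines of the mean-only MAP adaptation of [32]. The code below is a simplified illustration, assuming diagonal covariances and a toy two-mixture UBM; the function names are hypothetical, and only the relevance factor of 14 is taken from the text.

```python
import math

def log_gauss_diag(x, mean, var):
    """Log density of a diagonal-covariance Gaussian."""
    return sum(-0.5 * (math.log(2.0 * math.pi * v) + (xi - m) ** 2 / v)
               for xi, m, v in zip(x, mean, var))

def map_adapt_means(frames, weights, means, variances, relevance=14.0):
    """Return MAP-adapted means; weights and variances stay those of the UBM."""
    n_mix, dim = len(means), len(means[0])
    counts = [0.0] * n_mix                        # soft counts n_k
    sums = [[0.0] * dim for _ in range(n_mix)]    # sum of gamma_k(t) * x_t
    for x in frames:
        log_p = [math.log(w) + log_gauss_diag(x, m, v)
                 for w, m, v in zip(weights, means, variances)]
        top = max(log_p)
        post = [math.exp(lp - top) for lp in log_p]
        norm = sum(post)
        for k in range(n_mix):
            gamma = post[k] / norm                # posterior of mixture k
            counts[k] += gamma
            for d in range(dim):
                sums[k][d] += gamma * x[d]
    adapted = []
    for k in range(n_mix):
        alpha = counts[k] / (counts[k] + relevance)   # data-dependent mixing
        if counts[k] > 0.0:
            ex = [s / counts[k] for s in sums[k]]
        else:
            ex = means[k]
        adapted.append([alpha * e + (1.0 - alpha) * m
                        for e, m in zip(ex, means[k])])
    return adapted

# Toy 1-D UBM with two mixtures; 14 frames sit exactly at x = 1.0.
ubm_w = [0.5, 0.5]
ubm_mu = [[0.0], [10.0]]
ubm_var = [[1.0], [1.0]]
new_mu = map_adapt_means([[1.0]] * 14, ubm_w, ubm_mu, ubm_var)
print(new_mu)
```

In this toy case the first mixture collects a soft count of 14, so its mean moves halfway from the UBM centre towards the data, while the second mixture, which sees almost no data, stays at its UBM value.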

D. Performance Evaluation Metric

The performance of speaker recognition is measured using the equal error rate (EER), which is the operating point on the detection error tradeoff plot where the probability of false rejection (FR) equals the probability of false acceptance (FA). The performance is also measured in terms of the minimum detection cost function (minDCF), where a cost function that assigns unequal costs to FR and FA is minimized over the decision threshold. Here, the costs of FR and FA are set at 10 and 1, respectively, as in the NIST evaluation plan [30].
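Both metrics can be computed from lists of target and impostor scores, as in the following sketch. The function names are hypothetical. The cost values and the target prior are passed as parameters; the defaults (cost of FR 10, cost of FA 1, target prior 0.01) follow the usual NIST SRE convention and are an assumption of this example.

```python
def error_rates(target_scores, impostor_scores):
    """Return (threshold, P_FR, P_FA) triples over all candidate thresholds."""
    thresholds = sorted(set(target_scores) | set(impostor_scores))
    thresholds.append(thresholds[-1] + 1.0)   # reject-everything point
    rates = []
    for th in thresholds:
        p_fr = sum(s < th for s in target_scores) / len(target_scores)
        p_fa = sum(s >= th for s in impostor_scores) / len(impostor_scores)
        rates.append((th, p_fr, p_fa))
    return rates

def equal_error_rate(rates):
    """EER taken at the threshold where |P_FR - P_FA| is smallest."""
    _, p_fr, p_fa = min(rates, key=lambda r: abs(r[1] - r[2]))
    return 0.5 * (p_fr + p_fa)

def min_dcf(rates, c_fr=10.0, c_fa=1.0, p_target=0.01):
    """Minimum of the detection cost over all thresholds (assumed defaults)."""
    return min(c_fr * p_fr * p_target + c_fa * p_fa * (1.0 - p_target)
               for _, p_fr, p_fa in rates)

targets = [2.0, 1.5, 1.0, 0.2]
impostors = [0.5, 0.1, -0.3, -1.0]
rates = error_rates(targets, impostors)
print(equal_error_rate(rates), min_dcf(rates))
```

With these defaults, a system that rejects every trial has a cost of 0.1, i.e. 10.00 after multiplication by 100, which is the upper limit of the scaled minDCF.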

IV. RESULTS AND DISCUSSIONS

Speaker recognition experiments are conducted on both databases using the MFCC and OBTC features. In all the experiments, everything other than the SAD technique is kept fixed in order to observe the effect of the SAD. In this paper, the SAD techniques are abbreviated as follows:

• G.729B: SAD used in G.729B [9].
• SMSAD: Statistical model based SAD proposed by Sohn et al. [10].
• HNPNR: Hungarian phoneme recognizer based SAD [25].
• MEBTS: Energy dependent SAD using maximum energy based threshold selection [4].
• AEBTS: Energy dependent SAD using average energy based threshold selection [5].
• UBGME: Utterance-wise bi-Gaussian modeling of log-energy based threshold selection [28].

A. Results in Match Condition

Experiments are first conducted on the clean speech databases. Speaker verification results with different SADs are shown in Table II. The frame selection technique used in G.729B performs worst for speaker recognition in almost all the cases. SMSAD performs better than G.729B in most of the cases. The HNPNR based SAD outperforms the first two techniques. However, the MEBTS based approach is consistently better than those techniques for all the cases. Then, the bi-Gaussian modeling based approach (UBGME) performs better than all the previously mentioned techniques for NIST SRE 2001. However, its performance is slightly worse than that of HNPNR, MEBTS and AEBTS for NIST SRE 2002. It can also be observed that the energy based SADs are relatively better than the first two techniques. Though these two techniques are very useful for speech coding and compression, they are less useful in speaker recognition. This is most likely because those techniques consider a signal frame as speech if it has some speech-like information, whereas the energy based SADs consider a frame as speech only if it has a significant amount of energy. Though low energy speech frames may carry speech information, their contribution to speaker recognition seems to be negligible.

The overall energy of the frames increases when the speech is distorted by noise in adverse conditions. In that case, the frame energy alone seems to be unreliable for speech activity detection. Therefore, it is worth studying the effect of the SAD on speaker recognition in noisy conditions. This study is carried out on both databases and is discussed in the following subsection.

B. Results in Mismatch Condition

The experiments in noisy conditions are performed by synthetically adding noise to the test utterances. The noise samples are taken from the NOISEX-92 database⁴ and are down-sampled to 8 kHz. The noise is added to the speech signal using the following steps:

• A noise segment with the same length as the speech signal to which noise is to be added is randomly selected from the original noise signal.
• The amplitude of the noise segment is scaled depending on the desired SNR.
• The scaled noise signal is added to the clean signal to get the distorted speech.
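The three steps above can be sketched as follows. This is a minimal illustration, assuming that the SNR is defined from the average power of the whole utterance and of the selected noise segment; the function name and the toy signals are hypothetical.

```python
import math
import random

def add_noise(speech, noise, snr_db, rng=random):
    """Mix a random segment of `noise` into `speech` at the requested SNR."""
    if len(noise) < len(speech):
        raise ValueError("noise recording is shorter than the speech signal")
    # Step 1: random noise segment with the same length as the speech.
    start = rng.randint(0, len(noise) - len(speech))
    segment = noise[start:start + len(speech)]
    # Step 2: scale the segment so that 10*log10(P_speech / P_noise) = snr_db.
    p_speech = sum(x * x for x in speech) / len(speech)
    p_noise = sum(x * x for x in segment) / len(segment)
    gain = math.sqrt(p_speech / (p_noise * 10.0 ** (snr_db / 10.0)))
    # Step 3: add the scaled noise to the clean signal.
    return [s + gain * n for s, n in zip(speech, segment)]

rng = random.Random(0)
speech = [math.sin(0.1 * n) for n in range(800)]        # stand-in for speech
noise = [rng.gauss(0.0, 1.0) for _ in range(4000)]      # stand-in for a noise file
noisy = add_noise(speech, noise, snr_db=10.0, rng=rng)
print(len(noisy))
```

Measuring the speech power over active frames only, instead of over the whole utterance, would give a different scaling for the same nominal SNR.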

In our experiment, we have chosen five different noise samples: white, pink, volvo, babble and factory (Factory-1 noise in the original database). We have chosen those noises because of their different frequency domain behavior, as shown in Fig. 2. The experiments are conducted for three different levels of noise: high (0 dB), medium (10 dB) and low (20 dB). The results are shown in Table III and Table IV for the two databases.

4 http://www.speech.cs.cmu.edu/comp.speech/Section1/Data/noisex.html

TABLE II
SPEAKER VERIFICATION RESULTS IN TERMS OF EQUAL ERROR RATE AND MINIMUM DETECTION COST FUNCTION ON NIST SRE 2001 AND NIST SRE 2002 FOR DIFFERENT IMPLEMENTATIONS OF SPEECH ACTIVITY DETECTOR. [G.729B: SAD USED IN G.729 CODEC [9], SMSAD: STATISTICAL MODEL BASED SAD [10], HNPNR: HUNGARIAN PHONEME RECOGNIZER [25], MEBTS: MAXIMUM ENERGY BASED THRESHOLD SELECTION [4], AEBTS: AVERAGE ENERGY BASED THRESHOLD SELECTION [5], UBGME: UTTERANCE WISE BI GAUSSIAN MODELING OF LOG ENERGY [28].]

| Method | SRE 2001 EER (in %) MFCC | SRE 2001 EER (in %) OBTC | SRE 2001 minDCF × 100 MFCC | SRE 2001 minDCF × 100 OBTC | SRE 2002 EER (in %) MFCC | SRE 2002 EER (in %) OBTC | SRE 2002 minDCF × 100 MFCC | SRE 2002 minDCF × 100 OBTC |
|---|---|---|---|---|---|---|---|---|
| G.729B | 9.32 | 8.59 | 4.11 | 3.85 | 10.16 | 9.83 | 4.50 | 4.38 |
| SMSAD | 8.97 | 8.11 | 3.92 | 3.74 | 9.86 | 9.85 | 4.51 | 4.29 |
| HNPNR | 8.54 | 7.76 | 3.74 | 3.48 | 9.09 | 8.78 | 4.43 | 4.35 |
| MEBTS | 8.24 | 7.27 | 3.58 | 3.44 | 9.09 | 8.58 | 4.45 | 4.10 |
| AEBTS | 7.96 | 7.21 | 3.61 | 3.49 | 9.45 | 8.59 | 4.44 | 4.22 |
| UBGME | 7.91 | 7.36 | 3.72 | 3.41 | 9.69 | 9.05 | 4.50 | 4.28 |

The performance of the speaker recognition system drops severely in the presence of noise for all types of SAD. The degradation in performance depends on the frequency response of the noise. For example, from Fig. 2, we can see that the frequency response of white noise is flat, so all the frequency components of the speech signal are affected. Therefore, the performance is worst for this noise. On the other hand, volvo noise affects only the first few mel filterbank outputs. Hence, the performance in the presence of volvo noise is degraded less than for other noises at the same SNR.

We also note that the performance varies significantly across SAD techniques in the presence of noise. For G.729B and SMSAD, the performances are nearly equivalent on both databases. The HNPNR based voice activity detector, which is used in state-of-the-art speaker recognition systems for speech frame selection, performs poorly compared to the other techniques. The performance of the energy thresholded SADs, i.e. MEBTS and AEBTS, suffers severely in the presence of noise and is even worse than that of G.729B and SMSAD. In the presence of noise, all the speech frames are affected: the energy of each frame increases and the frequency response of every speech frame is severely altered. In this scenario, maximum energy or average energy based threshold selection is not very effective. Hence, only vowel-like regions seem to be relevant in those cases [5]. The two-Gaussian model based approach selects the energy threshold as the boundary between the speech and non-speech classes, which retains mostly vowel-like, higher energy frames. Therefore, the performance of this technique is significantly better at higher noise levels.
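The utterance-wise bi-Gaussian threshold selection (Section II-E) can be sketched as below. This is an illustrative implementation, not the one used in the experiments: a two-component one-dimensional GMM is fitted to the frame log-energies with a plain EM loop, and the threshold is taken as the point between the two means where the weighted component densities are equal. The initialization, the number of iterations, the use of the mixture weights in the equality, and all function names are assumptions of this example.

```python
import math

def log_norm(x, mean, var):
    """Log density of a 1-D Gaussian."""
    return -0.5 * (math.log(2.0 * math.pi * var) + (x - mean) ** 2 / var)

def fit_two_gaussians(values, n_iter=50):
    """EM for a two-component 1-D GMM; component 0 has the lower mean."""
    lo, hi = min(values), max(values)
    means = [lo + 0.25 * (hi - lo), lo + 0.75 * (hi - lo)]
    var0 = max(((hi - lo) / 4.0) ** 2, 1e-6)
    variances = [var0, var0]
    weights = [0.5, 0.5]
    for _ in range(n_iter):
        # E-step: responsibility of component 1 for each value.
        resp = []
        for x in values:
            l0 = math.log(weights[0]) + log_norm(x, means[0], variances[0])
            l1 = math.log(weights[1]) + log_norm(x, means[1], variances[1])
            resp.append(1.0 / (1.0 + math.exp(min(l0 - l1, 700.0))))
        # M-step: re-estimate weights, means and variances.
        for k in (0, 1):
            g = [r if k == 1 else 1.0 - r for r in resp]
            n_k = max(sum(g), 1e-10)
            means[k] = sum(gi * x for gi, x in zip(g, values)) / n_k
            variances[k] = max(
                sum(gi * (x - means[k]) ** 2 for gi, x in zip(g, values)) / n_k,
                1e-6)
            weights[k] = n_k / len(values)
    order = sorted((0, 1), key=lambda k: means[k])
    return ([weights[k] for k in order], [means[k] for k in order],
            [variances[k] for k in order])

def speech_threshold(weights, means, variances, n_steps=60):
    """Bisection for the equal-probability point between the two means."""
    def diff(x):
        return (math.log(weights[1]) + log_norm(x, means[1], variances[1])
                - math.log(weights[0]) - log_norm(x, means[0], variances[0]))
    lo, hi = means[0], means[1]
    if diff(lo) >= 0.0 or diff(hi) <= 0.0:
        return 0.5 * (lo + hi)          # no crossing between the means
    for _ in range(n_steps):
        mid = 0.5 * (lo + hi)
        if diff(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

# Toy log-energies: 50 background frames near -59 and 50 speech frames near -17.
log_e = ([-60.0 + 0.5 * (i % 5) for i in range(50)]
         + [-20.0 + 1.0 * (i % 7) for i in range(50)])
w, mu, var = fit_two_gaussians(log_e)
theta = speech_threshold(w, mu, var)
speech_frames = [e > theta for e in log_e]
print(mu, theta, sum(speech_frames))
```

Because the model is re-estimated for every utterance, the threshold follows the noise level of that utterance instead of relying on a fixed fraction of the maximum or average energy.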

C. Results in Real-time Scenario

In Section IV-A, results are shown for the clean condition, whereas in Section IV-B, speaker recognition results are shown for noisy conditions where, in every case, all the test speech segments are distorted with the same noise at the same SNR. However, real life does not offer this kind of controlled environment. In practice, speech utterances are distorted with different types of noise at various SNRs. In order to observe the performance of speaker recognition in this kind of situation, we have distorted the speech files of NIST SRE 2002 with different noises at various SNRs. The noise type is randomly chosen from the set of five noises used in the previous experiments. The SNR is randomly selected between 0 and 40 dB. We call the distorted dataset Distorted NIST SRE 2002. As different speech files are collected under different environmental conditions, score normalization would be very effective here [33]. Here, we have applied t-normalization to the raw log-likelihood scores to generate the final scores. The utterances for t-normalization are chosen from the training speech files of NIST SRE 2001, i.e. 74 male and 100 female speech files are selected for t-normalization.
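As a brief illustration of t-normalization [33], the raw score of a test segment against the claimed model is shifted and scaled by the mean and standard deviation of the scores that the same test segment obtains against a cohort of other models. The sketch below assumes the population standard deviation and uses a hypothetical function name and made-up scores.

```python
import statistics

def t_normalize(raw_score, cohort_scores):
    """Normalize a raw score with the statistics of the cohort scores."""
    mu = statistics.mean(cohort_scores)
    sigma = statistics.pstdev(cohort_scores)   # population std is an assumption
    if sigma == 0.0:
        raise ValueError("cohort scores have zero spread")
    return (raw_score - mu) / sigma

# Score of one test segment against the claimed model and four cohort models.
print(t_normalize(1.5, [0.0, 1.0, -1.0, 0.0]))
```

Since the cohort scores are computed on the same, possibly noisy, test segment, the normalization compensates for score shifts caused by the condition of that segment.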

V. CONCLUSION & FUTURE WORK

In this work, we briefly review some standard SAD techniques and their effects on speaker recognition performance. The performance of the different techniques is evaluated on two NIST corpora: NIST SRE 2001 and NIST SRE 2002. SAD techniques like G.729B and SMSAD, which are widely accepted in speech coding and other applications, are shown to exhibit lower performance than even a simple energy based speech activity detector. The experimental results show that a speaker recognition system with two-Gaussian modeling of log-energy based SAD is significantly better than the other techniques over a wide range of SNRs. We have evaluated the performance using two cepstral features: standard MFCC and our previously proposed OBTC. It has been shown that the OBTC based system is superior to the MFCC based one in most of the cases.

The performance of the UBGME based SAD appears to be suboptimal in the shown cases. However, this utterance-wise bi-Gaussian modeling of log-energy based approach can be further improved by investigating the following subjects:

• When a speech utterance gets distorted by noise, its different frequency bands are unequally affected (Fig. 2). Therefore, two-Gaussian modeling of subband information could be used to improve the performance of the speech activity detector.
• The UBGME based approach uses only log-energy. However, bi-Gaussian modeling of other parameters, such as entropy or the spectral flatness parameter, can be studied to extract robust speech frames.
• A decision fusion technique [3] can be used to improve the performance further by combining multiple speech activity detectors carrying supplementary information.

[Figure 2: magnitude response (in dB) plotted against frequency (0 to 4000 Hz) for Speech, White, Pink, Volvo, Babble and Factory.]

Fig. 2. Average of short-term magnitude responses for clean speech and noise samples. The speech signals are taken from NIST SRE 2001, whereas the noise samples are taken from the NOISEX-92 database. Averaging is performed over all available signal frames.

REFERENCES

[1] R. Padmanabhan, "Studies on voice activity detection and feature diversity for speaker recognition," Ph.D. dissertation, Indian Institute of Technology Madras, August 2012.
[2] S. H. Parthasarathi, R. Padmanabhan, and H. A. Murthy, "Robustness of group delay representations for noisy speech signals," International Journal of Speech Technology, vol. 14, no. 4, pp. 361-368, Dec. 2011.
[3] Z. Nasibov, "Decision fusion of voice activity detectors," Master's thesis, University of Eastern Finland, Joensuu, May 2012.
[4] T. Kinnunen and H. Li, "An overview of text-independent speaker recognition: From features to supervectors," Speech Communication, vol. 52, no. 1, pp. 12-40, 2010.
[5] S. Prasanna and G. Pradhan, "Significance of vowel-like regions for speaker verification under degraded conditions," Audio, Speech, and Language Processing, IEEE Transactions on, vol. 19, no. 8, pp. 2552-2565, Nov. 2011.
[6] F. Bimbot, J.-F. Bonastre, C. Fredouille, G. Gravier, I. Magrin-Chagnolleau, S. Meignier, T. Merlin, J. Ortega-García, D. Petrovska-Delacrétaz, and D. A. Reynolds, "A tutorial on text-independent speaker verification," EURASIP Journal on Applied Signal Processing, vol. 2004, no. 4, pp. 430-451, 2004.
[7] N. Dehak, P. Kenny, R. Dehak, P. Dumouchel, and P. Ouellet, "Front-end factor analysis for speaker verification," Audio, Speech, and Language Processing, IEEE Transactions on, vol. 19, no. 4, pp. 788-798, May 2011.
[8] S. Hanwu, M. Bin, S. Kalayar Khine, and H. Li, "Speaker diarization system for RT07 and RT09 meeting room audio," in Acoustics Speech and Signal Processing (ICASSP), 2010 IEEE International Conference on, March 2010, pp. 4982-4985.
[9] A. Benyassine, E. Shlomot, H.-Y. Su, D. Massaloux, C. Lamblin, and J.-P. Petit, "ITU-T recommendation G.729 annex B: a silence compression scheme for use with G.729 optimized for V.70 digital simultaneous voice and data applications," Communications Magazine, IEEE, vol. 35, no. 9, pp. 64-73, Sep. 1997.
[10] J. Sohn, N. S. Kim, and W. Sung, "A statistical model-based voice activity detection," Signal Processing Letters, IEEE, vol. 6, no. 1, pp. 1-3, Jan. 1999.
[11] S. Tanyer and H. Ozer, "Voice activity detection in nonstationary noise," Speech and Audio Processing, IEEE Transactions on, vol. 8, no. 4, pp. 478-482, Jul. 2000.
[12] J. Ramirez, J. Segura, C. Benitez, L. Garcia, and A. Rubio, "Statistical voice activity detection using a multiple observation likelihood ratio test," Signal Processing Letters, IEEE, vol. 12, no. 10, pp. 689-692, Oct. 2005.
[13] Y. Cho and A. Kondoz, "Analysis and improvement of a statistical model-based voice activity detector," Signal Processing Letters, IEEE, vol. 8, no. 10, pp. 276-278, Oct. 2001.
[14] J. Chang, N. Kim, and S. Mitra, "Voice activity detection based on multiple statistical models," Signal Processing, IEEE Transactions on, vol. 54, no. 6, pp. 1965-1976, June 2006.
[15] R. Tucker, "Voice activity detection using a periodicity measure," Communications, Speech and Vision, IEE Proceedings I, vol. 139, no. 4, pp. 377-380, Aug. 1992.
[16] J. Haigh and J. Mason, "Robust voice activity detection using cepstral features," in IEEE Region 10 Conference on Computer, Communication, Control and Power Engineering (TENCON '93), no. 0, Oct. 1993, pp. 321-324.
[17] J. Ramírez, J. Segura, C. Benítez, A. Torre, and A. Rubio, "Efficient voice activity detection algorithms using long-term speech information," Speech Communication, vol. 42, no. 3-4, pp. 271-287, 2004.
[18] S. Gazor and W. Zhang, "A soft voice activity detector based on a Laplacian-Gaussian model," Speech and Audio Processing, IEEE Transactions on, vol. 11, no. 5, pp. 498-505, Sept. 2003.
[19] P. Renevey and A. Drygajlo, "Entropy based voice activity detection in very noisy conditions," in Proceedings of EUROSPEECH 2001, 2001.
[20] J. Ramirez, J. Segura, C. Benitez, A. de la Torre, and A. Rubio, "A new Kullback-Leibler VAD for speech recognition in noise," Signal Processing Letters, IEEE, vol. 11, no. 2, pp. 266-269, Feb. 2004.

[21] I. McCowan, D. Dean, M. McLaren, R. Vogt, and S. Sridharan, "The delta-phase spectrum with application to voice activity detection and speaker recognition," Audio, Speech, and Language Processing, IEEE Transactions on, vol. 19, no. 7, pp. 2026-2038, Sept. 2011.
[22] R. Venkatesha Prasad, A. Sangwan, H. Jamadagni, M. Chiranth, R. Sah, and V. Gaurav, "Comparison of voice activity detection algorithms for VoIP," in Computers and Communications, 2002. Proceedings. ISCC 2002. Seventh International Symposium on, 2002, pp. 530-535.
[23] F. Beritelli, S. Casale, G. Ruggeri, and S. Serrano, "Performance evaluation and comparison of G.729/AMR/fuzzy voice activity detectors," Signal Processing Letters, IEEE, vol. 9, no. 3, pp. 85-88, March 2002.
[24] K. El-Maleh and P. Kabal, "Comparison of voice activity detection algorithms for wireless personal communications systems," in Electrical and Computer Engineering, 1997. Engineering Innovation: Voyage of Discovery. IEEE 1997 Canadian Conference on, vol. 2, May 1997, pp. 470-473.
[25] P. Schwarz, "Phoneme recognition based on long temporal context," Ph.D. dissertation, Brno University of Technology, 2009.
[26] O. Glembek, L. Burget, N. Dehak, N. Brummer, and P. Kenny, "Comparison of scoring methods used in speaker recognition with joint factor analysis," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2009), April 2009, pp. 4057-4060.
[27] M. J. Alam, T. Kinnunen, P. Kenny, P. Ouellet, and D. O'Shaughnessy, "Multitaper MFCC and PLP features for speaker verification using i-vectors," Speech Communication, 2012, article in press.
[28] I. Magrin-Chagnolleau, G. Gravier, and R. Blouet, "Overview of the 2000-2001 ELISA consortium research activities," in 2001: A Speaker Odyssey - The Speaker Recognition Workshop, 2001.
[29] M. Przybocki and A. Martin, "2001 NIST speaker recognition evaluation corpus," Linguistic Data Consortium, 2002.
[30] The NIST Year 2002 Speaker Recognition Evaluation Plan. [Online]. Available: http://www.itl.nist.gov/iad/mig/tests/spk/2002/2002-spkrec-evalplan-v60.pdf
[31] M. Sahidullah and G. Saha, "Design, analysis and experimental evaluation of block based transformation in MFCC computation for speaker recognition," Speech Communication, vol. 54, no. 4, pp. 543-565, May 2012.
[32] D. A. Reynolds, T. F. Quatieri, and R. B. Dunn, "Speaker verification using adapted Gaussian mixture models," Digital Signal Processing, vol. 10, no. 1-3, pp. 19-41, 2000.
[33] R. Auckenthaler, M. Carey, and H. Lloyd-Thomas, "Score normalization for text-independent speaker verification systems," Digital Signal Processing, vol. 10, no. 1-3, pp. 42-54, 2000.

TABLE III
SPEAKER VERIFICATION RESULTS ON NIST SRE 2001 IN PRESENCE OF VARIOUS ADDITIVE ENVIRONMENTAL NOISE. THE RESULTS ARE SHOWN FOR VARIOUS SPEECH ACTIVITY DETECTION TECHNIQUES. EER IS IN %; minDCF VALUES ARE MULTIPLIED BY 100.

| Method | Noise | EER MFCC 20 dB | EER MFCC 10 dB | EER MFCC 0 dB | EER OBTC 20 dB | EER OBTC 10 dB | EER OBTC 0 dB | minDCF MFCC 20 dB | minDCF MFCC 10 dB | minDCF MFCC 0 dB | minDCF OBTC 20 dB | minDCF OBTC 10 dB | minDCF OBTC 0 dB |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| G.729B | White | 11.38 | 16.69 | 28.56 | 10.40 | 15.65 | 27.28 | 4.80 | 7.17 | 9.84 | 4.52 | 7.12 | 9.84 |
| G.729B | Pink | 10.70 | 13.64 | 23.46 | 9.52 | 12.90 | 22.77 | 4.59 | 6.45 | 9.37 | 4.32 | 6.05 | 9.04 |
| G.729B | Volvo | 11.30 | 11.43 | 13.14 | 10.60 | 10.50 | 12.07 | 4.96 | 5.03 | 6.21 | 4.73 | 4.71 | 5.22 |
| G.729B | Babble | 9.96 | 13.74 | 23.99 | 9.43 | 12.70 | 22.93 | 4.63 | 6.52 | 9.17 | 4.13 | 5.75 | 8.84 |
| G.729B | Factory | 10.55 | 13.35 | 20.96 | 9.57 | 12.41 | 19.47 | 4.66 | 6.33 | 8.96 | 4.36 | 5.84 | 8.58 |
| SMSAD | White | 11.24 | 15.75 | 27.09 | 9.91 | 14.78 | 27.49 | 4.79 | 7.00 | 9.38 | 4.39 | 6.98 | 9.61 |
| SMSAD | Pink | 10.12 | 13.35 | 23.60 | 9.18 | 12.32 | 22.62 | 4.52 | 6.34 | 9.17 | 4.18 | 5.92 | 9.02 |
| SMSAD | Volvo | 10.85 | 11.13 | 13.20 | 10.16 | 10.26 | 11.97 | 4.79 | 5.05 | 5.89 | 4.62 | 4.55 | 5.11 |
| SMSAD | Babble | 10.16 | 13.82 | 24.92 | 8.92 | 11.83 | 22.87 | 4.62 | 6.44 | 9.22 | 4.08 | 5.55 | 8.86 |
| SMSAD | Factory | 10.06 | 13.05 | 21.19 | 9.27 | 11.63 | 19.28 | 4.55 | 6.03 | 8.70 | 4.28 | 5.67 | 8.42 |
| HNPNR | White | 11.19 | 16.44 | 34.64 | 9.81 | 15.02 | 34.49 | 4.99 | 7.41 | 10.00 | 4.58 | 7.35 | 10.00 |
| HNPNR | Pink | 9.87 | 13.15 | 29.94 | 8.83 | 12.41 | 28.70 | 4.63 | 6.46 | 10.00 | 4.30 | 6.21 | 10.00 |
| HNPNR | Volvo | 9.67 | 9.86 | 12.32 | 8.93 | 9.33 | 10.84 | 4.62 | 4.65 | 5.80 | 4.16 | 4.21 | 4.98 |
| HNPNR | Babble | 9.43 | 12.95 | 24.14 | 8.54 | 11.68 | 23.70 | 4.29 | 6.06 | 9.69 | 3.92 | 5.64 | 9.92 |
| HNPNR | Factory | 9.62 | 12.37 | 26.36 | 9.18 | 11.89 | 26.10 | 4.54 | 6.26 | 10.00 | 4.25 | 5.88 | 10.00 |
| MEBTS | White | 13.64 | 20.85 | 33.57 | 12.31 | 20.36 | 34.20 | 5.98 | 9.02 | 10.00 | 5.50 | 8.63 | 9.98 |
| MEBTS | Pink | 10.06 | 17.08 | 30.86 | 8.64 | 17.17 | 30.47 | 4.38 | 8.31 | 10.00 | 3.93 | 7.94 | 9.95 |
| MEBTS | Volvo | 8.73 | 9.13 | 10.74 | 7.91 | 8.34 | 9.29 | 3.99 | 4.05 | 4.81 | 3.75 | 3.71 | 4.16 |
| MEBTS | Babble | 8.64 | 13.63 | 29.63 | 7.60 | 13.20 | 29.64 | 3.87 | 6.65 | 9.88 | 3.47 | 6.18 | 9.81 |
| MEBTS | Factory | 9.22 | 15.55 | 29.92 | 8.54 | 15.75 | 28.56 | 4.23 | 7.51 | 10.00 | 3.94 | 7.19 | 9.93 |
| AEBTS | White | 11.97 | 21.41 | 34.15 | 10.40 | 19.68 | 33.32 | 5.12 | 8.96 | 10.00 | 4.52 | 8.05 | 10.00 |
| AEBTS | Pink | 9.09 | 17.47 | 31.45 | 7.90 | 16.29 | 29.78 | 4.20 | 8.06 | 10.00 | 3.79 | 7.15 | 9.98 |
| AEBTS | Volvo | 8.58 | 8.99 | 10.30 | 7.89 | 8.25 | 9.18 | 4.00 | 3.95 | 4.62 | 3.69 | 3.66 | 4.10 |
| AEBTS | Babble | 8.29 | 12.22 | 29.70 | 7.57 | 11.38 | 29.34 | 3.79 | 5.90 | 9.93 | 3.46 | 5.51 | 9.84 |
| AEBTS | Factory | 8.88 | 14.62 | 30.57 | 8.10 | 13.94 | 28.60 | 4.08 | 6.80 | 10.00 | 3.76 | 6.26 | 9.98 |
| UBGME | White | 9.76 | 14.18 | 24.88 | 9.31 | 13.98 | 24.88 | 4.66 | 6.70 | 9.29 | 4.19 | 6.52 | 9.47 |
| UBGME | Pink | 8.89 | 12.03 | 21.44 | 8.69 | 11.29 | 20.80 | 4.17 | 5.71 | 8.79 | 3.80 | 5.41 | 8.85 |
| UBGME | Volvo | 8.73 | 9.37 | 11.19 | 8.30 | 8.82 | 9.96 | 4.16 | 4.09 | 4.78 | 3.89 | 3.80 | 4.26 |
| UBGME | Babble | 8.78 | 11.63 | 20.70 | 8.34 | 10.50 | 20.61 | 3.85 | 5.39 | 8.58 | 3.52 | 5.10 | 8.59 |
| UBGME | Factory | 9.03 | 11.39 | 21.20 | 8.59 | 11.14 | 20.50 | 4.05 | 5.53 | 8.62 | 3.76 | 5.11 | 8.60 |

TABLE IV

SPEAKER VERIFICATION RESULTS ON NIST SRE 2002 IN PRESENCE OF VARIOUS ADDITIVE ENVIRONMENTAL NOISE. THE RESULTS ARE SHOWN FOR VARIOUS SPEECH ACTIVITY DETECTION TECHNIQUES.

                   EER (in %)                                minDCF × 100
                   MFCC                 OBTC                 MFCC                 OBTC
Method  Noise      20 dB  10 dB   0 dB  20 dB  10 dB   0 dB  20 dB  10 dB   0 dB  20 dB  10 dB   0 dB

G.729B  White      12.38  17.60  29.47  11.96  17.93  28.83   5.47   7.40   9.84   5.30   7.46   9.75
        Pink       11.93  15.92  25.81  11.67  15.65  24.84   5.37   7.02   9.44   5.16   6.72   9.06
        Volvo      12.50  13.07  15.22  12.37  12.40  13.44   5.54   6.01   6.82   5.41   5.39   5.97
        Babble     12.07  16.66  26.86  11.26  15.15  25.18   5.63   7.34   9.55   5.01   6.73   9.36
        Factory    12.03  16.09  25.01  11.43  14.92  23.63   5.45   7.06   9.40   5.20   6.65   8.82

SMVAD   White      12.26  17.63  27.86  11.97  17.13  28.43   5.59   7.57   9.81   5.33   7.51   9.76
        Pink       11.90  15.93  25.41  11.46  15.38  24.81   5.30   7.00   9.47   5.08   6.69   9.18
        Volvo      12.48  13.21  15.05  12.54  12.30  13.44   5.53   5.93   6.75   5.36   5.39   6.01
        Babble     11.87  17.10  28.29  11.27  15.82  25.95   5.65   7.51   9.65   5.12   6.79   9.58
        Factory    11.83  15.66  24.04  11.47  14.95  22.83   5.34   6.93   9.15   5.11   6.55   8.54

HNPNR   White      12.44  17.90  35.20  11.70  17.40  35.40   5.85   8.08  10.00   5.62   7.96  10.00
        Pink       11.53  15.96  31.48  11.40  15.22  30.44   5.69   7.59  10.00   5.49   7.18  10.00
        Volvo      10.86  11.79  14.15  11.06  11.36  12.64   5.24   5.92   7.03   5.17   5.40   6.20
        Babble     11.20  15.69  26.28  10.56  14.21  25.27   5.61   7.38  10.00   5.31   6.74  10.00
        Factory    11.23  15.45  28.74  10.86  14.82  27.96   5.56   7.45  10.00   5.36   7.01  10.00

MEBTS   White      14.28  22.93  34.97  12.88  21.56  34.96   6.51   8.73   9.96   6.19   8.88   9.97
        Pink       11.20  20.26  33.90  10.29  19.01  33.02   5.15   8.38   9.88   4.87   8.41   9.86
        Volvo      10.12  11.19  12.71   9.82  10.06  11.20   4.82   5.13   6.01   4.49   4.69   5.24
        Babble     10.60  17.20  32.41   9.69  15.46  31.48   5.07   7.59   9.82   4.61   7.12   9.82
        Factory    11.00  17.67  32.12   9.86  17.36  31.14   5.07   7.97   9.78   4.70   7.84   9.78

AEBTS   White      12.37  23.17  35.17  11.93  21.11  35.43   5.96   9.10  10.00   5.57   9.00   9.95
        Pink       10.52  20.01  34.16  10.09  18.64  32.55   4.98   8.42   9.97   4.76   8.22   9.91
        Volvo      10.16  10.67  12.41   9.76  10.22  11.22   4.73   5.09   5.78   4.60   4.76   5.16
        Babble     10.56  15.52  32.35   9.86  14.21  31.41   4.88   7.07   9.91   4.59   6.59   9.82
        Factory    10.60  16.93  32.58   9.76  16.45  30.51   4.90   7.70   9.88   4.64   7.29   9.77

UBGME   White      11.70  16.06  26.92  11.10  15.99  26.95   5.29   7.02   9.71   5.05   7.04   9.61
        Pink       10.93  14.45  23.97  10.50  13.95  23.24   5.08   6.52   9.29   4.85   6.29   9.10
        Volvo      10.69  11.46  12.77  10.16  10.53  11.26   4.88   5.15   5.83   4.67   4.78   5.31
        Babble     10.76  14.29  23.10  10.16  13.21  23.13   4.99   6.43   9.16   4.60   6.13   9.05
        Factory    10.76  14.25  24.57  10.19  13.48  24.04   5.00   6.54   9.38   4.70   6.24   9.14

TABLE V

SPEAKER VERIFICATION RESULTS IN DISTORTED NIST SRE 2002 FOR DIFFERENT SPEECH ACTIVITY DETECTORS.

          w/o t-norm                        with t-norm
          EER (in %)      minDCF × 100      EER (in %)      minDCF × 100
Method    MFCC    OBTC    MFCC    OBTC      MFCC    OBTC    MFCC    OBTC

G.729B   21.69   19.41    8.52    8.05     21.22   18.54    8.14    7.53
SMSAD    22.09   19.51    8.65    8.16     21.35   18.74    8.37    7.72
HNPNR    21.18   19.13    8.75    8.34     20.72   18.64    8.14    7.49
MEBTS    23.73   22.79    9.94    9.93     23.57   22.26    9.96    9.97
AEBTS    23.33   21.89    9.96    9.93     22.90   21.45    9.97    9.96
UBGME    19.14   17.53    8.32    8.03     18.34   16.43    7.88    7.38
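The two metrics reported in the tables, EER (in %) and minDCF × 100, can be illustrated with a minimal sketch. This is not the authors' evaluation code. The function name `eer_and_min_dcf` is hypothetical, and the cost parameters (C_miss = 10, C_fa = 1, P_target = 0.01) are an assumption based on the NIST evaluation plans of that period; the tables themselves do not state them. Under that assumption the unnormalized cost cannot exceed 0.1, which would be consistent with the 10.00 ceiling visible in the minDCF × 100 columns.

```python
import numpy as np


def eer_and_min_dcf(target_scores, impostor_scores,
                    c_miss=10.0, c_fa=1.0, p_target=0.01):
    """Return (EER in %, minDCF x 100) from raw verification scores.

    The cost parameters are assumed defaults, not values taken from the tables.
    A trial is accepted when its score is >= the threshold.
    """
    tar = np.asarray(target_scores, dtype=float)
    imp = np.asarray(impostor_scores, dtype=float)

    # Candidate thresholds: every observed score, plus one value above the
    # maximum so that the "reject everything" operating point is included.
    scores = np.concatenate([tar, imp])
    thresholds = np.concatenate([np.unique(scores), [scores.max() + 1.0]])

    # Miss rate: fraction of target trials rejected at each threshold.
    p_miss = np.array([(tar < t).mean() for t in thresholds])
    # False-alarm rate: fraction of impostor trials accepted at each threshold.
    p_fa = np.array([(imp >= t).mean() for t in thresholds])

    # EER: operating point where the two error rates are closest.
    i = np.argmin(np.abs(p_miss - p_fa))
    eer = 0.5 * (p_miss[i] + p_fa[i])

    # Detection cost at every threshold; the minimum over thresholds is minDCF.
    dcf = c_miss * p_miss * p_target + c_fa * p_fa * (1.0 - p_target)

    return 100.0 * eer, 100.0 * dcf.min()


if __name__ == "__main__":
    # Toy scores for illustration only; not data from the paper.
    rng = np.random.default_rng(0)
    targets = rng.normal(loc=1.0, scale=1.0, size=500)
    impostors = rng.normal(loc=-1.0, scale=1.0, size=5000)
    eer, min_dcf = eer_and_min_dcf(targets, impostors)
    print(f"EER = {eer:.2f} %, minDCF x 100 = {min_dcf:.2f}")
```

With perfectly separated scores both values are zero. When the target and impostor score distributions coincide, the cheapest decision under these assumed costs is to reject every trial, which gives minDCF × 100 = 10.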
