PLS205 Lab 9

March 6, 2014

Topic 13: Covariance Analysis

Covariable as a tool for increasing precision

Carrying out a full ANCOVA

Testing ANOVA assumptions

Happiness!

Covariable as a Tool for Increasing Precision

The eternal struggle of the noble researcher is to reduce the amount of variation that is unexplained by the

experimental model (i.e. error). Blocking is one way of doing this, of reducing the variation-within-

treatments (MSE) so that one can better detect differences among treatments (MST). Blocking is a

powerful general technique in that it allows the researcher to reduce the MSE by allocating variation to

block effects without necessarily knowing the exact reason for block-to-block differences. The price one

pays for this general reduction in error is twofold: 1) Reduction in df_error (the error degrees of freedom), and 2) Constraint of the

experimental design, as the blocks must be crossed orthogonally with the treatments under investigation.

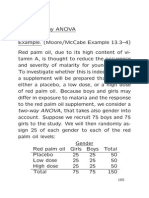

Another way of reducing unaccounted-for variation is through the use of covariables (or concomitant

variables). Covariables are specific, observable characteristics of the experimental units whose

continuous values, while independent of the treatments under investigation, are strongly correlated to the

values of the response variable in the experiment. It may sound a little confusing at first, but the concept

of covariables is quite intuitive. Consider the following example:

Baking potatoes takes forever, so a potato breeder decides to develop a fast-

baking potato. He makes a bunch of crosses and carries out a test to compare

baking times. While he hopes there are differences in baking times among the

new varieties he has made, he knows that the size of the potato, regardless of its

variety, will affect cooking time immensely. In analyzing baking times, then, the

breeder must take into account this size factor. Ideally, he would like to compare

the baking times he would have measured had all the potatoes been the same size. By using size as a covariable, he can do just that.

Unlike blocks, covariables are specific continuous variables that must be measured, and their correlation

to the response variable determined, in order to be used. One advantage over blocks is that covariables do

not need to satisfy any orthogonality criteria relative to the treatments under consideration. But a valid

ANCOVA (Analysis of Covariance) does require the covariable to meet several assumptions.
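For reference, the model behind an ANCOVA in a randomized complete block design can be sketched as follows. This is standard textbook notation rather than something stated in this handout: the symbols \mu (overall mean), \tau_i (treatment effect), \beta_j (block effect) and \varepsilon_{ij} (random error) are assumptions introduced here for illustration, and b is the single common regression slope of the response on the covariable.

```latex
Y_{ij} = \mu + \tau_i + \beta_j + b\,(X_{ij} - \bar{X}_{..}) + \varepsilon_{ij}
```

Read this way, the covariable term simply removes from each observation the part of Y that is predictable from how far its X lies from the overall mean of X. The treatment effects are then compared as if every experimental unit had the same X.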

Carrying Out a Full ANCOVA

Example 9.1 ST&D p435 [Lab9ex1.sas]

This example illustrates the correct way to code for an ANCOVA, to test all ANCOVA

assumptions, and to interpret the results of such an analysis.

PLS205 2014 1 Lab 9 (Topic 13)

In this experiment, eleven varieties of lima beans (Varieties 1-11) were evaluated for differences in

ascorbic acid content (response Y, in mg ascorbic acid / 100 g dry weight). Recognizing that differences

in maturity at harvest could affect ascorbic acid content independent of variety, the researchers measured

the percent dry matter of each variety at harvest (X) and used it as a covariable (i.e. a proxy indicator of

maturity).

So, in summary:

Treatment: Variety (1-11)

Response variable: Y (ascorbic acid content)

Covariable: X (% dry matter at harvest)

The experiment was organized as an RCBD with five blocks and one replication per block-treatment

combination.
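As a quick orientation for the output tables that follow, the degrees of freedom implied by this design can be worked out by hand. The arithmetic below is only a sketch of the usual RCBD bookkeeping, using the five blocks and eleven varieties described above.

```latex
\begin{aligned}
N &= 5 \times 11 = 55, & df_{total} &= 55 - 1 = 54, \\
df_{Block} &= 5 - 1 = 4, & df_{Variety} &= 11 - 1 = 10, \\
df_{error} &= 54 - 4 - 10 = 40 .
\end{aligned}
```

These are the values that appear in the DF column of the individual ANOVAs on X and Y.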

Data LimaC;

Do Variety = 1 to 11; * Each data line below holds one variety;

Do Block = 1 to 5; * Five X Y pairs per line, one pair per block;

Input X Y @@;

Output;

End;

End;

Cards;

34 93 33.4 94.8 34.7 91.7 38.9 80.8 36.1 80.2

39.6 47.3 39.8 51.5 51.2 33.3 52 27.2 56.2 20.6

31.7 81.4 30.1 109 33.8 71.6 39.6 57.5 47.8 30.1

37.7 66.9 38.2 74.1 40.3 64.7 39.4 69.3 41.3 63.2

24.9 119.5 24 128.5 24.9 125.6 23.5 129 25.1 126.2

30.3 106.6 29.1 111.4 31.7 99 28.3 126.1 34.2 95.6

32.7 106.1 33.8 107.2 34.8 97.5 35.4 86 37.8 88.8

34.5 61.5 31.5 83.4 31.1 93.9 36.1 69 38.5 46.9

31.4 80.5 30.5 106.5 34.6 76.7 30.9 91.8 36.8 68.2

21.2 149.2 25.3 151.6 23.5 170.1 24.8 155.2 24.6 146.1

30.8 78.7 26.4 116.9 33.2 71.8 33.5 70.3 43.8 40.9

;

Proc GLM Data = LimaC; * Model to test individual ANOVAS, one for X and one for Y;

Class Block Variety;

Model X Y = Block Variety;

Proc Plot; *Plot to see regression of Y vs. X;

Plot Y*X = Variety / vpos = 40;

Proc GLM Data = LimaC; * Model to test general regression;

Model Y = X;

Proc GLM Data = LimaC; * Testing for homogeneity of slopes;

Class Variety Block;

Model Y = Block Variety X Variety*X; * Note: Variety*X is our only interest here!;

Proc GLM Data = LimaC; * The ANCOVA;

Class Block Variety; * Note that X is NOT included as a class variable;

Model Y = Block Variety X / solution; * X enters the model as a regression variable. The solution option generates our adjustment slope (b);

LSMeans Variety / stderr pdiff adjust = Tukey; * LSMeans adjusts Y based on its correlation to X;

Run;

Quit;

The Output

1. The ANOVA with X (the covariable) as the response variable

Sum of

Source DF Squares Mean Square F Value Pr > F

Model 14 2534.566909 181.040494 18.97 <.0001

Error 40 381.654182 9.541355

Corrected Total 54 2916.221091


R-Square Coeff Var Root MSE X Mean

0.869127 9.088427 3.088908 33.98727

Source DF Type III SS Mean Square F Value Pr > F

Block 4 367.853818 91.963455 9.64 <.0001

Variety 10 2166.713091 216.671309 22.71 <.0001 ***

Depending on the situation, this ANOVA on X (the covariable) may be needed to test the assumption of

independence of the covariable from the treatments. The two possible scenarios:

1. The covariable (X) is measured before the treatment is applied. In this case, the assumption

of independence is satisfied a priori. If the treatment has yet to be applied, there is no way it

can influence the observed values of X.

2. The covariable (X) is measured after the treatment is applied. In this case, it is possible that

the treatment itself influenced the covariable (X); so one must test directly for independence.

In this case, the null hypothesis is that there are no significant differences among treatments

for the covariable (i.e. Variety in this model should be NS).

Here we are in Situation #2. The "treatment" is the lima bean variety itself, so it was certainly "applied"

before the % dry matter was measured. Here H0 is soundly rejected (p < 0.0001), so we should be very

cautious when drawing conclusions.

And how might one exercise caution exactly?

The danger here is that the detected effect of Variety on ascorbic acid content is really just the

effect of differences in maturity. If the ANOVA is significant and the ANCOVA is not, you can

conclude that difference in maturity is the driving force behind ascorbic acid differences. If, on

the other hand, your ANOVA is not significant and your ANCOVA is, you can conclude that the

covariable successfully eliminated an unwanted source of variability that was obscuring your

significant differences among Varieties.

2. The ANOVA for Y

Sum of

Source DF Squares Mean Square F Value Pr > F

Model 14 55987.11782 3999.07984 26.90 <.0001

Error 40 5947.30400 148.68260

Corrected Total 54 61934.42182

R-Square Coeff Var Root MSE Y Mean

0.903974 13.71322 12.19355 88.91818

Source DF Type III SS Mean Square F Value Pr > F

Block 4 4968.94000 1242.23500 8.35 <.0001

Variety 10 51018.17782 5101.81778 34.31 <.0001

Looking at this ANOVA on Y (the response variable), there appears to be a significant effect of Variety on ascorbic acid content; but we will wait to draw our conclusions until we see the results of the ANCOVA (see the comments above on how to exercise caution).


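Incidentally, to calculate the relative precision of the ANCOVA to the ANOVA exactly, three numbers from the individual ANOVAs above will be used: the Variety SS (2166.713091) and the Error SS (381.654182) from the ANOVA on X, and the Error MS (148.68260) from the ANOVA on Y.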

3. The General Regression

[Plot of Y*X, with the value of Variety as the plotting symbol. Vertical axis: Y, 0 to 200; horizontal axis: X, 20 to 60.]

The above plot of Y vs. X indicates a clear negative correlation between the two variables, a correlation

that is confirmed by the following regression analysis:

Sum of

Source DF Squares Mean Square F Value Pr > F

Model 1 51257.57888 51257.57888 254.44 <.0001

Error 53 10676.84293 201.44987

Corrected Total 54 61934.42182

R-Square Coeff Var Root MSE Y Mean

0.827611 15.96221 14.19330 88.91818

Source DF Type I SS Mean Square F Value Pr > F

X 1 51257.57888 51257.57888 254.44 <.0001

Standard

Parameter Estimate Error t Value Pr > |t|

Intercept 231.4084282 9.13555507 25.33 <.0001

X -4.1924590 0.26282899 -15.95 <.0001

There is a strong negative correlation (p < 0.0001, slope = -4.19) between ascorbic acid content and % dry

matter at harvest. In fact, over 82% of the variation in ascorbic acid content can be attributed to

differences in % dry matter. It is a result like this that suggests that an ANCOVA would be appropriate

for this study.
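The figures quoted in this paragraph can be recovered directly from the regression output above. The short calculation below is a worked check, not additional output; the symbols r and \hat{Y} are introduced here for illustration.

```latex
\begin{aligned}
R^2 &= \frac{SS_{Model}}{SS_{Total}} = \frac{51257.57888}{61934.42182} \approx 0.8276, \\
r &= -\sqrt{R^2} \approx -0.910 \quad \text{(negative because the slope is negative)}, \\
\hat{Y} &= 231.4084 - 4.1925\,X, \\
\hat{Y}(\bar{X}) &= 231.4084 - 4.1925 \times 33.98727 \approx 88.92 = \bar{Y}.
\end{aligned}
```

The last line is a useful sanity check: a least-squares line always passes through the point of means, here (33.98727, 88.91818).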


4. Testing for Homogeneity of Slopes

Sum of

Source DF Squares Mean Square F Value Pr > F

Model 25 60614.92214 2424.59689 53.29 <.0001

Error 29 1319.49968 45.49999

Corrected Total 54 61934.42182

R-Square Coeff Var Root MSE Y Mean

0.978695 7.586039 6.745368 88.91818

Source DF Type III SS Mean Square F Value Pr > F

Block 4 490.4973830 122.6243457 2.70 0.0504

Variety 10 832.7573771 83.2757377 1.83 0.0994

X 1 886.4908373 886.4908373 19.48 0.0001

X*Variety 10 883.9544164 88.3954416 1.94 0.0796 NS

With this model, we checked the homogeneity of slopes across treatments, assuming that the block effects

are additive and do not affect the regression slopes. The interaction test is NS at the 5% level (p =

0.0796), so the assumption of slope homogeneity across treatments is not violated. Good. This

interaction must be NS in order for us to carry out the ANCOVA.
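One way to see where the interaction F value comes from is to treat it as a comparison of two nested models: the separate-slopes model fitted here (Error SS 1319.49968 on 29 df) and the common-slope ANCOVA model (Error SS 2203.45410 on 39 df, as reported in the ANCOVA table of section 5). The calculation below is a sketch of that comparison; the subscripts "common" and "separate" are labels introduced here.

```latex
F = \frac{(SSE_{common} - SSE_{separate}) / (df_{common} - df_{separate})}{SSE_{separate} / df_{separate}}
  = \frac{(2203.45410 - 1319.49968) / (39 - 29)}{1319.49968 / 29}
  = \frac{88.395}{45.500} \approx 1.94
```

The numerator difference, 883.954, is the Type III SS shown for X*Variety, so the test asks whether allowing eleven slopes instead of one reduces the error by more than chance would.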

5. Finally, the ANCOVA

Sum of

Source DF Squares Mean Square F Value Pr > F

Model 15 59730.96772 3982.06451 70.48 <.0001

Error 39 2203.45410 56.49882

Corrected Total 54 61934.42182

R-Square Coeff Var Root MSE Y Mean

0.964423 8.453355 7.516570 88.91818

Source DF Type III SS Mean Square F Value Pr > F

Block 4 756.392459 189.098115 3.35 0.0190

Variety 10 7457.621358 745.762136 13.20 <.0001 ***

X 1 3743.849903 3743.849903 66.26 <.0001

Here we find significant differences in ascorbic acid content among varieties besides those which

correspond to differences in percent dry matter at harvest. A couple of things to notice:

1. The model df has increased from 14 to 15 because X is now included as a regression variable.

Correspondingly, the error df has dropped by 1 (from 40 to 39).

2. Adding the covariable to the model increased R² from 90.4% to 96.4%, illustrating the fact

that inclusion of the covariable helps to explain more of the observed variation, increasing

our precision.

3. Because we are now adjusting the Block and Variety effects by the covariable regression, no

longer do the SS of the individual model effects add up to the Model SS:

756.39 (Block SS) + 7457.62 (Variety SS) + 3743.85 (X SS) = 11957.86 ≠ 59730.97 (Model SS)


When we allow the regression on percent dry matter at harvest to account for some of the observed

variation in ascorbic acid content, we find that less variation is uniquely attributable to Blocks

and Varieties than before. The covariable SS (3743.85) comes straight out of the ANOVA

Error SS (5947.30 - 2203.45).
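The bookkeeping in this note can be verified with the numbers printed in the two tables. The lines below are a worked check using only values from the ANOVA on Y and the ANCOVA output.

```latex
\begin{aligned}
SS_X &= SSE_{ANOVA} - SSE_{ANCOVA} = 5947.30400 - 2203.45410 = 3743.84990, \\
F_X &= \frac{SS_X / 1}{MSE_{ANCOVA}} = \frac{3743.849903}{56.49882} \approx 66.26, \\
R^2_{ANCOVA} &= 1 - \frac{2203.45410}{61934.42182} \approx 0.9644 .
\end{aligned}
```

The covariable therefore costs a single error degree of freedom but removes well over half of the ANOVA Error SS.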

As with the ANOVAs before, one term from the ANCOVA output, the Error MS (56.49882), is used in the calculation of relative precision, which can now be carried out:

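\[
\text{Effective } MSE_{Y,\,\text{adjusted}} = MSE_{Y,\,\text{adjusted}} \left[ 1 + \frac{SST_X}{(t-1)\,SSE_X} \right] = 56.49882 \left[ 1 + \frac{2166.713091}{10 \times 381.654182} \right] = 88.57412238
\]

\[
\text{Relative Precision} = \frac{MSE_{Y,\,\text{unadjusted}}}{\text{Effective } MSE_{Y,\,\text{adjusted}}} = \frac{148.68260}{88.57412238} = 1.68
\]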

In this particular case, we see that each replication with the covariable is as effective as 1.68 without; so

the ANCOVA is 1.68 times more precise than the ANOVA ['Precise' in this sense refers to our ability to

detect the real effect of Variety on ascorbic acid content (i.e. our ability to isolate the true effect of

Variety from the effect of dry matter content at harvest)].
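For readers who prefer to let SAS do the arithmetic, the relative precision can be reproduced in a short Data step. This is a minimal sketch: the variable names (MSE_adj, SST_X, SSE_X, t, MSE_unadj, EffMSE, RelPrec) are made up for this illustration, and the constants are typed in by hand from the output tables above rather than read from the GLM results.

```sas
Data _null_;
   MSE_adj   = 56.49882;     * Error MS from the ANCOVA;
   SST_X     = 2166.713091;  * Variety SS from the ANOVA on X;
   SSE_X     = 381.654182;   * Error SS from the ANOVA on X;
   t         = 11;           * Number of treatments (varieties);
   MSE_unadj = 148.68260;    * Error MS from the ANOVA on Y;
   EffMSE  = MSE_adj * (1 + SST_X / ((t - 1) * SSE_X));
   RelPrec = MSE_unadj / EffMSE;
   Put EffMSE= RelPrec=;     * Writes both values to the SAS log;
Run;
```

The values written to the log should agree with the hand calculation (an effective MSE of about 88.57 and a relative precision of about 1.68).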

6. Calculation of the slope (b)

The '/ solution' option tacked on the end of the Model statement finds the residuals for both X and Y and

then regresses these residuals on one another to calculate the "best" single slope value that describes the

relationship between X and Y for all classification variables. The output:

Standard

Parameter Estimate Error t Value Pr > |t|

Intercept 178.9589389 B 15.11416467 11.84 <.0001

X -3.1320177 0.38475537 -8.14 <.0001

We'll use this slope here in a bit, so keep it in mind.
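The residual-on-residual description above can be tried out directly. The sketch below assumes the LimaC data set from Example 9.1; the names LimaRes, ResX and ResY are hypothetical and chosen only for this illustration. It first removes the Block and Variety effects from both X and Y, then regresses the Y residuals on the X residuals with no intercept.

```sas
Proc GLM Data = LimaC noprint;      * Fit the RCBD model to X and to Y;
   Class Block Variety;
   Model X Y = Block Variety;
   Output out = LimaRes r = ResX ResY;  * One residual name per response, in model order;
Run;
Quit;

Proc Reg Data = LimaRes;            * Regress the Y residuals on the X residuals;
   Model ResY = ResX / noint;       * Residuals have mean zero, so no intercept is needed;
Run;
Quit;
```

The slope estimate for ResX should match the ANCOVA slope of -3.1320177. Its standard error will differ slightly from the one printed by the ANCOVA, because Proc Reg does not know that degrees of freedom were already spent on Block and Variety.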

7. Tukey-Kramer Separation of Adjusted Means

SAS then also generates a matrix of p-values for all the pair-wise comparisons of the eleven varieties,

their means appropriately adjusted by the covariable. Notice, too, that we requested the standard errors

for all the treatment means as these are often requested in the presentation of adjusted means.


Testing ANOVA Assumptions

To test the ANOVA assumptions for this model, we rely on an important concept: An ANCOVA of the

original data is equivalent to an ANOVA of regression-adjusted data. In other words, if we define a

regression-adjusted response variable Z:

\[ Z_i = Y_i - b\,(X_i - \bar{X}) \]

then we will find that an ANCOVA on Y is equivalent to an ANOVA on Z. Let's try it:

Example 9.2 [Lab9ex2.sas]

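Data LimaC; * Same Data step as in Example 9.1, with Z added;

Do Variety = 1 to 11;

Do Block = 1 to 5;

Input X Y @@;

Z = Y + 3.1320177*(X - 33.98727); * Create the new regression-adjusted variable Z;

Output;

End;

End;

* The data lines after Cards are the same as in Example 9.1 and are omitted here;

Cards;

;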

Proc GLM Data = LimaC; * The ANOVA of adjusted values;

Class Block Variety;

Model Z = Block Variety;

Means Variety;

Means Variety / Tukey;

Output out = LimaCPR p = Pred r = Res;

Proc Univariate Normal Data = LimaCPR; * Test normality of residuals;

Var Res;

Proc GLM Data = LimaC; * Levene's Test for Variety;

Class Variety;

Model Z = Variety;

Means Variety / hovtest = Levene;

Proc GLM Data = LimaCPR; * Tukey's Test for Nonadditivity;

Class Block Variety;

Model Z = Block Variety Pred*Pred;

Run; Quit;
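To make the adjustment in the Data step concrete, here is the calculation of Z for the first observation in the data set (X = 34, Y = 93), using the slope b = -3.1320177 and the mean of X, 33.98727, both taken from the earlier output. This is a worked illustration only.

```latex
Z_1 = Y_1 - b\,(X_1 - \bar{X}) = 93 + 3.1320177 \times (34 - 33.98727) = 93 + 3.1320177 \times 0.01273 \approx 93.04
```

Because this observation lies almost exactly at the mean of X, its adjustment is tiny. Observations far from the mean of X are moved much more, and in the direction opposite to the slope.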

A Comparison of Means

The table below shows the adjusted Variety means generated by the LSMeans statement in the ANCOVA

alongside the normal Variety means generated by the Means statement in the ANOVA above:

Variety    ANCOVA LSMeans    ANOVA Means

1 92.587327 92.587327

2 79.116425 79.116425

3 78.103108 78.103108

4 84.530117 84.530117

5 95.983054 95.983054

6 97.506844 97.506844

7 99.978678 99.978678

8 72.044748 72.044748

9 81.146722 81.146722

10 122.783843 122.783843

11 74.319134 74.319134


Pretty impressive. This is striking confirmation that our imposed adjustment (Z) accounts for the

covariable just as the ANCOVA does.
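The same adjusted mean can be obtained by hand for a single variety. The sketch below assumes that the five (X, Y) pairs on the first data line of Example 9.1 are the five observations of Variety 1; the symbols \bar{X}_1 and \bar{Y}_1 for that variety's raw means are introduced here.

```latex
\begin{aligned}
\bar{X}_1 &= \frac{34 + 33.4 + 34.7 + 38.9 + 36.1}{5} = 35.42, \qquad
\bar{Y}_1 = \frac{93 + 94.8 + 91.7 + 80.8 + 80.2}{5} = 88.10, \\
\bar{Y}_{1,\,adj} &= \bar{Y}_1 - b\,(\bar{X}_1 - \bar{X}) = 88.10 + 3.1320177 \times (35.42 - 33.98727) \approx 88.10 + 4.487 = 92.587
\end{aligned}
```

This agrees with the first row of the table: Variety 1 was harvested at slightly above-average dry matter, so its ascorbic acid mean is adjusted upward.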

A Comparison of Analyses

Take another look at the ANCOVA table:

Dependent Variable: Y

Sum of

Source DF Squares Mean Square F Value Pr > F

Model 15 59730.96772 3982.06451 70.48 <.0001

Error 39 2203.45410 56.49882

Corrected Total 54 61934.42182

Source DF Type III SS Mean Square F Value Pr > F

Block 4 756.392459 189.098115 3.35 0.0190 *

Variety 10 7457.621358 745.762136 13.20 <.0001 ***

X 1 3743.849903 3743.849903 66.26 <.0001

and compare it now with the ANOVA table:

Dependent Variable: Z

Sum of

Source DF Squares Mean Square F Value Pr > F

Model 14 11752.78353 839.48454 15.24 <.0001

Error 40 2203.45410 55.08635

Corrected Total 54 13956.23763

Source DF Type III SS Mean Square F Value Pr > F

Block 4 768.31526 192.07882 3.49 0.0156 *

Variety 10 10984.46826 1098.44683 19.94 <.0001 ***

A couple of things to notice:

a. Error SS is exactly the same in both (2203.45410), indicating that the explanatory powers of

the two analyses are the same.

b. The F values have shifted, but this is understandable due in part to the shifts in df.

c. The p-values are very similar in both analyses, giving us the same levels of significance as

before.
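The df shift mentioned in point b can be made explicit for the error term. The ANOVA on Z does not know that the slope b was estimated from the same data, so it divides the shared Error SS by 40 rather than 39. The comparison below uses only numbers from the two tables.

```latex
MSE_{ANCOVA} = \frac{2203.45410}{39} = 56.49882, \qquad
MSE_{ANOVA\ on\ Z} = \frac{2203.45410}{40} = 55.08635
```

The ANOVA on Z therefore slightly understates the error variance. That is harmless for checking assumptions, but it is one reason the ANCOVA table remains the reference for the F tests.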

It is important to show the general equivalence of these two approaches because, now that we have reduced the design to a simple RCBD, testing the assumptions is straightforward.

1. Normality of Residuals

Tests for Normality

Test --Statistic--- -----p Value------

Shapiro-Wilk W 0.970883 Pr < W 0.2016 NS

Our old friend Shapiro-Wilk is looking good.


2. Homogeneity of Variety Variances

Levene's Test for Homogeneity of Z Variance

ANOVA of Squared Deviations from Group Means

Sum of Mean

Source DF Squares Square F Value Pr > F

Variety 10 69418.0 6941.8 1.02 0.4408 NS

Error 44 298776 6790.4

Lovely, that.

3. Tukey Test for Nonadditivity

Source DF Type III SS Mean Square F Value Pr > F

Block 4 76.4248422 19.1062106 0.34 0.8485

Variety 10 156.1917108 15.6191711 0.28 0.9823

Pred*Pred 1 19.3869147 19.3869147 0.35 0.5597 NS

And there we go. Easy.

The Take Home Message

To test the assumptions of a covariable model, adjust the original data by the regression and

proceed with a regular ANOVA. In fact, adjusting the original data by the regression and

proceeding with an ANOVA is a valid approach to the overall analysis. [Note, however, that one

first needs to demonstrate homogeneity of slopes to be able to use a common slope to adjust all

observations.]
