Hill Climbing

Uploaded by Karina Andreea

To convert a solution generated in binary (base 2) to decimal (base 10), a decode function uses a formula that takes the binary number, the minimum and maximum possible values of the function domain, and the number of bits used to represent numbers in the domain. The formula calculates the decimal value as the minimum value plus the decimal equivalent of the binary value times the range over the maximum possible value represented by the number of bits.

HILL CLIMBING:

Because the solution is generated in base 2, it has to be converted to base 10. A decode function performs this conversion using the formula:

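$$x_{10} = a + \mathrm{decimal}(x_2) \cdot \frac{b - a}{2^n - 1}$$

Where:

$x_{10}$ is the number in base 10

$x_2$ is the number in base 2, and $\mathrm{decimal}(x_2)$ is its integer value

$a$ and $b$ are the bounds of the domain of the function $f(x)$, $a \le x \le b$: $a$ is the smallest value that $x$ can take and $b$ is the largest. For example, De Jong's function 1 is defined on $[-5.12, 5.12]$, so $a = -5.12$ and $b = 5.12$

$n$ is the number of bits used to represent $x$, obtained from:

$N = (b - a) \cdot 10^{22}$

$n = \lceil \log_2 N \rceil$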
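
The decode step and the bit-count calculation above can be sketched in Python. This is a minimal illustration, not the original author's code: the function names `decode` and `bits_needed`, the parameter name `exponent`, and the choice to hold the base-2 solution as a string of `'0'` and `'1'` characters are all assumptions made for the example.

```python
import math


def bits_needed(a: float, b: float, exponent: int) -> int:
    """Return n = ceil(log2(N)) with N = (b - a) * 10**exponent.

    `exponent` is a hypothetical parameter name; the text above uses 22.
    """
    N = (b - a) * 10 ** exponent
    return math.ceil(math.log2(N))


def decode(bits: str, a: float, b: float) -> float:
    """Map a base-2 string onto [a, b] using
    x10 = a + decimal(x2) * (b - a) / (2**n - 1), where n = len(bits).
    """
    n = len(bits)
    if n == 0:
        raise ValueError("bits must contain at least one digit")
    # int(bits, 2) is decimal(x2): the integer value of the bit string.
    return a + int(bits, 2) * (b - a) / (2 ** n - 1)


# De Jong's function 1 domain from the text: [-5.12, 5.12]
a, b = -5.12, 5.12
lowest = decode("0" * 10, a, b)   # all zeros maps to a
highest = decode("1" * 10, a, b)  # all ones maps to b (up to rounding)
n = bits_needed(a, b, 22)         # bit count for the exponent in the text
```

With this mapping the all-zeros string decodes to `a` and the all-ones string decodes to `b`, so every bit string of length `n` lands inside the domain.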

You might also like

- Microeconomic Theory Basic Principles and Extensions 12th Edition Nicholson Solutions ManualDocument31 pagesMicroeconomic Theory Basic Principles and Extensions 12th Edition Nicholson Solutions Manualslacklyroomage6kmd6100% (30)

- 2015 Sasmo Maths Class 3Document9 pages2015 Sasmo Maths Class 3Meenakshi VijaykumarNo ratings yet

- Isye 6669 2019 Fall Midterm Solution: Instructors: Prof. Shabbir Ahmed and Prof. Andy SunDocument12 pagesIsye 6669 2019 Fall Midterm Solution: Instructors: Prof. Shabbir Ahmed and Prof. Andy SunhaoNo ratings yet

- MATH 1201 Written Assignment Unit 1Document4 pagesMATH 1201 Written Assignment Unit 1asdsafsvvsg100% (1)

- 52 Test and Assess Your Brain Quotient: What Should Replace The Question Mark?Document10 pages52 Test and Assess Your Brain Quotient: What Should Replace The Question Mark?Rd manNo ratings yet

- BV Cvxbook Extra Exercises2Document175 pagesBV Cvxbook Extra Exercises2Morokot AngelaNo ratings yet

- Quadratic Functions and ModelsDocument12 pagesQuadratic Functions and Modelstarun gehlotNo ratings yet

- Algebra Class 10 (Zambak)Document493 pagesAlgebra Class 10 (Zambak)elcebir80% (15)

- Abstract Algebra HomeworkDocument10 pagesAbstract Algebra HomeworkkyoshizenNo ratings yet

- Math 1201 Written Assignment Unit 1 SolutionsDocument4 pagesMath 1201 Written Assignment Unit 1 Solutions247xchangerNo ratings yet

- Math 1201 Written Assignment Unit 1 SolutionsDocument4 pagesMath 1201 Written Assignment Unit 1 Solutions247xchangerNo ratings yet

- FungsiDocument2 pagesFungsifeby zahraNo ratings yet

- Function Operations and Composition of FunctionsDocument41 pagesFunction Operations and Composition of FunctionsMohy SayedNo ratings yet

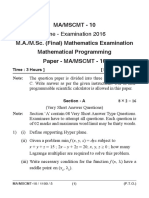

- Ma-Mscmt-10 J16Document5 pagesMa-Mscmt-10 J16Dilip BhatiNo ratings yet

- Optimization Theory and MethodsDocument7 pagesOptimization Theory and MethodsAbimael Salinas PachecoNo ratings yet

- Envelope TheoreTms, Bordered Hessians - BI Norwegian School ManagmentDocument20 pagesEnvelope TheoreTms, Bordered Hessians - BI Norwegian School ManagmentTabernáculoLaVozdeDiosNo ratings yet

- BV Cvxbook Extra ExercisesDocument187 pagesBV Cvxbook Extra ExercisesMorokot AngelaNo ratings yet

- Lecture4-PO SS2011 04.2 MultidimensionalOptimizationEqualityConstraint p5Document5 pagesLecture4-PO SS2011 04.2 MultidimensionalOptimizationEqualityConstraint p5Everton CollingNo ratings yet

- HW2 SolDocument5 pagesHW2 Solapple tedNo ratings yet

- Test Yourself - (Add, Prefix, Output)Document2 pagesTest Yourself - (Add, Prefix, Output)Vedant DubeyNo ratings yet

- Solution of A Boundary Value Problem by Rayleigh-Ritz MethodDocument1 pageSolution of A Boundary Value Problem by Rayleigh-Ritz Methodanam abbasNo ratings yet

- BV Cvxbook Extra ExercisesDocument165 pagesBV Cvxbook Extra ExercisesscatterwalkerNo ratings yet

- NPTEL Assignment 1 OptDocument14 pagesNPTEL Assignment 1 OptSoumyadeep BoseNo ratings yet

- Cal24 Leibniz Notation For The DerivativeDocument2 pagesCal24 Leibniz Notation For The Derivativemarchelo_cheloNo ratings yet

- 11 Ma 1 A Section 1 Sol 6Document2 pages11 Ma 1 A Section 1 Sol 6Alexander LopezNo ratings yet

- Functions and Calculus Investigation: Designing A Roller CoasterDocument6 pagesFunctions and Calculus Investigation: Designing A Roller CoasterprogamerNo ratings yet

- EENG703 - Assignment04 (AutoRecovered)Document19 pagesEENG703 - Assignment04 (AutoRecovered)Faisal MumtazNo ratings yet

- Dwnload Full Microeconomic Theory Basic Principles and Extensions 12th Edition Nicholson Solutions Manual PDFDocument36 pagesDwnload Full Microeconomic Theory Basic Principles and Extensions 12th Edition Nicholson Solutions Manual PDFscantletdecumanszfdq100% (13)

- PG 267/ Eg3. Cos (X) DX 2 .: Area Under The Graph of A Nonnegative FunctionDocument11 pagesPG 267/ Eg3. Cos (X) DX 2 .: Area Under The Graph of A Nonnegative Functionaye pyoneNo ratings yet

- Steepest DescentDocument4 pagesSteepest DescentvaraduNo ratings yet

- Lower Sixth MathematicsDocument4 pagesLower Sixth MathematicsKuinye NanjeNo ratings yet

- Chapter 1 - The IntegralDocument12 pagesChapter 1 - The IntegralKevin XavierNo ratings yet

- Introduction To Digital Design: Chapter 2: Combinational Logic CircuitsDocument20 pagesIntroduction To Digital Design: Chapter 2: Combinational Logic CircuitsRawan F HamadNo ratings yet

- COSC6397 Homework Assignment 1 (Larger-Scale Fading)Document2 pagesCOSC6397 Homework Assignment 1 (Larger-Scale Fading)Tame DaxNo ratings yet

- Chapter 9Document29 pagesChapter 9Subrat Kumar ShaNo ratings yet

- Ugmath2019 Solutions PDFDocument5 pagesUgmath2019 Solutions PDFVaibhav BajpaiNo ratings yet

- Ug MathsDocument5 pagesUg MathsMayank PrakashNo ratings yet

- Examples of Multiple Choice Math Exam Questions For Grade 3 High School Along With Answer Keys and ExplanationsDocument6 pagesExamples of Multiple Choice Math Exam Questions For Grade 3 High School Along With Answer Keys and ExplanationsBENDAHARA1 PANCATENGAHNo ratings yet

- Stationary Points of Functions: Local Maximum and Local MinimumDocument38 pagesStationary Points of Functions: Local Maximum and Local MinimumMuhammad yousufNo ratings yet

- Function Operations and Composition of FunctionsDocument29 pagesFunction Operations and Composition of FunctionsBretana joanNo ratings yet

- Functions (Part 1)Document12 pagesFunctions (Part 1)Sachin KumarNo ratings yet

- Pset13 Fall2020Document2 pagesPset13 Fall2020Matos DanielNo ratings yet

- Math 9-Q1-Week-6Document12 pagesMath 9-Q1-Week-6Pinky FaithNo ratings yet

- Copper SmithDocument12 pagesCopper Smithkr0465No ratings yet

- Business23 Leibniz Notation of The DerivativeDocument2 pagesBusiness23 Leibniz Notation of The Derivativemarchelo_cheloNo ratings yet

- Matematica CalculoDocument2 pagesMatematica CalculomarciliodqNo ratings yet

- SOAL 'N' JAWABAN SPK P. RUZARDIDocument17 pagesSOAL 'N' JAWABAN SPK P. RUZARDIFajar RachmantoNo ratings yet

- ME 310 Numerical Methods OptimizationDocument11 pagesME 310 Numerical Methods OptimizationMert YılmazNo ratings yet

- Mathematics 1 - Additional Exercises: Exercise 1Document2 pagesMathematics 1 - Additional Exercises: Exercise 1HenkNo ratings yet

- Quadratics and ApplicationsDocument9 pagesQuadratics and ApplicationsRashik RayatNo ratings yet

- Test 2 Working With PolynomialsDocument10 pagesTest 2 Working With PolynomialsWilson ZhangNo ratings yet

- EN112 - Functions and Limits 1: Department of Mathematics and Computer ScienceDocument22 pagesEN112 - Functions and Limits 1: Department of Mathematics and Computer SciencePoli KialouNo ratings yet

- Mathematics GR 12Document1 pageMathematics GR 12reacharunkNo ratings yet

- Gra 65161 - 202020 - 20.11.2020 - EgDocument14 pagesGra 65161 - 202020 - 20.11.2020 - EgHien NgoNo ratings yet

- AreaDocument7 pagesAreaFernie Villanueva BucangNo ratings yet

- NCERT Solutions For Class 11 Maths Chapter 2 Relations and Functions Miscellaneous ExerciseDocument7 pagesNCERT Solutions For Class 11 Maths Chapter 2 Relations and Functions Miscellaneous ExerciseBijay SchoolNo ratings yet

- PS1 Umax SolsDocument5 pagesPS1 Umax SolsebbamorkNo ratings yet

- Root Finding: Dr. Gokul K. CDocument37 pagesRoot Finding: Dr. Gokul K. CAmar MandalNo ratings yet

- WS8Sols PDFDocument2 pagesWS8Sols PDFLUIS FELIPE MOSQUERA HERNANDEZNo ratings yet

- Unit - 5 PDFDocument4 pagesUnit - 5 PDFNivitha100% (1)

- Paradise Lost PackDocument46 pagesParadise Lost PackKarina AndreeaNo ratings yet

- Limba Engleza - Exercitii Pentru AdmitereDocument14 pagesLimba Engleza - Exercitii Pentru AdmitereKarina AndreeaNo ratings yet

- Joyce Ellis, On The TownDocument4 pagesJoyce Ellis, On The TownKarina AndreeaNo ratings yet

- Then Was Not Non-Axistent Nor Existent:: Griffith'S TranslationDocument31 pagesThen Was Not Non-Axistent Nor Existent:: Griffith'S TranslationKarina Andreea100% (1)

- Studia Humanitatis: Renaissance Humanism Was A Revival in The Study ofDocument1 pageStudia Humanitatis: Renaissance Humanism Was A Revival in The Study ofKarina AndreeaNo ratings yet

- Estelle de Koning - Female Agency in The NibelungenliedDocument51 pagesEstelle de Koning - Female Agency in The NibelungenliedKarina AndreeaNo ratings yet

- Unsupervised Edge Detector Based On Evolved Cellular AutomataDocument11 pagesUnsupervised Edge Detector Based On Evolved Cellular AutomataKarina AndreeaNo ratings yet

- The Invisible World of The RigvedaDocument13 pagesThe Invisible World of The RigvedaKarina AndreeaNo ratings yet

- Studia Univ. Babes - BOLYAI, INFORMATICA, Volume LXV, Number 1, 2020 DOI: 10.24193/subbi.2020.1.06Document16 pagesStudia Univ. Babes - BOLYAI, INFORMATICA, Volume LXV, Number 1, 2020 DOI: 10.24193/subbi.2020.1.06Karina AndreeaNo ratings yet

- Studia Univ. Babes - BOLYAI, INFORMATICA, Volume LXII, Number 1, 2017 DOI: 10.24193/subbi.2017.1.01Document10 pagesStudia Univ. Babes - BOLYAI, INFORMATICA, Volume LXII, Number 1, 2017 DOI: 10.24193/subbi.2017.1.01Karina AndreeaNo ratings yet

- Life and Career: Édouard Juda Colonne (23 July 1838 - 28 March 1910) Was A FrenchDocument2 pagesLife and Career: Édouard Juda Colonne (23 July 1838 - 28 March 1910) Was A FrenchKarina AndreeaNo ratings yet

- Feat. DrakeDocument2 pagesFeat. DrakeKarina AndreeaNo ratings yet

- Verse 1: DrakeDocument3 pagesVerse 1: DrakeKarina AndreeaNo ratings yet

- A C++ Programme For Global Optimization: Serguei Zertchaninov and Kaj MadsenDocument14 pagesA C++ Programme For Global Optimization: Serguei Zertchaninov and Kaj MadsenKarina AndreeaNo ratings yet

- Evolutionary Cellular Automata For Image Segmentation and Noise Filtering Using Genetic AlgorithmsDocument9 pagesEvolutionary Cellular Automata For Image Segmentation and Noise Filtering Using Genetic AlgorithmsKarina AndreeaNo ratings yet

- Einstein and Eddington Is A British Single Drama Produced byDocument1 pageEinstein and Eddington Is A British Single Drama Produced byKarina AndreeaNo ratings yet

- Estimating Spatial Probit Models in RDocument14 pagesEstimating Spatial Probit Models in RJose CobianNo ratings yet

- Power SeriesDocument13 pagesPower SeriesCillalois Marie FameroNo ratings yet

- List Jurnal Bidang Matematika Dan Pendidikan Matematika KategoriDocument8 pagesList Jurnal Bidang Matematika Dan Pendidikan Matematika Kategorippg.lailanurhayati71No ratings yet

- Capacitance and Laplace's EquationDocument12 pagesCapacitance and Laplace's EquationKumaran G Asst. Professor100% (1)

- 2020 Kings College Budo s4 Mathematics Paper 1 Pre Uneb Test 2Document5 pages2020 Kings College Budo s4 Mathematics Paper 1 Pre Uneb Test 2Okiring JonahNo ratings yet

- B Kolman Introductory Linear Algebra With Applications Macmillan 1976 Xvi426 PPDocument2 pagesB Kolman Introductory Linear Algebra With Applications Macmillan 1976 Xvi426 PPSoung WathannNo ratings yet

- Normal Probability Distribution CHARLS PDFDocument21 pagesNormal Probability Distribution CHARLS PDFAnne BergoniaNo ratings yet

- ODE Formula SheetDocument4 pagesODE Formula SheetJohnNo ratings yet

- Determinants - Advanced MathDocument21 pagesDeterminants - Advanced MathJoan PoncedeleonNo ratings yet

- Maths Project - Square RootDocument7 pagesMaths Project - Square RootabcNo ratings yet

- CXC 2002Document6 pagesCXC 2002Oneil LewinNo ratings yet

- Harcourt CompleteDocument498 pagesHarcourt CompleteAkjafndjkNo ratings yet

- LXMLS Lab GuideDocument102 pagesLXMLS Lab GuidemldgmNo ratings yet

- Question Bank - 2markDocument17 pagesQuestion Bank - 2markong0625100% (1)

- Calculus DLL Week 9Document10 pagesCalculus DLL Week 9audie mataNo ratings yet

- MANSCI - Chapter 2Document2 pagesMANSCI - Chapter 2Rae WorksNo ratings yet

- Digital Logic Design Chap 4 NotesDocument34 pagesDigital Logic Design Chap 4 NotesHelly BoNo ratings yet

- MLT NotesDocument2 pagesMLT NotesMurthi MkNo ratings yet

- G11 Add Maths P2Document18 pagesG11 Add Maths P2Trevor G. SamarooNo ratings yet

- End Term ProjectDocument6 pagesEnd Term ProjectMoni Jana100% (7)

- FEM - Arc-Lenght - Sumup TechniquesDocument11 pagesFEM - Arc-Lenght - Sumup TechniquesabimalainNo ratings yet

- Int. J. Multiphase Flow Vol. 12, No. 5, Pp. 745-758, 1986Document14 pagesInt. J. Multiphase Flow Vol. 12, No. 5, Pp. 745-758, 1986rockyNo ratings yet

- Amc 8 2019Document10 pagesAmc 8 2019Madhu Mohan ChandranNo ratings yet

- Iit Ashram: Class: 10 GSEB: FULL TEST-1 MathematicsDocument4 pagesIit Ashram: Class: 10 GSEB: FULL TEST-1 MathematicsRutvik SenjaliyaNo ratings yet

- 60 Days DSA ChallengeDocument33 pages60 Days DSA Challengepk100% (1)

- Vaidic MathematicsDocument51 pagesVaidic MathematicsVilas Shah100% (1)

- Intermediate Mathematical Challenge: Solutions and InvestigationsDocument21 pagesIntermediate Mathematical Challenge: Solutions and InvestigationsDoddy FeryantoNo ratings yet

- LABEX1Document27 pagesLABEX1Thành VỹNo ratings yet