Batch 11 (Histogram)

Uploaded by Abhinav Kumar

REVERSIBLE DATA HIDING BASED HISTOGRAM MODIFICATION

CHAPTER-1

1. INTRODUCTION

1.1 INTRODUCTION TO PROJECT

Reversible data embedding, often referred to as lossless or invertible data embedding, is a
technique that embeds data into an image in such a way that the original image can be recovered
exactly. In many applications, including art, medical, and military imaging, this reversibility is
highly desirable, and a considerable amount of research has been done on it over the last decade.
Conventional work has devoted extensive effort to increasing the embedding capacity without
deteriorating the visual quality of the embedded image. The key to reversible data embedding is to
find an embedding area in an image by exploiting the redundancy in the image content. Early
reversible algorithms used lossless data compression to free an extra area that can hold the
to-be-embedded data. To expand this extra space, more recent algorithms reduce the redundancy by
performing pixel value prediction and by utilizing the image histogram. The state-of-the-art
techniques exhibit high embedding capacity without severely degrading the visual quality of the
embedded result.

However, since subjective visual quality is not taken into account in the conventional methods,
the quality of the resulting embedded images is often unsatisfactory. In this work, we propose a
histogram-modification-based reversible data embedding algorithm that considers the human visual
system (HVS). In the proposed algorithm, a local causal window is used to predict a pixel value and
to estimate an edge. Then, using the concept of the just noticeable difference (JND), the pixels in
smooth regions and in edge regions are treated differently to reduce the perceptual distortion.
Experimental results demonstrate that, compared with conventional algorithms, the proposed
algorithm produces subjectively higher-quality embedded images while providing a similar embedding
capacity.

1.2 INTRODUCTION TO DIGITAL IMAGE PROCESSING

1.2.1 BACKGROUND

Digital image processing is an area characterized by the need for extensive experimental work to
establish the viability of proposed solutions to a given problem. An important characteristic
underlying the design of image processing systems is the significant level of testing and
experimentation that is normally required before arriving at an acceptable solution. This
characteristic implies that the ability to formulate approaches and quickly prototype

TRINITY COLLEGE OF ENGINEERING & TECHNOLOGY Page 1

candidate solutions generally plays a major role in reducing the cost and time required to arrive
at a viable system implementation.

An image may be defined as a two-dimensional function f(x, y), where x and y are spatial
coordinates, and the amplitude of f at any pair of coordinates (x, y) is called the intensity or
gray level of the image at that point. When x, y, and the amplitude values of f are all finite,
discrete quantities, we call the image a digital image. The field of digital image processing (DIP)
refers to processing digital images by means of a digital computer. A digital image is composed of
a finite number of elements, each of which has a particular location and value. These elements are
called pixels.

Vision is the most advanced of our senses, so it is not surprising that images play the single
most important role in human perception. However, unlike humans, who are limited to the visual band
of the electromagnetic (EM) spectrum, imaging machines cover almost the entire EM spectrum, ranging
from gamma rays to radio waves. They can also operate on images generated by sources that humans
are not accustomed to associating with images.

There is no general agreement among authors regarding where image processing stops and other
related areas, such as image analysis and computer vision, start. Sometimes a distinction is made
by defining image processing as a discipline in which both the input and the output of a process
are images. This is a limiting and somewhat artificial boundary. The area of image analysis (image
understanding) lies between image processing and computer vision. There are no clear-cut
boundaries in the continuum from image processing at one end to complete vision at the other.

However, one useful paradigm is to consider three types of computerized processes in this
continuum: low-, mid-, and high-level processes. Low-level processes involve primitive operations
such as noise reduction, contrast enhancement, and image sharpening. A low-level process is
characterized by the fact that both its inputs and outputs are images. Mid-level processes on
images involve tasks such as segmentation, description of the segmented objects to reduce them to
a form suitable for computer processing, and classification of individual objects.

A mid-level process is characterized by the fact that its inputs generally are images but its
outputs are attributes extracted from those images. Finally, higher-level processing involves
“making sense” of an ensemble of recognized objects, as in image analysis, and, at the far end of
the continuum, performing the cognitive functions normally associated with human vision. Digital
image processing, as already defined, is used successfully in a broad range of areas of
exceptional social and economic value.

1.2.2 What is an image

An image is represented as a two-dimensional function f(x, y), where x and y are spatial
coordinates and the amplitude of f at any pair of coordinates (x, y) is called the intensity of
the image at that point.

Gray scale image:

A grayscale image is a function I(x, y) of the two spatial coordinates of the image plane. I(x, y)
is the intensity of the image at the point (x, y) on the image plane, and it takes non-negative
values. Assuming the image is bounded by a rectangle [0, a] × [0, b], we have
I: [0, a] × [0, b] → [0, ∞).

Color image:

A color image can be represented by three functions: R(x, y) for red, G(x, y) for green, and
B(x, y) for blue. An image may be continuous with respect to the x and y coordinates and also in
amplitude. Converting such an image to digital form requires that both the coordinates and the
amplitude be digitized. Digitizing the coordinate values is called sampling. Digitizing the
amplitude values is called quantization.
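
The two digitization steps can be illustrated with a minimal Python sketch. It is not part of the original report: the function name `sample_and_quantize`, the test image `ramp`, and the assumption that the continuous amplitude lies in [0, 1] are all introduced here for illustration only.

```python
def sample_and_quantize(f, a, b, rows, cols, levels=256):
    """Sample f on a rows x cols grid over [0, a] x [0, b], then quantize.

    Assumes f returns an amplitude in [0.0, 1.0] and that rows, cols >= 2.
    """
    image = []
    for r in range(rows):
        x = a * r / (rows - 1)          # sampling: discrete x coordinate
        row = []
        for c in range(cols):
            y = b * c / (cols - 1)      # sampling: discrete y coordinate
            amplitude = f(x, y)
            # quantization: map [0, 1] onto the integers 0 .. levels - 1
            row.append(min(levels - 1, int(amplitude * levels)))
        image.append(row)
    return image


def ramp(x, y):
    """Hypothetical continuous image: brightness grows linearly with y."""
    return y


digital = sample_and_quantize(ramp, a=1.0, b=1.0, rows=2, cols=5)
print(digital)
```

Choosing more rows and columns refines the sampling, while choosing more `levels` refines the quantization; the two choices are independent.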

1.2.3 Image Types:

The MATLAB Image Processing Toolbox (IPT) supports four types of images:

1. Intensity images
2. Binary images
3. Indexed images
4. RGB images

Most monochrome image processing operations are carried out using binary or intensity images, so
our initial focus is on these two image types. Indexed and RGB color images are described after
them.

Intensity Images

An intensity image is a data matrix whose values have been scaled to represent intensities. When
the elements of an intensity image are of class uint8 or class uint16, they have integer values in
the range [0, 255] or [0, 65535], respectively. If the image is of class double, the values are
floating-point numbers. Values of scaled, class double intensity images are in the range [0, 1] by
convention.

Binary Images

Binary images have a very specific meaning in MATLAB. A binary image is a logical array of 0s and
1s. Thus, an array of 0s and 1s whose values are of a numeric data class, say uint8, is not
considered a binary image in MATLAB. A numeric array is converted to binary using the function
logical. Thus, if A is a numeric array consisting of 0s and 1s, we create a logical array B using
the statement

B = logical(A)

If A contains elements other than 0s and 1s, the logical function converts all nonzero quantities
to logical 1s and all entries with value 0 to logical 0s. Using relational and logical operators
also creates logical arrays.

To test whether an array is logical, we use the islogical function, islogical(C). If C is a
logical array, this function returns 1; otherwise, it returns 0. Logical arrays can be converted
to numeric arrays using the data class conversion functions.

Indexed Images

An indexed image has two components:

A data matrix of integers, x.
A color map matrix, map.

Matrix map is an m × 3 array of class double containing floating-point values in the range [0, 1].
The length m of the map is equal to the number of colors it defines. Each row of map specifies the
red, green, and blue components of a single color. An indexed image uses “direct mapping” of pixel
intensity values to color map values. The color of each pixel is determined by using the
corresponding value of the integer matrix x as a pointer into map. If x is of class double, then
all of its components with values less than or equal to 1 point to the first row in map, all
components with value 2 point to the second row, and so on. If x is of class uint8 or uint16, then
all components with value 0 point to the first row in map, all components with value 1 point to
the second row, and so on.
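
The direct-mapping rule can be sketched in a few lines of Python. This only illustrates the lookup described above and is not toolbox code: the helper `indexed_to_rgb` and its `integer_class` flag are hypothetical names, and nested lists stand in for MATLAB matrices.

```python
def indexed_to_rgb(x, cmap, integer_class):
    """Look up each entry of the index matrix x in the color map cmap.

    integer_class=True  : value 0 points to the first row (uint8/uint16 data).
    integer_class=False : values <= 1 point to the first row (double data).
    """
    rgb = []
    for row in x:
        out = []
        for v in row:
            # Convert the stored index into a 0-based row number of cmap.
            k = int(v) if integer_class else max(int(v), 1) - 1
            out.append(cmap[k])
        rgb.append(out)
    return rgb


cmap = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]  # black, red, blue
as_uint8 = indexed_to_rgb([[0, 1], [2, 0]], cmap, integer_class=True)
as_double = indexed_to_rgb([[1.0, 2.0], [3.0, 0.0]], cmap, integer_class=False)
```

Both calls select the same colors, which shows the one-position offset between the integer and double conventions.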

RGB Image

An RGB color image is an M × N × 3 array of color pixels, where each color pixel is a triplet
corresponding to the red, green, and blue components of the RGB image at a specific spatial
location. An RGB image may be viewed as a “stack” of three grayscale images that, when fed into
the red, green, and blue inputs of a color monitor, produce a color image on the screen.

By convention, the three images forming an RGB color image are referred to as the red, green, and
blue component images. The data class of the component images determines their range of values. If
an RGB image is of class double, the range of values is [0, 1]. Similarly, the range of values is
[0, 255] or [0, 65535] for RGB images of class uint8 or uint16, respectively. The number of bits
used to represent the pixel values of the component images determines the bit depth of an RGB
image. For example, if each component image is an 8-bit image, the corresponding RGB image is said
to be 24 bits deep.

Generally, the number of bits in all component images is the same. In this case, the number of
possible colors in an RGB image is (2^b)^3, where b is the number of bits in each component image.
For the 8-bit case, the number is 16,777,216 colors.
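
As a quick check of this arithmetic, the following Python lines evaluate the bit depth and the color count for a given b. The function names are chosen here for illustration and do not come from the report.

```python
def bit_depth(bits_per_component):
    """Total bits per RGB pixel when R, G and B use the same number of bits."""
    return 3 * bits_per_component


def color_count(bits_per_component):
    """Number of distinct colors, (2^b)^3."""
    return (2 ** bits_per_component) ** 3


print(bit_depth(8), color_count(8))
```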

1.2.4 Co-ordinate convention

The result of sampling and quantization is a matrix of real numbers. We use two principal ways to
represent digital images. Assume that an image f(x, y) is sampled so that the resulting image has
M rows and N columns. We say that the image is of size M × N. The values of the coordinates (x, y)
are discrete quantities. For notational clarity and convenience, we use integer values for these
discrete coordinates. In many image processing books, the image origin is defined to be at
(x, y) = (0, 0). The next coordinate values along the first row of the image are (x, y) = (0, 1).
It is important to keep in mind that the notation (0, 1) is used to signify the second sample
along the first row. It does not mean that these are the actual values of the physical coordinates
when the image was sampled. The following figure shows the coordinate convention. Note that x
ranges from 0 to M − 1 and y from 0 to N − 1 in integer increments.

The coordinate convention used in the toolbox to denote arrays differs from the preceding
paragraph in two minor ways. First, instead of using (x, y), the toolbox uses the notation (r, c)
to indicate rows and columns. Note, however, that the order of coordinates is the same as the
order discussed in the previous paragraph, in the sense that the first element of a coordinate
tuple, (a, b), refers to a row and the second to a column. The other difference is that the origin
of the coordinate system is at (r, c) = (1, 1); thus, r ranges from 1 to M and c from 1 to N in
integer increments. IPT documentation refers to these coordinates as pixel coordinates. Less
frequently, the toolbox also employs another coordinate convention, called spatial coordinates,
which uses x to refer to columns and y to refer to rows. This is the opposite of our use of the
variables x and y.
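
The relationship between the conventions can be written down as small conversion helpers. The sketch below is illustrative Python with hypothetical function names; for the spatial convention it models only the swap of roles stated above (x for columns, y for rows) and nothing else about the toolbox.

```python
def book_to_pixel(x, y):
    """(x, y) with origin (0, 0) -> pixel coordinates (r, c) with origin (1, 1)."""
    return x + 1, y + 1


def pixel_to_book(r, c):
    """Pixel coordinates (r, c) with origin (1, 1) -> (x, y) with origin (0, 0)."""
    return r - 1, c - 1


def pixel_to_spatial(r, c):
    """Spatial convention: x refers to the column and y to the row."""
    return c, r


# The second sample along the first row:
print(book_to_pixel(0, 1))
```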

1.2.5 Images as Matrices

The preceding discussion leads to the following representation for a digitized image

function.
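
The displayed equation does not survive in this copy. Given the conventions stated above (M rows, N columns, origin at (0, 0)), it presumably has the following form, shown here as a reconstruction rather than the authors' original typesetting:

```latex
f(x, y) =
\begin{bmatrix}
f(0, 0)   & f(0, 1)   & \cdots & f(0, N-1)   \\
f(1, 0)   & f(1, 1)   & \cdots & f(1, N-1)   \\
\vdots    & \vdots    & \ddots & \vdots      \\
f(M-1, 0) & f(M-1, 1) & \cdots & f(M-1, N-1)
\end{bmatrix}
```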

The right side of this equation is a digital image by definition. Each element of this array

is called an image element, picture element, pixel or pel. The terms image and pixel are used

throughout the rest of our discussions to denote a digital image and its elements.

A digital image can be represented naturally as a MATLAB matrix.
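
The matrix itself is missing from this copy. Assuming the toolbox convention of an origin at (1, 1), it would look as follows (a reconstruction, with monospace type marking MATLAB quantities):

```latex
\mathtt{f} =
\begin{bmatrix}
\mathtt{f(1,1)} & \mathtt{f(1,2)} & \cdots & \mathtt{f(1,N)} \\
\mathtt{f(2,1)} & \mathtt{f(2,2)} & \cdots & \mathtt{f(2,N)} \\
\vdots          & \vdots          & \ddots & \vdots          \\
\mathtt{f(M,1)} & \mathtt{f(M,2)} & \cdots & \mathtt{f(M,N)}
\end{bmatrix}
```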

Where f(1,1) = f(0, 0) (note the use of a monospace font to denote MATLAB quantities). Clearly,
the two representations are identical except for the shift in origin. The notation f(p, q) denotes
the element located in row p and column q. For example, f(6,2) is the element in the sixth row and
second column of the matrix f. Typically, we use the letters M and N, respectively, to denote the
number of rows and columns in a matrix. A 1 × N matrix is called a row vector, whereas an M × 1
matrix is called a column vector. A 1 × 1 matrix is a scalar.

Matrices in MATLAB are stored in variables with names such as A, a, RGB, real_array, and so on.
Variable names must begin with a letter and contain only letters, numerals, and underscores. As
noted in the previous paragraph, all MATLAB quantities are written using monospace characters. We
use conventional Roman, italic notation, such as f(x, y), for mathematical expressions.

1.2.6 Reading Images

Images are read into the MATLAB environment using the function imread, whose syntax is

imread('filename')

Format name   Description                        Recognized extensions
TIFF          Tagged Image File Format           .tif, .tiff
JPEG          Joint Photographic Experts Group   .jpg, .jpeg
GIF           Graphics Interchange Format        .gif
BMP           Windows Bitmap                     .bmp
PNG           Portable Network Graphics          .png
XWD           X Window Dump                      .xwd

Table 1: Image formats and recognized extensions

1.2.7 Data Classes

Although we work with integer coordinates, the values of the pixels themselves are not restricted
to be integers in MATLAB. Table 2 lists the various data classes supported by MATLAB and IPT for
representing pixel values. The first eight entries in the table are referred to as numeric data
classes. The ninth entry is the char class and, as shown, the last entry is referred to as the
logical data class.

All numeric computations in MATLAB are done using double quantities, so this is also a data class
encountered frequently in image processing applications. Class uint8 is also encountered
frequently, especially when reading data from storage devices, as 8-bit images are the most common
representation found in practice. These two data classes, class logical, and, to a lesser degree,
class uint16 constitute the primary data classes on which we focus. Many IPT functions, however,
support all the data classes listed in Table 2.

Name      Description
double    Double-precision, floating-point numbers in the approximate range −10^308 to 10^308 (8 bytes per element).
uint8     Unsigned 8-bit integers in the range [0, 255] (1 byte per element).
uint16    Unsigned 16-bit integers in the range [0, 65535] (2 bytes per element).
uint32    Unsigned 32-bit integers in the range [0, 4294967295] (4 bytes per element).
int8      Signed 8-bit integers in the range [−128, 127] (1 byte per element).
int16     Signed 16-bit integers in the range [−32768, 32767] (2 bytes per element).
int32     Signed 32-bit integers in the range [−2147483648, 2147483647] (4 bytes per element).
single    Single-precision, floating-point numbers in the approximate range −10^38 to 10^38 (4 bytes per element).
char      Characters (2 bytes per element).
logical   Values are 0 or 1 (1 byte per element).

Table 2: Data classes

CHAPTER-2

BLOCK DIAGRAM

2.1. BLOCK DIAGRAM

Fig 2.1: Reversible data hiding based on histogram modification

2.1.1 Introduction

One reversible data hiding scheme is based on histogram modification using pairs of peak and zero
points. Let P be the value of the peak point and Z be the value of the zero point. The range of
the histogram [P + 1, Z − 1] is shifted to the right-hand side by 1. Once a pixel with value P is
encountered, if the message bit is “1,” the pixel value is increased by 1; otherwise, no
modification is needed. Data extraction is simply the reverse of the data hiding process.

Note that the number of message bits that can be embedded into an image equals the number of
pixels associated with the peak point. Although multiple pairs of peak and minimum points can be
used for embedding, the pure payload is still rather low.
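
The peak/zero mechanism can be sketched in Python as follows. This is an illustrative sketch, not the report's implementation: it works on a flat list of 8-bit pixel values, assumes a single pair with an empty gray level Z to the right of P, and assumes the recipient knows P, Z and the message length. All function names are chosen here for illustration.

```python
from collections import Counter


def find_peak_and_zero(pixels):
    """Pick the most frequent gray level P and the first empty level Z > P."""
    hist = Counter(pixels)
    peak = max(range(256), key=lambda v: hist[v])
    zero = next(v for v in range(peak + 1, 256) if hist[v] == 0)
    return peak, zero


def embed_peak_zero(pixels, bits, peak, zero):
    out, k = [], 0
    for v in pixels:
        if peak < v < zero:
            v += 1                      # shift [P + 1, Z - 1] right by 1
        elif v == peak and k < len(bits):
            v += bits[k]                # bit 1 -> P + 1, bit 0 -> stays at P
            k += 1
        out.append(v)
    return out


def extract_peak_zero(marked, peak, zero, n_bits):
    bits, restored = [], []
    for v in marked:
        if v == peak + 1:               # only a peak pixel carrying a 1 lands here
            bits.append(1)
            v = peak
        elif v == peak and len(bits) < n_bits:
            bits.append(0)
        elif peak + 1 < v <= zero:
            v -= 1                      # undo the shift
        restored.append(v)
    return bits, restored


pixels = [3, 3, 4, 5, 3, 7, 3, 2]
message = [1, 0, 1]
P, Z = find_peak_and_zero(pixels)
marked = embed_peak_zero(pixels, message, P, Z)
bits, restored = extract_peak_zero(marked, P, Z, len(message))
```

In this example four pixels have the peak value, so at most four bits fit, which matches the capacity statement above.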

Figure 2.1.1 Distribution of pixel differences

The histogram modification technique carries with it an unsolved issue: multiple pairs of peak and
minimum points must be transmitted to the recipient via a side channel to ensure successful
restoration.

Thus, we present an efficient extension of the histogram modification technique that considers
the differences between adjacent pixels instead of simple pixel values. Since neighboring image
pixels are strongly correlated, the distribution of pixel differences has a prominent maximum;
that is, the difference is expected to be very close to zero, as shown in Figure 2.1.1. We find
that the differences have an almost zero-mean, Laplacian-like distribution. The distributions of
other images also follow this model. Laplacian-distributed data can be exploited by data hiding
schemes to improve their embedding ability. This observation leads us toward designs in which the
embedding is done in pixel differences.

We also use a tree structure to solve the issue of communicating multiple pairs of peak points to
recipients. Having explained the background logic, we now outline the principle of the proposed
reversible data hiding algorithm.

2.1.2 Histogram Modification on Pixel Differences

Consider an N-pixel 8-bit grayscale host image H with pixel values x_i, where x_i denotes the
grayscale value of the ith pixel, 0 ≤ i ≤ N − 1, x_i ∈ Z, x_i ∈ [0, 255].

1) Scan the image H in an inverse s-order. Calculate the pixel difference d_i between pixels
x_{i-1} and x_i by

(2.1)

2) Determine the peak point P from the pixel differences.

3) Scan the whole image in the same inverse s-order as in Step 1. If d_i > P, shift x_i by 1 unit,
where y_i is the watermarked value of pixel i.

4) If d_i = P, modify x_i according to the message bit
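
Equation (2.1) and the formulas for Steps 3 and 4 do not survive in this copy. One form consistent with the step descriptions is given below. It is a reconstruction under the assumption that differences are taken as absolute values and that a pixel is always moved away from its predecessor:

```latex
% Step 1: pixel difference
d_i =
\begin{cases}
x_i,               & i = 0 \\
|x_{i-1} - x_i|,   & \text{otherwise}
\end{cases}

% Step 3: shift the pixels whose difference exceeds the peak
y_i =
\begin{cases}
x_i,       & i = 0 \text{ or } d_i < P \\
x_i + 1,   & d_i > P \text{ and } x_i \ge x_{i-1} \\
x_i - 1,   & d_i > P \text{ and } x_i < x_{i-1}
\end{cases}

% Step 4: embed one message bit b at the peak
y_i =
\begin{cases}
x_i + b,   & d_i = P \text{ and } x_i \ge x_{i-1} \\
x_i - b,   & d_i = P \text{ and } x_i < x_{i-1}
\end{cases}
```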

Where b is a message bit to be embedded. At the receiving end, the recipient extracts the message
bits from the watermarked image by scanning the image in the same order as during the embedding.

Where x_{i-1} denotes the restored value of y_{i-1}.

The original pixel value x_i can be restored by
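
Neither the extraction rule nor the restoration formula survives in this copy. Assuming that embedding moves x_i away from x_{i-1} by b when d_i = P and by 1 when d_i > P, they would read as follows (a reconstruction, not the original typesetting):

```latex
% Extraction of the message bit
b =
\begin{cases}
0, & |y_i - x_{i-1}| = P \\
1, & |y_i - x_{i-1}| = P + 1
\end{cases}

% Restoration of the original pixel value
x_i =
\begin{cases}
y_i + 1, & |y_i - x_{i-1}| > P \text{ and } y_i < x_{i-1} \\
y_i - 1, & |y_i - x_{i-1}| > P \text{ and } y_i > x_{i-1} \\
y_i,     & \text{otherwise}
\end{cases}
```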

Thus, an exact copy of the original host image is obtained. These steps complete the data hiding
process in which only one peak point is used. Larger hiding capacities can be obtained by
repeating the data hiding process. However, recipients may not be able to retrieve both the
embedded message and the original host image without knowledge of the peak points of every hiding
pass. Thus, the following subsection presents a binary tree structure that deals with the
communication of multiple peak points.
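
The single-peak pass can be sketched in Python as shown below. The sketch rests on several assumptions that are not confirmed by this copy of the report: the pixels are already arranged in scan order as a flat list, differences are absolute values, a pixel is moved away from its predecessor, the recipient knows P and the message length, and the pixel values have enough headroom that no result leaves [0, 255]. The function names are illustrative.

```python
from collections import Counter


def embed_diff(x, bits):
    """Embed bits into the pixel sequence x using one peak of the differences."""
    diffs = [abs(x[i - 1] - x[i]) for i in range(1, len(x))]
    peak = Counter(diffs).most_common(1)[0][0]
    y, k = [x[0]], 0                          # the first pixel is never modified
    for i in range(1, len(x)):
        d = abs(x[i - 1] - x[i])
        sign = 1 if x[i] >= x[i - 1] else -1
        if d > peak:
            y.append(x[i] + sign)             # Step 3: shift by one unit
        elif d == peak and k < len(bits):
            y.append(x[i] + sign * bits[k])   # Step 4: carry one message bit
            k += 1
        else:
            y.append(x[i])
    return y, peak, k                         # k = number of bits embedded


def extract_diff(y, peak, n_bits):
    """Recover the message bits and the original pixel sequence."""
    x, bits = [y[0]], []
    for i in range(1, len(y)):
        d = abs(y[i] - x[i - 1])              # uses the already restored x[i-1]
        sign = 1 if y[i] >= x[i - 1] else -1
        if d == peak + 1:
            bits.append(1)
        elif d == peak and len(bits) < n_bits:
            bits.append(0)
        x.append(y[i] - sign if d > peak else y[i])
    return bits, x


host = [10, 10, 10, 11, 13, 13, 12, 12, 12]
message = [1, 0, 1, 1]
marked, P, n = embed_diff(host, message)
bits, restored = extract_diff(marked, P, n)
```

Because each difference is computed against the restored predecessor, extraction must proceed in exactly the same scan order as embedding.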

Fig 2.1.2 Binary trees for proposed scheme

2.1.3 Binary Tree Structure

Figure 2.1.2 shows an auxiliary binary tree for solving the issue of communicating multiple peak points. Each element denotes a peak point. Let us assume that the number of peak points used to embed messages is $2^L$, where L is the level of the binary tree. Once a pixel difference $d_i$ that satisfies $d_i < 2^L$ is encountered, if the message bit to be embedded is “0,” the left child of the node $d_i$ is visited; otherwise, the right child of the node $d_i$ is visited. Higher payloads require the use of higher tree levels, thus quickly increasing the distortion in the image beyond acceptable levels.

However, all the recipient needs to share with the sender is the tree level L, because the auxiliary binary tree predetermines the multiple peak points used to embed messages.

A detailed embedding algorithm with the auxiliary binary tree is given below.
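As a small illustration of the tree traversal, the sketch below assumes the common heap-style layout in which the left child of node d is 2d and the right child is 2d + 1. The report does not state the layout explicitly, so this numbering and both function names are assumptions; the layout is chosen because it lets the decoder read the bit back as the parity of the new difference.

```python
def peak_points(level):
    """All peak points predetermined by a tree of the given level: 0 .. 2^L - 1."""
    return list(range(2 ** level))

def child_node(d, bit):
    """Visit the left child (bit 0) or right child (bit 1) of node d (assumed layout)."""
    return 2 * d + bit
```

For example, with L = 2 the peak points are 0, 1, 2 and 3, and embedding bit 1 at difference 3 leads to node 7.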

Fig 2.1.3 Histogram shifting a) original histogram b) Histogram shifting

2.1.4 Prevent Overflow and Underflow

Modification of a pixel may not be allowed if the pixel is saturated (0 or 255). To prevent overflow and underflow, the scheme adopts a histogram shifting technique that narrows the histogram from both sides, as shown in Figure 2.1.3. Let us assume that the number of peak points used to embed messages is $2^L$, where L is the level of the proposed binary tree structure. The histogram is then shifted from both sides by $2^L$ units, since a pixel $x_i$ that satisfies $d_i \ge 2^L$ will shift by $2^L$ units after embedding takes place.

After narrowing the histogram to the range $[2^L, 255 - 2^L]$, the histogram shifting information must be recorded as overhead bookkeeping information. For this purpose, a one-bit map equal in size to the host image is created as the location map. A pixel whose grayscale value lies in the range $[2^L, 255 - 2^L]$ is assigned the value 0 in the location map; otherwise, it is assigned the value 1. The location map is losslessly compressed by run-length coding, which is effective here because pixels outside the range $[2^L, 255 - 2^L]$ are few and almost always contiguous.

The overhead information is embedded into the host image together with the message. Note that the maximum modification to a pixel is limited to $2^L$ according to the proposed tree structure. As a result, shifting the histogram from both sides by $2^L$ units avoids overflow and underflow.
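The narrowing step and its location map can be sketched as follows. This is an illustrative sketch, not the report's code: the function names are hypothetical, pixels are given as a flat list, and the restore step assumes $2^L \le 64$ so that a shifted pixel's value alone reveals which end of the range it came from. The run-length coder is a plain (value, run length) encoder standing in for whatever coder is actually used.

```python
def narrow_histogram(pixels, level):
    """Shift out-of-range pixels inward by 2^L and record them in a location map."""
    s = 2 ** level
    lo, hi = s, 255 - s
    location_map = [0 if lo <= p <= hi else 1 for p in pixels]
    narrowed = [p + s if p < lo else p - s if p > hi else p for p in pixels]
    return narrowed, location_map

def restore_histogram(narrowed, location_map, level):
    """Undo the narrowing for pixels flagged 1 (assumes 2^L <= 64)."""
    s = 2 ** level
    return [
        (p - s if p < 128 else p + s) if flag else p
        for p, flag in zip(narrowed, location_map)
    ]

def run_length_encode(bits):
    """Encode a bit sequence as (bit, run length) pairs."""
    runs = []
    for b in bits:
        if runs and runs[-1][0] == b:
            runs[-1][1] += 1
        else:
            runs.append([b, 1])
    return [tuple(r) for r in runs]
```

Because flagged pixels are rare and tend to cluster, the map collapses to a handful of long runs, which is why run-length coding suits it.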

2.1.5 Embedding Process

For an N-pixel 8-bit grayscale host image H with pixel values $x_i$, where $x_i$ denotes the grayscale value of the $i$th pixel, $0 \le i \le N-1$, $x_i \in \mathbb{Z}$, $x_i \in [0, 255]$:

1) Determine the level L of the binary tree.

2) Shift the histogram from both sides by $2^L$ units. Note that the histogram shifting information is recorded as overhead bookkeeping information that will be embedded into the image itself with the payload.

3) Scan the image H in an inverse s-order. Calculate the pixel difference $d_i$ between pixels $x_{i-1}$ and $x_i$.

4) Scan the whole image in the same inverse s-order. If $d_i \ge 2^L$, shift $x_i$ by $2^L$ units:

$$y_i = \begin{cases} x_i, & \text{if } i = 0 \\ x_i + 2^L, & \text{if } d_i \ge 2^L \text{ and } x_i \ge x_{i-1} \\ x_i - 2^L, & \text{if } d_i \ge 2^L \text{ and } x_i < x_{i-1} \end{cases}$$

where $y_i$ is the watermarked value of pixel i.

5) If $d_i < 2^L$, modify $x_i$ according to the message bit:

$$y_i = \begin{cases} x_i + (d_i + b), & \text{if } x_i \ge x_{i-1} \\ x_i - (d_i + b), & \text{if } x_i < x_{i-1} \end{cases}$$

where b is a message bit to be embedded and $b \in \{0, 1\}$. Note that the overhead information is included in the image itself with the payload. Thus, the real capacity Cap, referred to as the pure payload, is $Cap = N_p - |O|$, where $N_p$ is the number of pixels that are associated with peak points and $|O|$ is the length of the overhead information.
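The capacity formula can be checked numerically with a short sketch. The report does not define the inverse s-order in this section, so the scan below is an assumption: a serpentine traversal in which rows alternate direction, which keeps consecutively scanned pixels spatially adjacent. Both function names are hypothetical.

```python
def serpentine_scan(image):
    """Flatten a 2-D image row by row, reversing every second row (assumed scan)."""
    out = []
    for r, row in enumerate(image):
        out.extend(row if r % 2 == 0 else reversed(row))
    return out

def pure_payload(pixels, level, overhead_bits):
    """Cap = N_p - |O|, where N_p counts differences below 2^L along the scan."""
    n_p = sum(
        1 for i in range(1, len(pixels))
        if abs(pixels[i - 1] - pixels[i]) < 2 ** level
    )
    return n_p - overhead_bits
```

Raising the level L increases $N_p$ but also increases the per-pixel modification, which is the capacity versus distortion trade-off described for the tree.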

2.1.6 Extraction Process

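This process extracts both the overhead information and the payload from the watermarked image and losslessly recovers the host image. Let L be the level of the proposed binary tree. For an N-pixel 8-bit watermarked image W with pixel values $y_i$, where $y_i$ denotes the grayscale value of the $i$th pixel, $0 \le i \le N-1$, $y_i \in \mathbb{Z}$, $y_i \in [0, 255]$:

1) Scan the watermarked image W in an inverse s-order.

2) If $|y_i - x_{i-1}| < 2^{L+1}$, extract the message bit b by

$$b = \begin{cases} 0, & \text{if } |y_i - x_{i-1}| \text{ is even} \\ 1, & \text{if } |y_i - x_{i-1}| \text{ is odd} \end{cases}$$

where $x_{i-1}$ denotes the restored value of $y_{i-1}$.

3) Restore the original value of host pixel $x_i$ by

$$x_i = \begin{cases} y_i + \lceil |y_i - x_{i-1}| / 2 \rceil, & \text{if } |y_i - x_{i-1}| < 2^{L+1} \text{ and } y_i < x_{i-1} \\ y_i - \lceil |y_i - x_{i-1}| / 2 \rceil, & \text{if } |y_i - x_{i-1}| < 2^{L+1} \text{ and } y_i \ge x_{i-1} \\ y_i + 2^L, & \text{if } |y_i - x_{i-1}| \ge 2^{L+1} \text{ and } y_i < x_{i-1} \\ y_i - 2^L, & \text{if } |y_i - x_{i-1}| \ge 2^{L+1} \text{ and } y_i > x_{i-1} \end{cases}$$

4) Repeat Step 2 until the embedded message is completely extracted.

5) Extract the overhead information from the extracted message. If a value 1 is assigned at location i, restore $x_i$ to its original state by shifting it by $2^L$ units; otherwise, no shifting is required.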
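A round trip through the tree-based embedding and extraction can be sketched as below. The sketch is illustrative only. It assumes a flat list of pixels in scan order, assumes the histogram has already been narrowed so no value leaves [0, 255], and assumes an embedding rule in which a difference d below $2^L$ becomes 2d + b and larger differences grow by $2^L$. That rule is inferred from the decoder threshold $2^{L+1}$ and the stated maximum modification of $2^L$; it is not quoted from the report. Unused embedding positions are padded with zero bits.

```python
def embed_tree(pixels, bits, level):
    """Embed bits at every difference below 2^L; shift all other pixels by 2^L."""
    s = 2 ** level
    out, used = [pixels[0]], 0
    for i in range(1, len(pixels)):
        x, prev = pixels[i], pixels[i - 1]       # prev is the ORIGINAL neighbour
        d = abs(prev - x)
        sign = 1 if x >= prev else -1
        if d >= s:
            out.append(x + sign * s)             # difference becomes d + 2^L
        else:
            b = bits[used] if used < len(bits) else 0   # pad with zeros
            used += 1
            out.append(x + sign * (d + b))       # difference becomes 2d + b
    return out

def extract_tree(marked, level):
    """Return (restored pixels, extracted bits) from a watermarked scan."""
    s = 2 ** level
    restored, bits = [marked[0]], []
    for i in range(1, len(marked)):
        y, prev = marked[i], restored[i - 1]     # prev is the RESTORED neighbour
        dd = abs(y - prev)
        sign = 1 if y >= prev else -1
        if dd < 2 * s:
            bits.append(dd % 2)                  # parity carries the message bit
            restored.append(y - sign * ((dd + 1) // 2))
        else:
            restored.append(y - sign * s)        # undo the 2^L shift
    return restored, bits
```

Only the level L is needed by the decoder, which matches the point of the tree structure: no list of peak points has to be transmitted.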

2.2 DATA HIDING

2.2.1 INTRODUCTION

Data hiding is a term encompassing a wide range of applications for embedding messages in content. Inevitably, hiding information alters the host image, even when the distortion introduced by hiding is imperceptible to the human visual system. There are, however, sensitive images for which any embedding distortion is intolerable, such as military images, medical images, or images used for artwork preservation. For medical images, even slight changes are unacceptable because of the potential risk of a physician misinterpreting the image.

Consequently, reversible data hiding techniques are designed to solve the problem of

lossless embedding of large messages in digital images so that after the embedded message is

extracted, the image can be completely restored to its original state before embedding occurred.

A histogram-based reversible data hiding technique was presented by Ni et al., in which the message is embedded into the histogram bins. They used peak and zero points to achieve low distortion, but with correspondingly low capacity.

Histogram modification techniques have been extended recently. However, those techniques all share one unresolved issue: the pairs of peak and zero points must be communicated to recipients.

This project extends the histogram modification technique by using pixel differences to increase hiding capacity. It uses a binary tree structure to eliminate the requirement to communicate pairs of peak and zero points to the recipient, and it adopts a histogram shifting technique to prevent overflow and underflow.

2.2.2 Generalized-LSB (G-LSB) Embedding

One of the earliest data-embedding methods is LSB modification. In this well-known method, the LSB of each signal sample is replaced (overwritten) by a payload data bit, embedding one bit of data per input sample. If additional capacity is required, two or more LSBs may be overwritten, allowing for a corresponding number of bits per sample. During extraction, these bits are read in the same scanning order, and the payload data is reconstructed. LSB modification is a simple, non-robust embedding technique with a high embedding capacity and a small bounded embedding distortion (±1). A generalization of the LSB-embedding method, namely G-LSB, is employed here.

If the host signal is represented by a vector s, the G-LSB embedding and extraction processes can be represented as

$$s_w = Q_L(s) + w$$

$$w = s_w - Q_L(s_w)$$

where $s_w$ represents the signal containing the embedded information, w represents the embedded payload vector of L-ary symbols, i.e., $w_i \in \{0, 1, \ldots, L-1\}$, and

$$Q_L(x) = L \lfloor x / L \rfloor$$

is an L-level scalar quantization function, where $\lfloor \cdot \rfloor$ represents the operation of truncation to the integer part.
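G-LSB embedding and extraction reduce to a quantize-then-add step, as the following minimal sketch shows. The function names and the parameter name `levels` (standing for L) are hypothetical, samples are assumed to be non-negative integers, and no overflow handling is included, so a sample near the top of the range could exceed it after embedding.

```python
def quantize(sample, levels):
    """L-level scalar quantizer: L * floor(sample / L) for non-negative samples."""
    return levels * (sample // levels)

def glsb_embed(signal, symbols, levels):
    """Replace the lowest L-ary digit of each sample with a payload symbol."""
    return [quantize(s, levels) + w for s, w in zip(signal, symbols)]

def glsb_extract(marked, levels):
    """Read back the payload symbol carried by each sample."""
    return [s - quantize(s, levels) for s in marked]
```

With `levels = 2` this is ordinary LSB replacement; other values of L allow a non-integer number of bits per sample.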

2.2.3 Alternative Approaches

This section introduces a histogram shifting technique that avoids sending the location map required by Tian’s algorithm. The locations for embedding are selected by defining non-overlapping regions in the histogram of expandable locations. Upon expansion, the bins corresponding to the selected locations overlap with the bins of the other locations. To compensate, a histogram shifting technique is introduced that eliminates all overlap between the bins of the expanded locations and the other bins.

2.2.4 Histogram-Based Selection of Locations

Consider the histogram of expandable differences of the Lena image.

The difference histogram for most natural images would be similar to this, in the sense

that difference values with small magnitudes occur more frequently.

Consider a process of selecting locations for expansion embedding from the set of expandable locations that involves selecting suitable bins from the histogram of expandable differences. Bins whose differences have smaller magnitude are given preference in the selection process because the smaller the magnitude of the expandable difference, the smaller the resulting distortion. The distortions resulting from expanding differences with values 0 and $-1$ are equal (in a probabilistic sense, assuming equiprobable information bits). More generally, difference values that are equidistant from $-0.5$ contribute equally towards the embedding distortion. Therefore, the selection of locations for expansion embedding involves setting an appropriate threshold $\Delta \ge 0$, such that $\Delta + 1$ negative and $\Delta + 1$ nonnegative bins from the histogram are selected, resulting in $2\Delta + 2$ bins. These selected bins have differences in the range $[-\Delta - 1, \Delta]$. This selection method divides the histogram into two non-overlapping regions, an inner region and an outer region.
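One way to pick the threshold is to grow $\Delta$ until the inner region holds enough locations for the bits to be embedded. The sketch below is an assumption about how such a search might look, not the report's procedure. The function name is hypothetical, and the input is taken to be a non-empty list of expandable difference values.

```python
from collections import Counter

def select_threshold(differences, bits_needed):
    """Smallest delta whose inner region [-delta-1, delta] holds bits_needed values."""
    hist = Counter(differences)
    max_mag = max(abs(h) for h in differences)
    covered = 0
    for delta in range(max_mag + 1):
        # Growing delta by one adds the bins at delta and at -delta-1.
        covered += hist[delta] + hist[-delta - 1]
        if covered >= bits_needed:
            return delta
    return None   # not enough expandable differences for this payload
```

Choosing the smallest workable threshold keeps the expanded differences, and therefore the distortion, as small as the payload allows.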

Fig 2.2.5 Histogram of expandable difference values for the Lena image

2.2.5 Histogram Shifting

Difference expansion of the differences in the selected locations expands the histogram of the inner region, and the modified differences now occupy the range $[-2\Delta - 2, 2\Delta + 1]$. Comparing this range with the range of the differences that constitute the outer regions shows that they overlap in the range $[-2\Delta - 2, -\Delta - 2] \cup [\Delta + 1, 2\Delta + 1]$.

An appropriate histogram shift of the outer regions cancels all overlap between the two regions. To achieve this, the negative differences and the nonnegative differences of the outer regions are shifted left and right, respectively:

$$h_n = \begin{cases} h + \Delta + 1, & \text{if } h > \Delta \\ h - \Delta - 1, & \text{if } h < -\Delta - 1 \end{cases} \qquad (2.13)$$

The histograms of the expanded inner region and the shifted outer regions are shown in Fig 2.2.6. A histogram shift can be easily reversed if $\Delta$ is known:

$$h = \begin{cases} h_n - \Delta - 1, & \text{if } h_n > 2\Delta + 1 \\ h_n + \Delta + 1, & \text{if } h_n < -2\Delta - 2 \end{cases}$$

Note that the discussion on histogram shifting has been restricted to expandable differences lying in the outer regions (i.e., differences outside the range $[-\Delta - 1, \Delta]$). Histogram shifting causes a smaller change in these differences than difference expansion. Therefore, it is not necessary to check whether a histogram shift might cause overflow/underflow.

Incorporating histogram shifting along with DE also eliminates the need to have a

location map of the selected expandable locations (they can be identified at the decoder from the

histogram of the differences). Consequently, the amount of auxiliary information embedded is

also significantly reduced. In addition, the computational intensity required for histogram

shifting is much less than that required for the compression/ decompression engine.
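The interplay of expansion and shifting on a single difference value can be sketched as follows. The function names are hypothetical, the message bits are supplied through an iterator (which raises `StopIteration` if it runs out), and overflow checks in the pixel domain are left out. The sketch only shows that the two regions stay separable when $\Delta$ is known.

```python
def expand_or_shift(h, delta, bit_source):
    """Expand inner-region differences with a bit; shift outer-region ones."""
    if -delta - 1 <= h <= delta:
        return 2 * h + next(bit_source)   # inner region: difference expansion
    if h > delta:
        return h + delta + 1              # outer region: shift right
    return h - delta - 1                  # outer region: shift left

def restore_difference(hn, delta):
    """Return (original difference, extracted bit or None if nothing was embedded)."""
    if -2 * delta - 2 <= hn <= 2 * delta + 1:
        return hn // 2, hn % 2            # floor division also handles negatives
    if hn > 2 * delta + 1:
        return hn - delta - 1, None
    return hn + delta + 1, None
```

The decoder tells the two cases apart from the value range alone, which is why no location map of the selected locations is required.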

2.2.6 Notation and Functions

To simplify the explanation of the two approaches that extend Tian’s algorithm in the subsequent sections, some notation and functions are defined in this section. Two operators represent the DE and LSB-embedding operations, respectively. They operate on an integer h and a bit $b \in \{0, 1\}$: the DE operation maps h to $2h + b$, and the LSB-embedding operation maps h to $2\lfloor h/2 \rfloor + b$.

Fig 2.2.6 Histogram resulting from the shifting of outer regions

The concatenation operator operates on two bit streams and concatenates them. The length of a bit stream is returned by the function $\eta(\cdot)$. Therefore, if A and B are two bit streams, the length of their concatenation is $\eta(A) + \eta(B)$.

While checking the suitability of a difference value for changeability or expandability, it is sufficient to check only the worst-case difference for its ability to invert back to the pixel domain. The worst case occurs when the embedded bit is 1 for nonnegative differences and 0 for negative differences. Functions that return the worst-case values when a difference undergoes LSB replacement, DE, or histogram shifting are defined next. The first function maps x to $2\lfloor x/2 \rfloor + 1$ if $x \ge 0$ and to $2\lfloor x/2 \rfloor$ if $x < 0$.

This function returns the worst-case value when x undergoes LSB replacement. The function $f(x, \Delta)$, where x is an integer and $\Delta$ is a nonnegative integer, is defined as

$$f(x, \Delta) = \begin{cases} 2x + 1, & \text{if } 0 \le x \le \Delta \\ 2x, & \text{if } -\Delta - 1 \le x < 0 \\ x + \Delta + 1, & \text{if } x > \Delta \\ x - \Delta - 1, & \text{if } x < -\Delta - 1 \end{cases}$$

This function returns the worst-case value when x undergoes expansion embedding or histogram shifting when the selected threshold is $\Delta$. The function $f^n$, for $n \ge 2$, returns the worst-case difference value when x undergoes expansion/shifting n times. It is defined recursively by applying f, with the threshold of the n-th pass, to the result of $f^{n-1}$.

2.2.7 Difference-Expansion-Based Algorithms

This section presents two algorithms that combine the difference-expansion embedding technique with the proposed extensions. Both algorithms use the histogram-based procedure for location selection and use histogram shifting to enable the decoder to identify the selected locations. The two algorithms differ in how the decoder identifies the set of expandable locations. Locations that are not expandable have gray levels close to the minimum or maximum and, thus, may overflow upon expansion. In the first algorithm, an overflow map indicating the locations that are not expandable is embedded with the payload. In the second algorithm, flag bits are interspersed with the payload to identify these locations.

2.2.8 Difference Expansion with Histogram Shifting and Overflow Map (DE-HS-OM)

First, decompose the image into differences and integer averages and determine the changeable locations (C) and the expandable locations (E). A 2-D overflow map, M, is formed, indicating which locations are expandable.

The overflow map is losslessly compressed. The compressed overflow map and a header segment constitute the auxiliary information. The auxiliary information stream A is formed by concatenating the compressed bit stream to the header segment. The header segment has a fixed length, which is also known to the decoder. The total length of the auxiliary stream, $\eta(A)$, is therefore the sum of the header length and the length of the compressed overflow map.

The total number of bits embedded by expansion embedding, t, is the sum of the size of the auxiliary bit stream and the size of the payload.

The operating threshold $\Delta$ is selected such that the total number of locations that comprise the selected bins is at least as large as t. Based on $\Delta$, E is partitioned into two sets, $E_e$ and $E_s$, where $E_e$ is the set of expandable locations that comprise the selected bins and the remaining expandable locations comprise $E_s$. Histogram shifting of the bins that correspond to the set $E_s$ is done next. The auxiliary information stream is then created, wherein the header segment is populated with the size of the compressed overflow map, the operating threshold, and the size of the payload. The header segment is then appended to the compressed overflow map to create the auxiliary information stream.

The LSBs of the differences at the locations in $C \setminus E_e$ are then saved. The auxiliary information stream A, the saved LSBs, and the payload are then concatenated to create the bit stream B to be embedded.

The bit stream B is embedded at the locations in C, using DE at the locations in $E_e$ and LSB replacement at the locations in $C \setminus E_e$. From the resulting differences and integer averages, the watermarked image is computed by inverting the transform.

At the decoder, the differences and the integer averages of the received image are calculated. The changeable locations are identified, from which the embedded bit stream B is extracted. The header segment, the compressed overflow map, the operating threshold $\Delta$, the saved LSBs, and the payload are retrieved from B. Next, the compressed map is decompressed to obtain the overflow map M, with which the subset E of C is identified. The subsets $E_e$ and $E_s$ of E are determined based on the operating threshold. The LSBs of the differences at $C \setminus E_e$ are replaced by the corresponding saved LSBs. The differences at locations in $E_s$ are restored by reversing the shift, and the differences at locations in $E_e$ are restored by reversing the expansion. From the restored difference values and the integer averages, the original host image is calculated by applying the inverse transform.
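The decomposition into differences and integer averages is not spelled out in this section. The sketch below assumes the usual pairwise integer transform associated with Tian's difference expansion, applied to one pixel pair (x, y), together with a simple expandability test. The function names are hypothetical.

```python
def forward(x, y):
    """Pixel pair -> (integer average, difference)."""
    return (x + y) // 2, x - y

def inverse(avg, diff):
    """(integer average, difference) -> pixel pair; exact inverse of forward()."""
    return avg + (diff + 1) // 2, avg - diff // 2

def is_expandable(avg, diff):
    """True if the expanded difference 2*diff + b stays in [0, 255] for b in {0, 1}."""
    for b in (0, 1):
        x, y = inverse(avg, 2 * diff + b)
        if not (0 <= x <= 255 and 0 <= y <= 255):
            return False
    return True
```

Pairs that fail the test are the ones that the overflow map has to flag, since expanding them would push a pixel outside the 8-bit range.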

CHAPTER-3

HISTOGRAM

3.1 INTRODUCTION

The histogram is one of the Seven Basic Tools of Quality and was first described by Karl Pearson. Its purpose is to roughly assess the probability distribution of a given variable by depicting the frequencies of observations occurring in certain ranges of values. Related forms used in digital image processing include the image histogram and the color histogram.

In statistics, a histogram is a graphical display of tabular frequencies, shown as adjacent rectangles. Each rectangle is erected over an interval, with an area equal to the frequency of the interval. The height of a rectangle is therefore equal to the frequency density of the interval, i.e., the frequency divided by the width of the interval. The total area of the histogram is equal to the number of data points. A histogram may also be based on relative frequencies instead. It then shows what proportion of cases fall into each of several categories (a form of data binning), and the total area then equals 1. The categories are usually specified as consecutive, non-overlapping intervals of some variable. The categories (intervals) must be adjacent, and are often chosen to be of the same size, but not necessarily so.

Histograms are used to plot density of data, and often for density estimation: estimating

the probability density function of the underlying variable. The total area of a histogram used

for probability density is always normalized to 1. If the lengths of the intervals on the x-axis are

all 1, then a histogram is identical to a relative frequency plot.

An alternative to the histogram is kernel density estimation, which uses a kernel to

smooth samples. This will construct a smooth probability density function, which will in general

more accurately reflect the underlying variable.

3.2 Mathematical definition

In a more general mathematical sense, a histogram is a mapping $m_i$ that counts the number of observations that fall into various disjoint categories (known as bins), whereas the graph of a histogram is merely one way to represent a histogram. Thus, if we let n be the total number of observations and k be the total number of bins, the histogram $m_i$ meets the following condition:

$$n = \sum_{i=1}^{k} m_i$$

Cumulative histogram

A cumulative histogram is a mapping that counts the cumulative number of observations in all of the bins up to the specified bin. That is, the cumulative histogram $M_i$ of a histogram $m_j$ is defined as

$$M_i = \sum_{j=1}^{i} m_j$$
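A cumulative histogram is the running sum of the bin counts, which the short sketch below makes concrete. The function names are hypothetical and the bins are assumed to be half-open intervals given by a sorted list of edges; observations outside the edges are ignored.

```python
from itertools import accumulate

def histogram(data, edges):
    """Count observations in half-open bins [edges[j], edges[j+1])."""
    counts = [0] * (len(edges) - 1)
    for x in data:
        for j in range(len(counts)):
            if edges[j] <= x < edges[j + 1]:
                counts[j] += 1
                break
    return counts

def cumulative(counts):
    """Running total of the bin counts."""
    return list(accumulate(counts))
```

When every observation falls inside the edges, the last cumulative value equals the number of observations n.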

Number of bins and width

There is no "best" number of bins, and different bin sizes can reveal different features

of the data. Some theoreticians have attempted to determine an optimal number of bins, but

these methods generally make strong assumptions about the shape of the distribution. You

should always experiment with bin widths before choosing one (or more) that illustrate the

salient features in your data. A good discussion of rules for choice of bin widths is in Density

Estimation.

The number of bins k can be assigned directly or can be calculated from a suggested bin width h as

$$k = \left\lceil \frac{\max x - \min x}{h} \right\rceil$$

The braces indicate the ceiling function.

Sturges' formula

$$k = \lceil \log_2 n + 1 \rceil$$

which implicitly bases the bin sizes on the range of the data, and can perform poorly if n < 30.

Scott's choice

$$h = \frac{3.5 \sigma}{n^{1/3}}$$

where σ is the sample standard deviation.

Square-Root Choice

$$k = \sqrt{n}$$

which takes the square root of the number of data points in the sample (used by Excel histograms and many others).

Freedman–Diaconis' choice

$$h = 2 \, \frac{\operatorname{IQR}(x)}{n^{1/3}}$$

which is based on the interquartile range.

Choice based on minimization of an estimated L2 risk function

$$\underset{h}{\arg\min} \; \frac{2\bar{m} - v}{h^2}$$

where $\bar{m}$ and $v$ are the mean and biased variance of a histogram with bin width h, $\bar{m} = \frac{1}{k}\sum_{i=1}^{k} m_i$ and $v = \frac{1}{k}\sum_{i=1}^{k} (m_i - \bar{m})^2$.
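The named bin-count rules are easy to compare side by side. The following sketch uses only the Python standard library and assumes Python 3.8 or later for `statistics.quantiles`. The function names are hypothetical, rounding the square-root rule up to an integer is an assumption, and the interquartile range depends on the quantile method (the library default is used here).

```python
import math
import statistics

def sturges_bins(n):
    """Sturges' formula: k = ceil(log2(n) + 1)."""
    return math.ceil(math.log2(n) + 1)

def square_root_bins(n):
    """Square-root choice, rounded up to a whole number of bins."""
    return math.ceil(math.sqrt(n))

def scott_width(data):
    """Scott's choice: h = 3.5 * sigma / n^(1/3), sigma = sample std deviation."""
    return 3.5 * statistics.stdev(data) / len(data) ** (1 / 3)

def freedman_diaconis_width(data):
    """Freedman-Diaconis choice: h = 2 * IQR / n^(1/3)."""
    q1, _, q3 = statistics.quantiles(data, n=4)
    return 2 * (q3 - q1) / len(data) ** (1 / 3)

def bins_from_width(data, h):
    """k = ceil((max - min) / h) for a suggested bin width h."""
    return math.ceil((max(data) - min(data)) / h)
```

A width-based rule is turned into a bin count by passing its result to `bins_from_width`.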

Fig 3.2 a) ordinary histogram b) cumulative histogram

3.3 Image histogram

An image histogram is a type of histogram which acts as a graphical representation of

the tonal distribution in a digital image. It plots the number of pixels for each tonal value. By

looking at the histogram for a specific image a viewer will be able to judge the entire tonal

distribution at a glance.

Image histograms are present on many modern digital cameras. Photographers can use

them as an aid to show the distribution of tones captured, and whether image detail has been lost

to blown-out highlights or blacked-out shadows.

The horizontal axis of the graph represents the tonal variations, while the vertical axis

represents the number of pixels in that particular tone. The left side of the horizontal axis

represents the black and dark areas, the middle represents medium grey and the right hand side

represents light and pure white areas. The vertical axis represents the size of the area that is

captured in each one of these zones. Thus, the histogram for a very bright image with few dark

areas and/or shadows will have most of its data points on the right side and center of the graph.

Conversely, the histogram for a very dark image will have the majority of its data points on the

left side and center of the graph.
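Computing an image histogram amounts to counting pixels per tonal value. The sketch below assumes an 8-bit grayscale image stored as a list of rows; the function names and the dark/bright split at the middle of the tonal range are illustrative choices, not part of the report.

```python
def image_histogram(image, levels=256):
    """Number of pixels at each tonal value (index = grayscale value)."""
    hist = [0] * levels
    for row in image:
        for p in row:
            hist[p] += 1
    return hist

def tonal_balance(hist):
    """Fractions of pixels in the dark half and the bright half of the range."""
    total = sum(hist)
    mid = len(hist) // 2
    dark = sum(hist[:mid]) / total
    return dark, 1 - dark
```

A very bright image yields a small first fraction, matching the description of data points gathering on the right side of the graph.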

Fig 3.3.1 sunflower image

Fig 3.3.2 Histogram of Sunflower image

3.4 Image manipulation and histograms

Image editors typically have provisions to create a histogram of the image being edited.

The histogram plots the number of pixels in the image (vertical axis) with a particular

brightness value (horizontal axis). Algorithms in the digital editor allow the user to visually

adjust the brightness value of each pixel and to dynamically display the results as adjustments

are made. Improvements in picture brightness and contrast can thus be obtained.

In the field of Computer Vision, image histograms can be useful tools for thresholding.

Because the information contained in the graph is a representation of pixel distribution as a

function of tonal variation, image histograms can be analyzed for peaks and/or valleys which

can then be used to determine a threshold value. This threshold value can then be used for edge

detection, image segmentation, and co-occurrence matrices.
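One simple way to turn peaks and valleys into a threshold is sketched below. It is a heuristic chosen for illustration and is not taken from the report: the first peak is the tallest bin, the second peak is the bin that maximizes count times squared distance from the first peak, and the threshold is the lowest bin between them. The function name is hypothetical, and the approach presumes a roughly bimodal histogram.

```python
def valley_threshold(hist):
    """Gray level of the deepest valley between the two dominant peaks."""
    levels = range(len(hist))
    p1 = max(levels, key=lambda g: hist[g])                      # tallest peak
    p2 = max(levels, key=lambda g: hist[g] * (g - p1) ** 2)      # distant second peak
    lo, hi = sorted((p1, p2))
    return min(range(lo, hi + 1), key=lambda g: hist[g])
```

Pixels at or below the returned level can then be assigned to one class and the rest to the other, for example as a first step in segmentation.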

3.5 Histogram equalization

This method usually increases the global contrast of many images, especially when the

usable data of the image is represented by close contrast values. Through this adjustment, the

intensities can be better distributed on the histogram. This allows for areas of lower local

contrast to gain a higher contrast. Histogram equalization accomplishes this by effectively

spreading out the most frequent intensity values.

The method is useful in images with backgrounds and foregrounds that are both bright

or both dark. In particular, the method can lead to better views of bone structure in x-ray

images, and to better detail in photographs that are over or under-exposed. A key advantage of

the method is that it is a fairly straightforward technique and an invertible operator. So in theory,

if the histogram equalization function is known, then the original histogram can be recovered.

The calculation is not computationally intensive. A disadvantage of the method is that it is

indiscriminate. It may increase the contrast of background noise, while decreasing the usable

signal.

In scientific imaging where spatial correlation is more important than intensity of signal (such as separating DNA fragments of quantized length), the small signal-to-noise ratio usually hampers visual detection. Histogram equalization provides better detectability of fragment size distributions, with savings in DNA replication, toxic fluorescent markers, and strong UV source requirements, whilst reducing chemical and radiation risks in laboratory settings, and even allowing the use of otherwise unavailable techniques for reclaiming those DNA fragments unaltered by the partial fluorescent marking process.

Histogram equalization can also produce undesirable effects (like a visible image gradient) when applied to images with low color depth. For example, if applied to an 8-bit image displayed with an 8-bit gray-scale palette, it will further reduce the color depth (number of unique shades of gray) of the image. Histogram equalization works best when applied to images with much higher color depth than palette size, like continuous data or 16-bit gray-scale images.

There are two ways to think about and implement histogram equalization, either as an image change or as a palette change. The operation can be expressed as P(M(I)), where I is the original image, M is the histogram equalization mapping operation, and P is a palette. If we define a new palette as P'=P(M) and leave image I unchanged, then histogram equalization is implemented as a palette change. On the other hand, if palette P remains unchanged and the image is modified to I'=M(I), then the implementation is by image change. In most cases, palette change is better as it preserves the original data.

Generalizations of this method use multiple histograms to emphasize local contrast, rather than overall contrast. Examples of such methods include adaptive histogram equalization and contrast limited adaptive histogram equalization (CLAHE).

Histogram equalization also seems to be used in biological neural networks so as to maximize the output firing rate of the neuron as a function of the input statistics. This has been demonstrated in particular in the fly retina. Histogram equalization is a specific case of the more general class of histogram remapping methods. These methods seek to adjust the image to make it easier to analyze or to improve its visual quality (e.g., retinex).
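The mapping M can be built from the cumulative histogram, as in the following sketch. It uses the common textbook form in which the cumulative count is rescaled to the full gray-level range; the report does not give the formula, so this form and the function names are assumptions. The image is a list of rows and the histogram has one bin per gray level.

```python
def equalization_map(hist):
    """Look-up table M: old gray level -> equalized gray level."""
    levels, total = len(hist), sum(hist)
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    cdf_min = next(c for c in cdf if c > 0)   # CDF at the darkest occupied level
    if total == cdf_min:
        return list(range(levels))            # single-valued image: nothing to spread
    scale = (levels - 1) / (total - cdf_min)
    return [max(0, round((c - cdf_min) * scale)) for c in cdf]

def apply_map(image, mapping):
    """Image change I' = M(I): replace every pixel through the look-up table."""
    return [[mapping[p] for p in row] for row in image]
```

Applying the table with `apply_map` corresponds to the image-change implementation; composing the table with the palette instead leaves the pixel data untouched, which is the palette-change view.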

3.6 Back projection

The back projection (or "back project") of a histogrammed image is the re-application of the modified histogram to the original image, functioning as a look-up table for pixel brightness values.

For each group of pixels taken from the same position in all input single-channel images, the function writes the histogram bin value to the destination image, where the coordinates of the bin are determined by the values of the pixels in this input group. In terms of statistics, the value of each output image pixel characterizes the probability that the corresponding input pixel group belongs to the object whose histogram is used.
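For a single-channel image, back projection is a table look-up, as the sketch below illustrates. It assumes one histogram bin per gray level and scales the result by the tallest bin so that outputs lie in [0, 1]; that normalization and the function name are illustrative assumptions, and the object histogram must contain at least one non-zero bin.

```python
def back_project(image, object_hist):
    """Replace each pixel by the (normalized) histogram value of its gray level."""
    peak = max(object_hist)
    return [[object_hist[p] / peak for p in row] for row in image]
```

High output values mark pixels whose gray level is common in the object's histogram, so the result can be read as a rough likelihood map for the object.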

3.7 Histogram matching

Histogram matching is an image processing method that adjusts the colors of one image so that its histogram matches the histogram of another image.

Fig 3.7 An example of histogram matching

It is possible to use histogram matching to balance detector responses as a relative

detector calibration technique. It can be used to normalize two images, when the images were

acquired at the same local illumination (such as shadows) over the same location, but by

different sensors, atmospheric conditions or global illuminations.
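A common way to implement histogram matching is to align the cumulative distributions of the two images. The sketch below follows that approach under stated assumptions: both histograms have one bin per gray level and are non-empty, the function name is hypothetical, and the cumulative distributions are compared as cross-multiplied integers to avoid floating-point ties.

```python
from itertools import accumulate

def matching_map(source_hist, reference_hist):
    """Look-up table sending each source level to the reference level of equal CDF."""
    src = list(accumulate(source_hist))
    ref = list(accumulate(reference_hist))
    n_src, n_ref = src[-1], ref[-1]
    # For each source level, take the smallest reference level whose
    # cumulative fraction reaches the source level's cumulative fraction.
    return [
        next(g for g, r in enumerate(ref) if r * n_src >= s * n_ref)
        for s in src
    ]
```

Applying the resulting table to the source image pixel by pixel gives an image whose histogram approximates that of the reference.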

CHAPTER- 4

INTRODUCTION TO MATLAB

4.1 What Is MATLAB?

MATLAB is a high-performance language for technical computing. It integrates

computation, visualization, and programming in an easy-to-use environment where problems

and solutions are expressed in familiar mathematical notation.

version7.9.1 ( 2009 a)

Typical uses include:

Math and computation

Algorithm development

Data acquisition

Modeling, simulation, and prototyping

Data analysis, exploration, and visualization

Scientific and engineering graphics

Application development, including graphical user interface building.

MATLAB is an interactive system whose basic data element is an array that does not require dimensioning. This allows you to solve many technical computing problems, especially those with matrix and vector formulations, in a fraction of the time it would take to write a program in a scalar, noninteractive language such as C or FORTRAN.

The name MATLAB stands for matrix laboratory. MATLAB was originally written to

provide easy access to matrix software developed by the LINPACK and EISPACK projects.

Today, MATLAB engines incorporate the LAPACK and BLAS libraries, embedding the state of

the art in software for matrix computation.

MATLAB has evolved over a period of years with input from many users. In university

environments, it is the standard instructional tool for introductory and advanced courses in

mathematics, engineering, and science. In industry, MATLAB is the tool of choice for high-

productivity research, development, and analysis.

MATLAB features a family of add-on application-specific solutions called toolboxes.

Very important to most users of MATLAB, toolboxes allow you to learn and apply specialized

technology. Toolboxes are comprehensive collections of MATLAB functions (M-files) that

extend the MATLAB environment to solve particular classes of problems. Areas in which

toolboxes are available include signal processing, control systems, neural networks, fuzzy logic,

wavelets, simulation, and many others.

4.2 The MATLAB System:

The MATLAB system consists of five main parts:

Development Environment:

This is the set of tools and facilities that help you use MATLAB functions and files.

Many of these tools are graphical user interfaces. It includes the MATLAB desktop and

Command Window, a command history, an editor and debugger, and browsers for viewing help,

the workspace, files, and the search path.

The MATLAB Mathematical Function Library:

This is a vast collection of computational algorithms ranging from elementary functions like sum, sine, cosine, and complex arithmetic, to more sophisticated functions like matrix inverse, matrix eigenvalues, Bessel functions, and fast Fourier transforms.

The MATLAB Language:

This is a high-level matrix/array language with control flow statements, functions, data

structures, input/output, and object-oriented programming features. It allows both

"programming in the small" to rapidly create quick and dirty throw-away programs, and

"programming in the large" to create complete large and complex application programs.

Graphics:

MATLAB has extensive facilities for displaying vectors and matrices as graphs, as well

as annotating and printing these graphs. It includes high-level functions for two-dimensional

and three-dimensional data visualization, image processing, animation, and presentation

graphics. It also includes low-level functions that allow you to fully customize the appearance

of graphics as well as to build complete graphical user interfaces on your MATLAB

applications.

4.3 The MATLAB Application Program Interface (API):

This is a library that allows you to write C and FORTRAN programs that interact with

MATLAB. It includes facilities for calling routines from MATLAB (dynamic linking), calling

MATLAB as a computational engine, and for reading and writing MAT-files.

MATLAB DESKTOP:-

Mat lab Desktop is the main Mat lab application window. The desktop contains five sub

windows, the command window, the workspace browser, the current directory window, the

command history window, and one or more figure windows, which are shown only when the

user displays a graphic.

The command window is where the user types MATLAB commands and expressions at

the prompt (>>) and where the output of those commands is displayed. MATLAB defines the

workspace as the set of variables that the user creates in a work session. The workspace browser

shows these variables and some information about them. Double clicking on a variable in the

workspace browser launches the Array Editor, which can be used to obtain information and

in some instances edit certain properties of the variable.

The Current Directory tab above the workspace tab shows the contents of the current directory, whose path is shown in the current directory window. For example, in the Windows operating system the path might be C:\MATLAB\Work, indicating that directory "Work" is a subdirectory of the main directory "MATLAB", which is installed in drive C. Clicking on the arrow in the current directory window shows a list of recently used paths. Clicking on the button to the right of the window allows the user to change the current directory.

MATLAB uses a search path to find M-files and other MATLAB-related files, which are organized in directories in the computer file system. Any file run in MATLAB must reside in the current directory or in a directory that is on the search path. By default, the files supplied with MATLAB and MathWorks toolboxes are included in the search path. The easiest way to see which directories are on the search path, or to add or modify a search path, is to select Set Path from the File menu on the desktop, and then use the Set Path dialog box. It is good practice to add any commonly used directories to the search path to avoid repeatedly having to change the current directory.
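The same search-path operations can also be done from the command line. The following is a minimal sketch, not part of the original project; the folder name rdh_demo_path is hypothetical and is created in the temporary directory only for illustration.

```matlab
% Sketch: inspecting and extending the search path from the command line.
% 'rdh_demo_path' is a hypothetical folder used only for this illustration.
myDir = fullfile(tempdir, 'rdh_demo_path');
if ~exist(myDir, 'dir')
    mkdir(myDir);                   % create the folder if it is missing
end
addpath(myDir);                     % put the folder on the search path
onPath = contains(path, myDir);     % path returns the search path as one string
disp(onPath)
rmpath(myDir);                      % undo the change
```

Changes made with addpath last only for the current session unless the path is saved.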

The Command History window contains a record of the commands a user has entered in the command window, including both current and previous MATLAB sessions. Previously entered MATLAB commands can be selected and re-executed from the command history window by right-clicking on a command or sequence of commands. This action launches a menu from which to select various options in addition to executing the commands. This is a useful feature when experimenting with various commands in a work session.

4.4 Using the MATLAB Editor to create M-Files:

The MATLAB editor is both a text editor specialized for creating M-files and a graphical

MATLAB debugger. The editor can appear in a window by itself, or it can be a sub window in

the desktop. M-files are denoted by the extension .m, as in pixelup.m. The MATLAB editor

window has numerous pull-down menus for tasks such as saving, viewing, and debugging files.

Because it performs some simple checks and also uses color to differentiate between

various elements of code, this text editor is recommended as the tool of choice for writing and

editing M-functions. To open the editor, type edit at the prompt; typing edit filename opens the M-file filename.m in

an editor window, ready for editing. As noted earlier, the file must be in the current directory, or

in a directory in the search path.

4.5 Getting Help:

The principal way to get help online is to use the MATLAB Help Browser, opened as a separate window either by clicking on the question mark symbol (?) on the desktop toolbar, or by typing helpbrowser at the prompt in the command window. The Help Browser is a web browser integrated into the MATLAB desktop that displays Hypertext Markup Language (HTML) documents.

The Help Browser consists of two panes: the help navigator pane, used to find information, and the display pane, used to view the information. Self-explanatory tabs in the navigator pane are used to perform a search.

CHAPTER-5

EXPERIMENTAL RESULTS

5.1 USING BINARY VALUES

5.1.1 INPUT IMAGE1

Fig 5.1.1 Original image

This is the original Lena image, taken as the input from which the encrypted image is produced.

5.1.2 INPUT IMAGE2

Fig 5.1.2 Distribution of pixel differences

This figure shows the distribution of pixel differences: the differences between adjacent pixels are computed and their histogram is plotted.
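As an illustration of how such a distribution and its peak point can be obtained, here is a minimal sketch on a toy 3x3 matrix. The pixel values are hypothetical, and the column-by-column scan order is an assumption made to keep the example short.

```matlab
% Toy 3x3 "image" with hypothetical values, scanned column by column.
I = uint8([10 10 11; 10 12 11; 10 12 11]);
x = double(I(:)');                        % double avoids uint8 saturation at 0
d = [x(1), abs(x(1:end-1) - x(2:end))];   % d(1) keeps the first pixel itself
% Histogram of the differences 0..255 (bin k counts the value k-1).
counts = accumarray(d(2:end)' + 1, 1, [256 1]);
[~, idx] = max(counts);
p = idx - 1;                              % peak point: most frequent difference
disp(p)
```

Because neighboring pixels are usually similar, the peak tends to lie at or near zero, which is where most of the hiding capacity comes from.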

5.1.3 OUTPUT IMAGE1

Fig 5.1.3 Encrypted image

This is the encrypted version of the Lena image. After the pixel differences are found, the data is embedded at the peak point of the pixel differences.
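The embedding rule can be sketched on a short pixel sequence. This is an illustrative sketch under stated assumptions: the sequence x, the peak point p = 0 and the message bits b are hypothetical, and the handling of pixels at 0 or 255 is left out.

```matlab
x = [10 10 12 11 11];         % hypothetical pixel sequence
p = 0;                        % assumed peak difference
b = [1 0];                    % hypothetical message bits
y = x;
k = 0;                        % number of bits embedded so far
for i = 2:numel(x)
    di = abs(x(i-1) - x(i));  % difference to the previous original pixel
    s = 1;                    % shift direction
    if x(i) < x(i-1)
        s = -1;
    end
    if di > p
        y(i) = x(i) + s;      % move away from the peak to make room
    elseif di == p && k < numel(b)
        k = k + 1;
        y(i) = x(i) + s*b(k); % bit 1 shifts the pixel, bit 0 keeps it
    end
end
disp(y)
```

Each pixel changes by at most one gray level, which is why the distortion stays low.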

5.1.4 OUTPUT IMAGE2

Fig 5.1.4 Restored image

This figure shows the restored image, obtained after the hidden data has been extracted and the original image recovered.
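Extraction and restoration can be sketched in the same way. The marked sequence y below is hypothetical and is assumed to have been produced with peak point p = 0; the scan runs forward so that the previous pixel is always restored before it is used.

```matlab
y = [10 11 13 10 11];         % hypothetical marked sequence
p = 0;                        % peak point assumed to be known to the receiver
x = y;                        % x(1) is never modified by the embedding
bits = [];
for i = 2:numel(y)
    e = abs(y(i) - x(i-1));   % difference to the restored previous pixel
    if e == p
        bits(end+1) = 0;      % unshifted peak difference carries a 0
    elseif e == p + 1
        bits(end+1) = 1;      % peak difference shifted by one carries a 1
    end
    if e > p
        x(i) = y(i) - sign(y(i) - x(i-1));   % undo the one-level shift
    end
end
disp(x)
disp(bits)
```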

5.1.5 OUTPUT IMAGE3

Fig 5.1.5 Command window output showing the PSNR value

The command window displays outputs such as the PSNR, the bits per pixel, the transmitted bits, the received bits and the capacity in bits.
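For reference, PSNR for 8-bit images is conventionally computed from the mean squared error between two images. The sketch below uses two small hypothetical matrices; it is not the data behind the figure.

```matlab
% Hypothetical 8-bit values; 'marked' differs from 'orig' in two pixels.
orig   = [52 55 61; 59 79 61; 76 62 59];
marked = orig;
marked(2,2) = 80;
marked(3,1) = 75;
mse    = mean((orig(:) - marked(:)).^2);   % mean squared error
psnrDb = 10*log10(255^2 / mse);            % 255 is the 8-bit peak value
disp(psnrDb)
```

A higher PSNR means the marked image is closer to the original; identical images give an MSE of zero and an infinite PSNR.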

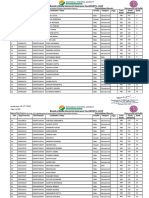

TABLE 5.1: Hiding capacity and distortion for test images with L=1

Host image (256x256)   Np     Cap (bits)   PSNR (dB)   Bit rate (b/pixel)

Lena                   7388   7382         30.5037     0.0035

CHAPTER-6

ADVANTAGES & DISADVANTAGES

6.1. ADVANTAGES:

Reversible data hiding can be used for high-quality images.

The pixel-level adjustment can effectively reduce the distortion caused by data embedding.

It is used to protect images with high security.

The hidden information can be read only by authorized persons.

6.2. DISADVANTAGES:

1) Noise occurs when we receive the original image.

2) The histogram modification technique does not work well when an image has an equal (flat) histogram.

3) This algorithm is not used for RGB images.

CHAPTER-7

APPLICATIONS

7.1. APPLICATIONS

Security applications

Satellite applications

Military applications

Digital video applications

CHAPTER 8

FUTURE SCOPE

The future scope of this project is to increase the hiding capacity and reduce the bit error rate. This work used only images for hiding data; in the future it is expected to be extended to videos as well. Our forward improved histogram shifting method significantly increases PSNR and embedding capacity with reduced shifting of pixels. The complexity of our method is the same as that of the Hong et al. (2010) method, as our method also scans the image twice. Handling of distortion still remains a difficult task. A more robust system would also significantly advance the area. Reversible encryption that stays secure under any attack may remain a distant goal and a challenging field in the near future.

CHAPTER 9

CONCLUSION

In this project, we presented an efficient extension of the histogram modification technique that considers the differences between adjacent pixels rather than simple pixel values. One common drawback of virtually all histogram modification techniques is that they must provide a side communication channel for pairs of peak and minimum points.

To solve this problem, we introduced a binary tree that predetermines the multiple peak points used to embed messages; thus, the only information the sender and recipient must share is the tree level L. In addition, since neighboring pixels are often highly correlated and have spatial redundancy, the differences have a Laplacian-like distribution. This enables us to achieve large hiding capacity while keeping embedding distortion low.

SOURCE CODE:

clc;                                        % step 1: read the host image
clear;
close all;
[fname,fpath]=uigetfile('*.jpg','select an image');
I=imread(fullfile(fpath,fname));
if size(I,3)==3
    I=rgb2gray(I);                          % the method works on grayscale images
end
I=imresize(I,[256 256]);
imshow(I);
title('original image');

J=double(I);                                % step 2: differences of adjacent pixels
X=J(:)';                                    % column-wise scan; double avoids uint8 saturation
d=[X(1) abs(X(1:end-1)-X(2:end))];          % d(1) keeps the first pixel itself
H=imhist(uint8(d(2:end)));
[~,idx]=max(H);
p=idx-1;                                    % peak point: most frequent difference
figure,imhist(uint8(d(2:end)));
title('distribution of differences');
xlabel('difference value');
ylabel('no of pixels');

b=[0 1 1 0 0 1];                            % step 3: embed the bits at the peak point
Y=X;                                        % pixels at 0 or 255 are not pre-adjusted here
k=0;                                        % number of bits embedded so far
for i=2:numel(X)
    s=1;                                    % shift direction
    if X(i)<X(i-1)
        s=-1;
    end
    if d(i)>p
        Y(i)=X(i)+s;                        % move away from the peak to make room
    elseif d(i)==p && k<numel(b)
        k=k+1;
        Y(i)=X(i)+s*b(k);                   % bit 1 shifts the pixel, bit 0 keeps it
    end
end
Yr=uint8(reshape(Y,size(J)));
figure,imshow(Yr);
title('encrypted image');

x=Y;                                        % step 4: restore the original pixels
for i=2:numel(Y)                            % forward scan: x(i-1) is already restored
    if abs(Y(i)-x(i-1))>p
        x(i)=Y(i)-sign(Y(i)-x(i-1));        % undo the one-level shift
    end
end

br=[];                                      % step 5: extract the hidden bits
for i=2:numel(Y)
    e=abs(Y(i)-x(i-1));
    if e==p
        br=[br 0];
    elseif e==p+1
        br=[br 1];
    end
end
br=br(1:min(numel(b),numel(br)));           % peak points beyond the message carry 0

xr=uint8(reshape(x,size(J)));
figure,imshow(xr);
title('decrypted image');
lossless=isequal(x,X)                       % 1 when the host image is restored exactly
mse=mean((X-Y).^2);                         % distortion of the encrypted image vs. the original
PSNR=10*log10(255^2/mse)
Np=nnz(d(2:end)==p)                         % number of peak-point pixels
disp('transmitted bits')
b
disp('received bits');
br

CHAPTER-10

REFERENCES

[1] M. Wu and B. Liu, “Data hiding in image and video: Part I-Fundamental issues and solutions,” IEEE Trans. Image Process., vol. 12, no. 6, pp. 685–695, Jun. 2003.

[2] M. Wu, H. Yu, and B. Liu, “Data hiding in image and video: Part II-Designs and applications,” IEEE Trans. Image Process., vol. 12, no. 6, pp. 696–705, Jun. 2003.

[3] J. Fridrich, M. Goljan, and R. Du, “Invertible authentication,” in Proc. SPIE Security

Watermarking Multimedia Contents III, San Jose, CA, Jan. 2001, vol. 4314, pp. 197–208.

[4] J. Fridrich, M. Goljan, and R. Du, “Lossless data embedding for