FibreCAT SX Series

Operating Manual

Edition August 2009 (Version 3.1, 2009-08-24)

Comments… Suggestions… Corrections…

The User Documentation Department would like to know your

opinion on this manual. Your feedback helps us to optimize our

documentation to suit your individual needs.

Feel free to send us your comments by e-mail to:

manuals@ts.fujitsu.com

Certified documentation

according to DIN EN ISO 9001:2000

To ensure a consistently high quality standard and

user-friendliness, this documentation was created to

meet the regulations of a quality management system which

complies with the requirements of the standard

DIN EN ISO 9001:2000.

cognitas. Gesellschaft für Technik-Dokumentation mbH

www.cognitas.de

Copyright and Trademarks

Copyright © Fujitsu Technology Solutions GmbH 2009.

All rights reserved.

Delivery subject to availability; right of technical modifications reserved.

All hardware and software names used are trademarks of their respective manufacturers.

This manual is printed

on paper treated with

chlorine-free bleach.

Contents

1 Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 7

1.1 Product Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 7

1.2 Management Software . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 8

1.2.1 FibreCAT SX Manager Web-Based Interface . . . . . . . . . . . . . . . . . . . . . . 8

1.2.2 FibreCAT SX Manager Command-Line Interface . . . . . . . . . . . . . . . . . . . . 8

1.3 Target Group of the Manual . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

1.4 Structure of the Manual . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

1.5 Notational Conventions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 10

1.6 Technical Data . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

1.7 Installation and Configuration Checklist . . . . . . . . . . . . . . . . . . . . . . 15

2 Important Notes . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 17

2.1 Notes on Safety . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 17

2.2 Electrostatic-sensitive Component Label . . . . . . . . . . . . . . . . . . . . . . 19

2.3 CE Certificate . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19

2.4 RFI Suppression . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 20

2.5 Notes on Mounting the Rack . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 20

2.6 Notes on Transportation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 20

2.7 Environmental Protection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.8 Site Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 22

2.8.1 Physical Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 22

2.8.1.1 Dimension and Weight Specifications . . . . . . . . . . . . . . . . . . . . . . . 22

2.8.1.2 Weight and Placement Guidelines . . . . . . . . . . . . . . . . . . . . . . . . . 23

2.8.1.3 Ventilation Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

2.8.2 Environmental Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

2.8.3 Electrical Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

2.8.3.1 Electrical Guidelines . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

2.8.3.2 Site Wiring and Power Requirements . . . . . . . . . . . . . . . . . . . . . . . . 25

2.8.3.3 Cabling Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

2.8.4 Management Host Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

3 Hardware, Operating, and Indicator Components . . . . . . . . . . . . . . . . . . 27

3.1 Controller Enclosure . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 27

3.1.1 Components . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 27

3.1.2 Components and Indicators at the Front Side . . . . . . . . . . . . . . . . . . . . . 28

3.1.3 Ports and Switches at the Back Side . . . . . . . . . . . . . . . . . . . . . . . . . . 29

3.1.4 Indicators at the Back Side . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 31

3.2 Expansion Enclosure . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 33

3.2.1 Components. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 33

3.2.2 Components and Indicators at the Front Side . . . . . . . . . . . . . . . . . . . . . 33

3.2.3 Ports and Switches at the Back Side . . . . . . . . . . . . . . . . . . . . . . . . . . 33

3.2.4 Indicators at the Back Side . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 35

4 Connecting Hosts / Configurations . . . . . . . . . . . . . . . . . . . . . . . . . 37

4.1 Connecting Remote Management Hosts . . . . . . . . . . . . . . . . . . . . . . . 37

4.2 Installing the SCSI Enclosures Services Driver . . . . . . . . . . . . . . . . . . . 37

4.3 Connecting Direct Attached iSCSI Configurations . . . . . . . . . . . . . . . . . 38

4.3.1 Single Controller FibreCAT SX80 iSCSI With One Host . . . . . . . . . . . . . . . . 38

4.4 Host Interface Speed for FibreCAT SX (FC) . . . . . . . . . . . . . . . . . . . . . 39

4.5 Connecting Direct Attached FC Configurations . . . . . . . . . . . . . . . . . . . 41

4.5.1 FibreCAT SX60 / SX80 / SX88 With One Dual Port Host . . . . . . . . . . . . . . . . 42

4.5.2 FibreCAT SX60 / SX80 / SX88 With Two Dual Port Hosts . . . . . . . . . . . . . . . 43

4.5.2.1 Controller Failover Scenario . . . . . . . . . . . . . . . . . . . . . . . . . . . . 44

4.5.2.2 Path Failover Scenario . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 45

4.5.3 FibreCAT SX60 / SX80 / SX88 With Two Dual Port Hosts for High Performance . . . . 46

4.5.4 FibreCAT SX100 With Four Dual-Port Hosts . . . . . . . . . . . . . . . . . . . . . . 47

4.5.4.1 Controller Failover Scenario . . . . . . . . . . . . . . . . . . . . . . . . . . . . 49

4.6 Connecting Switch Attached iSCSI Configurations . . . . . . . . . . . . . . . . . 50

4.6.1 FibreCAT SX80 iSCSI With Two Dual Port Hosts and Two Switches . . . . . . . . . . 50

4.6.1.1 Controller Failover Scenario . . . . . . . . . . . . . . . . . . . . . . . . . . . . 51

4.7 Connecting Switch Attached FC Configurations . . . . . . . . . . . . . . . . . . 52

4.7.1 FibreCAT SX60 / SX80 / SX88 With One Switch and One Dual Port Host . . . . . . . 52

4.7.2 FibreCAT SX60 / SX80 / SX88 With Two Switches and One Dual Port Host . . . . . 54

4.7.2.1 Configuration Rules for VMware ESX Server 3.0.0 and 3.0.1 . . . . . . . . . . . 55

4.7.3 FibreCAT SX60 / SX80 / SX88 With Two Switches and Two Dual Port Hosts . . . . . 56

4.7.4 FibreCAT SX100 With One Switch and Two Dual-Port Hosts . . . . . . . . . . . . . 58

4.8 Supported HBAs for Solaris (SPARC) . . . . . . . . . . . . . . . . . . . . . . . . 59

5 Installing Enclosures . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

5.1 Safety Precautions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

5.2 Unpacking an Enclosure and Verifying its Contents . . . . . . . . . . . . . . . . 62

5.3 Preparing the Rack . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 63

5.4 Installing an Enclosure in a Rack . . . . . . . . . . . . . . . . . . . . . . . . . . 63

5.4.1 Rack-Mount Kit . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 63

5.4.2 Rack Mounting Position Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

5.4.3 Mounting Steps . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

5.5 Connecting Controller and Expansion Enclosures . . . . . . . . . . . . . . . . . 69

5.6 Connecting the AC Power . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 72

5.7 Testing the Enclosure Connections . . . . . . . . . . . . . . . . . . . . . . . . . 72

6 Configuring a System for the First Time . . . . . . . . . . . . . . . . . . . . . . 73

6.1 Setting the IP Address Using the CLI . . . . . . . . . . . . . . . . . . . . . . . . 73

6.2 FibreCAT SX Manager Web-Based Interface . . . . . . . . . . . . . . . . . . . . 76

6.2.1 Configuring Your Web Browser for the WBI . . . . . . . . . . . . . . . . . . . . . . 76

6.2.2 Logging In to WBI . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

6.2.3 Setting Date and Time . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

6.3 Driver Settings . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

6.4 Configuring Controller Enclosure Host Ports (FC) . . . . . . . . . . . . . . . . . 78

6.5 Configuring the Microsoft iSCSI Software Initiator (iSCSI) . . . . . . . . . . . . 78

6.6 Editing Registry Timeout Values for iSCSI Initiator . . . . . . . . . . . . . . . . 80

6.7 Install Native MPIO Functionality Under Windows 2008 for FibreCAT SX . . . . 81

6.8 Installing a License . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

6.9 Creating Virtual Disks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

6.10 Volume Mappings . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 82

6.11 Testing the Configuration . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 83

6.12 Logging Out of the WBI . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 84

6.13 Next Steps . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 84

7 Powering the System Off and On . . . . . . . . . . . . . . . . . . . . . . . . . . . 85

7.1 Powering Off the System . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 85

7.2 Powering On the System . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 85

Glossary and Abbreviations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 87

Figures . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 105

Tables . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 107

Related Documents and Links . . . . . . . . . . . . . . . . . . . . . . . . . . . 109

Index . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 111

1 Introduction

This guide describes how to install, initially configure, and operate FibreCAT® SX series storage systems. It applies to the following models:

● FibreCAT SX60 Fibre Channel (FC) Controller Enclosure

● FibreCAT SX80 Fibre Channel (FC) Controller Enclosure

● FibreCAT SX88 Fibre Channel (FC) Controller Enclosure

● FibreCAT SX80 iSCSI Controller Enclosure

● FibreCAT SX100 Fibre Channel (FC) Controller Enclosure

● FibreCAT SX Serial Attached SCSI (SAS) Expansion Enclosure

This guide does not apply to the FibreCAT SX40 model, which is covered by separate documents.

Where there are no differences between the five controller enclosure models, they are referred to collectively as the FibreCAT SX controller enclosure.

1.1 Product Overview

FibreCAT SX storage systems are high-performance storage solutions that combine

outstanding performance with high reliability, availability, flexibility, and manageability.

FibreCAT SX storage systems are modular, rackmountable, and scalable from a single controller enclosure configuration up to a maximum configuration of eight expansion enclosures behind one controller enclosure.

A controller enclosure can contain two RAID controller modules, which interact and provide failover capability for the data path. The enclosures can use SAS disk drives (SX80 / SX88 / SX100 only) or SATA disk drives, and they provide RAID functionality, caching, and disk storage.

The different characteristics of the FibreCAT SX models are itemized in “Technical Data” on

page 11.

The enclosures can be installed in Fujitsu PRIMECENTER racks or in standard 19-inch EIA

racks.

1.2 Management Software

The embedded management software FibreCAT SX Manager (FSM) includes the tools

outlined in the following topics:

● FibreCAT SX Manager’s Web-Based Interface (WBI)

● FibreCAT SX Manager’s Command-Line Interface (CLI)

1.2.1 FibreCAT SX Manager Web-Based Interface

The FSM web-based interface (WBI) is the primary interface for configuring and managing

FibreCAT SX family arrays. A web server resides in each controller module. WBI enables

you to manage FibreCAT SX family arrays from a web browser that is properly configured

and that can access a controller module through an Ethernet connection.

The WBI also includes monitoring and diagnostic features that enable you to view and

collect information that enhances the reliability, availability, and serviceability (RAS) of

FibreCAT SX family arrays. You can configure the transmission of event notifications

(alerts), which can be sent to the screen or to email addresses, and Simple Network

Management Protocol (SNMP) traps, which can be sent to a host system. Events are

recorded in an event log, which you can view.

Information about using the WBI is in its online help and in the “FibreCAT SX Series Administrator’s Guide”.

For first-time access to the WBI, each FibreCAT SX controller module has a default IP address (10.0.0.1, subnet mask 255.255.255.0). Use this address if the IP address cannot be set via the CLI (see “Setting the IP Address Using the CLI” on page 73), e.g. because the appropriate serial cable is not at hand.
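To use the default address, the management host's Ethernet interface needs an address in the same subnet as 10.0.0.1/255.255.255.0. The following Python sketch checks a planned host address against that default. The function name `can_reach_default` is hypothetical and is not part of FibreCAT SX Manager. The check covers only direct, unrouted reachability based on IP addressing. It does not test cabling or whether the controller is actually answering.

```python
import ipaddress

# Factory-default address of each controller module, as documented above.
DEFAULT_CONTROLLER = ipaddress.ip_interface("10.0.0.1/255.255.255.0")


def can_reach_default(host_ip: str, host_mask: str = "255.255.255.0") -> bool:
    """Hypothetical helper: True if a management host configured with
    host_ip/host_mask can reach the default controller address without a router."""
    host = ipaddress.ip_interface(f"{host_ip}/{host_mask}")
    ctrl_net = DEFAULT_CONTROLLER.network
    return (
        # Each side must consider the other to be on its own subnet.
        DEFAULT_CONTROLLER.ip in host.network
        and host.ip in ctrl_net
        # The host must not reuse the controller's address or the
        # subnet's network and broadcast addresses.
        and host.ip not in (DEFAULT_CONTROLLER.ip,
                            ctrl_net.network_address,
                            ctrl_net.broadcast_address)
    )


if __name__ == "__main__":
    for addr in ("10.0.0.50", "192.168.1.10", "10.0.0.1"):
        print(addr, can_reach_default(addr))
```

For example, a host set to 10.0.0.50 with mask 255.255.255.0 passes the check. A host left on a 192.168.1.x office address does not.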

1.2.2 FibreCAT SX Manager Command-Line Interface

The FSM embedded command line interface (CLI) enables you to configure and manage

an array using individual commands or command scripts through an out-of-band RS-232 or

Ethernet connection.

NOTE

i When a FibreCAT SX controller enclosure is configured for the first time, the IP address of each of its RAID controllers must be set. This step can only be performed using the CLI through an RS-232 connection, as described in “Setting the IP Address Using the CLI” on page 73.

All subsequent use of the CLI can be performed with a terminal emulation through an Ethernet connection.

Information about using the CLI is in the “FibreCAT SX Manager Command Line Interface

(CLI)” manual.

1.3 Target Group of the Manual

These operating instructions are intended for the person responsible for installing the hardware and operating the system correctly. They contain all the descriptions that are important for commissioning your FibreCAT SX storage system, insofar as these are not already part of the documentation of your server system.

To understand the different expansion options, you need knowledge of hardware and data transmission, as well as a basic knowledge of the operating system used.

1.4 Structure of the Manual

This manual consists of the following chapters:

● Important Notes

This chapter contains instructions on the safe operation of your storage system as well

as information about environmental protection and site requirements.

● Hardware, Operating, and Indicator Components

This chapter gives a detailed description of the main hardware components and the

position and meaning of the operating and indicator elements.

● Connecting Hosts / Configurations

This chapter shows some typical configurations.

● Installing Enclosures

This chapter describes how to install FibreCAT SX enclosures in a PRIMECENTER rack or in a standard 19-inch EIA rack cabinet, how to cable a controller enclosure to expansion enclosures, how to connect the power cords, and how to test the connections.

● Connecting Hosts (FC)

This chapter describes the FibreCAT SX system cable connections to hosts for several

different configurations.

● Configuring a System for the First Time

This chapter describes how to use the CLI and the WBI of the FibreCAT SX Manager to perform first-time configuration. The chapter also gives an overview of the required driver settings and describes how to perform a basic storage configuration to verify that your system is working.

● Powering the System Off and On

This chapter describes how to power off and power on the system when needed.

1.5 Notational Conventions

Italics              Commands, options, file names and path names are written in italic letters in continuous text.

fixed font           Commands and options in syntax descriptions as well as system output are written in a fixed font.

<variable>           Angle brackets are used to enclose variables which are to be replaced by actual values.

semi-bold            Highlights text

“Quotation marks”    References to documents and chapters or sections in this or other documents

I NOTE               Important information and tips

V CAUTION            Reference to hazards that can lead to personal injury, loss of data or damage to equipment

Table 1: Typographic Conventions

1.6 Technical Data

FibreCAT

General Specifications

SX60 SX80 / SX88 SX100 SX80 iSCSI

Type 4 Gbit/s storage system (SX60, SX80 / SX88, SX100) 1 Gbit/s storage system (SX80 iSCSI)

Rack space 2U / enclosure

Number of hard disk drives max. 12 / enclosure

Supported operating systems Windows 2003 R2, Windows 2008

Red Hat 4.x and 5.x

SuSE SLES 9 and 10

VMware ESX VMware ESX VMware ESX

2.5.3 and 3.x 3.x 3.0, 3.0.1, 3.0.2

SPARC Solaris SPARC Solaris

--- ---

9 and 10 10

Number of power supplies 2 / enclosure

hot plug and with full redundancy

Controller cache memory

512 MByte 1 GByte

per RAID controller

FibreCAP per RAID controller 1

Number of

– FC ports with SFP transceiver

(Small Form-factor Pluggable)

2 4 2

– or RJ45 Ethernet ports

(for iSCSI)

per RAID controller

Number of snapshots (default) 4

Number of snapshots (with license) 16 32 244 32

Max. number of expansion enclosures per controller enclosure 1 4 8 4

Table 2: General Specifications

Hard Disk Drives (FibreCAT SX60, SX80 / SX88, SX100, SX80 iSCSI)

Gross capacity, SATA II 7200 rpm HDD: 500 GByte, 750 GByte, 1 TByte

Gross capacity, SAS 15000 rpm HDD (available for SX80 / SX88 / SX100 only; mixing with SATA HDDs possible): 146 GByte, 300 GByte, 450 GByte

Access time with SATA II HDDs: 11 ms / 12 ms (read / write)

Access time with SAS HDDs (SX80 / SX88 / SX100 only): 4 ms / 5 ms (read / write)

Total capacity including the max. number of expansion enclosures:

SX60: 24 TByte (24 x 1 TByte)

SX80 / SX88: 56 TByte (56 x 1 TByte)

SX100: 108 TByte (108 x 1 TByte)

SX80 iSCSI: 56 TByte (56 x 1 TByte)

Table 3: Hard Disk Drives

Supported RAID Levels

RAID 0 Data striping across several hard disk drives (HDDs)

RAID 1 Mirrored HDDs

RAID 10 Data mirroring, then striping of the mirrored data across the HDDs

RAID 3 Data striping with a dedicated parity HDD

RAID 5 Data striping with distributed parity

RAID 50 Striping across multiple RAID 5 arrays

RAID 6 Data striping with double parity

Table 4: Supported RAID Levels
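The redundancy overhead of each level in Table 4 determines how much of the raw disk capacity is usable. The following Python sketch applies the usual textbook rules. The function `usable_capacity` and its minimum drive counts are illustrative assumptions, not limits enforced by FibreCAT SX Manager. The usable size the system reports for a virtual disk may differ.

```python
def usable_capacity(level: str, drives: int, drive_gb: float,
                    sub_arrays: int = 2) -> float:
    """Estimate usable capacity (GByte) of a RAID set of identical drives.

    sub_arrays is used only for RAID 50 (number of striped RAID 5 sets).
    Minimum drive counts are textbook assumptions, not FSM limits.
    """
    rules = {
        # level: (minimum drives, drives' worth of user data for n drives)
        "RAID 0": (2, lambda n: n),                # striping, no redundancy
        "RAID 1": (2, lambda n: 1),                # all drives hold the same data
        "RAID 10": (4, lambda n: n // 2),          # half the drives hold mirror copies
        "RAID 3": (3, lambda n: n - 1),            # one dedicated parity drive
        "RAID 5": (3, lambda n: n - 1),            # one drive's worth of distributed parity
        "RAID 6": (4, lambda n: n - 2),            # two drives' worth of parity
        "RAID 50": (6, lambda n: n - sub_arrays),  # one parity drive per RAID 5 set
    }
    if level not in rules:
        raise ValueError(f"unsupported RAID level: {level}")
    minimum, data_drives = rules[level]
    if drives < minimum:
        raise ValueError(f"{level} needs at least {minimum} drives")
    if level == "RAID 10" and drives % 2:
        raise ValueError("RAID 10 needs an even number of drives")
    if level == "RAID 50" and (sub_arrays < 2 or drives % sub_arrays
                               or drives // sub_arrays < 3):
        raise ValueError("RAID 50 needs equal RAID 5 sets of at least 3 drives")
    return data_drives(drives) * drive_gb


if __name__ == "__main__":
    # One fully populated enclosure: 12 drives of 1 TByte (1000 GByte) each.
    for lvl in ("RAID 0", "RAID 10", "RAID 5", "RAID 6", "RAID 50"):
        print(lvl, usable_capacity(lvl, 12, 1000), "GByte")
```

Under these assumptions, twelve 1 TByte drives yield about 11 TByte as RAID 5, about 10 TByte as RAID 6 or as RAID 50 with two sets, and 6 TByte as RAID 10.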

Management

Diagnostics of non-data characteristics Signalling and monitoring via SES (SCSI enclosure services) protocol and LEDs

Management interfaces RS232 (mini-DB9)

10/100 Ethernet (RJ45)

Supported protocol SNMP

Administration FibreCAT SX Manager (FSM) with web based interface (WBI)

and command line interface (CLI)

Table 5: Management

FibreCAT

Options

SX60 SX80 / SX88 SX100 SX80 iSCSI

HDDs Hot plug HDDs (SATA and SAS, mixing possible)

RAID controller 2nd RAID controller

Max. number of expansion enclosures per controller enclosure 1 4 8 4

Table 6: Options

Electrical Values per Enclosure (Maximum Values)

Power supply 2 hot-plug redundant modules with 1 integrated fan each

Apparent power 600 VA (230 V AC, 50 Hz)

Max. electric current 2.61 A (230 V AC, 50 Hz)

Continuous load 535 W

Max. real power 572 W (230 V AC, 50 Hz)

Power factor 0.953 (230 V AC, 50 Hz)

Peak load 650 W

Rated voltage 115 V – 240 V

Rated frequency 50 Hz – 60 Hz

Table 7: Electrical Values per Enclosure
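The values in Table 7 are related by the standard AC power formulas: power factor = real power / apparent power, and current = apparent power / voltage. The Python sketch below reproduces the 230 V figures. It also sums the worst-case current for several enclosures on one circuit, for example when planning the separate fused socket per cabinet that the safety notes require. The helper names are hypothetical. The sum assumes that every enclosure draws its maximum at the same time.

```python
# Maximum values per enclosure at 230 V AC, 50 Hz, taken from Table 7.
APPARENT_POWER_VA = 600.0
REAL_POWER_W = 572.0
LINE_VOLTAGE_V = 230.0


def power_factor(real_w: float, apparent_va: float) -> float:
    """Power factor = real power / apparent power."""
    return real_w / apparent_va


def line_current(apparent_va: float, voltage_v: float) -> float:
    """RMS current drawn for a given apparent power at a given voltage."""
    return apparent_va / voltage_v


def circuit_current(enclosures: int, voltage_v: float = LINE_VOLTAGE_V) -> float:
    """Worst-case current if every enclosure on one circuit draws its maximum."""
    return enclosures * line_current(APPARENT_POWER_VA, voltage_v)


if __name__ == "__main__":
    print(f"power factor: {power_factor(REAL_POWER_W, APPARENT_POWER_VA):.3f}")  # 0.953
    print(f"current: {line_current(APPARENT_POWER_VA, LINE_VOLTAGE_V):.2f} A")    # 2.61 A
    print(f"5 enclosures: {circuit_current(5):.1f} A")
```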

Ambient Conditions (DIN EN 60721-3-X)

Operating temperature 5°C to 40°C (IEC 721)

Non-operating temperature -40°C to 70°C

Relative humidity 10% – 90% (non-condensing)

Table 8: Ambient Conditions
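When you check a planned site against Table 8, you can encode the limits directly. This Python sketch is illustrative only. The function name `within_ambient_limits` is hypothetical. The sketch also assumes that the humidity range applies to both operating and non-operating conditions, which the table does not state explicitly.

```python
# Limits from Table 8 (inclusive ranges).
OPERATING_TEMP_C = (5.0, 40.0)
NON_OPERATING_TEMP_C = (-40.0, 70.0)
RELATIVE_HUMIDITY_PCT = (10.0, 90.0)  # assumption: applies in both states


def within_ambient_limits(temp_c: float, humidity_pct: float,
                          operating: bool = True,
                          condensing: bool = False) -> bool:
    """True if a measured temperature/humidity pair satisfies Table 8."""
    t_min, t_max = OPERATING_TEMP_C if operating else NON_OPERATING_TEMP_C
    h_min, h_max = RELATIVE_HUMIDITY_PCT
    return (t_min <= temp_c <= t_max
            and h_min <= humidity_pct <= h_max
            and not condensing)  # condensation is never permitted


if __name__ == "__main__":
    print(within_ambient_limits(22.0, 45.0))                    # server room
    print(within_ambient_limits(-20.0, 50.0, operating=False))  # cold storage
```

A device that has just been brought in from the cold may pass this check on paper and still carry condensation. Observe the acclimatization note in the safety chapter in any case.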

Heat Dissipation per Enclosure

Max. heat dissipation 1926 kJ/h

Table 9: Heat Dissipation per Enclosure
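The figure 1926 in Table 9 matches the 535 W continuous load from Table 7 converted to kilojoules per hour (1 W = 3.6 kJ/h). The Python sketch below performs that conversion. For air-conditioning planning, it also expresses the load in BTU/h (1 W ≈ 3.412 BTU/h) and totals it for several enclosures. The helper names are hypothetical. The rack total is a worst case that assumes every enclosure dissipates its maximum.

```python
# 1 W = 1 J/s = 3600 J/h = 3.6 kJ/h; 1 W is approximately 3.412 BTU/h.
KJ_PER_H_PER_WATT = 3.6
BTU_PER_H_PER_WATT = 3.412

CONTINUOUS_LOAD_W = 535.0  # Table 7, per enclosure


def heat_kj_per_h(watts: float) -> float:
    """Convert an electrical load in W to heat output in kJ/h."""
    return watts * KJ_PER_H_PER_WATT


def heat_btu_per_h(watts: float) -> float:
    """Convert an electrical load in W to heat output in BTU/h."""
    return watts * BTU_PER_H_PER_WATT


def rack_heat_kj_per_h(enclosures: int,
                       watts_each: float = CONTINUOUS_LOAD_W) -> float:
    """Worst-case heat load of several enclosures in one rack."""
    return enclosures * heat_kj_per_h(watts_each)


if __name__ == "__main__":
    print(f"{heat_kj_per_h(CONTINUOUS_LOAD_W):.0f} kJ/h")  # 1926 kJ/h
    print(f"{heat_btu_per_h(CONTINUOUS_LOAD_W):.0f} BTU/h")
    print(f"{rack_heat_kj_per_h(5):.0f} kJ/h for 5 enclosures")
```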

Dimensions per Enclosure

Rack space (H x W x D)

H 3.45 inch / 8.76 cm (2 U)

W 17.56 inch / 44.60 cm

D 22.5 inch / 57.15 cm

Weight max. 33.6 kg (depending on configuration)

Table 10: Dimensions per Enclosure

Compliance with Standards

Product safety UL listed, cUL, CE, CSA-C22.2, EN 60950-2000,

IEC 60950, GS, GOST, S-Mark

Approval GS, CSA US/C, CB certificate

Electromagnetic compatibility FCC Part 15 Class B, ICES-003, EN 300-386,

EN 55022 Class B, VCCI Class B, AS/NZS 3548 Class B,

BSMI Class B

Vibrations EN 61000-3, EN 61000-3-3

Immunity EN 300-386, EN 55024

CE certification EU Directives: 89/336/EEC (EMC);

EN 61000-3-2;

EN 61000-3-3; 73/23/EEC (product safety)

Environmental compliance RoHS compliant, WEEE compliant

Table 11: Compliance with Standards

14 FibreCAT SX Series Operating Manual

Introduction Installation and Configuration Checklist

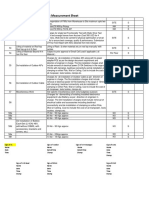

1.7 Installation and Configuration Checklist

Before you begin to install the system, make sure you have read the Safety manual.

The following table outlines the steps required to install and initially configure the system.

To ensure a successful installation, perform the tasks in the order in which they are

presented.

Step Task

1 Verify the site installation requirements (see page 22)

2 Install the enclosures (see page 61)

3 Cable the enclosures (see page 69)

4 Connect the power cords (see page 72)

5 Test the enclosure connections (see page 72)

6 Connect the hosts (see page 37)

7 Configure the system for the first time (see page 73)

Table 12: Installation and Configuration Checklist

2 Important Notes

2.1 Notes on Safety

In this section you will find information that you must note when using the storage system.

This device complies with the relevant safety standards for IT equipment.

I The following safety notes are also provided in the Safety manual. Also pay

attention to the notes in the operating manual of the connected system.

If you have any questions relating to setting up and operating your system in the

environment where you intend to use it, please contact your sales outlet or our customer

service team.

V CAUTION!

● The actions described in these instructions should only be performed by technical specialists. Equipment repairs should only be performed by service personnel. Unauthorized opening of the device and improper repairs could expose the user to risks (electric shock, energy hazards, fire hazards) and could also damage the equipment. Please note that any unauthorized opening of the device will invalidate the warranty and exclude all liability.

● Transport the device in its original packaging or in other suitable packaging

which will protect it against shock or impact.

● Read the notes on environmental conditions in “Technical Data” on page 11

before setting up and operating the device.

● If the device is brought in from a cold environment, condensation may form both

inside and on the outside of the machine.

● Wait until the device has acclimatized to room temperature and is absolutely dry

before starting it up. Material damage may be caused to the device if this

requirement is not observed.

● Check that the rated voltage specified on the type label is the same as the local

line voltage.

V CAUTION!

● The device must only be connected to a properly grounded wall outlet (the

device is fitted with a tested and approved power cable).

● Make sure that the power sockets on the device and the grounded outlet of the building’s wiring system are freely accessible.

● Switching off the device does not disconnect it from the power supply. To disconnect it completely, you must remove the power plugs.

● Before opening the unit, switch off the device and then pull out the power plugs.

● Route the cables in such a way that they do not form a potential hazard (make

sure no-one can trip over them) and that they cannot be damaged. When

connecting up a device, refer to the relevant notes in this manual.

● Never connect or disconnect data transmission lines during a storm (lightning

hazard).

● Systems which comprise a number of cabinets must use a separate fused

socket for each cabinet.

● The servers and the directly connected external storage subsystems should be

connected to the same power supply distributor. Otherwise you run the risk of

losing data if, for example, the central processing unit is still running but the

storage subsystem has failed during a power failure.

● Make sure that no objects (such as bracelets or paper clips) fall into the device and that no liquids spill into it (risk of electric shock or short circuit).

● In emergencies (e.g. damage to housings, power cords or controls or ingress of

liquids or foreign bodies), immediately power down the device, pull out the

power plugs and notify your service department.

● Note that proper operation of the system (in accordance with IEC 60950/DIN

EN 60950) is guaranteed only if slot covers are installed on all vacant slots

and/or dummies on all vacant bays and the housing cover is fitted (cooling, fire

protection, RFI suppression).

2.2 Electrostatic-sensitive Component Label

Electrostatic-sensitive components may be identified by the following sticker:

You must follow the instructions below when handling modules containing electrostatic-sensitive components:

● Discharge static electricity from your body (for example by touching a grounded metal object) before handling modules containing electrostatic-sensitive components.

● The equipment and tools you use must be free of static charge.

● Remove the power plug before installing or removing modules containing electrostatic-sensitive components.

● Only hold modules containing electrostatic-sensitive components by their edges.

● Do not touch any of the pins or track conductors on a module containing electrostatic-sensitive components.

● Use a grounding strap designed for this purpose to connect yourself to the system unit while you install the modules.

● Place all components on a static-safe base.

I An exhaustive description of the handling of modules containing electrostatic-

sensitive components can be found in the relevant European and international

standards (DIN EN 61340-5-1, ANSI/ESD S20.20).

2.3 CE Certificate

The shipped version of this device complies with the requirements of the EEC

directives 89/336/EEC “Electromagnetic compatibility” and 73/23/EEC “Low

voltage directive”. The device therefore qualifies for the CE certificate

(CE=Communauté Européenne).

2.4 RFI Suppression

All other equipment which is connected to this product must also have radio noise

suppression in accordance with EC Directive 89/336/EEC.

Products which meet this requirement are accompanied by a certificate to that effect issued

by the manufacturer and/or bear the CE mark. Products which do not meet this requirement

may be operated only with the special permission of the BZT (Bundesamt für Zulassungen

in der Telekommunikation).

I This is “Class A” equipment. It may cause harmful interference in residential areas. In this case, the user may be required to take appropriate measures and to bear the costs resulting from these measures.

2.5 Notes on Mounting the Rack

● For safety reasons, at least two people are required to install the rack-mounted model

because of its weight and size.

● When connecting and disconnecting cables, observe the notes in the operating manual

of your system and the comments in the “Important Notes” chapter in the technical

manual supplied with the rack.

● Ensure that the anti-tilt bracket is correctly mounted when you set up the rack.

● For safety reasons, no more than one unit may be withdrawn from the rack at any one

time during installation and maintenance work.

● If more than one unit is withdrawn from the rack at any one time, there is a danger that

the rack will tilt forward.

● The power supply to the rack must be installed by an authorized specialist (electrician).

2.6 Notes on Transportation

I Transport the storage subsystem in its original packaging or in other suitable

packaging which will protect it against shock or impact.

Do not unpack it until all transport maneuvers are completed.

If you need to lift or transport the storage system, ask someone to help you.

2.7 Environmental Protection

Environmentally friendly product design and development

This product has been designed in accordance with standards for “environmentally friendly

product design and development”. This means that the designers have taken into account

important criteria such as durability, selection of materials, emissions, packaging, the ease

with which the product can be dismantled and the extent to which it can be recycled.

This saves resources and thus reduces the harm done to the environment.

Notes on packaging

Please do not throw away the packaging. We recommend that you keep the original packaging in case you need it later for transporting the device.

Notes on labeling plastic housing parts

Please avoid attaching your own labels to plastic housing parts wherever possible, since

this makes it difficult to recycle them.

Take-back, recycling and disposal

The device must not be disposed of with household waste. This appliance is labelled in accordance with European Directive 2002/96/EC concerning used electrical and electronic appliances (WEEE - waste electrical and electronic equipment).

The directive sets out the framework for the return and recycling of used appliances throughout the EU. To return your used device, please use the return and collection systems available to you. You will find further information on this at www.ts.fujitsu.com/recycling.

For details on returning and reuse of devices and consumables within Europe, refer to the

“Returning used devices” manual, or contact your Fujitsu branch office/subsidiary or our

recycling centre in Paderborn:

Fujitsu Technology Solutions GmbH

Recycling Center

D-33106 Paderborn

Tel. +49 5251 8180-10

Fax +49 5251 8180-15

2.8 Site Requirements

This section provides requirements and guidelines that you must address when preparing your site for the installation.

CAUTION

When selecting an installation site for the system, choose a location that avoids excessive heat, direct sunlight, dust, and chemical exposure. These conditions greatly reduce the system’s longevity and might void your warranty.

2.8.1 Physical Requirements

This section provides dimension and weight specifications, weight and placement guidelines, and ventilation requirements.

2.8.1.1 Dimension and Weight Specifications

The floor at the installation site must be strong enough to support the combined weight of the rack, controller enclosures, expansion enclosures, and any additional equipment. The site should also provide sufficient space for installing, operating, and servicing the enclosures, and sufficient ventilation to allow a free flow of air to all enclosures.

The following table lists enclosure dimensions and weight. Weights are based on an enclosure having 12 drive modules, 2 controller or expansion modules, and 2 power and cooling modules installed.

Specification                                     Rackmount
Height                                            2U (8.76 cm)
Width
  ● Chassis excluding mounting ears               44.6 cm
  ● Chassis including mounting ears               48.0 cm
Depth
  ● Chassis                                       55.37 cm
  ● To back of power and cooling module handle    57.12 cm
Weight, controller enclosure (12 drives)
  ● SAS drives                                    33.1 kg
  ● SATA drives                                   33.6 kg
Weight, expansion enclosure (12 drives)
  ● SAS drives                                    30.8 kg
  ● SATA drives                                   31.3 kg

Table 13: Dimension and Weight Specification Examples
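To estimate the combined load the floor must carry, you can add up the per-enclosure weights from Table 13. The following Python sketch is a planning aid only: it assumes fully populated enclosures as described for Table 13, and the rack and additional-equipment weights (`rack_kg`, `other_equipment_kg`) are hypothetical inputs that this manual does not specify.

```python
# Per-enclosure weights in kg from Table 13 (12 drives, 2 controller or
# expansion modules, 2 power and cooling modules installed).
ENCLOSURE_WEIGHT_KG = {
    ("controller", "SAS"): 33.1,
    ("controller", "SATA"): 33.6,
    ("expansion", "SAS"): 30.8,
    ("expansion", "SATA"): 31.3,
}


def total_rack_weight_kg(enclosures, rack_kg=0.0, other_equipment_kg=0.0):
    """Estimate the combined weight of a rack installation in kg.

    enclosures: iterable of (enclosure_type, drive_type) tuples,
        for example ("controller", "SAS").
    rack_kg, other_equipment_kg: hypothetical values supplied by the
        site planner; they are not given in this manual.
    """
    enclosure_total = sum(ENCLOSURE_WEIGHT_KG[e] for e in enclosures)
    # Round to avoid floating-point noise such as 94.70000000000002.
    return round(enclosure_total + rack_kg + other_equipment_kg, 2)


# One SAS controller enclosure plus two SAS expansion enclosures,
# excluding the rack itself.
config = [("controller", "SAS"), ("expansion", "SAS"), ("expansion", "SAS")]
print(total_rack_weight_kg(config))  # 94.7
```

Add the weight of the rack and any other equipment from their own data sheets before comparing the result with the floor's load rating.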

2.8.1.2 Weight and Placement Guidelines

As you prepare for installation, follow these guidelines:

● Ideally, use two people to lift an enclosure. However, one person can safely lift an enclosure if its weight is reduced by removing the power and cooling modules and the drive modules.

● Do not place enclosures in a vertical position. Always install and operate the enclosures in a horizontal orientation.

● When installing enclosures in a rack, make sure that any surfaces over which you might move the rack can support the weight. To prevent accidents when moving equipment, especially on sloped loading docks and up ramps to raised floors, ensure that you have a sufficient number of helpers. Remove obstacles such as cables and other objects from the floor.

● To prevent the rack from tipping and to minimize the risk of personal injury in the event of an earthquake, securely anchor the rack to a wall or other rigid structure that is attached to both the floor and the ceiling of the room.

2.8.1.3 Ventilation Requirements

As you prepare for installation, follow these requirements:

● Do not block or cover the ventilation openings at the front and rear of an enclosure. Never place an enclosure near a radiator or heating vent. Failure to follow these guidelines can cause overheating and affect the reliability and warranty of the product.

● Leave a minimum of 15 cm at the front and back of an enclosure to ensure adequate airflow for cooling. No cooling clearance is required at the sides, top, or bottom of the enclosures.

● Leave enough space in front of and behind an enclosure to allow access to enclosure components for servicing. Removing a component requires a clearance of at least 137 cm in front of and behind the enclosure.


2.8.2 Environmental Requirements

The following table lists the environmental conditions in which the rackmounted controller enclosure operates.

Specification        Range
Altitude             Up to 3 km; derate 2 °C for every 1 km up to 3 km
Relative humidity    10% to 90% RH, 27 °C max. wet bulb, non-condensing
Temperature          5 °C to 40 °C, non-condensing
Shock                3.0 g, 11 ms, half-sine
Vibration            0.15 g (vertical); 0.10 g (horizontal), 5 to 500 Hz, swept-sine

Table 14: Environmental Requirements
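Table 14 does not state exactly how the altitude derating is applied. The Python sketch below assumes that the 40 °C upper temperature limit is reduced linearly by 2 °C per km of altitude; check this interpretation with your service representative before relying on it.

```python
MAX_ALTITUDE_KM = 3.0
MIN_TEMP_C = 5.0
MAX_TEMP_C = 40.0       # upper limit at sea level, from Table 14
DERATE_C_PER_KM = 2.0   # derating factor, from Table 14


def max_ambient_temp_c(altitude_km):
    """Return the derated maximum operating temperature in °C.

    Assumption: the 2 °C/km derating applies linearly to the 40 °C limit.
    """
    if not 0.0 <= altitude_km <= MAX_ALTITUDE_KM:
        raise ValueError("operating altitude must be between 0 and 3 km")
    return MAX_TEMP_C - DERATE_C_PER_KM * altitude_km


def temperature_ok(ambient_c, altitude_km):
    """Return True if ambient_c lies within the derated operating range."""
    return MIN_TEMP_C <= ambient_c <= max_ambient_temp_c(altitude_km)


print(max_ambient_temp_c(1.5))    # 37.0
print(temperature_ok(38.0, 2.0))  # False: the limit at 2 km is 36 °C
```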

2.8.3 Electrical Requirements

This section provides electrical guidelines and site wiring, power, and cabling requirements.

2.8.3.1 Electrical Guidelines

Each enclosure is shipped with two AC power cords that are appropriate for use with a typical outlet in the destination country. Each power cord should connect one of the power and cooling modules to an independent, external power source. To ensure power redundancy, connect the two power cords to two separate circuits; for example, one to a commercial circuit and one to an uninterruptible power supply (UPS).

The safety status of the I/O connections complies with Separated Extra-Low Voltage (SELV) requirements.


As you prepare for installation, follow these guidelines:

● The enclosures work with single-phase power systems that have an earth ground connection. To reduce the risk of electric shock, do not plug an enclosure into any other type of power system. Contact your facilities manager or a qualified electrician if you are not sure what type of power is supplied to your building.

● Enclosures are shipped with a grounding-type (three-wire) power cord. To reduce the risk of electric shock, always plug the cord into a grounded power outlet.

● Do not use household extension cords with the enclosures. Not all power cords have the same current ratings. Household extension cords do not have overload protection and are not meant for use with computer systems.

2.8.3.2 Site Wiring and Power Requirements

Each enclosure has two power and cooling modules for redundancy. If full redundancy is required, use a separate power source for each module. The AC power supply unit in each power and cooling module is auto-ranging: it automatically adapts to any input voltage from 88 to 264 VAC at an input frequency of 47 to 63 Hz. The power and cooling modules meet standard voltage requirements for both U.S. and international operation. They use standard industrial wiring with line-to-neutral or line-to-line power connections.

As you prepare for installation, follow these requirements:

● All AC mains and supply conductors to power distribution boxes for the rack-mounted system must be enclosed in a metal conduit or raceway when specified by local, national, or other applicable government codes and regulations.

● Ensure that the voltage and frequency of your power source match the voltage and frequency inscribed on the equipment’s electrical rating label.

● To ensure redundancy, provide two separate power sources for the enclosures. These power sources must be independent of each other, and each must be controlled by a separate circuit breaker at the power distribution point.

● The system requires a stable supply voltage. The voltage supplied by the customer’s facilities must not fluctuate by more than ± 5 percent. The customer facilities must also provide suitable surge protection.

● Site wiring must include an earth ground connection to the AC power source. The supply conductors and power distribution boxes (or equivalent metal enclosure) must be grounded at both ends.

● Power circuits and associated circuit breakers must provide sufficient power and overload protection. To prevent possible damage to the AC power distribution boxes and other components in the rack, use an external, independent power source that is isolated from large switching loads (such as air conditioning motors, elevator motors, and factory loads).
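The numeric limits in this section (an 88–264 VAC input range, 47–63 Hz, and at most ± 5 percent voltage fluctuation) can be combined into a quick pre-installation check. The Python sketch below is illustrative: the nominal site voltage and frequency are hypothetical inputs, and the check only confirms that the nominal voltage plus or minus 5 percent stays inside the auto-ranging window. It does not replace the electrical rating label or an assessment by a qualified electrician.

```python
V_MIN, V_MAX = 88.0, 264.0   # VAC, auto-ranging input window
F_MIN, F_MAX = 47.0, 63.0    # Hz, input frequency range
MAX_FLUCTUATION = 0.05       # +/- 5 percent


def check_supply(nominal_v, nominal_hz):
    """Return a list of problems for a nominal site supply (empty list = OK)."""
    problems = []
    low = nominal_v * (1 - MAX_FLUCTUATION)
    high = nominal_v * (1 + MAX_FLUCTUATION)
    if low < V_MIN or high > V_MAX:
        problems.append(
            f"{nominal_v} V +/-5% spans {low:.1f}-{high:.1f} V, "
            f"outside {V_MIN:.0f}-{V_MAX:.0f} VAC"
        )
    if not F_MIN <= nominal_hz <= F_MAX:
        problems.append(f"{nominal_hz} Hz is outside {F_MIN:.0f}-{F_MAX:.0f} Hz")
    return problems


print(check_supply(230, 50))  # []
print(check_supply(120, 60))  # []
print(check_supply(260, 50))  # one problem: 273.0 V exceeds 264 VAC
```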

2.8.3.3 Cabling Requirements

As you prepare for installation, follow these requirements:

● Keep power and interface cables clear of foot traffic. Route cables in locations that protect the cables from damage.

● Route interface cables away from motors and other sources of magnetic or radio frequency interference.

● Stay within the cable length limitations.

2.8.4 Management Host Requirements

A local management host with at least one serial port is required for the initial installation and configuration of a controller enclosure. After you have configured one or both of the controller modules with an Internet Protocol (IP) address, you use a remote management host on an Ethernet network to configure, manage, and monitor the storage system.

Note

You must use Ethernet cable designated CAT-5 or higher to connect a controller enclosure to an Ethernet network.


3 Hardware, Operating, and Indicator Components

This chapter describes the main hardware components and the position and meaning of the operating and indicator elements on the FibreCAT SX storage system enclosures.

3.1 Controller Enclosure

The FibreCAT SX60 / SX80 / SX88 / SX100 controller enclosure can be connected to Fibre Channel host bus adapters or switches. The FibreCAT SX80 iSCSI controller enclosure can be connected to Ethernet host bus adapters, hubs, or switches.

3.1.1 Components

Enclosure component                       Quantity
FC or iSCSI RAID controller (I/O) module  1 or 2¹
SAS or SATA drive module                  2–12 per enclosure
AC power and cooling module               2 per enclosure
2- or 4-Gbps FC host port with SFP², or   2 per controller module (SX60 / SX80 / SX88 / SX80 iSCSI);
1-Gbps iSCSI host port                    4 per controller module (SX100)
3-Gbps, 4-lane SAS expansion port         1 per controller module (SX60 / SX80 / SX88 / SX80 iSCSI);
                                          2 per controller module (SX100)
Ethernet port (RJ-45)                     1 per controller module
CLI port (RS-232 micro-DB9)               1 per controller module

Table 15: Controller Enclosure Components

¹ Air management system drive blanks or I/O blanks must fill empty slots to maintain optimum airflow through the chassis.
² The SFPs are part of the controller modules and must not be removed.


3.1.2 Components and Indicators at the Front Side

Figure 1: Components and Indicators on the Front of a Controller Enclosure (callouts: Enclosure ID LED; drive modules with LEDs, numbered left to right by row: 0–3, 4–7, 8–11; status LEDs, top to bottom: Unit Locator, Fault/Service Required, FRU OK, Temperature Fault)

LED                                  Color   State  Description
Enclosure ID                         Green   On     Shows the enclosure ID, which enables you to correlate a physical enclosure with the logical views presented by the management software. The enclosure ID of a controller enclosure is zero (0); the enclosure ID of an attached expansion enclosure is non-zero.
OK to Remove (drive module)          Blue    Off    The drive module is not prepared for removal.
                                             On     The drive module has been removed from any active virtual disk, spun down, and prepared for removal.
Power/Activity/Fault (drive module)  Green   Off    The drive module is not powered on.
                                             On     The drive module is operating normally.
                                             Blink  The drive module is active and processing I/O or is performing a media scan.
                                     Yellow  Off    No fault.
                                             On     The drive module has experienced a fault, has failed, or is a member of a critical vdisk.
                                             Blink  Physically identifies the drive module.
Unit Locator                         White   Off    Not active.
                                             Blink  Physically identifies the enclosure.
Fault/Service Required               Yellow  Off    No fault.
                                             On     An enclosure-level fault has occurred and service action is required. The event has been acknowledged, but the problem still needs attention.
FRU OK                               Green   On     The enclosure is powered on with at least one power module operating normally.
                                             Off    Both power modules are off.
Temperature Fault                    Green   Off    The enclosure temperature is normal.
                                     Yellow  On     The enclosure temperature is above threshold.

Table 16: Controller Enclosure LEDs (Front)

3.1.3 Ports and Switches at the Back Side

The following figure shows the ports (location: controller module) and the power switch (location: power and cooling module) at the back of the FibreCAT SX60 / SX80 / SX88 controller enclosure equipped with two FC RAID controllers. The second (lower) controller is optional. You will use the ports and the switch during the installation procedure.

Figure 2: FibreCAT SX60 / SX80 / SX88 Controller Enclosure Ports (FC) and Power Switch (callouts: FC ports, CLI port, Ethernet management port, expansion port, power switch)

The following figure shows the ports (location: controller module) and the power switch (location: power and cooling module) at the back of the FibreCAT SX80 iSCSI controller enclosure equipped with two iSCSI controllers. The second (lower) controller is optional. You will use the ports and the switch during the installation procedure.


Figure 3: FibreCAT SX80 iSCSI Controller Enclosure Ports (iSCSI) and Power Switch (callouts: Ethernet ports, CLI port, Ethernet management port, expansion port, power switch)

The following figure shows the ports (location: controller module) and the power switch (location: power and cooling module) at the back of the FibreCAT SX100 controller enclosure equipped with two FC RAID controllers. The second (lower) controller is optional. You will use the ports and the switch during the installation procedure.

Figure 4: FibreCAT SX100 Controller Enclosure Ports (FC) and Power Switch (callouts: FC ports, CLI port, Ethernet management port, expansion ports, power switch)

Port/Switch                 Description
Power switch                Toggle, where “|” is On and “O” is Off.
FC/Ethernet ports           4-Gbps FC or 1-Gbps iSCSI ports used to connect to data hosts. Each FC port contains an SFP¹ transceiver. Host ports 0 and 1 connect to host channels 0 and 1, respectively. The FibreCAT SX100 model has four host ports.
CLI port                    Micro-DB9 port used to connect the controller module to a local management host using RS-232 communication for out-of-band configuration and management.
Ethernet management port    10/100BASE-T Ethernet port used for TCP/IP-based out-of-band management of the RAID controller. An internal Ethernet device provides standard 10 Mbit/s and 100 Mbit/s full-duplex connectivity.
Expansion port              3-Gbps, 4-lane (12 Gbps total) table-routed egress port used to connect SAS expansion enclosures.

Table 17: Controller Enclosure Ports and Switches (Back)

¹ The SFPs are part of the controller modules and must not be removed (SFP = Small Form-factor Pluggable).

3.1.4 Indicators at the Back Side

The following figure shows the LEDs at the back of the controller enclosure (using the FibreCAT SX60 / SX80 / SX88 as an example) equipped with two FC RAID controllers. The second (lower) controller is optional. You will use the LEDs during the installation procedure.

Figure 5: FibreCAT SX60 / SX80 / SX88 Controller Enclosure (FC) LEDs (callouts: AC Power Good, DC Voltage/Fan Fault/Service Required, Unit Locator, OK to Remove, FRU OK, Fault/Service Required, host link status, host link speed, host activity, cache status, expansion port status, Ethernet activity, Ethernet link status)

LED (location: power and cooling module)   Color   State  Description
AC Power Good                              Green   Off    AC power is off or input voltage is below the minimum threshold.
                                                   On     AC power is on and input voltage is normal.
DC Voltage/Fan Fault/Service Required      Yellow  Off    DC output voltage is normal.
                                                   On     DC output voltage is out of range or a fan is operating below the minimum required RPM.

Table 18: Controller Enclosure LEDs (Back, Power and Cooling Module)


LED (location: controller module)   Color   State  Description
Host link status (FC only)          Green   Off    The port is empty or the link is down.
                                            On     The port link is up and connected.
Host link speed (FC only)           Green   Off    The data transfer rate is 2 Gbps.
                                            On     The data transfer rate is 4 Gbps.
Unit Locator                        White   Off    Not active.
                                            Blink  Physically identifies the controller module.
OK to Remove                        Blue    Off    The controller module is not prepared for removal.
                                            On     The controller module can be removed.
Fault/Service Required              Yellow  On     A fault has been detected or a service action is required.
                                            Blink  Indicates a hardware-controlled power-up or a cache flush or restore error.
FRU OK                              Green   Off    The controller module is not OK.
                                            On     The controller module is operating normally.
                                            Blink  The system is booting.
Cache status                        Green   Off    The cache is clean (contains no unwritten data).
                                            On     The cache is dirty (contains unwritten data) and operation is normal.
                                            Blink  A Compact Flash flush or cache self-refresh is in progress.
Host activity                       Green   Off    The host ports have no I/O activity.
                                            Blink  At least one host port has I/O activity.
Ethernet link status                Green   Off    The Ethernet port is not connected or the link is down.
                                            On     The Ethernet link is up.
Ethernet activity                   Green   Off    The Ethernet link has no I/O activity.
                                            Blink  The Ethernet link has I/O activity.
Expansion port status               Green   Off    The port is empty or the link is down.
                                            On     The port link is up and connected.

Table 19: Controller Enclosure LEDs (Back, Controller Module)


3.2 Expansion Enclosure

The FibreCAT SX expansion enclosure can be connected to a FibreCAT SX controller enclosure to provide additional disk storage capacity.

3.2.1 Components

Description                   Quantity
SAS expansion module          1 or 2¹
SAS or SATA drive module      2–12 per enclosure
AC power and cooling module   2 per enclosure
3-Gbps, 4-lane SAS In port    1 per expansion module
3-Gbps, 4-lane SAS Out port   1 per expansion module

Table 20: Expansion Enclosure Components

¹ Air management system drive blanks or I/O blanks must fill empty slots to maintain optimum airflow through the chassis.

3.2.2 Components and Indicators at the Front Side

The components and indicators at the front of an expansion enclosure are the same as those of a controller enclosure (see page 28).

3.2.3 Ports and Switches at the Back Side

The following figure shows the ports (location: expansion module) and the power switch (location: power and cooling module) at the back of the expansion enclosure equipped with two SAS expansion modules. The second (lower) module is optional. You will use the ports and the switch during the installation procedure.


Figure 6: Expansion Enclosure Ports and Power Switch (callouts: SAS In port, SAS Out port, power switch)

Port/Switch      Description
Power switch     Toggle, where “|” is On and “O” is Off.
SAS In port      3-Gbps, 4-lane (12 Gbps total) subtractive ingress port used to connect to a controller enclosure.
SAS Out port     3-Gbps, 4-lane (12 Gbps total) table-routed egress port used to connect to another expansion enclosure.

Table 21: Expansion Enclosure Ports and Switches (Back)


3.2.4 Indicators at the Back Side

The following figure shows the LEDs at the back of the expansion enclosure equipped with two SAS expansion modules. The second (lower) module is optional. You will use the LEDs during the installation procedure.

Figure 7: Expansion Enclosure LEDs (callouts: AC Power Good, DC Voltage/Fan Fault/Service Required, Unit Locator, OK to Remove, FRU OK, Fault/Service Required, SAS In port status, SAS Out port status)

LED (location: power and cooling module)   Color   State  Description
AC Power Good                              Green   Off    AC power is off or input voltage is below the minimum threshold.
                                                   On     AC power is on and input voltage is normal.
DC Voltage/Fan Fault/Service Required      Yellow  Off    DC output voltage is normal.
                                                   On     DC output voltage is out of range or a fan is operating below the minimum required RPM.

Table 22: Expansion Enclosure LEDs (Back, Power and Cooling Module)

LED (location: expansion module)   Color   State  Description
SAS In port status                 Green   Off    The port is empty or the link is down.
                                           On     The port link is up and connected.
SAS Out port status                Green   Off    The port is empty or the link is down.
                                           On     The port link is up and connected.
Unit Locator                       White   Off    Not active.
                                           Blink  Physically identifies the expansion module.
OK to Remove                       Blue    Off    Not implemented.
Fault/Service Required             Yellow  On     A fault has been detected or a service action is required.
                                           Blink  Indicates a hardware-controlled power-up or a cache flush or restore error.
FRU OK                             Green   Off    The expansion module is not OK.
                                           On     The expansion module is operating normally.
                                           Blink  The system is booting.

Table 23: Expansion Enclosure LEDs (Back, Expansion Module)


4 Connecting Hosts / Configurations

This chapter describes the FibreCAT SX system cable connections for hosts and shows typical configurations.

4.1 Connecting Remote Management Hosts

The management host manages the storage system out-of-band over a network. This section describes how to connect the controller enclosure’s Ethernet management ports to that network.

1. Locate the Ethernet ports for controller A and controller B at the back of the controller enclosure.

2. Connect an Ethernet cable to each Ethernet port.

3. Connect the other end of each Ethernet cable to a network that your management host can access (preferably on the same subnet).
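To confirm that the controller Ethernet ports will be on the same subnet as the management host, as recommended in step 3, you can compare the planned addresses with Python’s standard `ipaddress` module. All addresses in this sketch are hypothetical; substitute the IP settings you intend to assign to your controllers.

```python
import ipaddress


def same_subnet(host_interface, *controller_ips):
    """Return True if every controller IP lies in the management host's network.

    host_interface: the host address with prefix length or netmask,
        for example "192.168.0.50/24" or "192.168.0.50/255.255.255.0".
    """
    network = ipaddress.ip_interface(host_interface).network
    return all(ipaddress.ip_address(ip) in network for ip in controller_ips)


# Hypothetical addresses for the controller A and controller B Ethernet ports.
print(same_subnet("192.168.0.50/24", "192.168.0.10", "192.168.0.11"))  # True
print(same_subnet("192.168.0.50/24", "192.168.0.10", "192.168.1.11"))  # False
```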

4.2 Installing the SCSI Enclosure Services Driver

NOTE

This section applies to Microsoft Windows hosts only.

Installing the SCSI Enclosure Services (SES) driver prevents Microsoft Windows hosts from displaying the Found New Hardware Wizard when the storage system is discovered.

1. In a web browser, go to http://support.ts.fujitsu.com/com/support/downloads.html and download the SCSI enclosure device driver for FibreCAT SX to a network location that the data host can access.

2. Extract the package contents to a temporary folder on the host.

3. In that folder, double-click Setup.exe to install the driver.

4. Click Finish.

The driver is installed.


4.3 Connecting Direct Attached iSCSI Configurations

4.3.1 Single Controller FibreCAT SX80 iSCSI With One Host

A single-controller configuration is supported but provides no redundancy in the event that the controller fails. Each data host has one iSCSI port connected to the controller module. If the controller fails, the host loses access to the volume LUNs.

Figure 8 shows the direct attach configuration for a single data host.

Figure 8: Single-Controller, Direct Attach Connection to a Single Data Host (iSCSI)

The FibreCAT SX80 iSCSI Storage System was designed as a fully redundant dual-controller storage array for iSCSI systems. The storage system alternately assigns virtual disks to the two controllers to support automatic load balancing. As an entry-level option, it is also available as a non-redundant single-controller storage system.

When the FibreCAT SX80 iSCSI Storage System has a single controller, every second virtual disk created is owned by the non-existent second controller, regardless of the ownership specified when the virtual disk is created. Any storage assigned to the missing controller is treated as "failed over" to the existing controller. This allows a transparent upgrade to a dual-controller system in the future. For the virtual disks assigned to the non-existent controller to be visible, the host needs to establish logins to both iSCSI targets. The IP addresses of the non-existent controller (target) therefore need to be configured in the storage system. The connected hosts then need to establish iSCSI sessions (logins) to one or both targets to access the volumes on those virtual disks.
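The alternating ownership rule can be illustrated with a short Python sketch. It assumes that virtual disks are numbered from 1 in creation order and that odd-numbered disks belong to controller A, which matches the six-disk example in this section; the actual assignment is made by the storage system itself, and the function name is hypothetical.

```python
def vdisk_owners(count, installed=("A",)):
    """Map each virtual disk number (1-based, creation order) to its owner.

    Ownership alternates A, B, A, B, ... whether or not both controllers
    are installed. Disks owned by a missing controller are reported as
    failed over to the first installed controller.
    """
    if not installed:
        raise ValueError("at least one controller must be installed")
    owners = {}
    for n in range(1, count + 1):
        owner = "A" if n % 2 == 1 else "B"
        if owner in installed:
            owners[n] = owner
        else:
            owners[n] = f"{owner} (failed over to {installed[0]})"
    return owners


# Single-controller system with six virtual disks.
for disk, owner in vdisk_owners(6).items():
    print(disk, owner)
```

With six virtual disks, disks 2, 4, and 6 are reported as owned by controller B and failed over to controller A, which is why their volumes are only visible once the hosts also log in to the addresses configured for controller B.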


For example, if you create six virtual disks, the second, fourth, and sixth virtual disks are owned by the non-existent second controller. To present volumes created on these virtual disks to a host, you must assign IP addresses to the ports on the non-existent controller that correspond to the ports used on the existing controller. The address assigned to each port of the non-existent controller must be in the same network as the corresponding port of the existing controller. For example, if controller A has the following configuration:

Controller A
Port/Channel:  0                1
IP:            192.168.144.1    10.0.0.1
Netmask:       255.255.255.0    255.0.0.0

Then set the IP addresses of the ports on the controller that is not installed to reserved addresses in the same subnets, so that the hosts can see the volumes through controller A, as shown below:

Controller B (currently not installed)
Port/Channel:  0                1
IP:            192.168.144.18   10.0.0.22
Netmask:       255.255.255.0    255.0.0.0

Finally, include these addresses in the iSCSI initiator configuration in the same way as the IP addresses of the existing controller.
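The addressing rule for the missing controller (each port on controller B gets its own address in the same network as the matching port on controller A) can be checked with Python’s standard `ipaddress` module. The sketch uses the example values from this section; the function name and the dictionary layout are hypothetical.

```python
import ipaddress

# Example configuration from this section: port/channel -> "address/netmask".
controller_a = {0: "192.168.144.1/255.255.255.0", 1: "10.0.0.1/255.0.0.0"}
controller_b = {0: "192.168.144.18/255.255.255.0", 1: "10.0.0.22/255.0.0.0"}


def check_placeholder_ports(existing, missing):
    """Return a list of problems with the missing controller's port addresses."""
    problems = []
    for port, a_cfg in existing.items():
        if port not in missing:
            problems.append(f"port {port}: no address configured on controller B")
            continue
        a = ipaddress.ip_interface(a_cfg)
        b = ipaddress.ip_interface(missing[port])
        if b.network != a.network:
            # Different subnet (or different netmask) from controller A's port.
            problems.append(f"port {port}: {b.ip} is not in {a.network}")
        elif b.ip == a.ip:
            problems.append(f"port {port}: {b.ip} duplicates controller A's address")
    return problems


print(check_placeholder_ports(controller_a, controller_b))  # []
```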

If you follow this process, all of the volumes created on the FibreCAT SX80 iSCSI Storage System are visible through the host ports of the existing controller. When you are ready to upgrade to a dual-controller system, install the second controller with the Ethernet cables already attached; the storage system then automatically moves those volumes to the new controller.

Configuration Rules

Fixed speed for all ports

4.4 Host Interface Speed for FibreCAT SX (FC)

NOTE

The following restriction applies to the FibreCAT SX60 / SX80 in direct attached configurations:

If your Host Interface Module (HIM) is Model 0 (or you have a mix of HIM Model 0 and Model 1 in a dual-controller FibreCAT controller enclosure), only 2-Gbit FC speed is supported for the FibreCAT SX60 / SX80 in direct connect mode.

If both HIMs in your controller enclosure are Model 1 (or you have a single-controller FibreCAT SX and its HIM is Model 1), up to 4-Gbit FC speed is supported for the FibreCAT SX60 / SX80 in direct host connect mode.


For the FibreCAT SX88 / SX100, up to 4-Gbit FC speed is always supported in direct host connect mode.

In switch attached mode, up to 4-Gbit FC speed is always supported for the FibreCAT SX60 / SX80 / SX88 / SX100 (no restriction for any HIM model).
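The speed rules above can be summarized as a small decision function. This Python sketch encodes only the rules stated in this section; it is not a feature of FibreCAT SX Manager, and the model names and HIM model numbers (0 or 1) are plain values you supply.

```python
FC_MODELS = {"SX60", "SX80", "SX88", "SX100"}


def max_fc_speed_gbit(model, attach_mode, him_models):
    """Return the maximum supported host FC speed in Gbit/s.

    model: "SX60", "SX80", "SX88" or "SX100".
    attach_mode: "direct" or "switch".
    him_models: HIM model numbers of the installed controllers,
        for example [1], [0, 1] or [1, 1].
    """
    if model not in FC_MODELS:
        raise ValueError(f"unknown FC model: {model}")
    if attach_mode not in ("direct", "switch"):
        raise ValueError("attach_mode must be 'direct' or 'switch'")
    # Switch attached mode, and direct mode on SX88 / SX100: always up to 4 Gbit.
    if attach_mode == "switch" or model in ("SX88", "SX100"):
        return 4
    if not him_models:
        raise ValueError("at least one HIM model is required")
    # SX60 / SX80 direct: any Model 0 HIM (alone or mixed) limits the speed to 2 Gbit.
    return 4 if all(m == 1 for m in him_models) else 2


print(max_fc_speed_gbit("SX80", "direct", [0, 1]))  # 2
print(max_fc_speed_gbit("SX60", "direct", [1, 1]))  # 4
```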

If you have a direct attached configuration with a FibreCAT SX60 / SX80, determine the HIM model (0 or 1) of your controller(s) using the FibreCAT SX Manager web-based interface (WBI):

1. Open the FibreCAT SX Manager web-based interface.

2. Log in as a monitor or manage user.

3. In the MONITOR STATUS menu, click the advanced settings link (see the screenshots below).

The page shows the HIM model of your controller module(s):

Figure 9: Detecting the HIM Model With FibreCAT SX Manager’s WBI (Example With Two HIM Models 0)


Figure 10: Detecting the HIM Model With FibreCAT SX Manager’s WBI (Example With Two HIM Models 1)

4.5 Connecting Direct Attached FC Configurations

This section explains how to connect data hosts directly to the controller enclosure. You need fiber optic cables of the proper length to connect your data hosts to the storage system.

The FibreCAT SX60 / SX80 / SX88 controller enclosure equipped with two controllers has four FC connections, two per controller. The FibreCAT SX100 controller enclosure equipped with two controllers has eight FC connections, four per controller. To maintain redundancy, connect each data host to both controller A and controller B.

1. Locate the host ports at the back of the controller enclosure.

2. Connect a fiber optic cable to each host port on controller A and controller B.

CAUTION!

Fiber optic cables are fragile. Do not bend, twist, fold, pinch, or step on the fiber optic cables. Doing so can degrade performance or cause data loss.

3. Connect the other end of each fiber optic cable to the HBAs as shown in the following figures.


4.5.1 FibreCAT SX60 / SX80 / SX88 With One Dual Port Host

The cabling example shows a high-availability controller and path failover configuration. This configuration requires the host port interconnect circuitry between the controller modules to be set to Interconnected, as described in “Configuring Controller Enclosure Host Ports (FC)” on page 78. For path failover, this configuration also requires host-based multipathing software. The controller enclosure is equipped with two FC RAID controllers, and the host has two FC HBAs.

Figure 11: Minimal Connection to a Single Data Host (callout: port interconnects set to Interconnected by FibreCAT SX Manager)

Configuration Rules
Restrictions:             not for VMware
FC-Topology:              Arbitrated Loop (FC-AL)
FC Speed:                 max. 4 Gbit/s (see “Host Interface Speed for FibreCAT SX (FC)” on page 39)
Host Port Interconnect:   Interconnected
Path-Failover Software:   FTS DDM V5; native MPIO (DSM); FTS Multipath V5; native DM-MP (RedHat, SuSE)


4.5.2 FibreCAT SX60 / SX80 / SX88 With Two Dual Port Hosts

The following figure shows the preferred high-availability controller and path redundant configuration. This configuration requires the host port interconnects to be set to Interconnected, as described in “Configuring Controller Enclosure Host Ports (FC)” on page 78. This configuration also requires host-based multipathing software. For failover behavior, see “Configurations” on page 79.

Figure 12: High-Availability, Dual-Controller, Direct Attached Connection to Dual Data Hosts for Windows and Linux (no VMware support) (callouts: A + B LUNs on each host connection)

Configuration Rules
Restrictions:             not for VMware
FC-Topology:              Arbitrated Loop (FC-AL)
FC Speed:                 max. 4 Gbit/s (see “Host Interface Speed for FibreCAT SX (FC)” on page 39)
Host Port Interconnect:   Interconnected
Path-Failover Software:   FTS DDM V5; native MPIO (DSM); FTS Multipath V5; native DM-MP (RedHat, SuSE)


4.5.2.1 Controller Failover Scenario

Figure 13: Direct Attached FibreCAT SX (Controller Failover Scenario) (callouts: A + B LUNs, A + B LUNs, FAILED)

In an active-active configuration, failover is the act of temporarily transferring ownership of controller resources from a failed controller to a surviving controller. The resources include virtual disks, cache data, host ID information, LUNs, and WWNs. In this scenario, host A and host B see all resources through controller A.
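Conceptually, controller failover re-assigns the failed controller’s resources to the surviving controller. The Python sketch below models only this ownership bookkeeping with hypothetical resource names; the actual transfer of cache data, host IDs, LUNs, and WWNs is performed internally by the controllers, and failback is not modeled.

```python
def fail_over(ownership, failed, survivor):
    """Return a new ownership map in which every resource owned by the
    failed controller is temporarily assigned to the surviving controller.

    ownership: dict mapping a resource name to its owning controller.
    The input dict is left unchanged.
    """
    return {
        resource: survivor if owner == failed else owner
        for resource, owner in ownership.items()
    }


# Hypothetical resources before controller B fails.
ownership = {"vdisk1": "A", "lun0": "A", "vdisk2": "B", "lun1": "B"}
after = fail_over(ownership, failed="B", survivor="A")
print(after)
# {'vdisk1': 'A', 'lun0': 'A', 'vdisk2': 'A', 'lun1': 'A'}
```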


4.5.2.2 Path Failover Scenario

Figure 14: Direct Attached FibreCAT SX (Path Failover Scenario) (callouts: A + B LUNs)

In the path failover scenario, host A sees its B LUNs through the host port interconnect. The filter driver (multipath software) routes the I/O for the B LUNs across the other HBA.
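The path choice made by a multipath filter driver can be pictured as selecting a working path to the LUN’s owning controller, preferring a direct path over one that runs through the host port interconnect. This Python sketch is a simplified model with hypothetical HBA names and data layout; it does not describe how FTS DDM, MPIO, or DM-MP are actually implemented.

```python
from dataclasses import dataclass


@dataclass
class Path:
    hba: str                # host bus adapter on the data host
    target: str             # controller whose LUNs are reached on this path
    via_interconnect: bool  # True if the path runs through the host port interconnect
    alive: bool = True


def select_path(paths, owner):
    """Return a working path to LUNs owned by controller `owner`.

    Direct paths are preferred; a path through the host port interconnect
    is used only if no direct path is working.
    """
    candidates = [p for p in paths if p.target == owner and p.alive]
    if not candidates:
        raise RuntimeError(f"no working path to controller {owner} LUNs")
    # False sorts before True, so min() picks a direct path when one exists.
    return min(candidates, key=lambda p: p.via_interconnect)


# Host A: HBA2 is cabled to controller B; HBA1 can reach controller B's
# LUNs only through the host port interconnect.
paths = [
    Path("HBA2", "B", via_interconnect=False),
    Path("HBA1", "B", via_interconnect=True),
]
print(select_path(paths, "B").hba)  # HBA2
paths[0].alive = False              # the cable or HBA2 fails
print(select_path(paths, "B").hba)  # HBA1
```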


4.5.3 FibreCAT SX60 / SX80 / SX88 With Two Dual Port Hosts for High Performance

The following figure shows a non-redundant path-failover configuration that can be used when high performance is more important than high availability. This configuration requires the host port interconnect circuitry to be set to Straight-through, which is the default setting.

Figure 15: High-Performance, Dual-Controller, Direct Attached Connection to Dual Data Hosts (no Solaris support). Host connections are labeled A LUNs and B LUNs.

Configuration Rules

Restrictions: not for Solaris and not for VMware

FC-Topology: Arbitrated Loop (FC-AL)

FC Speed: max. 4 Gbit/s (see “Host Interface Speed for FibreCAT SX (FC)” on page 39)

Host Port Interconnect: Straight-through

Path-Failover Software: FTS DDM V5; native MPIO (DSM); FTS Multipath V 5


4.5.4 FibreCAT SX100 With Four Dual-Port Hosts

Figure 16: Four Dual-Port Data Hosts with FibreCAT SX100


This figure shows the optimized connectivity for a high-availability, dual-controller, direct attached connection to four dual-port data hosts. To ensure failover fault tolerance, each dual-port host must be connected to both controllers. Connecting one host port to Port 0 or Port 1 on one controller and the other host port to Port 2 or Port 3 on the other controller provides the most efficient internal path balancing in each controller.
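The two cabling rules above (one connection to each controller, and one connection on Port 0/1 with the other on Port 2/3) can be expressed as a small Python check for a single host. This is a hypothetical planning aid, not a FibreCAT SX Manager function; the function name and the `(controller, port)` tuple format are assumptions made for illustration.

```python
def check_host_cabling(conn1: tuple[str, int], conn2: tuple[str, int]) -> list[str]:
    """Check one dual-port host's two (controller, host port) connections."""
    problems = []
    (ctrl1, port1), (ctrl2, port2) = conn1, conn2
    for port in (port1, port2):
        if port not in range(4):  # SX100 controllers are shown with ports 0-3
            problems.append(f"port {port} does not exist")
    if ctrl1 == ctrl2:
        problems.append("both host ports go to the same controller (no failover)")
    if (port1 in (0, 1)) == (port2 in (0, 1)):
        problems.append("use port 0 or 1 on one controller and port 2 or 3 on the other")
    return problems


print(check_host_cabling(("A", 0), ("B", 2)))  # []
print(check_host_cabling(("A", 0), ("A", 2)))  # ['both host ports go to the same controller (no failover)']
```

An empty list means the host's cabling follows both rules; running the check once per host covers all four hosts in the figure.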

Configuration Rules

Usage: Clustered servers, direct connect to storage, classroom applications, classroom file server

Advantage: Fault tolerance of the dual controllers and of clustered servers

Example business: Educational facility; small tech business

Topology: Loop mode

HBA settings: fixed speed 2/4 Gbit/s; link timeout 60 s; node timeout 60 s; queue depth (see release notes)

Software: MPIO software

Storage settings: host port interconnect: Interconnected

In this configuration, all hosts have redundant connections to volumes that are associated with each of the controllers. If a controller fails, the hosts maintain access to all of the volumes through the host ports on the surviving controller.


4.5.4.1 Controller Failover Scenario

Figure 17: Four Dual-Port Data Hosts with FibreCAT SX100 and Failed Controller


4.6 Connecting Switch Attached iSCSI Configurations

4.6.1 FibreCAT SX80 iSCSI With Two Dual Port Hosts and Two Switches

The high-availability configuration requires two Gigabit Ethernet (GbE) switches, as shown in the following figure. During active-active operation, both controllers' mapped volumes are visible to both data hosts.

Figure 18: iSCSI Storage Presentation During Normal, Active-Active Operation. Both hosts see A & B volumes; controller A's ports present A volumes on the A0 and A1 IPs, and controller B's ports present B volumes on the B0 and B1 IPs.


A dual-controller FibreCAT SX80 iSCSI storage system uses port 0 of each controller as one failover pair and port 1 of each controller as a second failover pair. If one controller fails, all mapped volumes remain visible to all hosts. Dual IP-address technology is used in the failed-over state and is largely transparent to the host system. However, host-based path failover software is recommended for complete fault tolerance.
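The failover pairs described above can be modeled in Python as a mapping from host ports to the IP addresses they answer on. This sketch assumes, based on the figures, that each IP of the failed controller moves to the same-numbered port of the surviving controller; the port and IP labels and the `fail_controller` function are hypothetical and do not reflect how the firmware is implemented.

```python
def fail_controller(ports: dict[str, set[str]], failed: str) -> dict[str, set[str]]:
    """Return the IP assignment after `failed` ("A" or "B") goes down.

    Port 0 of each controller forms one failover pair and port 1 the other,
    so each IP of the failed controller moves to the same-numbered port of
    the surviving controller.
    """
    survivor = "B" if failed == "A" else "A"
    result = {name: set(ips) for name, ips in ports.items()}  # copy, keep input intact
    for port_no in ("0", "1"):
        result[survivor + port_no] |= result.pop(failed + port_no)
    return result


# Normal operation: each host port carries its own IP (hypothetical labels).
normal = {"A0": {"A0 IP"}, "A1": {"A1 IP"}, "B0": {"B0 IP"}, "B1": {"B1 IP"}}
print(sorted(fail_controller(normal, "B")["A0"]))  # ['A0 IP', 'B0 IP']
```

Because the host keeps addressing the same IPs, the change is largely transparent to it, which matches the dual IP-address behavior described above.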

Configuration Rules

Fixed speed for all ports

4.6.1.1 Controller Failover Scenario

The following figure shows an example of storage presentation during failover of controller B.

Figure 19: iSCSI Storage Presentation During Failover. Both hosts see A & B volumes; the surviving controller's port 0 carries the A0 & B0 IPs and its port 1 carries the A1 & B1 IPs.


Single-controller mode is supported in both direct attached and switch attached configurations. In single-controller mode, the system is set to a failed-over state so that it can later be upgraded to a dual-controller system. When configuring a single-controller system, you need to assign a ghost host port IP configuration to the system. This ensures that no additional configuration is required when a second controller is inserted.

NOTE

When configuring a single-controller system, make sure you assign host port IP addresses to both the active controller and the absent controller B.

4.7 Connecting Switch Attached FC Configurations

This section explains how to connect data hosts to the controller enclosure through one or more external FC switches.

You will need fiber optic cables of the proper length to connect your data host to the switch, and the switch to the storage system.

4.7.1 FibreCAT SX60 / SX80 / SX88 With One Switch and One Dual Port Host

1. Locate the host ports at the back of the controller enclosure.

2. Connect a fiber optic cable to each host port on controller A and controller B.

CAUTION!

Fiber optic cables are fragile. Do not bend, twist, fold, pinch, or step on the fiber optic cables. Doing so can degrade performance or cause data loss.

3. Connect the other end of each cable to a switch port. Refer to Figure 20 for configuration options.

4. Using fiber optic cables, connect the switch to two FC HBA ports as shown in the following figure.


The following figure shows a redundant connection through a single switch to a single data host with two HBA ports. This configuration requires the host port interconnects to be set to Straight-through. It also requires host-based multipathing software.

Figure 20: Redundant Connection Through a Single Switch to a Single Data Host. Each HBA port is labeled A + B LUNs.

NOTE

The FC switch must be configured according to port zoning rules (only one initiator and one target per zone) and with fixed speed and topology settings.
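The zoning rule in the note above (exactly one initiator and one target per zone) is easy to check mechanically before the zones are entered on the switch. The following Python sketch is a hypothetical planning aid, not a switch vendor's API; the `ZoneMember` class, the `check_zone` function, and the switch port numbers are assumptions for illustration. Speed and topology settings are not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ZoneMember:
    switch_port: int  # port zoning: a member is a switch port, not a WWN
    role: str         # "initiator" (host HBA) or "target" (FibreCAT SX host port)


def check_zone(name: str, members: list[ZoneMember]) -> list[str]:
    """Check one zone against the 'one initiator, one target' rule."""
    initiators = sum(m.role == "initiator" for m in members)
    targets = sum(m.role == "target" for m in members)
    problems = []
    if initiators != 1:
        problems.append(f"{name}: expected 1 initiator, found {initiators}")
    if targets != 1:
        problems.append(f"{name}: expected 1 target, found {targets}")
    if initiators + targets != len(members):
        problems.append(f"{name}: member with unknown role")
    return problems


# Hypothetical switch port numbers.
zone = [ZoneMember(1, "initiator"), ZoneMember(5, "target")]
print(check_zone("hba0_ctrlA", zone))  # []
```

With one HBA port and one storage host port per zone, the number of zones equals the number of HBA-to-controller-port paths you want the host to use.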


4.7.2 FibreCAT SX60 / SX80 / SX88 With Two Switches and One Dual Port Host

1. Locate the host ports at the back of the controller enclosure.

2. For switch A: Connect fiber optic cables to host port 0 on controller A and host port 1 on controller B. Then connect the other ends of the cables to the switch.

3. For switch B: Connect fiber optic cables to host port 1 on controller A and host port 0 on controller B. Then connect the other ends of the cables to the switch.

CAUTION!

Fiber optic cables are fragile. Do not bend, twist, fold, pinch, or step on the fiber optic cables. Doing so can degrade performance or cause data loss.

4. Using fiber optic cables, connect each of the two switches to the FC HBAs on the host as shown in the following figure.

This configuration requires the host port interconnect circuitry between the controller modules to be set to Straight-through. The cabling examples show a configuration with controller and path high availability. For path failover, this configuration requires host-based multipathing software. The controller enclosure is equipped with two FC RAID controllers, and the host has two FC HBAs.
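The cross-cabling from steps 2 and 3 can be written down as data and checked in Python. The sketch assumes the intent is that each switch reaches both controllers and that no controller host port is cabled twice, so losing one switch still leaves the host a path to each controller; the `CABLING` table layout and the `check_two_switch_cabling` function are hypothetical.

```python
# Cabling from steps 2 and 3: switch -> (controller, host port) connections.
CABLING = {
    "switch A": [("A", 0), ("B", 1)],
    "switch B": [("A", 1), ("B", 0)],
}


def check_two_switch_cabling(cabling: dict[str, list[tuple[str, int]]]) -> list[str]:
    """Flag reused controller ports and switches that miss a controller."""
    problems = []
    used = [conn for conns in cabling.values() for conn in conns]
    if len(used) != len(set(used)):
        problems.append("a controller host port is cabled more than once")
    for switch, conns in cabling.items():
        if {ctrl for ctrl, _port in conns} != {"A", "B"}:
            problems.append(f"{switch} does not reach both controllers")
    return problems


print(check_two_switch_cabling(CABLING))  # []
```

Cabling both ports of controller A to switch A and both ports of controller B to switch B would fail the check, because a switch failure would then cut the host off from one controller's LUNs under Straight-through settings.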

Figure 21: Redundant, High-Availability Connection Through Switches to Dual Data Hosts. Labels in the figure: switches A and B; multimode fiber (MMF) links of up to 300 m; A + B LUNs on each path.


NOTE

The FC switch must be configured according to port zoning rules (only one initiator and one target per zone) and with fixed speed and topology settings.

Configuration Rules

Restrictions: not for FibreCAT SX60 with Solaris

FC-Topology: Arbitrated Loop (FC-AL)

FC Speed: 2 / 4 Gbit/s

Host Port Interconnect: Straight-through

Max. Members per Switch Zone: 1 initiator and 1 target

Path-Failover Software Linux: native DM-MP (RedHat, SuSE)

Path-Failover Software Windows: FTS DDM V5, native MPIO (DSM), FTS Multipath V 5

Path-Failover Software VMware: native MP

4.7.2.1 Configuration Rules for VMware ESX Server 3.0.0 and 3.0.1

Figure 20 above shows the configuration.

● Only switch attached configurations are supported.

● Use port zoning and not WWN zoning on the switches.

● Adjust the port topology on the switch: F-Port for server, L-Port for storage.

● Adjust the link speed on the switch ports.

FibreCAT SX Configuration:

● Host Port Configuration Link Speed: 4 Gbit/s or 2 Gbit/s (depending on HBA)

● Internal Host Port Interconnect: Straight-through
