2019-10

Goodness-of-fit of Randomistic Models

Hugo Hernandez

ForsChem Research, 050030 Medellin, Colombia

hugo.hernandez@forschem.org

doi: 10.13140/RG.2.2.35386.34248

Abstract

Traditionally, the performance of mathematical models describing a certain system or process

is measured based on the quality of the deterministic predictions of the model, neglecting the

random behavior of the residuals. Least-squares regression methods assume a zero-mean

normal random behavior of the residuals, but the performance of those models does not take

into account how well the residuals are fitted by a normal random model. The purpose of this

paper is to introduce the concept of goodness-of-fit for randomistic models, considering both

deterministic and random model components. An equivalent determination coefficient is

defined for evaluating the goodness-of-fit of randomistic models, which is a function of the

individual goodness-of-fit of the deterministic and random models. On the other hand, the

performance of a randomistic model will not only depend on its goodness-of-fit but also on its

capability for minimizing the standard deviation of the residuals (reducing uncertainty). For

that reason, a performance function is defined as the ratio between the goodness-of-fit of the

randomistic model and the variance of its residuals. Such performance function can be used as

an objective function to be maximized in an optimization problem during parameter

identification and/or model selection. Different examples are included for illustrating the

concepts introduced in this paper. Algorithms for calculating the goodness-of-fit of randomistic

models, implemented in R and MATLAB, are presented in the Appendix.

Keywords

Deterministic, Goodness-of-fit, Identification, Modeling, Normal Distribution, Optimization,

Performance, Random, Randomistic, Standard Deviation.

30/08/2019 ForsChem Research Reports 2019-10 (1 / 27)

www.forschem.org


1. Introduction

Mathematical models are able to describe, explain and even predict the behavior of any

particular system. However, no mathematical model is perfect because it cannot consider all

possible factors that may have an influence on the system, and also because there is always

uncertainty in the information on the factors and their effects considered in the model. The

difference between the true behavior of the system and the model prediction (or estimation)

of the behavior of the system under any particular set of conditions is known as the residual

error of the model. Precisely because the residual error incorporates all unknown effects and

all uncertainties, it is clearly a random variable. A mathematical model that neglects these

residuals is basically a pure deterministic model. A mathematical model that takes into account

the residual error in its structure is usually denoted as a statistical model. However, since such a

model contains both a deterministic and a random component in its structure, it will be

denoted here as a randomistic model instead.[1]

Typically, the fitness of a mathematical model is determined by numerical criteria such as the coefficient of determination ($R^2$),[2] the likelihood ratio,[3] and the Akaike information criterion (AIC).[4] The coefficient of determination is the complement of the relative error observed with the model. The likelihood ratio can be used to test if the model estimations are significantly different from the error noise, since $R^2$ by itself does not provide an indication of the significance of the model. On the other hand, the AIC also evaluates the simplicity of the model, trying to overcome overfitting, which might occur by just maximizing $R^2$.

Although there might be different implicit assumptions during the development of the model

(e.g. normality, independence and homoscedasticity of residuals), none of the previous

performance criteria penalizes the model when those implicit assumptions are violated. Thus,

the deterministic component of the model might seem to perform very well but its random

counterpart might not be satisfactory.

Thus, it would be desirable to assess the performance or goodness-of-fit of the model by

considering the performance of both deterministic and random components. In Section 2, a

method for the assessment of random models is presented, analogous to the determination

coefficient of deterministic models. In Section 3, the performance evaluation of randomistic

models considering both their deterministic and random components is proposed. Section 4

includes a brief discussion on the procedure required for identifying optimal randomistic

models. Finally, in Section 5 some representative examples are shown illustrating the concepts

and ideas presented in this work. Algorithms implemented in R and MATLAB for calculating the

goodness-of-fit of randomistic models are included in the Appendix.


2. Goodness-of-fit of Random Models

Random models (not to be confused with random effect models or random coefficients

models) are basically mathematical models representing the distribution of probabilities or

cumulative probabilities of a variable. They are also known as stochastic models, probability

models or probability distributions. Particularly, we are interested in “pure” random variables

where the mean or average value is exactly zero. Any randomistic variable can be transformed

into a pure random variable just by subtracting the mean value (a linear transformation). In

that case, the mean value can be interpreted as the deterministic component of the

randomistic variable.

The evaluation of the goodness-of-fit of a random model must be done comparing the model

with experimental results. When comparing probability density functions (PDF), it is found that

experimental PDFs are usually highly noisy and sample-size dependent,[5] and thus, the quality

of the performance evaluation might be compromised. It is therefore recommended to

evaluate the performance of the model by comparing the cumulative probability model with

the experimental cumulative probability observed in the data. Three different performance

metrics have been previously proposed:[6]

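Maximum difference in cumulative probability:

$$\Delta F_{\max} = \max_i \left( \left| \Delta F_i \right| \right) \tag{2.1}$$

Average difference in cumulative probability:

$$\langle \left| \Delta F \right| \rangle = \frac{1}{n} \sum_{i=1}^{n} \left| \Delta F_i \right| \tag{2.2}$$

Sum of squared differences in cumulative probability:

$$SS_{\Delta F} = \sum_{i=1}^{n} \Delta F_i^2 \tag{2.3}$$

where $\Delta F_i$ is the difference between the model and the experimental cumulative probability at the $i$-th observation, defined piecewise in terms of $F_X(x_i)$, $r_i$ and $n$:

$$\Delta F_i = \begin{cases} \ldots \\ \ldots \end{cases} \tag{2.4}$$

$F_X$ represents the cumulative probability function of the random variable $X$, $x_i$ are the experimental values of the variable, $r_i$ is the ascending rank of the experimental value $x_i$, and $n$ is the total number of experimental data.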
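As a rough illustration of the three metrics above, the following Python sketch compares a zero-mean normal cumulative probability model with the experimental cumulative probability of a small sample. The function names, the sample values and, in particular, the plotting position $(r_i - 0.5)/n$ used for the experimental cumulative probability are assumptions made for this sketch; the piecewise definition of Eq. (2.4) may differ.

```python
import math

def normal_cdf(x, sigma):
    """Cumulative probability of a zero-mean normal model with std. dev. sigma."""
    return 0.5 * (1.0 + math.erf(x / (sigma * math.sqrt(2.0))))

def cumulative_differences(sample, model_cdf):
    """Differences between model and experimental cumulative probabilities.

    Assumption: the experimental cumulative probability of the value with
    ascending rank r is taken as (r - 0.5) / n; ties get no special treatment.
    """
    n = len(sample)
    ordered = sorted(sample)
    return [model_cdf(x) - (r - 0.5) / n for r, x in enumerate(ordered, start=1)]

def fit_metrics(sample, model_cdf):
    """Return (maximum, average, sum of squares) of the differences."""
    diffs = cumulative_differences(sample, model_cdf)
    max_diff = max(abs(d) for d in diffs)
    avg_diff = sum(abs(d) for d in diffs) / len(diffs)
    sum_sq = sum(d * d for d in diffs)
    return max_diff, avg_diff, sum_sq

# Hypothetical zero-mean sample, used only to exercise the functions.
sample = [-1.2, -0.7, -0.3, -0.1, 0.0, 0.2, 0.4, 0.6, 1.1]
sigma = math.sqrt(sum(v * v for v in sample) / (len(sample) - 1))
max_diff, avg_diff, sum_sq = fit_metrics(sample, lambda x: normal_cdf(x, sigma))
print(max_diff, avg_diff, sum_sq)
```

Working on the cumulative probability avoids binning the data, which is the practical reason given above for preferring it over a comparison of PDFs.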

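Please notice that the third metric (Eq. 2.3) involves a sum of squares and therefore it is possible to define an analogous determination coefficient for the random model as:

$$R_R^2 = 1 - \frac{SS_{\Delta F}}{SS_T} \tag{2.5}$$

where $SS_{\Delta F}$ is the sum of squared differences of Eq. (2.3) and $SS_T$ is the corresponding total sum of squares:

$$SS_T = \sum_{i=1}^{n} \left( \ldots \right)^2 \tag{2.6}$$

thus, the coefficient of determination of the random model becomes:

$$R_R^2 = 1 - \left( \ldots \right) \sum_{i=1}^{n} \Delta F_i^2 \tag{2.7}$$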

3. Goodness-of-fit of Randomistic Models

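Let us now consider a general randomistic model describing the response variable $y$ in terms of a set of predictor variables $x$:

$$y = f(x) + \varepsilon \tag{3.1}$$

where $f$ represents any arbitrary deterministic model, and $\varepsilon$ represents a pure (zero-mean) random model, described by the following PDF:

$$p_\varepsilon(\varepsilon) = \varphi(\varepsilon) \tag{3.2}$$

where $\varphi$ is any arbitrary positive function of the realizations of the random model $\varepsilon$, such that:

$$\int_{-\infty}^{\infty} \varphi(\varepsilon)\, d\varepsilon = 1 \tag{3.3}$$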


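$$E(\varepsilon) = \int_{-\infty}^{\infty} \varepsilon\, \varphi(\varepsilon)\, d\varepsilon = 0 \tag{3.4}$$

$$\operatorname{Var}(\varepsilon) = \int_{-\infty}^{\infty} \varepsilon^2\, \varphi(\varepsilon)\, d\varepsilon = \sigma_\varepsilon^2 \tag{3.5}$$

$E$ represents the expected value (mean value) operator, $\operatorname{Var}$ represents the variance operator, and $\sigma_\varepsilon$ is the standard deviation of the residual error of the deterministic model.§

The determination coefficient of the deterministic model ($R_D^2$) is calculated from a set of $n$ individual observations of the response variable ($y_i$) at different conditions ($x_i$) as follows:

$$R_D^2 = 1 - \frac{\sum_{i=1}^{n} \varepsilon_i^2}{\sum_{i=1}^{n} \left( y_i - \langle y \rangle \right)^2} \tag{3.6}$$

where

$$\varepsilon_i = y_i - f(x_i) \tag{3.7}$$

$$\langle y \rangle = \frac{1}{n} \sum_{i=1}^{n} y_i \tag{3.8}$$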

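On the other hand, the determination coefficient of the random model ($R_R^2$) is (from Eq. 2.7):

$$R_R^2 = 1 - \left( \ldots \right) \sum_{i=1}^{n} \Delta F_i^2 \tag{3.9}$$

where $\Delta F_i$ is now evaluated on the residuals $\varepsilon_i$:

$$\Delta F_i = \begin{cases} \ldots \\ \ldots \end{cases} \tag{3.10}$$

§ Please notice that only when $E(\varepsilon) = 0$, $\operatorname{Var}(\varepsilon) = E(\varepsilon^2)$.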


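$$F_\varepsilon(\varepsilon_i) = \int_{-\infty}^{\varepsilon_i} \varphi(\varepsilon)\, d\varepsilon \tag{3.11}$$

Particularly, if a normal random model is assumed for the distribution of the residuals, then:

$$F_\varepsilon(\varepsilon_i) = \frac{1}{2} \left[ 1 + \operatorname{erf}\left( \frac{\varepsilon_i}{\sqrt{2}\, \sigma_\varepsilon} \right) \right] \tag{3.12}$$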

Since both models (deterministic and random models) are important components of the

randomistic model, the goodness-of-fit (determination coefficient) of the randomistic model

can be expressed as follows (as proposed in [7]):

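$$R^2 = R_D^2 + R_R^2 \left( 1 - R_D^2 \right) = 1 - \left( 1 - R_D^2 \right) \left( 1 - R_R^2 \right) \tag{3.13}$$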

Eq. (3.13) indicates that the randomistic model has a good fit as long as at least one of the

models (deterministic or random) is good. On the other hand, if both models are poor, then the

fitness of the randomistic model will also be poor.
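The combination rule can be checked numerically with a short Python sketch. It assumes that Eq. (3.13) has the form $R^2 = R_D^2 + (1 - R_D^2) R_R^2$, a form that reproduces the percentages listed in Table 3; the function name is illustrative only.

```python
def randomistic_r2(r2_deterministic, r2_random):
    """Combine both determination coefficients (assumed form of Eq. 3.13)."""
    return r2_deterministic + (1.0 - r2_deterministic) * r2_random

# Values reported in Table 3 for the linear model (62.8% and 97.6%).
r2_linear = randomistic_r2(0.628, 0.976)
# A constant deterministic model contributes nothing (Table 3, first row).
r2_constant = randomistic_r2(0.0, 0.951)
# Two poor components give a poor randomistic model.
r2_poor = randomistic_r2(0.10, 0.20)
print(round(r2_linear, 3), round(r2_constant, 3), round(r2_poor, 3))
```

Because the unexplained fractions multiply, a single good component is enough to bring the combined coefficient close to one, which is the behavior described in the paragraph above.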

4. Identification of Randomistic Models

Goodness-of-fit metrics are useful for selecting and identifying the best model for describing a

particular system. According to Eq. (3.13), it is equally good to describe the system either with a

good deterministic model, or with a good random model. However, for practical purposes, it is

desirable to reduce the uncertainty of the estimations of a good model. That is, minimizing $\sigma_\varepsilon$ while maximizing $R^2$. This, however, might lead to overfitting of the model.

Overfitting will occur when the standard deviation of the estimation error is smaller than the natural variation of the system ($\sigma_\varepsilon < \sigma_n$). Such natural variation is caused by experimental measurement uncertainties as well as additional variations due to uncontrolled, unmeasured factors influencing the response variable. If such natural variation is known, then the following constraint should be present in the optimization problem:

$$\sigma_\varepsilon \ge \sigma_n \tag{4.1}$$

On the other hand, since the proposed optimization problem is multiobjective (minimizing $\sigma_\varepsilon$ while maximizing $R^2$), a suitable multiobjective optimization method should be used for


solving the problem. One of those methods consists of transforming the problem into a single-objective optimization. One possible single-objective constrained optimization problem is the following:

$$\max \left( \Phi = \frac{R^2}{\sigma_\varepsilon^2} \right) \quad \text{subject to} \quad \sigma_\varepsilon \ge \sigma_n \tag{4.2}$$

If only measurement uncertainties in the response variable are considered for the natural variation $\sigma_n$, then assuming a uniform distribution of the uncertain measured value:

$$\sigma_n = \frac{\delta_y}{\sqrt{12}} \tag{4.3}$$

where $\delta_y$ is the resolution of the system used for measuring the response variable $y$.

The effect of measurement uncertainties in the predictor variables on the natural variation will depend on the specific deterministic model considered.

Particularly, if a dimensionless objective function is required for comparison purposes, then the optimization problem can be expressed as:

$$\max \left( \Phi^{*} = \frac{\left( \max(y) - \min(y) \right)^2 R^2}{\sigma_\varepsilon^2} \right) \tag{4.4}$$

where $\max(y)$ and $\min(y)$ represent the maximum and minimum observations found in the measured response variable.
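A minimal Python sketch of these quantities is given below. It assumes that the performance function is the ratio between the randomistic goodness-of-fit and the variance of the residuals (as stated in the Abstract), that the dimensionless version scales this ratio by the squared range of the response, and that a uniformly distributed reading error of resolution $\delta$ has standard deviation $\delta/\sqrt{12}$. All function names are illustrative.

```python
import math

def measurement_std(resolution):
    """Std. dev. of a uniformly distributed reading error of given resolution."""
    return resolution / math.sqrt(12.0)

def performance(r2, sigma_res):
    """Goodness-of-fit divided by the variance of the residuals."""
    return r2 / sigma_res ** 2

def dimensionless_performance(r2, sigma_res, y_values):
    """Same ratio, scaled by the squared range of the observed response."""
    y_range = max(y_values) - min(y_values)
    return r2 * y_range ** 2 / sigma_res ** 2

def satisfies_constraint(sigma_res, sigma_natural):
    """Overfitting guard: residual std. dev. must not fall below sigma_natural."""
    return sigma_res >= sigma_natural

phi = performance(0.9889, 1.6687)   # values of Table 2, linear regression model
sigma_n = measurement_std(0.1)      # ages in Table 1 are reported to 0.1 yr
print(round(phi, 4), round(sigma_n, 4), satisfies_constraint(1.6687, sigma_n))
```

In a parameter identification routine, `performance` would be the quantity to maximize and `satisfies_constraint` the feasibility check applied to each candidate model.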

5. Examples

5.1. Simple Linear Regression: Age Estimation in Adult Lions

Whitman et al. [8] reported a correlation between the coloration of the nose of adult lions and

their age. Table 1 shows reported data of the proportion of black coloration of the nose of 32

adult male lions (from Serengeti and Ngorongoro, Tanzania) and their corresponding age.


Table 1. Nose coloration and age of adult male lions in Tanzania. Data from [9]

| Nose coloration (% black) | Age (yr) | Nose coloration (% black) | Age (yr) | Nose coloration (% black) | Age (yr) | Nose coloration (% black) | Age (yr) |
|---|---|---|---|---|---|---|---|
| 21 | 1.1 | 23 | 2.4 | 30 | 4.3 | 48 | 7.3 |
| 14 | 1.5 | 22 | 2.1 | 42 | 3.8 | 44 | 7.3 |
| 11 | 1.9 | 20 | 1.9 | 43 | 4.2 | 34 | 7.8 |
| 13 | 2.2 | 17 | 1.9 | 59 | 5.4 | 37 | 7.1 |
| 12 | 2.6 | 15 | 1.9 | 60 | 5.8 | 34 | 7.1 |
| 13 | 3.2 | 27 | 1.9 | 72 | 6 | 74 | 13.1 |
| 12 | 3.2 | 26 | 2.8 | 29 | 3.4 | 79 | 8.8 |
| 18 | 2.9 | 21 | 3.6 | 10 | 4 | 51 | 5.4 |

A least-squares linear regression yields the following randomistic estimation model:

$$\text{Age (yr)} = 0.879 + 0.1065 \cdot \text{Nose coloration (\% black)} + 1.6687\, \varepsilon_N \tag{5.1}$$

where $\varepsilon_N$ represents a Type-I standard normal** random variable.[10] Figure 1 presents the data points from Table 1, and the estimations of the randomistic model (5.1).

The deterministic component of model (5.1) presents a determination coefficient (from Eq. 3.6) $R_D^2 \approx 62.4\%$. On the other hand, the random component of model (5.1) presents a determination coefficient (from Eq. 3.9) $R_R^2 \approx 97\%$. Thus, the overall determination coefficient of the full randomistic model (5.1) is (from Eq. 3.13): $R^2 = 98.89\%$.

At first it would seem improbable that a deterministic model with a goodness-of-fit of about 62%

will result in a randomistic model with a goodness-of-fit of nearly 99%. However, Figure 1 shows

that the full randomistic model successfully describes the experimental data. This by no means

indicates that the model is perfect. In fact, it can be observed that the model predicts negative

ages at low nose colorations, which is unrealistic.
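The deterministic part of this example can be reproduced from Table 1 with a few lines of Python. The sketch below is only an illustration: the variable names are arbitrary, the data are transcribed from Table 1, and the random-model coefficient is not computed because it depends on the definition of Eq. (3.10).

```python
import math

# Table 1: nose coloration (% black) and age (yr) of 32 adult male lions.
black = [21, 14, 11, 13, 12, 13, 12, 18, 23, 22, 20, 17, 15, 27, 26, 21,
         30, 42, 43, 59, 60, 72, 29, 10, 48, 44, 34, 37, 34, 74, 79, 51]
age = [1.1, 1.5, 1.9, 2.2, 2.6, 3.2, 3.2, 2.9, 2.4, 2.1, 1.9, 1.9, 1.9, 1.9,
       2.8, 3.6, 4.3, 3.8, 4.2, 5.4, 5.8, 6.0, 3.4, 4.0, 7.3, 7.3, 7.8, 7.1,
       7.1, 13.1, 8.8, 5.4]

n = len(age)
mean_x = sum(black) / n
mean_y = sum(age) / n

# Ordinary least-squares slope and intercept.
sxx = sum((x - mean_x) ** 2 for x in black)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(black, age))
slope = sxy / sxx
intercept = mean_y - slope * mean_x

# Determination coefficient of the deterministic model (Eq. 3.6).
residuals = [y - (intercept + slope * x) for x, y in zip(black, age)]
ss_res = sum(e * e for e in residuals)
ss_tot = sum((y - mean_y) ** 2 for y in age)
r2_det = 1.0 - ss_res / ss_tot

# Residual standard deviations: linear model (n - 2) and constant model (n - 1).
sigma_linear = math.sqrt(ss_res / (n - 2))
sigma_constant = math.sqrt(ss_tot / (n - 1))
print(intercept, slope, r2_det, sigma_linear, sigma_constant)
```

Up to rounding, the two standard deviations should match the values listed in Table 2 (1.6687 yr and 2.6766 yr).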

On the other hand, let us compare the results obtained using a constant-average value model of the data. Such a model will simply be expressed as:

$$\text{Age (yr)} = 4.309 + 2.6766\, \varepsilon_N \tag{5.2}$$

** By performing the least-squares linear regression, the normality of the residuals is assumed.


Figure 1. Age estimation for adult lions using nose coloration using the randomistic model (5.1).

Blue data points: Data reported in [9]. Solid red line: Prediction of the deterministic

component. Gray dotted lines: Represent the random component of the model. Green dashed

lines: 99% confidence prediction limits of the randomistic model.

Figure 2. Age estimation for adult lions using nose coloration using the randomistic model (5.2).

Blue data points: Data reported in [9]. Solid red line: Prediction of the deterministic

component. Gray dotted lines: Represent the random component of the model. Green dashed

lines: 99% confidence prediction limits of the randomistic model.


This model presents a deterministic model coefficient $R_D^2 = 0\%$, and a random model coefficient $R_R^2 = 95.09\%$. Thus, the full randomistic model has a coefficient $R^2 = 95.09\%$. Model (5.2) is presented graphically in Figure 2, and compared with the experimental data. This model is basically a normal random model of the distribution of the data. Although it can also be considered a good model for describing the data, model (5.1) is better since it presents a higher goodness-of-fit, and a lower standard deviation of the residuals (coefficient of $\varepsilon_N$).

It is also possible to determine the optimal randomistic model by solving the optimization

problem presented in Eq. (4.2). Considering a linear deterministic model with a normal random

model, the best randomistic model coincides with the linear regression model given in Eq. (5.1).

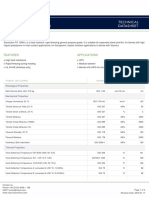

Table 2 summarizes the results obtained considering the multi-objective function defined in Eq.

(4.2), clearly demonstrating the superiority of the linear regression model.

Table 2. Multi-objective function performance of the randomistic models of Example 5.1.

| Model | $R^2$ | $\sigma_\varepsilon$ (yr) | $\Phi$ (yr$^{-2}$) |
|---|---|---|---|
| Least-squares linear regression model (Eq. 5.1) | 98.89% | 1.6687 | 0.3551 |
| Normal random model (Eq. 5.2) | 95.09% | 2.6766 | 0.1327 |

Finally, the resolution in the determination of the age of the lions seems to be (according to the data available) 0.1 yr. Thus, the natural standard deviation due to measurement error is $\sigma_n = 0.1/\sqrt{12} \approx 0.029$ yr, well below the standard deviations of the model residuals obtained in both cases. Particularly for the linear regression model, if uncertainty in the determination of the nose coloration is considered (using a resolution of 1% black), then:

$$\sigma_n = \sqrt{\frac{(0.1)^2 + (0.1065 \times 1)^2}{12}} \approx 0.042\ \text{yr} \tag{5.3}$$

still below the standard deviation of the residuals for both models, satisfying constraint (4.1).

5.2. Polynomial Regression: Rainfall in Central Italy

For the next example, let us consider some data reported for the average annual precipitation

between 1961 and 1990 at different locations in Central Italy.[11] The data is presented in Figure

3. The purpose of this example is comparing different polynomial regression models for fitting


the data. Table 3 summarizes the goodness-of-fit and multi-objective function performance

obtained for different randomistic models consisting of different polynomial deterministic

models paired with a normal random model. The deterministic models obtained by least

squares regression, from zero degree to 5th degree polynomials, are graphically compared in

Figure 4.

Figure 3. Average annual precipitation 1961-1990 in different locations of Central Italy vs.

distance from the sea. Slight differences are expected with respect to the original data as a

result of data extraction from the plot reported in [11].

Table 3. Multi-objective function performance of the different models considered in Example 5.2. Deterministic models are described by polynomials. Random models are described by normal distributions.

| Polynomial model used in the deterministic component of the randomistic model | Deterministic model $R_D^2$ | Random model $R_R^2$ | Residuals std. dev. $\sigma_\varepsilon$ (mm) | Randomistic model $R^2$ | $\Phi$ (mm$^{-2}$) |
|---|---|---|---|---|---|
| Constant (zero degree polynomial)†† | 0% | 95.1% | 215.65 | 95.1% | 2.05×10⁻⁵ |
| Linear (first degree polynomial) | 62.8% | 97.6% | 132.18 | 99.1% | 5.67×10⁻⁵ |
| Second degree polynomial | 63.3% | 97.4% | 132.01 | 99.0% | 5.68×10⁻⁵ |
| Third degree polynomial | 63.8% | 97.4% | 131.86 | 99.1% | 5.70×10⁻⁵ |
| 4th degree polynomial | 63.9% | 97.2% | 132.44 | 99.0% | 5.64×10⁻⁵ |
| 5th degree polynomial | 64.0% | 97.4% | 132.96 | 99.1% | 5.60×10⁻⁵ |

†† This model corresponds to a normal random model.


Figure 4. Comparison of the different polynomial deterministic models considered in Example

5.2 obtained by least squares regression.

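The standard deviations of the model residuals were estimated using the following equation:

$$\sigma_\varepsilon = \sqrt{\frac{\sum_{i=1}^{n} \varepsilon_i^2}{n - p - 1}} \tag{5.4}$$

where $\varepsilon_i$ represents the model residuals, $n$ is the number of data points, and $p$ is the degree of the polynomial. In other words, $n - p - 1$ represents the degrees of freedom of the model residuals.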
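The estimator can be written as a small Python helper. This is a sketch under the assumption that Eq. (5.4) divides the sum of squared residuals by $n - p - 1$, with $p$ the degree of the polynomial; the function name and the example residuals are hypothetical.

```python
import math

def residual_std(residuals, degree):
    """Std. dev. of the residuals of a polynomial model of the given degree.

    The degrees of freedom are n - degree - 1, since a polynomial of
    degree p has p + 1 fitted coefficients.
    """
    dof = len(residuals) - degree - 1
    if dof <= 0:
        raise ValueError("not enough data points for this polynomial degree")
    return math.sqrt(sum(e * e for e in residuals) / dof)

# Hypothetical residuals: the same values penalized by two model complexities.
example = [1.0, -1.0, 1.0, -1.0, 0.5, -0.5]
print(residual_std(example, degree=1), residual_std(example, degree=3))
```

For a fixed sum of squares, a higher degree leaves fewer degrees of freedom and therefore a larger estimated standard deviation, which is why the residual standard deviation in Table 3 can increase even when the deterministic fit improves.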

Even though the goodness-of-fit of the deterministic model increases with the degree of the polynomial, the goodness-of-fit of the randomistic model remains practically constant ($R^2 \approx 99\%$) from the first to the 5th degree. There is however a minimum standard deviation of the residuals at the third degree (but still larger than the natural deviation due to measurement errors), which leads to a maximum in the objective function $\Phi$. This optimum value illustrates the parsimony principle, since increasing the complexity of the model does not necessarily improve the performance of the randomistic model (even though the performance of the deterministic model improves).

By increasing the degree of the polynomial model from zero to one, the objective function clearly improves, with a relative increase of about 177%. However, increasing the degree of the polynomial from one to two only yields a relative increase in the objective function of about 0.2%. A question then arises whether this difference in objective functions is significant or not.
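The comparison can be retraced from the rounded entries of Table 3 with the following Python sketch. It assumes the combination rule $R^2 = R_D^2 + (1 - R_D^2) R_R^2$ and the performance function $R^2 / \sigma_\varepsilon^2$; since the inputs are rounded, the results are only approximate.

```python
# Rows of Table 3: (degree, R2 deterministic, R2 random, residual std. dev. in mm).
table3 = [
    (0, 0.000, 0.951, 215.65),
    (1, 0.628, 0.976, 132.18),
    (2, 0.633, 0.974, 132.01),
    (3, 0.638, 0.974, 131.86),
    (4, 0.639, 0.972, 132.44),
    (5, 0.640, 0.974, 132.96),
]

def objective(r2_det, r2_rand, sigma):
    """Performance function in mm^-2 (assumed forms of Eqs. 3.13 and 4.2)."""
    r2 = r2_det + (1.0 - r2_det) * r2_rand
    return r2 / sigma ** 2

phi = {deg: objective(a, b, s) for deg, a, b, s in table3}
best_degree = max(phi, key=phi.get)

# Relative increase of the objective function between consecutive models.
gain_0_to_1 = phi[1] / phi[0] - 1.0
gain_1_to_2 = phi[2] / phi[1] - 1.0
print(best_degree, gain_0_to_1, gain_1_to_2)
```

The large gap between the two relative increases is what motivates the significance test discussed next.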


Considering normal random models, it is possible to compare two objective functions using an

$F$-test of hypothesis. In this case, the objective function of a second model can be considered

to improve significantly with respect to a first model when:

( )

(5.5)

where $F_\alpha$ represents the critical value of the $F$ distribution with a significance level $\alpha$. Smaller

values of the significance level require larger differences between the objective functions of

the models in order to consider them different.

For this example, assuming a significance level of (which is relatively high), the minimum

relative difference between the objective functions of two models for considering them

significantly different should be about .‡‡ Thus, there are no significant differences

between the polynomial models from first to 5th degree, even with a significance level.

Therefore, even though the third degree polynomial model was found to be numerically the

best, it is not significantly different from the linear model (as it can be observed in Figure 4).

Thus, from the parsimony principle a linear model should be the right choice (between the

polynomial models considered), since it provides the same performance with the maximum

number of degrees of freedom for the residuals.

5.3. Heteroscedasticity: Chromatography Calibration Data

The third example was obtained from the calibration data of a high-performance liquid

chromatography (HPLC) method for quantifying caffeine in coffee samples. The calibration

data, extracted from plots reported by Sanchez [12], is presented in Figure 5. Clearly, a linear

calibration curve is adequate for describing the data. The model obtained by linear least-

squares regression is the following:

(5.6)

where is the concentration of caffeine in the sample in mg/L, is the peak area

obtained and is a standard normal random variable.

The goodness-of-fit coefficients for the different components of this randomistic model are:

‡‡

Since , ( ) , and √


(5.7)

(5.8)

(5.9)

Figure 5. Calibration data for the determination of caffeine concentration in coffee samples

using a chromatographic method. Data extracted from plots reported in [12].

Even though the performance of this model is very good, the residuals of the deterministic

model show heteroscedasticity (unequal variance) as can be seen in Figure 6:

Figure 6. Standardized residuals of the deterministic component of the randomistic model (5.6)

vs. caffeine concentration in the sample.


Since homoscedasticity (equal variance) is a key assumption of least-squares regression, the

behavior of the residuals casts some doubts about the validity of the regression model. From a

randomistic point of view, heteroscedasticity in the residuals actually indicates that the

standard deviation of the random model is also a function of the predictor variable, and

therefore, the randomistic model assuming constant standard deviation can be further

improved. Thus, assuming a linear relationship between the standard deviation of the residuals

and the concentration of caffeine in the sample (which is the known variable in the dataset),

we get:

( )

(5.10)

where and are constant parameters. Finding the optimum values of those parameters

by optimization, the following model is obtained:

( )

(5.11)

with goodness-of-fit coefficients:

(5.12)

(5.13)

(5.14)

Figure 7. Standardized residuals of the deterministic component of the randomistic model (5.11)

vs. caffeine concentration in the sample.


The standardized residuals for the model (5.11) are presented in Figure 7. Please notice that

standardization is performed dividing each residual by the value of the standard deviation

estimated at each caffeine concentration level. As it can be seen, heteroscedasticity of the

standardized residuals was reduced, and the performance of the model slightly improved.

Other non-linear models describing the change in standard deviation as a function of caffeine

concentration are also possible. Since models (5.6) and (5.11) present almost the same

performance,§§ the first model (with fewer parameters) would be preferred.
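The standardization described above can be sketched in Python as follows. The linear form $\sigma = s_0 + s_1 C$ follows the assumption stated in the text, but the parameter names `s0` and `s1`, the concentrations and the residuals are hypothetical values chosen only for illustration.

```python
def local_std(concentration, s0, s1):
    """Assumed linear model for the residual std. dev.: sigma = s0 + s1 * C."""
    sigma = s0 + s1 * concentration
    if sigma <= 0.0:
        raise ValueError("the standard deviation model must stay positive")
    return sigma

def standardize(residuals, concentrations, s0, s1):
    """Divide each residual by the std. dev. estimated at its concentration."""
    return [e / local_std(c, s0, s1) for e, c in zip(residuals, concentrations)]

# Hypothetical residuals whose spread grows with concentration (mg/L).
conc = [10.0, 10.0, 50.0, 50.0, 100.0, 100.0]
res = [-0.5, 0.5, -2.5, 2.5, -5.0, 5.0]

constant_sigma = standardize(res, conc, s0=3.0, s1=0.0)   # homoscedastic model
linear_sigma = standardize(res, conc, s0=0.0, s1=0.05)    # heteroscedastic model
print(constant_sigma)
print(linear_sigma)
```

With a constant standard deviation the standardized residuals still fan out with concentration, whereas the concentration-dependent model brings them to a comparable scale.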

The corresponding calibration curves obtained from these models will then be:

(5.15)

( )

(5.16)

5.4. Nonlinear Transformations: Biomass Pyrolysis Kinetics

This example considers the decomposition kinetics of biomass by pyrolysis under non-

isothermal conditions. The data summarized in Figure 8 corresponds to a thermal gravimetric

analysis (TGA) of biomass decomposition in the temperature range from 235°C to 278°C using a

heating rate of 10°C/min, reported by Mallick et al. [13].

Figure 8. Solid mass loss as a function of temperature during TGA of biomass pyrolysis. Data

extracted from [13].

§§ A hypothesis test can demonstrate that the differences are not significant even for less conservative significance levels.


Using the Coats-Redfern equation for non-isothermal decomposition of solids,[14] and

assuming a first-order decomposition kinetics, the following expression is obtained:

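$$\ln\left( \frac{-\ln(1 - \alpha)}{T^2} \right) = \ln\left( \frac{A R}{\beta E_a} \right) - \frac{E_a}{R T} \tag{5.17}$$

where $\alpha$ represents the fractional solid mass loss, $T$ is the absolute temperature in K, $R$ is the ideal gas constant, $\beta$ is the heating rate, and $A$ and $E_a$ are the pre-exponential factor and the activation energy of an Arrhenius-type kinetic expression. Eq. (5.17) represents a linear model between the variable transformations $\ln\left( -\ln(1 - \alpha) / T^2 \right)$ and $1/T$. The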

transformed data, as well as the corresponding least-squares linear regression, is presented in

Figure 9.
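The transformation and the recovery of the kinetic parameters can be sketched in Python. The sketch assumes the common simplified first-order Coats-Redfern form, in which the slope of the transformed line equals $-E_a/R$ and the intercept equals $\ln(A R / (\beta E_a))$. The parameter values below are hypothetical and serve only to generate noise-free data for a self-check; they are not the values of this example.

```python
import math

R_GAS = 8.314  # ideal gas constant, J/(mol K)

def coats_redfern_transform(temps_k, alphas):
    """Return (1/T, ln(-ln(1 - alpha) / T^2)) for first-order kinetics."""
    xs = [1.0 / t for t in temps_k]
    ys = [math.log(-math.log1p(-a) / t ** 2) for t, a in zip(temps_k, alphas)]
    return xs, ys

def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def kinetic_parameters(slope, intercept, heating_rate):
    """Invert slope = -E/R and intercept = ln(A R / (beta E))."""
    energy = -slope * R_GAS
    pre_exp = math.exp(intercept) * heating_rate * energy / R_GAS
    return pre_exp, energy

# Hypothetical parameters, used only to generate noise-free test data.
E_TRUE, A_TRUE, BETA = 1.0e5, 1.0e8, 10.0      # J/mol, 1/min, K/min
temps = [508.15 + 5.0 * k for k in range(9)]   # about 235 to 275 degrees C
alphas = []
for t in temps:
    z = t ** 2 * (A_TRUE * R_GAS / (BETA * E_TRUE)) * math.exp(-E_TRUE / (R_GAS * t))
    alphas.append(-math.expm1(-z))             # alpha = 1 - exp(-z)

xs, ys = coats_redfern_transform(temps, alphas)
slope, intercept = linear_fit(xs, ys)
a_fit, e_fit = kinetic_parameters(slope, intercept, BETA)
print(e_fit, a_fit)
```

With real data the fit is done on the transformed variables, so the residuals that least squares treats as normal are those of the transformed response, not of the mass loss itself.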

Figure 9. Coats-Redfern transformation of TGA data for biomass pyrolysis [13]. Blue points:

Transformed experimental data. Red solid line: Least-squares linear regression.

The model obtained by linear least-squares regression is:

( )

( )

(5.18)


The goodness-of-fit coefficients obtained are: , and .

From the model coefficients, it is possible to conclude that: and

. Thus, the final deterministic kinetic expression becomes:

( )

(5.19)

which can be analytically solved to yield:

( ) ( )

(5.20)

Assuming a normal distribution of the residuals from model (5.20) comparing with the original

experimental data (before transformation), the performance of the corresponding randomistic

model is: , , and .

However, from a randomistic point of view the final kinetic model obtained is:

( )

(5.21)

Expanding the exponential of the standard random variable as a Taylor series, and truncating

to the first terms, the following approximation is obtained:

( )

(5.22)

Expanding and approximating the exponential with the random variable again, we obtain:

( ) ( )

(5.23)

which indicates that the standard deviation of the residuals depends on the temperature. For

the range of temperatures considered, such standard deviation increases almost linearly with

temperature, taking values between and . Model (5.23) presents the

following performance: , , and .

This model provides a significant improvement in the goodness-of-fit of the random model for

the residuals.


On the other hand, if the original data is used to fit the parameters directly in model (5.20),

without the Coats-Redfern transformation, by maximizing the objective function $\Phi$, and

considering a normal distribution of the residuals, the following randomistic model is

obtained***:

( ) ( )

(5.24)

These parameters correspond to activation energy of and pre-exponential

factor . The performance obtained with model (5.24) is the following:

, , and . Furthermore, the

standard deviation of the measurement error of the response variable is estimated (using a

resolution for the fractional mass loss of 0.0001) to be $0.0001/\sqrt{12} \approx 2.9 \times 10^{-5}$, which is below the standard deviation of the

residuals in model (5.24). A comparison of the three models obtained is presented in Figure 10.

Figure 10. Comparison of three different models for the kinetics of biomass pyrolysis. Black

dots: Experimental data extracted from [13]. Red solid line: Model (5.20) Arrhenius equation

using parameters obtained by the Coats-Redfern transformation. Green dotted line: Model

(5.23) obtained directly from the Coats-Redfern equation. Blue dashed line: Model (5.24)

Arrhenius equation using parameters obtained by maximizing $\Phi$.

*** Using the generalized reduced gradient (GRG) nonlinear optimization method, and using the parameters of Model (5.20) as starting point.


5.5. Nonlinear Transformations: Solubility of Different Solutes in Two Solvents

The next example considers experimental data for the solubility of different types of solutes in

two solvents: Tetrahydrofuran (THF) and 2-methyl tetrahydrofuran (2-MeTHF).[15] Two models

were previously obtained [16] considering a simple linear regression and a robust optimization

using the log-log transformation of the solubility data (see Figure 11). The corresponding

randomistic models are respectively:

(5.25)

(5.26)

Transforming back the models into the original variables results in:

(5.27)

(5.28)

Figure 11. Solubility (mg/ml) of different solutes at room temperature for THF and 2-MeTHF in

decimal logarithm scale. Blue dots: Data sample. Dashed purple line: Least-squares fit (Eq.

5.25). Solid green line: Robust fit (Eq. 5.27). Only the deterministic components of the models

are shown.

The performance of the models, considering both the original and transformed variables, is

summarized in Table 4. An additional model (optimal model) is included, which is obtained by


maximizing the function $\Phi$, calculated on the original dataset. This model considered the

same structure of the previous models, and only the values of the coefficients were used as

decision variables. The corresponding models obtained are the following:

(5.29)

(5.30)

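Table 4. Comparative performance of different solubility models with respect to both original and transformed data (decimal logarithm transformation).

| Data | Randomistic model | Deterministic R² | Random R² | Standard deviation of residuals (s_E) | Randomistic R² | Performance (R²/s_E²) |
|---|---|---|---|---|---|---|
| Original | Least-squares model | 39.68% | 73.05% | 11.266 | 83.75% | 6.60×10⁻³ |
| Original | Robust model | 43.10% | 80.49% | 10.941 | 88.90% | 7.43×10⁻³ |
| Original | Optimal model | 49.03% | 81.17% | 10.356 | 90.40% | 8.43×10⁻³ |
| Transformed | Least-squares model | 72.78% | 98.43% | 0.3827 | 99.57% | 6.800 |
| Transformed | Robust model | 62.59% | 96.52% | 0.4486 | 98.70% | 4.905 |
| Transformed | Optimal model | 70.37% | 95.91% | 0.3992 | 98.79% | 6.199 |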
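The relation between the columns of Table 4 can be checked with a few lines of Python. This is a minimal sketch: it assumes that the five numeric columns are, in order, the deterministic R², the random R², the standard deviation of residuals, the randomistic R² and the performance, and it combines them as the Appendix code does (`R2=detR2+randR2-detR2*randR2`, `Perf=R2/sE^2`). The function and variable names are illustrative only.

```python
# Values copied from the original-scale rows of Table 4:
# (deterministic R2, random R2, standard deviation of residuals sE).
rows = {
    "Least-squares": (0.3968, 0.7305, 11.266),
    "Robust": (0.4310, 0.8049, 10.941),
    "Optimal": (0.4903, 0.8117, 10.356),
}


def randomistic_r2(det_r2, rand_r2):
    """Combine deterministic and random fits as in the Appendix code."""
    return det_r2 + rand_r2 - det_r2 * rand_r2


def performance(r2, s_e):
    """Randomistic R2 divided by the estimated variance of the residuals."""
    return r2 / s_e ** 2


results = {}
for name, (det_r2, rand_r2, s_e) in rows.items():
    r2 = randomistic_r2(det_r2, rand_r2)
    results[name] = (r2, performance(r2, s_e))
    print(f"{name}: randomistic R2 = {r2:.4f}, performance = {results[name][1]:.2e}")

# Model with the largest performance on the original scale.
best = max(results, key=lambda k: results[k][1])
print("Best performance:", best)
```

With the rounded inputs from the table, the computed values agree with the last two columns to the digits reported, and the optimal model has the largest performance on the original scale.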

The deterministic predictions of the three models in original data values are presented in Figure

12.

Figure 12. Solubility (mg/ml) of different solutes at room temperature for THF and 2-MeTHF in

original scale. Blue dots: Data sample. Dashed purple line: Least-squares fit (Eq. 5.26). Solid

green line: Robust fit (Eq. 5.28). Dotted red line: Optimal fit (Eq. 5.30).


For this particular example, when the objective function of the optimization is defined in the transformed domain, the optimal model coincides with the least-squares model. Although the least-squares model gives the best fit in the domain of the logarithmic transformation of the variables, its performance on the original scale is not optimal: in Table 4 it has the lowest performance of the three models on the original data (6.60×10⁻³, against 7.43×10⁻³ for the robust model and 8.43×10⁻³ for the optimal model). Thus, when fitting models to transformed data, it is very important to decide whether the goal is to optimize the performance of the model in the transformed domain or in the original domain of the data, because the results may differ significantly. As previously mentioned,[16] the robust regression approach performs better than the least-squares regression model in the original scale of the data; however, its performance is not optimal from a randomistic point of view.
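The dependence of the "best" coefficients on the domain of the objective function can be illustrated with a small Python sketch. Everything in it is an assumption made for illustration: the data are synthetic (they are not the solubility data of [15]), the model is a generic power law, and the plain sum of squared errors (SSE) stands in for the performance function as the objective. The refinement step is a simple coordinate search, not the method used in the report.

```python
import math

# Synthetic (x, y) pairs, invented for illustration only.
x = [1.0, 3.0, 10.0, 30.0, 100.0, 300.0]
y = [2.5, 4.0, 21.0, 40.0, 230.0, 420.0]


def fit_loglog(x, y):
    """Closed-form least squares for log10(y) = a + b*log10(x)."""
    lx = [math.log10(v) for v in x]
    ly = [math.log10(v) for v in y]
    n = len(lx)
    mx, my = sum(lx) / n, sum(ly) / n
    b = sum((u - mx) * (v - my) for u, v in zip(lx, ly)) / sum((u - mx) ** 2 for u in lx)
    return my - b * mx, b


def sse_log(a, b, x, y):
    """Sum of squared errors in the log-transformed domain."""
    return sum((math.log10(v) - a - b * math.log10(u)) ** 2 for u, v in zip(x, y))


def sse_original(a, b, x, y):
    """Sum of squared errors of y = 10**a * x**b in the original domain."""
    return sum((v - 10 ** a * u ** b) ** 2 for u, v in zip(x, y))


def refine_original(a, b, x, y, step=0.1, tol=1e-6, max_iter=10_000):
    """Coordinate search that only accepts moves lowering the original-scale SSE."""
    best = sse_original(a, b, x, y)
    for _ in range(max_iter):  # hard cap so the loop always terminates
        if step <= tol:
            break
        improved = False
        for da, db in ((step, 0.0), (-step, 0.0), (0.0, step), (0.0, -step)):
            trial = sse_original(a + da, b + db, x, y)
            if trial < best:
                a, b, best = a + da, b + db, trial
                improved = True
        if not improved:
            step /= 2
    return a, b


a_log, b_log = fit_loglog(x, y)                      # optimal in the log domain
a_opt, b_opt = refine_original(a_log, b_log, x, y)   # refined on the original scale

print("log-domain fit:     ", a_log, b_log)
print("original-scale fit: ", a_opt, b_opt)
print("SSE (original):", sse_original(a_log, b_log, x, y), "->", sse_original(a_opt, b_opt, x, y))
print("SSE (log):     ", sse_log(a_log, b_log, x, y), "->", sse_log(a_opt, b_opt, x, y))
```

Because the refinement starts from the log-domain solution and only accepts improvements, the refined coefficients can never be worse on the original scale, while the log-domain fit remains the minimizer of the transformed-domain SSE.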

5.6. Multivariate Fit: Simultaneous Saccharification-Fermentation Process for Ethanol

Production

The last example consists of the comparison of three different dynamic models used to predict the behavior of a particular fermentation process for ethanol production. The experimental data and model results are reported by Ochoa et al.[17] The key feature of this example is that four different response variables (cell, starch, glucose, and ethanol concentration) are considered for assessing model performance. Figure 13 presents the experimental data and model predictions at each data point, for all four response variables. Even though all the response variables are expressed in the same units (g/L), the ranges of experimental values are different, and thus it is preferable to use a dimensionless objective function for model comparison. Furthermore, if all response variables are considered equally important, the following function can be used for assessing the performance of each model reported:

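$$\text{Overall performance} = \frac{\sum_{i=1}^{4} R^2_{i}}{\sum_{i=1}^{4} \left( \dfrac{s_{E,i}}{\max(y_i) - \min(y_i)} \right)^{2}} \qquad (5.31)$$

where $i = 1, \dots, 4$ represent cell, starch, glucose, and ethanol concentration, respectively; $R^2_i$ is the randomistic determination coefficient (R2 in the Appendix code) and $s_{E,i}$ the standard deviation of the residuals (sE in the Appendix code) for the response variable $y_i$.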

It is also important to note that the number of experimental observations is different for each response variable, but this has no effect on the value of the performance function previously defined.

Table 5 summarizes the performance comparison for the three models reported in [17], assuming a zero-mean normal random model of the residuals. The relative performance of each individual model is also presented for comparison.


Figure 13. Dynamic response during ethanol simultaneous saccharification-fermentation from

starch. a) Cell concentration (g/L). b) Starch concentration (g/L). c) Glucose concentration (g/L).

d) Ethanol concentration. Black diamonds: Experimental data. Red squares and line: Model 1.

Blue triangles and line: Model 2. Green circles and line: Model 3. Data from [17].

Table 5. Comparative performance of different models of ethanol fermentation reported in

[17], considering different response variables.

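| Model | Response variable | Deterministic R² | Random R² | Randomistic R² | Relative standard deviation of residuals | Relative performance | Overall performance (Eq. 5.31) |
|---|---|---|---|---|---|---|---|
| Model 1 | Cell concentration | 57.19% | 98.67% | 99.43% | 16.25% | 37.6 | 29.5 |
| Model 1 | Starch concentration | 73.48% | 76.20% | 93.69% | 17.10% | 32.0 | |
| Model 1 | Glucose concentration | 96.05% | 91.59% | 99.67% | 5.12% | 380.3 | |
| Model 1 | Ethanol concentration | 35.30% | 93.58% | 95.85% | 27.12% | 13.0 | |
| Model 2 | Cell concentration | 62.66% | 89.29% | 96.00% | 15.18% | 41.6 | 100.8 |
| Model 2 | Starch concentration | 93.12% | 92.87% | 99.51% | 8.71% | 131.1 | |
| Model 2 | Glucose concentration | 93.42% | 88.43% | 99.24% | 6.60% | 227.6 | |
| Model 2 | Ethanol concentration | 96.37% | 89.68% | 99.63% | 6.43% | 241.1 | |
| Model 3 | Cell concentration | 75.65% | 93.71% | 98.47% | 12.26% | 65.5 | 137.8 |
| Model 3 | Starch concentration | 96.18% | 54.03% | 98.24% | 6.49% | 233.4 | |
| Model 3 | Glucose concentration | 98.66% | 53.69% | 99.38% | 2.98% | 1120.4 | |
| Model 3 | Ethanol concentration | 92.42% | 99.34% | 99.95% | 9.28% | 116.1 | |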
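The overall values in Table 5 (29.5, 100.8 and 137.8) can be reproduced from the individual rows. The sketch below assumes one reading of Eq. (5.31): the sum of the randomistic R² values of the four response variables divided by the sum of their squared relative standard deviations of residuals. The function name `overall_performance` and the data layout are illustrative, and the inputs are the rounded values printed in the table.

```python
# (randomistic R2, relative standard deviation of residuals) per response variable,
# copied from Table 5 in the order: cell, starch, glucose, ethanol concentration.
table5 = {
    "Model 1": [(0.9943, 0.1625), (0.9369, 0.1710), (0.9967, 0.0512), (0.9585, 0.2712)],
    "Model 2": [(0.9600, 0.1518), (0.9951, 0.0871), (0.9924, 0.0660), (0.9963, 0.0643)],
    "Model 3": [(0.9847, 0.1226), (0.9824, 0.0649), (0.9938, 0.0298), (0.9995, 0.0928)],
}


def overall_performance(rows):
    """Sum of randomistic R2 over sum of squared relative standard deviations."""
    return sum(r2 for r2, _ in rows) / sum(rse ** 2 for _, rse in rows)


overall = {model: overall_performance(rows) for model, rows in table5.items()}
for model, value in overall.items():
    print(f"{model}: overall performance = {value:.1f}")

best_model = max(overall, key=overall.get)
print("Best overall performance:", best_model)
```

Since every response variable enters through a dimensionless ratio, the different ranges of the four concentrations do not bias the comparison. A response with a large relative standard deviation (such as ethanol concentration in Model 1) dominates the denominator and pulls the overall value down.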


The best overall performance was obtained with Model 3 (137.8), whereas the worst overall performance was obtained with Model 1 (29.5). From a deterministic point of view, the best individual model was that of glucose concentration in Model 3 (98.66%). It is also the model with the lowest standard deviation of residuals relative to the range of the response variable (2.98%), and the highest relative performance (1120.4). On the other hand, the best individual random model was that of ethanol concentration in Model 3 (99.34%), which is also the best model from a randomistic point of view (99.95%). The random models for starch and glucose concentration in Model 3 presented a poor fit (54.03% and 53.69%, respectively). This is due to a drift of the model with respect to the experimental data, which results in an average of the residuals significantly different from zero. Eq. (5.31) can also be used as the objective function in an optimization problem aimed at improving the estimation of the parameters of the models for ethanol production from starch.

6. Conclusion

When analyzing the goodness-of-fit of a model, the performance of the deterministic estimates is not the only aspect that should be considered. If the performance of the random model describing the residuals of the deterministic model is also considered, it is possible to evaluate the goodness-of-fit of the full randomistic model. Based on the definition of the determination coefficient ($R^2$) for deterministic models, analogous coefficients for the random and randomistic models are presented. The best models will be those providing the largest randomistic coefficient with the minimum estimated variance of the model residuals. Such variance cannot be smaller than the natural variation of the system, caused by measurement uncertainties and pure experimental errors. Different examples were presented in order to illustrate the concepts introduced in this paper. They included topics such as model comparison, optimal parameter identification, handling heteroscedasticity of residuals, analyzing nonlinear transformations of the variables, and evaluating the performance of the model when multiple response variables are considered. Algorithms for calculating the goodness-of-fit of randomistic models were implemented in the R and MATLAB languages, and are presented in the Appendix.

Acknowledgments

The author wishes to thank Prof. Dr. Silvia Ochoa (Universidad de Antioquia, Colombia), for

helpful discussions on this topic.

This research did not receive any specific grant from funding agencies in the public,

commercial, or not-for-profit sectors.


References

[1] Hernandez, H. (2018). The Realm of Randomistic Variables. ForsChem Research Reports

2018-10. doi: 10.13140/RG.2.2.29034.16326.

[2] Anderson-Sprecher, R. (1994). Model comparisons and R2. The American Statistician, 48(2),

113-117.

[3] Azzalini, A. (1996). Statistical Inference Based on the Likelihood (Vol. 68). CRC Press.

[4] Akaike, H. (1974). A new look at the statistical model identification. IEEE Transactions on

Automatic Control, 19(6), 716-723.

[5] Hernandez, H. (2018). Comparison of Methods for the Reconstruction of Probability Density

Functions from Data Samples. ForsChem Research Reports 2018-12. doi:

10.13140/RG.2.2.30177.35686.

[6] Hernandez, H. (2018). Parameter Identification using Standard Transformations: An

Alternative Hypothesis Testing Method. ForsChem Research Reports 2018-04. doi:

10.13140/RG.2.2.14895.02728.

[7] Hernandez, H. (2019). Modeling and Identification of Noisy Dynamic Systems. ForsChem

Research Reports 2019-04. doi: 10.13140/RG.2.2.12571.72489.

[8] Whitman, K., Starfield, A. M., Quadling, H. S., & Packer, C. (2004). Sustainable trophy

hunting of African lions. Nature, 428(6979), 175.

[9] Whitlock, M. C., & Schluter, D. (2015). The Analysis of Biological Data. 2nd Ed. Macmillan

Learning. https://whitlockschluter.zoology.ubc.ca/data/chapter17

[10] Hernandez, H. (2018). Multidimensional Randomness, Standard Random Variables and

Variance Algebra. ForsChem Research Reports 2018-02. doi: 10.13140/RG.2.2.11902.48966.

[11] Gentilucci, M., Bisci, C., Burt, P., Fazzini, M., & Vaccaro, C. (2018). Interpolation of Rainfall

Through Polynomial Regression in the Marche Region (Central Italy). In: Mansourian, A., Pilesjö,

P., Harrie, L., & van Lammeren, R. (Eds.). Geospatial Technologies for All: Selected Papers of the

21st AGILE Conference on Geographic Information Science. Springer. pp. 55-73.

[12] Sanchez, J. (2018). Estimating detection limits in chromatography from calibration data:

ordinary least squares regression vs. weighted least squares. Separations, 5(4), 49.

[13] Mallick, D., Bora, B. J., Barbhuiya, S. A., Banik, R., Garg, J., Sarma, R., & Gogoi, A. K. (2019).

Detailed study of pyrolysis kinetics of biomass using thermogravimetric analysis. In: AIP

Conference Proceedings (Vol. 2091, No. 1, p. 020014). AIP Publishing.

[14] Coats, A. W., & Redfern, J. P. (1964). Kinetic parameters from thermogravimetric data.

Nature, 201(4914), 68.


[15] Qiu, J., & Albrecht, J. (2018). Solubility Correlations of Common Organic Solvents. Organic

Process Research & Development, 22(7), 829-835.

[16] Hernandez, H. (2018). Introduction to Randomistic Optimization. ForsChem Research

Reports 2018-11. doi: 10.13140/RG.2.2.30110.18246.

[17] Ochoa, S., Yoo, A., Repke, J. U., Wozny, G., & Yang, D. R. (2007). Modeling and parameter identification of the simultaneous saccharification-fermentation process for ethanol production. Biotechnology Progress, 23(6), 1454-1462.

Appendix. Algorithm Implementation

A.1. Algorithm Implementation in R Language

randgof<-function(datasample=NULL,estimates=NULL,nparam=1,rdist="Normal"){
  #This function evaluates the goodness-of-fit and the performance of a randomistic model. The evaluation is
  #performed considering both the original experimental data (datasample) and the deterministic predictions
  #(estimates) of the model, under the exact same conditions of the original data. Both variables should be vectors
  #with the same dimensions. The random model is evaluated assuming a certain distribution (rdist). Included in this
  #code are the "Normal" and "Uniform" distributions. The standard deviation of the residuals is estimated using the
  #number of parameters (nparam) considered in the deterministic model (including constant coefficients). The
  #output includes: R2 (Randomistic goodness-of-fit), Perf (randomistic performance), detR2 (deterministic R2),
  #randR2 (random fitness), sE (standard deviation of residuals), rPerf (relative performance), rsE (relative standard
  #deviation of residuals)

  #Calculation of residuals
  res=datasample-estimates

  #Calculation of deterministic R2
  avg=mean(datasample)
  SST=sum((datasample-avg)^2)
  SSE=sum(res^2)
  detR2=1-SSE/SST

  #Calculation of random R2
  res=sort(res)
  n=length(res)
  sE=sqrt(SSE/(n-nparam))
  rsE=sE/(max(datasample)-min(datasample))
  if (rdist=="Uniform") {
    #punif takes the limits (min, max) instead of mean and sd. A zero-mean uniform
    #distribution with standard deviation sE spans from -sqrt(3)*sE to sqrt(3)*sE
    phi=punif(res,min=-sqrt(3)*sE,max=sqrt(3)*sE)
  } else {
    phi=pnorm(res,mean=0,sd=sE)
  }
  #Distance between the model distribution and each step of the empirical distribution
  err=0*phi
  for (i in 1:n){
    err[i]=max(0,phi[i]-(i/n),(i-1)/n-phi[i])
  }
  randR2=1-(12*n/(n^2-1))*sum(err^2)

  #Calculation of randomistic R2 and performance
  R2=detR2+randR2-detR2*randR2
  Perf=R2/(sE^2)
  rPerf=R2/(rsE^2)

  #Output
  output=data.frame(R2,Perf,detR2,randR2,sE,rPerf,rsE)
  return(output)
}

A.2. Algorithm Implementation in MATLAB Language

function [R2,Perf,detR2,randR2,sE,rPerf,rsE]=randgof(datasample,estimates,nparam,rdist)
%This function evaluates the goodness-of-fit and the performance of a randomistic
%model. The evaluation is performed considering both the original experimental
%data (datasample) and the deterministic predictions (estimates) of the model,
%under the exact same conditions of the original data. Both variables should be
%vectors with the same dimensions. The random model is evaluated assuming a
%certain distribution (rdist). Included in this code are the 'norm' and
%'unif' distributions (among others). The standard deviation of the residuals is
%estimated using the number of parameters (nparam) considered in the
%deterministic model (including constant coefficients). The output includes: R2
%(Randomistic goodness-of-fit), Perf (randomistic performance), detR2
%(deterministic R2), randR2 (random fitness), sE (standard deviation of
%residuals), rPerf (relative performance), rsE (relative standard deviation of
%residuals)

%Calculation of residuals
res=datasample-estimates;

%Calculation of deterministic R2
avg=mean(datasample);
SST=sum((datasample-avg).^2);
SSE=sum(res.^2);
detR2=1-SSE/SST;

%Calculation of random R2
res=sort(res);
n=length(res);
sE=sqrt(SSE/(n-nparam));
rsE=sE/(max(datasample)-min(datasample));
if any(strcmpi(rdist,{'unif','uniform'}))
    %The default uniform distribution spans [0,1]. A zero-mean uniform distribution
    %with standard deviation sE spans from -sqrt(3)*sE to sqrt(3)*sE
    phi=unifcdf(res,-sqrt(3)*sE,sqrt(3)*sE);
else
    %Other distributions are evaluated in standard form on the standardized residuals
    phi=cdf(rdist,res/sE);
end
%Distance between the model distribution and each step of the empirical distribution
err=0*phi;
for i=1:n
    err(i)=max([0,phi(i)-(i/n),(i-1)/n-phi(i)]);
end
randR2=1-(12*n/(n^2-1))*sum(err.^2);

%Calculation of randomistic R2 and performance
R2=detR2+randR2-detR2*randR2;
Perf=R2/(sE^2);
rPerf=R2/(rsE^2);
end
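For readers without R or MATLAB, the same steps can be sketched in Python using only the standard library. This port is not part of the original report: the function mirrors the `randgof` interface above, the dictionary output and the small test dataset are assumptions made for illustration, and the "Uniform" option assumes a zero-mean uniform distribution with standard deviation sE.

```python
import math
from statistics import NormalDist


def randgof(datasample, estimates, nparam=1, rdist="Normal"):
    """Goodness-of-fit and performance of a randomistic model (Python sketch)."""
    n = len(datasample)
    # Residuals and deterministic R2
    res = [y - yhat for y, yhat in zip(datasample, estimates)]
    avg = sum(datasample) / n
    sst = sum((y - avg) ** 2 for y in datasample)
    sse = sum(r ** 2 for r in res)
    det_r2 = 1 - sse / sst
    # Standard deviation of residuals, absolute and relative to the data range
    s_e = math.sqrt(sse / (n - nparam))
    rs_e = s_e / (max(datasample) - min(datasample))
    # Model cumulative probability of each sorted residual
    res.sort()
    if rdist == "Uniform":
        half = math.sqrt(3) * s_e  # half-width of a zero-mean uniform with sd s_e
        phi = [min(1.0, max(0.0, (r + half) / (2 * half))) for r in res]
    else:
        normal = NormalDist(0.0, s_e)
        phi = [normal.cdf(r) for r in res]
    # Distance between the model distribution and each step of the empirical one
    err = [max(0.0, p - i / n, (i - 1) / n - p) for i, p in enumerate(phi, start=1)]
    rand_r2 = 1 - (12 * n / (n ** 2 - 1)) * sum(e ** 2 for e in err)
    # Randomistic R2 and performance
    r2 = det_r2 + rand_r2 - det_r2 * rand_r2
    return {
        "R2": r2, "Perf": r2 / s_e ** 2, "detR2": det_r2, "randR2": rand_r2,
        "sE": s_e, "rPerf": r2 / rs_e ** 2, "rsE": rs_e,
    }


# Hypothetical data: five observations and the predictions of a two-parameter model.
data = [1.0, 2.0, 3.0, 4.0, 5.0]
pred = [1.1, 1.9, 3.2, 3.8, 5.0]
out = randgof(data, pred, nparam=2)
for key, value in out.items():
    print(key, value)
```

In this small example every model probability falls inside the corresponding step of the empirical distribution, so no error is accumulated and the random fitness equals 1. The deterministic R² is 0.99 because the sum of squared residuals is 0.1 against a total sum of squares of 10.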
