Simulated Annealing

Simulated annealing (SA) is a probabilistic technique for approximating the global optimum of a given function. Specifically, it is a metaheuristic for approximate global optimization in a large search space. It is often used when the search space is discrete (e.g., the traveling salesman problem). For problems where finding an approximate global optimum within a fixed amount of time is more important than finding a precise local optimum, simulated annealing may be preferable to alternatives such as gradient descent.

Simulated annealing can be used to find an approximation of the global minimum of a function with many variables. In 1983, Kirkpatrick, Gelatt Jr., and Vecchi used this approach to solve the traveling salesman problem. They also proposed its current name, simulated annealing.

Accepting worse solutions is a fundamental property of metaheuristics because it allows a more extensive search for the global optimum. In general, simulated annealing algorithms work as follows. At each time step, the algorithm randomly selects a solution close to the current one, measures its quality, and then decides whether to move to it or stay with the current solution. The decision uses one of two probabilities, chosen according to whether the new solution is better or worse than the current one. During the search, the temperature is progressively decreased from an initial positive value to zero, and it affects both probabilities: at each step, the probability of moving to a better new solution is either kept at 1 or changed towards a positive value, while the probability of moving to a worse new solution is progressively changed towards zero.
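The loop described above can be sketched in Python. This is a minimal illustration and not a definitive implementation: the function name `simulated_annealing`, its parameters, the geometric cooling schedule, and the acceptance rule exp(-delta / t) for worse moves (the commonly used Metropolis criterion) are all assumptions not stated in the text. Better moves are accepted with probability 1, which is one of the two options the text allows.

```python
import math
import random


def simulated_annealing(cost, neighbor, start, t_start=1.0, t_end=1e-3,
                        cooling=0.95, steps_per_temp=100, rng=None):
    """Minimize `cost` starting from `start`; return (best_state, best_cost).

    All names and default values here are illustrative assumptions.
    """
    rng = rng or random.Random()
    current, current_cost = start, cost(start)
    best, best_cost = current, current_cost
    t = t_start
    while t > t_end:
        for _ in range(steps_per_temp):
            # Randomly select a solution close to the current one.
            candidate = neighbor(current, rng)
            candidate_cost = cost(candidate)
            delta = candidate_cost - current_cost
            # A better (or equal) candidate is always accepted. A worse one
            # is accepted with probability exp(-delta / t), which tends to
            # zero as the temperature t falls.
            if delta <= 0 or rng.random() < math.exp(-delta / t):
                current, current_cost = candidate, candidate_cost
                if current_cost < best_cost:
                    best, best_cost = current, current_cost
        # Geometric cooling: an assumed schedule, chosen for simplicity.
        t *= cooling
    return best, best_cost
```

As a usage example under the same assumptions, calling `simulated_annealing(lambda x: (x - 3) ** 2, lambda x, r: x + r.choice((-1, 1)), 50, t_start=10.0)` searches the integers for the minimum of (x - 3)^2 by stepping one unit left or right at a time. The sketch stops at a small positive temperature `t_end` instead of exactly zero, because the acceptance rule divides by the temperature.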

The simulation can be performed either by solving kinetic equations for density functions or by using a stochastic sampling method. The method is an adaptation of the Metropolis–Hastings algorithm, a Monte Carlo method for generating sample states of a thermodynamic system, published by N. Metropolis et al. in 1953.

When to use simulated annealing:

Basic Problems
    Traveling salesman
    Graph partitioning
    Matching problems
    Graph colouring
    Scheduling

Engineering
    VLSI design
    Placement
    Routing
    Array logic minimization
    Layout
    Facilities layout
    Image processing
    Code design in information theory

You might also like

- CH 4Document14 pagesCH 4Nazia Enayet100% (1)

- Predictive Analytics - Unit 4 - Week 2 - QuestionsDocument3 pagesPredictive Analytics - Unit 4 - Week 2 - QuestionsKarthikeyan GopalNo ratings yet

- R-30iA Cimplicity Operator Manual (B-82604EN 01)Document90 pagesR-30iA Cimplicity Operator Manual (B-82604EN 01)YoganandhK100% (1)

- CLC Capstone ProposalDocument8 pagesCLC Capstone Proposalapi-439644243No ratings yet

- Ground Operations: Designed ForDocument3 pagesGround Operations: Designed ForKarl NonolNo ratings yet

- Simulated AnnealingDocument11 pagesSimulated Annealinggabby209No ratings yet

- Classical and Advanced Techniques For Optimization SummaryDocument3 pagesClassical and Advanced Techniques For Optimization SummaryEzra KenyanyaNo ratings yet

- Stochastic AlgorithmsDocument19 pagesStochastic Algorithmsfls159No ratings yet

- Simulated Annealing Overview: Zak Varty March 2017Document8 pagesSimulated Annealing Overview: Zak Varty March 2017lemon lemonNo ratings yet

- A Hybrid Descent MethodDocument10 pagesA Hybrid Descent MethodSofian Harissa DarmaNo ratings yet

- Nested Simulated Annealing Algorithm To Solve Large-Scale TSP ProblemDocument14 pagesNested Simulated Annealing Algorithm To Solve Large-Scale TSP Problemlion ẩn danhNo ratings yet

- Genetic Algorithm: Artificial Neural Networks (Anns)Document10 pagesGenetic Algorithm: Artificial Neural Networks (Anns)Partth VachhaniNo ratings yet

- Greedy AlgorithmDocument4 pagesGreedy AlgorithmMas HNo ratings yet

- Simulated AnnealingDocument6 pagesSimulated Annealingjohnvincent34No ratings yet

- Approximation AlgoDocument29 pagesApproximation AlgoVishnu SharmaNo ratings yet

- Module - 1 Lecture Notes - 4 Classical and Advanced Techniques For OptimizationDocument4 pagesModule - 1 Lecture Notes - 4 Classical and Advanced Techniques For OptimizationnnernNo ratings yet

- Algorithms & Complexity: Nicolas Stroppa - Nstroppa@computing - Dcu.ieDocument28 pagesAlgorithms & Complexity: Nicolas Stroppa - Nstroppa@computing - Dcu.iextremistuNo ratings yet

- Moosegard95 MC Sampling InverseProblemsDocument17 pagesMoosegard95 MC Sampling InverseProblemsSandeep GogadiNo ratings yet

- Handout 15 (Introduction To Non-Traditional Optimization Techniques)Document4 pagesHandout 15 (Introduction To Non-Traditional Optimization Techniques)Kumar GauravNo ratings yet

- Simulation OptimizationDocument6 pagesSimulation OptimizationAndres ZuñigaNo ratings yet

- 00r020bk1121qr PDFDocument11 pages00r020bk1121qr PDFIsaias BonillaNo ratings yet

- MetaDocument8 pagesMetahossamyomna10No ratings yet

- Festa Ribeiro 2002Document41 pagesFesta Ribeiro 2002christian garciaNo ratings yet

- CCATPREPARATION AI Module 3Document13 pagesCCATPREPARATION AI Module 3Vaishnavi GuravNo ratings yet

- Unrelated Parallel Machine Scheduling Using Local SearchDocument12 pagesUnrelated Parallel Machine Scheduling Using Local SearchOlivia brianneNo ratings yet

- Introduction and Basic Concepts: Classical and Advanced Techniques For OptimizationDocument12 pagesIntroduction and Basic Concepts: Classical and Advanced Techniques For OptimizationRuthie Anne LongasaNo ratings yet

- Classical & Advanced Optimization Techniques PDFDocument12 pagesClassical & Advanced Optimization Techniques PDFBarathNo ratings yet

- Graph Partitioning Using Metaheuristic Techniques: Naresh Ghorpade, H. R. BhapkarDocument9 pagesGraph Partitioning Using Metaheuristic Techniques: Naresh Ghorpade, H. R. BhapkarNaresh GhorpadeNo ratings yet

- RePAMO PDFDocument19 pagesRePAMO PDFRonald JosephNo ratings yet

- Preconditioning of Truncated-Newton MethodsDocument18 pagesPreconditioning of Truncated-Newton MethodsパプリカNo ratings yet

- Runtime PDFDocument11 pagesRuntime PDFKanwal PreetNo ratings yet

- Optimization of Field Development Using Particle Swarm Optimization and New Well Pattern Descriptions Literature ReviewDocument7 pagesOptimization of Field Development Using Particle Swarm Optimization and New Well Pattern Descriptions Literature ReviewPrecious OluwadahunsiNo ratings yet

- Artificial Intelligence: School of Engineering and TechnologyDocument8 pagesArtificial Intelligence: School of Engineering and TechnologynikitaNo ratings yet

- Optimization by Simulated Annealing: Quantitative Studies: Journal of Statistical Physics, 34, Nos. 5/6, 1984Document12 pagesOptimization by Simulated Annealing: Quantitative Studies: Journal of Statistical Physics, 34, Nos. 5/6, 1984Maha Lakshmi PerumalNo ratings yet

- Gezgin Satıcı Problemlerinin Çözümü Için Rassal Anahtar Temelli Elektromanyetizma Sezgiselinin UygulanmasıDocument8 pagesGezgin Satıcı Problemlerinin Çözümü Için Rassal Anahtar Temelli Elektromanyetizma Sezgiselinin UygulanmasıclemenNo ratings yet

- The Cheapest Shop Seeker: A New Algorithm For Optimization Problem in A Continous SpaceDocument8 pagesThe Cheapest Shop Seeker: A New Algorithm For Optimization Problem in A Continous SpaceIAES IJAINo ratings yet

- Conda CheatsheetDocument20 pagesConda CheatsheetHIMANSHU SHARMANo ratings yet

- 1995 Nsga IDocument28 pages1995 Nsga IAkash ce16d040No ratings yet

- Carousel GreedyDocument16 pagesCarousel GreedygabrielNo ratings yet

- Adobe Scan 27 Sep 2022Document6 pagesAdobe Scan 27 Sep 2022039 MeghaEceNo ratings yet

- Simulated Annealing OverviewDocument10 pagesSimulated Annealing OverviewJoseJohnNo ratings yet

- Paper 15-Improving The Solution of Traveling Salesman Problem Using Genetic, Memetic Algorithm and Edge Assembly CrossoverDocument4 pagesPaper 15-Improving The Solution of Traveling Salesman Problem Using Genetic, Memetic Algorithm and Edge Assembly CrossoverEditor IJACSANo ratings yet

- Genetic and Other Global Optimization Algorithms - Comparison and Use in Calibration ProblemsDocument11 pagesGenetic and Other Global Optimization Algorithms - Comparison and Use in Calibration ProblemsOktafian PrabandaruNo ratings yet

- Unit ViDocument47 pagesUnit ViRushikesh JadhavNo ratings yet

- The Application Research of Genetic Algorithm: Jumei ZhangDocument4 pagesThe Application Research of Genetic Algorithm: Jumei ZhangSanderNo ratings yet

- Practical Optimization Using Evolutionary MethodsDocument20 pagesPractical Optimization Using Evolutionary MethodsGerhard HerresNo ratings yet

- Lost Valley SearchDocument6 pagesLost Valley Searchmanuel abarcaNo ratings yet

- A New Heuristic Optimization Algorithm - Harmony Search Zong Woo Geem, Joong Hoon Kim, Loganathan, G.V.Document10 pagesA New Heuristic Optimization Algorithm - Harmony Search Zong Woo Geem, Joong Hoon Kim, Loganathan, G.V.Anonymous BQrnjRaNo ratings yet

- 15.04.2020 Daa atDocument6 pages15.04.2020 Daa atRajat Mittal 1815906No ratings yet

- AssesmentDocument3 pagesAssesmenttonmoy HasanNo ratings yet

- Exploring Matrix Generation Strategies in Isogeometric AnalysisDocument20 pagesExploring Matrix Generation Strategies in Isogeometric AnalysisJorge Luis Garcia ZuñigaNo ratings yet

- Michail Zak and Colin P. Williams - Quantum Neural NetsDocument48 pagesMichail Zak and Colin P. Williams - Quantum Neural Netsdcsi3No ratings yet

- Exact Meta Optimization CombinationDocument13 pagesExact Meta Optimization CombinationcsmateNo ratings yet

- An Adaptive Successive Over-Relaxation Method For Computing The Black-Scholes Implied VolatilityDocument46 pagesAn Adaptive Successive Over-Relaxation Method For Computing The Black-Scholes Implied VolatilityminqiangNo ratings yet

- Maximum-Likelihood EstimationDocument2 pagesMaximum-Likelihood EstimationdmugalloyNo ratings yet

- My Project Well Location OptimizationDocument7 pagesMy Project Well Location OptimizationPrecious OluwadahunsiNo ratings yet

- Discrete W5Document6 pagesDiscrete W5ashutosh karkiNo ratings yet

- Greedy Randomized Adaptive Search Procedures (Grasp) : BstractDocument11 pagesGreedy Randomized Adaptive Search Procedures (Grasp) : BstractsakiyamaNo ratings yet

- Meta ProblemDocument9 pagesMeta ProblemIvan Eric OleaNo ratings yet

- Multiobjective Genetic Algorithms Applied To Solve Optimization ProblemsDocument4 pagesMultiobjective Genetic Algorithms Applied To Solve Optimization ProblemsSidney LinsNo ratings yet

- 3 PDFDocument28 pages3 PDFGuraliuc IoanaNo ratings yet

- ShimuraDocument18 pagesShimuraDamodharan ChandranNo ratings yet

- Cell Name Original Value Final ValueDocument7 pagesCell Name Original Value Final ValueKarthikeyan GopalNo ratings yet

- Subscription OfferingsDocument4 pagesSubscription OfferingsKarthikeyan GopalNo ratings yet

- H S No of Tons HP SP No of TonsDocument1 pageH S No of Tons HP SP No of TonsKarthikeyan GopalNo ratings yet

- Print Is Still DominantDocument4 pagesPrint Is Still DominantKarthikeyan GopalNo ratings yet

- #Disrupt: Product Attributes Functional Benefits Emotional BenefitsDocument4 pages#Disrupt: Product Attributes Functional Benefits Emotional BenefitsKarthikeyan GopalNo ratings yet

- Digital First and Print Next ApproachDocument2 pagesDigital First and Print Next ApproachKarthikeyan GopalNo ratings yet

- So Beautifully Explained Watch Index, Match Vlookup and Index Match VideosDocument1 pageSo Beautifully Explained Watch Index, Match Vlookup and Index Match VideosKarthikeyan GopalNo ratings yet

- Marketing of KTM125Document3 pagesMarketing of KTM125Karthikeyan GopalNo ratings yet

- Descriptive StatisticsDocument9 pagesDescriptive StatisticsKarthikeyan GopalNo ratings yet

- Sector AnalysisDocument1 pageSector AnalysisKarthikeyan GopalNo ratings yet

- Group - 1 - Project Report - Section ADocument10 pagesGroup - 1 - Project Report - Section AKarthikeyan GopalNo ratings yet

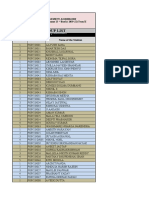

- Group List: Course: Optimisation With Spreadsheets Section ADocument2 pagesGroup List: Course: Optimisation With Spreadsheets Section AKarthikeyan GopalNo ratings yet

- Bajaj Challenge: OffroadDocument4 pagesBajaj Challenge: OffroadKarthikeyan GopalNo ratings yet

- SESS - Sec A - Group AllocationDocument2 pagesSESS - Sec A - Group AllocationKarthikeyan GopalNo ratings yet

- Social Media Management Tools IFTTT Is One Such ToolDocument5 pagesSocial Media Management Tools IFTTT Is One Such ToolKarthikeyan GopalNo ratings yet

- Union Imperatives From Unionized White Collar EmplDocument31 pagesUnion Imperatives From Unionized White Collar EmplKarthikeyan GopalNo ratings yet

- Personal SWOT Analysis Ask For FeedbackDocument4 pagesPersonal SWOT Analysis Ask For FeedbackKarthikeyan GopalNo ratings yet

- (In Thousands) : 2-35 Computing Cost of Goods Purchased and Cost of Goods SoldDocument2 pages(In Thousands) : 2-35 Computing Cost of Goods Purchased and Cost of Goods SoldKarthikeyan GopalNo ratings yet

- 3 Jays Submission Group No 9 Section A PDFDocument4 pages3 Jays Submission Group No 9 Section A PDFKarthikeyan GopalNo ratings yet

- 2018 Ibm and The EnvironmentDocument56 pages2018 Ibm and The EnvironmentKarthikeyan GopalNo ratings yet

- HP-Cisco FinalPPTDocument15 pagesHP-Cisco FinalPPTKarthikeyan Gopal100% (1)

- Session 19 - Structure - v2 PDFDocument36 pagesSession 19 - Structure - v2 PDFKarthikeyan GopalNo ratings yet

- List of Registered Contractors (Class-I, Ii & Iii) Working in National Highway ZoneDocument22 pagesList of Registered Contractors (Class-I, Ii & Iii) Working in National Highway ZoneKarthikeyan Gopal0% (1)

- Bamboo and Bamboo Products, Value-Added Bamboo Products-535182 PDFDocument43 pagesBamboo and Bamboo Products, Value-Added Bamboo Products-535182 PDFCA Aankit Kumar Jain100% (2)

- Manage Prescribed Load List (PLL) AR0008 C: InstructionsDocument5 pagesManage Prescribed Load List (PLL) AR0008 C: InstructionsAmazinmets07No ratings yet

- In Case of Doubts in Answers, Whatsapp 9730768982: Cost & Management Accounting - December 2019Document16 pagesIn Case of Doubts in Answers, Whatsapp 9730768982: Cost & Management Accounting - December 2019Ashutosh shahNo ratings yet

- 364.7T-02 (11) Evaluation and Minimization of Bruising (Microcracking) in Concrete Repair (TechNote)Document3 pages364.7T-02 (11) Evaluation and Minimization of Bruising (Microcracking) in Concrete Repair (TechNote)YaserNo ratings yet

- Wallbox Supernova BrochureDocument8 pagesWallbox Supernova BrochuresaraNo ratings yet

- A Multilingual Spam Review DetectionDocument5 pagesA Multilingual Spam Review DetectionInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Book Review On Brand Royalty How The World's Top 100 Brands Thrive and SurviveDocument5 pagesBook Review On Brand Royalty How The World's Top 100 Brands Thrive and SurviveAmeer M BalochNo ratings yet

- 35kV AL 133% TRXLPE One-Third Neutral LLDPE Primary UD: SPEC 81252Document5 pages35kV AL 133% TRXLPE One-Third Neutral LLDPE Primary UD: SPEC 81252Anonymous hxyW3v5QqVNo ratings yet

- X-Gipam Modbusrtu Map English (2014.02.26)Document993 pagesX-Gipam Modbusrtu Map English (2014.02.26)AfghanNo ratings yet

- Blackrock Us Factor Outlook July 2017 en UsDocument6 pagesBlackrock Us Factor Outlook July 2017 en UsAugusto Peña ChavezNo ratings yet

- TvtgurpsnewDocument94 pagesTvtgurpsnewKevin HutchingsNo ratings yet

- This Article Needs Additional Citations For VerificationDocument3 pagesThis Article Needs Additional Citations For Verificationsamina taneNo ratings yet

- Some Words Against The Staff of Face BookDocument50 pagesSome Words Against The Staff of Face BookGiorgi Leon KavtarażeNo ratings yet

- Drama Script SangkuriangDocument8 pagesDrama Script Sangkuriangbondan iskandarNo ratings yet

- Or AssignmentDocument7 pagesOr AssignmentAditya Sharma0% (1)

- Format 8 Synchronizing ReportDocument4 pagesFormat 8 Synchronizing ReportSameerNo ratings yet

- CPAR ReportDocument35 pagesCPAR ReportPatricia Mae VargasNo ratings yet

- Drug Monitoring PDFDocument1 pageDrug Monitoring PDFImran ChaudhryNo ratings yet

- Tahajjud and Dhikr: The Two Missing ComponentsDocument1 pageTahajjud and Dhikr: The Two Missing ComponentstakwaniaNo ratings yet

- FHMM1014 Chapter 1 Number and SetDocument116 pagesFHMM1014 Chapter 1 Number and SetLai Jie FengNo ratings yet

- Monday Tuesday Wednesday Thursday Friday: GRADES 1 To 12 Daily Lesson LogDocument7 pagesMonday Tuesday Wednesday Thursday Friday: GRADES 1 To 12 Daily Lesson LogKatrina Baldas Kew-isNo ratings yet

- System Security-Virus and WormsDocument26 pagesSystem Security-Virus and WormsSurangma ParasharNo ratings yet

- InheritanceDocument59 pagesInheritanceTiju ThomasNo ratings yet

- Chest Pain.Document53 pagesChest Pain.Shimmering MoonNo ratings yet

- Evs - Final Unit 3 - Environment Management & Sustainable DevelopmentDocument105 pagesEvs - Final Unit 3 - Environment Management & Sustainable DevelopmentchhaviNo ratings yet

- Grades of NdFeB MagnetsDocument127 pagesGrades of NdFeB MagnetsjgreenguitarsNo ratings yet

- What If Everyone Were: The Same?Document9 pagesWhat If Everyone Were: The Same?Eddie FongNo ratings yet