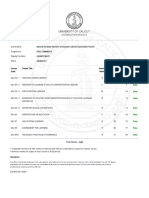

Professional Documents

Culture Documents

A Natural Bias For Simplicity: Thesis

1) Scientists have found that maps arising across many fields of science appear to share a common bias toward simplicity in their outputs, even though one might expect nature's maps to reflect a random distribution of complexities.

2) This finding emerges from applying ideas from algorithmic information theory to mathematical mappings. The theory suggests outputs become exponentially less likely as their Kolmogorov complexity increases, resulting in a bias toward simpler outputs.

3) Studies of maps from RNA sequences to structures, ordinary differential equations, and neural networks support this idea, finding the majority of outputs tend to be relatively simple despite many possible complex alternatives.

thesis

A natural bias for simplicity

A ‘map’ in ordinary usage is a representation of one thing by another, usually simpler thing. Mathematicians define a map more formally as a function, f, taking the elements of one set into another. Using today’s computing terminology, one might also think of inputs and outputs: a map acts on an input to produce an output. Maps of this kind run through all of science, from plasma physics to linguistics.

Scientists are experts in the maps of their own special areas. Earth scientists understand basic models of plate tectonics, just as biochemists have great familiarity with models of cell signalling. Yet one might wonder if all these different maps may share certain general properties, beyond simple properties such as continuities. Recent work suggests that the answer is yes: maps that arise across many fields of science appear to share a common and rather surprising bias toward simplicity in their outputs. That is, while we might naively expect nature’s maps to reflect a random sample of all possible maps, they actually tend strongly toward those with many simple outputs. In this sense, nature really seems to be simpler than it could be.

This idea emerges from an application of the ideas of algorithmic information theory to mathematical mappings (K. Dingle, C. Q. Camargo & A. A. Louis, Nat. Commun. 9, 761; 2018). Algorithmic information theory aims to quantify the inherent complexity of a string of digits. In the case of binary digits, for example, a repeating string (010101...) is inherently simple, whereas a highly erratic string is (in general, though not always) more complex; it contains more information and is more difficult to describe. A key idea in the field is the Kolmogorov complexity K(x) of a binary string x, defined (leaving aside some technicalities) as the length of the shortest programme able to generate x when run on a universal Turing machine.

This definition centres on a mapping. It goes from an output string x to another binary string giving the length of the shortest programme generating x. One interesting question is the following: what is the probability P(x) that a randomly chosen input will generate x? Mathematicians have developed estimates for this quantity, finding that it goes crudely in proportion to $2^{-K(x)}$. Hence, output strings become exponentially less likely with increasing Kolmogorov complexity. This is a general result for one particular mapping of importance in algorithmic information theory.

The question Dingle and colleagues address is whether anything similar can be established for maps in general. Any map can be considered to carry out a computation, and so can be considered in terms of the computer code that would be required to implement it. Following this approach, the authors derive an analogue of the classic result discussed above, but applying to any of a broad class of discrete maps. The result asserts that the probability P(x) that a randomly chosen input will generate an output x is bounded by $2^{-K(x)}$. Again, there’s a natural weighting toward simpler outputs.

It may seem almost miraculous that one can derive expectations about maps in a general setting just by considering computational aspects of how maps process information. But it does seem to work, as Dingle and colleagues demonstrate in a number of real world examples. An example from biophysics is the map connecting RNA nucleotide sequences or genotypes to so-called RNA secondary structures, the structures these linear molecules naturally assume due to interactions between different bases. Dingle and colleagues examined the case n = 55 (RNAs of length 55) and first used a popular software package to calculate the minimum free energy structure for a large number of random sequences. They then turned to a commonly employed shorthand for describing the secondary structures to turn each structure into a binary sequence. Finally, by estimating the complexity of each resulting output, they found that the results clearly showed a bias toward simpler sequences.

A more general example is ordinary differential equations, which yield discrete models when coarse-grained by discretizing input parameters and outputs, as happens in applications throughout science. Here the authors tested a representative model drawn from biochemistry and used in modelling the biochemical pathways controlling circadian rhythms. They first generated a discrete mapping by discretizing the system of ordinary differential equations, which has 15 tunable parameters, and solving the resulting numerical model to get outputs as discrete time trajectories. Again, they found that the outputs were strongly biased toward simplicity, and the likelihood of outputs of different complexity could be accurately estimated using the output complexity only.

This work may take us part way to understanding why so many aspects of nature can be understood on the basis of relatively simple models, and indeed why the world seems understandable at all. It’s a fundamental mathematical bias at work. Even so, there are many open questions. The authors note that a general map could conceivably have any distribution of complexities of its outputs. A map might be designed, for example, to have almost all complex outputs, and very few simple outputs. In this case, even if simpler outputs have a higher relative probability than more complex ones, the imbalance toward complexity in the sheer number of outputs will win out, leading mostly to complex outputs. But in the applications they’ve examined so far, this doesn’t seem to happen. Most maps do seem to favour simpler output, although exactly why remains mysterious.

The authors also believe the work may help to explain some observed features of so-called deep learning neural networks, particularly why they seem to generalize so well and fit data well outside of the examples on which they’ve been trained, and despite the overparametrization of the models (the number of parameters they contain is also much larger than the training data). In a preprint (https://arxiv.org/abs/1805.08522), Camargo and Louis, working with Guillermo Valle-Pérez, suggest this might be another example of the bias toward simpler outputs. Deep learning models may generalize well because they’re biased in the same way that data from real world problems are: toward simple outputs.

The work of Dingle, Camargo and Louis reminds me of the related observation by Mark Transtrum and colleagues (preprint at https://arxiv.org/abs/1501.07668) concerning the surprising effectiveness of 'sloppy models' in so many areas of science. Why is the world understandable? We may never know for sure. But the mystery may be starting to shed its secrets.

Mark Buchanan

Published online: 4 December 2018

https://doi.org/10.1038/s41567-018-0370-y
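The contrast drawn in the essay between a repeating binary string and a highly erratic one can be made concrete with a small sketch. Kolmogorov complexity K(x) cannot be computed exactly, so the code uses the length of the zlib-compressed string as a crude stand-in. That choice, the function name `complexity_proxy`, the string length and the random seed are all assumptions made for illustration; this is not the complexity estimator used by Dingle and colleagues.

```python
import random
import zlib


def complexity_proxy(bits: str) -> int:
    """Crude stand-in for K(x): size in bytes of the zlib-compressed string.

    True Kolmogorov complexity is uncomputable. Compressed length is only a
    rough, compressor-dependent estimate and includes fixed format overhead.
    """
    return len(zlib.compress(bits.encode("ascii"), 9))


rng = random.Random(0)  # fixed seed so repeated runs give the same string
n = 1000                # illustrative length, not taken from the essay

repeating = "01" * (n // 2)                             # the 0101... pattern
erratic = "".join(rng.choice("01") for _ in range(n))   # a typical erratic string

k_repeating = complexity_proxy(repeating)
k_erratic = complexity_proxy(erratic)

print("repeating string:", k_repeating, "bytes after compression")
print("erratic string:  ", k_erratic, "bytes after compression")
```

The repeating string should compress to far fewer bytes than the erratic one, which is the sense in which it "contains less information". Only the ordering of the two numbers is meaningful here; the absolute values depend on the compressor and should not be plugged directly into $2^{-K(x)}$.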

1154 Nature Physics | VOL 14 | DECEMBER 2018 | 1154 | www.nature.com/naturephysics
