Python For Data Science Cheat Sheet Python Basics

Uploaded by MohamedYounes

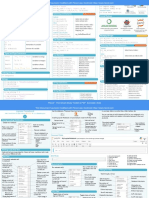

Learn More Python for Data Science Interactively at www.datacamp.com

Variables and Data Types

Variable Assignment
>>> x=5
>>> x
5

Calculations With Variables
>>> x+2            Sum of two variables
7
>>> x-2            Subtraction of two variables
3
>>> x*2            Multiplication of two variables
10
>>> x**2           Exponentiation of a variable
25
>>> x%2            Remainder of a variable
1
>>> x/float(2)     Division of a variable
2.5

Types and Type Conversion
str()     '5', '3.45', 'True'     Variables to strings
int()     5, 3, 1                 Variables to integers
float()   5.0, 1.0                Variables to floats
bool()    True, True, True        Variables to booleans

Asking For Help
>>> help(str)

Strings
>>> my_string = 'thisStringIsAwesome'
>>> my_string
'thisStringIsAwesome'

String Operations
>>> my_string * 2
'thisStringIsAwesomethisStringIsAwesome'
>>> my_string + 'Innit'
'thisStringIsAwesomeInnit'
>>> 'm' in my_string
True

String Operations (index starts at 0)
>>> my_string[3]
>>> my_string[4:9]

String Methods
>>> my_string.upper()             String to uppercase
>>> my_string.lower()             String to lowercase
>>> my_string.count('w')          Count String elements
>>> my_string.replace('e', 'i')   Replace String elements
>>> my_string.strip()             Strip whitespaces

Lists (also see NumPy Arrays)
>>> a = 'is'
>>> b = 'nice'
>>> my_list = ['my', 'list', a, b]
>>> my_list2 = [[4,5,6,7], [3,4,5,6]]

Selecting List Elements (index starts at 0)
Subset
>>> my_list[1]         Select item at index 1
>>> my_list[-3]        Select 3rd last item
Slice
>>> my_list[1:3]       Select items at index 1 and 2
>>> my_list[1:]        Select items after index 0
>>> my_list[:3]        Select items before index 3
>>> my_list[:]         Copy my_list
Subset Lists of Lists
>>> my_list2[1][0]     my_list[list][itemOfList]
3
>>> my_list2[1][:2]

List Operations
>>> my_list + my_list
['my', 'list', 'is', 'nice', 'my', 'list', 'is', 'nice']
>>> my_list * 2
['my', 'list', 'is', 'nice', 'my', 'list', 'is', 'nice']
>>> my_list2 > 4       Python 2 only (returns True); raises TypeError in Python 3

List Methods
>>> my_list.index(a)        Get the index of an item
>>> my_list.count(a)        Count an item
>>> my_list.append('!')     Append an item at a time
>>> my_list.remove('!')     Remove an item
>>> del(my_list[0:1])       Remove an item
>>> my_list.reverse()       Reverse the list
>>> my_list.extend('!')     Append an item
>>> my_list.pop(-1)         Remove an item
>>> my_list.insert(0,'!')   Insert an item
>>> my_list.sort()          Sort the list

Libraries
Import libraries
>>> import numpy
>>> import numpy as np
Selective import
>>> from math import pi
Library categories shown: Data analysis, Machine learning, Scientific computing, 2D plotting

Install Python
Leading open data science platform powered by Python
Free IDE that is included with Anaconda
Create and share documents with live code, visualizations, text, ...

Numpy Arrays (also see Lists)
>>> my_list = [1, 2, 3, 4]
>>> my_array = np.array(my_list)
>>> my_2darray = np.array([[1,2,3],[4,5,6]])

Selecting Numpy Array Elements (index starts at 0)
Subset
>>> my_array[1]          Select item at index 1
2
Slice
>>> my_array[0:2]        Select items at index 0 and 1
array([1, 2])
Subset 2D Numpy arrays
>>> my_2darray[:,0]      my_2darray[rows, columns]
array([1, 4])

Numpy Array Operations
>>> my_array > 3
array([False, False, False,  True])
>>> my_array * 2
array([2, 4, 6, 8])
>>> my_array + np.array([5, 6, 7, 8])
array([ 6,  8, 10, 12])

Numpy Array Functions
>>> my_array.shape                      Get the dimensions of the array
>>> np.append(my_array, other_array)    Append items to an array
>>> np.insert(my_array, 1, 5)           Insert items in an array
>>> np.delete(my_array,[1])             Delete items in an array
>>> np.mean(my_array)                   Mean of the array
>>> np.median(my_array)                 Median of the array
>>> np.corrcoef(my_array)               Correlation coefficient
>>> np.std(my_array)                    Standard deviation
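The NumPy array functions listed in this sheet can be tried with a short script. This is a minimal sketch, assuming NumPy is installed; `other_array` is a hypothetical second array that the sheet never defines. It illustrates that `np.append`, `np.insert`, and `np.delete` return new arrays and leave `my_array` unchanged, and that `np.corrcoef` is called as a module-level function.

```python
import numpy as np

my_array = np.array([1, 2, 3, 4])
other_array = np.array([5, 6])  # hypothetical second array, not defined in the sheet

# Each call returns a new array; my_array itself is not modified.
appended = np.append(my_array, other_array)
inserted = np.insert(my_array, 1, 5)   # insert the value 5 before index 1
deleted = np.delete(my_array, [1])     # drop the item at index 1

# Correlation matrix between my_array and a scaled copy of it.
corr = np.corrcoef(my_array, my_array * 2)

print(appended)
print(inserted)
print(deleted)
print(my_array)
print(corr.shape)
```

Because these functions copy, repeated appends inside a loop can be slow; collecting values in a list and converting once is a common alternative.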

Python 3 Cheat Sheet
©2012-2015 - Laurent Pointal, Mémento v2.0.6
License: Creative Commons Attribution 4
Latest version on: https://perso.limsi.fr/pointal/python:memento

Base Types: integer, float, boolean, string, bytes
int      783   0 (zero)   -192   0b010 (binary)   0o642 (octal)   0xF3 (hexa)
float    9.23   0.0   -1.7e-6 (×10^-6)
bool     True   False
str      "One\nTwo" (escaped new line)   'I\'m' (escaped ')
         Multiline string:
         """X\tY\tZ
         1\t2\t3""" (escaped tab)
bytes    b"toto\xfe\775" (hexadecimal, octal)
☝ immutables

Container Types
◾ ordered sequences, fast index access, repeatable values
list     [1,5,9]   ["x",11,8.9]   ["mot"]    [] (empty)
tuple    (1,5,9)   11,"y",7.4     ("mot",)   () (empty)
         Non modifiable values (immutables)   ☝ expression with only commas → tuple
str, bytes (ordered sequences of chars / bytes)   "" (empty)   b"" (empty)
◾ key containers, no a priori order, fast key access, each key is unique
dictionary   dict   {"key":"value"}   dict(a=3,b=4,k="v")   {} (empty)
             (key/value associations)   {1:"one",3:"three",2:"two",3.14:"π"}
collection   set    {"key1","key2"}   {1,9,3,0}   set() (empty)
☝ keys=hashable values (base types, immutables…)   frozenset: immutable set

Identifiers: for variables, functions, modules, classes… names
a…zA…Z_ followed by a…zA…Z_0…9
◽ diacritics allowed but should be avoided
◽ language keywords forbidden
◽ lower/UPPER case discrimination
☺ a toto x7 y_max BigOne
☹ 8y and for

Variables assignment: =
☝ assignment ⇔ binding of a name with a value
1) evaluation of right side expression value
2) assignment in order with left side names
x=1.2+8+sin(y)
a=b=c=0              assignment to same value
y,z,r=9.2,-7.6,0     multiple assignments
a,b=b,a              values swap
a,*b=seq             unpacking of sequence in item and list
*a,b=seq
x+=3                 increment ⇔ x=x+3
x-=2                 decrement ⇔ x=x-2
                     also *= /= %= …
x=None               « undefined » constant value
del x                remove name x

Conversions: type(expression)
int("15") → 15
int("3f",16) → 63              can specify integer number base in 2nd parameter
int(15.56) → 15                truncate decimal part
float("-11.24e8") → -1124000000.0
round(15.56,1) → 15.6          rounding to 1 decimal (0 decimal → integer number)
bool(x)                        False for null x, empty container x, None or False x; True for other x
str(x) → "…"                   representation string of x for display (cf. formatting on the back)
chr(64) → '@'   ord('@') → 64  code ↔ char
repr(x) → "…"                  literal representation string of x
bytes([72,9,64]) → b'H\t@'
list("abc") → ['a','b','c']
dict([(3,"three"),(1,"one")]) → {1:'one',3:'three'}
set(["one","two"]) → {'one','two'}
separator str and sequence of str → assembled str
    ':'.join(['toto','12','pswd']) → 'toto:12:pswd'
str split on whitespaces → list of str
    "words with spaces".split() → ['words','with','spaces']
str split on separator str → list of str
    "1,4,8,2".split(",") → ['1','4','8','2']
sequence of one type → list of another type (via list comprehension)
    [int(x) for x in ('1','29','-3')] → [1,29,-3]
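The conversion rules in this box can be checked directly in an interpreter. The following is a small sketch using only built-in functions; the variable names are illustrative and not part of the sheet.

```python
# int() parses text, optionally in another base, and truncates floats toward zero.
as_int = int("15")
from_hex = int("3f", 16)
truncated = int(15.56)
neg_truncated = int(-15.56)   # truncation, not flooring

# round() keeps the requested number of decimals.
rounded = round(15.56, 1)

# join() assembles strings, split() takes them apart again.
joined = ":".join(["toto", "12", "pswd"])
parts = joined.split(":")

# A list comprehension converts every item of a sequence.
numbers = [int(x) for x in "1,4,8,2".split(",")]

print(as_int, from_hex, truncated, neg_truncated, rounded)
print(joined, parts, numbers)
```

Note that `int()` on a negative float moves toward zero, which differs from `math.floor`.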

Sequence Containers Indexing: for lists, tuples, strings, bytes…

negative index      -5    -4    -3    -2    -1
positive index       0     1     2     3     4
            lst = [ 10,   20,   30,   40,   50 ]
positive slice    0     1     2     3     4     5
negative slice   -5    -4    -3    -2    -1

Items count: len(lst) → 5
☝ index from 0 (here from 0 to 4)

Individual access to items via lst[index]
lst[0] → 10   ⇒ first one     lst[1] → 20
lst[-1] → 50  ⇒ last one      lst[-2] → 40
On mutable sequences (list), remove with del lst[3] and modify with assignment lst[4]=25

Access to sub-sequences via lst[start slice:end slice:step]
lst[:-1] → [10,20,30,40]      lst[::-1] → [50,40,30,20,10]
lst[1:3] → [20,30]            lst[:3] → [10,20,30]
lst[1:-1] → [20,30,40]        lst[::-2] → [50,30,10]
lst[-3:-1] → [30,40]          lst[3:] → [40,50]
lst[::2] → [10,30,50]         lst[:] → [10,20,30,40,50]   shallow copy of sequence
Missing slice indication → from start / up to end.
On mutable sequences (list), remove with del lst[3:5] and modify with assignment lst[1:4]=[15,25]
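The slicing rules can be verified with the same `lst` used in this box. This is a minimal sketch; `trimmed` and `patched` are illustrative names, and each mutation is applied to a fresh copy so the examples stay independent of one another.

```python
lst = [10, 20, 30, 40, 50]

# Reading slices never modifies the original list.
middle = lst[1:-1]
reversed_copy = lst[::-1]
every_other_backwards = lst[::-2]
shallow_copy = lst[:]

# Slice deletion on a copy: removes the items at index 3 and 4.
trimmed = lst[:]
del trimmed[3:5]

# Slice assignment on a copy: three items (index 1 to 3) are replaced by two.
patched = lst[:]
patched[1:4] = [15, 25]

print(middle, reversed_copy, every_other_backwards)
print(trimmed, patched, lst)
```

Slice assignment may change the length of the list, as the last example shows.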

Boolean Logic
Comparisons: < > <= >= == !=   (≤ ≥ = ≠, boolean results)
a and b      logical and: both simultaneously
a or b       logical or: one or other or both
☝ pitfall: and and or return value of a or of b (under shortcut evaluation).
  ⇒ ensure that a and b are booleans.
not a        logical not
True, False  True and False constants

Statements Blocks
parent statement:
    statement block 1…
    ⁝
    parent statement:
        statement block2…
        ⁝
next statement after block 1
☝ indentation!
☝ configure editor to insert 4 spaces in place of an indentation tab.

Modules/Names Imports
module truc ⇔ file truc.py
from monmod import nom1,nom2 as fct    → direct access to names, renaming with as
import monmod                          → access via monmod.nom1 …
☝ modules and packages searched in python path (cf sys.path)

Conditional Statement
statement block executed only if a condition is true
if logical condition:
    statements block
Can go with several elif, elif... and only one final else. Only the block of first true condition is executed.
if age<=18:
    state="Kid"
elif age>65:
    state="Retired"
else:
    state="Active"
☝ with a var x:
if bool(x)==True:  ⇔ if x:
if bool(x)==False: ⇔ if not x:

Maths
☝ floating numbers… approximated values
Operators: + - * / // % **
    * is ×, / is ÷, // is integer ÷, % is ÷ remainder, ** is a^b
    Priority (…)
    @ → matrix × (python3.5+numpy)
(1+5.3)*2 → 12.6
abs(-3.2) → 3.2
round(3.57,1) → 3.6
pow(4,3) → 64
☝ usual order of operations
angles in radians
from math import sin,pi…
sin(pi/4) → 0.707…
cos(2*pi/3) → -0.4999…
sqrt(81) → 9.0   (√)
log(e**2) → 2.0
ceil(12.5) → 13
floor(12.5) → 12
modules math, statistics, random, decimal, fractions, numpy, etc. (cf. doc)

Exceptions on Errors
Signaling an error:
    raise ExcClass(…)
Errors processing:
try:
    normal processing block
except Exception as e:
    error processing block
☝ finally block for final processing in all cases.
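The try / except / finally pattern and the raise statement can be sketched as follows. The helpers `safe_divide` and `check_age` are hypothetical names introduced only for illustration; the point is that the finally block runs whether or not an error occurred.

```python
def safe_divide(a, b):
    """Hypothetical helper: divide a by b, recording what happened in a log."""
    log = []
    try:
        result = a / b                      # normal processing block
    except ZeroDivisionError as e:
        log.append("error: " + str(e))      # error processing block
        result = None
    finally:
        log.append("done")                  # runs in all cases
    return result, log


def check_age(age):
    """Hypothetical helper: signal an error with raise."""
    if age < 0:
        raise ValueError("age must be non-negative")
    return age


ok_result, ok_log = safe_divide(6, 3)
bad_result, bad_log = safe_divide(1, 0)
print(ok_result, ok_log)
print(bad_result, bad_log)

try:
    check_age(-1)
except ValueError as e:
    raised_message = str(e)
print(raised_message)
```

Catching a specific exception class such as `ZeroDivisionError` is usually preferable to catching `Exception`, since unrelated bugs then still surface.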

Conditional Loop Statement
statements block executed as long as condition is true
while logical condition:
    statements block
☝ beware of infinite loops!

s = 0                  initializations before the loop
i = 1                  condition with at least one variable value (here i)
while i <= 100:
    s = s + i**2
    i = i + 1          ☝ make condition variable change!
print("sum:",s)
Algo: s = sum of i² for i=1 to i=100

Loop Control
break        immediate exit
continue     next iteration
☝ else block for normal loop exit.

Iterative Loop Statement
statements block executed for each item of a container or iterator
for var in sequence:
    statements block

Go over sequence's values
s = "Some text"        initializations before the loop
cnt = 0
for c in s:            loop variable, assignment managed by for statement
    if c == "e":
        cnt = cnt + 1
print("found",cnt,"'e'")
Algo: count number of e in the string.
☝ good habit: don't modify loop variable
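Both loop examples can be run as written and cross-checked. In this sketch the sum of squares is compared against the closed form n(n+1)(2n+1)/6, and the character count against `str.count`; the names `text`, `closed_form`, and `n` are illustrative additions, not part of the sheet.

```python
# while loop: sum of i squared for i from 1 to 100
s = 0
i = 1
while i <= 100:
    s = s + i**2
    i = i + 1          # the condition variable must change, or the loop never ends

# Cross-check with the closed form n(n+1)(2n+1)/6.
n = 100
closed_form = n * (n + 1) * (2 * n + 1) // 6

# for loop: count the letter "e" in a string
text = "Some text"
cnt = 0
for c in text:
    if c == "e":
        cnt = cnt + 1

print("sum:", s, "closed form:", closed_form)
print("found", cnt, "'e'")
```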

Display
print("v=",3,"cm :",x,",",y+4)
items to display: literal values, variables, expressions
print options:
◽ sep=" "            items separator, default space
◽ end="\n"           end of print, default new line
◽ file=sys.stdout    print to file, default standard output

Input
s = input("Instructions:")
☝ input always returns a string, convert it to required type (cf. boxed Conversions on the other side).

loop on dict/set ⇔ loop on keys sequences
use slices to loop on a subset of a sequence

Go over sequence's index
◽ modify item at index
◽ access items around index (before / after)
lst = [11,18,9,12,23,4,17]
lost = []
for idx in range(len(lst)):
    val = lst[idx]
    if val > 15:
        lost.append(val)
        lst[idx] = 15
print("modif:",lst,"-lost:",lost)
Algo: limit values greater than 15, memorizing of lost values.

Go simultaneously over sequence's index and values:
for idx,val in enumerate(lst):

Generic Operations on Containers
len(c) → items count
min(c)   max(c)   sum(c)
sorted(c) → list sorted copy
val in c → boolean, membership operator in (absence not in)
enumerate(c) → iterator on (index, value)
zip(c1,c2…) → iterator on tuples containing ci items at same index
all(c) → True if all c items evaluated to true, else False
any(c) → True if at least one item of c evaluated true, else False
Note: For dictionaries and sets, these operations use keys.

Integer Sequences
range([start,] end [,step])
☝ start default 0, end not included in sequence, step signed, default 1
range(5) → 0 1 2 3 4            range(2,12,3) → 2 5 8 11
range(3,8) → 3 4 5 6 7          range(20,5,-5) → 20 15 10
range(len(seq)) → sequence of index of values in seq
☝ range provides an immutable sequence of int constructed as needed

Specific to ordered sequences containers (lists, tuples, strings, bytes…)
reversed(c) → inversed iterator   c*5 → duplicate   c+c2 → concatenate
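A short sketch of the generic container operations and of range follows. The lists `names` and `values` are made-up sample data; `enumerate`, `zip`, and `range` are lazy, so `list()` is used here to materialize their items for display.

```python
names = ["a", "b", "c"]      # sample data, not from the sheet
values = [10, 20, 30]

# range: end is excluded, step may be negative
stepped = list(range(2, 12, 3))
descending = list(range(20, 5, -5))

# enumerate yields (index, value); zip pairs items at the same index
indexed = list(enumerate(names))
paired = list(zip(names, values))

# all / any test the truth value of every item
all_positive = all(v > 0 for v in values)
any_large = any(v > 25 for v in values)

# reversed returns an iterator, not a list
backwards = list(reversed(names))

print(stepped, descending)
print(indexed, paired)
print(all_positive, any_large, backwards)
```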

c.index(val) → position   c.count(val) → events count
import copy
copy.copy(c) → shallow copy of container
copy.deepcopy(c) → deep copy of container

Operations on Lists   ☝ modify original list
lst.append(val)           add item at end
lst.extend(seq)           add sequence of items at end
lst.insert(idx,val)       insert item at index
lst.remove(val)           remove first item with value val
lst.pop([idx]) → value    remove & return item at index idx (default last)
lst.sort()  lst.reverse() sort / reverse list in place

Function Definition
def fct(x,y,z):           fct: function name (identifier); x,y,z: named parameters
    """documentation"""
    # statements block, res computation, etc.
    return res            result value of the call, if no computed result to return: return None
☝ parameters and all variables of this block exist only in the block and during the function call (think of a “black box”)
Advanced: def fct(x,y,z,*args,a=3,b=5,**kwargs):
    *args variable positional arguments (→tuple), default values, **kwargs variable named arguments (→dict)
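The advanced signature can be explored with a function that simply reports how its arguments were bound. This is a sketch: the body of `fct` is invented for illustration, while the signature is the one given in the sheet.

```python
def fct(x, y, z, *args, a=3, b=5, **kwargs):
    """Return a dict describing how the call's arguments were bound."""
    return {
        "positional": (x, y, z),
        "args": args,        # extra positional arguments, collected in a tuple
        "a": a,              # keyword-only parameters with default values
        "b": b,
        "kwargs": kwargs,    # extra named arguments, collected in a dict
    }


r = fct(1, 2, 3, 4, 5, b=9, extra="v")
print(r)

# Advanced call: unpack a sequence and a dict into the arguments.
r2 = fct(*[1, 2, 3], **{"a": 0})
print(r2)
```

Since `a` and `b` come after `*args`, they can only be passed by name.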

Operations on Dictionaries
d[key]=value          d.clear()
d[key] → value        del d[key]
d.update(d2)          update/add associations
d.keys()
d.values()            → iterable views on keys/values/associations
d.items()
d.pop(key[,default]) → value
d.popitem() → (key,value)
d.get(key[,default]) → value
d.setdefault(key[,default]) → value

Operations on Sets
Operators:
| → union (vertical bar char)
& → intersection
- ^ → difference/symmetric diff.
< <= > >= → inclusion relations
Operators also exist as methods.
s.update(s2)     s.copy()
s.add(key)       s.remove(key)
s.discard(key)   s.clear()
s.pop()

Function Call
r = fct(3,i+2,2*i)
    r: storage/use of returned value; one argument per parameter
☝ this is the use of function name with parentheses which does the call
Advanced: *sequence   **dict

Operations on Strings
s.startswith(prefix[,start[,end]])   s.endswith(suffix[,start[,end]])   s.strip([chars])
s.count(sub[,start[,end]])           s.partition(sep) → (before,sep,after)
s.index(sub[,start[,end]])           s.find(sub[,start[,end]])
s.is…()   tests on chars categories (ex. s.isalpha())
s.upper()   s.lower()   s.title()   s.swapcase()
s.casefold()   s.capitalize()   s.center([width,fill])
s.ljust([width,fill])   s.rjust([width,fill])   s.zfill([width])
s.encode(encoding)   s.split([sep])   s.join(seq)

Files: storing data on disk, and reading it back
f = open("file.txt","w",encoding="utf8")
    f: file variable for operations
    "file.txt": name of file on disk (+path…)   cf. modules os, os.path and pathlib
    "w": opening mode   ◽ 'r' read   ◽ 'w' write   ◽ 'a' append   ◽ …'+' 'x' 'b' 't'
    encoding: encoding of chars for text files: utf8 ascii latin1 …
writing
f.write("coucou")
f.writelines(list of lines)
reading   ☝ read empty string if end of file
f.read([n]) → next chars; if n not specified, read up to end!
f.readlines([n]) → list of next lines
f.readline() → next line
☝ text mode t by default (read/write str), possible binary mode b (read/write bytes). Convert from/to required type!
f.close()   ☝ don't forget to close the file after use!
f.flush() write cache   f.truncate([size]) resize
reading/writing progress sequentially in the file, modifiable with:
f.tell() → position   f.seek(position[,origin])
Very common: opening with a guarded block (automatic closing) and reading loop on lines of a text file:
with open(…) as f:
    for line in f:
        # processing of line

Formatting
"modele{} {} {}".format(x,y,r) → str     formatting directives, then values to format
"{selection!conversion:formatting}"
◽ Selection:
    2
    nom
    0.nom
    4[key]
    0[2]
◽ Formatting:
    fill char   alignment   sign   mini width.precision~maxwidth   type
    alignment: < > ^ =     sign: + - space     0 at start for filling with 0
    integer: b binary, c char, d decimal (default), o octal, x or X hexa…
    float: e or E exponential, f or F fixed point, g or G appropriate (default), % percent
    string: s …
◽ Conversion: s (readable text) or r (literal representation)
Examples
"{:+2.3f}".format(45.72793) → '+45.728'
"{1:>10s}".format(8,"toto") → '      toto'
"{x!r}".format(x="I'm") → '"I\'m"'
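The guarded-block pattern for files can be sketched as a write followed by a read. This example assumes a writable temporary directory and uses `tempfile` only so that it does not touch real files; the file name mirrors the one in the sheet.

```python
import os
import tempfile

# Build a path inside a fresh temporary directory.
path = os.path.join(tempfile.mkdtemp(), "file.txt")

# Writing: the with block closes the file automatically, even on error.
with open(path, "w", encoding="utf8") as f:
    f.write("coucou\n")
    f.writelines(["line 2\n", "line 3\n"])   # writelines does not add newlines itself

# Reading: iterate over the file object line by line.
lines = []
with open(path, "r", encoding="utf8") as f:
    for line in f:
        lines.append(line.rstrip("\n"))      # each line keeps its trailing newline

print(lines)
print(f.closed)
```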

LEARN DATA SCIENCE ONLINE

Start Learning For Free - www.dataquest.io

Data Science Cheat Sheet
Python - Intermediate

KEY BASICS, PRINTING AND GETTING HELP
This cheat sheet assumes you are familiar with the content of our Python Basics Cheat Sheet
s - A Python string variable
i - A Python integer variable
f - A Python float variable
l - A Python list variable
d - A Python dictionary variable

LISTS
l.pop(3) - Returns the fourth item from l and deletes it from the list
l.remove(x) - Removes the first item in l that is equal to x
l.reverse() - Reverses the order of the items in l
l[1::2] - Returns every second item from l, commencing from the item at index 1 (the second item)
l[-5:] - Returns the last 5 items from l

STRINGS
s.lower() - Returns a lowercase version of s
s.title() - Returns s with the first letter of every word capitalized
"23".zfill(4) - Returns "0023" by left-filling the string with 0's to make its length 4.
s.splitlines() - Returns a list by splitting the string on any newline characters.
Python strings share some common methods with lists
s[:5] - Returns the first 5 characters of s
"fri" + "end" - Returns "friend"
"end" in s - Returns True if the substring "end" is found in s

RANGE
Range objects are useful for creating sequences of integers for looping.
range(5) - Returns a sequence from 0 to 4
range(2000,2018) - Returns a sequence from 2000 to 2017
range(0,11,2) - Returns a sequence from 0 to 10, with each item incrementing by 2
range(0,-10,-1) - Returns a sequence from 0 to -9
list(range(5)) - Returns a list from 0 to 4

DICTIONARIES
max(d, key=d.get) - Return the key that corresponds to the largest value in d
min(d, key=d.get) - Return the key that corresponds to the smallest value in d

SETS
my_set = set(l) - Return a set object containing the unique values from l
len(my_set) - Returns the number of objects in my_set (or, the number of unique values from l)
a in my_set - Returns True if the value a exists in my_set

REGULAR EXPRESSIONS
import re - Import the Regular Expressions module
re.search("abc",s) - Returns a match object if the regex "abc" is found in s, otherwise None
re.sub("abc","xyz",s) - Returns a string where all instances matching regex "abc" are replaced by "xyz"

LIST COMPREHENSION
A one-line expression of a for loop
[i ** 2 for i in range(10)] - Returns a list of the squares of values from 0 to 9
[s.lower() for s in l_strings] - Returns the list l_strings, with each item having had the .lower() method applied
[i for i in l_floats if i < 0.5] - Returns the items from l_floats that are less than 0.5

FUNCTIONS FOR LOOPING
for i, value in enumerate(l):
    print("The value of item {} is {}".format(i,value))
- Iterate over the list l, printing the index location of each item and its value
for one, two in zip(l_one,l_two):
    print("one: {}, two: {}".format(one,two))
- Iterate over two lists, l_one and l_two and print each value
while x < 10:
    x += 1
- Run the code in the body of the loop until the value of x is no longer less than 10

DATETIME
import datetime as dt - Import the datetime module
now = dt.datetime.now() - Assign datetime object representing the current time to now
wks4 = dt.timedelta(weeks=4) - Assign a timedelta object representing a timespan of 4 weeks to wks4
now - wks4 - Return a datetime object representing the time 4 weeks prior to now
newyear_2020 = dt.datetime(year=2020, month=12, day=31) - Assign a datetime object representing December 31, 2020 to newyear_2020
newyear_2020.strftime("%A, %b %d, %Y") - Returns "Thursday, Dec 31, 2020"
dt.datetime.strptime('Dec 31, 2020',"%b %d, %Y") - Return a datetime object representing December 31, 2020

RANDOM
import random - Import the random module
random.random() - Returns a random float between 0.0 and 1.0
random.randint(0,10) - Returns a random integer between 0 and 10
random.choice(l) - Returns a random item from the list l

COUNTER
from collections import Counter - Import the Counter class
c = Counter(l) - Assign a Counter (dict-like) object with the counts of each unique item from l, to c
c.most_common(3) - Return the 3 most common items from l

TRY/EXCEPT
Catch and deal with Errors
l_ints = [1, 2, 3, "", 5] - Assign a list of integers with one missing value to l_ints
l_floats = []
for i in l_ints:
    try:
        l_floats.append(float(i))
    except ValueError:
        l_floats.append(i)
- Convert each value of l_ints to a float, catching and handling ValueError: could not convert string to float: where values are missing.
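The datetime entries can be combined into one runnable sketch. Note that `timedelta` lives on the `datetime` module itself (`dt.timedelta`), not on the `dt.datetime` class. The names `four_weeks_before` and `parsed` are illustrative, and the weekday and month names assume the default C/English locale.

```python
import datetime as dt

# A timespan of 4 weeks.
wks4 = dt.timedelta(weeks=4)

# A fixed date, so the results are reproducible (unlike dt.datetime.now()).
newyear_2020 = dt.datetime(year=2020, month=12, day=31)

# Subtracting a timedelta from a datetime gives another datetime.
four_weeks_before = newyear_2020 - wks4

# Format to text, then parse text back into a datetime.
label = newyear_2020.strftime("%A, %b %d, %Y")
parsed = dt.datetime.strptime("Dec 31, 2020", "%b %d, %Y")

print(four_weeks_before)
print(label)
print(parsed == newyear_2020)
```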


Python For Data Science Cheat Sheet
Pandas Basics
Learn Python for Data Science Interactively at www.DataCamp.com

Pandas
The Pandas library is built on NumPy and provides easy-to-use data structures and data analysis tools for the Python programming language.
Use the following import convention:
>>> import pandas as pd

Pandas Data Structures

Series
A one-dimensional labeled array capable of holding any data type
Index
a    3
b   -5
c    7
d    4
>>> s = pd.Series([3, -5, 7, 4], index=['a', 'b', 'c', 'd'])

DataFrame
A two-dimensional labeled data structure with columns of potentially different types
Index  Country  Capital    Population   (Columns)
0      Belgium  Brussels   11190846
1      India    New Delhi  1303171035
2      Brazil   Brasília   207847528
>>> data = {'Country': ['Belgium', 'India', 'Brazil'],
            'Capital': ['Brussels', 'New Delhi', 'Brasília'],
            'Population': [11190846, 1303171035, 207847528]}
>>> df = pd.DataFrame(data,
                      columns=['Country', 'Capital', 'Population'])

Asking For Help
>>> help(pd.Series.loc)

Selection (Also see NumPy Arrays)

Getting
>>> s['b']    Get one element
-5
>>> df[1:]    Get subset of a DataFrame
   Country  Capital    Population
1  India    New Delhi  1303171035
2  Brazil   Brasília   207847528

Selecting, Boolean Indexing & Setting

By Position
>>> df.iloc[0, 0]    Select single value by row & column
'Belgium'
>>> df.iat[0, 0]
'Belgium'

By Label
>>> df.loc[0, 'Country']    Select single value by row & column labels
'Belgium'
>>> df.at[0, 'Country']
'Belgium'

By Label/Position
(The original sheet used df.ix here; .ix was removed in pandas 1.0. With the default 0, 1, 2 index, .loc returns the same results.)
>>> df.loc[2]    Select single row of subset of rows
Country       Brazil
Capital       Brasília
Population    207847528
>>> df.loc[:, 'Capital']    Select a single column of subset of columns
0    Brussels
1    New Delhi
2    Brasília
>>> df.loc[1, 'Capital']    Select rows and columns
'New Delhi'

Boolean Indexing
>>> s[~(s > 1)]                        Series s where value is not >1
>>> s[(s < -1) | (s > 2)]              s where value is <-1 or >2
>>> df[df['Population']>1200000000]    Use filter to adjust DataFrame

Setting
>>> s['a'] = 6    Set index a of Series s to 6

Dropping
>>> s.drop(['a', 'c'])            Drop values from rows (axis=0)
>>> df.drop('Country', axis=1)    Drop values from columns (axis=1)

Sort & Rank
>>> df.sort_index()                 Sort by labels along an axis
>>> df.sort_values(by='Country')    Sort by the values along an axis
>>> df.rank()                       Assign ranks to entries

Retrieving Series/DataFrame Information

Basic Information
>>> df.shape      (rows, columns)
>>> df.index      Describe index
>>> df.columns    Describe DataFrame columns
>>> df.info()     Info on DataFrame
>>> df.count()    Number of non-NA values

Summary
>>> df.sum()                     Sum of values
>>> df.cumsum()                  Cumulative sum of values
>>> df.min()/df.max()            Minimum/maximum values
>>> df.idxmin()/df.idxmax()      Index label of the minimum/maximum value
>>> df.describe()                Summary statistics
>>> df.mean()                    Mean of values
>>> df.median()                  Median of values

Applying Functions
>>> f = lambda x: x*2
>>> df.apply(f)       Apply function
>>> df.applymap(f)    Apply function element-wise

Data Alignment

Internal Data Alignment
NA values are introduced in the indices that don't overlap:
>>> s3 = pd.Series([7, -2, 3], index=['a', 'c', 'd'])
>>> s + s3
a    10.0
b     NaN
c     5.0
d     7.0
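A minimal sketch of index alignment, reusing the `s` and `s3` Series defined in this sheet. The variable names `aligned` and `filled` are introduced here for illustration only; `Series.add` with `fill_value` is shown as one way to avoid the NaN.

```python
import pandas as pd

s = pd.Series([3, -5, 7, 4], index=['a', 'b', 'c', 'd'])
s3 = pd.Series([7, -2, 3], index=['a', 'c', 'd'])

# Label 'b' exists only in s, so plain addition yields NaN at 'b'.
aligned = s + s3

# fill_value substitutes 0 for the missing s3['b'] before adding.
filled = s.add(s3, fill_value=0)
```

The result is upcast to float because NaN cannot be stored in an integer Series.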

Arithmetic Operations with Fill Methods
You can also do the internal data alignment yourself with the help of the fill methods:
>>> s.add(s3, fill_value=0)
a    10.0
b    -5.0
c     5.0
d     7.0
>>> s.sub(s3, fill_value=2)
>>> s.div(s3, fill_value=4)
>>> s.mul(s3, fill_value=3)

I/O

Read and Write to CSV
>>> pd.read_csv('file.csv', header=None, nrows=5)
>>> df.to_csv('myDataFrame.csv')

Read and Write to Excel
>>> pd.read_excel('file.xlsx')
>>> df.to_excel('dir/myDataFrame.xlsx', sheet_name='Sheet1')
Read multiple sheets from the same file
>>> xlsx = pd.ExcelFile('file.xls')
>>> df = pd.read_excel(xlsx, 'Sheet1')

Read and Write to SQL Query or Database Table
>>> from sqlalchemy import create_engine
>>> engine = create_engine('sqlite:///:memory:')
>>> pd.read_sql("SELECT * FROM my_table;", engine)
>>> pd.read_sql_table('my_table', engine)
>>> pd.read_sql_query("SELECT * FROM my_table;", engine)
read_sql() is a convenience wrapper around read_sql_table() and read_sql_query()
>>> df.to_sql('myDf', engine)

DataCamp
Learn Python for Data Science Interactively
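The position-based and label-based selectors from the Selection section of the Pandas Basics sheet can be compared on the example DataFrame. This is a sketch under the assumption of a current pandas release, where scalar access uses square brackets with a row and a column; the result variable names are chosen here for illustration.

```python
import pandas as pd

data = {'Country': ['Belgium', 'India', 'Brazil'],
        'Capital': ['Brussels', 'New Delhi', 'Brasília'],
        'Population': [11190846, 1303171035, 207847528]}
df = pd.DataFrame(data, columns=['Country', 'Capital', 'Population'])

by_position = df.iloc[0, 0]        # integer row and column positions
by_label = df.loc[0, 'Country']    # row label and column label
fast_position = df.iat[0, 0]       # scalar-only access by position
fast_label = df.at[0, 'Country']   # scalar-only access by label

row_2 = df.loc[2]                  # one row, returned as a Series
capitals = df.loc[:, 'Capital']    # one column, returned as a Series
large = df[df['Population'] > 1200000000]  # boolean mask keeps matching rows
```

Because the index here is the default 0, 1, 2, row labels and row positions coincide; with a custom index, `.loc` and `.iloc` would need different row arguments.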

Python For Data Science Cheat Sheet
NumPy Basics
Learn Python for Data Science Interactively at www.DataCamp.com

NumPy
The NumPy library is the core library for scientific computing in Python. It provides a high-performance multidimensional array object, and tools for working with these arrays.
Use the following import convention:
>>> import numpy as np

NumPy Arrays
[Figure: 1D array, 2D array and 3D array, with axis 0, axis 1 and axis 2 labelled]

Creating Arrays
>>> a = np.array([1,2,3])
>>> b = np.array([(1.5,2,3), (4,5,6)], dtype = float)
>>> c = np.array([[(1.5,2,3), (4,5,6)], [(3,2,1), (4,5,6)]],
                 dtype = float)

Initial Placeholders
>>> np.zeros((3,4))                    Create an array of zeros
>>> np.ones((2,3,4),dtype=np.int16)    Create an array of ones
>>> d = np.arange(10,25,5)             Create an array of evenly spaced values (step value)
>>> np.linspace(0,2,9)                 Create an array of evenly spaced values (number of samples)
>>> e = np.full((2,2),7)               Create a constant array
>>> f = np.eye(2)                      Create a 2X2 identity matrix
>>> np.random.random((2,2))            Create an array with random values
>>> np.empty((3,2))                    Create an empty array

I/O

Saving & Loading On Disk
>>> np.save('my_array', a)
>>> np.savez('array.npz', a, b)
>>> np.load('my_array.npy')

Saving & Loading Text Files
>>> np.loadtxt("myfile.txt")
>>> np.genfromtxt("my_file.csv", delimiter=',')
>>> np.savetxt("myarray.txt", a, delimiter=" ")

Data Types
>>> np.int64         Signed 64-bit integer types
>>> np.float64       Standard double-precision floating point
>>> np.complex128    Complex numbers represented by two 64-bit floats
>>> np.bool_         Boolean type storing TRUE and FALSE values
>>> np.object_       Python object type
>>> np.bytes_        Fixed-length byte string type
>>> np.str_          Fixed-length unicode type

Inspecting Your Array
>>> a.shape          Array dimensions
>>> len(a)           Length of array
>>> b.ndim           Number of array dimensions
>>> e.size           Number of array elements
>>> b.dtype          Data type of array elements
>>> b.dtype.name     Name of data type
>>> b.astype(int)    Convert an array to a different type

Asking For Help
>>> np.info(np.ndarray.dtype)

Array Mathematics

Arithmetic Operations
>>> g = a - b            Subtraction
array([[-0.5, 0. , 0. ],
       [-3. , -3. , -3. ]])
>>> np.subtract(a,b)     Subtraction
>>> b + a                Addition
array([[ 2.5, 4. , 6. ],
       [ 5. , 7. , 9. ]])
>>> np.add(b,a)          Addition
>>> a / b                Division
array([[ 0.66666667, 1. , 1. ],
       [ 0.25 , 0.4 , 0.5 ]])
>>> np.divide(a,b)       Division
>>> a * b                Multiplication
array([[ 1.5, 4. , 9. ],
       [ 4. , 10. , 18. ]])
>>> np.multiply(a,b)     Multiplication
>>> np.exp(b)            Exponentiation
>>> np.sqrt(b)           Square root
>>> np.sin(a)            Element-wise sine
>>> np.cos(b)            Element-wise cosine
>>> np.log(a)            Element-wise natural logarithm
>>> e.dot(f)             Dot product
array([[ 7., 7.],
       [ 7., 7.]])

Comparison
>>> a == b                  Element-wise comparison
array([[False, True, True],
       [False, False, False]], dtype=bool)
>>> a < 2                   Element-wise comparison
array([True, False, False], dtype=bool)
>>> np.array_equal(a, b)    Array-wise comparison

Aggregate Functions
>>> a.sum()             Array-wise sum
>>> a.min()             Array-wise minimum value
>>> b.max(axis=0)       Maximum value of each column (along axis 0)
>>> b.cumsum(axis=1)    Cumulative sum of the elements
>>> a.mean()            Mean
>>> np.median(b)        Median
>>> np.corrcoef(a)      Correlation coefficient
>>> np.std(b)           Standard deviation

Copying Arrays
>>> h = a.view()    Create a view of the array with the same data
>>> np.copy(a)      Create a copy of the array
>>> h = a.copy()    Create a deep copy of the array

Sorting Arrays
>>> a.sort()          Sort an array
>>> c.sort(axis=0)    Sort the elements of an array's axis

Subsetting, Slicing, Indexing (Also see Lists)

Subsetting
>>> a[2]      Select the element at the 2nd index
3
>>> b[1,2]    Select the element at row 1 column 2 (equivalent to b[1][2])
6.0

Slicing
>>> a[0:2]      Select items at index 0 and 1
array([1, 2])
>>> b[0:2,1]    Select items at rows 0 and 1 in column 1
array([ 2., 5.])
>>> b[:1]       Select all items at row 0 (equivalent to b[0:1, :])
array([[1.5, 2., 3.]])
>>> c[1,...]    Same as [1,:,:]
array([[ 3., 2., 1.],
       [ 4., 5., 6.]])
>>> a[ : :-1]   Reversed array a
array([3, 2, 1])

Boolean Indexing
>>> a[a<2]    Select elements from a less than 2
array([1])

Fancy Indexing
>>> b[[1, 0, 1, 0],[0, 1, 2, 0]]      Select elements (1,0),(0,1),(1,2) and (0,0)
array([ 4. , 2. , 6. , 1.5])
>>> b[[1, 0, 1, 0]][:,[0,1,2,0]]      Select a subset of the matrix's rows and columns
array([[ 4. , 5. , 6. , 4. ],
       [ 1.5, 2. , 3. , 1.5],
       [ 4. , 5. , 6. , 4. ],
       [ 1.5, 2. , 3. , 1.5]])

Array Manipulation

Transposing Array
>>> i = np.transpose(b)    Permute array dimensions
>>> i.T                    Permute array dimensions

Changing Array Shape
>>> b.ravel()          Flatten the array
>>> g.reshape(3,-1)    Reshape, but don't change data

Adding/Removing Elements
>>> h.resize((2,6))       Resize the array in place to shape (2,6)
>>> np.append(h,g)        Append items to an array
>>> np.insert(a, 1, 5)    Insert items in an array
>>> np.delete(a,[1])      Delete items from an array

Combining Arrays
>>> np.concatenate((a,d),axis=0)    Concatenate arrays
array([ 1, 2, 3, 10, 15, 20])
>>> np.vstack((a,b))                Stack arrays vertically (row-wise)
array([[ 1. , 2. , 3. ],
       [ 1.5, 2. , 3. ],
       [ 4. , 5. , 6. ]])
>>> np.r_[e,f]                      Stack arrays vertically (row-wise)
>>> np.hstack((e,f))                Stack arrays horizontally (column-wise)
array([[ 7., 7., 1., 0.],
       [ 7., 7., 0., 1.]])
>>> np.column_stack((a,d))          Create stacked column-wise arrays
array([[ 1, 10],
       [ 2, 15],
       [ 3, 20]])
>>> np.c_[a,d]                      Create stacked column-wise arrays

Splitting Arrays
>>> np.hsplit(a,3)    Split the array horizontally into 3 equal parts
[array([1]),array([2]),array([3])]
>>> np.vsplit(c,2)    Split the array vertically into 2 equal parts (output shown for c after c.sort(axis=0))
[array([[[ 1.5, 2. , 1. ],
         [ 4. , 5. , 6. ]]]),
 array([[[ 3., 2., 3.],
         [ 4., 5., 6.]]])]
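The arithmetic and fancy-indexing entries of the NumPy sheet both rely on how shapes combine. The sketch below reuses the sheet's `a` and `b`; the names `picked` and `sub` are introduced here only for illustration.

```python
import numpy as np

a = np.array([1, 2, 3])
b = np.array([(1.5, 2, 3), (4, 5, 6)], dtype=float)

# a has shape (3,) and b has shape (2, 3): a is broadcast across both rows of b.
g = a - b

# Two index lists of equal length are paired element by element:
# (1,0), (0,1), (1,2), (0,0).
picked = b[[1, 0, 1, 0], [0, 1, 2, 0]]

# Indexing rows first and columns second selects a full 4x4 grid instead.
sub = b[[1, 0, 1, 0]][:, [0, 1, 2, 0]]
```

The difference between `picked` (4 elements) and `sub` (4x4) is the usual point of confusion with fancy indexing.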

Python For Data Science Cheat Sheet
Pandas
Learn Python for Data Science Interactively at www.DataCamp.com

Reshaping Data

Pivot
>>> df3 = df2.pivot(index='Date',      Spread rows into columns
                    columns='Type',
                    values='Value')
df2:
   Date        Type  Value
0  2016-03-01  a     11.432
1  2016-03-02  b     13.031
2  2016-03-01  c     20.784
3  2016-03-03  a     99.906
4  2016-03-02  a     1.303
5  2016-03-03  c     20.784
df3:
Type        a       b       c
Date
2016-03-01  11.432  NaN     20.784
2016-03-02  1.303   13.031  NaN
2016-03-03  99.906  NaN     20.784

Pivot Table
>>> df4 = pd.pivot_table(df2,          Spread rows into columns
                         values='Value',
                         index='Date',
                         columns='Type')

Stack / Unstack
>>> stacked = df5.stack()      Pivot a level of column labels
>>> stacked.unstack()          Pivot a level of index labels
Unstacked:
      0         1
1 5   0.233482  0.390959
2 4   0.184713  0.237102
3 3   0.433522  0.429401
Stacked:
1 5 0   0.233482
    1   0.390959
2 4 0   0.184713
    1   0.237102
3 3 0   0.433522
    1   0.429401

Melt
>>> pd.melt(df2,                       Gather columns into rows
            id_vars=["Date"],
            value_vars=["Type", "Value"],
            value_name="Observations")
    Date        Variable  Observations
0   2016-03-01  Type      a
1   2016-03-02  Type      b
2   2016-03-01  Type      c
3   2016-03-03  Type      a
4   2016-03-02  Type      a
5   2016-03-03  Type      c
6   2016-03-01  Value     11.432
7   2016-03-02  Value     13.031
8   2016-03-01  Value     20.784
9   2016-03-03  Value     99.906
10  2016-03-02  Value     1.303
11  2016-03-03  Value     20.784

Iteration
>>> df.items()       (Column-index, Series) pairs (called iteritems() before pandas 2.0)
>>> df.iterrows()    (Row-index, Series) pairs

Advanced Indexing (Also see NumPy Arrays)

Selecting
>>> df3.loc[:,(df3>1).any()]           Select cols with any vals >1
>>> df3.loc[:,(df3>1).all()]           Select cols where all vals >1
>>> df3.loc[:,df3.isnull().any()]      Select cols with NaN
>>> df3.loc[:,df3.notnull().all()]     Select cols without NaN

Indexing With isin
>>> df[(df.Country.isin(df2.Type))]    Find same elements
>>> df3.filter(items=["a","b"])        Filter on values
>>> df[df.index % 5 == 0]              Select specific elements (replaces df.select(lambda x: not x%5), removed in pandas 1.0)

Where
>>> s.where(s > 0)    Subset the data

Query
>>> df6.query('second > first')    Query DataFrame

Setting/Resetting Index
>>> df.set_index('Country')        Set the index
>>> df4 = df.reset_index()         Reset the index
>>> df = df.rename(index=str,      Rename DataFrame
                   columns={"Country":"cntry",
                            "Capital":"cptl",
                            "Population":"ppltn"})

Reindexing
>>> s2 = s.reindex(['a','c','d','e','b'])

Forward Filling
>>> df.reindex(range(4),
               method='ffill')
   Country  Capital    Population
0  Belgium  Brussels   11190846
1  India    New Delhi  1303171035
2  Brazil   Brasília   207847528
3  Brazil   Brasília   207847528

Backward Filling
>>> s3 = s.reindex(range(5),
                   method='bfill')
0    3
1    3
2    3
3    3
4    3

MultiIndexing
>>> arrays = [np.array([1,2,3]),
              np.array([5,4,3])]
>>> df5 = pd.DataFrame(np.random.rand(3, 2), index=arrays)
>>> tuples = list(zip(*arrays))
>>> index = pd.MultiIndex.from_tuples(tuples,
                                      names=['first', 'second'])
>>> df6 = pd.DataFrame(np.random.rand(3, 2), index=index)
>>> df2.set_index(["Date", "Type"])

Duplicate Data
>>> s3.unique()                                Return unique values
>>> df2.duplicated('Type')                     Check duplicates
>>> df2.drop_duplicates('Type', keep='last')   Drop duplicates
>>> df.index.duplicated()                      Check index duplicates

Grouping Data

Aggregation
>>> df2.groupby(by=['Date','Type']).mean()
>>> df4.groupby(level=0).sum()
>>> df4.groupby(level=0).agg({'a':lambda x:sum(x)/len(x),
                              'b': np.sum})

Transformation
>>> customSum = lambda x: (x+x%2)
>>> df4.groupby(level=0).transform(customSum)

Missing Data
>>> df.dropna()                 Drop NaN values
>>> df3.fillna(df3.mean())      Fill NaN values with a predetermined value
>>> df2.replace("a", "f")       Replace values with others

Combining Data
data1:            data2:
X1  X2            X1  X3
a   11.432        a   20.784
b   1.303         b   NaN
c   99.906        d   20.784

Merge
>>> pd.merge(data1, data2, how='left', on='X1')
X1  X2      X3
a   11.432  20.784
b   1.303   NaN
c   99.906  NaN
>>> pd.merge(data1, data2, how='right', on='X1')
X1  X2      X3
a   11.432  20.784
b   1.303   NaN
d   NaN     20.784
>>> pd.merge(data1, data2, how='inner', on='X1')
X1  X2      X3
a   11.432  20.784
b   1.303   NaN
>>> pd.merge(data1, data2, how='outer', on='X1')
X1  X2      X3
a   11.432  20.784
b   1.303   NaN
c   99.906  NaN
d   NaN     20.784

Join
>>> data1.join(data2, how='right')

Concatenate
Vertical
>>> pd.concat([s, s2])    (replaces s.append(s2), removed in pandas 2.0)
Horizontal/Vertical
>>> pd.concat([s,s2],axis=1, keys=['One','Two'])
>>> pd.concat([data1, data2], axis=1, join='inner')

Dates
>>> from datetime import datetime
>>> df2['Date']= pd.to_datetime(df2['Date'])
>>> df2['Date']= pd.date_range('2000-1-1',
                               periods=6,
                               freq='M')
>>> dates = [datetime(2012,5,1), datetime(2012,5,2)]
>>> index = pd.DatetimeIndex(dates)
>>> index = pd.date_range(datetime(2012,2,1), end, freq='BM')

Visualization (Also see Matplotlib)
>>> import matplotlib.pyplot as plt
>>> s.plot()
>>> plt.show()
>>> df2.plot()
>>> plt.show()
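The four `how=` options of `pd.merge` from the Combining Data section can be checked on the sheet's `data1` and `data2` tables. The sketch below rebuilds them as DataFrames; the exact construction is an assumption, since the sheet only shows the tables.

```python
import numpy as np
import pandas as pd

data1 = pd.DataFrame({'X1': ['a', 'b', 'c'], 'X2': [11.432, 1.303, 99.906]})
data2 = pd.DataFrame({'X1': ['a', 'b', 'd'], 'X3': [20.784, np.nan, 20.784]})

left = pd.merge(data1, data2, how='left', on='X1')    # keep every key in data1
right = pd.merge(data1, data2, how='right', on='X1')  # keep every key in data2
inner = pd.merge(data1, data2, how='inner', on='X1')  # keys present in both
outer = pd.merge(data1, data2, how='outer', on='X1')  # keys present in either
```

Key `b` survives the inner merge even though its `X3` value is NaN: the merge matches on the key column, not on the values.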

Python For Data Science Cheat Sheet
Matplotlib
Learn Python Interactively at www.DataCamp.com

Matplotlib
Matplotlib is a Python 2D plotting library which produces publication-quality figures in a variety of hardcopy formats and interactive environments across platforms.

Plot Anatomy & Workflow

Plot Anatomy
[Figure: a Figure containing one Axes/Subplot, with the X-axis and Y-axis labelled]

Workflow
The basic steps to creating plots with matplotlib are:
1 Prepare data  2 Create plot  3 Plot  4 Customize plot  5 Save plot  6 Show plot
>>> import matplotlib.pyplot as plt
>>> x = [1,2,3,4]                                        Step 1
>>> y = [10,20,25,30]
>>> fig = plt.figure()                                   Step 2
>>> ax = fig.add_subplot(111)                            Step 3
>>> ax.plot(x, y, color='lightblue', linewidth=3)        Step 3, 4
>>> ax.scatter([2,4,6],
               [5,15,25],
               color='darkgreen',
               marker='^')
>>> ax.set_xlim(1, 6.5)
>>> plt.savefig('foo.png')                               Step 5
>>> plt.show()                                           Step 6

1 Prepare The Data (Also see Lists & NumPy)

1D Data
>>> import numpy as np
>>> x = np.linspace(0, 10, 100)
>>> y = np.cos(x)
>>> z = np.sin(x)

2D Data or Images
>>> data = 2 * np.random.random((10, 10))
>>> data2 = 3 * np.random.random((10, 10))
>>> Y, X = np.mgrid[-3:3:100j, -3:3:100j]
>>> U = -1 - X**2 + Y
>>> V = 1 + X - Y**2
>>> from matplotlib.cbook import get_sample_data
>>> img = np.load(get_sample_data('axes_grid/bivariate_normal.npy'))

2 Create Plot
>>> import matplotlib.pyplot as plt

Figure
>>> fig = plt.figure()
>>> fig2 = plt.figure(figsize=plt.figaspect(2.0))

Axes
All plotting is done with respect to an Axes. In most cases, a subplot will fit your needs. A subplot is an axes on a grid system.
>>> fig.add_axes()
>>> ax1 = fig.add_subplot(221) # row-col-num
>>> ax3 = fig.add_subplot(212)
>>> fig3, axes = plt.subplots(nrows=2,ncols=2)
>>> fig4, axes2 = plt.subplots(ncols=3)

3 Plotting Routines

1D Data
>>> fig, ax = plt.subplots()
>>> lines = ax.plot(x,y)                       Draw points with lines or markers connecting them
>>> ax.scatter(x,y)                            Draw unconnected points, scaled or colored
>>> axes[0,0].bar([1,2,3],[3,4,5])             Plot vertical rectangles (constant width)
>>> axes[1,0].barh([0.5,1,2.5],[0,1,2])        Plot horizontal rectangles (constant height)
>>> axes[1,1].axhline(0.45)                    Draw a horizontal line across axes
>>> axes[0,1].axvline(0.65)                    Draw a vertical line across axes
>>> ax.fill(x,y,color='blue')                  Draw filled polygons
>>> ax.fill_between(x,y,color='yellow')        Fill between y-values and 0

2D Data or Images
>>> fig, ax = plt.subplots()
>>> im = ax.imshow(img,                        Colormapped or RGB arrays
                   cmap='gist_earth',
                   interpolation='nearest',
                   vmin=-2,
                   vmax=2)
>>> axes2[0].pcolor(data2)                     Pseudocolor plot of 2D array
>>> axes2[0].pcolormesh(data)                  Pseudocolor plot of 2D array
>>> CS = plt.contour(Y,X,U)                    Plot contours
>>> axes2[2].contourf(data)                    Plot filled contours
>>> axes2[2]= ax.clabel(CS)                    Label a contour plot

Vector Fields
>>> axes[0,1].arrow(0,0,0.5,0.5)               Add an arrow to the axes
>>> axes[1,1].quiver(y,z)                      Plot a 2D field of arrows
>>> axes[0,1].streamplot(X,Y,U,V)              Plot streamlines of a 2D vector field

Data Distributions
>>> ax1.hist(y)                                Plot a histogram
>>> ax3.boxplot(y)                             Make a box and whisker plot
>>> ax3.violinplot(z)                          Make a violin plot

4 Customize Plot

Colors, Color Bars & Color Maps
>>> plt.plot(x, x, x, x**2, x, x**3)
>>> ax.plot(x, y, alpha = 0.4)
>>> ax.plot(x, y, c='k')
>>> fig.colorbar(im, orientation='horizontal')
>>> im = ax.imshow(img,
                   cmap='seismic')

Markers
>>> fig, ax = plt.subplots()
>>> ax.scatter(x,y,marker=".")
>>> ax.plot(x,y,marker="o")

Linestyles
>>> plt.plot(x,y,linewidth=4.0)
>>> plt.plot(x,y,ls='solid')
>>> plt.plot(x,y,ls='--')
>>> plt.plot(x,y,'--',x**2,y**2,'-.')
>>> plt.setp(lines,color='r',linewidth=4.0)

Text & Annotations
>>> ax.text(1,
            -2.1,
            'Example Graph',
            style='italic')
>>> ax.annotate("Sine",
                xy=(8, 0),
                xycoords='data',
                xytext=(10.5, 0),
                textcoords='data',
                arrowprops=dict(arrowstyle="->",
                                connectionstyle="arc3"),)

Mathtext
>>> plt.title(r'$\sigma_i=15$', fontsize=20)

Limits, Legends & Layouts

Limits & Autoscaling
>>> ax.margins(x=0.0,y=0.1)                        Add padding to a plot
>>> ax.axis('equal')                               Set the aspect ratio of the plot to 1
>>> ax.set(xlim=[0,10.5],ylim=[-1.5,1.5])          Set limits for x- and y-axis
>>> ax.set_xlim(0,10.5)                            Set limits for x-axis

Legends
>>> ax.set(title='An Example Axes',                Set a title and x- and y-axis labels
           ylabel='Y-Axis',
           xlabel='X-Axis')
>>> ax.legend(loc='best')                          No overlapping plot elements

Ticks
>>> ax.xaxis.set(ticks=range(1,5),                 Manually set x-ticks
                 ticklabels=[3,100,-12,"foo"])
>>> ax.tick_params(axis='y',                       Make y-ticks longer and go in and out
                   direction='inout',
                   length=10)

Subplot Spacing
>>> fig3.subplots_adjust(wspace=0.5,               Adjust the spacing between subplots
                         hspace=0.3,
                         left=0.125,
                         right=0.9,
                         top=0.9,
                         bottom=0.1)
>>> fig.tight_layout()                             Fit subplot(s) in to the figure area

Axis Spines
>>> ax1.spines['top'].set_visible(False)                  Make the top axis line for a plot invisible
>>> ax1.spines['bottom'].set_position(('outward',10))     Move the bottom axis line outward

5 Save Plot
Save figures
>>> plt.savefig('foo.png')
Save transparent figures
>>> plt.savefig('foo.png', transparent=True)

6 Show Plot
>>> plt.show()

Close & Clear
>>> plt.cla()      Clear an axis
>>> plt.clf()      Clear the entire figure
>>> plt.close()    Close a window

Matplotlib 2.0.0 - Updated on: 02/2017
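The six workflow steps of the Matplotlib sheet can be run end to end without a display. The sketch below is one possible arrangement, not the sheet's own listing: the non-interactive `Agg` backend and the in-memory `BytesIO` buffer (instead of `foo.png` and `plt.show()`) are assumptions made so that it runs in a headless session.

```python
import io

import matplotlib
matplotlib.use("Agg")  # assumption: headless backend, so no window is opened
import matplotlib.pyplot as plt

# Step 1: prepare data
x = [1, 2, 3, 4]
y = [10, 20, 25, 30]

# Step 2: create the figure and a single Axes
fig, ax = plt.subplots()

# Steps 3 and 4: plot, then customize
line, = ax.plot(x, y, color='lightblue', linewidth=3)
ax.scatter([2, 4, 6], [5, 15, 25], color='darkgreen', marker='^')
ax.set_xlim(1, 6.5)
ax.set(title='An Example Axes', xlabel='X-Axis', ylabel='Y-Axis')

# Step 5: save (to memory here rather than to a file on disk)
buffer = io.BytesIO()
fig.savefig(buffer, format='png')
png_bytes = buffer.getvalue()

# Step 6 would be plt.show() in an interactive session; here the figure is closed.
plt.close(fig)
```

Working through the `fig` and `ax` objects rather than the `plt` state machine keeps each call tied to an explicit figure, which matters once several figures are open.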

Python For Data Science Cheat Sheet
Bokeh
Learn Bokeh Interactively at www.DataCamp.com, taught by Bryan Van de Ven, core contributor

Plotting With Bokeh
The Python interactive visualization library Bokeh enables high-performance visual presentation of large datasets in modern web browsers.
Bokeh's mid-level general purpose bokeh.plotting interface is centered around two main components: data and glyphs.
[Figure: data + glyphs = plot]
The basic steps to creating plots with the bokeh.plotting interface are:
1. Prepare some data: Python lists, NumPy arrays, Pandas DataFrames and other sequences of values
2. Create a new plot
3. Add renderers for your data, with visual customizations
4. Specify where to generate the output
5. Show or save the results
>>> from bokeh.plotting import figure
>>> from bokeh.io import output_file, show
>>> x = [1, 2, 3, 4, 5]                              Step 1
>>> y = [6, 7, 2, 4, 5]
>>> p = figure(title="simple line example",          Step 2
               x_axis_label='x',
               y_axis_label='y')
>>> p.line(x, y, legend="Temp.", line_width=2)       Step 3
>>> output_file("lines.html")                        Step 4
>>> show(p)                                          Step 5

1 Data (Also see Lists, NumPy & Pandas)
Under the hood, your data is converted to Column Data Sources. You can also do this manually:
>>> import numpy as np
>>> import pandas as pd
>>> df = pd.DataFrame(np.array([[33.9,4,65, 'US'],
                                [32.4,4,66, 'Asia'],
                                [21.4,4,109, 'Europe']]),
                      columns=['mpg','cyl', 'hp', 'origin'],
                      index=['Toyota', 'Fiat', 'Volvo'])
>>> from bokeh.models import ColumnDataSource
>>> cds_df = ColumnDataSource(df)

2 Plotting
>>> from bokeh.plotting import figure
>>> p1 = figure(plot_width=300, tools='pan,box_zoom')
>>> p2 = figure(plot_width=300, plot_height=300,
                x_range=(0, 8), y_range=(0, 8))
>>> p3 = figure()

3 Renderers & Visual Customizations

Glyphs
Scatter Markers
>>> p1.circle(np.array([1,2,3]), np.array([3,2,1]),
              fill_color='white')
>>> p2.square(np.array([1.5,3.5,5.5]), [1,4,3],
              color='blue', size=1)
Line Glyphs
>>> p1.line([1,2,3,4], [3,4,5,6], line_width=2)
>>> p2.multi_line(pd.DataFrame([[1,2,3],[5,6,7]]),
                  pd.DataFrame([[3,4,5],[3,2,1]]),
                  color="blue")

Customized Glyphs (Also see Data)
Selection and Non-Selection Glyphs
>>> p = figure(tools='box_select')
>>> p.circle('mpg', 'cyl', source=cds_df,
             selection_color='red',
             nonselection_alpha=0.1)
Hover Glyphs
>>> from bokeh.models import HoverTool
>>> hover = HoverTool(tooltips=None, mode='vline')
>>> p3.add_tools(hover)
Colormapping
>>> from bokeh.models import CategoricalColorMapper
>>> color_mapper = CategoricalColorMapper(
                       factors=['US', 'Asia', 'Europe'],
                       palette=['blue', 'red', 'green'])
>>> p3.circle('mpg', 'cyl', source=cds_df,
              color=dict(field='origin',
                         transform=color_mapper),
              legend='Origin')

Legend Location
Inside Plot Area
>>> p.legend.location = 'bottom_left'
Outside Plot Area
>>> from bokeh.models import Legend
>>> r1 = p2.asterisk(np.array([1,2,3]), np.array([3,2,1]))
>>> r2 = p2.line([1,2,3,4], [3,4,5,6])
>>> legend = Legend(items=[("One" ,[p1, r1]),("Two",[r2])],
                    location=(0, -30))
>>> p.add_layout(legend, 'right')

Legend Orientation
>>> p.legend.orientation = "horizontal"
>>> p.legend.orientation = "vertical"

Legend Background & Border
>>> p.legend.border_line_color = "navy"
>>> p.legend.background_fill_color = "white"

Rows & Columns Layout
Rows
>>> from bokeh.layouts import row
>>> layout = row(p1,p2,p3)
Columns
>>> from bokeh.layouts import column
>>> layout = column(p1,p2,p3)
Nesting Rows & Columns
>>> layout = row(column(p1,p2), p3)

Grid Layout
>>> from bokeh.layouts import gridplot
>>> row1 = [p1,p2]
>>> row2 = [p3]
>>> layout = gridplot([[p1,p2],[p3]])

Tabbed Layout
>>> from bokeh.models.widgets import Panel, Tabs
>>> tab1 = Panel(child=p1, title="tab1")
>>> tab2 = Panel(child=p2, title="tab2")
>>> layout = Tabs(tabs=[tab1, tab2])

Linked Plots
Linked Axes
>>> p2.x_range = p1.x_range
>>> p2.y_range = p1.y_range
Linked Brushing
>>> p4 = figure(plot_width = 100,
                tools='box_select,lasso_select')
>>> p4.circle('mpg', 'cyl', source=cds_df)
>>> p5 = figure(plot_width = 200,
                tools='box_select,lasso_select')
>>> p5.circle('mpg', 'hp', source=cds_df)
>>> layout = row(p4,p5)

4 Output & Export

Notebook
>>> from bokeh.io import output_notebook, show
>>> output_notebook()

HTML
Standalone HTML
>>> from bokeh.embed import file_html
>>> from bokeh.resources import CDN
>>> html = file_html(p, CDN, "my_plot")
>>> from bokeh.io import output_file, show
>>> output_file('my_bar_chart.html', mode='cdn')
Components
>>> from bokeh.embed import components
>>> script, div = components(p)

PNG
>>> from bokeh.io import export_png
>>> export_png(p, filename="plot.png")

SVG
>>> from bokeh.io import export_svgs
>>> p.output_backend = "svg"
>>> export_svgs(p, filename="plot.svg")

5 Show or Save Your Plots
>>> show(p1)
>>> show(layout)
>>> save(p1)
>>> save(layout)

Python For Data Science Cheat Sheet
Seaborn
Learn Data Science Interactively at www.DataCamp.com

Statistical Data Visualization With Seaborn
The Python visualization library Seaborn is based on matplotlib and provides a high-level interface for drawing attractive statistical graphics.
Make use of the following aliases to import the libraries:
>>> import matplotlib.pyplot as plt
>>> import seaborn as sns
The basic steps to creating plots with Seaborn are:
1. Prepare some data
2. Control figure aesthetics
3. Plot with Seaborn
4. Further customize your plot
5. Show or save your plot
>>> import matplotlib.pyplot as plt
>>> import seaborn as sns
>>> tips = sns.load_dataset("tips")                        Step 1
>>> sns.set_style("whitegrid")                             Step 2
>>> g = sns.lmplot(x="tip",                                Step 3
                   y="total_bill",
                   data=tips,
                   aspect=2)
>>> g = (g.set_axis_labels("Tip","Total bill(USD)").       Step 4
         set(xlim=(0,10),ylim=(0,100)))
>>> plt.title("title")
>>> plt.show()                                             Step 5

1 Data (Also see Lists, NumPy & Pandas)
>>> import pandas as pd
>>> import numpy as np
>>> uniform_data = np.random.rand(10, 12)
>>> data = pd.DataFrame({'x':np.arange(1,101),
                         'y':np.random.normal(0,4,100)})
Seaborn also offers built-in data sets:
>>> titanic = sns.load_dataset("titanic")
>>> iris = sns.load_dataset("iris")

2 Figure Aesthetics (Also see Matplotlib)
>>> f, ax = plt.subplots(figsize=(5,6))    Create a figure and one subplot

Seaborn styles
>>> sns.set()                              (Re)set the seaborn default
>>> sns.set_style("whitegrid")             Set the matplotlib parameters
>>> sns.set_style("ticks",                 Set the matplotlib parameters
                  {"xtick.major.size":8,
                   "ytick.major.size":8})
>>> sns.axes_style("whitegrid")            Return a dict of params, or use in a with statement to temporarily set the style

Context Functions
>>> sns.set_context("talk")                Set context to "talk"
>>> sns.set_context("notebook",            Set context to "notebook", scale font elements and override param mapping
                    font_scale=1.5,
                    rc={"lines.linewidth":2.5})

Color Palette
>>> sns.set_palette("husl",3)              Define the color palette
>>> sns.color_palette("husl")              Use in a with statement to temporarily set the palette
>>> flatui = ["#9b59b6","#3498db","#95a5a6","#e74c3c","#34495e","#2ecc71"]
>>> sns.set_palette(flatui)                Set your own color palette

3 Plotting With Seaborn

Axis Grids
>>> g = sns.FacetGrid(titanic,             Subplot grid for plotting conditional relationships
                      col="survived",
                      row="sex")
>>> g = g.map(plt.hist,"age")
>>> sns.factorplot(x="pclass",             Draw a categorical plot onto a FacetGrid
                   y="survived",
                   hue="sex",
                   data=titanic)
>>> sns.lmplot(x="sepal_width",            Plot data and regression model fits across a FacetGrid
               y="sepal_length",
               hue="species",
               data=iris)
>>> h = sns.PairGrid(iris)                 Subplot grid for plotting pairwise relationships
>>> h = h.map(plt.scatter)
>>> sns.pairplot(iris)                     Plot pairwise bivariate distributions
>>> i = sns.JointGrid(x="x",               Grid for bivariate plot with marginal univariate plots
                      y="y",
                      data=data)
>>> i = i.plot(sns.regplot,
               sns.distplot)
>>> sns.jointplot("sepal_length",          Plot bivariate distribution
                  "sepal_width",
                  data=iris,
                  kind='kde')

Categorical Plots
Scatterplot
>>> sns.stripplot(x="species",             Scatterplot with one categorical variable
                  y="petal_length",
                  data=iris)
>>> sns.swarmplot(x="species",             Categorical scatterplot with non-overlapping points
                  y="petal_length",
                  data=iris)
Bar Chart
>>> sns.barplot(x="sex",                   Show point estimates and confidence intervals as rectangular bars
                y="survived",
                hue="class",
                data=titanic)
Count Plot
>>> sns.countplot(x="deck",                Show count of observations
                  data=titanic,
                  palette="Greens_d")
Point Plot
>>> sns.pointplot(x="class",               Show point estimates and confidence intervals with scatterplot glyphs
                  y="survived",
                  hue="sex",
                  data=titanic,
                  palette={"male":"g",
                           "female":"m"},
                  markers=["^","o"],
                  linestyles=["-","--"])
Boxplot
>>> sns.boxplot(x="alive",                 Boxplot
                y="age",
                hue="adult_male",
                data=titanic)
>>> sns.boxplot(data=iris,orient="h")      Boxplot with wide-form data
Violinplot
>>> sns.violinplot(x="age",                Violin plot
                   y="sex",
                   hue="survived",
                   data=titanic)

Regression Plots
>>> sns.regplot(x="sepal_width",           Plot data and a linear regression model fit
                y="sepal_length",
                data=iris,
                ax=ax)

Distribution Plots
>>> plot = sns.distplot(data.y,            Plot univariate distribution
                        kde=False,
                        color="b")

Matrix Plots
>>> sns.heatmap(uniform_data,vmin=0,vmax=1)    Heatmap

4 Further Customizations (Also see Matplotlib)

Axisgrid Objects
>>> g.despine(left=True)                   Remove left spine
>>> g.set_ylabels("Survived")              Set the labels of the y-axis
>>> g.set_xticklabels(rotation=45)         Set the tick labels for x
>>> g.set_axis_labels("Survived",          Set the axis labels
                      "Sex")
>>> h.set(xlim=(0,5),                      Set the limit and ticks of the x- and y-axis
          ylim=(0,5),
          xticks=[0,2.5,5],
          yticks=[0,2.5,5])

Plot
>>> plt.title("A Title")                   Add plot title
>>> plt.ylabel("Survived")                 Adjust the label of the y-axis
>>> plt.xlabel("Sex")                      Adjust the label of the x-axis
>>> plt.ylim(0,100)                        Adjust the limits of the y-axis
>>> plt.xlim(0,10)                         Adjust the limits of the x-axis
>>> plt.setp(ax,yticks=[0,5])              Adjust a plot property
>>> plt.tight_layout()                     Adjust subplot params

5 Show or Save Plot (Also see Matplotlib)
>>> plt.show()                             Show the plot
>>> plt.savefig("foo.png")                 Save the plot as a figure
>>> plt.savefig("foo.png",                 Save transparent figure
                transparent=True)

Close & Clear (Also see Matplotlib)
>>> plt.cla()                              Clear an axis
>>> plt.clf()                              Clear an entire figure
>>> plt.close()                            Close a window
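The Seaborn steps (prepare data, set aesthetics, plot, customize) can be sketched without downloading a built-in data set. The example below uses the synthetic `data` frame from the Data section; the seeded random generator and the `Agg` backend are assumptions added so that the run is reproducible and needs no display.

```python
import matplotlib
matplotlib.use("Agg")  # assumption: headless backend
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import seaborn as sns

# Step 1: prepare data (seeded so repeated runs give the same points)
rng = np.random.default_rng(0)
data = pd.DataFrame({'x': np.arange(1, 101),
                     'y': rng.normal(0, 4, 100)})

# Step 2: control figure aesthetics
sns.set_style("whitegrid")
f, ax = plt.subplots(figsize=(5, 6))

# Step 3: plot the points with a linear regression fit on the given Axes
sns.regplot(x="x", y="y", data=data, ax=ax)

# Step 4: further customization through the matplotlib Axes
ax.set(title="A Title", xlabel="x", ylabel="y")
plt.tight_layout()
```

Axes-level functions such as `regplot` accept an `ax` argument, whereas figure-level functions such as `lmplot` create their own grid and return it.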

Python For Data Science Cheat Sheet Excel Spreadsheets Pickled Files

>>> file = 'urbanpop.xlsx' >>> import pickle

Importing Data >>> data = pd.ExcelFile(file) >>> with open('pickled_fruit.pkl', 'rb') as file:

pickled_data = pickle.load(file)

>>> df_sheet2 = data.parse('1960-1966',

Learn Python for data science Interactively at www.DataCamp.com skiprows=[0],

names=['Country',

'AAM: War(2002)'])

>>> df_sheet1 = data.parse(0, HDF5 Files

parse_cols=[0],

Importing Data in Python skiprows=[0], >>> import h5py

>>> filename = 'H-H1_LOSC_4_v1-815411200-4096.hdf5'

names=['Country'])

Most of the time, you’ll use either NumPy or pandas to import >>> data = h5py.File(filename, 'r')

your data: To access the sheet names, use the sheet_names attribute:

>>> import numpy as np >>> data.sheet_names

>>> import pandas as pd Matlab Files

Help SAS Files >>> import scipy.io

>>> filename = 'workspace.mat'

>>> from sas7bdat import SAS7BDAT >>> mat = scipy.io.loadmat(filename)

>>> np.info(np.ndarray.dtype)

>>> help(pd.read_csv) >>> with SAS7BDAT('urbanpop.sas7bdat') as file:

df_sas = file.to_data_frame()

Text Files Exploring Dictionaries

Stata Files Accessing Elements with Functions

Plain Text Files >>> data = pd.read_stata('urbanpop.dta') >>> print(mat.keys()) Print dictionary keys

>>> filename = 'huck_finn.txt' >>> for key in data.keys(): Print dictionary keys

>>> file = open(filename, mode='r') Open the file for reading print(key)

>>> text = file.read() Read a file’s contents Relational Databases meta

quality

>>> print(file.closed) Check whether file is closed

>>> from sqlalchemy import create_engine strain

>>> file.close() Close file

>>> print(text) >>> engine = create_engine('sqlite://Northwind.sqlite') >>> pickled_data.values() Return dictionary values

>>> print(mat.items()) Returns items in list format of (key, value)

Use the table_names() method to fetch a list of table names: tuple pairs

Using the context manager with

>>> with open('huck_finn.txt', 'r') as file:

>>> table_names = engine.table_names() Accessing Data Items with Keys

print(file.readline()) Read a single line

print(file.readline()) Querying Relational Databases >>> for key in data ['meta'].keys() Explore the HDF5 structure

print(file.readline()) print(key)

>>> con = engine.connect() Description

>>> rs = con.execute("SELECT * FROM Orders") DescriptionURL

Table Data: Flat Files >>> df = pd.DataFrame(rs.fetchall()) Detector

>>> df.columns = rs.keys() Duration

GPSstart

Importing Flat Files with numpy >>> con.close()

Observatory

Files with one data type Using the context manager with Type

UTCstart

>>> filename = 'mnist.txt' >>> with engine.connect() as con:

>>> print(data['meta']['Description'][()]) Retrieve the value for a key

>>> data = np.loadtxt(filename, rs = con.execute("SELECT OrderID FROM Orders")

delimiter=',', String used to separate values df = pd.DataFrame(rs.fetchmany(size=5))

df.columns = rs.keys()

skiprows=2, Skip the first 2 lines

usecols=[0,2], Read the 1st and 3rd column

Navigating Your FileSystem

dtype=str) The type of the resulting array Querying relational databases with pandas

Magic Commands

Files with mixed data types >>> df = pd.read_sql_query("SELECT * FROM Orders", engine)

>>> filename = 'titanic.csv' !ls List directory contents of files and directories

>>> data = np.genfromtxt(filename, %cd .. Change current working directory

%pwd Return the current working directory path

delimiter=',',

names=True, Look for column header

Exploring Your Data

dtype=None)
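To compare the two NumPy readers side by side, here is a small sketch that parses a made-up CSV string through `io.StringIO` instead of a file on disk. The column names and values are hypothetical.

```python
import io
import numpy as np

# Made-up CSV content standing in for a file on disk.
csv_text = "id,height,weight\n1,1.5,60\n2,1.8,75\n"

# One data type: skip the header line and keep the 1st and 3rd columns.
numeric = np.loadtxt(io.StringIO(csv_text), delimiter=",", skiprows=1, usecols=[0, 2])

# Mixed data types: take field names from the header and infer a dtype per column.
records = np.genfromtxt(io.StringIO(csv_text), delimiter=",", names=True, dtype=None)

print(numeric)
print(records.dtype.names, records["height"])
```

`np.loadtxt` returns a plain 2-D array, while `np.genfromtxt` with `names=True` returns a structured array whose columns are accessed by field name.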

NumPy Arrays os Library

>>> data_array = np.recfromcsv(filename) >>> data_array.dtype Data type of array elements >>> import os

>>> data_array.shape Array dimensions >>> path = "/usr/tmp"

The default dtype of the np.recfromcsv() function is None. >>> wd = os.getcwd() Store the name of current directory in a string

>>> len(data_array) Length of array

>>> os.listdir(wd) Output contents of the directory in a list

Importing Flat Files with pandas >>> os.chdir(path) Change current working directory

pandas DataFrames >>> os.rename("test1.txt", Rename a file

>>> filename = 'winequality-red.csv' "test2.txt")

>>> data = pd.read_csv(filename, >>> df.head() Return first DataFrame rows

nrows=5, >>> os.remove("test1.txt") Delete an existing file

Number of rows of file to read >>> df.tail() Return last DataFrame rows >>> os.mkdir("newdir") Create a new directory

header=None, Row number to use as col names >>> df.index Describe index

sep='\t', Delimiter to use >>> df.columns Describe DataFrame columns

comment='#', Character that starts a comment >>> df.info() Info on DataFrame

na_values=[""]) String to recognize as NA/NaN >>> data_array = data.values Convert a DataFrame to a NumPy array
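The `os` calls listed in this sheet can be exercised safely inside a temporary directory. This is a sketch under that assumption. The file and directory names mirror the sheet, but the scratch location is chosen by `tempfile`. After a rename, the file has to be removed under its new name.

```python
import os
import tempfile

original_dir = os.getcwd()  # store the current working directory
with tempfile.TemporaryDirectory() as scratch_dir:
    os.chdir(scratch_dir)   # change the working directory
    try:
        os.mkdir("newdir")                      # create a new directory
        with open("test1.txt", "w") as file:
            file.write("scratch")
        os.rename("test1.txt", "test2.txt")     # rename a file
        listing_after_rename = sorted(os.listdir("."))
        os.remove("test2.txt")                  # delete it under its new name
        listing_after_remove = sorted(os.listdir("."))
    finally:
        os.chdir(original_dir)  # restore before the scratch directory is deleted

print(listing_after_rename, listing_after_remove)
```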


Python For Data Science Cheat Sheet

Jupyter Notebook

Working with Different Programming Languages

Kernels provide computation and communication with front-end interfaces like the notebooks. There are three main kernels: IPython, IRkernel, and IJulia.

Installing Jupyter Notebook will automatically install the IPython kernel.

Widgets

Notebook widgets provide the ability to visualize and control changes in your data, often as a control like a slider, textbox, etc.

You can use them to build interactive GUIs for your notebooks or to synchronize stateful and stateless information between Python and JavaScript.

Saving/Loading Notebooks Restart kernel Interrupt kernel

Create new notebook Restart kernel & run Interrupt kernel & Download serialized Save notebook

all cells clear all output state of all widget with interactive

Open an existing

Connect back to a models in use widgets

Make a copy of the notebook Restart kernel & run remote notebook

current notebook all cells Embed current

Rename notebook Run other installed

widgets

kernels

Revert notebook to a

Save current notebook

previous checkpoint Command Mode:

and record checkpoint

Download notebook as

- IPython notebook

- Python

- HTML

- Markdown

- reST

- LaTeX

Preview of the printed notebook

Close notebook & stop running any scripts

Writing Code And Text

Code and text are encapsulated by 3 basic cell types: markdown cells, code

cells, and raw NBConvert cells.

Edit Cells Edit Mode: 1. Save and checkpoint 9. Interrupt kernel

2. Insert cell below 10. Restart kernel

3. Cut cell 11. Display characteristics

Cut currently selected cells Copy cells from 4. Copy cell(s) 12. Open command palette

to clipboard clipboard to current 5. Paste cell(s) below 13. Current kernel

cursor position 6. Move cell up 14. Kernel status

Paste cells from Executing Cells 7. Move cell down 15. Log out from notebook server

clipboard above Paste cells from 8. Run current cell

current cell Run selected cell(s) Run current cells down

clipboard below

and create a new one

Paste cells from current cell

below Asking For Help

clipboard on top Run current cells down

Delete current cells

of current cell and create a new one Walk through a UI tour

Split up a cell from above Run all cells

Revert “Delete Cells” List of built-in keyboard

current cursor Run all cells above the Run all cells below

invocation shortcuts

position current cell the current cell Edit the built-in

Merge current cell Merge current cell keyboard shortcuts

Change the cell type of toggle, toggle Notebook help topics

with the one above with the one below current cell scrolling and clear Description of

Move current cell up Move current cell toggle, toggle current outputs markdown available Information on

down scrolling and clear in notebook unofficial Jupyter

Adjust metadata

underlying the Find and replace all output Notebook extensions

Python help topics

current notebook in selected cells IPython help topics

View Cells

Remove cell Copy attachments of NumPy help topics

attachments current cell Toggle display of Jupyter SciPy help topics

Toggle display of toolbar Matplotlib help topics

Paste attachments of Insert image in logo and filename

SymPy help topics

current cell selected cells Toggle display of cell Pandas help topics

action icons:

Insert Cells - None About Jupyter Notebook

- Edit metadata

Toggle line numbers - Raw cell format

Add new cell above the Add new cell below the - Slideshow

current one in cells - Attachments

current one

- Tags


Python For Data Science Cheat Sheet Linear Algebra Also see NumPy

You’ll use the linalg and sparse modules. Note that scipy.linalg contains and expands on numpy.linalg.

SciPy - Linear Algebra >>> from scipy import linalg, sparse Matrix Functions


Creating Matrices Addition

>>> np.add(A,D) Addition

>>> A = np.matrix(np.random.random((2,2)))

SciPy >>> B = np.asmatrix(b) Subtraction

>>> C = np.mat(np.random.random((10,5))) >>> np.subtract(A,D) Subtraction

The SciPy library is one of the core packages for >>> D = np.mat([[3,4], [5,6]]) Division

scientific computing that provides mathematical >>> np.divide(A,D) Division

Basic Matrix Routines Multiplication

algorithms and convenience functions built on the

>>> np.multiply(D,A) Multiplication

NumPy extension of Python. Inverse >>> np.dot(A,D) Dot product

>>> A.I Inverse >>> np.vdot(A,D) Vector dot product

>>> linalg.inv(A) Inverse

Interacting With NumPy Also see NumPy >>> A.T Transpose matrix >>> np.inner(A,D) Inner product

>>> np.outer(A,D) Outer product

>>> import numpy as np >>> A.H Conjugate transposition >>> np.tensordot(A,D) Tensor dot product

>>> a = np.array([1,2,3]) >>> np.trace(A) Trace >>> np.kron(A,D) Kronecker product

>>> b = np.array([(1+5j,2j,3j), (4j,5j,6j)])

>>> c = np.array([[(1.5,2,3), (4,5,6)], [(3,2,1), (4,5,6)]]) Norm Exponential Functions

>>> linalg.norm(A) Frobenius norm >>> linalg.expm(A) Matrix exponential

Index Tricks >>> linalg.norm(A,1) L1 norm (max column sum) >>> linalg.expm2(A) Matrix exponential (Taylor Series)

>>> linalg.norm(A,np.inf) L inf norm (max row sum) >>> linalg.expm3(D) Matrix exponential (eigenvalue

>>> np.mgrid[0:5,0:5] Create a dense meshgrid decomposition)

>>> np.ogrid[0:2,0:2] Create an open meshgrid Rank Logarithm Function

>>> np.r_[3,[0]*5,-1:1:10j] Stack arrays vertically (row-wise) >>> np.linalg.matrix_rank(C) Matrix rank >>> linalg.logm(A) Matrix logarithm

>>> np.c_[b,c] Create stacked column-wise arrays Determinant Trigonometric Functions
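The index tricks are easier to read once the resulting shapes are visible. The following sketch uses small made-up inputs (not the sheet's `b` and `c`) and prints the shape each helper produces.

```python
import numpy as np

# Dense meshgrid: one full coordinate array per dimension.
dense = np.mgrid[0:3, 0:2]

# Open meshgrid: each array is only as large as its own axis needs.
open_rows, open_cols = np.ogrid[0:3, 0:2]

# r_ concatenates scalars, lists and slices; a complex step such as 10j
# means "10 evenly spaced points including the end point".
row = np.r_[3, [0] * 5, -1:1:10j]

# c_ stacks 1-D arrays as columns of a 2-D array.
cols = np.c_[np.array([1, 2, 3]), np.array([4, 5, 6])]

print(dense.shape, open_rows.shape, open_cols.shape, row.shape, cols.shape)
```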

>>> linalg.det(A) Determinant >>> linalg.sinm(D) Matrix sine

Shape Manipulation Solving linear problems >>> linalg.cosm(D) Matrix cosine

>>> np.transpose(b) Permute array dimensions >>> linalg.solve(A,b) Solver for dense matrices >>> linalg.tanm(A) Matrix tangent

>>> b.flatten() Flatten the array >>> E = np.mat(a).T Solver for dense matrices Hyperbolic Trigonometric Functions

>>> np.hstack((b,c)) Stack arrays horizontally (column-wise) >>> linalg.lstsq(D,E) Least-squares solution to linear matrix >>> linalg.sinhm(D) Hyperbolic matrix sine

>>> np.vstack((a,b)) Stack arrays vertically (row-wise) equation >>> linalg.coshm(D) Hyperbolic matrix cosine

>>> np.hsplit(c,2) Split the array horizontally at the 2nd index Generalized inverse >>> linalg.tanhm(A) Hyperbolic matrix tangent

>>> np.vsplit(d,2) Split the array vertically at the 2nd index >>> linalg.pinv(C) Compute the pseudo-inverse of a matrix Matrix Sign Function

(least-squares solver) >>> linalg.signm(A) Matrix sign function

Polynomials >>> linalg.pinv2(C) Compute the pseudo-inverse of a matrix

>>> from numpy import poly1d (SVD) Matrix Square Root

>>> linalg.sqrtm(A) Matrix square root
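A short sketch may help connect the least-squares solver and the pseudo-inverse listed in this sheet. It uses `numpy.linalg` (which, as noted at the top of the sheet, `scipy.linalg` contains and expands on) and made-up points lying exactly on the line y = 2x + 1.

```python
import numpy as np

# Overdetermined system: four made-up points, two unknowns (slope, intercept).
x = np.array([0.0, 1.0, 2.0, 3.0])
y = np.array([1.0, 3.0, 5.0, 7.0])
design = np.column_stack([x, np.ones_like(x)])  # columns: x and a constant term

# Least-squares solution of design @ coeffs = y.
coeffs_lstsq, residuals, rank, singular_values = np.linalg.lstsq(design, y, rcond=None)

# The same solution via the Moore-Penrose pseudo-inverse.
coeffs_pinv = np.linalg.pinv(design) @ y

print(coeffs_lstsq, coeffs_pinv, rank)
```

Both routes give the same coefficients here. `lstsq` is usually the more direct choice when only the solution is needed, while `pinv` is useful when the pseudo-inverse matrix itself is reused.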

>>> p = poly1d([3,4,5]) Create a polynomial object

Creating Sparse Matrices Arbitrary Functions

Vectorizing Functions >>> linalg.funm(A, lambda x: x*x) Evaluate matrix function

>>> F = np.eye(3, k=1) Create a 3x3 matrix with ones on the first superdiagonal

>>> def myfunc(a):

if a < 0: >>> G = np.mat(np.identity(2)) Create a 2x2 identity matrix Decompositions

return a*2 >>> C[C > 0.5] = 0

else: >>> H = sparse.csr_matrix(C)

return a/2
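A scalar function like `myfunc` above can be applied element-wise with `np.vectorize`. The sketch below repeats the function so it runs on its own and uses a made-up input array. The `np.where` line is an equivalent array expression added for comparison.

```python
import numpy as np

def myfunc(a):
    # Double negative values, halve everything else.
    if a < 0:
        return a * 2
    else:
        return a / 2

values = np.array([-2.0, 4.0, -1.0, 3.0])

# np.vectorize wraps the scalar function so it accepts arrays
# (a convenience wrapper, not a performance optimization).
vectorized = np.vectorize(myfunc)
result = vectorized(values)

# The same rule written as a single array expression.
result_where = np.where(values < 0, values * 2, values / 2)

print(result, result_where)
```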

Compressed Sparse Row matrix Eigenvalues and Eigenvectors

>>> I = sparse.csc_matrix(D) Compressed Sparse Column matrix >>> la, v = linalg.eig(A) Solve ordinary or generalized