Uploaded by Tolosa Tafese

Information Storage and Retrieval

Chapter Two

Text/Document Operations and Automatic Indexing

Statistical Properties of Text

o How is the frequency of different words distributed?

o How fast does vocabulary size grow with the size of a corpus?

Such factors affect the performance of an IR system and can be used to select suitable term weights and other aspects of the system.

o A few words are very common.

The 2 most frequent words (e.g. “the”, “of”) can account for about 10% of word occurrences.

o Most words are very rare.

Roughly half the words in a corpus appear only once; such words are called hapax legomena (Greek for “read only once”).

o This is called a “heavy tailed” distribution, since most of the probability mass is in the “tail”.
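The two observations above (a few very common words, many words seen only once) can be checked on any tokenized text. The following is a minimal sketch; the function name `frequency_profile`, the `top_k` parameter and the toy sentence are illustrative assumptions, not part of the course material, and the token list is assumed to be non-empty.

```python
from collections import Counter

def frequency_profile(tokens, top_k=2):
    """Return (share of occurrences covered by the top_k words,
    share of the vocabulary that occurs exactly once)."""
    counts = Counter(tokens)
    total = sum(counts.values())
    # Fraction of all word occurrences taken by the top_k most frequent words
    top_share = sum(c for _, c in counts.most_common(top_k)) / total
    # Fraction of distinct words that appear only once
    once_share = sum(1 for c in counts.values() if c == 1) / len(counts)
    return top_share, once_share

# Toy input (hypothetical); a real corpus would be far larger
tokens = "the cat sat on the mat and the dog sat by the door".split()
top_share, once_share = frequency_profile(tokens)
print(f"top-2 share: {top_share:.2f}, once-only share: {once_share:.2f}")
```

On a realistic corpus the first number is expected to be near the 10% quoted above and the second near one half, but the toy sentence is too small to show that.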

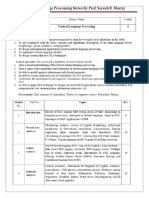

Sample Word Frequency Data

Frequencies from 336,310 documents in the 1GB TREC Volume 3 Corpus: 125,720,891 total word occurrences; 508,209 unique words.

AUWC Compiled by Kassahun M. Page 1

Zipf’s distributions

Rank Frequency Distribution

For all the words in a collection of documents, for each word w:

f is the frequency with which w appears

r is the rank of w in order of frequency (the most commonly occurring word has rank 1, etc.)

Word distribution: Zipf's Law

o Zipf's Law, named after the Harvard linguistics professor George Kingsley Zipf (1902-1950), attempts to capture the distribution of the frequencies (i.e. number of occurrences) of the words within a text.

o Zipf's Law states that when the distinct words in a text are arranged in decreasing order of their frequency of occurrence (most frequent words first), the occurrence characteristics of the vocabulary can be characterized by the constant rank-frequency law of Zipf:

Frequency × Rank = constant

o If the words, w, in a collection are ranked, r, by their frequency, f, they roughly fit the relation: r × f = c

o Different collections have different constants c.

Example: Zipf's Law

o The table shows the most frequently occurring words from a collection of 336,310 documents containing 125,720,891 total words, of which 508,209 are unique.

Zipf’s law: modeling word distribution

o The collection frequency of the ith most common term is proportional to 1/i:

$f_i \propto 1/i$

If the most frequent term occurs $f_1$ times, then the second most frequent term has half as many occurrences, the third most frequent term has a third as many, etc.

o More generally, Zipf's Law states that the frequency of the ith most frequent word is $1/i^{\theta}$ times that of the most frequent word.

The occurrence of some event (P), as a function of the rank (i), where the rank is determined by the frequency of occurrence, is a power-law function $P_i \sim 1/i^{\theta}$ with the exponent $\theta$ close to unity.
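One way to check the power-law form on data is to build the rank-frequency table and fit a straight line to log f against log r; under Zipf's law the slope is approximately -θ. The sketch below is only an illustration: the helper names `rank_frequency` and `estimate_theta` are hypothetical, the fit is an ordinary least-squares line (one of several possible estimators), and the token list is synthetic, constructed so that word i occurs about 1200/i times.

```python
import math
from collections import Counter

def rank_frequency(tokens):
    """Return [(rank, word, frequency)] sorted by decreasing frequency."""
    counts = Counter(tokens)
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(r, w, f) for r, (w, f) in enumerate(ranked, start=1)]

def estimate_theta(table):
    """Least-squares slope of log(f) on log(r); returns theta = -slope."""
    xs = [math.log(r) for r, _, _ in table]
    ys = [math.log(f) for _, _, f in table]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return -sxy / sxx

# Synthetic corpus (assumption): word "w<i>" occurs round(1200 / i) times
tokens = [f"w{i}" for i in range(1, 51) for _ in range(round(1200 / i))]
table = rank_frequency(tokens)
theta = estimate_theta(table)

for r, w, f in table[:5]:
    print(r, w, f, r * f)   # r * f stays close to the constant c
print("estimated theta:", round(theta, 2))
```

Because the synthetic frequencies follow 1/i by construction, the product r × f stays near 1200 and the estimated exponent comes out close to 1; real text deviates more, especially at the very top and bottom ranks.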

More Examples: Zipf’s Law

o Illustration of the Rank-Frequency Law. Let the total number of word occurrences in the sample be N = 1,000,000.

Methods that Build on Zipf's Law

Stop lists: Ignore the most frequent words (upper cut-off). Used by almost all systems.

Significant words: Take words in between the most frequent (upper cut-off) and least frequent words (lower cut-off).

Term weighting: Give differing weights to terms based on their frequency, with the most frequent words weighted less. Used by almost all ranking methods.

Explanations for Zipf’s Law

o The law has been explained by the “principle of least effort”, which makes it easier for a speaker or writer of a language to repeat certain words instead of coining new and different words.

Zipf’s explanation was his “principle of least effort”, which balances the speaker’s desire for a small vocabulary against the hearer’s desire for a large one.

Zipf’s Law Impact on IR

Good News: Stop words account for a large fraction of text, so eliminating them greatly reduces inverted-index storage costs.

Bad News: For most words, gathering sufficient data for meaningful statistical analysis (e.g. correlation analysis for query expansion) is difficult since they are extremely rare.

Word significance: Luhn’s Ideas

o Luhn's idea (1958): the frequency of word occurrence in a text furnishes a useful measurement of word significance.

o Luhn suggested that both extremely common and extremely uncommon words are not very useful for document representation and indexing.

o For this, Luhn specifies two cutoff points, an upper and a lower cutoff, based on which non-significant words are excluded:

Words exceeding the upper cutoff are considered to be common.

Words below the lower cutoff are considered to be rare.

Hence neither group contributes significantly to the content of the text.

The ability of words to discriminate content reaches a peak at a rank-order position halfway between the two cutoffs.

o Let f be the frequency of occurrence of words in a text, and r their rank in decreasing order of word frequency; then a plot relating f and r yields a hyperbolic curve, with the two cutoffs marking off the most frequent and the least frequent words.
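Luhn's two cutoffs can be sketched as a simple frequency filter. The notes do not say how the cutoff values are chosen, so `lower_cutoff` and `upper_cutoff` below are hypothetical parameters that would have to be tuned per collection; the function name and the sample text are likewise illustrative.

```python
from collections import Counter

def significant_words(tokens, lower_cutoff, upper_cutoff):
    """Keep words whose frequency f satisfies lower_cutoff <= f <= upper_cutoff.

    Words above upper_cutoff are treated as too common, words below
    lower_cutoff as too rare (both thresholds are assumptions).
    """
    counts = Counter(tokens)
    return {w: f for w, f in counts.items()
            if lower_cutoff <= f <= upper_cutoff}

tokens = ("the index of the text and the index terms of the retrieval "
          "system rank the text").split()
kept = significant_words(tokens, lower_cutoff=2, upper_cutoff=3)
print(kept)
```

In this toy input “the” is dropped as too common and the once-only words as too rare. Note that “of” survives a purely frequency-based filter, which is one reason a separate stop list is usually applied as well.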

Vocabulary size: Heaps’ Law

o Large dictionaries contain 600,000+ words, but they do not include names of people, locations, products, etc.

o Heaps’ law estimates the vocabulary size (number of distinct words) of a given corpus.

Vocabulary Growth: Heaps’ Law

o How does the size of the overall vocabulary (number of unique words) grow with the size of the corpus? This determines how the size of the inverted index will scale with the size of the corpus.

o The vocabulary size grows as $O(n^{\beta})$, where $\beta$ is a constant between 0 and 1.

If V is the size of the vocabulary and n is the length of the corpus in words, Heaps’ law gives the following equation:

$V = K n^{\beta}$

where K and $\beta$ are constants that depend on the collection, with $0 < \beta < 1$.

Heaps’ distributions

o Distribution of the size of the vocabulary: on a log-log scale, there is a linear relationship between vocabulary size and number of tokens

o Example: from 1,000,000,000 documents, there may be 1,000,000 distinct words. Do you agree?

Example

o We want to estimate the size of the vocabulary for a corpus of 1,000,000 words. However, we only know statistics computed on smaller corpora:

For 100,000 words, there are 50,000 unique words

For 500,000 words, there are 150,000 unique words

Estimate the vocabulary size for the 1,000,000-word corpus.

How about for a corpus of 1,000,000,000 words?
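One possible way to work this exercise, assuming the usual power-law form of Heaps' law, $V = K n^{\beta}$: the two known data points give two equations, and dividing them eliminates K, so $\beta = \ln(V_2/V_1) / \ln(n_2/n_1)$ and then $K = V_1 / n_1^{\beta}$. The function names below are hypothetical, and fitting two points exactly is only a sketch; with more measurements a regression on the log-log data would be the more robust choice.

```python
import math

def fit_heaps(n1, v1, n2, v2):
    """Solve V = K * n**beta exactly through two (corpus size, vocabulary) points."""
    beta = math.log(v2 / v1) / math.log(n2 / n1)
    k = v1 / n1 ** beta
    return k, beta

def heaps_vocabulary(n, k, beta):
    """Predicted vocabulary size for a corpus of n words."""
    return k * n ** beta

k, beta = fit_heaps(100_000, 50_000, 500_000, 150_000)
v_million = heaps_vocabulary(1_000_000, k, beta)
v_billion = heaps_vocabulary(1_000_000_000, k, beta)

print(f"beta = {beta:.3f}, K = {k:.1f}")
print(f"V(10^6) is about {v_million:,.0f}")
print(f"V(10^9) is about {v_billion:,.0f}")
```

With these two points the fit gives β of roughly 0.68 and K of roughly 19, which predicts on the order of 240,000 distinct words for one million tokens and a few tens of millions for one billion tokens. The second figure is a long extrapolation from two small samples and should be read with caution.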

Text Operations

o Not all words in a document are equally significant for representing the contents/meaning of a document

Some words carry more meaning than others

Nouns are the most representative of a document's content

o Therefore, the text of the documents in a collection needs to be preprocessed to obtain the index terms. Using the set of all words in a collection to index documents creates too much noise for the retrieval task

Reducing noise means reducing the number of words that can be used to refer to the document

o Preprocessing is the process of controlling the size of the vocabulary, i.e. the number of distinct words used as index terms. Preprocessing will lead to an improvement in the information retrieval performance. However, some search engines on the Web omit preprocessing: every word in the document is an index term

o Text operations are the process of transforming text into logical representations

o 5 main operations for selecting index terms, i.e. to choose words/stems (or groups of words) to be used as indexing terms:

1. Lexical analysis/tokenization of the text: handling digits, hyphens, punctuation marks, and the case of letters

2. Elimination of stop words: filter out words which are not useful in the retrieval process

3. Stemming of words: remove affixes (prefixes and suffixes)

4. Selection of index terms: choose the words/stems (or groups of words) to be used as indexing terms

5. Construction of term categorization structures, such as a thesaurus, to capture relationships that allow the expansion of the original query with related terms

Generating Document Representatives

o Text Processing System

1. Input text – full text, abstract or title

2. Output – a document representative adequate for use in an automatic retrieval system

o The document representative consists of a list of class names, each name representing a class of words occurring in the total input text. A document will be indexed by a name if one of its significant words occurs as a member of that class.

What is Document Pre-processing?

o Document pre-processing is the process of incorporating a new document into an information retrieval system.

What is the goal of Document Pre-processing?

o To represent the document efficiently in terms of both space (for storing the document) and time (for processing retrieval requests) requirements.

o To maintain good retrieval performance (precision and recall).

o Document pre-processing is a complex process that leads to the representation of each document by a select set of index terms. However, some Web search engines are giving up on much of this process and index all (or virtually all) the words in a document.

The stages of Document Pre-processing

Document pre-processing includes 5 stages:

1. Lexical analysis

2. Stop word elimination

3. Stemming

4. Index-term selection

5. Construction of thesauri

Lexical analysis

o Change the text of the documents into words to be adopted as index terms

o Objective: Identify/determine the words of the document.

o Lexical analysis separates the input alphabet into

Word characters (e.g., the letters a-z)

Word separators (e.g., space, newline, tab)

o The following decisions may have an impact on retrieval

1. Digits: Used to be ignored, but the trend now is to identify numbers (e.g., telephone numbers) and mixed strings as words.

2. Punctuation marks: Usually treated as word separators.

3. Hyphens: Should we interpret “pre-processing” as “pre processing” or as “preprocessing”?

4. Letter case: Often ignored, but then a search for “First Bank” (a specific bank) would retrieve a document with the phrase “Bank of America was the first bank to offer its customers…”
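The four decisions above can be made explicit as switches of a small tokenizer. This is a minimal sketch for ASCII text only; the function name `tokenize` and its flags `keep_digits`, `lowercase` and `join_hyphens` are hypothetical and do not correspond to any particular IR system.

```python
import re

def tokenize(text, keep_digits=True, lowercase=True, join_hyphens=False):
    """Split text into word tokens, treating punctuation as separators."""
    if lowercase:
        # Letter case: fold everything to lower case
        text = text.lower()
    if join_hyphens:
        # Hyphens: "pre-processing" -> "preprocessing"
        text = re.sub(r"(?<=\w)-(?=\w)", "", text)
    # Digits: either part of the word alphabet or treated as separators
    pattern = r"[A-Za-z0-9]+" if keep_digits else r"[A-Za-z]+"
    return re.findall(pattern, text)

print(tokenize("Pre-processing of 2 documents."))
print(tokenize("Pre-processing of 2 documents.", join_hyphens=True))
print(tokenize("First Bank", lowercase=False))
```

Keeping `lowercase=False` preserves the distinction between “First Bank” and “first bank”, at the cost of missing matches that differ only in capitalization.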

Stop word elimination

o Objective: Filter out words that occur in most of the documents.

o Such words have no value for retrieval purposes. These words are referred to as stop words. They include

Articles (a, an, the, …)

Prepositions (in, on, of, …)

Conjunctions (and, or, but, if, …)

Pronouns (I, you, them, it, …)

Possibly some verbs, nouns, adverbs, adjectives (make, thing, similar, …)

o A typical stopword list may include several hundred words.

o As seen earlier, the 100 most frequent words add up to about 50% of the words in a document.

o Hence, stopword elimination reduces the size of the indexing structures.
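Stop word elimination amounts to a set-membership test on each token. The sketch below uses a tiny, made-up stop list built from the examples in these notes; a real list would contain several hundred entries, and the names `STOP_WORDS` and `remove_stop_words` are illustrative.

```python
# Tiny illustrative stop list (assumption); real lists are much longer
STOP_WORDS = frozenset({
    "a", "an", "the",            # articles
    "in", "on", "of",            # prepositions
    "and", "or", "but", "if",    # conjunctions
    "i", "you", "them", "it",    # pronouns
})

def remove_stop_words(tokens, stop_words=STOP_WORDS):
    """Drop every token whose lower-cased form is in the stop list."""
    return [t for t in tokens if t.lower() not in stop_words]

print(remove_stop_words(["the", "size", "of", "an", "index"]))
```

The same filter has to be applied to the query, otherwise query terms such as “the” would never match anything in the index.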

Stemming

o Objective: Replace all the variants of a word with the single stem of the word.

o Variants include plurals, gerund forms (ing-forms), third person suffixes, past tense suffixes, etc.

Example: connect: connects, connected, connecting, connection, …

o All have similar semantics and relate to a single concept.

o In parallel, stemming must be performed on the user query.

o Stemming improves

Storage and search efficiency: Fewer terms are stored.

Recall:

Without stemming, a query about “connection” matches only documents that have “connection”.

With stemming, the query is about “connect” and additionally matches documents that originally had “connects”, “connected”, “connecting”, etc.

o However, stemming may hurt precision, because users can no longer target just a particular form.

o Stemming may be performed using

Algorithms that strip off suffixes according to substitution rules.

Large dictionaries that provide the stem of each word.
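The rule-based approach can be illustrated with a toy suffix stripper. This is deliberately not the Porter algorithm or any other published stemmer: the rule table `SUFFIX_RULES`, the `min_stem` length guard and the “ss” exception are assumptions chosen only so that the “connect” example works.

```python
# (suffix, replacement) pairs, tried in order; longer suffixes first
SUFFIX_RULES = [("ions", ""), ("ing", ""), ("ion", ""), ("ed", ""), ("s", "")]

def stem(word, min_stem=3):
    """Apply the first matching rule that leaves at least min_stem letters."""
    word = word.lower()
    if word.endswith("ss"):          # e.g. "process": do not strip the final "s"
        return word
    for suffix, replacement in SUFFIX_RULES:
        if word.endswith(suffix) and len(word) - len(suffix) >= min_stem:
            return word[:-len(suffix)] + replacement
    return word

variants = ["connect", "connects", "connected", "connecting", "connection"]
print({w: stem(w) for w in variants})

# The query is stemmed with the same function before matching
query_terms = [stem(t) for t in ["connections"]]
print(query_terms)
```

Such a small rule set over-stems and under-stems many English words, which is one concrete way the precision loss mentioned above shows up in practice.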

Index term selection (indexing)

o Objective: Increase efficiency by extracting from the resulting document a selected set of terms to be used for indexing the document.

If full text representation is adopted, then all words are used for indexing.

o Indexing is a critical process: a user's ability to find documents on a particular subject is limited by whether the indexing process has created index terms for this subject.

o Indexing can be done manually or automatically.

o Historically, manual indexing was performed by professional indexers associated with library organizations. However, automatic indexing is more common now (or, with full text representations, indexing is altogether avoided).

What are the advantages of manual indexing?

Ability to perform abstractions (conclude what the subject is) and determine additional related terms.

Ability to judge the value of concepts.

What are the advantages of automatic indexing?

Reduced cost: Once the initial hardware cost is amortized, the operational cost is lower than wages for human indexers.

Reduced processing time.

Improved consistency.

o Controlled vocabulary: Index terms must be selected from a predefined set of terms (the domain of the index).

Use of a controlled vocabulary helps standardize the choice of terms.

Searching is improved, because users know the vocabulary being used.

Thesauri can compensate for lack of controlled vocabularies.

o Index exhaustivity: the extent to which concepts are indexed.

Should we index only the most important concepts, or also more minor concepts?

o Index specificity: the preciseness of the index term used.

Should we use general indexing terms or more specific terms?

Should we use the term “computer”, “personal computer”, or “Gateway E-3400”?

o Main effect:

High exhaustivity improves recall (decreases precision).

High specificity improves precision (decreases recall).

o Related issues:

Index title and abstract only, or the entire document?

Should index terms be weighted?

o Reducing the size of the index:

Recall that articles, prepositions, conjunctions, pronouns have already been removed through a stopword list.

Recall that the 100 most frequent words account for about 50% of all word occurrences.

Words that are very infrequent (occur only a few times in a collection) are often removed, under the assumption that they would probably not be in the user’s vocabulary.

Reduction not based on probabilistic arguments: Nouns are often preferred over verbs, adjectives, or adverbs.

Indexing may also assign weights to terms.

o Non-weighted indexing:

No attempt is made to determine the value of the different terms assigned to a document.

It is not possible to distinguish between major topics and casual references.

All retrieved documents are equal in value.

Typical of commercial systems through the 1980s.

o Weighted indexing:

An attempt is made to place a value on each term as a description of the document.

This value is related to the frequency of occurrence of the term in the document (higher is better), but also to the number of collection documents that use this term (lower is better).

Query weights and document weights are combined into a value describing the likelihood that a document matches a query.
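A common way to realize the two criteria above is a tf-idf style weight: term frequency in the document multiplied by the logarithm of (number of documents / number of documents containing the term). The notes do not commit to a specific formula, so the variant below, the function names, and the choice to give every query term weight 1 are all assumptions made for illustration.

```python
import math
from collections import Counter

def tf_idf_weights(docs):
    """docs: list of token lists. Returns one {term: weight} dict per document."""
    n_docs = len(docs)
    # Document frequency: in how many documents does each term occur?
    df = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        tf = Counter(doc)  # term frequency inside this document
        weights.append({t: f * math.log(n_docs / df[t]) for t, f in tf.items()})
    return weights

def score(query_terms, doc_weights):
    """Combine query and document weights; every query term has weight 1 here."""
    return sum(doc_weights.get(t, 0.0) for t in query_terms)

docs = [
    ["zipf", "law", "rank"],
    ["heaps", "law", "vocabulary"],
    ["zipf", "zipf", "frequency"],
]
w = tf_idf_weights(docs)
ranking = sorted(range(len(docs)), key=lambda i: score(["zipf"], w[i]), reverse=True)
print(ranking)  # document indices, best match for "zipf" first
```

A term that occurs in every document gets weight log(1) = 0, which matches the intuition that it cannot discriminate between documents.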

Thesauri

o Objective: Standardize the index terms that were selected.

o In its simplest form a thesaurus is

A list of “important” words (concepts).

For each word, an associated list of synonyms.

o A thesaurus may be generic (cover all of English) or concentrate on a particular domain of knowledge.

o The role of a thesaurus in information retrieval

Provide a standard vocabulary for indexing.

Help users locate proper query terms.

Provide hierarchies for automatic broadening or narrowing of queries.

o Here, our interest is in providing a standard vocabulary (a controlled vocabulary).

o Essentially, in this final stage, each indexing term is replaced by the concept that defines its thesaurus class.
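The replacement step in this final stage can be sketched as a dictionary lookup from each synonym to the concept that names its class. The thesaurus entries, `build_class_lookup` and `normalize_terms` below are made up for illustration; terms not covered by the thesaurus are simply left unchanged, which is one possible policy among several.

```python
# Hypothetical thesaurus: concept -> list of synonyms
THESAURUS = {
    "automobile": ["car", "auto", "motorcar"],
    "computer": ["pc", "laptop", "workstation"],
}

def build_class_lookup(thesaurus):
    """Invert the thesaurus into a term -> concept mapping."""
    lookup = {}
    for concept, synonyms in thesaurus.items():
        lookup[concept] = concept
        for synonym in synonyms:
            lookup[synonym] = concept
    return lookup

def normalize_terms(terms, lookup):
    """Replace each index term by its thesaurus class; keep unknown terms."""
    return [lookup.get(t, t) for t in terms]

lookup = build_class_lookup(THESAURUS)
print(normalize_terms(["car", "laptop", "engine"], lookup))
```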
