Volume 6, Issue 11, November – 2021 International Journal of Innovative Science and Research Technology

ISSN No:-2456-216

A Vision Base Application For Virtual Mouse Interface Using Hand Gesture

Sankha Sarkar, Indrani Naskar, Sourav Sahoo, Sayan Ghosh, Sumanta Chatterjee

Department of Computer Science and Engineering

JIS College of Engineering

Kalyani, West Bengal, India

Abstract– This paper proposes a way of controlling the position of the mouse cursor with a fingertip, without using any additional electronic device. Operations such as clicking and dragging objects can be performed easily with different hand gestures. The proposed system requires only a webcam as an input device. Python and OpenCV are required to implement the software. The output of the webcam is displayed on the system's screen so that it can be further calibrated by the user. The Python dependencies used to implement this system are NumPy, Autopy and MediaPipe.

Keywords:- OpenCV, Autopy, MediaPipe, NumPy, Calibrated, Gesture.

I. INTRODUCTION

With the developing technologies of the twenty-first century, the virtual reality devices that we use in daily life are becoming more compact with wireless technologies like Bluetooth. This paper presents an AI virtual mouse system that takes hand gestures as input and detects fingertip movement to perform mouse operations on a computer by using computer vision. The main objective of the proposed system is to perform mouse operations such as single click, double click, drag and scroll by employing a built-in camera or a webcam instead of a traditional mouse. Hand gesture and fingertip detection using computer vision serves as a form of Human-Computer Interaction (HCI). With the AI virtual mouse system, we can track hand gestures and fingertips by using a built-in camera or webcam and perform mouse cursor operations such as scrolling, single click and double click. A wireless or Bluetooth mouse is not purely wireless: it still needs hardware such as the mouse itself, a small dongle attached to the PC, and a battery cell to power the mouse. In this system, by contrast, the user controls the mouse operations with his or her hand. During this time, the camera captures images, stores them temporarily, processes the frames and then recognizes the predefined hand gesture operations.

The Python programming language is used for developing this kind of virtual mouse system, and the OpenCV library is additionally used for computer vision. The system makes use of well-known Python libraries such as MediaPipe and Autopy for tracking the hands and fingertips in real time; the Pynput and PyAutoGUI packages were also used for tracking the finger travelling across the screen and performing operations such as left-click, right-click, drag and scroll. The results showed a very high accuracy level for the proposed model. The proposed model can also work in a dark environment and at a distance of 60 cm from the camera in real-world applications, using only a CPU without a GPU.

II. ALGORITHM USED FOR HAND TRACKING

A. Mediapipe

MediaPipe is a cross-platform, open-source framework by Google that is mostly used for building multimodal pipelines in machine learning. It is particularly useful for cross-platform development work, since the framework is built to process time-series data such as video and audio. The MediaPipe framework is multimodal, meaning that it can be applied to face detection, face mesh, iris detection, pose detection, hand detection, hair segmentation, object detection, motion tracking and Objectron. It is well suited to developers for building, analyzing and designing system performance in the form of graphs, and it has also been used for developing applications and systems across platforms (Android, iOS, web, edge devices).

The steps in our proposed system use the MediaPipe framework in a pipeline configuration. This pipeline can be created and run on various platforms, allowing scalability across mobile and desktop systems. The MediaPipe package provides three main parts: tools for performance evaluation, a framework for retrieving and processing sensor data, and a set of reusable components. MediaPipe processing takes place inside a graph, which defines how packets flow between nodes.
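The notion of packets flowing between the nodes of a graph can be illustrated with a small toy sketch. It does not use MediaPipe's API; the names Packet, Node and run_demo, the three processing steps and the fake frames are all assumptions made for illustration. Each node applies one function to the incoming packet, preserves its timestamp, and forwards the result along its outgoing streams.

```python
from dataclasses import dataclass
from typing import Any, Callable, List


@dataclass
class Packet:
    """A unit of data flowing through the graph, tagged with a timestamp."""
    timestamp: int
    data: Any


class Node:
    """A processing step in the graph (hypothetical, not a MediaPipe class)."""

    def __init__(self, name: str, fn: Callable[[Any], Any]) -> None:
        self.name = name
        self.fn = fn
        self.outputs: List["Node"] = []

    def connect(self, other: "Node") -> "Node":
        """Add a stream from this node to `other`; return `other` for chaining."""
        self.outputs.append(other)
        return other

    def process(self, packet: Packet, sink: List[Packet]) -> None:
        """Transform the packet, keep its timestamp, and pass it downstream."""
        result = Packet(packet.timestamp, self.fn(packet.data))
        if not self.outputs:
            # Terminal node: collect the final packet.
            sink.append(result)
        for node in self.outputs:
            node.process(result, sink)


def run_demo() -> List[Packet]:
    # Illustrative three-stage pipeline over fake "frames" (lists of RGB tuples).
    source = Node("to_gray", lambda frame: [sum(px) // 3 for px in frame])
    threshold = source.connect(Node("threshold", lambda gray: [v > 127 for v in gray]))
    threshold.connect(Node("count_bright", lambda mask: sum(mask)))

    frames = [
        [(255, 255, 255), (0, 0, 0)],
        [(200, 200, 200), (130, 140, 150)],
    ]
    sink: List[Packet] = []
    for timestamp, frame in enumerate(frames):
        source.process(Packet(timestamp, frame), sink)
    return sink


if __name__ == "__main__":
    for packet in run_demo():
        print(packet.timestamp, packet.data)
```

Keeping the timestamp on every packet is what lets a downstream node know which input frame a result belongs to, which is the role timestamps play in a real-time graph.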

IJISRT21NOV634 www.ijisrt.com 940


A pipeline is a graph that consists of components called calculators, where each calculator is connected by streams through which the data packets flow. Developers can replace or define custom calculators anywhere in the graph to create their own applications. The calculators and streams together form a data-flow diagram. The graph (Figure 1) is built using the MediaPipe module. Each calculator runs as soon as its input timestamps become available; the real-time graph system determines when that happens. The hand landmark model consists of 21 locating points, as shown in Figure 2.

Fig. 1: MediaPipe hand recognition flowchart

B. Autopy

Autopy is a cross-platform GUI automation module for Python. In the proposed system it works alongside the finger tracking, which reports which finger is up and which is down as an input of 1s and 0s. This information is combined with the output of the MediaPipe module to produce the appropriate action. OpenCV then visualizes the result and builds the annotated frame from the image.

C. OpenCv

OpenCV (Open Source Computer Vision Library) is a library of programming functions, written mainly in C++, aimed at computer vision. It was introduced by Intel and is released under the Apache license. The library is cross-platform and free to use, and it provides GPU acceleration for real-time operation. OpenCV is used in a wide range of areas, such as 2D and 3D feature toolkits, facial and gesture recognition systems, and mobile robotics.

III. METHODOLOGY

A. Importing the necessary library:

First, install and import all the necessary libraries in the preferred IDE. We need to install the opencv, numpy, mediapipe and autopy packages. After successfully installing all the libraries in PyCharm, the next step is to import them into the code so that we can use them in our system.

B. Initializing the capturing device:

Our next task is to initialize the connected image capture device. We use the system's primary camera via cap = cv2.VideoCapture(0). Any secondary image capture device that is connected is not used by this program.

C. Capturing the frames and processing:

The proposed AI virtual mouse system uses the primary camera to capture frames. Each video frame is converted from BGR to RGB format in order to find the hand in the frame. This color conversion is done by the following code:

    # Read one frame from the camera; check is False if the read failed
    check, img = cap.read()
    # MediaPipe expects RGB input, while OpenCV delivers BGR
    imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
    lmlist = handLandmarks(imgRGB)
    if len(lmlist) != 0:
        finger = fingers(lmlist)
        x1, y1 = lmlist[8][1:]    # index fingertip (landmark 8)
        x2, y2 = lmlist[12][1:]   # middle fingertip (landmark 12)
        # Map the camera position (with a 75-pixel margin) to screen coordinates
        x3 = numpy.interp(x1, (75, 640 - 75), (0, wScr))
        y3 = numpy.interp(y1, (75, 480 - 75), (0, hScr))

D. Initializing mediapipe and capture window:

After that, we initialize MediaPipe, which is responsible for tracking the hand gestures and other actions. We then set the minimum confidence for hand detection and the minimum confidence for hand tracking with mainHand = initHand.Hands(min_detection_confidence=0.8, min_tracking_confidence=0.8). This means that a detection or tracking result for the main hand is accepted only when the model's confidence is at least 0.8 (80%). MediaPipe then draws connecting lines across the palm and along the fingers, which track finger movement and link each finger to its neighbor. Next, we define the hand position in 2D by its x-axis and y-axis values. With the help of these axes we keep track of which finger is up and which is down. We also keep a record of the previous frame's x-axis and y-axis values, along with the finger states in the form of 0 and 1.

Fig. 2: Coordinates or landmarks in the hand using MediaPipe
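The numpy.interp calls in the frame-processing code of Section III-C map a fingertip position inside a reduced region of the 640 x 480 camera frame onto the full screen, and Section III-D mentions keeping the previous frame's x and y values. A minimal pure-Python sketch of both ideas follows, assuming the 75-pixel margin from the code above; the function names, the 1920 x 1080 screen size in the usage lines and the smoothing factor of 7 are hypothetical choices, not values confirmed by the paper.

```python
from typing import Tuple

CAM_W, CAM_H = 640, 480  # camera frame size used in the paper's code
MARGIN = 75              # frame margin used in the paper's code


def interp(value: float, src: Tuple[float, float], dst: Tuple[float, float]) -> float:
    """Linear interpolation between two points, clamped at both ends
    (the same behaviour numpy.interp has for a two-point table)."""
    lo, hi = src
    out_lo, out_hi = dst
    if value <= lo:
        return float(out_lo)
    if value >= hi:
        return float(out_hi)
    return out_lo + (value - lo) * (out_hi - out_lo) / (hi - lo)


def map_to_screen(x: float, y: float, screen_w: int, screen_h: int) -> Tuple[float, float]:
    """Map a camera-frame position to screen coordinates.

    Positions inside the margin are clamped to the screen edge, so the
    user can reach the screen border without leaving the camera view.
    """
    x3 = interp(x, (MARGIN, CAM_W - MARGIN), (0, screen_w))
    y3 = interp(y, (MARGIN, CAM_H - MARGIN), (0, screen_h))
    return x3, y3


def smooth(previous: float, target: float, factor: float = 7.0) -> float:
    """Move only a fraction of the way from the previous value to the target.

    The factor is an assumed tuning value: larger means a steadier but
    slower cursor.
    """
    return previous + (target - previous) / factor


if __name__ == "__main__":
    x3, y3 = map_to_screen(320, 240, 1920, 1080)
    print(x3, y3)                # centre of the frame maps to centre of the screen
    print(smooth(0.0, x3))       # one smoothing step from the previous x value
```

Because a webcam image is mirrored relative to the user, the horizontal coordinate is typically inverted before the cursor is moved, which is what the expression wScr - cX in the paper's autopy.mouse.move call does.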


E. Defining hand landmarks:

After confirming the hand axis, our next task is to capture the full set of hand landmarks. We capture the hand landmarks using the 21 predefined MediaPipe locating points (Figure 2). These 21 joints or locating points are tracked by MediaPipe. If no landmark is found, we print the default value, which is empty; this means that no action needs to be performed. Otherwise, it returns the coordinate values for the X, Y, and Z axes. The thumb is not included in the hand landmark region. We loop over the 21 points and return the tip point values, drawing graph lines between the fingers. When a finger is folded or lowered, the corresponding graph lines move down with it. The gap between the fingers is not important at this point; it can be as large as 5 cm. Otherwise, the program goes back to its initial state of searching for the hand. After locating the height, width, and center of the hand landmarks, we convert the decimal (normalized) coordinates into positions relative to each image. Once all the hand landmarks are captured, a green rectangular box appears around the hand, within which we can perform the mouse operations.

Fig. 3: Capturing the hand landmarks

F. Defining the finger landmarks:

After initializing the axis points, we define the hand landmarks, which occupy the green box, together with the finger height and width. The height and width are measured from the captured image frame, and the number of raised and lowered fingers is counted from their movement. We make use of only three fingers. As in the previous step, the thumb is not included in the hand landmark region, the 21 points are looped over to return the tip point values, and the decimal coordinates are converted relative to each image. Here we create an array of size 4 that stores the four index values of the different fingers. The tip IDs considered are the landmark indices 4, 8, 12, 16, and 20. We then check two conditions: (1) whether the thumb is up and (2) whether the fingers other than the thumb are up. For condition (1), we check:

    if landmarks[tipIds[0]][1] > landmarks[tipIds[0] - 1][1]:

The result is then appended to fingerTips and control returns to the finger landmark section. If condition (2) is satisfied, the program checks which fingers are up by their corresponding index IDs:

    for id in range(1, 5):
        if landmarks[tipIds[id]][2] < landmarks[tipIds[id] - 3][2]:
            fingerTips.append(1)
        else:
            fingerTips.append(0)

Otherwise, it returns to the fingertip function.

Color convert: Hand contours are the curves through the points extracted from the segmented hand image. In this section, contours are detected using the Moore neighbor algorithm. After extracting the contour from the binary image, the palm and finger region is selected. If the second condition is satisfied, that is, if a finger other than the thumb is up, the code proceeds to change the color. First we read the frame from the camera and change the format of the frame from BGR to RGB.

    if len(lmList) != 0:
        x1, y1 = lmList[8][1:]
        x2, y2 = lmList[12][1:]

This gets the x and y values at landmark indices 8 and 12. The slice [1:] skips the landmark index stored at the start of each entry because it begins at position 1. We then check whether the pointing finger is up and the thumb is down. If this condition is true, we perform two operations: (1) convert the width of the window relative to the screen width and (2) convert the height of the window relative to the screen height. We also keep track of the previous values of X and Y. The call autopy.mouse.move(wScr-cX, cY) moves the mouse cursor to the x3 and y3 values while inverting the horizontal direction. The condition if finger[1] == 0 and finger[0] == 1: checks whether the pointer finger is down and the thumb is up, in which case a left click is performed. At the end of this process, the obtained pixels are stored in an ordered array. These values are used for gesture detection. The following code helps to detect the color region:

    # Read a frame and convert it from BGR (OpenCV default) to RGB
    check, img = cap.read()
    imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
    lmlist = handLandmarks(imgRGB)

    if len(lmlist) != 0:
        x1, y1 = lmlist[8][1:]
        x2, y2 = lmlist[12][1:]
        # Map the frame position (inside a 75-pixel margin) to the screen size
        x3 = numpy.interp(x1, (75, 640 - 75), (0, wScr))
        y3 = numpy.interp(y1, (75, 480 - 75), (0, hScr))

    if results.multi_hand_landmarks:
        for num, hand in enumerate(results.multi_hand_landmarks):
            mp_drawing.draw_landmarks(
                image, hand, mp_hands.HAND_CONNECTIONS,
                mp_drawing.DrawingSpec(color=(121, 22, 76), thickness=2, circle_radius=4),
                mp_drawing.DrawingSpec(color=(250, 44, 250), thickness=2, circle_radius=2),
            )
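The finger-state check and the frame-to-screen conversion described in this section can be sketched in plain Python without a camera. This is an illustration under stated assumptions, not the authors' implementation: the names `fingers_up` and `map_to_screen` are hypothetical, each landmark is assumed to be a `[id, x, y]` entry in pixel coordinates with y growing downward, and the linear mapping is written out by hand in place of `numpy.interp` so that the block has no dependencies.

```python
# Hypothetical helpers; landmark entries are assumed to be [id, x, y].
TIP_IDS = [4, 8, 12, 16, 20]  # thumb, index, middle, ring, little fingertips


def fingers_up(landmarks, tip_ids=TIP_IDS):
    """Return five 0/1 flags, one per finger (1 = raised)."""
    if len(landmarks) < 21:
        return []  # no complete hand detected, so no action is taken
    # Thumb: compare the x value of the tip with the joint just below it.
    thumb_tip = landmarks[tip_ids[0]]
    thumb_joint = landmarks[tip_ids[0] - 1]
    states = [1 if thumb_tip[1] > thumb_joint[1] else 0]
    # Other fingers: a raised tip has a smaller y than the joint three
    # landmarks below it.
    for i in range(1, 5):
        tip = landmarks[tip_ids[i]]
        base = landmarks[tip_ids[i] - 3]
        states.append(1 if tip[2] < base[2] else 0)
    return states


def map_to_screen(x, y, screen_w, screen_h, frame_w=640, frame_h=480, margin=75):
    """Linearly map a frame position inside the margin box to screen pixels."""
    def interp(value, lo, hi, out_hi):
        value = min(max(value, lo), hi)  # clamp to the box edges
        return (value - lo) / (hi - lo) * out_hi

    return (interp(x, margin, frame_w - margin, screen_w),
            interp(y, margin, frame_h - margin, screen_h))


# Synthetic hand: every landmark at (100, 300) except a raised index fingertip.
hand = [[i, 100, 300] for i in range(21)]
hand[8] = [8, 100, 100]
print(fingers_up(hand))                      # [0, 1, 0, 0, 0]
print(map_to_screen(320, 240, 1920, 1080))   # (960.0, 540.0)
```

The 640 x 480 frame size and the 75-pixel margin are the values that appear in the paper's own snippet; the 1920 x 1080 screen size is only an example.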


proposed system is shown Figure 4.To explore working

condition of gestures with real-time tracking, we investigate

this system in some complicated cases, e.g., maximum

distant from the camera, low light condition, dark or busy

background during the tracking. The proposed can detect one

person hand at a time .Unlike existing systems, this study

uses only single CPU and does not require any external GPU

system or special devices.

For performing cursor moving operationsif the index

finger with Tipid=1 is up and middle perform no action, as

shows in Figure 5.

Fig. 5 : Gesture for cursor movement

For performing left click index finger with tipid=1 and

middle finger with tipId=2 both are up and difference

between two figure is 40pixles, as shows in Figure 6.

Fig. 6 : Gesture for left click

For performing right click if both the index finger

tipid=1 and middle finger index id=2 are up and the distance

Fig. 4 : Flowchart of the proposed AI virtual mouse system between the two fingers is 40pixels, as shows in figure7.

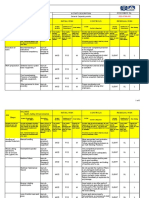

IV. EXPERIMENTAL RESULTS

In this paper, we are proposed a finger gesture based

mouse system. The hand region of image frame and the

center of the palm are first extracted from 3D structured

image by the mediapipe framework. Then hand contours are

extracted and show a graph line in 21 region point in hand.

The autopy algorithm keeps tracks the finger movement and

count the up finger by FingertipID python variable. Finally,

to control the mouse cursor based on the finger location and

their gesture. In our research we are considering the four

function: cursor movement, left click, right click and scroll

up-down. The fully explained working flow diagram of our Fig. 7: Gesture for right click
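The click gestures depend on which fingers are raised and on the pixel distance between two fingertips. A minimal sketch of that distance test follows. The function names are hypothetical, the strict "less than" comparison is an assumption (the text only quotes the value), and the 40-pixel default is simply the figure given in the gesture descriptions.

```python
import math


def fingertip_distance(p1, p2):
    """Euclidean distance in pixels between two (x, y) fingertip positions."""
    return math.hypot(p2[0] - p1[0], p2[1] - p1[1])


def fingers_close(index_tip, middle_tip, threshold_px=40):
    """True when the two fingertips are closer than the threshold.

    The strict '<' comparison is an assumption made for this sketch.
    """
    return fingertip_distance(index_tip, middle_tip) < threshold_px


print(fingertip_distance((100, 100), (130, 140)))  # 50.0
print(fingers_close((100, 100), (110, 110)))       # True
```

In a full program this test would be combined with the list of raised fingers, so that a click is triggered only when both the required fingers are up and the distance condition holds.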


The scroll up and down operation is performed if the index finger with tipId=1 and the middle finger with tipId=2 are both up and the distance between the two fingers is greater than 40 pixels, as shown in Figure 8.

Fig. 8: Gesture for scroll operation

We suggest performing the scroll operation with two fingers for smooth operation, although in this system it is also possible to scroll with three fingers. With three fingers, it is very hard to differentiate between two-finger and three-finger gestures if the gestures are fast.

| Fingertip gesture | Mouse function performed | Accuracy (%) |
|---|---|---|
| Tip Id 1 or both tip Ids 1 and 2 are up | Cursor movement | 100 |
| Tip Ids 0 and 1 are up and the distance between the fingers is <15 | Left click | 95 |
| Tip Ids 0 and 1 are up and the distance between the fingers is <20 | Right click | 98 |
| Tip Ids 0 and 1 are up and the distance between the fingers is >20 and both fingers are moved down | Scroll up | 100 |
| Tip Ids 0 and 1 are up and the distance between the fingers is <20 and both fingers are moved up | Scroll down | 100 |
| All five tip Ids 0, 1, 2, 3, 4 are up | No action | 100 |
| Result | | 100 |

Table 1: Experimental results

Table 1 shows the experimental test results of our AI virtual mouse system. The results show that there is no significant difference between physical mouse and virtual mouse operation. In our proposed system we can perform mouse operations with 100 percent accuracy. The results do not show any difference between faint light and normal light. We first consider the fingertip tracking performance under different lighting conditions, with noisy backgrounds, and in distant tracking conditions. In this analysis we record the performance of rapid gestures together with their percentage of accuracy. In Figure 9, the graph shows the accuracy level of the different actions performed in our virtual mouse system. In Figure 10, our experimental results are compared with other approaches that use gestures for virtual mouse systems. In our proposed system we can perform mouse operations with high accuracy.

Fig. 9: Graph of accuracy for different performed actions (categories: mouse movement, left button click, right button click, scroll up function, scroll down function, no action performed; accuracy axis from 92 to 102)

Fig. 10: Accuracy graph for different available mouse systems (RGB-D Images and Finger Tip…: 95; Gesture based Virtual Mouse: 69; Palm and finger recognition…: 78; Our Proposed AI Virtual Mouse: 100)

V. APPLICATIONS

The AI virtual mouse system is beneficial for several applications: it can be used in situations where a physical mouse cannot be used, it can reduce the space and cost required for a physical mouse, and it improves human-computer interaction. Major applications:

The proposed model has an accuracy of 99%, which is far better than that of other proposed virtual mouse models.

In the COVID-19 situation, it is not safe to use physical devices by touching them because doing so may spread the virus, so the proposed AI virtual mouse system can be used to control the PC mouse functions without using a physical mouse.

The system can be modified and used to control automation and robot systems without the use of devices.

The AI virtual mouse can be used to play augmented reality and computer games without wired or wireless mouse devices.

In the field of robotics, the proposed system, like other HCI systems, can be used for controlling robots.

In the field of design and architecture, this system can be used for designing virtually.


VI. CONCLUSION

This paper presented a new AI virtual mouse method using OpenCV, AutoPy, and MediaPipe, in which fingertip movements in front of a camera are used to interact with the computer without any physical device. The approach demonstrated high accuracy and highly accurate gesture estimation in practical applications. The proposed method overcomes the limitations of existing virtual mouse systems. It has many advantages, e.g., working well in low light as well as in changing light conditions with complex backgrounds, and tracking fingertips of different shapes and sizes with the same accuracy. The experimental results show that the proposed system can perform very well in real-time applications. We also intend to add new gestures to make the system easier to handle and to interact with other smart systems. It is possible to enrich the tracking system by using machine learning algorithms such as OpenPose. It is also possible to include body, hand, and facial key points for different gestures.

REFERENCES

[1] J. Katona, “A review of human–computer interaction and video game research fields in cognitive InfoCommunications,” Applied Sciences, vol. 11, no. 6, p. 2646, 2021.

[2] D. L. Quam, “Gesture recognition with a DataGlove,” IEEE Conference on Aerospace and Electronics, vol. 2, pp. 755–760, 1990.

[3] D.-H. Liou, D. Lee, and C.-C. Hsieh, “A real time hand gesture recognition system using motion history image,” in Proceedings of the 2010 2nd International Conference on Signal Processing Systems, July 2010.

[4] S. U. Dudhane, “Cursor system using hand gesture recognition,” IJARCCE, vol. 2, no. 5, 2013.
