Sorting Algorithms

LP&ZT 2005

Sorting Algorithms

Having to sort a list is an issue that comes up all the time when you are writing programs. Sorting algorithms are a standard topic in introductory courses in Computer Science, not only because sorting itself is an important issue, but also because it is particularly suited to demonstrating that there can be several very different solutions (algorithms) to the same problem, and that it can be useful and instructive to compare these alternative approaches. In this lecture, we are going to introduce a couple of different sorting algorithms, discuss their implementation in Prolog, and analyse their complexity.

Ulle Endriss (ulle@illc.uva.nl)

Aim

We want to implement a predicate that will take an ordering relation and an unsorted list and return a sorted list. Examples:

    ?- sort(<, [3,8,5,1,2,4,6,7], List).
    List = [1, 2, 3, 4, 5, 6, 7, 8]
    Yes

    ?- sort(>, [3,8,5,1,2,4,6,7], List).
    List = [8, 7, 6, 5, 4, 3, 2, 1]
    Yes

    ?- sort(is_bigger, [horse,elephant,donkey], List).
    List = [elephant, horse, donkey]
    Yes

Auxiliary Predicate to Check Orderings

We are going to use the following predicate to check whether two given terms A and B are ordered with respect to the ordering relation Rel supplied:

    check(Rel, A, B) :-
        Goal =.. [Rel,A,B],
        call(Goal).

Remark: In SWI Prolog, you could also use just Goal instead of call(Goal) in the last line of the program.

Here are two examples of check/3 in action:

    ?- check(is_bigger, elephant, monkey).
    Yes

    ?- check(<, 7, 5).
    No
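To try check/3 in isolation, the sketch below supplies a small knowledge base for is_bigger/2. The bigger/2 facts and the is_bigger/2 rules are assumptions made for this illustration only; they are not defined in this lecture. The variant check2/3 is also hypothetical: it shows that, for the atom relations used here, the same effect can be obtained with call/3, which appends the two arguments to Rel before calling it.

```prolog
% Assumed facts and rules for is_bigger/2 (illustration only).
bigger(elephant, horse).
bigger(horse, donkey).
bigger(donkey, monkey).

is_bigger(X, Y) :- bigger(X, Y).
is_bigger(X, Y) :- bigger(X, Z), is_bigger(Z, Y).

% check/3 as given in the lecture: build the goal Rel(A,B), then call it.
check(Rel, A, B) :-
    Goal =.. [Rel, A, B],
    call(Goal).

% Hypothetical variant: call/3 adds A and B as extra arguments to Rel.
check2(Rel, A, B) :-
    call(Rel, A, B).

% ?- check(is_bigger, elephant, monkey).    succeeds
% ?- check2(is_bigger, elephant, monkey).   succeeds
% ?- check(<, 7, 5).                        fails
% ?- check2(<, 7, 5).                       fails
```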

Bubblesort

The first sorting algorithm we are going to look into is called bubblesort; the way it operates is supposedly reminiscent of bubbles floating up in a glass of spa rood. This algorithm works as follows:

- Go through the list from left to right until you find a pair of consecutive elements that are ordered the wrong way round. Swap them.
- Repeat the above until you can go through the full list without encountering such a pair. Then the list is sorted.

Try sorting the list [3, 7, 20, 16, 4, 46] this way . . .

Bubblesort in Prolog

This predicate calls swap/3 and then continues recursively. If swap/3 fails, then the current list is sorted and can be returned:

    bubblesort(Rel, List, SortedList) :-
        swap(Rel, List, NewList), !,
        bubblesort(Rel, NewList, SortedList).
    bubblesort(_, SortedList, SortedList).

Go recursively through a list until you find a pair A/B to swap and return the new list, or fail if there is no such pair:

    swap(Rel, [A,B|List], [B,A|List]) :-
        check(Rel, B, A).
    swap(Rel, [A|List], [A|NewList]) :-
        swap(Rel, List, NewList).
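To see the individual swaps, the following sketch records every intermediate list. The predicate bubblesort_trace/3 is a hypothetical helper introduced for this illustration; check/3 and swap/3 are repeated from the lecture so that the block can be loaded on its own.

```prolog
% check/3 and swap/3 as given in the lecture.
check(Rel, A, B) :-
    Goal =.. [Rel, A, B],
    call(Goal).

swap(Rel, [A,B|List], [B,A|List]) :-
    check(Rel, B, A).
swap(Rel, [A|List], [A|NewList]) :-
    swap(Rel, List, NewList).

% bubblesort_trace(+Rel, +List, -Steps): hypothetical helper.
% Steps starts with the input list, contains one list per swap,
% and ends with the sorted list.
bubblesort_trace(Rel, List, [List|Steps]) :-
    swap(Rel, List, NewList), !,
    bubblesort_trace(Rel, NewList, Steps).
bubblesort_trace(_, Sorted, [Sorted]).

% ?- bubblesort_trace(<, [3,7,20,16,4,46], Steps).
% Steps = [[3,7,20,16,4,46], [3,7,16,20,4,46], [3,7,16,4,20,46],
%          [3,7,4,16,20,46], [3,4,7,16,20,46]]
```

Each step restarts the scan from the front of the list, so the four swaps needed for this input cost four separate scans plus a final scan that finds nothing to swap.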

Examples

Just to prove that it really works:

    ?- bubblesort(<, [5,3,7,5,2,8,4,3,6], List).
    List = [2, 3, 3, 4, 5, 5, 6, 7, 8]
    Yes

    ?- bubblesort(is_bigger, [donkey,horse,elephant], List).
    List = [elephant, horse, donkey]
    Yes

    ?- bubblesort(@<, [donkey,horse,elephant], List).
    List = [donkey, elephant, horse]
    Yes

An Improvement

The version of bubblesort we have given before can be improved upon. For the version presented, we know that we are going to have to do a lot of redundant comparisons: Suppose we have just swapped elements 100 and 101. Then in the next round, the earliest we are going to find an unordered pair is after 99 comparisons (because the first 99 elements have already been sorted in previous rounds). This problem can be avoided by continuing to swap elements and only returning to the front of the list once we have reached its end. The Prolog implementation is just a little more complicated . . .

Improved Bubblesort in Prolog

    bubblesort2(Rel, List, SortedList) :-
        swap2(Rel, List, NewList),    % this now always succeeds
        List \= NewList, !,           % check there's been a swap
        bubblesort2(Rel, NewList, SortedList).
    bubblesort2(_, SortedList, SortedList).

    swap2(Rel, [A,B|List], [B|NewList]) :-
        check(Rel, B, A),
        swap2(Rel, [A|List], NewList).    % continue!
    swap2(Rel, [A|List], [A|NewList]) :-
        swap2(Rel, List, NewList).
    swap2(_, [], []).    % new base case: reached end of list
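As a small illustration of what a single pass of swap2/3 does, the sketch below runs one pass over the example list from the bubblesort slide. The wrapper one_pass/3 is hypothetical; it uses once/1 so that only the first solution of swap2/3 is considered, which is the one bubblesort2/3 commits to whenever a swap has taken place.

```prolog
% check/3 and swap2/3 as given in the lecture.
check(Rel, A, B) :-
    Goal =.. [Rel, A, B],
    call(Goal).

swap2(Rel, [A,B|List], [B|NewList]) :-
    check(Rel, B, A),
    swap2(Rel, [A|List], NewList).
swap2(Rel, [A|List], [A|NewList]) :-
    swap2(Rel, List, NewList).
swap2(_, [], []).

% one_pass(+Rel, +List, -NewList): hypothetical wrapper,
% keeps only the first solution of swap2/3.
one_pass(Rel, List, NewList) :-
    once(swap2(Rel, List, NewList)).

% ?- one_pass(<, [3,7,20,16,4,46], NewList).
% NewList = [3, 7, 16, 4, 20, 46]
```

In this single pass the element 20 is carried past both 16 and 4, whereas the original version would have returned to the front of the list after the first of these two swaps.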

Complexity Analysis

Here we are only going to look into the time complexity (rather than the space complexity) of sorting algorithms. Throughout, let n be the length of the list to be sorted. This is the obvious parameter by which to measure the problem size. We are going to measure the complexity of an algorithm in terms of the number of primitive comparison operations (i.e. calls to check/3 in Prolog) required by that algorithm to sort a list of length n. This is a reasonable approximation of actual runtimes. As with search algorithms earlier on in the course, we are going to be interested in what happens to the complexity of solving a problem with a given algorithm when we increase the problem size. Will it be exponential in n? Or linear? Or something in between?
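One way to make this measure concrete is to let check/3 count its own calls. The sketch below is only an illustration under stated assumptions: the dynamic fact comparisons/1 and the helper count_comparisons/2 are hypothetical names, not part of the lecture code, and the counter is kept in the Prolog database via retract/assertz. The improved bubblesort is repeated so that the block can be loaded on its own.

```prolog
:- dynamic comparisons/1.
comparisons(0).

% check/3 from the lecture, extended so that every call
% increments the (assumed) global counter comparisons/1.
check(Rel, A, B) :-
    retract(comparisons(N)),
    N1 is N + 1,
    assertz(comparisons(N1)),
    Goal =.. [Rel, A, B],
    call(Goal).

% Improved bubblesort, as given in the lecture.
bubblesort2(Rel, List, SortedList) :-
    swap2(Rel, List, NewList),
    List \= NewList, !,
    bubblesort2(Rel, NewList, SortedList).
bubblesort2(_, SortedList, SortedList).

swap2(Rel, [A,B|List], [B|NewList]) :-
    check(Rel, B, A),
    swap2(Rel, [A|List], NewList).
swap2(Rel, [A|List], [A|NewList]) :-
    swap2(Rel, List, NewList).
swap2(_, [], []).

% count_comparisons(+Goal, -Count): hypothetical helper.
% Resets the counter, runs Goal, and reports the number of check/3 calls.
count_comparisons(Goal, Count) :-
    retractall(comparisons(_)),
    assertz(comparisons(0)),
    call(Goal),
    comparisons(Count).

% ?- count_comparisons(bubblesort2(<, [10,9,8,7,6,5,4,3,2,1], _), Count).
% Count = 90
```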

Big-O Notation: Recap

Let $f : \mathbb{N} \to \mathbb{N}$ and $g : \mathbb{N} \to \mathbb{N}$ be two functions mapping natural numbers to natural numbers.

Think of $f$ as computing, for any problem size $n$, the worst-case time complexity $f(n)$. This may be a rather complicated function.

Think of $g$ as a function that may be a good approximation of $f$ and that is more convenient when speaking about complexities.

The Big-O Notation is a way of making the idea of a suitable approximation mathematically precise. We say that $f(n)$ is in $O(g(n))$ iff there exist an $n_0 \in \mathbb{N}$ and a $c \in \mathbb{R}^+$ such that $f(n) \le c \cdot g(n)$ for all $n \ge n_0$. That is, from some $n_0$ onwards, the difference between $f$ and $g$ will be at most some constant factor $c$.

Examples

(1) Let $f(n) = 5 \cdot n^2 + 20$. Then $f(n)$ is in $O(n^2)$. Proof: Use $c = 6$ and $n_0 = 5$.

(2) Let $f(n) = n + 1000000$. Then $f(n)$ is in $O(n)$. Proof: Use $c = 2$ and $n_0 = 1000000$ (or vice versa).

(3) Let $f(n) = 5 \cdot n^2 + 20$. Then $f(n)$ is also in $O(n^3)$, but this is not very interesting. We want complexity classes to be sharp.

(4) Let $f(n) = 500 \cdot n^{200} + n^{17} + 1000$. Then $f(n)$ is in $O(2^n)$. Proof: Use $c = 1$ and $n_0 = 3000$. In general, exponential functions always grow much faster than any polynomial function. So $O(2^n)$ is not at all a sharp complexity class for $f$. A better choice would be $O(n^{200})$.
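The constants suggested in examples (1) and (2) can be checked directly against the definition of Big-O. The following short derivation is a sketch of that check, for natural numbers $n$:

```latex
\begin{align*}
\text{(1)}\quad 5n^2 + 20 \le 6n^2 &\iff 20 \le n^2 \iff n \ge 5, \\
\text{(2)}\quad n + 1000000 \le 2n &\iff 1000000 \le n .
\end{align*}
```

So in both cases the inequality $f(n) \le c \cdot g(n)$ holds from the stated $n_0$ onwards.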

Complexity of Bubblesort

How many comparisons does bubblesort perform in the worst case? Suppose we are using the improved version of bubblesort . . .

In the worst case, the list is presented exactly the wrong way round, as in the following example:

    ?- bubblesort2(<, [10,9,8,7,6,5,4,3,2,1], List).

The algorithm will first move 10 to the end of the list, then 9, etc. In each round, we have to go through the full list, i.e. make $n-1$ comparisons. And there are $n$ rounds (one for each element to be moved). Hence, we require $n \cdot (n-1)$ comparisons.

So the complexity of improved bubblesort is $O(n^2)$.

Home entertainment: The complexity of our original version of bubblesort is actually $O(n^3)$. The exact number of comparisons required is $\frac{1}{12} \cdot n \cdot (n-1) \cdot (2n+2) + (n-1)$ . . . try to prove it!
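Both bounds can be read off from the comparison counts using the Big-O definition with $c = 1$ and $n_0 = 1$. The derivation below is a sketch of that step only; it takes the exact count for the original version as given and does not prove it.

```latex
\begin{align*}
n (n-1) &= n^2 - n \;\le\; n^2 , \\
\tfrac{1}{12}\, n (n-1)(2n+2) + (n-1)
  &= \tfrac{1}{6}\,(n^3 - n) + (n-1)
   = \tfrac{1}{6} n^3 + \tfrac{5}{6} n - 1 \;\le\; n^3
   \qquad \text{for all } n \ge 1 .
\end{align*}
```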

Quicksort

The next sorting algorithm we consider is called quicksort. It works as follows (for a non-empty list):

- Select an arbitrary element X from the list.
- Split the remaining elements into a list Left containing all the elements preceding X in the ordering relation, and a list Right containing all the remaining elements.
- Sort Left and Right using quicksort (recursion), resulting in SortedLeft and SortedRight, respectively.
- Return the result: SortedLeft ++ [X] ++ SortedRight.

How fast quicksort runs will depend on the choice of X. In Prolog, we are simply going to select the head of the unsorted list.

Quicksort in Prolog

Sorting the empty list results in the empty list (base case):

    quicksort(_, [], []).

For the recursive rule, we first remove the Head from the unsorted list and split the Tail into those elements preceding Head wrt. the ordering Rel (list Left) and the remaining elements (list Right). Then Left and Right are sorted, and finally everything is put together to return the full sorted list:

    quicksort(Rel, [Head|Tail], SortedList) :-
        split(Rel, Head, Tail, Left, Right),
        quicksort(Rel, Left, SortedLeft),
        quicksort(Rel, Right, SortedRight),
        append(SortedLeft, [Head|SortedRight], SortedList).

Splitting Lists

We still need to implement split/5. This predicate takes an ordering relation, an element, and a list, and returns two lists: one containing the elements from the input list preceding the input element wrt. the input ordering relation, and one containing the remaining elements from the input list (both unsorted).

    split(_, _, [], [], []).
    split(Rel, Middle, [Head|Tail], [Head|Left], Right) :-
        check(Rel, Head, Middle), !,
        split(Rel, Middle, Tail, Left, Right).
    split(Rel, Middle, [Head|Tail], Left, [Head|Right]) :-
        split(Rel, Middle, Tail, Left, Right).

Testing split/5

The following example demonstrates how split/5 works:

    ?- split(<, 20, [18,7,21,15,20,55,7,8,87], X, Y).
    X = [18, 7, 15, 7, 8]
    Y = [21, 20, 55, 87]
    Yes

Quicksort Examples

A couple of examples demonstrating that quicksort works:

    ?- quicksort(>, [2,4,5,3,6,5,1], List).
    List = [6, 5, 5, 4, 3, 2, 1]
    Yes

    ?- quicksort(is_bigger, [elephant,donkey,horse], List).
    List = [elephant, horse, donkey]
    Yes

Complexity of Splitting

To analyse the complexity of quicksort, we first analyse the complexity of splitting, a crucial sub-routine of the algorithm. Given a list L and an element X, how many comparisons are required to divide the elements in L into those that are to be placed to the left and those that are to be placed to the right of X? Let $n$ be the length of [X|L]. Clearly, we require exactly $n-1$ comparison operations (one for each element of L). Hence, the complexity of splitting is $O(n)$.

Complexity of Quicksort

To find out what the complexity of quicksort is, we have to check how often quicksort performs a splitting operation, and on lists of how many elements it does so. A run of quicksort can be visualised as a tree. The height of the tree corresponds to the recursion depth; and the width of the tree corresponds to the work done by the splitting sub-routine at each recursion level . . . This will crucially depend on what elements we select for splitting. Splitting could be either more or less balanced or more or less unbalanced . . .

Extremely Unbalanced Splitting

In the case of extremely unbalanced splitting (say, we always select the smallest element and all other elements end up in the righthand sublist), quicksort has got a complexity of $O(n^2)$.

This situation occurs, for instance, if the input list is already sorted and we always select the head of the list for splitting (as in our Prolog implementation).
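The quadratic bound can be made explicit with a short sum. Recall that splitting around the head of a list of length $m$ costs $m-1$ comparisons. If the smallest element is always selected, quicksort is called in turn on lists of length $n, n-1, \ldots, 1$, so the total number of comparisons is:

```latex
\[
(n-1) + (n-2) + \cdots + 1 + 0
  \;=\; \sum_{k=0}^{n-1} k
  \;=\; \frac{n (n-1)}{2}
  \;\in\; O(n^2)
\]
```

For example, an already sorted list of 7 elements requires $6+5+4+3+2+1 = 21$ comparisons.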

Balanced Splitting

For the case of balanced splitting (the number of elements ending up to the left of the selected element is always roughly equal to the number of elements ending up on the righthand side), the following figure depicts the situation:

[Figure not reproduced: recursion tree of width n]

To find out about the complexity of quicksort in the case of (more or less) balanced splitting, we need to know what the height of such a tree is (with respect to $n$).

Height of a Binary Tree

How high is a binary tree of width $n$? There is 1 root node. Each time we go down one level, the number of nodes per level doubles. On the final level, there are $n$ nodes (= width of the tree). So, how many times do we have to multiply 1 by 2 to get $n$?

$$1 \cdot \underbrace{2 \cdot 2 \cdots 2}_{x} = n \quad\Rightarrow\quad 2^x = n \quad\Rightarrow\quad x = \log_2 n$$

Remark: Logarithms with different bases just differ by a constant factor (e.g. $\log_2 n = 5 \cdot \log_{32} n$). So, in particular, when we use the Big-O Notation, the base of the logarithm does not matter and we are simply going to write $\log n$.
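The constant factor in this example comes from the usual change-of-base rule, sketched here for the bases 2 and 32:

```latex
\[
\log_2 n \;=\; \frac{\log_{32} n}{\log_{32} 2} \;=\; 5 \cdot \log_{32} n ,
\qquad \text{since } 32 = 2^5 \text{ and hence } \log_{32} 2 = \tfrac{1}{5} .
\]
```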

Complexity of Quicksort (cont.)

For balanced splitting, the tree has a height of about $\log n$ and each level requires at most $n$ comparisons, so we end up with an overall complexity of $O(n \log n)$ for quicksort.

In practice, we can usually assume that splitting will occur in a more or less balanced fashion. This is why quicksort is usually regarded as an $O(n \log n)$ algorithm, although we have seen that complexity will be quadratic in the very worst case. The assumption of balancedness is justified, for instance, when the input list is randomly ordered. In general, of course, we cannot make that assumption.

In general, always selecting the head of the input list for splitting may not be a good strategy. In some cases it may be possible to devise a heuristic to decide which element to select (not discussed here).
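The difference between balanced and unbalanced splitting can also be observed by counting calls to check/3. The sketch below is an illustration under stated assumptions: the counter comparisons/1 and the helper count_comparisons/2 are hypothetical names, not part of the lecture code; quicksort/3 and split/5 are repeated from the lecture so that the block can be loaded on its own (append/3 comes from library(lists) in SWI Prolog).

```prolog
:- use_module(library(lists)).   % append/3 (SWI Prolog)
:- dynamic comparisons/1.
comparisons(0).

% check/3 from the lecture, extended to count its calls
% in the (assumed) dynamic fact comparisons/1.
check(Rel, A, B) :-
    retract(comparisons(N)),
    N1 is N + 1,
    assertz(comparisons(N1)),
    Goal =.. [Rel, A, B],
    call(Goal).

% quicksort/3 and split/5 as given in the lecture.
quicksort(_, [], []).
quicksort(Rel, [Head|Tail], SortedList) :-
    split(Rel, Head, Tail, Left, Right),
    quicksort(Rel, Left, SortedLeft),
    quicksort(Rel, Right, SortedRight),
    append(SortedLeft, [Head|SortedRight], SortedList).

split(_, _, [], [], []).
split(Rel, Middle, [Head|Tail], [Head|Left], Right) :-
    check(Rel, Head, Middle), !,
    split(Rel, Middle, Tail, Left, Right).
split(Rel, Middle, [Head|Tail], Left, [Head|Right]) :-
    split(Rel, Middle, Tail, Left, Right).

% count_comparisons(+Goal, -Count): hypothetical helper.
count_comparisons(Goal, Count) :-
    retractall(comparisons(_)),
    assertz(comparisons(0)),
    call(Goal),
    comparisons(Count).

% Already sorted input (extremely unbalanced splitting):
% ?- count_comparisons(quicksort(<, [1,2,3,4,5,6,7], _), Count).
% Count = 21
%
% Input on which the head always splits the rest evenly:
% ?- count_comparisons(quicksort(<, [4,2,1,3,6,5,7], _), Count).
% Count = 10
```

For seven elements the gap is modest (21 versus 10 comparisons), but it widens as the list grows, in line with the quadratic and $n \log n$ bounds.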

Summary: Sorting Algorithms

- Sorting a list is a fundamental algorithmic problem that comes up again and again in Computer Science and AI.
- We have discussed three sorting algorithms: naive bubblesort, improved bubblesort, and quicksort.
- The Prolog implementation of each of these takes an ordering relation and a list as input, and returns the sorted list.
- The complexity of (improved) bubblesort is $O(n^2)$. Slow.
- The complexity of quicksort is $O(n \log n)$, at least under the assumption of reasonably balanced splitting. Fast.
- There are many other sorting algorithms around. Two of them, insert-sort and merge-sort, are also explained in the textbook.

Ulle Endriss (ulle@illc.uva.nl)

24

You might also like

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5824)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1093)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (852)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (590)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (898)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (541)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (349)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (823)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (122)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (403)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- Algorithms: Richard Johnsonbaugh Marcus SchaeferDocument5 pagesAlgorithms: Richard Johnsonbaugh Marcus SchaeferAfras Ahmad0% (2)

- Modelos Estoc Asticos 2: Christian OjedaDocument6 pagesModelos Estoc Asticos 2: Christian OjedaChristian OjedaNo ratings yet

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- Cs170 Solutions ManualDocument66 pagesCs170 Solutions ManualSid NaikNo ratings yet

- Math 1022 Discrete Structure PDFDocument4 pagesMath 1022 Discrete Structure PDFNabin GautamNo ratings yet

- Ds SyllabusDocument6 pagesDs Syllabusarcher2012No ratings yet

- Assignment 2Document2 pagesAssignment 2compiler&automataNo ratings yet

- Java CodingDocument332 pagesJava Codingakbarviveka_16156616100% (4)

- FFT PDFDocument17 pagesFFT PDFMahmoud Handase35No ratings yet

- Design and Analysis of Algorithms PDFDocument15 pagesDesign and Analysis of Algorithms PDFRoop DubeyNo ratings yet

- Bresenham Line Drawing Algorithm,: Course WebsiteDocument47 pagesBresenham Line Drawing Algorithm,: Course Websitejontyrhodes1012237No ratings yet

- Dmoi TabletDocument345 pagesDmoi TabletRinesa RamaNo ratings yet

- Equation of A Parabola W S 3Document3 pagesEquation of A Parabola W S 3api-314332531No ratings yet

- Unit - 3 of Computer ArchitectureDocument59 pagesUnit - 3 of Computer ArchitectureShweta mauryaNo ratings yet

- Assignment3 PDFDocument2 pagesAssignment3 PDFkishalay sarkarNo ratings yet

- Previous Compre MFDS RegularDocument2 pagesPrevious Compre MFDS RegularSunny KumarNo ratings yet

- DocumentDocument8 pagesDocumentIshfaq Ahmad MirNo ratings yet

- Comc2015 Official Solutions enDocument10 pagesComc2015 Official Solutions enAngela Angie BuseskaNo ratings yet

- Important Question DMDocument4 pagesImportant Question DMvedhavarshini100% (1)

- ADA - Question - Bank 2020Document15 pagesADA - Question - Bank 2020TrishalaNo ratings yet

- Data Structures Using C++ 2E: GraphsDocument60 pagesData Structures Using C++ 2E: GraphsBobby StanleyNo ratings yet

- Math Test 2nd QuarterDocument2 pagesMath Test 2nd QuarterMark Kiven MartinezNo ratings yet

- Ece FDS Notes - Unit IDocument20 pagesEce FDS Notes - Unit ISENTHIL RNo ratings yet

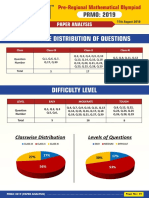

- PRMO 2019 Paper AnalysisDocument3 pagesPRMO 2019 Paper AnalysisarunNo ratings yet

- Basic Arithmetic Operations Short CutsDocument6 pagesBasic Arithmetic Operations Short Cutskarupu31081991No ratings yet

- Graph Theory (Euler+hamilton)Document11 pagesGraph Theory (Euler+hamilton)samiul mugdhaNo ratings yet

- S1 Ch3 Patterns and Sequences QDocument12 pagesS1 Ch3 Patterns and Sequences QAda CheungNo ratings yet

- 2012 Math F2 (Squares, Square Roots, Cubes and Cube Roots)Document1 page2012 Math F2 (Squares, Square Roots, Cubes and Cube Roots)compeilNo ratings yet

- Sudkamp Solutions LibreDocument115 pagesSudkamp Solutions LibreXiang LuNo ratings yet

- Program For Tic Tac Toe GameDocument29 pagesProgram For Tic Tac Toe GameDeepa RangarajanNo ratings yet

- CMO 2009 - Problems and Solutions of 41st Canadian Mathematical Olympiad PDFDocument5 pagesCMO 2009 - Problems and Solutions of 41st Canadian Mathematical Olympiad PDFEduardo MullerNo ratings yet