V11.2

Unit 13. Computer vision fundamentals

Estimated time

00:30

Overview

This unit explains the basic steps of a typical computer vision (CV) pipeline, describes how CV analyzes and processes images, and explores commonly used CV techniques.

© Copyright IBM Corp. 2019, 2021 13-1

Course materials may not be reproduced in whole or in part without the prior written permission of IBM.

Unit objectives

• Describe image representation for computers.

• Describe the computer vision (CV) pipeline.

• Describe different preprocessing techniques.

• Explain image segmentation.

• Explain feature extraction and selection.

• Describe when object recognition takes place.

Computer vision fundamentals © Copyright IBM Corporation 2019, 2021

Figure 13-1. Unit objectives

13.1. Image representation

Image representation

Figure 13-2. Image representation

Topics

• Image representation

• Computer vision pipeline

Figure 13-3. Topics

Image representation

• Images are stored as a 2D array of pixels on computers.

• Each pixel has a certain value representing its intensity.

• Example of grayscale representation:

Image is black and white with shades of gray in between.

Pixel intensity is a number between 0 (black) and 255 (white).

int[][] array = { {255, 170, 170,   0},
                  {220,  80,  80, 170},
                  {255,  80,   0,   0},
                  {175,  20, 170,   0} };

Figure 13-4. Image representation

It is important to understand how images are stored and represented on computer screens. Images

are made of grids of pixels, that is, a two-dimensional (2D) array of pixels, where each pixel has a

certain value representing its intensity. A pixel represents a square of color on the user’s screen.

There are many ways to represent color in images.

If the image is grayscale, that is, black and white with shades of gray in between, the intensity is a

number in the range 0 - 255, where 0 represents black and 255 represents white. The numbers in

between these two values are different intensities of gray.

For example, assume that you selected a small square from the picture in the slide (the part in the red square). The pixels in that square are black, white, and shades of gray. To the right of the picture are the numeric values of these pixels in a 2D array.

Note

The 256 intensity levels are a result of using 8-bit storage (2^8 = 256).
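As a minimal sketch of the grayscale representation described above, the following Java snippet stores the 4x4 patch from the slide as a 2D array and reads individual intensities. The class name `GrayscalePatch` and its helper methods are illustrative assumptions and are not part of the course material.

```java
// Illustrative sketch; the class and method names are hypothetical.
final class GrayscalePatch {
    // The 4x4 patch from the slide: 0 is black, 255 is white.
    static final int[][] PATCH = {
        {255, 170, 170,   0},
        {220,  80,  80, 170},
        {255,  80,   0,   0},
        {175,  20, 170,   0}
    };

    // Returns the intensity at (row, col) after checking the 8-bit range.
    static int intensityAt(int[][] image, int row, int col) {
        int value = image[row][col];
        if (value < 0 || value > 255) {
            throw new IllegalArgumentException("Not an 8-bit intensity: " + value);
        }
        return value;
    }

    // Number of distinct levels that fit in the given number of bits.
    static int levels(int bits) {
        return 1 << bits;
    }

    public static void main(String[] args) {
        System.out.println("Rows: " + PATCH.length + ", columns: " + PATCH[0].length);
        System.out.println("Top-left pixel: " + intensityAt(PATCH, 0, 0));
        System.out.println("Levels in 8 bits: " + levels(8));
    }
}
```

The first index selects the row and the second selects the column, so `PATCH[3][1]` is the second pixel of the last row.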

References:

• http://www.csfieldguide.org.nz/en/chapters/data-representation.html

• https://www.sqa.org.uk/e-learning/BitVect01CD/page_36.htm

• https://www.mathworks.com/help/examples/images/win64/SegmentGrayscaleImageUsingKMeansClusteringExample_01.png

Image representation (cont.)

Example of color representation:

The pixel color is represented as a mix of Red, Green, and Blue.

The pixel intensity becomes either three 2D arrays or one 2D array in which each entry is an object that contains the three RGB color values.

Figure 13-5. Image representation (cont.)

If the image is in color, many color models are available. The best-known model is RGB, where the color of each pixel is represented as a mix of Red, Green, and Blue color channels. The pixel intensity is represented as three 2D arrays, one per color channel, where each color value is in the range 0 - 255. Each value is the intensity of its channel, and the three channels combine to produce all the colors that you see on the screen. The number of color variations that are available in RGB is 256 * 256 * 256 = 16,777,216 possible colors.

Depending on the data structure that is used, the image is stored as one of the following:

• Three 2D arrays, with each 2D array representing the values and intensities for one color. The image is a combination of all three arrays.

• One 2D array, where each entry in the array is an object that contains the three RGB color values.
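The two layouts can be sketched in Java as follows. This is only an illustration under stated assumptions: the names `RgbLayouts`, `Rgb`, `interleave`, and `colorCount` are hypothetical, and real imaging libraries use their own pixel types.

```java
// Illustrative sketch; all names are hypothetical.
final class RgbLayouts {
    // One pixel as an object that holds the three channel values (0-255 each).
    static final class Rgb {
        final int red;
        final int green;
        final int blue;

        Rgb(int red, int green, int blue) {
            this.red = red;
            this.green = green;
            this.blue = blue;
        }
    }

    // Converts three per-channel 2D arrays into one 2D array of Rgb objects.
    static Rgb[][] interleave(int[][] red, int[][] green, int[][] blue) {
        int rows = red.length;
        int cols = red[0].length;
        Rgb[][] image = new Rgb[rows][cols];
        for (int r = 0; r < rows; r++) {
            for (int c = 0; c < cols; c++) {
                image[r][c] = new Rgb(red[r][c], green[r][c], blue[r][c]);
            }
        }
        return image;
    }

    // Number of colors when each of the three channels has this many levels.
    static long colorCount(int levelsPerChannel) {
        return (long) levelsPerChannel * levelsPerChannel * levelsPerChannel;
    }

    public static void main(String[] args) {
        // A 1x2 image: a pure red pixel next to a white pixel.
        int[][] red   = {{255, 255}};
        int[][] green = {{  0, 255}};
        int[][] blue  = {{  0, 255}};
        Rgb[][] image = interleave(red, green, blue);
        System.out.println("Green value of the second pixel: " + image[0][1].green);
        System.out.println("Colors with 256 levels per channel: " + colorCount(256));
    }
}
```

Both layouts hold the same information. The three-array form keeps each channel contiguous, while the object form keeps all values of one pixel together.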

References:

• http://www.csfieldguide.org.nz/en/chapters/data-representation.html

• https://www.sqa.org.uk/e-learning/BitVect01CD/page_36.htm

• https://www.mathworks.com/company/newsletters/articles/how-matlab-represents-pixel-colors.html

13.2. Computer vision pipeline

Computer vision pipeline

Figure 13-6. Computer vision pipeline

Topics

• Image representation

• Computer vision pipeline

Figure 13-7. Topics

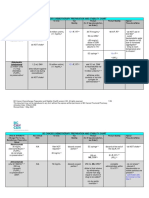

Computer vision pipeline

• The steps and functions that are included are highly dependent on the

application.

• Here is a conventional visual pattern recognition pipeline.

Image Acquisition → Pre-Processing → Segmentation → Feature Extraction & Selection → Classification

Figure 13-8. Computer vision pipeline

The steps and functions that are included in a CV pipeline depend heavily on the application. The application might be a stand-alone part of a system that applies only a certain step of the pipeline, or it might be a larger, end-to-end application that takes certain images and then applies all of the steps and functions in the pipeline.
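One way to picture this flexibility is to treat each step as a function and the pipeline as a list of such functions. The Java sketch below assumes, for simplicity, that every stage maps an image to an image, which is not true of the later stages of a real pipeline (they produce features and labels). The names `PipelineSketch`, `CLAMP`, and `INVERT` are hypothetical placeholders and do not correspond to steps named in this unit.

```java
import java.util.Arrays;
import java.util.List;
import java.util.function.IntUnaryOperator;
import java.util.function.UnaryOperator;

// Illustrative sketch; all names are hypothetical.
final class PipelineSketch {
    // Applies each stage in order. An application may pass one stage or many.
    static int[][] run(int[][] image, List<UnaryOperator<int[][]>> stages) {
        int[][] current = image;
        for (UnaryOperator<int[][]> stage : stages) {
            current = stage.apply(current);
        }
        return current;
    }

    // Builds a new image by applying f to every pixel; the input is not modified.
    static int[][] mapPixels(int[][] image, IntUnaryOperator f) {
        int[][] out = new int[image.length][];
        for (int r = 0; r < image.length; r++) {
            out[r] = Arrays.stream(image[r]).map(f).toArray();
        }
        return out;
    }

    // Placeholder stage: forces every value into the 8-bit range 0-255.
    static final UnaryOperator<int[][]> CLAMP =
        img -> mapPixels(img, v -> Math.max(0, Math.min(255, v)));

    // Placeholder stage: inverts intensities (black becomes white).
    static final UnaryOperator<int[][]> INVERT =
        img -> mapPixels(img, v -> 255 - v);

    public static void main(String[] args) {
        int[][] image = {{0, 300}, {128, -5}};
        // An end-to-end application runs every stage.
        System.out.println(Arrays.deepToString(run(image, Arrays.asList(CLAMP, INVERT))));
        // A stand-alone component runs a single stage.
        System.out.println(Arrays.deepToString(run(image, Arrays.asList(CLAMP))));
    }
}
```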

Reference:

https://www.researchgate.net/publication/273596152_Hardware_Architecture_for_Real-Time_Computation_of_Image_Component_Feature_Descriptors_on_a_FPGA

Computer vision pipeline (cont.)

1. Image acquisition:

• The process of acquiring images and saving them in a digital image

format for processing.

• Images often use common formats, such as .jpeg, .png, and .bmp.

• Images are stored as 2D arrays of pixels according to their color model.

Figure 13-9. Computer vision pipeline (cont.)

1. Image acquisition

Image acquisition is the process of acquiring images and saving them in a digital image format for

processing in the pipeline.

Images are acquired from image sensors, such as commercial cameras. There are other types of

light-sensitive devices that can capture different types of images. Other image sensors include

radar, which uses radio waves for imaging.

Depending on the type of the device, the captured image can be 2D, 3D, or a sequence of images.

These images often use common formats, such as .jpeg, .png, and .bmp.

Images are stored as arrays of pixels according to their color model.

Reference:

https://en.wikipedia.org/wiki/Computer_vision#System_methods

Computer vision pipeline (cont.)

2. Pre-processing:

• Preparing the image for the processing stage

• Examples:

Resizing images

Noise reduction

Contrast adjustment

Figure 13-10. Computer vision pipeline (cont.)

2. Pre-processing:

This stage focuses on preparing the image for the processing stage.

Examples:

• Images are resized as a form of normalization so that all images are the same size.

• Noise reduction removes noise, which represents false information in the image, as shown in the slide.

• Contrast adjustment helps with the detection of image information. Each image of the little girl in the slide shows a different contrast adjustment.
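The three examples can be sketched on a grayscale 2D array as follows. The specific techniques are assumptions chosen for brevity, because the unit does not name any: nearest-neighbor sampling for resizing, a 3x3 mean filter for noise reduction, and a linear stretch for contrast adjustment. The class and method names are hypothetical.

```java
// Illustrative sketch; technique choices and names are assumptions.
final class PreprocessingSketch {
    // Nearest-neighbor resize: each output pixel copies the closest source pixel.
    static int[][] resize(int[][] src, int newRows, int newCols) {
        int[][] out = new int[newRows][newCols];
        for (int r = 0; r < newRows; r++) {
            for (int c = 0; c < newCols; c++) {
                out[r][c] = src[r * src.length / newRows][c * src[0].length / newCols];
            }
        }
        return out;
    }

    // 3x3 mean filter: replaces each pixel with the average of its neighborhood.
    // Border pixels average only the neighbors that lie inside the image.
    static int[][] meanFilter(int[][] src) {
        int rows = src.length;
        int cols = src[0].length;
        int[][] out = new int[rows][cols];
        for (int r = 0; r < rows; r++) {
            for (int c = 0; c < cols; c++) {
                int sum = 0;
                int count = 0;
                for (int dr = -1; dr <= 1; dr++) {
                    for (int dc = -1; dc <= 1; dc++) {
                        int rr = r + dr;
                        int cc = c + dc;
                        if (rr >= 0 && rr < rows && cc >= 0 && cc < cols) {
                            sum += src[rr][cc];
                            count++;
                        }
                    }
                }
                out[r][c] = Math.round((float) sum / count);
            }
        }
        return out;
    }

    // Linear contrast stretch: maps the darkest pixel to 0 and the brightest to 255.
    static int[][] stretchContrast(int[][] src) {
        int min = 255;
        int max = 0;
        for (int[] row : src) {
            for (int v : row) {
                min = Math.min(min, v);
                max = Math.max(max, v);
            }
        }
        int[][] out = new int[src.length][src[0].length];
        for (int r = 0; r < src.length; r++) {
            for (int c = 0; c < src[0].length; c++) {
                // A flat image has no contrast to stretch, so it is copied unchanged.
                out[r][c] = (max == min) ? src[r][c] : (src[r][c] - min) * 255 / (max - min);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        int[][] lowContrast = {{50, 100}, {150, 200}};
        int[][] stretched = stretchContrast(lowContrast);
        System.out.println(stretched[0][1] + " " + stretched[1][0]);
        int[][] enlarged = resize(lowContrast, 4, 4);
        System.out.println(enlarged.length + "x" + enlarged[0].length);
    }
}
```

A mean filter also blurs edges, so the amount of smoothing is a trade-off against the detail that later stages need.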

Image source: https://www.mathworks.com/help/images/contrast-adjustment.html

Reference:

https://en.wikipedia.org/wiki/Computer_vision#System_methods

Computer vision pipeline (cont.)

3. Segmentation:

• Partitioning an image into regions of similarity.

• Grouping pixels and features with similar characteristics together.

• Helps with selecting regions of interest within the images. These

regions can contain objects of interest that we want to capture.

• Segmenting an image into foreground

and background.

(Cremers, Rousson, and Deriche 2007) © 2007 Springer

Figure 13-11. Computer vision pipeline (cont.)

3. Segmentation

The position of the image segmentation phase is not fixed in the CV pipeline. It might be part of the pre-processing phase or follow it (as in our pipeline), or it might be part of the feature extraction and selection phase or follow it.

Segmentation is one of the oldest problems in CV and has the following aspects:

• Partitioning an image into regions of similarity.

• Grouping pixels and features with similar characteristics together.

• Selecting regions of interest within the images. These regions can contain objects of interest that we want to capture.

• Segmenting an image into foreground and background so that further processing can be applied to the foreground.
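Foreground and background separation can be sketched with global thresholding, which is one of the simplest segmentation techniques and is far less sophisticated than the level set method in the cited figure. The sketch assumes that bright pixels are foreground and uses the mean intensity as the threshold. Both choices, and all names, are assumptions made for illustration.

```java
// Illustrative sketch of global thresholding; names and threshold choice are assumptions.
final class ThresholdSegmentation {
    // Mean intensity of the image, used here as a simple threshold.
    static int meanThreshold(int[][] image) {
        long sum = 0;
        int count = 0;
        for (int[] row : image) {
            for (int v : row) {
                sum += v;
                count++;
            }
        }
        return (int) (sum / count);
    }

    // Returns a mask in which true marks foreground (intensity >= threshold).
    static boolean[][] segment(int[][] image, int threshold) {
        boolean[][] mask = new boolean[image.length][image[0].length];
        for (int r = 0; r < image.length; r++) {
            for (int c = 0; c < image[0].length; c++) {
                mask[r][c] = image[r][c] >= threshold;
            }
        }
        return mask;
    }

    // Counts the pixels that were assigned to the foreground region.
    static int countForeground(boolean[][] mask) {
        int count = 0;
        for (boolean[] row : mask) {
            for (boolean isForeground : row) {
                if (isForeground) {
                    count++;
                }
            }
        }
        return count;
    }

    public static void main(String[] args) {
        // The 4x4 grayscale patch from the image representation slide.
        int[][] patch = {
            {255, 170, 170,   0},
            {220,  80,  80, 170},
            {255,  80,   0,   0},
            {175,  20, 170,   0}
        };
        int threshold = meanThreshold(patch);
        boolean[][] mask = segment(patch, threshold);
        System.out.println("Threshold: " + threshold);
        System.out.println("Foreground pixels: " + countForeground(mask));
    }
}
```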

Image citation: Cremers, D., Rousson, M., & Deriche, R. (2007). A review of statistical approaches to level set segmentation: Integrating color, texture, motion and shape. International Journal of Computer Vision, 72(2), 195-215.

References:

• https://en.wikipedia.org/wiki/Computer_vision

• http://szeliski.org/Book/drafts/SzeliskiBook_20100903_draft.pdf

• http://www.cse.iitm.ac.in/~vplab/courses/CV_DIP/PDF/lect-Segmen.pdf

Computer vision pipeline (cont.)

4. Feature extraction and selection:

• Find distinguishing information about the image.

• Examples of image features: a distinct color in an image or a specific shape, such as a line, edge, corner, or image segment.

Figure 13-12. Computer vision pipeline (cont.)

4. Feature extraction and selection

In computer vision, a feature is a measurable piece of data in your image that is unique to a specific object. It can be a distinct color in an image or a specific shape, such as a line, edge, corner, or image segment. A good feature is used to distinguish objects from one another.

The objective is to transform the raw data (the image) into a feature vector so that the learning algorithm learns the characteristics of the object.

Typical examples of such features are:

• Lines and edges: These features are where sharp changes in brightness occur. They represent

the boundaries of objects.

• Corners: These features are points of interest in the image where intersections or changes in brightness happen.

These corners and edges represent points and regions of interest in the image.

A change in brightness in this context refers to a change in the pixel intensity value.
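To make the idea of sharp brightness changes concrete, the following sketch applies the Sobel operator, a common edge detector that the unit itself does not name, and then reduces the image to a two-element feature vector. The choice of features (mean intensity and fraction of edge pixels), the edge threshold, and all names are assumptions made for illustration.

```java
// Illustrative sketch; the Sobel operator and the chosen features are assumptions.
final class EdgeFeatures {
    private static final int[][] SOBEL_X = {{-1, 0, 1}, {-2, 0, 2}, {-1, 0, 1}};
    private static final int[][] SOBEL_Y = {{-1, -2, -1}, {0, 0, 0}, {1, 2, 1}};

    // Approximate gradient magnitude |gx| + |gy| for interior pixels.
    // Border pixels are left at 0 because their 3x3 neighborhood is incomplete.
    static int[][] edgeMagnitude(int[][] image) {
        int rows = image.length;
        int cols = image[0].length;
        int[][] out = new int[rows][cols];
        for (int r = 1; r < rows - 1; r++) {
            for (int c = 1; c < cols - 1; c++) {
                int gx = 0;
                int gy = 0;
                for (int dr = -1; dr <= 1; dr++) {
                    for (int dc = -1; dc <= 1; dc++) {
                        int v = image[r + dr][c + dc];
                        gx += SOBEL_X[dr + 1][dc + 1] * v;
                        gy += SOBEL_Y[dr + 1][dc + 1] * v;
                    }
                }
                out[r][c] = Math.abs(gx) + Math.abs(gy);
            }
        }
        return out;
    }

    // Feature vector: {mean intensity, fraction of pixels whose edge magnitude
    // reaches edgeThreshold}.
    static double[] featureVector(int[][] image, int edgeThreshold) {
        int[][] edges = edgeMagnitude(image);
        long sum = 0;
        int edgePixels = 0;
        int total = 0;
        for (int r = 0; r < image.length; r++) {
            for (int c = 0; c < image[0].length; c++) {
                sum += image[r][c];
                if (edges[r][c] >= edgeThreshold) {
                    edgePixels++;
                }
                total++;
            }
        }
        return new double[] {(double) sum / total, (double) edgePixels / total};
    }

    public static void main(String[] args) {
        // A vertical step from black to white: the boundary is an edge.
        int[][] step = {
            {0, 0, 255, 255},
            {0, 0, 255, 255},
            {0, 0, 255, 255}
        };
        double[] features = featureVector(step, 500);
        System.out.println("Mean intensity: " + features[0]);
        System.out.println("Edge fraction: " + features[1]);
    }
}
```

A uniform image produces zero magnitude everywhere, which matches the statement that edges occur only where the pixel intensity changes.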

References:

• https://freecontent.manning.com/computer-vision-pipeline-part-1-the-big-picture/

• https://freecontent.manning.com/the-computer-vision-pipeline-part-4-feature-extraction/

• https://en.wikipedia.org/wiki/Feature_detection_(computer_vision)

• https://www.mathworks.com/discovery/edge-detection.html

• https://en.wikipedia.org/wiki/Computer_vision

Image reference: https://www.mathworks.com/discovery/edge-detection.html

Computer vision pipeline (cont.)

• Some extracted features might be irrelevant or redundant.

• After feature extraction comes feature selection.

• Feature selection is choosing a feature subset that can reduce

dimensionality with the least amount of information loss.

All Features → Selection Techniques → Selected Features

Figure 13-13. Computer vision pipeline (cont.)

After feature extraction, all the features that could be extracted from the image are present. The next step is to select the features that are of interest, because some extracted features might be irrelevant or redundant.

Here are some reasons to perform feature selection:

• Reduce the dimensions of the features that were extracted from the image.

• Avoid overfitting when training the model with the features.

• Create simpler models with less training time.
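As one hedged example of a selection technique, the sketch below uses variance thresholding: a feature whose value barely changes across the training samples carries little information and is dropped. The unit does not prescribe a specific technique, so this choice, the threshold value, and all names are assumptions. The sketch addresses irrelevant (near-constant) features only and does not detect redundant ones.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative sketch of variance thresholding; names and threshold are assumptions.
final class VarianceSelection {
    // Population variance of one feature (column) across all samples (rows).
    static double variance(double[][] samples, int feature) {
        double mean = 0.0;
        for (double[] sample : samples) {
            mean += sample[feature];
        }
        mean /= samples.length;
        double sumOfSquares = 0.0;
        for (double[] sample : samples) {
            double diff = sample[feature] - mean;
            sumOfSquares += diff * diff;
        }
        return sumOfSquares / samples.length;
    }

    // Returns the indexes of the features whose variance reaches minVariance.
    static int[] selectFeatures(double[][] samples, double minVariance) {
        List<Integer> keep = new ArrayList<>();
        for (int f = 0; f < samples[0].length; f++) {
            if (variance(samples, f) >= minVariance) {
                keep.add(f);
            }
        }
        return keep.stream().mapToInt(Integer::intValue).toArray();
    }

    // Builds the reduced feature vectors that contain only the kept features.
    static double[][] project(double[][] samples, int[] keep) {
        double[][] out = new double[samples.length][keep.length];
        for (int s = 0; s < samples.length; s++) {
            for (int k = 0; k < keep.length; k++) {
                out[s][k] = samples[s][keep[k]];
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Three samples with three features; feature 0 is constant.
        double[][] samples = {
            {1.0, 5.0,  0.0},
            {1.0, 7.0, 10.0},
            {1.0, 9.0, 20.0}
        };
        int[] keep = selectFeatures(samples, 0.5);
        double[][] reduced = project(samples, keep);
        System.out.println("Kept " + keep.length + " of " + samples[0].length + " features");
        System.out.println("First reduced sample length: " + reduced[0].length);
    }
}
```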

References:

• https://en.wikipedia.org/wiki/Feature_selection

• https://www.nature.com/articles/srep10312

Computer vision pipeline (cont.)

5. Classification:

• The extracted features are used to classify the image.

• More processing might be done on the classified images to identify

more features from the image.

• Example: After face detection, identify features on the face, such as hair

style, age, and gender.

Figure 13-14. Computer vision pipeline (cont.)

5. Classification

Image classification focuses on classifying an image according to its visual content.

Further processing might be done on the classified images to identify more features from the image. For example, after the image is classified to detect the face region, further processing can identify features on the face, such as hair style, age, and gender.
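The classification step can be sketched with a nearest-centroid classifier, which assigns a feature vector to the class whose average feature vector is closest. This classifier is an assumption chosen for brevity and is not a method that the unit prescribes. The class labels, centroid values, and names below are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Illustrative sketch of a nearest-centroid classifier; all values are hypothetical.
final class NearestCentroid {
    // Squared Euclidean distance between two feature vectors of equal length.
    static double squaredDistance(double[] a, double[] b) {
        double sum = 0.0;
        for (int i = 0; i < a.length; i++) {
            double diff = a[i] - b[i];
            sum += diff * diff;
        }
        return sum;
    }

    // Returns the label of the centroid that is closest to the feature vector.
    static String classify(double[] features, Map<String, double[]> centroids) {
        String bestLabel = null;
        double bestDistance = Double.POSITIVE_INFINITY;
        for (Map.Entry<String, double[]> entry : centroids.entrySet()) {
            double distance = squaredDistance(features, entry.getValue());
            if (distance < bestDistance) {
                bestDistance = distance;
                bestLabel = entry.getKey();
            }
        }
        return bestLabel;
    }

    public static void main(String[] args) {
        // Hypothetical centroids over {mean intensity, edge fraction}.
        Map<String, double[]> centroids = new LinkedHashMap<>();
        centroids.put("night", new double[] {30.0, 0.1});
        centroids.put("day", new double[] {220.0, 0.1});
        System.out.println(classify(new double[] {200.0, 0.2}, centroids));
    }
}
```

In this toy example the mean intensity dominates the distance because the two features are on different scales. A real system would normally scale the features first.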

Reference:

https://www.sciencedirect.com/topics/computer-science/image-classification

Decision making

• Apply logic to make decisions based on detected images.

• Examples:

Security application: Grant access based on face recognition.

In a greenhouse environment, a notification is sent to the engineers to act if a

plant is detected as suffering from decay.

Figure 13-15. Decision making

Decision making

After getting the output from the CV pipeline, users can apply logic to the findings to make decisions.

For example, in security applications, the system can decide to grant access to a person if the detected face is identified as belonging to the owner of a facility.

Another example is in a greenhouse environment, where a notification is sent to the engineers to

act if a plant is detected as suffering from decay.
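The two examples above amount to simple rules applied to the pipeline output. The following sketch shows one possible shape for such rules. The confidence threshold is an added assumption that does not appear in the unit, and the method names and action strings are hypothetical.

```java
// Illustrative sketch of decision rules; names, strings, and threshold are assumptions.
final class DecisionRules {
    // Grants access only if the recognized person is the owner and the
    // recognition confidence reaches the required minimum.
    static boolean grantAccess(String recognizedPerson, double confidence,
                               String owner, double minConfidence) {
        return owner.equals(recognizedPerson) && confidence >= minConfidence;
    }

    // Decides what the greenhouse system does for one inspected plant.
    static String greenhouseAction(String plantId, boolean decayDetected) {
        return decayDetected ? "NOTIFY_ENGINEERS:" + plantId : "NO_ACTION";
    }

    public static void main(String[] args) {
        System.out.println(grantAccess("alice", 0.97, "alice", 0.90));
        System.out.println(grantAccess("bob", 0.99, "alice", 0.90));
        System.out.println(greenhouseAction("plant-17", true));
    }
}
```

Keeping this logic separate from the pipeline lets the same recognition output drive different decisions in different applications.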

Unit summary

• Describe image representation for computers.

• Describe the computer vision (CV) pipeline.

• Describe different preprocessing techniques.

• Explain image segmentation.

• Explain feature extraction and selection.

• Describe when object recognition takes place.

Figure 13-16. Unit summary
