AIP A1 Report

Uploaded by ashok kumar

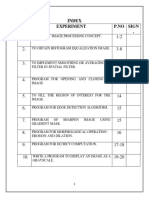

E9 246 AIP Assignment 1

Name: Pagadala Krishna Murthy, SR NO: 19217, MTech AI (Artificial Intelligence), EE.

Q1: Scale-space extrema detection (SIFT):

Figure panels (images not reproduced): each original image, and the keypoints detected on the original, scaled, noisy, blurred and rotated versions of it.

Number of keypoints detected:

| Image | Original | Scaled | Noisy | Blurred | Rotated |
|---|---|---|---|---|---|
| First image | 699 | 170 | 519 | 864 | 693 |
| Second image | 261 | 102 | 255 | 991 | 271 |

For all the transformations, most of the detected features are repeated across the transformed images, so the detected features can be regarded as invariant features.
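One way to make the "features are repeated" observation quantitative is a simplified repeatability score: map each keypoint of the original image through the known transformation and count how many land within a few pixels of a keypoint detected in the transformed image. This is an illustrative sketch, not part of the original analysis; the function name, the 2-pixel tolerance and the (x, y) coordinate layout are assumptions, and unlike the standard score it does not enforce one-to-one matches.

```python
import numpy as np


def repeatability(kp_original, kp_transformed, transform, tol=2.0):
    """Fraction of keypoints re-detected after a known geometric transform.

    kp_original, kp_transformed: (N, 2) arrays of (x, y) keypoint positions.
    transform: callable mapping original-image coordinates into the
    transformed image's coordinate frame (identity for noise or blur).
    Hypothetical helper; several originals may match the same detection.
    """
    kp_original = np.asarray(kp_original, dtype=float)
    kp_transformed = np.asarray(kp_transformed, dtype=float)
    if len(kp_original) == 0 or len(kp_transformed) == 0:
        return 0.0
    mapped = transform(kp_original)
    # Pairwise distances between projected original keypoints and detected ones.
    dists = np.linalg.norm(mapped[:, None, :] - kp_transformed[None, :, :], axis=-1)
    matched = np.count_nonzero(dists.min(axis=1) <= tol)
    return matched / min(len(kp_original), len(kp_transformed))
```

For a scaled image the transform would be `lambda p: factor * p` with the scale factor actually used; for the noisy and blurred images it is the identity.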

First image: 500 × 375 pixels, σ = 1.6, 5 images per octave, 8 octaves.

Second image: 400 × 500 pixels.
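The scale-space extrema detection step can be sketched in NumPy as follows. This is a minimal sketch, not the code behind the results above: it assumes a grayscale image scaled to [0, 1], Lowe's convention of s + 3 blurred images per octave (so 5 images per octave gives s = 2 and k = √2), a contrast threshold of 0.03, and it stops adding octaves once the image is smaller than `min_size` pixels, so fewer than 8 octaves may actually be built. Edge-response filtering, sub-pixel refinement and orientation assignment are omitted, and the function names are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def gaussian_blur(img, sigma):
    """Separable Gaussian blur with edge padding; sigma <= 0 returns a copy."""
    if sigma <= 0:
        return img.copy()
    radius = max(1, int(np.ceil(3 * sigma)))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x**2 / (2 * sigma**2))
    kernel /= kernel.sum()
    padded = np.pad(img, radius, mode="edge")
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")


def detect_scale_space_extrema(image, sigma0=1.6, images_per_octave=5,
                               num_octaves=8, threshold=0.03, min_size=16):
    """Return (octave, dog_level, row, col) tuples; row/col in input pixels."""
    s = images_per_octave - 3            # s + 3 images per octave (assumed)
    k = 2.0 ** (1.0 / s)
    sigmas = [sigma0 * k**i for i in range(images_per_octave)]
    # Assume the input is unblurred and give it the base blur sigma0.
    base = gaussian_blur(np.asarray(image, dtype=float), sigma0)
    keypoints = []
    for octave in range(num_octaves):
        if min(base.shape) < min_size:
            break
        # Extra blur that brings the base (blur sigma0) to total blur sigmas[i].
        levels = [gaussian_blur(base, np.sqrt(sig**2 - sigma0**2)) for sig in sigmas]
        dog = np.stack([b - a for a, b in zip(levels[:-1], levels[1:])])
        # Compare each interior DoG sample with its 26 neighbours (3x3x3 cube).
        windows = sliding_window_view(dog, (3, 3, 3))
        centre = dog[1:-1, 1:-1, 1:-1]
        is_max = centre == windows.max(axis=(-3, -2, -1))
        is_min = centre == windows.min(axis=(-3, -2, -1))
        mask = (is_max | is_min) & (np.abs(centre) > threshold)
        scale = 2 ** octave
        for lvl, r, c in zip(*np.nonzero(mask)):
            keypoints.append((octave, int(lvl) + 1,
                              (int(r) + 1) * scale, (int(c) + 1) * scale))
        # Level s has total blur 2*sigma0; halving it gives the next base.
        base = levels[s][::2, ::2]
    return keypoints
```

A bright Gaussian blob yields a DoG minimum at its centre, while a flat image yields no keypoints at all.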

Q2_PartA: Using a pre-trained deep neural network for image classification:

Loaded the built-in VGG16 model, extracted the FC2-layer features of each image, and used them to form x_train and x_test.

For y_train and y_test, each class in the dataset was assigned an integer label:

Albatross: 0, American_Goldfinch: 1, anthuriam: 2, frangipani: 3, Marigold: 4, Red_headed_Woodpecker: 5

Each test feature vector was classified with the k-nearest-neighbours (KNN) algorithm, where n is the number of neighbours:

For n = 3, accuracy is 95%

For n = 5, accuracy is 98.33%

For n = 7, accuracy is 96.66%

n = 5 gives the highest accuracy on the given data.
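The KNN step on the extracted features can be sketched as below. This is a minimal NumPy sketch under stated assumptions, not the report's code: the features are assumed to be already extracted into arrays (4096-dimensional FC2 vectors in the report), distances are Euclidean, ties are broken toward the smaller class id, and the function names are hypothetical.

```python
import numpy as np

# Label mapping used in the report.
CLASS_IDS = {"Albatross": 0, "American_Goldfinch": 1, "anthuriam": 2,
             "frangipani": 3, "Marigold": 4, "Red_headed_Woodpecker": 5}


def knn_predict(x_train, y_train, x_test, n_neighbors=5):
    """Majority vote among the n nearest training vectors (Euclidean)."""
    # Squared distances via ||a||^2 - 2 a.b + ||b||^2, avoiding a 3-D temporary.
    d2 = ((x_test**2).sum(axis=1)[:, None]
          - 2 * x_test @ x_train.T
          + (x_train**2).sum(axis=1)[None, :])
    nearest = np.argsort(d2, axis=1)[:, :n_neighbors]
    votes = y_train[nearest]
    # np.bincount(...).argmax() breaks ties in favour of the smaller class id.
    return np.array([np.bincount(v).argmax() for v in votes])


def accuracy(y_true, y_pred):
    return float(np.mean(y_true == y_pred))
```

Evaluating n = 3, 5 and 7 then amounts to calling `knn_predict` once per value and comparing `accuracy(y_test, ...)`.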

Q2_PartB: Fine-tuning the classification layer

Folder preparation for creating the train and test datasets:

The prediction (last) layer of VGG16 has 1000 outputs, one per ImageNet class. Since our task has only 6 classes, this layer has to be replaced.

Since I could not replace the layer inside the existing VGG16 model, I created a new, empty Sequential model.

Copied all the layers except the prediction layer into the Sequential model.

Added a 6-class Dense layer with softmax activation.

Compiled the model with the Adam optimizer. Initially I trained the entire model, which took a very long time because all the layers were being updated, so I froze all the layers except the last one; only the final layer is trainable.
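With every copied VGG16 layer frozen (in Keras, by setting `layer.trainable = False`), training reduces to fitting one Dense softmax layer on fixed FC2 features. The NumPy sketch below shows that reduced problem with a hand-written Adam update on the mean cross-entropy loss. It is an illustration under assumptions, not the report's code: features are assumed precomputed, and the learning rate, epoch count and function names are hypothetical.

```python
import numpy as np


def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def train_softmax_head(features, labels, num_classes=6, lr=1e-3, epochs=200, seed=0):
    """Fit only a Dense+softmax layer on frozen (precomputed) features with Adam."""
    rng = np.random.default_rng(seed)
    n, d = features.shape
    W = rng.normal(0.0, 0.01, size=(d, num_classes))
    b = np.zeros(num_classes)
    onehot = np.eye(num_classes)[labels]
    params = [W, b]
    m = [np.zeros_like(p) for p in params]   # Adam first-moment estimates
    v = [np.zeros_like(p) for p in params]   # Adam second-moment estimates
    beta1, beta2, eps = 0.9, 0.999, 1e-8
    for t in range(1, epochs + 1):
        probs = softmax(features @ W + b)
        grad_logits = (probs - onehot) / n   # d(mean cross-entropy)/d(logits)
        grads = [features.T @ grad_logits, grad_logits.sum(axis=0)]
        for i, (p, g) in enumerate(zip(params, grads)):
            m[i] = beta1 * m[i] + (1 - beta1) * g
            v[i] = beta2 * v[i] + (1 - beta2) * g * g
            m_hat = m[i] / (1 - beta1**t)
            v_hat = v[i] / (1 - beta2**t)
            p -= lr * m_hat / (np.sqrt(v_hat) + eps)  # in-place: updates W and b
    return W, b


def predict(features, W, b):
    return np.argmax(features @ W + b, axis=1)
```

Because only `W` and `b` receive gradients, each step costs one matrix product over the features, which is why freezing the backbone makes training so much faster than updating all of VGG16.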

The results are as follows:

Accuracy on training set: 99.12%

Accuracy on test set: 93.33%

Colab code link:

https://colab.research.google.com/drive/1AtDQLnc9eZuZRqTo5u2eEl_bQSZfNrFF#scrollTo=oxi573ZndISA

Q2_Optional: Simple CNN from scratch with CE (cross-entropy) loss:

Implemented a 3-layer CNN from scratch with convolution, flatten and dense layers.

Compiled the model with the Adam optimizer (learning rate = 0.0001) and cross-entropy loss.
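The forward pass of a convolution, flatten and dense network with cross-entropy loss can be sketched in NumPy as below. The filter sizes, the ReLU activation and the function names are assumptions for illustration because the report does not list them. Training (backpropagation with Adam at learning rate 0.0001) is not shown.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv2d_valid(x, kernels, bias):
    """x: (N, H, W, C); kernels: (kh, kw, C, F) -> (N, H-kh+1, W-kw+1, F)."""
    kh, kw = kernels.shape[:2]
    windows = sliding_window_view(x, (kh, kw), axis=(1, 2))  # (N, H', W', C, kh, kw)
    return np.einsum("nijcab,abcf->nijf", windows, kernels) + bias


def cnn_forward(x, kernels, conv_bias, dense_w, dense_b):
    """Convolution (ReLU) -> flatten -> dense; returns unnormalised logits."""
    features = np.maximum(conv2d_valid(x, kernels, conv_bias), 0.0)
    flat = features.reshape(len(x), -1)
    return flat @ dense_w + dense_b


def cross_entropy(logits, labels):
    """Mean categorical cross-entropy of softmax(logits) against integer labels."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())
```

With all-zero dense weights every class gets probability 1/6, so the loss equals ln 6. This is a handy sanity check for the untrained 6-class model.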

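When I trained the model for the first time, I got 94.72% accuracy on the training data; when I executed the cell again, I got 99.72%. Re-running the training cell most likely continued training the same model from its current weights rather than starting afresh. The higher training accuracy therefore reflects extra passes over the same training data and the tendency of neural networks to overfit it.

I then restarted the program and trained the model over the training data only once.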

The accuracy on training data is 94.72%

The accuracy on test data is 99.17%

Colab code link: https://colab.research.google.com/drive/1s2UzRs1nH5pOIcFIq959HwX_4ZqGOYJD

Comparison between the different parts of Q2:

In Part A, we use only the features produced by the fc1/fc2 layers of the VGG16 model and classify them with a separate classifier (KNN).

In Part B, we use VGG16 itself to classify, fine-tuning its classification layer to predict only 6 classes.

In Part C (the optional part), we built a simple 3-layer CNN from scratch. The process is the same as in Part B, except that all its layers can be trained in less time because the network is much smaller.
