
21AIE401DRL TeamNo4 AIE19005 20 36 Report


Autonomous Driving Agent in CARLA using DDPG

Ghanshyam S. Nair, MMV Sai Nikhil, Aniket Sarrin

Department of Computer Science and Engineering, Amrita School of Engineering, Bengaluru, Amrita Vishwa Vidyapeetham, India

mail2ghanshyam2001@gmail.com, sainikhilmmv@gmail.com, aniketsarrin@gmail.com

Abstract—During automatic parking, the vehicle must consistently move toward the parking spot, reach a good heading angle, and avoid the heavy penalties caused by pressing on the parking-space lines. We propose using deep reinforcement learning to create an autonomous parking model. A parking kinematics model is constructed to determine the various states of the vehicle's movement, and a thorough reward function is designed to weigh movement and safety in the different parking stages. The steering angle and displacement are used as the agent's actions. Training makes automatic parking of the vehicle possible, and the steps and circumstances involved in parking are examined in detail. A subsequent generalisation experiment demonstrated the model's strong generalisability.

Index Terms—CARLA, secure, autonomous

I. INTRODUCTION

Automated driving technologies can help reduce traffic accidents and disasters. As 94 percent of crashes are caused by the actions or mistakes of the driver, self-driving vehicles can significantly reduce them, and dangerous and harmful driving behaviour is expected to decline as levels of autonomy rise. Autonomous vehicles must pass a number of tests to ensure safe operation in everyday situations and to prevent fatal collisions. However, conducting such tests on the road is difficult and expensive, so simulated scenario testing is a well-established practice in the automotive industry. The scenarios used in the past to test Automatic Braking Systems, for example, are inadequate for training driverless cars: teaching a self-driving car to behave like a human driver requires far more complex exercises. In this study, we use a self-driving simulation to examine the multi-contextual perception problem in the simulation model.

Autonomous vehicle (AV) design is advancing with AI, which addresses processes such as detection. The ego-vehicle may use Multi-Object Tracking (MOT), scenario forecasting, or an evaluation of the current driving conditions to decide on the safest course of action. Recently, Deep Reinforcement Learning (DRL) approaches have been used to solve Markov Decision Processes (MDPs), in which the goal is to find the course of action that maximises an agent's cumulative reward. These techniques have produced positive outcomes in fields such as games and simple decision-making tools, and DRL techniques have also been developed to teach autonomous vehicles how to drive from the view inside the car. The "Deep" in DRL refers to the use of deep neural networks as function approximators, which allow the agent, in this case the self-driving car, to generalise value estimates to states it has rarely or never visited by drawing on the values of similar states. DRL-based algorithms thus replace tabular methods of estimating state values, and they have shown the potential to handle some of the most challenging problems in autonomous driving, including scheduling and decision-making.

Deep Deterministic Policy Gradient (DDPG) is one such DRL algorithm (TD3 is a later refinement of it), and DDPG is the primary method used in this work.

LIMITATIONS:

Autonomous driving (AD) is a challenging application area for deep learning (DL). It is hard to specify the appropriate behavioural outputs for a driverless car system, because "excellent" driving is itself open to subjective interpretation, and it can be difficult to choose the right input features for learning. Self-driving in urban settings remains a major concern today because of complex driving conditions, such as wide variations in traffic congestion and human involvement, and the need to balance efficiency (speed), elegance (smoothness), and risk. For autonomous driving to work, decisions must be made in fluid, unpredictable environments, and predictions are uncertain because of imperfect data and the difficulty of accurately assessing human drivers' intentions. The problem is therefore formulated as a partially observable Markov decision process (POMDP), in which the other cars are treated as hidden variables.

II. LITERATURE SURVEY

A. EXPERT DRIVERS' DATA-BASED METHOD

Data-based approaches that imitate experienced drivers' parking behaviour typically use supervised learning techniques such as neural networks. The network receives information about the surrounding environment as input, such as visual information, location, and vehicle condition, and outputs the corresponding driver action [3], [4]. Because of the neural network's limited ability to generalise, a large amount of training data is needed to adequately represent the target working scenarios. The quality of the parking data also has a significant impact on the network's performance, so the data must be of high calibre. Expert datasets, however, are often expensive and time- and labour-intensive to collect. In addition, the performance of algorithms trained in this way is bounded by the expert data [5]. It is therefore difficult to achieve optimal multi-objective driving performance, because the learned model can only approximate, not match, expert efficiency.

B. PRIOR KNOWLEDGE-BASED METHOD

Prior knowledge-based strategies turn human driving experience into prior knowledge and use it to guide the planning process. They fall into three categories: fuzzy logic control, heuristic search, and geometric approaches. Geometric methods use curves such as the Reeds-Shepp curve, clothoid curve, Bézier curve, spline curve, and polynomial curve. The heuristic functions used in search algorithms such as A* and the fuzzy rules used in fuzzy inference methods are likewise derived from real-world parking situations. However, abstracting knowledge from the driving environment can lose information and make it harder to achieve the best multi-objective driving efficiency.

C. REINFORCEMENT LEARNING-BASED METHOD

As noted above, approaches based on expert data and prior knowledge draw on, or abstract from, human experience, which requires a large amount of high-quality parking data; even when professional datasets are available, they limit the performance of the system. Reinforcement learning systems, on the other hand, learn from their own experience, which in theory enables them to outperform humans [5]. Reinforcement learning learns policies through interaction between the agent and the environment. Depending on whether the environment must be modelled, reinforcement learning methods are divided into two main groups: model-based and model-free methods. In the model-based approach, a policy that maximises the cumulative reward is found after obtaining the state transition probability matrix and the reward function. Model-free methods instead estimate the value of taking each action in a given state and, in each state, select the action with the highest estimated return [16][17].

There has not been much research on using reinforcement learning to solve vehicle planning problems. Zhang et al. used a deep policy gradient reinforcement learning technique to solve the perpendicular parking problem [26][27]. They first trained the agent in a simulated environment and then transferred it to a real vehicle for further training. The speed policy was simplified to a single fixed command, and only the final part of the two phases of perpendicular parking was considered. Other researchers have used model-free reinforcement learning techniques, including actor-critic, deep Q-network (DQN), and DDPG, to study autonomous driving scenarios such as lane-change decisions and lane-keeping assistance. Model-free methods do not require a model of the environment, but they rely on actual agent-environment interaction, which makes learning less sample-efficient. In a study comparing model-based and model-free methods for playing video games, the model-based method learned faster in most cases and significantly faster in a few. Previous research on steering-angle control has been limited either to the final portion of perpendicular parking or to high-speed situations such as lane changes and lane-keeping assistance [28]. This study addresses parallel parking, which is generally considered more challenging than perpendicular parking. In this case the steering wheel has a wide range of input angles, which requires efficient learning.

Fig. 2. Car approaching the target and then reaching it

III. SYSTEM ARCHITECTURE

A. Reinforcement Learning

Self-driving cars will likely become a common type of vehicle and represent the future of transportation within the next ten years. For this to happen, the vehicle must be secure, reliable, and comfortable for the user. Driverless cars must negotiate right and left turns extremely well if they are to make progress in crowded places. Reinforcement learning is believed to be the primary mechanism for learning such driving rules. We use an improved Q-learning-based reinforcement learning method that can exploit a large state space. For our research on driverless cars we use only the open-source simulator CARLA, which lets us simulate real-world situations in which the agent generates its own data while attempting to solve the task.

Fig 1. Implementation Architecture

B. Deep Deterministic Policy Gradient (DDPG)

The Deep Deterministic Policy Gradient (DDPG) method is a model-free, off-policy actor-critic algorithm that can be applied to problems with continuous action vectors and high-dimensional state spaces. Its core is an actor-critic structure consisting of a value network (the critic) and a policy network (the actor). DDPG uses a deterministic policy network and updates it with policy gradients, which allows it to handle high-dimensional continuous actions. To improve data utilisation, it uses an experience replay buffer and maintains both a target network and a current (online) network, which facilitates convergence of the policy. The target networks are updated with a soft update, which increases the stability of training.

DDPG uses neural networks to approximate these functions. The value network, also known as the critic network, takes the action and state [a, s] as input and outputs Q(s, a). A second neural network approximates the policy function; this policy network, also called the actor network, takes a state as input and outputs an action. The critic network is trained by gradient descent to minimise its loss and update its connection weights. The procedure is as follows. Mini-batch learning requires an experience replay buffer, because DDPG requires the training samples to be independent and identically distributed. Additionally, following DQN's separate target network, DDPG splits both the actor and the critic networks into a target network and a current network, and the soft update of the target networks significantly increases training stability.

C. Environment

CARLA is a simulation platform designed to make it easier to develop, train, and validate autonomous driving systems. It provides open digital assets such as urban layouts, buildings, and vehicles, as well as tools and protocols for gathering and sharing data. CARLA offers many customisable features, including different weather conditions, full control of all static and dynamic actors, and map creation, which makes it a comprehensive platform for simulating autonomous driving scenarios.

The project simulates a vertical (perpendicular) parking environment with two obstacles surrounding the parking spot. Figure 1 depicts the reference frame of the parking environment, whose origin is at the centre of the parking spot.

Fig 3. Vertical Parking Environment

IV. RL MODEL FOR THE APPLICATION

Environment: CARLA, developed from the ground up to support development, training, and validation of autonomous driving systems.
Action Space: 1 or 2, depending on the mode set by the user (throttle only, or steer and throttle)
State Space: 16
RL algorithm/policy: DDPG (actor-critic)
Actor: state space 16-512-256-Tanh-1 or 2 action outputs
Critic: action space 2-64-128-OUT 1; state space 16-64-128-OUT 2; OUT 1 and OUT 2 are concatenated into 256 neurons, which then give 1 dense output
Noise addition: Ornstein-Uhlenbeck process
Replay buffer: buffer size 100000, with 100 steps in each buffer

A. Reward Structure

Two kinds of reward structure are used in this project, differing in penalty and formulation.

Angle penalty: $\cos(a)$.

Fig.4 Angular Penalty Structure

Fig.5 Constructed Reward Function

Throttle penalty: the reward can be calculated using a linear or a Gaussian formulation, where $d$ is the distance, $d_{\max}$ the maximum distance, $d_{\text{curr}}$ and $d_{\text{prev}}$ the current and previous distances, $R_{\max}$ the maximum reward, and $\sigma$ the spread of the Gaussian.

Linear: $r = \dfrac{d_{\max} - d_{\text{curr}}}{d_{\max} - d_{\text{prev}}}$

Gaussian: $r = R_{\max} \exp\left(-\dfrac{d^{2}}{2\sigma^{2}}\right)$
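The angle and throttle reward terms above can be expressed as a few small functions. The following is a minimal Python sketch, not the project's code: the function names are hypothetical, angles are assumed to be in radians, the division-by-zero guard is an added assumption, and because the text does not say how the angle and throttle terms are combined, they are kept separate here. `euclidean_distance` reflects the statement in Section IV-B that distances are computed with the Euclidean method.

```python
import math
from typing import Sequence


def angle_reward(angle_rad: float) -> float:
    """Angle term cos(a): 1.0 when the car is aligned, smaller as the angle grows."""
    return math.cos(angle_rad)


def linear_distance_reward(max_dist: float, curr_dist: float, prev_dist: float) -> float:
    """Linear throttle term as written in the text: (max - curr) / (max - prev)."""
    denominator = max_dist - prev_dist
    if denominator == 0.0:
        # Assumed guard (not in the paper): avoid division by zero when the
        # previous distance equals the maximum distance, e.g. on the first step.
        return 0.0
    return (max_dist - curr_dist) / denominator


def gaussian_distance_reward(dist: float, max_reward: float, sigma: float) -> float:
    """Gaussian throttle term: max_reward * exp(-dist^2 / (2 * sigma^2))."""
    return max_reward * math.exp(-(dist ** 2) / (2.0 * sigma ** 2))


def euclidean_distance(p: Sequence[float], q: Sequence[float]) -> float:
    """Straight-line distance between two points, e.g. car and parking-spot centre."""
    return math.dist(p, q)


if __name__ == "__main__":
    print(euclidean_distance((0.0, 0.0), (3.0, 4.0)))   # 5.0
    print(angle_reward(0.0))                             # 1.0
    print(linear_distance_reward(10.0, 4.0, 5.0))        # 1.2
    print(gaussian_distance_reward(0.0, 5.0, 1.0))       # 5.0
```

The Gaussian form stays bounded by `max_reward` and varies smoothly with distance, whereas the linear form as written exceeds 1 whenever the car ends a step closer to the target than it started.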

B. Model Structure

Fig 7. System Architecture

Final Actor Structure: The input layer takes the state space as its input size. State space 16-512-256-Tanh-1 or 2 action outputs.

Final Critic Structure: This structure combines 2 different neural networks to give a single output. One input layer takes the action space as its input size, while the other takes the state space.
Action space - 2-64-128-OUT 1
State space - 16-64-128-OUT 2
OUT 1 and OUT 2 are concatenated into 256 neurons, which then give 1 dense output.

Fig. 6. Critic Model

Moving target: the critic's target is the reward plus gamma times the target critic's output, $y = r + \gamma \, Q'(s', a')$, where $s'$ is the next state and $a'$ the target actor's action for it. Distances are calculated as Euclidean distances.

Fig 8. Actor Model

C. Ornstein Uhlenbeck Process

In reinforcement learning with finite action spaces, exploration is carried out by choosing random actions according to a probability distribution (for example, epsilon-greedy or Boltzmann exploration). In continuous action spaces, exploration is instead accomplished by adding noise to the action itself. The Ornstein-Uhlenbeck process has a few useful characteristics: it is both a Markov and a Gaussian process, and because it is mean-reverting and temporally correlated, consecutive noise samples change smoothly rather than jumping independently at every step. Theta, sigma, and mu are its three hyperparameters. Mu is set to 0, theta is set to 0.015, and sigma is a NumPy array of values between 0.3 and 0.4.

D. Hyper Parameter values

Given below are the hyper-parameters used and their respective values:

MEMORY FRACTION = 0.3333
TOTAL EPISODES = 1000
STEPS PER EPISODE = 100
AVERAGE EPISODES COUNT = 40
CORRECT POSITION NON MOVING STEPS = 5
OFF POSITION NON MOVING STEPS = 20
REPLAY BUFFER CAPACITY = 100000
THROTTLE NOISE FACTOR -
BATCH SIZE = 64
CRITIC LR = 0.002
ACTOR LR = 0.001
GAMMA = 0.99
TAU = 0.005
EPSILON = 1
EXPLORE = 100000.0
MIN EPSILON = 0.000001
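To make the layer sizes in Section IV-B concrete, the sketch below counts trainable parameters for the actor (16-512-256-Tanh-1 or 2) and the two-branch critic (action 2-64-128, state 16-64-128, concatenated 256 to 1 output). It assumes plain dense layers with biases; the helper names are hypothetical, and the counts are arithmetic on the stated sizes, not figures reported by the authors.

```python
from typing import List


def dense_params(n_in: int, n_out: int) -> int:
    """Weights plus biases of one fully connected layer (biases assumed)."""
    return n_in * n_out + n_out


def mlp_params(sizes: List[int]) -> int:
    """Parameters of a stack of dense layers, e.g. [16, 512, 256, 2]."""
    return sum(dense_params(a, b) for a, b in zip(sizes, sizes[1:]))


def actor_params(state_dim: int = 16, action_dim: int = 2) -> int:
    # State space 16 -> 512 -> 256 -> Tanh output with 1 or 2 actions
    # (the Tanh activation itself adds no parameters).
    return mlp_params([state_dim, 512, 256, action_dim])


def critic_params(state_dim: int = 16, action_dim: int = 2) -> int:
    action_branch = mlp_params([action_dim, 64, 128])  # OUT 1
    state_branch = mlp_params([state_dim, 64, 128])    # OUT 2
    head = dense_params(128 + 128, 1)                  # concatenated 256 -> 1 dense output
    return action_branch + state_branch + head


if __name__ == "__main__":
    print(actor_params())    # 140546
    print(critic_params())   # 18177
```

Under these assumptions the actor is about 7.7 times larger than the critic, almost entirely because of its 512-by-256 hidden layer (131,328 parameters).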
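The Ornstein-Uhlenbeck exploration noise of Section IV-C can be sketched as follows, using mu = 0, theta = 0.015, and one sigma per action dimension taken from the 0.3 to 0.4 range mentioned in the text. This is a minimal standard-library Python sketch rather than the authors' NumPy implementation; the Euler-Maruyama discretisation with `dt = 1`, the class and function names, and clipping to [-1, 1] (the Tanh output range of the actor) are assumptions.

```python
import math
import random
from typing import List, Optional, Sequence


class OrnsteinUhlenbeckNoise:
    """Mean-reverting, temporally correlated noise for continuous actions.

    Euler-Maruyama step: x <- x + theta * (mu - x) * dt + sigma * sqrt(dt) * N(0, 1)
    """

    def __init__(self, sigmas: Sequence[float], mu: float = 0.0, theta: float = 0.015,
                 dt: float = 1.0, seed: Optional[int] = None) -> None:
        self.sigmas = list(sigmas)  # one sigma per action dimension
        self.mu = mu
        self.theta = theta
        self.dt = dt
        self.rng = random.Random(seed)
        self.state: List[float] = []
        self.reset()

    def reset(self) -> None:
        """Restart the process at its mean, e.g. at the start of each episode."""
        self.state = [self.mu] * len(self.sigmas)

    def sample(self) -> List[float]:
        """Advance the process one step and return the new noise vector."""
        self.state = [
            x + self.theta * (self.mu - x) * self.dt
            + s * math.sqrt(self.dt) * self.rng.gauss(0.0, 1.0)
            for x, s in zip(self.state, self.sigmas)
        ]
        return list(self.state)


def add_exploration_noise(action: Sequence[float], noise: Sequence[float],
                          low: float = -1.0, high: float = 1.0) -> List[float]:
    """Perturb the deterministic actor output and clip it back to the Tanh range."""
    return [min(high, max(low, a + n)) for a, n in zip(action, noise)]


if __name__ == "__main__":
    ou = OrnsteinUhlenbeckNoise(sigmas=[0.3, 0.4], seed=0)  # e.g. steer, throttle
    for _ in range(3):
        print(add_exploration_noise([0.5, -0.2], ou.sample()))
```

With theta = 0.015 the process closes only about 1.5% of the gap to mu per step, so within a 100-step episode the noise drifts much like a random walk; a larger theta would pull it back toward zero faster.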
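The experience replay buffer described in Section III-B, with REPLAY BUFFER CAPACITY = 100000 and BATCH SIZE = 64, can be sketched with the standard library as below. The `Transition` fields and the method names are illustrative assumptions rather than the project's API.

```python
import random
from collections import deque
from typing import Deque, List, NamedTuple, Optional, Sequence


class Transition(NamedTuple):
    state: Sequence[float]
    action: Sequence[float]
    reward: float
    next_state: Sequence[float]
    done: bool


class ReplayBuffer:
    """Fixed-capacity FIFO store of transitions with uniform mini-batch sampling."""

    def __init__(self, capacity: int = 100_000, seed: Optional[int] = None) -> None:
        # deque(maxlen=...) silently discards the oldest transition once full.
        self.memory: Deque[Transition] = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def push(self, state: Sequence[float], action: Sequence[float], reward: float,
             next_state: Sequence[float], done: bool) -> None:
        self.memory.append(Transition(state, action, reward, next_state, done))

    def sample(self, batch_size: int = 64) -> List[Transition]:
        # Uniform sampling without replacement breaks the correlation between
        # consecutive steps of an episode, approximating i.i.d. mini-batches.
        return self.rng.sample(list(self.memory), batch_size)

    def __len__(self) -> int:
        return len(self.memory)


if __name__ == "__main__":
    buffer = ReplayBuffer(capacity=100_000, seed=0)
    for step in range(100):  # one 100-step episode of dummy transitions
        buffer.push([0.0] * 16, [0.0, 0.0], 0.0, [0.0] * 16, step == 99)
    if len(buffer) >= 64:    # wait until a full batch is available
        print(len(buffer.sample(64)))  # 64
```

Sampling uniformly from a large buffer mixes transitions from many episodes, which is what gives DDPG approximately independent mini-batches, while the bounded `deque` keeps memory use fixed at the configured capacity.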
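The moving critic target of Section IV-B and the soft update of the target networks described in Section III-B can be written per value using GAMMA = 0.99 and TAU = 0.005 from the list above. This sketch operates on plain lists of weights as a stand-in for network parameters; the terminal-state masking with `dones` is a common DDPG convention assumed here, since the text does not mention it, and the function names are hypothetical.

```python
from typing import List, Sequence

GAMMA = 0.99  # discount factor from the hyperparameter list
TAU = 0.005   # soft-update rate from the hyperparameter list


def critic_targets(rewards: Sequence[float], next_q: Sequence[float],
                   dones: Sequence[bool], gamma: float = GAMMA) -> List[float]:
    """Moving target y = r + gamma * Q_target(s', a'); the bootstrap term is
    dropped on terminal transitions (assumed convention)."""
    return [r + gamma * (0.0 if done else q) for r, q, done in zip(rewards, next_q, dones)]


def soft_update(target: Sequence[float], online: Sequence[float],
                tau: float = TAU) -> List[float]:
    """theta_target <- tau * theta_online + (1 - tau) * theta_target, per weight."""
    return [tau * w + (1.0 - tau) * t for t, w in zip(target, online)]


if __name__ == "__main__":
    print(critic_targets([1.0, 1.0], [2.0, 2.0], [False, True]))
    target = [0.0]
    for _ in range(1000):
        target = soft_update(target, [1.0])
    print(target)  # approaches 1 - (1 - TAU) ** 1000
```

Because each soft update moves the target only 0.5% of the way toward the online weights, after 1000 updates the target has covered about 1 - 0.995^1000, roughly 99.3%, of a fixed gap; this slow tracking is what keeps the moving target stable.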
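The text lists EPSILON = 1, EXPLORE = 100000.0, and MIN EPSILON = 0.000001 without describing how they are used. One common interpretation in DDPG code is to subtract 1/EXPLORE from epsilon at every environment step and use epsilon to scale the exploration noise; the sketch below assumes exactly that, and the function names are hypothetical.

```python
from typing import List, Sequence

EPSILON = 1.0
EXPLORE = 100000.0
MIN_EPSILON = 0.000001
TOTAL_EPISODES = 1000
STEPS_PER_EPISODE = 100


def epsilon_at(step: int, start: float = EPSILON, explore: float = EXPLORE,
               floor: float = MIN_EPSILON) -> float:
    """Assumed linear schedule: subtract 1/EXPLORE per step, never below the floor."""
    return max(floor, start - step / explore)


def scale_noise(noise: Sequence[float], epsilon: float) -> List[float]:
    """Shrink the exploration noise as epsilon decays."""
    return [epsilon * n for n in noise]


if __name__ == "__main__":
    total_steps = TOTAL_EPISODES * STEPS_PER_EPISODE
    for step in (0, 25_000, 50_000, total_steps):
        print(step, epsilon_at(step))
```

Under this assumption epsilon reaches its floor exactly at the end of training, because TOTAL EPISODES × STEPS PER EPISODE = 1000 × 100 = 100,000 = EXPLORE.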

V. RESULTS

Fig.6 Experiment 2 Result

Fig 9. Concatenated NN

Good parking actions and states from the experience replay pool are sampled with a step size of 100 rounds, and the overall convergence of the reward value over 500 separate episodes of the full DDPG training procedure is as follows:

Fig 10. Experiment 1 result

VI. GAPS AND CHALLENGES

Parallel parking was difficult to implement because of the high number of crashes with other cars and the slow learning rate.

Traffic management - Traffic flows and traffic management vary between localities, so the vehicle's constraints cannot remain the same everywhere.

Research gap - Most autonomous cars use fuzzy logic and sensor-based deep learning models and do not rely much on reinforcement learning.

VII. CONCLUSION

We generated training data for the neural network using DDPG, a deep reinforcement learning method. A straightforward and efficient reward system was then built to ensure that the car always approaches the centre of the parking spot and reaches the ideal heading angle during the parking process. Additionally, to safeguard the parking process, the convergence of the strategy, and the parking-lot data, we penalise the line-pressing action, so that the agent learns how to prevent data un-sampling.

VIII. FUTURE SCOPE

Consider using other, quicker, and more effective strategies. Construct a more intricate custom parking scenario to increase the challenge and the required precision. Consider simulating traffic in different simulation environments.

REFERENCES

[1] Pan, You, Wang, Lu - Virtual To Real Reinforcement Learning, arXiv (2017).

[2] Shai Shalev-Shwartz, Shaked Shammah, Amnon Shashua - Safe, Multi-Agent, Reinforcement Learning for Autonomous Driving, arXiv (2016).

[3] Sahand Sharifzadeh, Ioannis Chiotellis, Rudolph Triebel, Daniel Cremers - Learning to Drive using Inverse Reinforcement Learning and DQN, arXiv (2017).

[4] Markus Kuderer, Shilpa Gulati, Wolfram Burgard - Learning Driving Styles for Autonomous Vehicles from Demonstration, IEEE (2015).

[5] Jeff Michels, Ashutosh Saxena, Andrew Y. Ng - High Speed Obstacle Avoidance using Monocular Vision and Reinforcement Learning, ACM (2005).

[6] Ahmad El Sallab, Mohammed Abdou, Etienne Perot, Senthil Yogamani - Deep Reinforcement Learning Framework for Autonomous Driving, arXiv (2017).

[7] Alexey Dosovitskiy, German Ros, Felipe Codevilla, Antonio Lopez, Vladlen Koltun - CARLA: An Open Urban Driving Simulator.

[8] Jan Koutník, Giuseppe Cuccu, Jürgen Schmidhuber, Faustino Gomez - Evolving Large-Scale Neural Networks for Vision-Based Reinforcement Learning.

[9] Chenyi Chen, Ari Seff, Alain Kornhauser, Jianxiong Xiao - DeepDriving: Learning Affordance for Direct Perception in Autonomous Driving.

[10] Timothy P. Lillicrap, Jonathan J. Hunt, Alexander Pritzel, Nicolas Heess, Tom Erez, Yuval Tassa, David Silver, Daan Wierstra - Continuous Control With Deep Reinforcement Learning.

[11] Brody Huval, Tao Wang, Sameep Tandon, Jeff Kiske, Will Song, Joel Pazhayampallil, Mykhaylo Andriluka, Pranav Rajpurkar, Toki Migimatsu, Royce Cheng-Yue, Fernando Mujica, Adam Coates, Andrew Y. Ng - An Empirical Evaluation of Deep Learning on Highway Driving.

[12] Abdur R. Fayjie, Sabir Hossain, Doukhi Oualid, Deok-Jin Lee - Driverless Car: Autonomous Driving Using Deep Reinforcement Learning In Urban Environment.

[14] Matt Vitelli, Aran Nayebi - CARMA: A Deep Reinforcement Learning Approach to Autonomous Driving.

You might also like

- The Multibody Systems Approach to Vehicle DynamicsFrom EverandThe Multibody Systems Approach to Vehicle DynamicsRating: 5 out of 5 stars5/5 (2)

- Java SE 8 Question BankDocument107 pagesJava SE 8 Question BankAshwin Ajmera100% (1)

- Condition ExclusionDocument10 pagesCondition ExclusionAnupa Wijesinghe100% (7)

- MainrepDocument6 pagesMainrepGhanshyam s.nairNo ratings yet

- Autonomous Driving With Deep Reinforcement Learning in CARLA SimulationDocument7 pagesAutonomous Driving With Deep Reinforcement Learning in CARLA Simulationozr72121No ratings yet

- 1909 11538 PDFDocument7 pages1909 11538 PDFChongfeng WeiNo ratings yet

- A Driving Decision Strategy (DDS) Based On 2020Document3 pagesA Driving Decision Strategy (DDS) Based On 2020bannu24111No ratings yet

- An Innovative Anomaly Driving Detection Strategy For Adaptive FCW of CNN ApproachDocument6 pagesAn Innovative Anomaly Driving Detection Strategy For Adaptive FCW of CNN Approachmariatul qibtiahNo ratings yet

- Autonomous Driving System Using Proximal Policy Optimization in Deep Reinforcement LearningDocument10 pagesAutonomous Driving System Using Proximal Policy Optimization in Deep Reinforcement LearningIAES IJAINo ratings yet

- A Survey of Deep Learning Applications To Autonomous Vehicle ControlDocument23 pagesA Survey of Deep Learning Applications To Autonomous Vehicle ControltilahunNo ratings yet

- Vehicle Layout Optimization Using Multi-Objective Genetic AlgorithmsDocument10 pagesVehicle Layout Optimization Using Multi-Objective Genetic Algorithms1. b3No ratings yet

- Driving Policy Transfer Via Modularity and Abstraction (12-2018, 1804.09364)Document15 pagesDriving Policy Transfer Via Modularity and Abstraction (12-2018, 1804.09364)kovejeNo ratings yet

- 1 s2.0 S111001682200789X MainDocument13 pages1 s2.0 S111001682200789X MainishwarNo ratings yet

- Algorithmic Analysis and Development For Enhanced Human AutonomousVehicle With Pages Removed (1) RemovDocument6 pagesAlgorithmic Analysis and Development For Enhanced Human AutonomousVehicle With Pages Removed (1) RemovSaurabh vijNo ratings yet

- Safe Multi Agent Reforcement Learning For Autonomous DrivingDocument13 pagesSafe Multi Agent Reforcement Learning For Autonomous DrivingChangsong yuNo ratings yet

- Synopsis On Achieving Complete Autonomy in Self Driving CarsDocument6 pagesSynopsis On Achieving Complete Autonomy in Self Driving CarsSammit NadkarniNo ratings yet

- Electronics 10 03159 v2Document22 pagesElectronics 10 03159 v2Dan RiosNo ratings yet

- Iet-Its 2019 0317Document9 pagesIet-Its 2019 0317Vismar OchoaNo ratings yet

- A Survey of Deep Learning Techniques For Autonomous DrivingDocument25 pagesA Survey of Deep Learning Techniques For Autonomous DrivingtilahunNo ratings yet

- Robovis AccDocument11 pagesRobovis AccCESIA ISABEL MORENO HERNANDEZNo ratings yet

- Machine Learning Based On Road Condition Identification System For Self-Driving CarsDocument4 pagesMachine Learning Based On Road Condition Identification System For Self-Driving CarsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- ChauffeurNet - Learning To Drive by Imitating The Best and Synthesizing The WorstDocument20 pagesChauffeurNet - Learning To Drive by Imitating The Best and Synthesizing The WorstLưu HảiNo ratings yet

- Exp 5 184Document13 pagesExp 5 184yaminik2408No ratings yet

- Convolutional Neural Network For A Self-Driving Car in A Virtual EnvironmentDocument6 pagesConvolutional Neural Network For A Self-Driving Car in A Virtual EnvironmentChandra LohithNo ratings yet

- SSRN Id4398118Document9 pagesSSRN Id4398118maniesha1438No ratings yet

- Alert SystemDocument7 pagesAlert SystemBalasaheb Bhausaheb Jadhav -No ratings yet

- A Survey of Deep Learning Techniques For Autonomous DrivingDocument30 pagesA Survey of Deep Learning Techniques For Autonomous DrivingChandan TiwariNo ratings yet

- Paper 3 MlisDocument9 pagesPaper 3 MlisDhruv SoniNo ratings yet

- An End-to-End Curriculum Learning Approach For AutDocument10 pagesAn End-to-End Curriculum Learning Approach For Autjorge.lozoyaNo ratings yet

- An IMM EKF Approach For Enhanced Multi-Target State Estimation For AppDocument13 pagesAn IMM EKF Approach For Enhanced Multi-Target State Estimation For AppsumathyNo ratings yet

- VTC2020Document5 pagesVTC2020mmorad aamraouyNo ratings yet

- Decision-Making Strategy On Highway For Autonomous Vehicles Using Deep Reinforcement LearningDocument11 pagesDecision-Making Strategy On Highway For Autonomous Vehicles Using Deep Reinforcement LearningEduard ConesaNo ratings yet

- Microscopic Traffic Simulation by Cooperative Multi-Agent Deep Reinforcement LearningDocument9 pagesMicroscopic Traffic Simulation by Cooperative Multi-Agent Deep Reinforcement LearningBora CobanogluNo ratings yet

- DDS For Autonomous VehiclesDocument29 pagesDDS For Autonomous Vehicles20W91A0581 K DEVI PRIYANo ratings yet

- DQN-based Reinforcement Learning For Vehicle Control of Autonomous Vehicles Interacting With PedestriansDocument6 pagesDQN-based Reinforcement Learning For Vehicle Control of Autonomous Vehicles Interacting With PedestriansVinh Lê HữuNo ratings yet

- Accelerating The Training of Deep Reinforcement Learning in Autonomous DrivingDocument8 pagesAccelerating The Training of Deep Reinforcement Learning in Autonomous DrivingIAES IJAINo ratings yet

- Machines 11 01068 v2Document14 pagesMachines 11 01068 v2dekerNo ratings yet

- An Adaptive Motion Planning Algorithm For Obstacle Avoidance in Autonomous Vehicle ParkingDocument11 pagesAn Adaptive Motion Planning Algorithm For Obstacle Avoidance in Autonomous Vehicle ParkingIAES IJAINo ratings yet

- Computer Vision Techniques For Vehicular Accident Detection A Brief ReviewDocument7 pagesComputer Vision Techniques For Vehicular Accident Detection A Brief ReviewInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- SSRN Id3566915Document4 pagesSSRN Id3566915B452 AKSHAY SHUKLANo ratings yet

- Enhancing Distracted Driver Detection With Human Body Activity Recognition Using Deep Learning F. Zandamela, F. Nicolls, D. Kunene & G. StoltzDocument17 pagesEnhancing Distracted Driver Detection With Human Body Activity Recognition Using Deep Learning F. Zandamela, F. Nicolls, D. Kunene & G. Stoltzjhonatan1.rmvNo ratings yet

- Research PaperDocument6 pagesResearch Paperhritwik.20scse1180049No ratings yet

- Artificial Intelligence AI Framework For Multi-ModDocument9 pagesArtificial Intelligence AI Framework For Multi-ModDuc ThuyNo ratings yet

- Self Driving Car Using Raspberry Pi: Keywords:Raspberry Pi, Lane Detection, Obstacle Detection, Opencv, Deep LearningDocument8 pagesSelf Driving Car Using Raspberry Pi: Keywords:Raspberry Pi, Lane Detection, Obstacle Detection, Opencv, Deep LearningKrishna KrishNo ratings yet

- Autonomous Vehicles: Challenges and Advancements in AI-Based Navigation SystemsDocument12 pagesAutonomous Vehicles: Challenges and Advancements in AI-Based Navigation SystemsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Electronics 10 01994 v3Document28 pagesElectronics 10 01994 v3benjasaaraNo ratings yet

- A Deep Learning Platooning-Based Video Information-Sharing Internet of Things Framework For Autonomous Driving SystemsDocument11 pagesA Deep Learning Platooning-Based Video Information-Sharing Internet of Things Framework For Autonomous Driving SystemslaeeeqNo ratings yet

- ML ReportDocument11 pagesML Report71762234024No ratings yet

- Paper 2Document21 pagesPaper 24AL20AI004Anush PoojaryNo ratings yet

- Cultrera 2020Document10 pagesCultrera 2020Victor Flores BenitesNo ratings yet

- Model-Based and Machine Learning-Based High-Level Controller For Autonomous Vehicle Navigation: Lane Centering and Obstacles AvoidanceDocument14 pagesModel-Based and Machine Learning-Based High-Level Controller For Autonomous Vehicle Navigation: Lane Centering and Obstacles AvoidanceIAES International Journal of Robotics and AutomationNo ratings yet

- Classification and Detection of Vehicles Using Deep LearningDocument9 pagesClassification and Detection of Vehicles Using Deep LearningEditor IJTSRDNo ratings yet

- Pretrained Vehicle ClassifierDocument11 pagesPretrained Vehicle ClassifierSuvramalya BasakNo ratings yet

- Toward HITL AI Enhancing Deep Reinforcement Learning Via RealTime Human Guidance For Autonomous DrivingDocument17 pagesToward HITL AI Enhancing Deep Reinforcement Learning Via RealTime Human Guidance For Autonomous DrivingnoeNo ratings yet

- YEs We GanDocument16 pagesYEs We GanMikeNo ratings yet

- 1911 11699 PDFDocument10 pages1911 11699 PDFChongfeng WeiNo ratings yet

- Proximal Policy Optimization Through A Deep ReinfoDocument19 pagesProximal Policy Optimization Through A Deep ReinfoRed AlexNo ratings yet

- Akademia Baru: Vehicle Speed Estimation Using Image ProcessingDocument8 pagesAkademia Baru: Vehicle Speed Estimation Using Image ProcessingAnjali KultheNo ratings yet

- Evolving A Rule System Controller For Automatic Driving in A Car Racing CompetitionDocument7 pagesEvolving A Rule System Controller For Automatic Driving in A Car Racing Competitionadam sandsNo ratings yet

- Advancements in Reinforcement Learning Algorithms For Autonomous SystemsDocument6 pagesAdvancements in Reinforcement Learning Algorithms For Autonomous SystemsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Learning Robust Control Policies For End-to-End Autonomous Driving From Data-Driven SimulationDocument8 pagesLearning Robust Control Policies For End-to-End Autonomous Driving From Data-Driven SimulationNguyễn Xuân KhảiNo ratings yet

- Aguila JImenez Mario ProjectoDocument18 pagesAguila JImenez Mario ProjectoMARIO ANTONIO AGUILA JIMENEZNo ratings yet

- Project Proposal - AURA-finDocument4 pagesProject Proposal - AURA-finGhanshyam s.nairNo ratings yet

- SPM Slides Chapter 1Document28 pagesSPM Slides Chapter 1Ghanshyam s.nairNo ratings yet

- SPM Slides Chapter 4Document36 pagesSPM Slides Chapter 4Ghanshyam s.nairNo ratings yet

- SPM Slides Chapter 3Document43 pagesSPM Slides Chapter 3Ghanshyam s.nairNo ratings yet

- SPM Slides Chapter 2Document24 pagesSPM Slides Chapter 2Ghanshyam s.nairNo ratings yet

- MainrepDocument6 pagesMainrepGhanshyam s.nairNo ratings yet

- Andon System (行灯 - システム) - The Real Visual Management toolDocument6 pagesAndon System (行灯 - システム) - The Real Visual Management toolAshokNo ratings yet

- Solidworks:: Lesson 1 - Basics and Modeling FundamentalsDocument48 pagesSolidworks:: Lesson 1 - Basics and Modeling FundamentalsCésar SilvaNo ratings yet

- Fluent - Tutorial - Dynamic Mesh - Projectile Moving Inside A BarrelDocument25 pagesFluent - Tutorial - Dynamic Mesh - Projectile Moving Inside A Barrelgent_man42No ratings yet

- Sample 5Document105 pagesSample 5asoedjfanushNo ratings yet

- 02 VMware NSX For Vsphere 6.2 Architecture OverviewDocument104 pages02 VMware NSX For Vsphere 6.2 Architecture OverviewninodjukicNo ratings yet

- EL9820 EL9821e Ver1.2Document9 pagesEL9820 EL9821e Ver1.2mikeNo ratings yet

- Simple Weekly Planner For Distance Learning With 3 Activities Per Day SlidesManiaDocument23 pagesSimple Weekly Planner For Distance Learning With 3 Activities Per Day SlidesManiaIntan RzyNo ratings yet

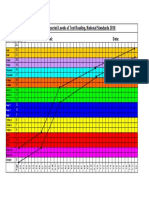

- Wedge Graph - Expected Levels of Text Reading, National Standards 2010 School: DateDocument1 pageWedge Graph - Expected Levels of Text Reading, National Standards 2010 School: Dateapi-26776334No ratings yet

- Final Draft Prcen Iso/Ts 19139: Technical Specification Spécification Technique Technische SpezifikationDocument62 pagesFinal Draft Prcen Iso/Ts 19139: Technical Specification Spécification Technique Technische SpezifikationAndreea CrstnNo ratings yet

- Detalii Aparat AuditivDocument6 pagesDetalii Aparat AuditivRuxandra BalasuNo ratings yet

- Universal Gate - Nand: This Presentation Will DemonstrateDocument14 pagesUniversal Gate - Nand: This Presentation Will DemonstrateAniket GirdharNo ratings yet

- CRo NotesDocument31 pagesCRo Notesgrs8940No ratings yet

- LAR Spare Part List With Pictures en 2011-08Document89 pagesLAR Spare Part List With Pictures en 2011-08Adriano lopes da silvaNo ratings yet

- K9GAG08U0E Samsung PDFDocument55 pagesK9GAG08U0E Samsung PDFHamza Abbasi AbbasiNo ratings yet

- MEXAM - Attempt Review26-30Document4 pagesMEXAM - Attempt Review26-30mark gonzalesNo ratings yet

- Power Systems Kohler PowerDocument28 pagesPower Systems Kohler PowerTrần Quang TuyênNo ratings yet

- Communications: Refers To The Sending, Receiving and Processing of Information Through Electronic MeansDocument90 pagesCommunications: Refers To The Sending, Receiving and Processing of Information Through Electronic MeansAngelicaNo ratings yet

- Ijrcm 2 IJRCM 2 - Vol 3 - 2013 - Issue 4 - AprilDocument157 pagesIjrcm 2 IJRCM 2 - Vol 3 - 2013 - Issue 4 - AprilMojisNo ratings yet

- Hytera HP78X Uv&VHF Service Manual V01 - EngDocument217 pagesHytera HP78X Uv&VHF Service Manual V01 - EngHytera MNC580No ratings yet

- Airline Reservation SystemDocument17 pagesAirline Reservation SystemShubham Poojary100% (1)

- Loke and Dahlin 2002 Journal of Applied Geophysics 49 149-162Document15 pagesLoke and Dahlin 2002 Journal of Applied Geophysics 49 149-162Roger WaLtersNo ratings yet

- JANMEJAY SINGH PROJECT CS - Sanjai SinghDocument24 pagesJANMEJAY SINGH PROJECT CS - Sanjai SinghHungry-- JoyNo ratings yet

- CV Final UpdateDocument3 pagesCV Final UpdateSam WildNo ratings yet

- Applications of Machine Learning For Prediction of Liver DiseaseDocument3 pagesApplications of Machine Learning For Prediction of Liver DiseaseATSNo ratings yet

- MML CommandsDocument159 pagesMML Commandstejendra184066No ratings yet

- COMPUTER SKILLS Book 3-Answer Key Chapter 1-Hardware and SoftwareDocument18 pagesCOMPUTER SKILLS Book 3-Answer Key Chapter 1-Hardware and SoftwareINFO LINGANo ratings yet

- ECE312 Lec09 PDFDocument23 pagesECE312 Lec09 PDFdeepak reddy100% (1)

- Load Calculation NewDocument1 pageLoad Calculation NewUtkarsh VermaNo ratings yet