Professional Documents

Culture Documents

B All Experimental Results: Cobbe Et Al. 2021 1

B All Experimental Results: Cobbe Et Al. 2021 1

Uploaded by

Alezz Stone FrancoOriginal Title

Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

B All Experimental Results: Cobbe Et Al. 2021 1

B All Experimental Results: Cobbe Et Al. 2021 1

Uploaded by

Alezz Stone FrancoCopyright:

Available Formats

B All Experimental Results

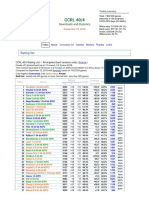

This section contains tables for experimental results for varying models and model sizes, on all

benchmarks, for standard prompting vs. chain-of-thought prompting.

For the arithmetic reasoning benchmarks, some chains of thought (along with the equations produced)

were correct, except the model performed an arithmetic operation incorrectly. A similar observation

was made in Cobbe et al. (2021). Hence, we can further add a Python program as an external

calculator (using the Python eval function) to all the equations in the generated chain of thought.

When there are multiple equations in a chain of thought, we propagate the external calculator results

from one equation to the following equations via string matching. As shown in Table 1, we see that

adding a calculator significantly boosts performance of chain-of-thought prompting on most tasks.

Table 1: Chain of thought prompting outperforms standard prompting for various large language

models on five arithmetic reasoning benchmarks. All metrics are accuracy (%). Ext. calc.: post-hoc

external calculator for arithmetic computations only. Prior best numbers are from the following. a:

Cobbe et al. (2021). b & e: Pi et al. (2022), c: Lan et al. (2021), d: Pi˛ekos et al. (2021).

Prompting GSM8K SVAMP ASDiv AQuA MAWPS

Prior best N/A (finetuning) 55a 57.4b 75.3c 37.9d 88.4e

UL2 20B Standard 4.1 10.1 16.0 20.5 16.6

Chain of thought 4.4 (+0.3) 12.5 (+2.4) 16.9 (+0.9) 23.6 (+3.1) 19.1 (+2.5)

+ ext. calc 6.9 28.3 34.3 23.6 42.7

LaMDA 137B Standard 6.5 29.5 40.1 25.5 43.2

Chain of thought 14.3 (+7.8) 37.5 (+8.0) 46.6 (+6.5) 20.6 (-4.9) 57.9 (+14.7)

+ ext. calc 17.8 42.1 53.4 20.6 69.3

GPT-3 175B Standard 15.6 65.7 70.3 24.8 72.7

(text-davinci-002) Chain of thought 46.9 (+31.3) 68.9 (+3.2) 71.3 (+1.0) 35.8 (+11.0) 87.1 (+14.4)

+ ext. calc 49.6 70.3 71.1 35.8 87.5

Codex Standard 19.7 69.9 74.0 29.5 78.7

(code-davinci-002) Chain of thought 63.1 (+43.4) 76.4 (+6.5) 80.4 (+6.4) 45.3 (+15.8) 92.6 (+13.9)

+ ext. calc 65.4 77.0 80.0 45.3 93.3

PaLM 540B Standard 17.9 69.4 72.1 25.2 79.2

Chain of thought 56.9 (+39.0) 79.0 (+9.6) 73.9 (+1.8) 35.8 (+10.6) 93.3 (+14.2)

+ ext. calc 58.6 79.8 72.6 35.8 93.5

20

You might also like

- Solana ZK Part1Document16 pagesSolana ZK Part1Jackie SinghNo ratings yet

- Campbell DiscComp 17e PPT Mod08Document59 pagesCampbell DiscComp 17e PPT Mod08aimanfakhrul79No ratings yet

- Solutions Manual For Stochastic Modeling: Analysis and SimulationDocument130 pagesSolutions Manual For Stochastic Modeling: Analysis and SimulationhaidarNo ratings yet

- Statistics Tutorial Solution 2020Document8 pagesStatistics Tutorial Solution 2020Sospeter EnockNo ratings yet

- Capture The Flag Kioptrix ServerDocument109 pagesCapture The Flag Kioptrix ServerSara Moreno GuitsNo ratings yet

- RSM Case StudyDocument12 pagesRSM Case StudyVbaluyoNo ratings yet

- Akash High Scale Benchmarks2Document11 pagesAkash High Scale Benchmarks2akashmavleNo ratings yet

- ACT 4&5Document3 pagesACT 4&5paduajeanuNo ratings yet

- I4-GCI(A0-7-ALL TP_Part33Document2 pagesI4-GCI(A0-7-ALL TP_Part33kheangnkNo ratings yet

- Statistics Practice Exercises 1Document2 pagesStatistics Practice Exercises 1Jackie EnriquezNo ratings yet

- N R L M e M Sin55 M Cos55Document3 pagesN R L M e M Sin55 M Cos55Lucian CiprianNo ratings yet

- CST 445 M5Document71 pagesCST 445 M5shyam krishnan sNo ratings yet

- CCRL Complete Engines ListDocument13 pagesCCRL Complete Engines ListBhagirathi SahuNo ratings yet

- Lecture7appendixa 12thsep2009Document31 pagesLecture7appendixa 12thsep2009Richa ShekharNo ratings yet

- VFAST Trans On Edu and Soc SciencesDocument4 pagesVFAST Trans On Edu and Soc SciencescallNo ratings yet

- Adding Decimals Workbook, Level 5Document102 pagesAdding Decimals Workbook, Level 5Tinh LeNo ratings yet

- Lab Wk1soln PDFDocument14 pagesLab Wk1soln PDFPeper12345No ratings yet

- Assignment 6: Introduction To Machine Learning Prof. B. RavindranDocument3 pagesAssignment 6: Introduction To Machine Learning Prof. B. RavindranAbhishek DubeyNo ratings yet

- Reasoning Question BankDocument114 pagesReasoning Question Bankrashmi netam100% (1)

- ROBLES - Module 4 QuestionDocument3 pagesROBLES - Module 4 QuestionClint RoblesNo ratings yet

- Introduction To Machine LearningDocument48 pagesIntroduction To Machine LearningKylleNo ratings yet

- Task 3.6 Calculate The Mean, Standard Deviation and Draw The Boxplots of The Following Frequency Distribution?Document2 pagesTask 3.6 Calculate The Mean, Standard Deviation and Draw The Boxplots of The Following Frequency Distribution?Madushan JayathilakeNo ratings yet

- Forecast Study ProblemsDocument5 pagesForecast Study Problemsemirdurmaz200131No ratings yet

- USL - 21070126112 - ColaboratoryDocument3 pagesUSL - 21070126112 - ColaboratoryVihan ChoradaNo ratings yet

- Greater Than DecimalsDocument2 pagesGreater Than DecimalspaulineNo ratings yet

- Latihan AndataDocument12 pagesLatihan AndataPSKM STIKES HI JAMBINo ratings yet

- Adding/Subtracting Decimals (A)Document30 pagesAdding/Subtracting Decimals (A)Ron Man BautistaNo ratings yet

- Cost & Benefit Analysis: Executive OverviewDocument68 pagesCost & Benefit Analysis: Executive OverviewVimal AanjnaNo ratings yet

- Answer Adding Number Bentuk LazimDocument12 pagesAnswer Adding Number Bentuk LazimSiti F Noor HattaNo ratings yet

- Worksheet DecimalsDocument2 pagesWorksheet DecimalsZahra Nur AlifahNo ratings yet

- Grade 4 Order Decimals bDocument2 pagesGrade 4 Order Decimals bIrfan ZafarNo ratings yet

- Gv-241 User ManualDocument23 pagesGv-241 User ManualJim CNo ratings yet

- Maths Workbook 8 SolutionsDocument57 pagesMaths Workbook 8 SolutionsHassan Alsharqawi100% (1)

- patil_mlDocument9 pagespatil_mlSarthak NandeNo ratings yet

- Frekuensi Min-MaxDocument7 pagesFrekuensi Min-MaxYudha Rental'sNo ratings yet

- Tutorial Chapter 3 and 4Document2 pagesTutorial Chapter 3 and 4NadiaNo ratings yet

- SCBS Investment Recommendations: Underperform UnderperformDocument4 pagesSCBS Investment Recommendations: Underperform UnderperformHappybabyNo ratings yet

- Subtracting Decimals Workbook Level 4Document102 pagesSubtracting Decimals Workbook Level 4Tinh LeNo ratings yet

- Excel Part 6 Tubes SBTG YofinDocument27 pagesExcel Part 6 Tubes SBTG YofinAnonymous V7x3Pe0YkNo ratings yet

- Yi X X Yi X Y: Grafico de DispercionDocument5 pagesYi X X Yi X Y: Grafico de Dispercionjlb1212No ratings yet

- Three Addends (2 Digit) : 1) Solve Each of The ProblemsDocument30 pagesThree Addends (2 Digit) : 1) Solve Each of The Problemsdee.aira2955100% (1)

- Solutions RegressionTutorialDocument51 pagesSolutions RegressionTutorialARBIN RAJNo ratings yet

- How To Download Data Structures and Algorithm Analysis in C 4Th Edition Ebook PDF Ebook PDF Docx Kindle Full ChapterDocument36 pagesHow To Download Data Structures and Algorithm Analysis in C 4Th Edition Ebook PDF Ebook PDF Docx Kindle Full Chapterdoug.wiggins940100% (28)

- Octane & RVP Calc SheetDocument8 pagesOctane & RVP Calc SheetAsifNo ratings yet

- Statistika 1BDocument4 pagesStatistika 1Brahardian daniarNo ratings yet

- Fmsa315 - s11 - A.ipynb - Fernández - ConstanzaDocument6 pagesFmsa315 - s11 - A.ipynb - Fernández - ConstanzaScribdTranslationsNo ratings yet

- Supporting Information For: Characterization of Crude Oil by Real-Component SurrogatesDocument15 pagesSupporting Information For: Characterization of Crude Oil by Real-Component SurrogatesHarmanNo ratings yet

- Confidential: SESSION 2018/2019Document26 pagesConfidential: SESSION 2018/2019MOHAMMED A.M. ABUJARAD 19EE0049No ratings yet

- CIT 2101 - VariationDocument24 pagesCIT 2101 - VariationKristian VillabrozaNo ratings yet

- Results 1Document4 pagesResults 1Đặng Thu TrúcNo ratings yet

- The Schematics of The Experimental Set Up Is Given BelowDocument19 pagesThe Schematics of The Experimental Set Up Is Given BelowSohini RoyNo ratings yet

- Team 3 Budget 17 August 2016Document17 pagesTeam 3 Budget 17 August 2016yiyito04No ratings yet

- Práctica de Maraton - Ipynb - Colaboratory - AlejandroDocument8 pagesPráctica de Maraton - Ipynb - Colaboratory - AlejandroAlejandro GirónNo ratings yet

- Statistical Data Analysis - Ipynb - ColaboratoryDocument6 pagesStatistical Data Analysis - Ipynb - ColaboratoryVarad KulkarniNo ratings yet

- Greater DecimalsDocument2 pagesGreater DecimalsCalm SeekNo ratings yet

- AgricolaeDocument89 pagesAgricolaeIwanNo ratings yet

- Function Machines - Filling in Missing DigitDocument3 pagesFunction Machines - Filling in Missing DigitPatrice BlairNo ratings yet

- Agricolae Tutorial (Version 1.3-5) : F Elipe de M Endiburu 2021-06-05Document88 pagesAgricolae Tutorial (Version 1.3-5) : F Elipe de M Endiburu 2021-06-05JAVIER DE LA HOZNo ratings yet

- Addsubmultdivid 2Document1 pageAddsubmultdivid 2NevgenNo ratings yet

- Introsort ScreenDocument10 pagesIntrosort Screenvivek upadhyay0% (1)

- Dwnload Full Production and Operations Analysis 6th Edition Nahmias Solutions Manual PDFDocument35 pagesDwnload Full Production and Operations Analysis 6th Edition Nahmias Solutions Manual PDFsaabatmandearnestus100% (11)

- RAMP Cardiac Controls (C5003) Stability 04feb2020 PDFDocument4 pagesRAMP Cardiac Controls (C5003) Stability 04feb2020 PDFerickNo ratings yet

- Cambridge International AS & A Level: Computer Science 9618/12Document10 pagesCambridge International AS & A Level: Computer Science 9618/12C JavaNo ratings yet

- Semester: 7-CBCS 2017 Date: 16 Jan 2021 Subject: CRYPTOGRAPHY (17EC744) Time: 02:30 PM - 04:30 PM Faculty: DR Jagadeesh Chandra A P Max Marks: 30Document1 pageSemester: 7-CBCS 2017 Date: 16 Jan 2021 Subject: CRYPTOGRAPHY (17EC744) Time: 02:30 PM - 04:30 PM Faculty: DR Jagadeesh Chandra A P Max Marks: 30VariNo ratings yet

- RTX 4000 Ada Datasheet Web Nvidia 2788511Document2 pagesRTX 4000 Ada Datasheet Web Nvidia 2788511Yassin 1PNo ratings yet

- RAM Vs ROMDocument5 pagesRAM Vs ROMShubh SatvikNo ratings yet

- Secj3323-202120221 CiDocument7 pagesSecj3323-202120221 CiMariamNo ratings yet

- REDCap Performance SuggestionsDocument2 pagesREDCap Performance SuggestionsAlyer EspinozaNo ratings yet

- 2 7Ghz Bidirectional I C Bus Controlled Synthesiser: Advance InformationDocument12 pages2 7Ghz Bidirectional I C Bus Controlled Synthesiser: Advance InformationNuKamila WorldNo ratings yet

- Java Collection FrameworkDocument21 pagesJava Collection Frameworkvinay MurakambattuNo ratings yet

- From Mainframes To MicroprocessorsDocument8 pagesFrom Mainframes To MicroprocessorsAsif MemonNo ratings yet

- Resume Gourav KumarDocument2 pagesResume Gourav KumarGrocery Pvt ltdNo ratings yet

- Python Vs Java Comparison Python JavaDocument23 pagesPython Vs Java Comparison Python JavaRamesh kNo ratings yet

- Black Spot Identification: Sensitivity: InternalDocument8 pagesBlack Spot Identification: Sensitivity: InternalShain SalimNo ratings yet

- MicroconrtollerDocument12 pagesMicroconrtollerNahomNo ratings yet

- ZLAN7104 WiFi User ManualDocument42 pagesZLAN7104 WiFi User ManualyuryNo ratings yet

- Template EmopDocument46 pagesTemplate EmopMauricio FierroNo ratings yet

- @ckiptv 100hitsDocument67 pages@ckiptv 100hitsAbeltito SalgaNo ratings yet

- Wheel Tracker Large DeviceDocument3 pagesWheel Tracker Large DeviceRabnawaz ImamNo ratings yet

- Chap 1Document98 pagesChap 1Fahmi OlayahNo ratings yet

- PCC-450 Reference Manual Ver1 13rev003Document24 pagesPCC-450 Reference Manual Ver1 13rev003omjettyNo ratings yet

- It8511te IteDocument333 pagesIt8511te IteZBrasil InformáticaNo ratings yet

- Stop and Wait ArqDocument22 pagesStop and Wait ArqAtul KumarNo ratings yet

- Microprocessor and Peripherals Interfacing Notes: Course Code: ECC501 Class: TE-EXTC Mumbai UniversityDocument10 pagesMicroprocessor and Peripherals Interfacing Notes: Course Code: ECC501 Class: TE-EXTC Mumbai UniversityAnikhet MulkyNo ratings yet

- Hands-On Lab Analog Sensors, Signal and Data Output: 1 Aims of This ExerciseDocument9 pagesHands-On Lab Analog Sensors, Signal and Data Output: 1 Aims of This Exercise蕾蕾No ratings yet

- Cisco NoteDocument43 pagesCisco NoteEthiopian CodeNo ratings yet

- CPP STL Cheat SheetDocument19 pagesCPP STL Cheat Sheetshubham singhNo ratings yet

- ACN MICRO PROJECTfinalDocument23 pagesACN MICRO PROJECTfinal1213 Vaibhav KothareNo ratings yet

- How To Start Steam in Offline ModeDocument1 pageHow To Start Steam in Offline Modesteam steamNo ratings yet