The Role of Transparency in Recommender Systems

Article · May 2002

DOI: 10.1145/506443.506619 · Source: CiteSeer

Rashmi Sinha & Kirsten Swearingen

School of Information Management & Systems, UC Berkeley

Berkeley, CA 94720 USA

{sinha, kirstens}@sims.berkeley.edu

ABSTRACT

Recommender Systems act as personalized decision guides, aiding users in decisions on matters related to personal taste. Most previous research on Recommender Systems has focused on the statistical accuracy of the algorithms driving the systems, with little emphasis on interface issues and the user’s perspective. The goal of this research was to examine the role of transparency (user understanding of why a particular recommendation was made) in Recommender Systems. To explore this issue, we conducted a user study of five music Recommender Systems. Preliminary results indicate that users like and feel more confident about recommendations that they perceive as transparent.

Keywords: Recommender Systems, Usability Studies, WWW

INTRODUCTION

A good way to personalize recommendations for an individual is to identify people with similar interests and recommend items that have interested these like-minded people (Resnick & Varian, 1997). This premise is the statistical basis of collaborative filtering (CF) algorithms that form the backbone of most Recommender Systems. Many online commerce sites (Amazon, CDNow, Barnes & Noble) incorporate Recommender Systems to help customers choose from large catalogues of products.

Most online Recommender Systems act like black boxes, not offering the user any insight into the system logic or justification for the recommendations. The typical interaction paradigm involves asking the user for some input (in the form of ratings of items, or listing favorite artists or authors), processing the input, and giving the user some output in the form of recommendations.

We challenge this black box approach, especially since the human analogue to recommendation-making is a transparent social process. In fact, CF algorithms are also called social filtering algorithms because they are modeled after the time-tested social process of receiving recommendations by asking friends with similar tastes to recommend movies, books, or music that they like. The recipient of a recommendation has a number of ways to decide whether to trust the recommendation: (a) scrutinizing the similarity between the taste of the recipient and the recommender, (b) assessing the success of prior suggestions from this recommender, and (c) asking the recommender for more information about why the recommendation was made. Similarly, Recommender Systems need to offer the user some ways to judge the appropriateness of recommendations.

TRANSPARENCY IN USER INTERFACES

Previous research has shown that expert systems that act as decision guides need to provide explanations and justifications for their advice (Buchanan & Shortliffe, 1984). Studies with search engines also show the importance of transparency. Koenemann & Belkin (1996) found that greater visibility and interactivity for relevance feedback helped search performance and satisfaction with the system. Muramatsu & Pratt (2001) investigated user mental models for typical query transformations done by search engines during the retrieval process (e.g., stop word removal, term suffix expansion). They found that making such transformations transparent to users improves search performance.

Johnson & Johnson (1993) point out that explanations play a crucial role in the interaction between users and complex systems. According to their research, one purpose of explanation is to illustrate the relationship between antecedent and consequent (i.e., between cause and effect).

In the context of recommender systems, understanding the relationship between the input to the system (ratings made by the user) and the output (recommendations) allows the user to initiate a predictable and efficient interaction with the system. Transparency allows users to meaningfully revise the input in order to improve recommendations, rather than making “shots in the dark.” Herlocker et al. (2000) suggest that Recommender Systems have not been used in high-risk decision-making because of a lack of transparency. While users might take a chance on an opaque movie recommendation, they might be unwilling to commit to a vacation spot without understanding the reasoning behind such a recommendation.

STUDY GOALS

We are interested in exploring the role of transparency in recommender systems. Do users perceive Recommender System logic to be transparent, or do they feel that they lack insight into why an item is recommended? Is perceived transparency related to greater liking for recommendations?

Our investigation focuses on the perception of transparency rather than the accuracy of the perception. We hypothesize that users who perceive that they do not understand why a recommendation is made are less likely to trust system suggestions.
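
The premise described in the Introduction, recommending items that like-minded people enjoyed, lends itself to a recommendation that carries its own justification. The following Python sketch is illustrative only: it is not the algorithm of any system examined in this study, the ratings and names (`ratings`, `recommend_with_explanation`, the users and albums) are invented, and cosine similarity over co-rated items is one assumed choice among many. It picks the most similar user and reports which shared ratings led to the suggestion.

```python
from math import sqrt

# Hypothetical toy ratings on a 1-5 scale; all names are invented for illustration.
ratings = {
    "ana":   {"album_a": 5, "album_b": 4, "album_c": 1},
    "ben":   {"album_a": 5, "album_b": 5, "album_d": 4},
    "chris": {"album_a": 1, "album_c": 5, "album_e": 4},
}


def cosine_similarity(a, b):
    """Cosine similarity over the items both users rated (0.0 if none are shared)."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[i] * b[i] for i in shared)
    norm_a = sqrt(sum(a[i] ** 2 for i in shared))
    norm_b = sqrt(sum(b[i] ** 2 for i in shared))
    return dot / (norm_a * norm_b)


def recommend_with_explanation(target, ratings):
    """Return (item, explanation) based on the single most similar user, or None."""
    # Score every other user by similarity to the target and keep the closest one.
    scored = [
        (cosine_similarity(ratings[target], other), user)
        for user, other in ratings.items()
        if user != target
    ]
    similarity, neighbor = max(scored)

    # Candidate items are those the neighbor rated and the target has not.
    unseen = {
        item: score
        for item, score in ratings[neighbor].items()
        if item not in ratings[target]
    }
    if not unseen:
        return None
    item = max(unseen, key=unseen.get)

    # The explanation exposes the input-output link: which shared ratings drove the match.
    shared = sorted(set(ratings[target]) & set(ratings[neighbor]))
    explanation = (
        f"Recommended because {neighbor}, whose ratings of {', '.join(shared)} "
        f"resemble yours (similarity {similarity:.2f}), rated {item} {unseen[item]}/5."
    )
    return item, explanation


item, why = recommend_with_explanation("ana", ratings)
print(item)
print(why)
```

Returning the explanation alongside the item gives the user the kind of information the social process provides: who the recommender is, how similar their taste is, and what they thought of the item.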

STUDY DESIGN

Figure 1: Relationship of Transparency &

To examine our hypothesis, we conducted a user study of 5 Familiarity w ith Liking Not Transparent

Music Recommender Systems (Amazon’s Recommendations

Transparent

Explorer, CDNow’s Album Advisor, MediaUnbound,

MoodLogic’s Filters Browser, and SongExplorer). We 5

Mean Liking

focused on Music Recommender Systems because unlike 4

book and movie systems, music systems allow users to 3

evaluate each recommended item during the study itself. We 2

identified more than 10 Music Recommender Systems and 1

chose five that offered different interaction paradigms and New Negative Positive Previous

results displays (e.g. number of items returned, amount of item Error Bars show Std. Error Previous Opinion Opinion

description, audio sample vs. complete song, methods for

generating new sets of recommendations). DISCUSSION

The results of this preliminary study indicate that in general

12 people participated in the study. All participants reported

users like and feel more confident in recommendations

using the Internet and/or listening to music daily; most

perceived as transparent. For new items, it is not surprising

reported purchasing or downloading music monthly. For each

that users like transparent recommendations more than non-

of the 5 systems (in random order), participants: (a) provided

transparent ones. However, users also like to know why an

input (e.g. favorite musician, ratings on a set of items). (b)

item was recommended even for items they already liked. This

listened to music sample, and (c) rated liking, confidence & suggests that users are not just looking for blind

transparency for 10 recommendations. Thus current results are

recommendations from a system, but are also looking for a

based on ratings of 600 recommendations (12 users x 5

justification of the system’s choice.

systems x 10 recommendations). Our primary measure for

transparency was the user response to the question, “Do you This is an important finding from the perspective of system

understand why the system recommended this item to you?” designers. A good CF algorithm that generates accurate

recommendations is not enough to constitute a useful system

RESULTS from the users’ perspective. The system needs to convey to the

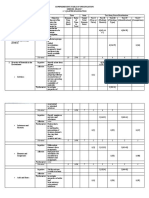

Table 1 shows means and standard errors for transparent and user its inner logic and why a particular recommendation is

non-transparent recommendations. Mean liking was suitable for them. We plan to follow up this work with an

significantly higher for transparent than non-transparent experimental study manipulating the transparency of

recommendations [t(579)=-7.89; p<.01]. Mean Confidence recommended items while holding everything else constant.

showed a similar trend [t(388)=-5.88; p<.01]. We found We also plan to investigate the efficacy of different methods

similar results when examining each of the five systems for achieving system transparency.

individually.

REFERENCES

Table 1: Effect of Transparency on Liking and Confidence

1. Buchanan, B., & Shortcliffe, E. Rule-Based Expert

Not Transparent Transparent Systems: The Mycin Experiments of the Stanford Heuristic

Mean Liking 2.79 (.07) 3.51 (.06) Programming Project. Reading, MA: Addison Wesley

Mean Confidence 6.89 (.17) 8.12 (.12) Publishing Company. 1984

2. Herlocker, J., Konstan, J.A., & Riedl, J. Explaining

A potential confounding factor is users’ previous familiarity Collaborative Filtering Recommendations. ACM 2000

with recommended items. There are three conditions: (i) no Conference on CSCW. 2000

previous experience with recommended item, (ii) a positive

3. Johnson, J. & Johnson, P. Explanation facilities and

previous opinion of recommendation, or (iii) a negative

interactive systems. In Proceedings of Intelligent User

previous experience of item. To examine the question of

Interfaces '93. (159-166). 1993

whether transparency and previous experience interact, we

computed means for transparent and non-transparent 4. Koenemann, J., & Belkin, N. A case for interaction: A

recommendations for the three kinds of familiarity separately. study of interactive information retrieval behavior and

Figure 1 shows that mean liking is highest for previously liked effectiveness. In Proceedings of the Human Factors in

items, lowest for previously disliked items, and in between for Computing Systems Conference. ACM Press, NY, 1996

new items. Also, the relationship between transparency and 5. Muramatsu, J., & Pratt, W. Transparent Search Queries:

liking is modulated by a user’s previous experience with an Investigating users’ mental models of search engines. In

item. Mean liking is significantly higher for transparent than Proceedings of SIGIR Conference. ACM Press, NY, 2001

non-transparent recommendations in the case of both new

[t(39)=-3.7; p<.01] and previously liked [t(240)=2.68; p<.01] 6. Resnick, P, and Varian, H.R. Recommender Systems.

recommendations. The same difference was not significant for Commun. ACM 40, 3 (56-58). 1997

previously disliked items [t(129)=-1.08; n.s.].
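
As a rough consistency check on Table 1, the reported means and standard errors can be combined into an approximate t statistic. The Python sketch below is an assumption-laden illustration, not the test the authors ran: it divides the difference in means by the combined standard error (the unequal-variance form), because the group sizes needed for a pooled test are not reported. The function name `approx_t` is hypothetical.

```python
from math import sqrt


def approx_t(mean_not_transparent, se_not_transparent, mean_transparent, se_transparent):
    """Difference in means divided by the combined standard error of the two groups."""
    combined_se = sqrt(se_not_transparent ** 2 + se_transparent ** 2)
    return (mean_transparent - mean_not_transparent) / combined_se


# Values taken from Table 1: mean (standard error).
liking_t = approx_t(2.79, 0.07, 3.51, 0.06)
confidence_t = approx_t(6.89, 0.17, 8.12, 0.12)

print(f"liking: t is approximately {liking_t:.2f}")
print(f"confidence: t is approximately {confidence_t:.2f}")
```

Under these assumptions the magnitudes come out near 7.8 for liking and 5.9 for confidence, close to the reported 7.89 and 5.88. The reported values are negative, which would follow if the difference was taken as non-transparent minus transparent.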
