STS_LESSON-6

Uploaded by Alexandra Ayne

You might also like

- Ids Ips Audit ChecklistDocument1 pageIds Ips Audit Checklisthakim hbNo ratings yet

- Upending The Uncanny ValleyDocument8 pagesUpending The Uncanny ValleyGina Smith100% (2)

- TLE 10 Lesson 1Document111 pagesTLE 10 Lesson 1Lindsay Kirstyn Tabaniag100% (1)

- OECD Learning Compass 2030 Concept Note SeriesDocument146 pagesOECD Learning Compass 2030 Concept Note SeriesNguyen Nhu Ngoc NGONo ratings yet

- Database As A Service Using Oracle Enterprise Manager 12c CookbookDocument64 pagesDatabase As A Service Using Oracle Enterprise Manager 12c Cookbookramses71No ratings yet

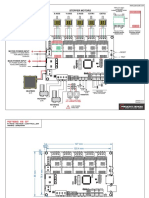

- X5 GT Wiring DiagramDocument2 pagesX5 GT Wiring DiagramBrian Thompson0% (1)

- Reviewer For StsDocument5 pagesReviewer For StsKathleenNo ratings yet

- Reviewer For StsDocument5 pagesReviewer For StsNhelle IronVoltoxNo ratings yet

- History of RoboticsDocument7 pagesHistory of RoboticsGabee LegaspiNo ratings yet

- EnglishDocument16 pagesEnglishsj0000350No ratings yet

- History of Robotics Timeline 2Document1 pageHistory of Robotics Timeline 2api-632695803No ratings yet

- Modern Robotics: Present Day WeaponsDocument2 pagesModern Robotics: Present Day WeaponsfazalrazaNo ratings yet

- Chapter 2C. When Technology and Humanity CrossDocument19 pagesChapter 2C. When Technology and Humanity CrossBea Mae SangalangNo ratings yet

- Robotics HistoryDocument22 pagesRobotics HistoryAli HaiderNo ratings yet

- A. The Ethical Dilemmas of Robotics: Chapter 6 When Technology and Humanity CrossDocument20 pagesA. The Ethical Dilemmas of Robotics: Chapter 6 When Technology and Humanity CrossLady Ann AltezaNo ratings yet

- Chapter 6 - STSDocument13 pagesChapter 6 - STSRonna Panganiban DipasupilNo ratings yet

- Chapter-6 STSDocument12 pagesChapter-6 STSCasimero CabungcalNo ratings yet

- Robotics: Ece 411: Robotics Engr. Lalaine Jean A. Ballais, EctDocument9 pagesRobotics: Ece 411: Robotics Engr. Lalaine Jean A. Ballais, Ecttsk2230No ratings yet

- Robot - WikipediaDocument40 pagesRobot - WikipediaTejasNo ratings yet

- The Ascension of Robotics Through TimeDocument5 pagesThe Ascension of Robotics Through TimeIrina PopaNo ratings yet

- Chapter 6Document29 pagesChapter 6sweetsummer621No ratings yet

- Robotics Lecture g7 PDFDocument2 pagesRobotics Lecture g7 PDFShannel TornoNo ratings yet

- History of Robotics TimelineDocument11 pagesHistory of Robotics Timelineapi-369287010No ratings yet

- History of RoboticsDocument21 pagesHistory of RoboticsVeekshith Salient KnightNo ratings yet

- 1st Edition of Robotics and Automation Guide Distrelec PDFDocument32 pages1st Edition of Robotics and Automation Guide Distrelec PDFSouhila BoukhlifaNo ratings yet

- DFDFDDDocument5 pagesDFDFDD김가현No ratings yet

- Breif History of RoboticsDocument3 pagesBreif History of Roboticsapi-253221137No ratings yet

- Robotics q1Document12 pagesRobotics q1divinegarciaNo ratings yet

- Where Do The Ideas For Different Kinds of Robots Come From? 10 PointsDocument2 pagesWhere Do The Ideas For Different Kinds of Robots Come From? 10 PointsJulianne Love IgotNo ratings yet

- Redefiniendo RoboticaDocument13 pagesRedefiniendo RoboticaLuis Calderón RugelesNo ratings yet

- New Microsoft Word DocumentDocument53 pagesNew Microsoft Word Documentapi-3707156No ratings yet

- Seminar 2Document26 pagesSeminar 2Manan GaurNo ratings yet

- Rossums Universal Robots Not The MachinesDocument4 pagesRossums Universal Robots Not The MachinesJon GaronNo ratings yet

- RobotsDocument2 pagesRobotspsykosomatikNo ratings yet

- 06과 Grammar: EnglishDocument6 pages06과 Grammar: Englishsj0000350No ratings yet

- Origins: Nothing Scarier Than A Bunch of Silver-Painted Guys Wearing Buckets On Their HeadsDocument7 pagesOrigins: Nothing Scarier Than A Bunch of Silver-Painted Guys Wearing Buckets On Their HeadsEmyNo ratings yet

- 1 Introduction To Robotics DevelopmentDocument52 pages1 Introduction To Robotics Developmentiveynesh5No ratings yet

- Chart 1Document2 pagesChart 1gautham sajuNo ratings yet

- Please Read: A Personal Appeal From Wikipedia Founder Jimmy WalesDocument23 pagesPlease Read: A Personal Appeal From Wikipedia Founder Jimmy WalesmihirapotterNo ratings yet

- Robotics - Thinking, Sensing and ActingDocument3 pagesRobotics - Thinking, Sensing and Actingmsilva_74No ratings yet

- IntroductionDocument120 pagesIntroductionAbdur RehmanNo ratings yet

- History of Robot ENGDocument5 pagesHistory of Robot ENGCharles Palacios NavarroNo ratings yet

- What Is Robotics History of Robotics Scope of Robotics Advantages Disadvantages ApplicationsDocument12 pagesWhat Is Robotics History of Robotics Scope of Robotics Advantages Disadvantages Applicationss suhas gowdaNo ratings yet

- Britannica Illustrated Science Library - TechnologyDocument56 pagesBritannica Illustrated Science Library - TechnologySounak HalderNo ratings yet

- RoboticsDocument11 pagesRoboticsHari Hara Sudhan KaliappanNo ratings yet

- 01 HistoryDocument12 pages01 HistoryJefferson MagallanesNo ratings yet

- Index: 2 1.2 History 4 1.3 Development 5 1.4 Challenges in Humanoid Robots 5Document29 pagesIndex: 2 1.2 History 4 1.3 Development 5 1.4 Challenges in Humanoid Robots 5Honey SinghNo ratings yet

- RobotsDocument22 pagesRobotsKari NelsonNo ratings yet

- Love and Sex with Robots: The Evolution of Human-Robot RelationshipsFrom EverandLove and Sex with Robots: The Evolution of Human-Robot RelationshipsRating: 3.5 out of 5 stars3.5/5 (20)

- Introduction To Robotics PDFDocument22 pagesIntroduction To Robotics PDFsamurai7_77No ratings yet

- AnusDocument24 pagesAnusrajbunisa saleemNo ratings yet

- Epucheu ResearchDocument12 pagesEpucheu Researchepucheu11No ratings yet

- Chapter 6 Group 3.Document61 pagesChapter 6 Group 3.gracieNo ratings yet

- Duran Thill ChallengesDocument22 pagesDuran Thill ChallengestsigeNo ratings yet

- Presentation Adapted From Space Foundation Robotics/Nanotechnology 2009Document18 pagesPresentation Adapted From Space Foundation Robotics/Nanotechnology 2009Aman SachdevaNo ratings yet

- Buchanan 2006 - A (Very) Brief History of Artificial IntelligenceDocument8 pagesBuchanan 2006 - A (Very) Brief History of Artificial Intelligencedaniel pazNo ratings yet

- STS SECTION 1-3 Reviewer - PRELIMSDocument9 pagesSTS SECTION 1-3 Reviewer - PRELIMSJb CuevasNo ratings yet

- Project01 RobotDocument5 pagesProject01 RobotPatricio OrtizNo ratings yet

- Robots and ApplicationsDocument15 pagesRobots and ApplicationsChief PriestNo ratings yet

- Robots in Popular CultureDocument2 pagesRobots in Popular CultureRamojus BaleviciusNo ratings yet

- RoboticsDocument5 pagesRoboticswynwon80No ratings yet

- Resume-1Document1 pageResume-1Alexandra AyneNo ratings yet

- STS_LESSON-7Document4 pagesSTS_LESSON-7Alexandra AyneNo ratings yet

- 19 - Lecture #14 - Minerals - Part 1Document58 pages19 - Lecture #14 - Minerals - Part 1Alexandra AyneNo ratings yet

- VitaminsDocument12 pagesVitaminsAlexandra AyneNo ratings yet

- Modern Geotechnical Design Codes of Practice Implementation, ApplicationDocument340 pagesModern Geotechnical Design Codes of Practice Implementation, ApplicationAngusNo ratings yet

- CSE-311 Operating SystemsDocument22 pagesCSE-311 Operating Systemsbasheer usman illelaNo ratings yet

- DIN-A-MITE Solid-State Power ControllerDocument21 pagesDIN-A-MITE Solid-State Power Controllermisael123No ratings yet

- Arnav Roy: B.Tech, Information TechnologyDocument1 pageArnav Roy: B.Tech, Information TechnologyarnavNo ratings yet

- Gcse Astronomy Coursework ExamplesDocument4 pagesGcse Astronomy Coursework Examplesqclvqgajd100% (2)

- Q1 Module 5 Electric Circuit Applying Ohms Law FinalDocument26 pagesQ1 Module 5 Electric Circuit Applying Ohms Law FinalMartin lhione LagdameoNo ratings yet

- LDXX 6516DS VTMDocument3 pagesLDXX 6516DS VTMBranko BorkovicNo ratings yet

- READMEDocument4 pagesREADMENose NoloseNo ratings yet

- Mitel 5000 CP v5.0 System Administration Diagnostics Guide PDFDocument46 pagesMitel 5000 CP v5.0 System Administration Diagnostics Guide PDFRichNo ratings yet

- Maintenance Manual: Spitfire 65/90Document319 pagesMaintenance Manual: Spitfire 65/90Сергей СаяпинNo ratings yet

- Simplify Your Custom Logic Using Extended AttributesDocument34 pagesSimplify Your Custom Logic Using Extended AttributesArjun SharmaNo ratings yet

- Accenture - It's LearningDocument40 pagesAccenture - It's LearningUtkarsh SinghNo ratings yet

- Nemo File Format V2.14Document545 pagesNemo File Format V2.14Claudio Garretón VénderNo ratings yet

- Uht 75 Uht 79Document1 pageUht 75 Uht 79ALI MESSAOUDINo ratings yet

- DeadlockDocument53 pagesDeadlocksaifulNo ratings yet

- Usbmedia 888k0x k1x k8x k9x l0x l1x l8x l9x j9x r22-11Document4 pagesUsbmedia 888k0x k1x k8x k9x l0x l1x l8x l9x j9x r22-11Renato RochaNo ratings yet

- Java Last Year Question PaperDocument18 pagesJava Last Year Question PaperibrahimnasimshaikhNo ratings yet

- ListinorebautDocument109 pagesListinorebautwineletronNo ratings yet

- Gary Vee Content PDFDocument86 pagesGary Vee Content PDFvbisNo ratings yet

- Substation Grounding Studieswith More Accurate Fault Analysisand Simulation StrategiesDocument10 pagesSubstation Grounding Studieswith More Accurate Fault Analysisand Simulation StrategiesFOGNo ratings yet

- Apr19nsDocument7 pagesApr19nsPreeti goswamiNo ratings yet

- FurnitureDocument51 pagesFurniturepoonam sanas43% (7)

- NanotechnologyDocument7 pagesNanotechnologyJames UgbesNo ratings yet

- ACO PACIFIC INC - 7012 - Hoja TecnicaDocument6 pagesACO PACIFIC INC - 7012 - Hoja TecnicaJuan Sebastian Juris ZapataNo ratings yet

- Ieee Digital Signal Processing 2015 Matlab Project List Mtech BeDocument3 pagesIeee Digital Signal Processing 2015 Matlab Project List Mtech BeHassan RohailNo ratings yet

- GEM - Product Price List Sep 2017Document1 pageGEM - Product Price List Sep 2017sasasjkNo ratings yet

STS_LESSON-6

STS_LESSON-6

Uploaded by

Alexandra AyneCopyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

STS_LESSON-6

STS_LESSON-6

Uploaded by

Alexandra AyneCopyright:

Available Formats

SCIENCE, TECHNOLOGY AND SOCIETY GED SEM

C / JOANA BEATRICE SIBAL & ALIZAH JOY CUETO 109 O2

CHAPTER 6: WHEN TECHNOLOGY AND HUMANITY CROSS

TOPIC OUTLINE

1 The Ethical Dilemmas of Robotics
2 Human, Morals and Machines
3 Why the Future Does Not Need Us?

A. The Ethical Dilemmas of Robotics

Technological progress has brought many amazing achievements in the past century, like fast travel and instant communication.

Androids
• robots that are just like humans and aware of themselves
• Challenging to create
• Even though we've made progress, fully human-like robots are still a long way off.
• Technology → advancing rapidly → some think it's just a matter of time

But if these robots become a reality, there will be a lot of ethical issues to deal with.

Definition

ROBOT
(Image: a humanoid robot created by Honda in 2000)
• no clear agreement on its exact definition
• Generally agreed that it possesses attributes such as mobility, intelligent behavior, and sensing and manipulation of the environment
• goes beyond just “androids”

Karel Čapek (Rossum’s Universal Robots, R.U.R., 1920)
• first to use the word “robot”
• “robota” – “work” (forced labor) in Czech, a Slavic language
• “artificial people” were created to serve and were given the ability to think

Although the term "robot" originated in 1920, the concept of mechanical humans dates back to Greek mythology.

Ancient Times

ROBOTS
• “mechanical human” - as depicted in Greek mythology
• Servants of the Greek god Hephaestus (God of Fire and the Forge)
  o built robots out of gold, which were his “helpers”, and life-size golden handmaidens who helped around the house
• Myth of Pygmalion - carefully crafted a statue of Galatea that would come to life through the goddess Venus

700 BCE
Hephaestus (God of Technology) – he was creating a new defense system for King Minos for his island kingdom of Crete, but mortal guards and ordinary weapons would not suffice.
Talos - shaped like a giant man, made of gleaming bronze, with superhuman strength, and powered by ichor (the life fluid of the gods)
- the first robot
Patragol crew of Jason, Medea, and the Argonauts
Medea – offered to make Talos immortal in exchange for removing the bolt; Talos agreed, and removing the bolt drained his life. After that the crew got to go home safe and sound.

4th Century BCE
-- Greek engineers began making actual automatons, including robotic servants and flying birds
Talos – the most famous invention; he appeared on Greek coins, vase paintings, public frescoes, and in theater performances.
Even 2,500 years ago, the Greeks had already begun to investigate the uncertain line between human and machine.

Developments
• 1495
  o Leonardo da Vinci sketched plans for a humanoid robot.
  o Designed in the form of an armored knight
  o unclear if Da Vinci built it, but the concept marked a significant moment in robotic history.
  o Possessed the ability to sit up, wave its arms, move its head, and open its mouth

Since then, progress has been made in developing humanoid robots, aiming for ones that closely resemble humans. Despite recent technological advances and increased computing power, achieving this dream is still a long way off.

Robin Marantz Henig (Article in The New York Times)

BALICLIC. GUALTER. PAYOS. TOMOCO | 2207 1

• Shared her experiences with “social robots”
• These robots fall short of the idealized servant robots from Greek mythology and are more like early versions of the desired androids.
-- Contrary to popular expectations, these machines aren't obedient companions or villains ready to rebel.
  ▪ Instead, they are metal entities connected to computers, relying on their human designers to start them up and troubleshoot any issues – they don’t have autonomy.

Rodney Brooks (Expert in Robotics and Artificial Intelligence, 2008)
• explains that it is no longer a question of whether human-level artificial intelligence will be developed, but rather how and when
• Android development introduces a set of unique ethical issues which industrial robots do not evoke.
• The overarching question that results is what exactly these robots truly are.
• Are they simply piles of electronics running advanced algorithms, or are they a new form of life?
• What is Life? The question of what constitutes life is one on which the world may never come to a consensus.

Throughout history, people have had various opinions on what defines a living organism, from ancient philosophers to everyday individuals.

Materialism vs Aristotle’s View
• The basic tenets of Aristotle’s view are that an organism has both “matter” and “form.”
  o “Form”: the unique characteristics that make an organism what it is
• This differs from the philosophical position known as MATERIALISM, which has become popular in modern times and finds its roots among the ancient Indians.
• Materialism - organisms are made simply of various types of “matter.”
-- a more modern viewpoint that excludes the concept of "form" or "soul," focusing solely on different types of "matter."
• One type of “matter” which Aristotle speaks of could be biological material such as what plants, animals, and humans consist of; or
• Another type of “matter” could also be the mechanical and electronic components which make up modern-day robots.

Clearly it is not the “matter” alone which distinguishes whether an object is a living organism, for if it were, Aristotle’s view would differ little from materialism.
• The distinguishing characteristic of Aristotle's view is its inclusion of “form.”

FORM
• simply means whatever it is that makes a human a human, a plant a plant, and an animal an animal.
  o Each of these has a specific “form” which is not the same as its “matter”
    ▪ but is a functioning unity (matter and form) which is essential to each living organism in order for it to be just that, living.
• In Aristotle’s terms, the “form” of a living organism is often referred to as “psyche” or “soul.”

Materialism and Catholicism
• Materialism: often conflicts with religious views worldwide, unlike Aristotle's philosophy, which was adopted by many religions, notably the Roman Catholic Church and specifically by St. Thomas Aquinas.

Materialism – “a philosophical system which regards matter as the only reality in the world, which undertakes to explain every event in the universe as resulting from the conditions and activity of matter, and which thus denies the existence of God and the soul.” (Catholic Encyclopedia)

Materialism – matter is the only reality
Aristotle’s View – has form; accepting it acknowledges the existence of a higher being and of a soul

Why does it matter that materialism conflicts with Catholicism and most other religions? Specifically, what does this have to do with robots and androids?
  o It is relevant because, if materialism is correct, humans should have the power to develop new forms of life.
  o If everything in the universe is just material and the outcome of material interactions, there should be no obstacle to developing androids and considering them as equally valid life forms as humans.

These philosophical positions significantly impact how humans approach and interact with robots.

THREE LAWS OF ROBOTICS

Isaac Asimov
• introduced to the world of science fiction what are known as the Three Laws of Robotics, which were published in his short story “Runaround” in 1942.
• The laws Asimov formulated are:
  1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.
  2. A robot must obey any orders given to it by human beings, except where such orders would conflict with the First Law.
  3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

These three laws indicate that robots uphold humans as superior, since humans have form.

While these laws are rooted in science fiction, the current state of robotic technology requires a fresh perspective on them.
• As ideas from science fiction become reality, Asimov's laws can now be applied practically.
• Initially, these laws appear to ensure the safe advancement of this potential new life form.
  o However, they assume that human life holds greater value than that of the androids being created.

If we believe that androids should be regarded as equal to or even above humans, Asimov's laws may not apply.
  o The laws place androids in a subordinate position to humans.

If androids are considered equal to humans in terms of their right to life, implementing Asimov's laws becomes problematic.
• In a scenario involving both human and android soldiers, should an android always sacrifice itself to save a human? Would humans be willing to die for androids?
• Despite some embracing materialism and envisioning robots as potential equals to humans, it's doubtful that many would be willing to sacrifice their lives for a robot.

Although current robotics technology doesn't demand ethical codes for robots, some countries are taking proactive steps towards developing a code of ethics for them.

Robot Ethics Charter

South Korea
  o considered one of the most high-tech countries in the world
  o leading the way in the development of the Robot Ethics Charter

Robot Ethics Charter
  o drawn up “to prevent human abuse of robots, and vice versa”.
  o focuses on addressing social issues arising from the widespread integration of robots into society, including
    ▪ defining proper human-robot interactions
    ▪ managing data collection and distribution by robots
  o Some see this charter as reminiscent of Asimov's Three Laws of Robotics, but others, like robot designer Mark Tilden, believe it's premature to imbue robots with morals.

Despite differing views, the advancement of technology will likely prompt other countries to develop their own codes of ethics for robots and human interactions.

B. Human, Morals and Machines

Technology is transforming our interactions with nature.
• We now have what's called "technological nature," where various technologies replicate, enhance, or simulate natural experiences.
• Television channels like the Discovery Channel and Animal Planet offer digital portrayals of nature, showcasing animal behaviors and natural phenomena.
• Video games like Zoo Tycoon allow children to engage with virtual animal life. Even zoos are integrating technology, using webcams to let people observe animals remotely.
• Robot pets, like Sony's AIBO, are popular items in stores.
• Many people spend significant time in virtual worlds like Second Life.

Does it matter that we're substituting real nature with technological alternatives?

To answer this, we look at evolutionary and cross-cultural perspectives on humans' relationship with nature, as well as recent psychological research on the impact of technological nature.

Legal Moves for Safety

Scientists are starting to seriously consider the ethical challenges arising from advancements in robotics.
• In South Korea, experts are creating an ethical code to prevent mistreatment of robots by humans and vice versa.
• The European Robotics Research Network (EURON) is urging governments to establish laws, particularly focusing on safety as robots become more commonplace in everyday life.

As robots become more intelligent, determining who is responsible for any harm they cause becomes tricky: the designer, the user, or the robot itself.
• Ethical guidelines for robots can be based on utilitarian principles but may need adjustments based on specific circumstances.
• For instance, medical professionals follow ethical codes tailored to patient needs, and similar standards apply to other professions like law, religion, and military service.
• Robots will adhere to the ethical rules we provide them, making decisions based on the moral codes and values we set.
• In emergency situations, autonomous vehicles may prioritize saving more lives, even if it means sacrificing a few.

Ethical Issues
• There are special cases that will require modifications of the core rules, based on the circumstances of their use. For example:
  ✓ Doctors do not euthanize patients to spread the wealth of their organs, even if it means that there is a net positive with regard to survivors.
  -- They have to conform to a separate code of ethics, designed around the needs and rights of patients, that restricts their actions.
  ✓ Lawyers, religious leaders, and military personnel establish special relationships with individuals, who are protected by specific ethical codes.

It's crucial for these systems to be able to explain their moral decisions, just like humans would explain their actions.

Today, advanced technologies like Artificial Intelligence (AI), augmented reality, virtual reality, home robots, and cloud computing are fascinating many people.
• According to academics, entrepreneurs, and businesses, these technologies have the potential to significantly change society.
• While it's uncertain if these technologies will achieve their ambitious goals, they will definitely mix with influential demographic, economic, and cultural factors, potentially altering daily life significantly.

“Is Google Making Us Stupid?”
• article by Nicholas Carr (2008)
• discusses how the Internet affects our ability to focus, changes our knowledge, and increases our dependence on it
• argues that spending time online has negative effects on our minds, making it difficult to concentrate on long reading passages.
• Carr personally struggles with the negative effects, feeling that his brain now expects information to be delivered quickly, like the fast flow of the Internet.
• Carr suggests that our brains are adapting to the rapid pace of online information consumption.
• The article is divided into two main parts:
  o Carr's desire for his brain to synchronize with the Internet
  o Google's perspective on replacing human brains with artificial intelligence

C. Why the Future Does Not Need Us?
• As technology advances, intelligent machines capable of outperforming humans in various tasks are being developed.
  o This could lead to all work being carried out by highly organized systems of machines, rendering human effort unnecessary.
• Either of two cases might occur:
  o The machines might be permitted to make all of their own decisions without human oversight.
  o Human control over the machines might be retained.

Machines > Humans
• If machines are granted full decision-making autonomy, the outcome is unpredictable since it's impossible to foresee their behavior.
  o However, it's evident that humanity's fate would then be dictated by these machines.
• While it might be argued that humans would never willingly surrender all power to machines, the reality could be different. Society might either
  o willingly relinquish control to machines or
  o find itself so reliant on them that it has no practical choice but to accept their decisions.

Humans > Machines
• Human control over machines might persist
  o average man: may have control over certain private machines of his own, such as his car or his personal computer

  o tiny elite: control over large systems of machines will be in the hands of a tiny elite
• Ruthless elite: might opt to eliminate the majority of humanity; could employ methods such as propaganda or biological means to decrease the birth rate until humanity dwindles, leaving only the elite
• Compassionate elite: may choose to shepherd the rest of humanity, ensuring their basic needs are met, providing wholesome activities, and offering treatments for dissatisfied individuals.
  o However, life in such a society would lack purpose, potentially requiring biological or psychological alterations to eliminate the desire for power or redirect it into harmless pursuits.
  o While engineered individuals in this society may be content, they would lack freedom and be reduced to the status of domesticated animals.

Theodore Kaczynski
• also known as the Unabomber
• an American domestic terrorist who killed three people and injured many more in a bombing campaign targeting individuals involved with modern technology.
• While his actions were criminal and insane, his vision highlighted the unintended consequences often associated with technology.

❖ unintended consequences are often associated with technology – highlighted by the Unabomber
• Echoes Murphy's law – "Anything that can go wrong, will." For example:
  o overuse of antibiotics → biggest such problem so far: the emergence of antibiotic-resistant and much more dangerous bacteria.
  o attempts to eliminate malarial mosquitoes using DDT (dichlorodiphenyltrichloroethane, an insecticide) caused them to acquire DDT resistance; malarial parasites, likewise, acquired multi-drug-resistant genes
• The root cause of such surprises lies in the complexity of the systems involved, where changes can have cascading (knock-on) effects that are hard to predict, especially when human actions are involved.
• Biological species often struggle to survive when faced with superior competitors, as seen in the displacement of South American marsupials by North American placental mammals millions of years ago.

Marsupials – mammals comprising kangaroos and related animals that do not develop a true placenta

• In a completely free marketplace, advanced robots would likely impact humans similarly to how North American placental mammals affected South American marsupials, or how humans have impacted numerous species.

Robot industries would compete fiercely for resources, driving prices beyond human affordability and potentially leading to the displacement of biological humans from existence.

A textbook dystopia: Moravec
• goes on to discuss how our main job in the 21st century will be
  o “ensuring continued cooperation from the robot industries” by passing laws decreeing that they be “nice,” and
  o describing how seriously dangerous a human can be once transformed into an unbounded superintelligent robot.
• Moravec’s view is that the robots will eventually succeed us, that is, that humans clearly face extinction.
• While we're accustomed to frequent scientific advancements, we haven't fully grasped the unique threats posed by 21st-century technologies like
  o robotics
  o genetic engineering
  o nanotechnology

Threats Brought by Scientific Breakthroughs

1. Robots, engineered organisms, and nanobots share a dangerous amplifying factor: they can self-replicate.
• While a bomb explodes only once, a single robot or nanobot can reproduce rapidly and become uncontrollable.
• This self-replication can occur through computer networks, presenting risks like system failures or disruptions.
• Uncontrolled self-replication may lead to a GREATER RISK: potentially causing significant physical damage.

2. With robotics, genetic engineering, and nanotechnology, a sequence of small, individually sensible advances leads to an accumulation of great power and, simultaneously, great danger.
• Each of these technologies also offers untold promise: the vision of near immortality that Kurzweil sees in his robot dreams drives us forward;
  o Genetic engineering may soon provide treatments, if not outright cures, for most diseases; and
  o Nanotechnology and nanomedicine can address more ills.
  o Together, they could significantly extend our average life span and improve the quality of our lives.
• What was different in the 20th century? Certainly, the technologies underlying the weapons of mass destruction (WMD), namely nuclear, biological, and chemical (NBC), were powerful, and the weapons an enormous threat.
• But building nuclear weapons required, at least for a time, access to both
  o rare (indeed, effectively unavailable) raw materials and
  o highly protected information

WHEN TECHNOLOGY AND HUMANITY CROSS

• Biological and chemical weapons programs also tended to require large-scale activities.

21st-century technologies: genetics, nanotechnology, and robotics (GNR)
• are so powerful that they can spawn whole new classes of accidents and abuses.
• Most dangerously, for the first time, these accidents and abuses are widely within the reach of individuals or small groups.
o They do not require large facilities or rare raw materials. Knowledge alone will enable their use; thus, we have the possibility not just of weapons of mass destruction but of knowledge-enabled mass destruction (KMD), this destructiveness hugely amplified by the power of self-replication.

3. As enormous computing power is combined with the manipulative advances of the physical sciences and the new, deep understandings in genetics, enormous transformative power is being unleashed, along with rapid and radical progress in molecular electronics.
• Molecular electronics: individual atoms and molecules replace lithographically drawn transistors.
• We would be able to meet or exceed the Moore's law rate of progress for another 30 years. By 2030, we are likely to be able to build machines, in quantity, a million times as powerful as the personal computers of today.

4. We now know with certainty that profound changes in the biological sciences are imminent and will challenge all our notions of what life is.
• Genetic engineering promises to
- revolutionize agriculture by increasing crop yields while reducing the use of pesticides
- create tens of thousands of novel species of bacteria, plants, viruses, and animals
- replace reproduction, or supplement it, with cloning
- create cures for many diseases, increasing our life span and our quality of life.
• [Genetic engineering] Human cloning: profound ethical and moral issues
- If we were to reengineer ourselves into several separate and unequal species, then we would threaten the notion of equality that is the very cornerstone of our democracy.
- The general public is aware of, and uneasy about, genetically modified foods, and seems to be rejecting the notion that such foods should be permitted to be unlabeled.
• [Genetic engineering]
- As the Lovinses note, the USDA has already approved about 50 genetically engineered crops for unlimited release; more than half of the world's soybeans and a third of its corn now contain genes spliced in from some other forms of life.

5. Nanotechnology, like nuclear technology, lends itself more readily to destructive uses than to constructive ones. It can be exploited by military or terrorist groups to create devastating devices that could target specific areas or populations.

J. Robert Oppenheimer
• a renowned physicist
• led the development of the atomic bomb out of concern that Nazi Germany might obtain such weapons.
• Despite concerns about potential risks, such as Edward Teller's calculation suggesting that an atomic explosion might set the atmosphere on fire, scientists proceeded with the first atomic test, known as Trinity.
• Following a successful test, atomic bombs were dropped on Hiroshima and Nagasaki.
• While some scientists suggested demonstrating the bomb instead of using it in combat, the urgency to end the war prevailed.
• Despite the shock and horror following the bombing of Hiroshima, a second bomb was dropped on Nagasaki.
• In November 1945, Oppenheimer emphasized the responsibility of scientists to
- use knowledge for the betterment of humanity and
- consider the consequences of their actions.

OPPENHEIMER
Oppenheimer stood firmly behind the scientific attitude, saying, “It is not possible to be a scientist unless you believe that the knowledge of the world, and the power which this gives, is a thing which is of intrinsic value to humanity, and that you are using it to help in the spread of knowledge and are willing to take the consequences.”

In our time, how much danger do we face not just from nuclear weapons but from all these technologies? How high are the extinction risks?

Human Extinction vs Technologies
• Philosopher John Leslie concluded that the risk of human extinction is at least 30 percent.
• Ray Kurzweil holds the view that there is a greater than fifty percent likelihood of successfully navigating the future, although it should be noted that he has frequently been labeled as optimistic.

Not only are these estimates not encouraging, but they do not include the probability of many horrid outcomes that lie short of extinction.

How To Be Saved from Human Extinction?
• Faced with such assessments, some serious people are already suggesting that we simply move beyond the Earth as quickly as possible.
• We would colonize the galaxy using von Neumann probes (self-replicating spacecraft), which hop from star system to star system, replicating as they go.
• This step will almost certainly be necessary billions of years from now (or sooner if our solar system is disastrously impacted by the impending collision of our galaxy with the Andromeda galaxy within the next three billion years),
o but if we take Kurzweil and Moravec at their word, it might be necessary by the middle of this century.
• Another idea involves constructing shields to defend against dangerous technologies, akin to the Strategic Defense Initiative proposed during the Reagan administration.
o However, experts argue that such shields would be ineffective and may have harmful side effects.
o Therefore, limiting the pursuit of certain types of knowledge emerges as a more realistic alternative to mitigate the risks posed by advanced technologies.
• We have been seeking knowledge since ancient times. Aristotle opened his Metaphysics with the simple statement: “All men by nature desire to know.”
• Throughout history, the pursuit of knowledge has been revered, but there are warnings, such as Nietzsche's, about the potential dangers of seeking truth at any cost.
- Nietzsche warned, at the end of the 19th century, not only that God is dead but that “faith in science, which after all exists undeniably, cannot owe its origin to a calculus of utility; it must have originated in spite of the fact that the disutility and dangerousness of the ‘will to truth,’ of ‘truth at any price’ is proved to it constantly.”
▪ It is this further danger that we now fully face: the consequences of our truth-seeking. The truth that science seeks can certainly be considered a dangerous substitute for God if it is likely to lead to our extinction. Atomic bombs, nanotechnology, and genetic engineering are examples: we study them for knowledge, but they can have devastating effects.

HAPPINESS
“The exercise of vital powers along lines of excellence in a life affording them scope”
- Greek definition -

• To be truly happy in the future, we need to
- have meaningful goals and challenges.
- explore other ways to express our creativity, rather than relying solely on constant economic growth.
▪ While economic growth has benefited us for a long time, it hasn't made us completely happy.

Now, we have to decide if we want to keep pursuing endless growth through technology, knowing the risks involved.

