Professional Documents

Culture Documents

Lean Six Sigma Measure Phase

Lean Six Sigma Measure Phase

Uploaded by

allisson_acosta18Copyright:

Available Formats

You might also like

- Agile Landscape - Chriss WebbDocument2 pagesAgile Landscape - Chriss WebbRichard Lopez0% (1)

- 06 5s Implementation Plan and Training Guide v20130618 PDFDocument16 pages06 5s Implementation Plan and Training Guide v20130618 PDFRamesh BabuNo ratings yet

- SAP S4 Hana CloudDocument1 pageSAP S4 Hana CloudYong Benedict67% (3)

- Unit 4 - L Notes - Introduction To MicrocontrollerDocument53 pagesUnit 4 - L Notes - Introduction To MicrocontrollerAKSHANSH MATHUR100% (1)

- PS70299 ICS 1100 Product SpecificationsDocument7 pagesPS70299 ICS 1100 Product SpecificationsTanuwijaya Jaya SugiNo ratings yet

- 03 - BB Manual - Measure - v12 - 4 PDFDocument154 pages03 - BB Manual - Measure - v12 - 4 PDFRicardo BravoNo ratings yet

- Measure Phase: Process DiscoveryDocument81 pagesMeasure Phase: Process DiscoveryKefin TajebNo ratings yet

- 1 - Control - Welcome To Control SPFDocument4 pages1 - Control - Welcome To Control SPFadeelcyNo ratings yet

- Dmaic Six Sigma 1699396223Document138 pagesDmaic Six Sigma 1699396223chorouqNo ratings yet

- Continuous Improvement: The 7 Basic Quality Tools The DMAIC ProcessDocument15 pagesContinuous Improvement: The 7 Basic Quality Tools The DMAIC ProcessdayalumeNo ratings yet

- Measure - Phase of The DMAIC MethodologyDocument148 pagesMeasure - Phase of The DMAIC MethodologyGaddipati MohankrishnaNo ratings yet

- Performance Management: With The Earth in MindDocument29 pagesPerformance Management: With The Earth in MindpablodiazproNo ratings yet

- 5S Implementation PlanDocument16 pages5S Implementation PlanSathyanarayanan GNo ratings yet

- Deliverables by Workstream Design 2011 PDFDocument1 pageDeliverables by Workstream Design 2011 PDFMohammed AhmedNo ratings yet

- Process Mapping in PracticeDocument21 pagesProcess Mapping in Practiceokamo100% (2)

- GAMMA 2 Course Material v2.1Document33 pagesGAMMA 2 Course Material v2.1Neelam ShindeNo ratings yet

- S02+C06+ +17+Steps+of+BB+RoadmapDocument3 pagesS02+C06+ +17+Steps+of+BB+Roadmapazharbinyunus01No ratings yet

- BITM 305-Week - 3Document63 pagesBITM 305-Week - 310303374No ratings yet

- Chapter 10: Process Implementation With Executable ModelsDocument25 pagesChapter 10: Process Implementation With Executable ModelsFranz Antony BendezuNo ratings yet

- Green Belt Course ManualDocument34 pagesGreen Belt Course ManualKaranShinde100% (1)

- Lean Six Sigma Operations Black Belt - Week 1: Sample Size CalculationDocument42 pagesLean Six Sigma Operations Black Belt - Week 1: Sample Size CalculationSteph JoseNo ratings yet

- Software Testing - Best Practice: Testing Checklist The Testing LifecycleDocument1 pageSoftware Testing - Best Practice: Testing Checklist The Testing LifecycleNidal ChoumanNo ratings yet

- Requirements Gathering and ManagementDocument20 pagesRequirements Gathering and ManagementAlan McSweeney100% (4)

- HC-Root Cause Analysis in HC - ExtractedDocument54 pagesHC-Root Cause Analysis in HC - Extracteddinesh pharmaNo ratings yet

- FBPM2 Chapter 11 ProcessMonitoringDocument82 pagesFBPM2 Chapter 11 ProcessMonitoringnoranasihahNo ratings yet

- Module 39. Improve RoadmapDocument4 pagesModule 39. Improve Roadmaptaghavi1347No ratings yet

- The Toyota Way 1Document32 pagesThe Toyota Way 1jeremy rasoolNo ratings yet

- 1 - Good Presentation ExampleDocument34 pages1 - Good Presentation ExampleHesham TaherNo ratings yet

- GROUP 3 Note Effective Problem Solving Through 7QC Tools - Air SelangorDocument129 pagesGROUP 3 Note Effective Problem Solving Through 7QC Tools - Air Selangorinfo qtcNo ratings yet

- Measures of Forecast Error - MSE MAD MAPE Regression AnalysisDocument34 pagesMeasures of Forecast Error - MSE MAD MAPE Regression AnalysisDecaNo ratings yet

- AnalyzeDocument8 pagesAnalyzeEr Darsh ChahalNo ratings yet

- Quality 1Document3 pagesQuality 1Nam Phạm xuânNo ratings yet

- Six Sigma: DMAIC Y F (X)Document37 pagesSix Sigma: DMAIC Y F (X)Niral JainNo ratings yet

- SPC Charts in Manufacturing Industry Students Name UniversityDocument5 pagesSPC Charts in Manufacturing Industry Students Name UniversityTito MazolaNo ratings yet

- Basic 1 Hour Classroom Instruction Delivery Alignment Map (Activity Workshop)Document5 pagesBasic 1 Hour Classroom Instruction Delivery Alignment Map (Activity Workshop)Cherryl Asuque GellaNo ratings yet

- Improve MaterialDocument130 pagesImprove MaterialbillNo ratings yet

- 5 2-14 Rapid Changeover Smed Uoc7001a - 91558nsw CLRDocument47 pages5 2-14 Rapid Changeover Smed Uoc7001a - 91558nsw CLRImran HakimNo ratings yet

- Introduction To Six SigmaDocument30 pagesIntroduction To Six SigmaKashif Razzaqui100% (40)

- Quality Circle Writting Book NewDocument11 pagesQuality Circle Writting Book Newboncy raguNo ratings yet

- Lean Six Sigma Operations Black Belt Bridge - Week 1: Evaluate Measurement System OverviewDocument27 pagesLean Six Sigma Operations Black Belt Bridge - Week 1: Evaluate Measurement System OverviewSteph JoseNo ratings yet

- Scope Definition: Evaluation Questionnaire Checklist Templates Guidelines Estimation FrameworksDocument10 pagesScope Definition: Evaluation Questionnaire Checklist Templates Guidelines Estimation Frameworksbudhaditya.choudhury2583No ratings yet

- FBPM2 Chapter07 QuantitativeProcessAnalysisDocument66 pagesFBPM2 Chapter07 QuantitativeProcessAnalysisTholhah HizbullohNo ratings yet

- Week 6 Dasar Pemodelan ProsesDocument48 pagesWeek 6 Dasar Pemodelan ProsesJavla RumpaNo ratings yet

- Course Material of Six Sigma Black-Belt - Improvement Phase Course Material of Six Sigma Black-Belt - Improvement PhaseDocument65 pagesCourse Material of Six Sigma Black-Belt - Improvement Phase Course Material of Six Sigma Black-Belt - Improvement Phasev menonNo ratings yet

- Road To I PT Final PosterDocument1 pageRoad To I PT Final PosterChristian Trésor KandoNo ratings yet

- Module 3 Matl - Measure PhaseDocument75 pagesModule 3 Matl - Measure PhaseHannah Nicdao TumangNo ratings yet

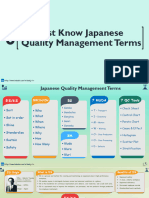

- 6 Must Know Japanese Quality Management Concepts 1664051048Document8 pages6 Must Know Japanese Quality Management Concepts 1664051048Shady Essam ElkilanyNo ratings yet

- 6 Must Know Japanese Quality Management ConceptsDocument8 pages6 Must Know Japanese Quality Management Conceptsvinc 98No ratings yet

- Quality ManagementDocument8 pagesQuality ManagementHaris PrayogoNo ratings yet

- GLX Brasil April Day2Document102 pagesGLX Brasil April Day2flavioNo ratings yet

- 8332 Iam Assessmeth 4ppa4v3 No CropDocument4 pages8332 Iam Assessmeth 4ppa4v3 No Cropdavidphillips2020No ratings yet

- Chapter 2: Process Identification: Seite 1Document45 pagesChapter 2: Process Identification: Seite 1Talha HabibNo ratings yet

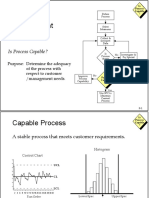

- The Quality Improvement Model: Is Process Capable?Document19 pagesThe Quality Improvement Model: Is Process Capable?VishalNaranjeNo ratings yet

- Improve - 9 - Wrap Up and Action Items - v12-1Document10 pagesImprove - 9 - Wrap Up and Action Items - v12-1okanboragameNo ratings yet

- Business Process ManagementDocument51 pagesBusiness Process ManagementRoaa MuhammedNo ratings yet

- You Exec - Bains Management Toolkit Part1 FreeDocument5 pagesYou Exec - Bains Management Toolkit Part1 FreedsNo ratings yet

- The 7 Steps of QC Problem Solving: QC Pillar Training MaterialDocument58 pagesThe 7 Steps of QC Problem Solving: QC Pillar Training MaterialvictorNo ratings yet

- Cost Recovery: Turning Your Accounts Payable Department into a Profit CenterFrom EverandCost Recovery: Turning Your Accounts Payable Department into a Profit CenterNo ratings yet

- Apd4 T88res Install e RevbDocument54 pagesApd4 T88res Install e RevbMSushi MsushispaNo ratings yet

- Cloud Computing ApplicationsDocument60 pagesCloud Computing ApplicationsAshwini T50% (2)

- Sesion3 Optimizacion Redes MovilesDocument12 pagesSesion3 Optimizacion Redes MovilesCreación Redes LitoralNo ratings yet

- 3D Printing and NanotechnologyDocument18 pages3D Printing and NanotechnologyJoyitaNo ratings yet

- Cod.380S.B: Instruction ManualDocument24 pagesCod.380S.B: Instruction Manualamskroud brahimNo ratings yet

- Prismatic DesignDocument12 pagesPrismatic DesignPersia JulietNo ratings yet

- ACS600Document21 pagesACS600Varun Kumar100% (2)

- Mitsubishi FX1N Yj ManualDocument124 pagesMitsubishi FX1N Yj ManualSandi PratamaNo ratings yet

- File Infection Techniques: Wei WangDocument30 pagesFile Infection Techniques: Wei WangNhật HuyNo ratings yet

- Journal - Change of Authorship Form: Multidisciplinary Digital Publishing InstituteDocument3 pagesJournal - Change of Authorship Form: Multidisciplinary Digital Publishing InstituteReynaldo CanahuaNo ratings yet

- (DSMAT 31) : Maximum Marks: 30 Answer All QuestionsDocument14 pages(DSMAT 31) : Maximum Marks: 30 Answer All QuestionsSimha TestNo ratings yet

- Sap Fi End User Practice Work: AR AP Asset AccountingDocument19 pagesSap Fi End User Practice Work: AR AP Asset AccountingHany RefaatNo ratings yet

- PDF Aviation& Electronic Parts Lookup NSN Parts LookupDocument104 pagesPDF Aviation& Electronic Parts Lookup NSN Parts LookupnsnpartslookupNo ratings yet

- Generative Design MethodsDocument9 pagesGenerative Design MethodsMohammed Gism Allah0% (1)

- 2.operating SystemDocument7 pages2.operating Systemrc5184751No ratings yet

- Can J Chem Eng - 2023 - Yu - Automated Nanofibre Sizing by Multi Image Processing and Deep Learning With Revised UNet ModelDocument13 pagesCan J Chem Eng - 2023 - Yu - Automated Nanofibre Sizing by Multi Image Processing and Deep Learning With Revised UNet ModelDanielNo ratings yet

- Packet Sniffer Project Document PDF FreeDocument90 pagesPacket Sniffer Project Document PDF FreeSurendra ChoudharyNo ratings yet

- Python PracticalDocument18 pagesPython PracticalDivya RajputNo ratings yet

- COS30015 IT Security Research Project: AbstractDocument7 pagesCOS30015 IT Security Research Project: AbstractMAI HOANG LONGNo ratings yet

- Advantech Adam 3600 Intelligent Rtu EbookDocument33 pagesAdvantech Adam 3600 Intelligent Rtu Ebookrusnardi hanif radityaNo ratings yet

- Sap PP Interview Questions, Answers and ExplanationsDocument53 pagesSap PP Interview Questions, Answers and ExplanationsB.n. TiwariNo ratings yet

- 00 360 Midas Experience Program IntroDocument73 pages00 360 Midas Experience Program Introrobert ongNo ratings yet

- Pantech-Software NLP, ML, AI, Android, Big Data, Cloud Computing, NLP Projects 2022 To 2-23Document10 pagesPantech-Software NLP, ML, AI, Android, Big Data, Cloud Computing, NLP Projects 2022 To 2-2320261A3232 LAKKIREDDY RUTHWIK REDDYNo ratings yet

- Trends: Technology Trend Awareness As A Skill Refers To Being Mindful ofDocument6 pagesTrends: Technology Trend Awareness As A Skill Refers To Being Mindful ofleslie jane archivalNo ratings yet

- Curriculum Vitae of Bashir Ahmed Bhuiyan-1Document3 pagesCurriculum Vitae of Bashir Ahmed Bhuiyan-1Golam RasulNo ratings yet

- Introduction To Electrostatic FEA With BELADocument9 pagesIntroduction To Electrostatic FEA With BELAASOCIACION ATECUBONo ratings yet

- 3D Model of Steam Engine Using Opengl: Indian Institute of Information Technology, AllahabadDocument18 pages3D Model of Steam Engine Using Opengl: Indian Institute of Information Technology, AllahabadRAJ JAISWALNo ratings yet

- 20105-AR-GEN-00-004-01 Rev 02Document1 page20105-AR-GEN-00-004-01 Rev 02Bahaa MohamedNo ratings yet

Lean Six Sigma Measure Phase

Lean Six Sigma Measure Phase

Uploaded by

allisson_acosta18Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

Lean Six Sigma Measure Phase

Lean Six Sigma Measure Phase

Uploaded by

allisson_acosta18Copyright:

Available Formats

86

Lean Six Sigma

Black Belt Training

Measure Phase

Welcome to Measure

Now that we have completed the Define Phase we are going to jump into the Measure Phase.

Here you enter

H t ththe world

ld off measurement,

t where

h you can didiscover th

the ultimate

lti t source off

problem-solving power: data. Process improvement is all about narrowing down to the vital few

factors that influence the behavior of a system or a process. The only way to do this is to

measure and observe your process characteristics and your critical-to-quality characteristics.

Measurement is generally the most difficult and time-consuming phase in the DMAIC

methodology. But if you do it well, and right the first time, you will save your self a lot of trouble

later and maximize your chance of improvement.

Welcome to the Measure Phase - will give you a brief look at the topics we are going to cover.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

87

Welcome to Measure

Overview

These are the modules

we will cover in the Welcome

Welcome to

to Measure

Measure

Measure Phase.

Process

Process Discovery

Discovery

Six

Six Sigma

Sigma Statistics

Statistics

Measurement

Measurement System

System Analysis

Analysis

Process

Process Capability

Capability

Wrap

Wrap Up

Up &

& Action

Action Items

Items

DMAIC Roadmap

Process Owner

Champion/

Identify Problem Area

D t

Determine

i Appropriate

A i t Project

P j t Focus

F

Define

Estimate COPQ

Establish Team

Measure

Assess Stability, Capability, and Measurement Systems

Identify and Prioritize All X’s

alyze

Prove/ Disprove Impact X’s

X s Have On Problem

Ana

Improve

Identify, Prioritize, Select Solutions Control or Eliminate X’s Causing Problems

Implement Solutions to Control or Eliminate X’s Causing Problems

Control

Implement Control Plan to Ensure Problem Doesn’t Return

Verify

y Financial Impact

p

Here is the overview of the DMAIC process. Within Measure we are going to start getting into details about

process performance, measurement systems and variable prioritization.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

88

Welcome to Measure

Measure Phase Deployment

Detailed Problem Statement Determined

Detailed Process Mapping

Identify All Process X’s Causing Problems (Fishbone, Process Map)

Select the Vital Few X’s Causing Problems (X-Y Matrix, FMEA)

Assess Measurement System

Y

Repeatable &

Reproducible?

N

Implement Changes to Make System Acceptable

Assess Stability (Statistical Control)

Assess Capability (Problem with Centering/Spread)

Estimate Process Sigma Level

Review Progress with Champion

Ready for Analyze

This provides a process look at putting “Measure” to work. By the time we complete this phase you

will have a thorough understanding of the various Measure Phase concepts

concepts.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

89

Lean Six Sigma

Black Belt Training

Measure Phase

Process Discovery

Now we will continue in the Measure Phase with “Process Discovery”.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

90

Process Discovery

Overview

Welcome

Welcome to

to Measure

Measure

Process

Process Discovery

Discovery

Cause

Cause &

& Effect

Effect Diagram

Diagram

Detailed

Detailed Process

Process Mapping

Mapping

Cause

Cause and

and Effect

Effect Diagrams

Diagrams

FMEA

FMEA

Six

Six Sigma

Sigma Statistics

Statistics

Measurement

Measurement System

System Analysis

Analysis

Process

Process Capability

Capability

Wrap

Wrap Up

Up &

& Action

Action Items

Items

The purpose of this module is highlighted above. We will review tools to help facilitate Process

Discovery.

This will be a lengthy step as it requires a full characterization of your selected process

process.

There are four key deliverables from the Measure Phase:

(1) A robust description of the process and its workflow

(2) A quantitative assessment of how well the process is actually working

(3) An assessment of any measurement systems used to gather data for making decisions or to

describe the performance of the process

(4) A “short” list of the potential causes of our problem, these are the X’s that are most likely

related to the problem

problem.

On the next lesson page we will help you develop a visual and mental model that will give you

leverage in finding the causes to any problem..

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

91

Process Discovery

Overview of Brainstorming Techniques

Cause and Effect Diagram

People Machine Method

The Y

The or

Problem

The X’s Problem

Condition

(Causes)

l

Material Measurement Environment Categories

You will need to use brainstorming techniques to identify all possible problems and their causes.

Brainstorming techniques work because the knowledge and ideas of two or more persons is

always greater than that of any one individual.

Brainstorming will generate a large number of ideas or possibilities in a relatively short time.

Brainstorming tools are meant for teams

teams, but can be used at the individual level also

also.

Brainstorming will be a primary input for other improvement and analytical tools that you will use.

You will learn two excellent brainstorming techniques, cause and effect diagrams and affinity

diagrams. Cause and effect diagrams are also called Fishbone Diagrams because of their

appearance and sometimes called Ishikawa diagrams after their inventor.

In a brainstorming session, ideas are expressed by the individuals in the session and written down

without debate or challenge

challenge. The general steps of a brainstorming sessions are:

1. Agree on the category or condition to be considered.

2. Encourage each team member to contribute.

3. Discourage debates or criticism, the intent is to generate ideas and

not to qualify them, that will come later.

4. Contribute in rotation (take turns), or free flow, ensure every member

has an equal opportunity.

5. Listen to and respect the ideas of others.

6. Record all ideas generated about the subject.

7. Continue until no more ideas are offered.

8. Edit the list for clarity and duplicates.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

92

Process Discovery

Cause and Effect Diagram

Cause and Effect Diagram A commonly used tool

People Machine Method

to solicit ideas by

using categories to

The Y stimulate cause and

The X’s

The or

Problem

Problem

Condition

effect relationship with

(Causes) a problem. It uses

verbal inputs in a team

l

Material Measurement Environment Categories environment.

Products Categories for the legs of the Transactional

– Measurement diagram can use templates – People

– People for products or transactional – Policy

– Method symptoms Or you can select

symptoms. – Procedure

– Materials the categories by process – Place

– Equipment step or what you deem – Measurement

– Environment appropriate for the situation. – Environment

A cause and effect diagram is a composition of lines and words representing a meaningful

relationship between an effect,

effect or condition

condition, and its causes

causes. To focus the effort and facilitate thought

thought,

the legs of the diagram are given categorical headings. Two common templates for the headings are

for product related and transactional related efforts. Transactional is meant for processes where

there is no traditional or physical product; rather it is more like an administrative process.

Transactional processes are characterized as processes dealing with forms, ideas, people,

decisions and services. You would most likely use the product template for determining the cause of

burnt pizza and use the transactional template if you were trying to reduce order defects from the

order taking process

process. A third approach is to identify all categories as you best perceive them

them.

When performing a cause and effect diagram, keep drilling down, always asking why, until you find

the root causes of the problem. Start with one category and stay with it until you have exhausted all

possible inputs and then move to the next category. The next step is to rank each potential cause by

its likelihood of being the root cause. Rank it by the most likely as a 1, second most likely as a 2 and

so on. This make take some time, you may even have to create sub-sections like 2a, 2b, 2c, etc.

Then come back to reorder the sub-section in to the larger ranking. This is your first attempt at really

finding the Y=f(X); remember the funnel? The top X’s have the potential to be the Critical X’s, those

X’s which exert the most influence on the output Y.

Finally you will need to determine if each cause is a control or a Noise factor. This as you know is a

requirement for the characterization of the process. Next we will explain the meaning and methods

of using some of the common categories.

There may be several interpretations of some of the Process Mapping symbols; however, just about

everyone uses these primary symbols to document processes. As you become more practiced you

will find additional symbols useful, i.e. reports, data storage etc. For now we will start with just these

symbols.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

93

Process Discovery

Cause and Effect Diagram

The Measurement category groups causes related to the measurement and

measuring of a process activity or output:

Examples of questions to ask:

• Is there a metric issue? Measurement

• Is there a valid measurement

system? Is the data good

enough?h?

Y

• Is data readily available?

The People category groups root causes related to people, staffing, and

organizations:

Examples

p of q

questions to ask: People

p

• Are people trained, do they

have the right skills?

• Is there person to person

Y

variation?

• Are people over - worked?

Cause and Effect Diagram

The Method category groups root causes related to how the work is done, the

way the process is actually conducted:

Examples

p of q

questions to ask: Method

• How is this performed?

• Are procedures correct?

• What might unusual? Y

The Materials category groups root causes related to parts, supplies, forms or

information needed to execute a process:

Examples of questions to ask:

• Are bills of material current? Y

• Are parts or supplies obsolete?

• Are there defects in the materials

Materials

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

94

Process Discovery

Cause and Effect Diagram

The Equipment category groups root causes related to tools used in the process:

Examples of questions to ask:

• Have machines been serviced recently,

what is the uptime?

• Have tools been properly maintained? Y

• Is there variation?

Equipment

The Environment (a.k.a. Mother Nature) category groups root causes related to

our work environment, market conditions, and regulatory issues.

Examples of questions to ask:

• Is the workplace safe and

comfortable? Y

• Are outside regulations impacting the

business?

• Does the company culture aid the

process? Environment

Classifying the X’s

The Cause & Effect Diagram is simply a tool to generate opinions

about possible causes for defects.

For each of the X’s identified in the Fishbone diagram classify them

as follows:

– Controllable – C (Knowledge)

– Procedural – P (People, Systems)

– Noise – N (External or Uncontrollable)

Think of procedural as a subset of controllable. Unfortunately, many

procedures within a company are not well controlled and can cause

the defect level to go up. The classification methodology is used to

separate the X’s so they can be used in the X-Y Diagram and the

FMEA taught later in this module.

WHICH X

X’s

s CAUSE DEFECTS?

The Cause and Effect Diagram is an organized way to approach brainstorming. This approach allows

us to further organize ourselves by classifying the X’s into controllable, procedural or noise types.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

95

Process Discovery

Chemical Purity Example

Measurement Manpower Materials

Incoming QC (P) Training on method (P) Raw Materials (C)

Measurement Insufficient staff (C)

Method (P) Skill Level (P) Multiple Vendors (C)

Measurement

Capability (C) Adherence to procedure (P) S

Specifications

ifi ti (C)

Work order variability (N)

Chemical

Startup inspection (P) Room Humidity (N) Column Capability (C) Purity

Handling (P) RM Supply in Market (N) Nozzle type (C)

Purification Method (P) Shipping Methods (C) Temp controller (C)

Data collection/feedback

(P)

Methods Mother Nature Equipment

This example of the Cause and Effect Diagram is of chemical purity. Notice how the input variables for

each branch are classified as Controllable, Procedural and Noise.

Cause & Effect Diagram - MINITAB™

Below is a Cause & Effect Diagram for surface flaws. The next few

slides will demonstrate how to create it in MINITAB™.

The Fishbone Diagram shown here for surface flaws was generated in MINITAB™. We will now

review the various steps for creating a Cause and Effect Diagram using the MINITAB™

statistical software package.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

96

Process Discovery

Cause & Effect Diagram - MINITAB™

Open the MINITAB™ Project “Measure Data Sets.mpj” and select the worksheet

Surfaceflaws.mtw.

Open the MINITAB™ worksheet “Surfaceflaws.mtw”.

Take a few moments to study the worksheet. Notice the first 6 columns are the classic bones for a

Fishbone. Each subsequent column is labeled for one of the X’s listed in one of the first six columns

and are the secondary bones

bones.

After you have entered the Labels, click on the first field under the “Causes” column to bring up the

list of branches on the left hand side. Next double-click the first branch name on the left hand side to

move “C1 Man” underneath “Causes”.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

97

Process Discovery

Cause & Effect Diagram - MINITAB™ (cont.)

To continue identifying

the secondary

branches, select the

button, “Sub…” to the

right of the “Label”

column.

Click on the third field

under “Causes” to

bring up the list of

branches on the left

hand side.

Next double-click the

seventh branch name

on the left hand side to

move “C7 Training”

underneath “Causes”

then select “OK” and

repeat for each

remaining sub branch.

In order to adjust the Fishbone Diagram so the main causes titles are

not rolled grab the line with your mouse and move the entire bone.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

98

Process Discovery

Cause & Effect Diagram Exercise

Exercise objective: Create a Fishbone Diagram.

1. Retrieve the high level Process Map for your project

and use it to complete a Fishbone, if possible include

your project team.

Don ’t let the

big one get

away!

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

99

Process Discovery

Overview of Process Mapping

In order to correctly m a na ge a process,

process you

m ust be a ble to describe it in a w a y tha t ca n be

ea sily understood.

– The preferred method for describing a process is

to identify it with a generic name, show the

workflow with a Process Map and describe its

purpose with an operational description.

– The

Th fifirstt activity

ti it off th

the Measure

M Phase

Ph is

i to

t

adequately describe the process under

investigation.

ct

Sta rt Step A Step B Step C St

Step D Fi i h

Finish

e

sp

In

Process Mapping, also called flowcharting, is a technique to visualize the tasks, activities and steps

necessary to produce a product or a service. The preferred method for describing a process is to

identify it with a generic name, show the workflow with a Process Map and describe its purpose with

an operational description

description.

Remember that a process is a blending of inputs to produce some desired output. The intent of each

task, activity and step is to add value, as perceived by the customer, to the product or service we are

producing. You cannot discover if this is the case until you have adequately mapped the process.

There are many reasons for creating a Process Map:

- It helps all process members understand their part in the process and how their process fits into the

bigger picture

picture.

- It describes how activities are performed and how the work effort flows, it is a visual way of standing

above the process and watching how work is done. In fact, process maps can be easily uploaded into

model and simulation software where computers allow you to simulate the process and visually see

how it works.

- It can be used as an aid in training new people.

- It will show you where you can take measurements that will help you to run the process better.

- It will help

p yyou understand where problems

p occur and what some of the causes may y be.

- It leverages other analytical tools by providing a source of data and inputs into these tools.

- It identifies and leads you to many important characteristics you will need as you strive to make

improvements.

Individual maps developed by Process Members form the basis of Process Management. The

individual processes are linked together to see the total effort and flow for meeting business and

customer needs.

In order to improve or to correctly manage a process, you must be able to describe it in a way that

can be easily understood, that is why the first activity of the Measure Phase is to adequately describe

the process under investigation. Process Mapping is the most important and powerful tool you will

use to improve the effectiveness and efficiency of a process.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

100

Process Discovery

Information from Process Mapping

These are more reasons

why Process Mapping is By mapping processes we can identify many important

the most important and characteristics and develop information for other analytical tools:

powerful tool you will

need to solve a problem. 1. Process inputs (X’s)

It has been said that Six 2. Supplier requirements

Sigma is the most 3. Process outputs (Y’s)

efficient problem solving 4. Actual customer needs

methodology

h d l available.

il bl 5

5. All value-added

l dd d andd non-value

l added

dd d process tasks

t k and

d steps

t

This is because work 6. Data collection points

done with one tool sets •Cycle times

up another tool, very little •Defects

information and work is •Inventory levels

wasted. Later you will •Cost of poor quality, etc.

learn to how to further 7. Decision points

use the information and 8. Problems that have immediate fixes

knowledge you gather 9. Process control needs

from Process Mapping.

Process Mapping

There are usually three views

Th

There are usually

ll three

th views

i off a process:

of a process: The first view is

“what you think the process

is” in terms of its size, how

1 2 3 work flows and how well the

process works. In virtually all

What you THINK it is.. What it ACTUALLY is.. What it SHOULD be..

cases the extent and difficulty

of performing the process is

understated.

d t t d

It is not until someone

Process Maps the process

that the full extent and

difficulty is known, and it

virtually is always larger than

what we thought, is more

difficult and it cost more to operate than we realize. It is here that we discover the hidden operations

also. This is the second view: “what the process actually is”.

Then there is the third view: “what it should be”. This is the result of process improvement activities. It

is precisely what you will be doing to the key process you have selected during the weeks between

classes. As a result of your project you will either have created the “what it should be” or will be well

on your way to getting there. In order to find the “what it should be” process, you have to learn

process mapping and literally “walk”

walk the process via a team method to document how it works. This is

a much easier task then you might suspect, as you will learn over the next several lessons.

We will start by reviewing the standard Process Mapping symbols.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

101

Process Discovery

Standard Process Mapping Symbols

Standard symbols for process mapping (available in Microsoft

Office™, Visio™, iGrafx™ , SigmaFlow™ and other products):

A RECTANGLE indicates an A PARALLELAGRAM shows

activity. Statements within that there are data

the rectangle should begin

with a verb

A DIAMOND signifies a decision An ELLIPSE shows the start

point.

i t OOnly

l two

t pathsth emerge from

f and end of the process

a decision point: No and Yes

An ARROW shows the A CIRCLE WITH A LETTER OR

1 NUMBER INSIDE symbolizes

connection and direction

th continuation

the ti ti off a

of flow

flowchart to another page

There may be several interpretations of some of the Process Mapping symbols; however, just

about everyone uses these primary symbols to document processes. As you become more

practiced you will find additional symbols useful, i.e. reports, data storage etc. For now we will

start with just these symbols.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

102

Process Discovery

Process Mapping Levels

Levell 1 – The

L Th Macro

M Process

P Map,

M sometimes

ti called

ll d a

Management level or viewpoint.

Calls

Customer Take Make Cook Pizza Box Deliver Customer

for

Hungry Order Pizza Pizza Correct Pizza Pizza Eats

Order

Level 2 – The Process Map, sometimes called the Worker level or

viewpoint This example is from the perspective of the pizza chef

viewpoint.

Pizza

Dough

No

Take Order Add Place in Observe Check Yes Remove

from Cashier Ingredients Oven Frequently if Done from Oven 1

Start New

Pizza

Scrap

No

Tape

Pizza Place in Put on

1 Correct Box

Order on Delivery Rack

Yes Box

Level 3 – The Micro Process Map, sometimes called the Improvement

level or viewpoint. Similar to a level 2, it will show more steps and tasks

and on it will be various performance data; yields, cycle time, value and

non value added time, defects, etc.

Before Process Mapping starts, you have to learn about the different level of detail on a Process

Map and the different types of Process Maps. Fortunately these have been well categorized and

are easy to understand.

There are three different levels of Process Maps. You will need to use all three levels and you most

likely will use them in order from the macro map to the micro map. The macro map contains the

least level of detail, with increasing detail as you get to the micro map. You should think of and use

the level of Process Maps in a way similar to the way you would use road maps. For example, if

you want to find a country, you look at the world map. If you want to find a city in that country, you

look at the country map. If you want to find a street address in the city, you use a city map. This is

the general rule or approach for using Process Maps.

Thee Macro

ac o Process

ocess Map,ap, what

at is

s called

ca ed tthe

e Level

e e 1 Map,

ap, sshows

o s tthee big

b g picture,

p ctu e, you will use tthis

s to

orient yourself to the way a product or service is created. It will also help you to better see which

major step of the process is most likely related to the problem you have and it will put the various

processes that you are associated with in the context of the larger whole. A Level 1 PFM,

sometimes called the “management” level, is a high-level process map having the following

characteristics:

Combines related activities into one major processing step

Illustrates where/how the process fits into the big picture

Has minimal detail

Illustrates only major process steps

Can be completed with an understanding of general process steps and the

purpose/objective of the process

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

103

Process Discovery

Process Mapping Levels (cont.)

The next level is generically called the Process Map

Map. You will refer to it as a Level 2 Map and it

identifies the major process steps from the workers point of view. In the pizza example above,

these are the steps the pizza chef takes to make, cook and box the pizza for delivery. It gives you

a good idea of what is going on in this process, but could can you fully understand why the

process performs the way it does in terms of efficiency and effectiveness, could you improve the

process with the level of knowledge from this map?

Probably not, you are going to need a Level 3 Map called the Micro Process Map. It is also known

as the improvement view off a process. There is however a lot off value in the Level 2 Map,

because it is helping you to “see” and understand how work gets done, who does it, etc. It is a

necessary stepping stone to arriving at improved performance.

Next we will introduce the four different types of Process Maps. You will want to use different

types of Process Maps, to better help see, understand and communicate the way processes

behave.

Types of Process Maps

The Linear-Flow Process Map There are four

Calls

Customer

Hungry

for

Order

Take

Order

Make

Pizza

Cook

Pizza

Pizza

Correct

Box

Pizza

Deliver

Pizza

Customer

Eats types of Process

M

Maps that

th t you will

ill

As the name states, this diagram shows the process steps in a sequential flow, generally ordered

from an upper left corner of the map towards the right side. use. They are the

The Deployment-Flow or Swim Lane Process Map Linear Flow Map,

the deployment or

Customer

Customer Calls for Customer

Hungry Order Eats

Swim Lane Flow

Map, the S-I-P-0-C

Cashier

Take

Map (pronounced

Order

Pizza Box sipoc) and the

Cook

M k

Make C

Cookk

Pizza Pizza Correct Pizza

Value Stream

Map.

Deliverer

Deliver

Pizza

The value of the Swim Lane map is that is shows you who or which department is responsible for

While they all

the steps in a process. This can provide powerful insights in the way a process performs. A

timeline can be added to show how long it takes each group to perform their work. Also each

show how work

time work moves across a swim lane, there is a “Supplier – Customer” interaction. This is usually

where bottlenecks and queues form.

gets done, they

emphasize

different aspects of process flow and provide you with alternative ways to understand the

behavior of the process so you can do something about it. The Linear Flow Map is the most

traditional and is usually where most start the mapping effort.

The Swim Lane Map adds another dimension of knowledge to the picture of the process: Now

you can see which department area or person is responsible. You can use the various types of

maps in the form of any of the three levels of a Process Map.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

104

Process Discovery

Process Maps – Examples for Different Processes

L in e a r P r o c e s s M a p fo r D o o r M a n u fa c tu r in g

B e g in P r e p d o o r s In s p e c t P r e -c le a n in g A

R e tu r n

fo r

r e w o r k

M a r k f o r d o o r

In s ta ll in to In s p e c t

A w o r k jig

L ig h t s a n d in g

f in is h

h a n d le B

d r illin g

R e w o r k

D e - b u r r a n d A p p ly p a r t M o v e t o

B D r ill h o le s

s m o o th h o le n u m b e r fin is h in g

C

S c r a t c h F in a l A p p ly s t a in

C In s p e c t In s p e c t E n d

r e p a ir c le a n in g a n d d r y

S c r a p

S w im L a n e P r o c e s s M a p fo r C a p ita l E q u ip

P r e p a r e

Business

D e fin e p a p e r w o r k R e v ie w &

R e c e iv e &

Unit

( C A A R & a p p r o v e

N e e d s in s t a lla tio n C A A R

u s e

r e q u e s t )

R e v ie w &

C o n f ig u r e

I.T.

a p p r o v e

& in s t a ll

s t a n d a r d

Finance

R e v ie w &

Is s u e

a p p r o v e

p a y m e n t

C A A R

Corporate

Top Mgt/

R e v ie w &

a p p r o v e

C A A R

Procurement

A c q u ir e

e q u ip m e n t

S u p p lie r S u p p lie r

Supplier

S h ip s P a id

2 1 d a y s 6 d a y s 1 5 d a y s 5 d a y s 1 7 d a y s 7 d a y s 7 1 d a y s 5 0 d a y s

Types of Process Maps

The SIPOC diagram is The SIPOC “Supplier – Input – Process – Output – Customer”

especially useful after Process Map

you have been able to Suppliers Inputs Process O utputs Custom ers Requirem ents

construct either a Level 1 r ATT Phones

Ph r Pi

Pizza type r See Below r Pi

Price r C k

Cook r C

Complete

l callll < 3 min

i

r r r r Accounting r

or Level 2 Map because

Office Depot Size Order confirmation Order to Cook < 1 minute

r TI Calculators r Quantity r Bake order r Complete bake order

it facilitates your r N EC Cash Register r

r

Extra Toppings

Special orders

r

r

Data on cycle time

Order rate data

r

r

Correct bake order

Correct address

gathering of other r Drink types & quantities r Order transaction r Correct Price

r Other products r Delivery info

pertinent data that is r Phone number

affecting the process in a r

r

Address

N ame

systematic way. It will r Time, day and date

r

help you to better see

Volume

and understand all of the Level 1 Process M a p for Custom er O rder Process

influences affecting the

Call for Answer W rite Confirm Sets Address Order to

behavior and an Order Phone Order Order Price & Phone Cook

performance of the

process. The SIPOC diagram is especially useful after you have been able to construct

either a Level 1 or Level 2 Map because it facilitates your gathering of other

You may also add a pertinent data that is affecting the process in a systematic way.

requirements section

to both the supplier side and the customer side to capture the expectations for the inputs and

the outputs of the process. Doing a SIPOC is a great building block to creating the Level 3

Micro Process Map. The two really compliment each other and give you the power to make

improvements to the process.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

105

Process Discovery

Types of Process Maps

The Value Stream Map p is a The Value Stream Map

specialized map that helps Process Steps

Log Route Disposition Cut Check Mail Delivery

you to understand -Computer

-1 Person

-Department

Assignments

-Guidelines

-1 Person

-Computer

-Printer

-Envelops

-Postage

Size of work queue or I I I I I

numerous performance inventory

-1 Person -1 Person -1 Person

metrics associated primarily Process Step

4,300 C/T = 15 sec

Uptime = 0.90

7,000 C/T = 75 sec

Uptime = 0.95

1,700 C/T = 255 sec

Uptime = 0.95

2,450 C/T = 15 sec

Uptime = 0.85

1,840 C/T = 100 sec

Uptime = 0.90

Hours = 8 Hours = 8 Hours = 8 Hours = 8 Hours = 8

with the speed of the Time Parameters Breaks = 0.5

Hours

Breaks = 0.5

Hours

Breaks = 0.5

Hours

Breaks = 0.5

Hours

Breaks = 0.5

Hours

process, but has many

Available =6.75 Available =7.13 Available =7.13 Available =6.38 Available =6.75

Sec. Sec. Sec. Sec. Sec.

Avail. = 24,300 Avail. = 25,650 Avail. = 25,650 Avail. = 22,950 Avail. = 24,300

other important data. While Step Processing Time

Days of Work in 15 sec 75 sec 255 sec 15 sec 100 sec

thi P

this Process M Map level

l l is

i att queue 2.65 days 20.47 days 16.9 days 1.60 days 7.57 days

the macro level, the Value Process Performance

Metrics IPY = 0.92 IPY = .94 IPY = .59 IPY = .96 IPY = .96

Stream Map provides you a Defects = 0.08

RTY = .92

Defects = .06

RTY = .86

Defects = .41

RTY = .51

Defects = .04

RTY = .49

Defects = .04

RTY = .47

lot of detailed performance

Rework = 4.0% Rework = 0.0 Rework = 10% Rework = 0.0 Rework = 0.0

Material Yield = .96 Material Yield = .94 Material Yield = .69 Material Yield = .96 Material Yield = .96

Scrap = 0.0% Scrap = 0.0% Scrap = 0.0% Scrap = 0.0% Scrap = 4.0%

data for the major steps of Aggregate Performance

Metrics

the process. It is great for Cum Material Yield = .96 X .94 X .69 X .96 X .96 = .57 RTY = .92 X .94 X .59 X .96 X .96 = .47

finding bottlenecks in the The Value Stream Map is a very powerful technique to understand the

process.

p velocity of process transactions, queue levels and value added ratios in

both manufacturing and non-manufacturing processes.

Process Mapping Exercise – Going to Work

The purpose of this exercise is to develop a Level 1 Macro, Linear

Process Flow Map and then convert this map to a Swim Lane Map.

Read the following background for the exercise: You have been concerned

about your ability to arrive at work on time and also the amount of time it takes

from the time your alarm goes off until you arrive at work. To help you better

understand both the variation in arrival times and the total time,, you

y decide to

create a Level 1 Macro Process Map. For purposes of this exercise, the start is

when your alarm goes off the first time and the end is when you arrive at your

work station.

Task 1 – Mentally think about the various tasks and activities that you routinely

do from the defined start to the end points of the exercise.

Task 2 – Using a pencil and paper create a linear process map at the macro

level but with enough detail that you can see all the major steps of your

level,

process.

Task 3 – From the Linear Process Map, create a swim lane style Process Map.

For the lanes you may use the different phases of your process, such as the

wake up phase, getting prepared, driving, etc.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

106

Process Discovery

A Process Map of Process Mapping

Process Mapping

follows a general Select the process

Create the Level 2 Create a Level 3

PFM PFM

order, but sometimes

you may find it

necessary, even Determine Add Performance

approach to map Perform SIPOC

advisable to deviate the process data

somewhat. However,

you will find this a

good path to follow Complete Level 1

PFM worksheet

Identify all X’s and Identify VA/NVA

Y’s steps

as it has proven itself

to generate

significant results. Identify customer

Create Level 1 PFM

requirements

On the lessons

ahead we will always

show you where you Define the scope Identify supplier

for the Level 2 PFM requirements

are at in this

sequence of tasks

for Process Mapping. Before we begin our Process Mapping we will first start you off with how to

determine the approach to mapping the process.

Basically there are two approaches: the individual and the team approach.

Process Mapping Approach

Select the Using the Individual Approach

process 1. Start with the Level 1 Macro Process Map.

2. Meet with process owner(s) / manager(s). Create a

Level 1 Map and obtain approval to interview

Determine

approach to process members.

map the 3 Starting with the beginning of the process

3. process, pretend

process

you are the product or service flowing through the

process, interview to gather information.

Complete

Level 1 4. As the interview progress, assemble the data into a

PFM Level 2 PFM.

worksheet

5. Verify the accuracy of the Level 2 PFM with the

individuals who provided input.

Create

Level 1

6. Update the Level 2 PFM as needed.

PFM

Using the Team Approach

Define the

scope for 1. Follow the Team Approach to Process Mapping

the Level 2

PFM

If you decide to do the individual approach, here are a few key factors: You must pretend that you are the

product or service flowing through the process and you are trying to “experience” all of the tasks that

h

happen th

through

h the

th various

i steps.

t

You must start by talking to the manager of the area and/or the process owner. This is where you will

develop the Level 1 Macro Process Map. While you are talking to him, you will need to receive permission

to talk to the various members of the process in order to get the detailed information you need.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

107

Process Discovery

Process Mapping Approach

Process Mapping

P M i

works best with a Select the Using the Team Approach

team approach. The process 1. Start with the Level 1 Macro Process Map.

2. Meet with process owner(s) / manager(s). Create a

logistics of Level 1 Map and obtain approval to call a process

Determine

performing the approach to mapping meeting with process members (See team

mapping a map the workshop instructions for details on running the

process meeting).

somewhat different, 3. Bring key members of the process into the process

Complete

but it overall it takes Level 1 flow workshop. If the process is large in scope, hold

less time, the quality PFM individual workshops for each subsection of the

worksheet total process. Start with the beginning steps.

of the output is Organize meeting to use the “post-it note approach

higher and you will Create to gather individual tasks and activities, based on

Level 1 the macro map, that comprise the process.

have more “buy-in” PFM 4. Immediately assemble the information that has

into the results. Input been provided into a Process Map.

should come from Define the 5. Verify the PFM by discussing it with process owners

individuals familiar scope for and by observing the actual process from beginning

the Level 2

PFM to end.

with

ith allll stages

t off

process.

Where appropriate the team should include line individuals, supervisors, design engineers, process

engineers, process technicians, maintenance, etc. The team process mapping workshop is where it

all comes together.

Select the The Team Process Mapping Workshop

process 1. Add to and agree on Macro Process Map.

2. Using 8.5 X 11 paper for each macro process step,

Determine tape the process to the wall in a linear style.

approach to 3. Process Members then list all known process tasks

map the that they do on a Post-it note, one process task per

process

note.

• Include the actual time spent to perform each

Complete

Level 1 activity, do not include any wait time or queue

PFM time.

worksheet • List any known performance data that describe

the quality of the task.

Create 4. Place the post-it notes on the wall under the

Level 1 appropriate macro step in the order of the work flow.

PFM 5. Review process with whole group, add additional

information and close meeting.

Define the 6. Immediately consolidate information into a Level 2

scope for Process Map.

the Level 2 7. You will still have to verify the map by walking the

PFM

process

process.

In summary, after adding to and agreeing to the Macro Process Map, the team process mapping

approach is performed using multiple post-it notes where each person writes one task per note and,

when finished, place them onto a wall which contains a large scale Macro Process Map.

This is a very fast way to get a lot of information including how long it takes to do a particular task.

Using the Value Stream Analysis techniques which you will study laterlater, you will use this data to

improve the process. We will now discuss the development of the various levels of Process Mapping.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

108

Process Discovery

Steps in Generating a Level 1 PFM

You may y recall that the

preferred method for

describing a process is to Select the Creating a Level 1 PFM

process

identify it with a generic 1. Identify a generic name for the process:

For instance: “Customer order process”

name, describe its purpose 2. Identify the beginning and ending steps of the process:

Determine

with an operational approach to Beginning - customer calls in. Ending – baked pizza given to

map the operations

description and show the process

3. Describe the primary purpose and objective of the process

workflow with a process (operational definition):

Complete

p

map. When

Wh developing

d l i a Level 1 Th purpose off th

The the process is

i to

t obtain

bt i telephone

t l h orders

d for

f

PFM pizzas, sell additional products if possible, let the customer

Macro Process Map, always worksheet know the price and approximate delivery time, provide an

add one process step in front accurate cook order, log the time and immediately give it to the

pizza cooker.

Create

of and behind the area you Level 1 4. Mentally “walk” through the major steps of the process and

believe contains your PFM write them down:

Receive the order via phone call from the customer, calculate

problem as a minimum. To the price, create a build order and provide the order to

Define the

aid you in your start, we have scope for

operations

the Level 2 5. Use standard flowcharting symbols to order and to illustrate

provided yyou with a checklist

p PFM the flow of the major process steps.

steps

or worksheet. You may

acquire this data from

your own knowledge and/or with the interviews you do with the managers / process owners. Once you

have this data, and you should do this before drawing maps, you will be well positioned to

communicate with others and you will be much more confident as you proceed.

A Macro Process Map can be useful when reporting project status to management. A macro-map can

show the scope of the project

project, so management can adjust their expectations accordingly.

accordingly Remember

Remember,

only major process steps are included. For example, a step listed as “Plating” in a manufacturing

Macro Process Map, might actually consists of many steps: pre-clean, anodic cleaning, cathodic

activation, pre-plate, electro-deposition, reverse-plate, rinse and spin-dry, etc. The plating step in the

macro-map will then be detailed in the Level 2 Process Map.

Exercise – Generate a Level 1 PFM

Th purpose off thi

The this exercise

i iis to

t ddevelop

l aL

Levell 1 Li

Linear

Select the Process Flow Map for the key process you have selected as your

process workplace assignment.

Read the following background for the exercise: You will use

Determine your selected key process for this exercise (if more than one

approach to person in the class is part of the same process you may do it as a

map the

process small group). You may not have all the pertinent detail to correctly

put together the Process Map, that is ok, do the best you can.

Complete This will give you a starting template when you go back to do your

Level 1 workplace assignment. In this exercise you may use the Level 1

PFM

worksheet PFM worksheet on the next page as an example.

Create Task 1 – Identify a generic name for the process.

Level 1 Task 2 - Identify the beginning and ending steps of the process.

PFM Task 3 - Describe the primary purpose and objective of the

process ((operational

p p definition).

)

Define the Task 4 - Mentally “walk” through the major steps of the process

scope for and write them down.

the Level 2

PFM Task 5 - Use standard flowcharting symbols to order and to

illustrate the flow of the major process steps.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

109

Process Discovery

Exercise – Generate a Level 1 PFM (cont.)

If necessary,

necessary you may look

at the example for the Pizza 1. Identify a generic name for the process:

order entry process.

2. Identify the beginning and ending steps of the process:

3. Describe the primary purpose and objective of the process

(operational definition):

4. Mentally “walk” through the major steps of the process and write

them down:

5. Use standard flowcharting symbols to order and to illustrate the flow

off the

h major

j process steps on a separate sheet

h off paper.

Exercise – Generate a Level 1 PFM Solution

1

1. Identify a generic name for the process:

(I.E. customer order process).

• Identify the beginning and ending steps of the process:

(beginning - customer calls in, ending – pizza order given to the chef).

• Describe the primary purpose and objective of the process (operational

definition):

) ((The p

purpose

p of the p

process is to obtain telephone

p orders for

Pizzas, sell additional products if possible, let the customer know the

price and approximate delivery time, provide an accurate cook order, log

the time and immediately give it to the pizza cooker).

• Mentally “walk” through the major steps of the process and write them

down:

(Receive the order via phone call from the customer, calculate the price,

create a build order and provide the order to the chef).

• Use standard flowcharting symbols to order and to illustrate the flow of

the major process steps on a separate sheet of paper.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

110

Process Discovery

Defining the Scope of Level 2 PFM

With a completed Level 1

PFM, you can now “see” Customer Order Process Customer Order Process

where you have to go to get Select the Customer Calls for Take Make Cook Box Deliver Customer

more detailed information. process Hungry Order Order Pizza Pizza Pizza Pizza Eats

You will have the basis for

a Level 2 Process Map.

Determine Pizza

The improvements are in approach to Dough

th details.

the d t il If th

the efficiency

ffi i map the No

process Take Order Add Place in Observe Check Yes Remove

or effectiveness of the from Cashier Ingredients Oven Frequently if Done from Oven 1

process could be

significantly improved by a Complete Start New

Pizza

Level 1

broad summary analysis, PFM Scrap

the improvement would be worksheet No

done already. If you map 1 Pizza Place in

Tape

Order on

Put on

Delivery Rack

the process at an Correct

Yes

Box

Box

Create

actionable level, you can

Level 1

identify the source of PFM The rules for determining the Level 2 Process Map scope:

inefficiencies and defects.

But you need to be careful • From your Macro Process Map, select the area which represents your

about mapping too little an problem.

Define the

area and missing your scope for • Map this area at a Level 2.

problem cause, or mapping the Level 2

PFM • Start and end at natural startingg and stopping

pp g ppoints for a pprocess, in

t large

to l an area in

i detail,

d t il other words you have the complete associated process.

thereby wasting your

valuable time.

The rules for determining the

scope of the Level 2 Process Crea te the

Map: Level 2 PFM Pizza

Dough

a)) Look at your

y Macro Process No

Map, select the area which Take Order Add Place in Observe Check Yes Remove

Perform 1

represents your problem. SIPO C

from Cashier Ingredients Oven Frequently if Done from Oven

b) Map this area at a Level 2.

Start New

c) Start and end at natural Pizza

Identify a ll

starting and stopping points for X ’s a nd Y’s Scrap

a process, in other words you No

have the complete associated Identify

1 Pizza

Correct

Place in

Box

Tape

Order on

Put on

Delivery Rack

Yes Box

process

process. customer

t

requirements

When you perform the process

mapping workshop or do the Identify

supplier

individual interviews, you will requirements

determine how the various tasks

and activities form a complete step. Do not worry about precisely defining the steps, it is not an exact

science, common sense will prevail. If you have done a process mapping workshop, which you will

remember we highly recommended, you will actually have a lot of the data for the Level 3 Micro

Process Map. You will now perform a SIPOC and, with the other data you already have, it will

position you for about 70 percent to 80 percent of the details you will need for the Level 3 Process

Map.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

111

Process Discovery

Building a SIPOC

SIPOC diagram for customer-order process:

Create the Suppliers Inputs Process Outputs Customers Requirements

Level 2 PFM r ATT Phones r Pizza type r See Below r Price r Cook r Complete call < 3 min

r Office Depot r Size r Order confirmation r Accounting r Order to Cook < 1 minute

r TI Calculators r Quantity r Bake order r Complete bake order

r NEC Cash Register r Extra Toppings r Data on cycle time r Correct bake order

r Special orders r Order rate data r Correct address

r Drink types & quantities r Order transaction r Correct Price

Perform

r Other products r Delivery info

SIPOC r Phone number

r Address

r Name

r Time da

Time, day and date

r Volume

Identify all X’s

and Y’s

Identify Customer Order:

customer

requirements

Level 1 process flow diagram

Call for Answer Write Confirm Sets Address Order to

an Order Phone Order Order Price & Phone Cook

Identify

y

supplier

requirements

The tool name prompts the team to consider the suppliers (the 'S' in SIPOC) of your process, the

inputs (the 'I') to the process, the process (the 'P') your team is improving, the outputs (the 'O') of

the process and the customers (the 'C') that receive the process outputs.

Requirements of the customers can be appended to the end of the SIPOC for further detail and

requirements are easily added for the suppliers as well.

The SIPOC tool is particularly useful in identifying:

Who supplies inputs to the process?

What are all of the inputs to the process we are aware of? (Later in the DMAIC methodology

you will use other tools which will find still more inputs, remember Y=f(X) and if we are going to

improve Y, we are going to have to find all the X’s.

What specifications are placed on the inputs?

What are all of the outputs of the process?

Who are the true customers of the process?

What are the requirements of the customers?

You can actually begin with the Level 1 PFM that has 4 to 8 high-level steps, but a Level 2 PFM is even

of more value. Creating a SIPOC with a process mapping team, again the recommended method is a

wall exercise similar to your other process mapping workshop. Create an area that will allow the team to

place post-it

post it note additions to the 8.5

8 5 X 11 sheets with the letters S,

S I,

I P,

P O and C on them with a copy of

the Process Map below the sheet with the letter P on it.

Hold a process flow workshop with key members. (Note: If the process is large in scope, hold an

individual workshop for each subsection of the total process, starting with the beginning steps).

The preferred order of the steps is as follows:

1. Identify the outputs of this overall process.

2. Identify the customers who will receive the outputs of the process.

3. Identify

f customers’ preliminary requirements

4. Identify the inputs required for the process.

5. Identify suppliers of the required inputs that are necessary for the process to function.

6. Identify the preliminary requirements of the inputs for the process to function properly.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

112

Process Discovery

Identifying Customer Requirements

You are now ready to

identify the customer

requirements for the Create the

Level 2 PFM

outputs you have defined. PROCESS OUTPUT

Process Name

Operational

Definition

IDENTIFICATION AND ANALYSIS

Customer requirements, 1

Output Data

3 4 5 6 7

Requirements Data

8 9 10

Measurement Data

11 12

Value Data

13

General Data/Information

called VOC, determine

Customer (Name) Metric Measurement VA

System (How is it Frequency of or

Perform Process Output - Name (Y) Internal External Metric LSL Target USL Measured) Measurement Performance Level Data NVA Comments

what are and are not SIPOC

acceptable for each of the

outputs. You may find that

some of the outputs do not Identify all X’s

and Y’s

have requirements or

specifications. For a well

managed process, this is Identify

customer

not acceptable. If this is the requirements

case, you must

ask/negotiate with the

Identify

customer as to what is supplier

acceptable. requirements

There is a technique for

determining the validity of customer and supplier requirements. It is called “RUMBA” standing for:

Reasonable, Understandable, Measurable, Believable and Achievable. If a requirement cannot meet all of

these characteristics, then it is not a valid requirement , hence the word negotiation. We have included the

process for validating

p g customer requirements

q at the end of this lesson.

The Excel spreadsheet is somewhat self explanatory. You will use a similar form for identifying the

supplier requirements. Start by writing in the process name followed by the process operational

definition. The operational definition is a short paragraph which states why the process exists, what it

does and what its value proposition is. Always take sufficient time to write this such that anyone who

reads it will be able to understand the process. Then list each of the outputs, the Y’s, and write in the

customer’s name who receives this output, categorized as an internal or external customer.

Next are the requirements data. To specify and measure something, it must have a unit of measure;

called a metric. As an example, the metric for the speed of your car is miles per hour, for your weight it is

pounds, for time it is hours or minutes and so on. You may know what the LSL and USL are but you may

not have a target value. A target is the value the customer prefers all the output to be centered at;

essentially, the average of the distribution. Sometimes it is stated as “1 hour +/- 5 minutes”. One hour is

the target, the LSL is 55 minutes and the USL is 65 minutes. A target may not be specified by the

customer; if not, put in what the average would be. You will want to minimize the variation from this

value.

value

You will learn more about measurement, but for now you must know that if something is required, you

must have a way to measure it as specified in column 9. Column 10 is how often the measurement is

made and column 11 is the current value for the measurement data. Column 12 is for identifying if this is

a value or non value added activity; more on that later. And finally column 13 is for any comments you

want to make about the output.

You will

Yo ill come back to this form and rank the significance of the o

outputs

tp ts in terms of importance to identif

identify

the CTQ’s.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

113

Process Discovery

Identifying Supplier Requirements

The supplier input or

process input identification

and analysis form is nearly Create the

Level 2 PFM

identical to the output form PROCESS INPUT

Process Name

Operational

Definition

just covered. Now you are IDENTIFICATION AND ANALYSIS

1 2

Input Data

3 4 5 6 7

Requirements Data

8 9 10

Measurement Data

11

Value Data

12

General Data/Information

the customer, you will Perform Controlled (C)

Process Input- Name (X) Noise (N)

Supplier (Name)

Internal External Metric LSL

Metric

Target USL

Measurement

System (How is it Frequency of Performance

Measured) Measurement Level Data

NV

or

NVA Comments

specify what is required of SIPOC

your suppliers for your

process to work correctly;

Identify all X’s

remember RUMBA – the and Y’s

same rules apply.

You will notice a new Identify

customer

parameter introduced in requirements

column 2. It asks if the input

is a controlled input or an

Identify

uncontrolled input (noise). supplier

requirements

The next topic will discuss

the meaning of these terms.

Later you will come back to this form and rank the importance of the inputs to the success of your

process and eventually you will have found the Critical X’s.

Controllable vs. Noise Inputs

For any process or process Screens in Place

step input, there are two Procedural Oven Clean

Ingredients prepared

Inputs

primary types of inputs:

Controllable - we can exert

influence over them

Uncontrollable - they behave Controllable Key Process

Inputs Process Outputs

as they want to within some

reasonable boundaries. Correct Ingredients

Properly Cooked

Procedural - A standardized Room Temp Pizza Size Hot Pizza >140 deg

set of activities leading to Moisture Content

Ingredient Variation

Noise Inputs Ingredient Types/Mixes

Volume

readiness of a step.

Compliance to GAAP

Every input can be either:

(Generally Accepted Controllable (C) - Inputs can be adjusted or controlled while the process is running (e.g., speed,

Accounting Principals). feed rate, temperature, and pressure)

Procedural (P) - Inputs can be adjusted or controlled while the process is running (e.g., speed,

feed rate, temperature, and pressure)

However, even with the inputs Noise (N) - Things we don’t think we can control, we are unaware of or see, too expensive or too

we define as controllable, we difficult to control (e.g., ambient temperature, humidity, individual)

never exert complete control.

We can control an input within the limits of its natural variation, but it will vary on its own based on

its distributional shape - as you have previously learned. You choose to control certain inputs

because you either know or believe they have an effect on the outcome of the process, it is

inexpensive to do, so controlling it “makes us feel better” or there once was a problem and the

solution (right or wrong) was to exert control over some input.

Certified Lean Six Sigma Black Belt Book Copyright OpenSourceSixSigma.com

114

Process Discovery

Controllable vs. Noise Inputs (cont.)

You choose to not control some inputs because you think you cannot control them them, you either know or

believe they don’t have much affect on the output, you think it is not cost justified or you just don’t

know these inputs even exist. Yes, that’s right, you don’t know they are having an affect on the output.

For example, what effect does ambient noise or temperature have on your ability to be attentive or

productive, etc?

It is important to distinguish which category an input falls into. You know through Y=f(X), that if it is a

Critical X, by definition, that you must control it. Also if you believe that an input is or needs to be

controlled then you have automatically implied there are requirements placed on it and that it must be

controlled,

measured. You must always think and ask whether an input is or should be controlled or if it is

uncontrolled.

Exercise – Supplier Requirements

The purpose of this exercise is to identify the requirements for the

Create the

suppliers to the key process you have selected as your workplace

Level 2 PFM assignment.

Read the following background for the exercise: You will use

Perform your selected key process for this exercise (if more than one

SIPOC

person in the class is part of the same process you may do it as a

small ggroup).

p) You may y not have all the p

pertinent detail to correctly

y

identify all supplier requirements, that is ok, do the best you can.

Identify all X’s This will give you a starting template when you go back to do your

and Y’s

workplace assignment. Use the process input identification and

analysis form for this exercise.

Identify