WTL_MegaMapOfML

Uploaded by tominetorgamer

[Figure: WTL Mega Map of ML (luden.io/wtl), a poster that maps machine learning methods and architectures to their application areas. Colors: methods and architecture; application area. The labels recoverable from the extracted text are grouped below by topic.]

- Clustering: K-means, soft K-means, spectral, agglomerative, hierarchical, graph-based, DBSCAN, GMM.
- Classification and regression: logistic regression (sigmoid), SVM (linear kernel, kernel SVM, maximum margin, soft margin), KNN, decision tree (Gini impurity), random forest, regression.
- Dimension reduction: PCA, kernel PCA, t-SNE. Association.
- Ensembles: bagging, boosting, stacking, random forest, isolation forest, XGBoost, CatBoost, LightGBM.
- Learning setups and evaluation: supervised, unsupervised, semi-supervised, one-shot and similarity learning; train/test split, cross validation, overfitting, regularization (L1, L2); loss functions MAE, MSE, RMSE.
- Bayesian methods: Bayes' theorem $P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$, MCMC, EM algorithm, variational methods.
- Time series: ARMA, ARIMA.
- NLP: rule-based versus machine-learning-based approaches; chat bots (ELIZA), question answering systems, machine translation (Google Translate, Yandex Translate), voice assistants (Siri, Cortana, Alisa), text to speech, speech recognition, sentiment analysis, word sense disambiguation, named entity recognition, relationship extraction, recognizing textual entailment, part-of-speech tagging, lemmatization, parsing, coreference resolution, discourse analysis, automatic summarization, text generation, document classification, terminology extraction, grammar induction; text representations: bag of words, N-grams, TF-IDF, Word2vec, embeddings, LDA.
- Other applications: search result ranking, recommendation systems, verification of authorship, anomaly detection, security, bioinformatics (DNA nucleotides, hydrogen bonds, molecule generation), graphs.
- Neural networks: input, dense and output layers; gradient descent (stochastic, mini-batch), Adam; convolutional networks (feature maps, max pooling, batch norm, AlexNet, VGGNet, Inception net, ResNet, capsule networks); recurrent networks (LSTM, GRU, seq2seq encoder-decoder); attention (soft attention, self attention); autoencoders and variational autoencoders; PixelCNN; GANs (generator and discriminator built from deconvolutional and deep convolutional networks, Wasserstein GAN, StackGAN, conditional GAN).
- Computer vision: handwriting recognition (MNIST), image classification (ImageNet), single and multiple object detection, object localization, face recognition (FindFace), emotion recognition, disease and cancer detection from images, image description generation, data compression, style transfer (Prisma), semantic and instance segmentation, 2D to 3D, panoramas, image generation, self-driving cars, DeepFakes; classic features: SIFT, DoG, Gaussian pyramids.
- Reinforcement learning: environment, state, action, reward, sparse rewards, exploration vs exploitation, MDP, POMDP, domain knowledge; value-based methods (Q-learning, SARSA, DQN, double DQN, dueling DQN, prioritized replay, C51, Rainbow); policy-based methods (REINFORCE, crossentropy method, actor-critic, A2C, A3C, ACKTR); MCTS, self play, HRL; Gym environments (CartPole, MountainCar, LunarLander); robotics.
- Game bots: chess, 1997, Kasparov vs Deep Blue 2.5 : 3.5; Go, 2016, Lee Sedol vs AlphaGo 1:4; Dota 2, 2017, Dendi vs bot 0:2; StarCraft II, 2019, MaNa vs AlphaStar 1:5.
- Metaheuristic algorithms: simulated annealing, genetic algorithms, heuristic methods.

ELIZA chat-bot panel:

ELIZA > {PLEASE TYPE IN ALL CAPS} WHAT'S YOUR NAME DEAR?
CAT > MEOW
ELIZA > HI. I'M ELIZA, WHAT DO YOU WANT TO TALK ABOUT?
CAT > MEOW
ELIZA > WHAT HAPPENED?
CAT > MEEEEEOOOOOOOOOOOOOW
ELIZA > REPEAT PLEASE
CAT > MEOW!
ELIZA > BYE AND KEEP IN TOUCH
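The map quotes Bayes' theorem under Bayesian methods. Below is a minimal sketch of applying it, assuming a binary event A and expanding the denominator P(B) with the law of total probability. The function name `posterior` and the numbers (1% prior, 90% true-positive rate, 5% false-positive rate) are hypothetical, chosen only to illustrate the arithmetic.

```python
def posterior(prior_a: float, p_b_given_a: float, p_b_given_not_a: float) -> float:
    """Return P(A|B) = P(B|A)P(A) / P(B) for a binary event A.

    P(B) is expanded with the law of total probability:
    P(B) = P(B|A)P(A) + P(B|not A)(1 - P(A)).
    """
    p_b = p_b_given_a * prior_a + p_b_given_not_a * (1.0 - prior_a)
    return p_b_given_a * prior_a / p_b


# Hypothetical inputs: 1% prior, 90% true-positive rate, 5% false-positive rate.
p = posterior(prior_a=0.01, p_b_given_a=0.9, p_b_given_not_a=0.05)
print(f"P(A|B) = {p:.3f}")  # prints "P(A|B) = 0.154"
```

With these inputs the posterior is only about 0.154: when the prior is small, even a fairly accurate test produces a modest posterior.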
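The map lists MAE, MSE and RMSE as loss functions. A plain-Python sketch of the three regression metrics follows; the helper names and the sample values are illustrative and are not taken from the map.

```python
import math
from typing import Sequence


def _validate(y_true: Sequence[float], y_pred: Sequence[float]) -> None:
    # Both sequences must be non-empty and of equal length.
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("y_true and y_pred must be non-empty and the same length")


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error: mean of |y - y_hat|."""
    _validate(y_true, y_pred)
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean squared error: mean of (y - y_hat)^2."""
    _validate(y_true, y_pred)
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean squared error: sqrt(MSE), expressed in the units of y."""
    return math.sqrt(mse(y_true, y_pred))


# Illustrative sample data (hypothetical).
y_true = [3.0, 5.0, 2.0, 7.0]
y_pred = [2.5, 5.0, 4.0, 8.0]
print(mae(y_true, y_pred), mse(y_true, y_pred), rmse(y_true, y_pred))
```

For the sample data MAE is 0.875, MSE is 1.3125 and RMSE is roughly 1.146. RMSE stays in the units of the target, while squaring makes MSE and RMSE penalize large errors more heavily than MAE.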
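The decision tree on the map is annotated with Gini impurity. Below is a minimal sketch, assuming a CART-style binary split that is scored by the size-weighted Gini impurity of its two children. The function names and the yes/no labels are hypothetical, and treating an empty node as pure is a convention assumed here.

```python
from collections import Counter
from typing import Hashable, Sequence


def gini_impurity(labels: Sequence[Hashable]) -> float:
    """Gini impurity of a node: 1 - sum_k p_k^2, where p_k is the share of class k."""
    n = len(labels)
    if n == 0:
        return 0.0  # assumed convention: an empty node counts as pure
    counts = Counter(labels)
    return 1.0 - sum((c / n) ** 2 for c in counts.values())


def split_impurity(left: Sequence[Hashable], right: Sequence[Hashable]) -> float:
    """Size-weighted Gini impurity of a binary split (lower is better)."""
    n = len(left) + len(right)
    return (len(left) * gini_impurity(left) + len(right) * gini_impurity(right)) / n


# Hypothetical labels for a parent node and one candidate split.
parent = ["yes", "yes", "no", "no"]
print(gini_impurity(parent))                          # impurity of the mixed parent
print(split_impurity(["yes", "yes"], ["no", "no"]))   # impurity after a clean split
```

A 50/50 parent node scores 0.5 and a split that separates the classes perfectly scores 0.0, so a tree that minimizes weighted impurity would choose that split.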

You might also like

- Philip Bliss - It Is Well - Arranged by Clyde Davids (Piano)Document3 pagesPhilip Bliss - It Is Well - Arranged by Clyde Davids (Piano)Balamaze IsaiahNo ratings yet

- Torso GuideDocument373 pagesTorso GuideMonica PatracutaNo ratings yet

- Platform ADocument1 pagePlatform ACosmescu AnaNo ratings yet

- 13-Alignment Plan &profile KM 40-50Document1 page13-Alignment Plan &profile KM 40-50Tamrayehu KuremaNo ratings yet

- Eine Kleine Nachtmusik: Reduccion Al PianoDocument2 pagesEine Kleine Nachtmusik: Reduccion Al PianoPedroo Pianista431100% (1)

- Ahw 10 103500 Ea 2373 00053 0002 Noy0000y0812 CH 02Document8 pagesAhw 10 103500 Ea 2373 00053 0002 Noy0000y0812 CH 02Parag Lalit SoniNo ratings yet

- Item Specification Table: ElementDocument4 pagesItem Specification Table: ElementNur SyahidaNo ratings yet

- OpAmps EquationsDocument1 pageOpAmps EquationsTelmo MiguelNo ratings yet

- K.380 Domenico ScarlattiDocument4 pagesK.380 Domenico ScarlattiInner West Music CollegeNo ratings yet

- EM-POSTO DE COMBUSTÍVEL-29.09.16.prancha 1 PDFDocument1 pageEM-POSTO DE COMBUSTÍVEL-29.09.16.prancha 1 PDFMarcusFragosoNo ratings yet

- Blacked Out-Piano LeadDocument2 pagesBlacked Out-Piano LeadNikita KiriuchinNo ratings yet

- Marcha Nupcial (Richard Wagner) - PianoDocument2 pagesMarcha Nupcial (Richard Wagner) - Pianofernando zunigaNo ratings yet

- To Zanarkand - Final Fantasy X - 2001Document3 pagesTo Zanarkand - Final Fantasy X - 2001m.campos3494No ratings yet

- Intersection DrawingDocument1 pageIntersection DrawingLokesh K. SoniNo ratings yet

- Piano PDFDocument2 pagesPiano PDFAnonymous QpW3abesu9No ratings yet

- Mi Niña Bonita... CompletaDocument10 pagesMi Niña Bonita... CompletaEL CHAMO SOY YO Ke'Mambo TípicoNo ratings yet

- Piano PDFDocument2 pagesPiano PDFLuis Alberto Guerra ZeaNo ratings yet

- Piano PDFDocument2 pagesPiano PDFAnonymous QpW3abesu9No ratings yet

- Piano PDFDocument2 pagesPiano PDFCarlos VasquezNo ratings yet

- Auo t315xw02 vvk0 CBSB T-Con SCH PDFDocument11 pagesAuo t315xw02 vvk0 CBSB T-Con SCH PDFGiancarloRichardRivadeneyraMirandaNo ratings yet

- AZPT Segments and Connectors-Dec-2017Document1 pageAZPT Segments and Connectors-Dec-2017john warningNo ratings yet

- Carol: Richard Storrs Willis (1819-1900), 1850 Edmund SearsDocument1 pageCarol: Richard Storrs Willis (1819-1900), 1850 Edmund SearsmanoloNo ratings yet

- Klavierstück in As: Andantino EspressivoDocument1 pageKlavierstück in As: Andantino EspressivoWesley EstevesNo ratings yet

- TDP Log Layout Open Cut-.Document1 pageTDP Log Layout Open Cut-.DHANESH KcNo ratings yet

- Ec1-02a Second Floor Fdas LayoutDocument1 pageEc1-02a Second Floor Fdas LayoutSEDFREY DELA CRUZNo ratings yet

- 4Document4 pages4Abstruse RonNo ratings yet

- Smks Cinta Kasih Imandi Daftar Nilai Siswa Semester 6 TAHUN 2021Document2 pagesSmks Cinta Kasih Imandi Daftar Nilai Siswa Semester 6 TAHUN 2021Gustian PogaladNo ratings yet

- Hymne Ukraine 5 Parties-PianoDocument1 pageHymne Ukraine 5 Parties-PianoRenataNo ratings yet

- My Castle TownDocument3 pagesMy Castle TownPauloNo ratings yet

- UOttawa CampusDocument1 pageUOttawa CampusAmrakpady UchNo ratings yet

- Saint Saens Camille Le CygneDocument1 pageSaint Saens Camille Le CygneOmar Abdallah Najar MedinaNo ratings yet

- Planview CutDocument1 pagePlanview CutPandu RadinaNo ratings yet

- Denah PDF KeseluruhanDocument13 pagesDenah PDF KeseluruhanpoladwipaNo ratings yet

- Campsite Map Small-2016Document1 pageCampsite Map Small-2016api-529188810No ratings yet

- Pastime FlûteDocument1 pagePastime FlûteMeliodas SamaNo ratings yet

- Pastime FlûteDocument1 pagePastime FlûteMeliodas SamaNo ratings yet

- C.plano de UbicacionDocument1 pageC.plano de UbicacionEdinson F. RomeroNo ratings yet

- Gloomhaven Forgotten Circles RulebookDocument5 pagesGloomhaven Forgotten Circles RulebookDaniel ArdilaNo ratings yet

- ERL LayoutDocument1 pageERL Layoutmu khaledNo ratings yet

- Ground Tiling Layout-Model333Document1 pageGround Tiling Layout-Model333SIDHARTH SNo ratings yet

- Marcha Nupcial WagnerDocument2 pagesMarcha Nupcial WagnerVictoria AcostaNo ratings yet

- Italia Alto Sax 1Document1 pageItalia Alto Sax 1Cláudio ClementeNo ratings yet

- AitoukaDocument10 pagesAitoukaYaitu SayaNo ratings yet

- LGF BOH Rooms MEP Handover LayoutDocument1 pageLGF BOH Rooms MEP Handover LayoutRohit SinghNo ratings yet

- CCTV Location - MAINGATEDocument1 pageCCTV Location - MAINGATEAdi PriyapurnatamaNo ratings yet

- Top Gun AnthemDocument1 pageTop Gun AnthemVania NascimentoNo ratings yet

- Llorarás UTP-PianoDocument4 pagesLlorarás UTP-PianoJ.A.N.S xdNo ratings yet

- La Venda-Trombon - 2Document1 pageLa Venda-Trombon - 2Judit Carrilero CabezasNo ratings yet

- La Venda-Trombon 2Document1 pageLa Venda-Trombon 2Judit Carrilero CabezasNo ratings yet

- Outils - 6 Sigma Project GuidelineDocument1 pageOutils - 6 Sigma Project GuidelineCar YamooNo ratings yet

- V1a TR 20002Document4 pagesV1a TR 20002bambangNo ratings yet

- SWR UpdatedDocument1 pageSWR Updatedram prasad meenaNo ratings yet

- Layout BDocument1 pageLayout BMuhammad FauzanNo ratings yet

- A Winter Story RemediosDocument3 pagesA Winter Story RemediosMaxim KoreshevNo ratings yet

- The Sanctuary of Zi'tah: Naoshi Mizuta Transcribed by EugennoixDocument3 pagesThe Sanctuary of Zi'tah: Naoshi Mizuta Transcribed by EugennoixvjkeNo ratings yet

- Paquito Chocolatero-Dolçaina - FaDocument1 pagePaquito Chocolatero-Dolçaina - FaDamian Magallon FerrandezNo ratings yet

- Bland LogoTitle Back - Final Fantasy Tactics PS1 Hitoshi SakimotoDocument2 pagesBland LogoTitle Back - Final Fantasy Tactics PS1 Hitoshi SakimotoCaio mantovani AlvesNo ratings yet

- Jdh1-05-004-Zas-Ts9-1-Ar-Asb-0702 - 00Document1 pageJdh1-05-004-Zas-Ts9-1-Ar-Asb-0702 - 00Civil structureNo ratings yet

- Ave María - Clarinete Pral y 1ºDocument1 pageAve María - Clarinete Pral y 1ºJOSE LUIS BEDMAR ESTRADANo ratings yet

- Aria StagioneDocument2 pagesAria StagioneVicente JonathanNo ratings yet

- Basics of Animal Communication - Interaction, Signalling and Sensemaking in The Animal KingdomDocument160 pagesBasics of Animal Communication - Interaction, Signalling and Sensemaking in The Animal Kingdommatijahajek100% (1)

- FINAL Syllabus Changes in Cambridge IGCSE™ Biology (0610) Author LetterDocument2 pagesFINAL Syllabus Changes in Cambridge IGCSE™ Biology (0610) Author LetterAGS GamingNo ratings yet

- BSC & LFC Maintence Tips and Procedures-V.B.-mar2021Document9 pagesBSC & LFC Maintence Tips and Procedures-V.B.-mar2021Alexis KellyNo ratings yet

- Psy512 CH 3Document3 pagesPsy512 CH 3Maya GeeNo ratings yet

- Characteristics of Life UpdatedDocument12 pagesCharacteristics of Life UpdatedGarcia Gino MikoNo ratings yet

- Class 9th the Fundamental Unit of Life PPTDocument31 pagesClass 9th the Fundamental Unit of Life PPTsrrmchrnNo ratings yet

- Bio 3cm Ch11Document10 pagesBio 3cm Ch11KARRINGTON BRAHAMNo ratings yet

- University of Alberta Biology 207 - Molecular Genetics & Heredity Fall 2014 - Section A1 - Course SyllabusDocument4 pagesUniversity of Alberta Biology 207 - Molecular Genetics & Heredity Fall 2014 - Section A1 - Course SyllabusMathew WebsterNo ratings yet

- Week 3: Cell Modifications: General Biology 1Document3 pagesWeek 3: Cell Modifications: General Biology 1Florene Bhon GumapacNo ratings yet

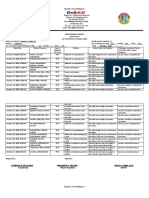

- Sta. Barbara Central School: Tel No. (062) 992-3586 Email: 126210@deped - Gov.phDocument9 pagesSta. Barbara Central School: Tel No. (062) 992-3586 Email: 126210@deped - Gov.phClarissa Dela CruzNo ratings yet

- Full Download Ebook PDF The Complete Field Guide To Butterflies of Australia 2nd Edition PDFDocument41 pagesFull Download Ebook PDF The Complete Field Guide To Butterflies of Australia 2nd Edition PDFrobert.roberts100100% (34)

- EarthAndLifeScience (SHS) Q2 Mod21 EvolvingConceptOfLifeBasedOnEmergingPiecesOfEvidence V1Document23 pagesEarthAndLifeScience (SHS) Q2 Mod21 EvolvingConceptOfLifeBasedOnEmergingPiecesOfEvidence V1Roseman TumaliuanNo ratings yet

- Class IV - Summer Learning Portfolio - 23-24-1Document9 pagesClass IV - Summer Learning Portfolio - 23-24-1Tanveer KhanNo ratings yet

- General Biology 1: First Semester - Quarter 1Document25 pagesGeneral Biology 1: First Semester - Quarter 1Hera SoleilNo ratings yet

- Kompilasi Reading ComprehensionDocument25 pagesKompilasi Reading ComprehensionirniNo ratings yet

- KS3 Science 2005 Mark SchemeDocument56 pagesKS3 Science 2005 Mark SchemeMai TruongNo ratings yet

- Notice-Xplore 2021-1 PDFDocument3 pagesNotice-Xplore 2021-1 PDFjiya singhNo ratings yet

- Nature That Makes Us Human Why We Keep Destroying Nature and How We Can Stop Doing So Michel Loreau Full ChapterDocument67 pagesNature That Makes Us Human Why We Keep Destroying Nature and How We Can Stop Doing So Michel Loreau Full Chapterpeter.stevenson777100% (9)

- SPM 4 - RespirationDocument1 pageSPM 4 - RespirationKumar AyavooNo ratings yet

- VAIBHAVI KADAM ResumeDocument1 pageVAIBHAVI KADAM ResumebloodstoragecentersuryaNo ratings yet

- Toxicon: Mahmood Sasa, Dennis K. Wasko, William W. LamarDocument19 pagesToxicon: Mahmood Sasa, Dennis K. Wasko, William W. Lamarandrea pinzonNo ratings yet

- Jilbert B. Gumaru, LPTDocument85 pagesJilbert B. Gumaru, LPTJulius MacaballugNo ratings yet

- SOPDocument2 pagesSOPNIGEL SAANANo ratings yet

- Cprot 100 SyllabusDocument6 pagesCprot 100 Syllabusjan ray aribuaboNo ratings yet

- NEET Chapter Wise Weightage 2019Document8 pagesNEET Chapter Wise Weightage 2019Kartik MalhotraNo ratings yet

- Jppres20.1004 9.5.584Document14 pagesJppres20.1004 9.5.584Indri SetyawatiNo ratings yet

- How To Optimize Your Water Quality & Intake For Health Huberman Lab PodcastDocument52 pagesHow To Optimize Your Water Quality & Intake For Health Huberman Lab Podcastwaheed khanNo ratings yet

- Cognitive and Language DevelopmentDocument10 pagesCognitive and Language DevelopmentD JNo ratings yet

- Clearing and Infiltration (Histopathology and Cytology)Document2 pagesClearing and Infiltration (Histopathology and Cytology)Noriz Ember DominguezNo ratings yet