Stochastic Processes, Markov Chains, and Markov Models

L645 Dept. of Linguistics, Indiana University Fall 2009

Stochastic Process

A stochastic or random process is a sequence $\xi_1, \xi_2, \ldots$ of random variables based on the same sample space $\Omega$. The possible outcomes of the random variables are called the set of possible states of the process.

The process will be said to be in state $\xi_t$ at time $t$.

Note that the random variables are in general not independent.

In fact, the interesting thing about a stochastic process is the dependence between the random variables.

Stochastic Process: Example 1

The sequence of outcomes when repeatedly casting a die is a stochastic process with discrete random variables and a discrete time parameter.

Stochastic Process: Example 2

There are three telephone lines, and at any given moment 0, 1, 2 or 3 of them can be busy. Once every minute we will observe how many of them are busy. This will be a random variable with $\Omega_\xi = \{0, 1, 2, 3\}$. Let $\xi_1$ be the number of busy lines at the first observation time, $\xi_2$ the number of busy lines at the second observation time, etc. The sequence of the number of busy lines then forms a random process with discrete random variables and a discrete time parameter.

Characterizing a random process

To fully characterize a random process with time parameter $t$, we specify:

1. the probability $P(\xi_1 = x_j)$ of each outcome $x_j$ for the first observation, i.e., the initial state $\xi_1$
2. for each subsequent observation/state $\xi_{t+1}$, $t = 1, 2, \ldots$, the conditional probabilities $P(\xi_{t+1} = x_{i_{t+1}} \mid \xi_1 = x_{i_1}, \ldots, \xi_t = x_{i_t})$

Terminate after some finite number of steps $T$.

Markov Chains and the Markov Property (1)

A Markov chain is a special type of stochastic process where the probability of the next state, conditional on the entire sequence of previous states up to the current state, in fact depends only on the current state. This is called the Markov property and can be stated as:

$$P(\xi_{t+1} = x_{i_{t+1}} \mid \xi_1 = x_{i_1}, \ldots, \xi_t = x_{i_t}) = P(\xi_{t+1} = x_{i_{t+1}} \mid \xi_t = x_{i_t})$$

Markov Chains and the Markov Property (2)

The probability of a Markov chain $\xi_1, \xi_2, \ldots$ can be calculated as:

$$P(\xi_1 = x_{i_1}, \ldots, \xi_t = x_{i_t}) = P(\xi_1 = x_{i_1}) \cdot P(\xi_2 = x_{i_2} \mid \xi_1 = x_{i_1}) \cdot \ldots \cdot P(\xi_t = x_{i_t} \mid \xi_{t-1} = x_{i_{t-1}})$$

The conditional probabilities $P(\xi_{t+1} = x_{i_{t+1}} \mid \xi_t = x_{i_t})$ are called the transition probabilities of the Markov chain.

A finite Markov chain must at each time be in one of a finite number of states.

Markov Chains: Example

We can turn the telephone example with 0, 1, 2 or 3 busy lines into a (finite) Markov chain by assuming that the number of busy lines depends only on the number of lines that were busy the last time we observed them, and not on the previous history.
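The product formula for the probability of a chain can be sketched in a few lines of Python. This is only an illustration: the function name `sequence_probability` is made up here, the matrix `P` is the telephone transition matrix from the transition-matrix example, and the initial distribution `v` is the one used in the initial-probability-vector example.

```python
# Telephone example: state k means "k lines are busy".
# P[i][j] is the probability of moving from i busy lines to j busy lines.
P = [
    [0.2, 0.5, 0.2, 0.1],
    [0.3, 0.4, 0.2, 0.1],
    [0.1, 0.3, 0.4, 0.2],
    [0.1, 0.1, 0.3, 0.5],
]
# Probabilities of the first observation (initial state).
v = [0.5, 0.3, 0.2, 0.0]


def sequence_probability(states, initial, transition):
    """Probability of one particular state sequence under the Markov property."""
    if not states:
        return 1.0
    # Probability of the first state ...
    prob = initial[states[0]]
    # ... times one transition probability per consecutive pair of states.
    for prev, nxt in zip(states, states[1:]):
        prob *= transition[prev][nxt]
    return prob


# Probability of observing 0, 1, 1, 2 busy lines in four consecutive minutes.
print(sequence_probability([0, 1, 1, 2], v, P))
```

Only the previous state is consulted at each step, which is exactly what the Markov property permits.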

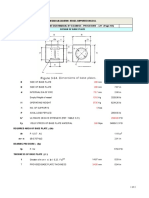

Transition Matrix for a Markov Process

Consider a Markov chain with $n$ states $s_1, \ldots, s_n$. Let $p_{ij}$ denote the transition probability from state $s_i$ to state $s_j$, i.e., $P(\xi_{t+1} = s_j \mid \xi_t = s_i)$. The transition matrix for this Markov process is then defined as

$$P = \begin{pmatrix} p_{11} & \cdots & p_{1n} \\ \vdots & \ddots & \vdots \\ p_{n1} & \cdots & p_{nn} \end{pmatrix}, \qquad p_{ij} \ge 0, \qquad \sum_{j=1}^{n} p_{ij} = 1, \quad i = 1, \ldots, n$$

In general, a matrix with these properties is called a stochastic matrix.

Transition Matrix: Example

In the telephone example, a possible transition matrix could be:

$$P = \begin{array}{c|cccc} & s_0 & s_1 & s_2 & s_3 \\ \hline s_0 & 0.2 & 0.5 & 0.2 & 0.1 \\ s_1 & 0.3 & 0.4 & 0.2 & 0.1 \\ s_2 & 0.1 & 0.3 & 0.4 & 0.2 \\ s_3 & 0.1 & 0.1 & 0.3 & 0.5 \end{array}$$
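As a rough sketch, the two defining properties of a stochastic matrix can be checked mechanically, and the matrix can then be used to simulate the chain. The helper names `is_stochastic` and `sample_path` are illustrative, and the fixed random seed is an arbitrary choice made here for reproducibility.

```python
import math
import random


def is_stochastic(matrix, tol=1e-9):
    """True if the matrix is square, has no negative entries, and every row sums to 1."""
    n = len(matrix)
    return all(
        len(row) == n
        and all(p >= 0 for p in row)
        and math.isclose(sum(row), 1.0, abs_tol=tol)
        for row in matrix
    )


def sample_path(matrix, start, steps, rng):
    """Simulate the chain: each next state is drawn from the row of the current state."""
    path = [start]
    for _ in range(steps):
        row = matrix[path[-1]]
        nxt = rng.choices(range(len(row)), weights=row, k=1)[0]
        path.append(nxt)
    return path


# Telephone example: rows and columns are indexed by the number of busy lines.
P = [
    [0.2, 0.5, 0.2, 0.1],
    [0.3, 0.4, 0.2, 0.1],
    [0.1, 0.3, 0.4, 0.2],
    [0.1, 0.1, 0.3, 0.5],
]

print(is_stochastic(P))
# Ten simulated minutes, starting with all three lines busy.
print(sample_path(P, start=3, steps=10, rng=random.Random(0)))
```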

Transition Matrix: Example (2)

Assume that currently all three lines are busy. What is then the probability that at the next point in time exactly one line is busy? The element in Row 3, Column 1 ($p_{31}$) is 0.1, and thus $p(1 \mid 3) = 0.1$. (Note that we have numbered the rows and columns 0 through 3.)

Transition Matrix after Two Steps

$$p_{ij}^{(2)} = P(\xi_{t+2} = s_j \mid \xi_t = s_i) = \sum_{r=1}^{n} P(\xi_{t+1} = s_r \mid \xi_t = s_i) \cdot P(\xi_{t+2} = s_j \mid \xi_{t+1} = s_r) = \sum_{r=1}^{n} p_{ir} \, p_{rj}$$

The element $p_{ij}^{(2)}$ can be determined by matrix multiplication as the value in row $i$ and column $j$ of the transition matrix $P^2 = PP$. More generally, the transition matrix for $t$ steps is $P^t$.
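The sum over intermediate states is ordinary matrix multiplication, so the $t$-step matrix can be sketched with two small helpers. The names `mat_mul` and `mat_pow` are illustrative, and plain nested lists are used only to keep the example dependency-free.

```python
def mat_mul(a, b):
    """Matrix product: entry (i, j) is the sum over r of a[i][r] * b[r][j]."""
    return [
        [sum(a[i][r] * b[r][j] for r in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def mat_pow(p, t):
    """t-step transition matrix, computed by multiplying p with itself t times."""
    n = len(p)
    # Start from the identity matrix, i.e. the "zero steps" transition matrix.
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(t):
        result = mat_mul(result, p)
    return result


# Telephone example: rows and columns are indexed by the number of busy lines.
P = [
    [0.2, 0.5, 0.2, 0.1],
    [0.3, 0.4, 0.2, 0.1],
    [0.1, 0.3, 0.4, 0.2],
    [0.1, 0.1, 0.3, 0.5],
]

# Print the two-step transition matrix, rounded for readability.
for row in mat_pow(P, 2):
    print([round(x, 2) for x in row])
```

Each row of the result should again sum to 1, since the product of two stochastic matrices is a stochastic matrix.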

Matrix Multiplication

Let's go to

http://people.hofstra.edu/Stefan_Waner/realWorld/tutorialsf1/frames3_2.html

and practice.

Matrix after Two Steps: Example

For the telephone example: Assuming that currently all three lines are busy, what is the probability of exactly one line being busy after two steps in time?

$$P^2 = PP = \begin{array}{c|cccc} & s_0 & s_1 & s_2 & s_3 \\ \hline s_0 & 0.22 & 0.37 & 0.25 & 0.16 \\ s_1 & 0.21 & 0.38 & 0.25 & 0.16 \\ s_2 & 0.17 & 0.31 & 0.30 & 0.22 \\ s_3 & 0.13 & 0.23 & 0.31 & 0.33 \end{array}$$

$$p_{31}^{(2)} = 0.23$$

Initial Probability Vector

A vector $v = [v_1, \ldots, v_n]$ with $v_i \ge 0$ and $\sum_{i=1}^{n} v_i = 1$ is called a probability vector.

The probability vector that determines the state probabilities of the first element (state) in a Markov chain, i.e., where $v_i = P(\xi_1 = s_i)$, is called an initial probability vector.

The initial probability vector and the transition matrix together determine the probability of the chain being in a particular state at a particular point in time.

If $p^{(t)}(s_i)$ is the probability of a Markov chain being in state $s_i$ at time $t$, i.e., after $t - 1$ steps, then

$$[p^{(t)}(s_1), \ldots, p^{(t)}(s_n)] = vP^{t-1}$$
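A minimal sketch of this computation follows, assuming the telephone transition matrix and, as an example input, the initial vector [0.5, 0.3, 0.2, 0.0]. The function names `step` and `state_distribution` are made up for illustration. Instead of forming the matrix power explicitly, the vector is multiplied by the transition matrix $t - 1$ times, which gives the same result.

```python
def step(dist, transition):
    """One step of the chain: multiply the row vector dist by the transition matrix."""
    n = len(transition)
    return [sum(dist[i] * transition[i][j] for i in range(n)) for j in range(n)]


def state_distribution(initial, transition, t):
    """State probabilities at time t (after t - 1 steps), i.e. initial * transition^(t-1)."""
    if t < 1:
        raise ValueError("time is counted from 1")
    dist = list(initial)
    for _ in range(t - 1):
        dist = step(dist, transition)
    return dist


# Telephone example: rows and columns are indexed by the number of busy lines.
P = [
    [0.2, 0.5, 0.2, 0.1],
    [0.3, 0.4, 0.2, 0.1],
    [0.1, 0.3, 0.4, 0.2],
    [0.1, 0.1, 0.3, 0.5],
]
v = [0.5, 0.3, 0.2, 0.0]

# State probabilities at times 1, 2 and 3, rounded for readability.
for t in (1, 2, 3):
    print(t, [round(x, 3) for x in state_distribution(v, P, t)])
```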

Initial Probability Vector: Example

Let $v = [0.5 \;\; 0.3 \;\; 0.2 \;\; 0.0]$ be the initial probability vector for the telephone example of a Markov chain. What is then the probability that at time 2, i.e., after one step, exactly two lines are busy?

$$vP = \begin{array}{cccc} s_0 & s_1 & s_2 & s_3 \\ \hline 0.21 & 0.43 & 0.24 & 0.12 \end{array}$$

$$p(\xi_2 = s_2) = 0.24$$

Markov Models

Markov models add the following to a Markov chain:

a sequence of random variables $\eta_t$ for $t = 1, \ldots, T$ representing the signal emitted at time $t$

We will use $\sigma_j$ to refer to a particular signal.

Markov Models (1)

A Markov Model consists of:

- a finite set of states $\Omega = \{s_1, \ldots, s_n\}$;
- an $n \times n$ state transition matrix $P = [p_{ij}]$ where $p_{ij} = P(\xi_{t+1} = s_j \mid \xi_t = s_i)$;
- a signal alphabet $\Sigma = \{\sigma_1, \ldots, \sigma_m\}$;
- an $n \times m$ signal matrix $A = [a_{ij}]$, which for each state-signal pair determines the probability $a_{ij} = p(\eta_t = \sigma_j \mid \xi_t = s_i)$ that signal $\sigma_j$ will be emitted given that the current state is $s_i$; and
- an initial vector $v = [v_1, \ldots, v_n]$ where $v_i = P(\xi_1 = s_i)$.

HMM Example 1: Crazy Softdrink Machine

[State diagram: two states, ColaPref. (CP) and Iced Tea Pref. (IP), with transition probabilities CP to CP 0.7, CP to IP 0.3, IP to CP 0.5, IP to IP 0.5]

with emission probabilities:

$$\begin{array}{c|ccc} & \text{cola} & \text{ice-t} & \text{lem} \\ \hline \text{CP} & 0.6 & 0.1 & 0.3 \\ \text{IP} & 0.1 & 0.7 & 0.2 \end{array}$$

from: Manning/Schütze; p. 321
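The five components can be written down directly for the soft drink machine and used to generate output. This is a sketch under two assumptions that the slide does not state in text form: the transition probabilities are read off the diagram as CP to CP 0.7, CP to IP 0.3, IP to CP 0.5, IP to IP 0.5, and the machine is assumed to start in CP, so `v = [1.0, 0.0]`. The function name `sample_signals` is illustrative.

```python
import random

STATES = ["CP", "IP"]              # cola preferring, iced tea preferring
SIGNALS = ["cola", "ice-t", "lem"]  # the signal alphabet

# State transition matrix (assumed reading of the diagram).
P = [
    [0.7, 0.3],
    [0.5, 0.5],
]
# Signal matrix: A[i][j] is the probability that state i emits signal j.
A = [
    [0.6, 0.1, 0.3],
    [0.1, 0.7, 0.2],
]
# Initial vector (assumption: the machine always starts in CP).
v = [1.0, 0.0]


def sample_signals(length, rng):
    """Generate (state, signal) pairs: emit from the current state, then move on."""
    state = rng.choices(range(len(STATES)), weights=v, k=1)[0]
    output = []
    for _ in range(length):
        signal = rng.choices(range(len(SIGNALS)), weights=A[state], k=1)[0]
        output.append((STATES[state], SIGNALS[signal]))
        state = rng.choices(range(len(STATES)), weights=P[state], k=1)[0]
    return output


# Five drinks from the machine; the seed is arbitrary.
print(sample_signals(5, random.Random(1)))
```

An observer who sees only the drinks, and not the state names, is in the hidden Markov model setting.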

Markov Models (2)

The joint probability of being in state $s_i$ and emitting signal $\sigma_j$ at time $t$ is

$$p^{(t)}(s_i, \sigma_j) = p^{(t)}(s_i) \cdot p(\eta_t = \sigma_j \mid \xi_t = s_i)$$

where $p^{(t)}(s_i)$ is the $i$th element of the vector $vP^{t-1}$.

This means that the probability of emitting a particular signal depends only on the current state, and not on the previous states.

Markov Models (3)

The probability that signal $\sigma_j$ will be emitted at time $t$ is then:

$$p^{(t)}(\sigma_j) = \sum_{i=1}^{n} p^{(t)}(s_i, \sigma_j) = \sum_{i=1}^{n} p^{(t)}(s_i) \cdot p(\eta_t = \sigma_j \mid \xi_t = s_i)$$

Thus if $p^{(t)}(\sigma_j)$ is the probability of the model emitting signal $\sigma_j$ at time $t$, i.e., after $t - 1$ steps, then

$$[p^{(t)}(\sigma_1), \ldots, p^{(t)}(\sigma_m)] = vP^{t-1}A$$
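The signal distribution can be sketched for the soft drink machine as follows. The emission matrix is the one given in the example. The assignment of the transition probabilities to CP and IP and the start vector `v = [1.0, 0.0]` are assumptions made here for illustration, as are the function names.

```python
def vec_mat(vec, mat):
    """Row vector times matrix."""
    return [
        sum(vec[i] * mat[i][j] for i in range(len(vec)))
        for j in range(len(mat[0]))
    ]


def signal_distribution(initial, transition, emission, t):
    """Signal probabilities at time t: initial * transition^(t-1) * emission."""
    if t < 1:
        raise ValueError("time is counted from 1")
    dist = list(initial)
    for _ in range(t - 1):
        dist = vec_mat(dist, transition)  # state probabilities one step later
    return vec_mat(dist, emission)        # weight each state's emissions


# Soft drink machine, states ordered (CP, IP), signals ordered (cola, ice-t, lem).
P = [[0.7, 0.3], [0.5, 0.5]]            # assumed reading of the state diagram
A = [[0.6, 0.1, 0.3], [0.1, 0.7, 0.2]]  # emission probabilities from the example
v = [1.0, 0.0]                          # assumption: the machine starts in CP

# Signal probabilities at times 1 and 2, rounded for readability.
for t in (1, 2):
    print(t, [round(x, 3) for x in signal_distribution(v, P, A, t)])
```

At time 1 the result is simply the CP row of the signal matrix, because the machine is assumed to start in CP with certainty.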
