Lawrence Livermore National Laboratory

Ulrike Meier Yang

LLNL-PRES-463131

Lawrence Livermore National Laboratory, P. O. Box 808, Livermore, CA 94551

This work was performed under the auspices of the U.S. Department of Energy by Lawrence Livermore National Laboratory under Contract DE-AC52-07NA27344

A Parallel Computing Tutorial

References

Introduction to Parallel Computing, Blaise Barney

https://computing.llnl.gov/tutorials/parallel_comp

A Parallel Multigrid Tutorial, Jim Jones

https://computing.llnl.gov/casc/linear_solvers/present.html

Introduction to Parallel Computing, Design and Analysis of Algorithms, Vipin Kumar, Ananth Grama, Anshul Gupta, George Karypis

Overview

Basics of Parallel Computing (see Barney)

- Concepts and Terminology

- Computer Architectures

- Programming Models

- Designing Parallel Programs

Parallel algorithms and their implementation

- basic kernels

- Krylov methods

- multigrid

Serial computation

Traditionally software has been written for serial computation:

- run on a single computer

- instructions are run one after another

- only one instruction executed at a time

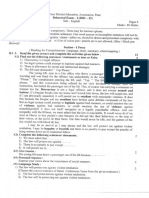

Von Neumann Architecture

John von Neumann first authored the general requirements for an electronic computer in 1945

Data and instructions are stored in memory

Control unit fetches instructions/data from memory, decodes the instructions and then sequentially coordinates operations to accomplish the programmed task.

Arithmetic Unit performs basic arithmetic operations

Input/Output is the interface to the human operator

Parallel Computing

Simultaneous use of multiple compute resources to solve a single problem

Why use parallel computing?

Save time and/or money

Enables the solution of larger problems

Provide concurrency

Use of non-local resources

Limits to serial computing

Concepts and Terminology

Flynn's Taxonomy (1966)

- SISD: Single Instruction, Single Data

- SIMD: Single Instruction, Multiple Data

- MISD: Multiple Instruction, Single Data

- MIMD: Multiple Instruction, Multiple Data

SISD

Serial computer

Deterministic execution

Examples: older generation mainframes, workstations, PCs

SIMD

A type of parallel computer

All processing units execute the same instruction at any given clock cycle

Each processing unit can operate on a different data element

Two varieties: Processor Arrays and Vector Pipelines

Most modern computers, particularly those with graphics processing units (GPUs), employ SIMD instructions and execution units.

MISD

A single data stream is fed into multiple processing units.

Each processing unit operates on the data independently via independent instruction streams.

Few actual examples: Carnegie-Mellon C.mmp computer (1971).

MIMD

Currently, most common type of parallel computer

Every processor may be executing a different instruction stream

Every processor may be working with a different data stream

Execution can be synchronous or asynchronous, deterministic or non-deterministic

Examples: most current supercomputers, networked parallel computer clusters and "grids", multi-processor SMP computers, multi-core PCs.

Note: many MIMD architectures also include SIMD execution sub-components

Parallel Computer Architectures

Shared memory: all processors can access the same memory

Uniform memory access (UMA):

- identical processors

- equal access and access times to memory

Non-uniform memory access (NUMA)

Not all processors have equal access to all memories

Memory access across link is slower

Advantages:

- user-friendly programming perspective to memory

- fast and uniform data sharing due to the proximity of memory to CPUs

Disadvantages:

- lack of scalability between memory and CPUs

- programmer is responsible for ensuring "correct" access to global memory

- expense

Distributed memory

Distributed memory systems require a communication network to connect inter-processor memory.

Advantages:

- Memory is scalable with number of processors.

- No memory interference or overhead for trying to keep cache coherency.

- Cost effective

Disadvantages:

- programmer responsible for data communication between processors.

- difficult to map existing data structures to this memory organization.

Hybrid Distributed-Shared Memory

Generally used for the currently largest and fastest computers

Has a mixture of the previously mentioned advantages and disadvantages

Parallel programming models

Shared memory

Threads

Message Passing

Data Parallel

Hybrid

All of these can be implemented on any architecture.

Shared memory

Tasks share a common address space, which they read and write asynchronously.

Various mechanisms such as locks / semaphores may be used to control access to the shared memory.

Advantage: no need to explicitly communicate data between tasks -> simplified programming

Disadvantages:

- Need to take care when managing memory and to avoid synchronization conflicts

- Harder to control data locality

Threads

A thread can be considered as a subroutine in the main program

Threads communicate with each other through the global memory

Commonly associated with shared memory architectures and operating systems

Implementations:

- POSIX Threads (pthreads)

- OpenMP

Message Passing

A set of tasks that use their own local memory during computation.

Data exchange through sending and receiving messages.

Data transfer usually requires cooperative operations to be performed by each process. For example, a send operation must have a matching receive operation.

MPI (released in 1994)

MPI-2 (released in 1996)

Data Parallel

The data parallel model demonstrates the following characteristics:

Most of the parallel work performs operations on a data set, organized into a common structure, such as an array

A set of tasks works collectively on the same data structure, with each task working on a different partition

Tasks perform the same operation on their partition

On shared memory architectures, all tasks may have access to the data structure through global memory. On distributed memory architectures the data structure is split up and resides as "chunks" in the local memory of each task.

Other Models

Hybrid

- combines various models, e.g. MPI/OpenMP

Single Program Multiple Data (SPMD)

- A single program is executed by all tasks simultaneously

Multiple Program Multiple Data (MPMD)

- An MPMD application has multiple executables. Each task can execute the same or a different program as other tasks.

Designing Parallel Programs

Examine problem:

- Can the problem be parallelized?

- Are there data dependencies?

- Where is most of the work done?

- Identify bottlenecks (e.g. I/O)

Partitioning

- How should the data be decomposed?

Various partitionings

How should the algorithm be distributed?

Communications

Types of communication:

- point-to-point

- collective

Synchronization types

Barrier

- Each task performs its work until it reaches the barrier. It then stops, or "blocks".

- When the last task reaches the barrier, all tasks are synchronized.

Lock / semaphore

- The first task to acquire the lock "sets" it. This task can then safely (serially) access the protected data or code.

- Other tasks can attempt to acquire the lock but must wait until the task that owns the lock releases it.

- Can be blocking or non-blocking

Load balancing

Keep all tasks busy all of the time. Minimize idle time.

The slowest task will determine the overall performance.

Granularity

In parallel computing, granularity is a qualitative measure of the ratio of computation to communication.

Fine-grain Parallelism:

- Low computation to communication ratio

- Facilitates load balancing

- Implies high communication overhead and less opportunity for performance enhancement

Coarse-grain Parallelism:

- High computation to communication ratio

- Implies more opportunity for performance increase

- Harder to load balance efficiently

Parallel Computing Performance Metrics

Let T(n,p) be the time to solve a problem of size n using p processors

- Speedup: S(n,p) = T(n,1)/T(n,p)

- Efficiency: E(n,p) = S(n,p)/p

- Scaled Efficiency: SE(n,p) = T(n,1)/T(pn,p)

An algorithm is scalable if there exists C > 0 with $SE(n,p) \ge C$ for all p
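The three metrics above translate directly into code once run times have been measured. The following is a minimal sketch; the function and parameter names are illustrative assumptions, and the timings are supplied by the caller.

```c
/* Speedup S(n,p) = T(n,1) / T(n,p). */
double speedup(double t_serial, double t_parallel) {
    return t_serial / t_parallel;
}

/* Efficiency E(n,p) = S(n,p) / p. */
double efficiency(double t_serial, double t_parallel, int p) {
    return speedup(t_serial, t_parallel) / p;
}

/* Scaled efficiency SE(n,p) = T(n,1) / T(pn,p): the problem size grows with p. */
double scaled_efficiency(double t_n_1, double t_pn_p) {
    return t_n_1 / t_pn_p;
}
```

For example, a run that takes 8 seconds on one processor and 2 seconds on 8 processors has speedup 4 and efficiency 0.5.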

Strong Scalability

Increasing the number of processors for a fixed problem size

[Figure: three panels plotted against the number of processors (1 to 8): run times in seconds; speedup of the method compared with perfect speedup; efficiency]

Weak scalability

Increasing the number of processors using a fixed problem size per processor

[Figure: two panels plotted against the number of processors (1 to 8): run times in seconds; scaled efficiency]

Amdahl's Law

Maximal speedup = 1/(1-P), where P is the parallel portion of the code

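Amdahl's Law

Speedup = 1/((P/N)+S), where N is the number of processors, P the parallel portion and S = 1-P the serial portion of the code

Speedup

| N | P=0.5 | P=0.9 | P=0.99 |
|---|---|---|---|
| 10 | 1.82 | 5.26 | 9.17 |
| 100 | 1.98 | 9.17 | 50.25 |
| 1000 | 1.99 | 9.91 | 90.99 |
| 10000 | 1.99 | 9.99 | 99.02 |
| 100000 | 1.99 | 9.99 | 99.90 |

[Figure: speedup curves for parallel portions of 50%, 75%, 90% and 95%]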
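Amdahl's law is easy to evaluate numerically. The sketch below uses hypothetical function names and follows the slide's formula with S = 1 - P.

```c
/* Amdahl's law: speedup on n_procs processors for parallel fraction p_frac. */
double amdahl_speedup(double p_frac, double n_procs) {
    double serial = 1.0 - p_frac;            /* S = 1 - P */
    return 1.0 / (p_frac / n_procs + serial);
}

/* Upper bound for arbitrarily many processors: 1 / (1 - P). */
double amdahl_max_speedup(double p_frac) {
    return 1.0 / (1.0 - p_frac);
}
```

Even with 99% of the code parallel, 100 processors give a speedup of only about 50, because the 1% serial part dominates.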

Performance Model for distributed memory

Time for n floating point operations:

$t_{comp} = f n$, where $1/f$ is the rate in Mflops

Time for communicating n words:

$t_{comm} = \alpha + \beta n$, where $\alpha$ is the latency and $1/\beta$ the bandwidth

Total time: $t_{comp} + t_{comm}$

Parallel Algorithms and Implementations

basic kernels

Krylov methods

multigrid

Vector Operations

Adding two vectors

$z = x + y$

Axpys

$y = y + \alpha x$

Each processor works on its own block:

Proc 0: $z_0 = x_0 + y_0$

Proc 1: $z_1 = x_1 + y_1$

Proc 2: $z_2 = x_2 + y_2$

Vector products

$s = x^T y$

Proc 0: $s_0 = x_0^T y_0$

Proc 1: $s_1 = x_1^T y_1$

Proc 2: $s_2 = x_2^T y_2$

$s = s_0 + s_1 + s_2$
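The vector product is the one kernel here that needs communication: each processor forms a partial sum over its block, and the partial sums are then added. The following serial sketch imitates that pattern with a loop over hypothetical "processes"; the function names and the block partitioning rule are assumptions for illustration. In an MPI code the final sum would typically be a reduction such as `MPI_Allreduce`.

```c
#include <stddef.h>

/* Partial dot product over the index range [begin, end): the work of one process. */
double local_dot(const double *x, const double *y, size_t begin, size_t end) {
    double s = 0.0;
    for (size_t i = begin; i < end; i++)
        s += x[i] * y[i];
    return s;
}

/* Serial stand-in for the parallel product: nprocs contiguous blocks, then a global sum. */
double partitioned_dot(const double *x, const double *y, size_t n, size_t nprocs) {
    double s = 0.0;
    for (size_t p = 0; p < nprocs; p++) {
        size_t begin = p * n / nprocs;         /* first index owned by "process" p */
        size_t end = (p + 1) * n / nprocs;     /* one past its last index */
        s += local_dot(x, y, begin, end);      /* reduction step s = s_0 + s_1 + ... */
    }
    return s;
}
```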

Dense n x n Matrix Vector Multiplication

Matrix size n x n, p processors

[Figure: matrix-vector product distributed across Proc 0, Proc 1, Proc 2 and Proc 3]

$T = 2(n^2/p) f + (p-1)(\alpha + \beta n/p)$
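The run time model for the dense product can be evaluated for different machine parameters. This is a sketch under the assumption that the missing symbols in the formula are the latency alpha and inverse bandwidth beta of the performance model; the function names are hypothetical.

```c
/* Communication time for n_words words: latency alpha plus n_words at bandwidth 1/beta. */
double t_comm(double alpha, double beta, double n_words) {
    return alpha + beta * n_words;
}

/* Modeled time of the dense n x n matrix-vector product on p processors:
   T = 2 (n^2 / p) f + (p - 1) (alpha + beta n / p). */
double dense_matvec_time(double n, double p, double f, double alpha, double beta) {
    double compute = 2.0 * (n * n / p) * f;
    double communicate = (p - 1.0) * t_comm(alpha, beta, n / p);
    return compute + communicate;
}
```

With p = 1 the communication term vanishes and the model reduces to 2 n^2 f.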

Sparse Matrix Vector Multiplication

Matrix of size $n^2 \times n^2$, $p^2$ processors

Consider graph of matrix

Partitioning influences run time

$T_1 \approx 10(n^2/p^2) f + 2(\alpha + \beta n)$ vs. $T_2 \approx 10(n^2/p^2) f + 4(\alpha + \beta n/p)$

Effect of Partitioning on Performance

[Figure: speedup vs. number of processors (1 to 15); curves: perfect speedup, MPI opt, MPI-noopt]

Sparse Matrix Vector Multiply with COO Data Structure

Coordinate Format (COO)

real Data(nnz), integer Row(nnz), integer Col(nnz)

Matrix:

1 0 0 2

0 3 4 0

0 5 6 0

7 0 8 9

Data: 2 5 1 3 7 9 4 8 6

Row: 0 2 0 1 3 3 1 3 2

Col: 3 1 0 1 0 3 2 2 2

Matrix-Vector multiplication:

for (k=0; k<nnz; k++)

  y[Row[k]] = y[Row[k]] + Data[k] * x[Col[k]];
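The COO loop can be packaged as a small function. This is a minimal sketch; the function name and argument order are assumptions, and y is accumulated into, so the caller initializes it.

```c
#include <stddef.h>

/* y = y + A x for A in coordinate format: entry k is data[k] at (row[k], col[k]). */
void coo_matvec(size_t nnz, const double *data, const int *row, const int *col,
                const double *x, double *y) {
    for (size_t k = 0; k < nnz; k++)
        y[row[k]] += data[k] * x[col[k]];
}
```

Because the entries may appear in any order, two entries with the same row index can be handled by different tasks, which is where write conflicts on y arise in a parallel version.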

Sparse Matrix Vector Multiply with COO Data Structure

Matrix-Vector multiplication:

for (k=0; k<nnz; k++)

  y[Row[k]] = y[Row[k]] + Data[k] * x[Col[k]];

Matrix:

1 0 0 2

0 3 4 0

0 5 6 0

7 0 8 9

Entries in arbitrary order, split between Proc 0 and Proc 1: possible conflict (rows 1-3)

Data: 2 3 1 5 7 9 4 8 6

Row: 0 1 0 2 3 3 1 3 2

Col: 3 1 0 1 0 3 2 2 2

Entries grouped by row:

Proc 0

Data: 2 3 1 4

Row: 0 1 0 1

Col: 3 1 0 2

Proc 1

Data: 5 7 9 8 6

Row: 2 3 3 3 2

Col: 1 0 3 2 2

Sparse Matrix Vector Multiply with CSR Data Structure

Compressed sparse row (CSR)

real Data(nnz), integer Rowptr(n+1), Col(nnz)

Matrix:

1 0 0 2

0 3 4 0

0 5 6 0

7 0 8 9

Data: 1 2 3 4 5 6 7 8 9

Rowptr: 0 2 4 6 9

Col: 0 3 1 2 1 2 0 2 3

Matrix-Vector multiplication:

for (i=0; i<n; i++)

  for (j=Rowptr[i]; j < Rowptr[i+1]; j++)

    y[i] = y[i] + Data[j] * x[Col[j]];
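A function form of the CSR loop, as a minimal sketch with assumed names. Each row writes only its own y[i], so rows can be distributed across threads or processes without write conflicts.

```c
/* y = y + A x for A in compressed sparse row format.
   Row i owns the entries rowptr[i] .. rowptr[i+1]-1 of data[] and col[]. */
void csr_matvec(int n, const double *data, const int *rowptr, const int *col,
                const double *x, double *y) {
    for (int i = 0; i < n; i++) {
        double sum = 0.0;                    /* accumulate the row locally */
        for (int j = rowptr[i]; j < rowptr[i + 1]; j++)
            sum += data[j] * x[col[j]];
        y[i] += sum;
    }
}
```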

Sparse Matrix Vector Multiply with CSC Data Structure

Compressed sparse column (CSC)

real Data(nnz), integer Colptr(n+1), Row(nnz)

Matrix:

1 0 0 2

0 3 4 0

0 5 6 0

7 0 8 9

Data: 1 7 3 5 4 6 8 2 9

Colptr: 0 2 4 7 9

Row: 0 3 1 2 1 2 3 0 3

Matrix-Vector multiplication:

for (i=0; i<n; i++)

  for (j=Colptr[i]; j < Colptr[i+1]; j++)

    y[Row[j]] = y[Row[j]] + Data[j] * x[i];
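The CSC variant as a function, again a sketch with assumed names. Here different columns scatter into the same entries of y, so a parallel version needs private partial results that are summed afterwards.

```c
/* y = y + A x for A in compressed sparse column format.
   Column i owns the entries colptr[i] .. colptr[i+1]-1 of data[] and row[]. */
void csc_matvec(int n, const double *data, const int *colptr, const int *row,
                const double *x, double *y) {
    for (int i = 0; i < n; i++)
        for (int j = colptr[i]; j < colptr[i + 1]; j++)
            y[row[j]] += data[j] * x[i];     /* scatter column i scaled by x[i] */
}
```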

Parallel Matvec, CSR vs. CSC

$y_0 = A_0 x_0$, $y_1 = A_1 x_1$, $y_2 = A_2 x_2$

$y = y_0 + y_1 + y_2$

[Figure: block partitionings of A and x for CSR and CSC]

Sparse Matrix Vector Multiply with Diagonal Storage

Diagonal storage

real Data(n,m), integer offset(m)

Matrix:

1 2 0 0

0 3 0 0

5 0 6 7

0 8 0 9

Data:

0 1 2

0 3 0

5 6 7

8 9 0

offset: -2 0 1

Matrix-Vector multiplication:

for (i=0; i<m; i++)

{ k = offset[i];

  for (j=0; j < n; j++)

    if (j+k >= 0 && j+k < n)  /* skip padding outside the matrix */

      y[j] = y[j] + Data[j][i] * x[j+k]; }
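A self-contained sketch of the diagonal-storage product follows. It assumes that Data is stored row by row as an n x m array, so that row j, column i holds the matrix entry A(j, j + offset[i]), which matches the example arrays on this slide; the bounds check skips the zero padding where a diagonal leaves the matrix. The function name is hypothetical.

```c
/* y = y + A x for A stored by diagonals.
   data is n x m, row-major: data[j*m + i] = A(j, j + offset[i]). */
void dia_matvec(int n, int m, const double *data, const int *offset,
                const double *x, double *y) {
    for (int i = 0; i < m; i++) {
        int k = offset[i];
        for (int j = 0; j < n; j++) {
            int c = j + k;                   /* column index on diagonal i */
            if (c >= 0 && c < n)             /* skip padding outside the matrix */
                y[j] += data[j * m + i] * x[c];
        }
    }
}
```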

Other Data Structures

Block data structures (BCSR, BCSC)

block size bxb, n divisible by b

real Data(nnb*b*b), integer Ptr(n/b+1), integer Index(nnb)

Based on space filling curves

(e.g. Hilbert curves, Z-order)

integer IJ(2*nnz)

real Data(nnz)

Matrix-Vector multiplication:

for (i=0; i<nnz; i++)

y[IJ[2*i+1]] = y[IJ[2*i+1]] + Data[i] * x[IJ[2*i]];

Data Structures for Distributed Memory

Concept for MATAIJ (PETSc) and ParCSR (Hypre)

Each processor: Local CSR Matrix, Nonlocal CSR Matrix

Mapping local to global indices

x x x

x x x x x x

x x x x x

x x x x x x

x x x x

x x x x x x x

x x x x x x

x x x x x

x x x x x

x x x x x x

x x x x x x

0 1 2 3 4 5 6 7 8 9 10

Proc 0

Proc 1

Proc 2

0 2 3 8 10

Communication Information

Neighborhood information (No. of neighbors)

Amount of data to be sent and/or received

Global partitioning for p processors: $r_0, r_1, r_2, \ldots, r_p$

Not good on very large numbers of processors!

Assumed partition (AP) algorithm

Answering global distribution questions previously required O(P) storage & computations

On BG/L, O(P) storage may not be possible

New algorithm requires

- O(1) storage

- O(log P) computations

Enables scaling to 100K+ processors

AP idea has general applicability beyond hypre

[Figure: rows 1 to N. Actual partition: processors 1, 2, 4, 3. Assumed partition ( p = (iP)/N ): processors 1, 2, 3, 4. Data owned by processor 3 lies in processor 4's assumed partition.]

Actual partition info is sent to the assumed partition processors (distributed directory)
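The assumed partition function p = (iP)/N can be evaluated by any process without a global table. The sketch below uses 0-based rows and process ids, whereas the slide's figure counts from 1; the function names are hypothetical and this is not hypre's actual interface.

```c
/* Assumed owner of global row i (0-based) for n_rows rows on nprocs processes:
   p = floor(i * P / N), computed locally with O(1) storage. */
int assumed_owner(long i, long n_rows, int nprocs) {
    return (int)((i * nprocs) / n_rows);
}

/* First row of process p's assumed range: the smallest i with assumed_owner(i) == p. */
long assumed_first_row(int p, long n_rows, int nprocs) {
    return ((long)p * n_rows + nprocs - 1) / nprocs;    /* ceil(p * N / P) */
}
```

A process that needs the real owner of row i would ask `assumed_owner(i, N, P)`, which holds the directory entry for that row.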

Assumed partition (AP) algorithm is more challenging for structured AMR grids

AMR can produce grids with gaps

Our AP function accounts for these gaps for scalability

Demonstrated on 32K procs of BG/L

Simple, naive AP function leaves processors with empty partitions

Effect of AP on one of hypre's multigrid solvers

[Figure: run times in seconds on up to 64K procs on BG/P vs. number of processors; curves: global, assumed]

Machine specification: Hera (LLNL)

Multi-core / multi-socket cluster

864 diskless nodes interconnected by Quad Data Rate (QDR) InfiniBand

AMD Opteron quad-core (2.3 GHz)

Fat tree network

Multicore cluster details (Hera):

Individual 512 KB L2 cache for each core

2 MB L3 cache shared by 4 cores

4 sockets per node, 16 cores sharing the 32 GB main memory

NUMA memory access

MxV Performance 2-16 cores OpenMP vs. MPI, 7pt stencil

[Figure: two panels (OpenMP, MPI) showing Mflops vs. matrix size; legend: 1, 2, 4, 8, 12, 16]

MxV Speedup for various matrix sizes, 7-pt stencil

[Figure: two panels showing speedup vs. number of MPI tasks and vs. number of OpenMP threads (1 to 15); curves for matrix sizes 512, 8000, 21952, 32768, 64000, 110592, 512000 and perfect speedup]

Iterative linear solvers

Krylov solvers:

- Conjugate gradient

- GMRES

- BiCGSTAB

Generally consist of basic linear kernels

Conjugate Gradient for solving Ax=b

$k = 0,\ x_0 = 0,\ r_0 = b,\ \rho_0 = r_0^T r_0$;

while ($\rho_k > \epsilon^2 \rho_0$)

  Solve $M z_k = r_k$;

  $\gamma_k = r_k^T z_k$;

  if ($k = 0$) $p_1 = z_0$ else $p_{k+1} = z_k + \gamma_k p_k / \gamma_{k-1}$;

  $k = k+1$;

  $\alpha_k = \gamma_{k-1} / p_k^T A p_k$;

  $x_k = x_{k-1} + \alpha_k p_k$;

  $r_k = r_{k-1} - \alpha_k A p_k$;

  $\rho_k = r_k^T r_k$;

end

$x = x_k$;
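The iteration consists only of the kernels discussed earlier: matrix-vector products, vector updates and inner products. The following is a serial sketch, assuming a dense row-major matrix and the diagonal preconditioner M = diag(A) (diagonally scaled CG); all names are illustrative, and a parallel code would replace `matvec` and `dot` by their distributed versions.

```c
#include <stdlib.h>

/* y = A x for a dense n x n matrix stored row by row. */
static void matvec(int n, const double *A, const double *x, double *y) {
    for (int i = 0; i < n; i++) {
        y[i] = 0.0;
        for (int j = 0; j < n; j++)
            y[i] += A[i * n + j] * x[j];
    }
}

static double dot(int n, const double *x, const double *y) {
    double s = 0.0;
    for (int i = 0; i < n; i++)
        s += x[i] * y[i];
    return s;
}

/* Diagonally scaled CG for SPD A, starting from x = 0.
   Returns the number of iterations, or -1 if memory allocation fails. */
int pcg_jacobi(int n, const double *A, const double *b, double *x,
               double eps, int max_iter) {
    double *r = malloc(n * sizeof *r), *z = malloc(n * sizeof *z);
    double *p = malloc(n * sizeof *p), *q = malloc(n * sizeof *q);
    if (!r || !z || !p || !q) { free(r); free(z); free(p); free(q); return -1; }
    for (int i = 0; i < n; i++) { x[i] = 0.0; r[i] = b[i]; }
    double rho0 = dot(n, r, r), rho = rho0, gamma_old = 1.0;
    int k = 0;
    while (rho > eps * eps * rho0 && k < max_iter) {
        for (int i = 0; i < n; i++)              /* solve M z = r with M = diag(A) */
            z[i] = r[i] / A[i * n + i];
        double gamma = dot(n, r, z);
        for (int i = 0; i < n; i++)              /* new search direction */
            p[i] = (k == 0) ? z[i] : z[i] + (gamma / gamma_old) * p[i];
        matvec(n, A, p, q);                      /* q = A p */
        double alpha = gamma / dot(n, p, q);
        for (int i = 0; i < n; i++) { x[i] += alpha * p[i]; r[i] -= alpha * q[i]; }
        rho = dot(n, r, r);
        gamma_old = gamma;
        k++;
    }
    free(r); free(z); free(p); free(q);
    return k;
}
```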

Weak scalability: diagonally scaled CG

[Figure: two panels plotted against the number of processors (0 to 120): total times for 100 iterations in seconds; number of iterations]

Achieve algorithmic scalability through multigrid

smooth

Finest Grid

First Coarse Grid

restrict

interpolate

Multigrid parallelized by domain partitioning

Partition fine grid

Interpolation fully parallel

Smoothing

Algebraic Multigrid

Setup Phase:

- Select coarse grids,

- Define interpolation, $P^{(m)}$, $m = 1, 2, \ldots$

- Define restriction and coarse-grid operators: $R^{(m)} = (P^{(m)})^T$, $A^{(m+1)} = R^{(m)} A^{(m)} P^{(m)}$

Solve Phase:

- Relax $A^{(m)} u^m = f^m$

- Compute $r^m = f^m - A^{(m)} u^m$

- Restrict $r^{m+1} = R^{(m)} r^m$

- Solve $A^{(m+1)} e^{m+1} = r^{m+1}$

- Interpolate $e^m = P^{(m)} e^{m+1}$

- Correct $u^m \leftarrow u^m + e^m$

- Relax $A^{(m)} u^m = f^m$

How about scalability of multigrid V cycle?

Time for relaxation on finest grid for nxn points per proc

$T_0 \approx 4\alpha + 4n\beta + 5n^2 f$

Time for relaxation on level k

$T_k \approx 4\alpha + 4(n/2^k)\beta + 5(n/2^k)^2 f$

Total time for V_cycle:

$T_V \approx 2 \sum_k \left(4\alpha + 4(n/2^k)\beta + 5(n/2^k)^2 f\right)$

$\approx 8(1+1+1+\ldots)\,\alpha + 8(1 + 1/2 + 1/4 + \ldots)\, n\beta + 10(1 + 1/4 + 1/16 + \ldots)\, n^2 f$

$\approx 8L\alpha + 16 n\beta + 40/3\, n^2 f$

$L \approx \log_2(pn)$ number of levels

For $p \gg 0$: $T_V(n,1)/T_V(pn,p) = O(1/\log_2(p))$
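The V-cycle model can be summed level by level to see which term dominates. This is a sketch with a hypothetical function name, assuming the lost symbols are the latency alpha and inverse bandwidth beta of the performance model, and that the number of levels is passed in by the caller.

```c
/* Modeled V-cycle time: T_V ~ 2 * sum over levels k = 0 .. levels-1 of
   4 alpha + 4 (n / 2^k) beta + 5 (n / 2^k)^2 f. */
double vcycle_time(double n, int levels, double f, double alpha, double beta) {
    double total = 0.0;
    double m = n;                        /* m = n / 2^k points per side on level k */
    for (int k = 0; k < levels; k++) {
        total += 4.0 * alpha + 4.0 * m * beta + 5.0 * m * m * f;
        m /= 2.0;
    }
    return 2.0 * total;
}
```

The computation and bandwidth terms are bounded by geometric series, while the latency term grows linearly with the number of levels, which is the source of the logarithmic factor.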

How about scalability of multigrid W cycle?

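Total time for W cycle:

$T_W \approx 2 \sum_k 2^k \left(4\alpha + 4(n/2^k)\beta + 5(n/2^k)^2 f\right)$

$\approx 8(1+2+4+\ldots)\,\alpha + 8(1 + 1 + 1 + \ldots)\, n\beta + 10(1 + 1/2 + 1/4 + \ldots)\, n^2 f$

$\approx 8(2^{L+1} - 1)\,\alpha + 16 L n\beta + 20 n^2 f$

$\approx 8(2pn - 1)\,\alpha + 16 \log_2(pn)\, n\beta + 20 n^2 f$

$L \approx \log_2(pn)$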

Smoothers

Smoothers significantly affect multigrid convergence and runtime

solving: Ax=b, A SPD

smoothing: $x_{n+1} = x_n + M^{-1}(b - A x_n)$

smoothers:

Jacobi, M=D, easy to parallelize

$x_{k+1}(i) = \frac{1}{A(i,i)} \left( b(i) - \sum_{j=0,\, j \ne i}^{n-1} A(i,j)\, x_k(j) \right), \quad i = 0, \ldots, n-1$

Gauss-Seidel, M=D+L, highly sequential!!

$x_{k+1}(i) = \frac{1}{A(i,i)} \left( b(i) - \sum_{j=0}^{i-1} A(i,j)\, x_{k+1}(j) - \sum_{j=i+1}^{n-1} A(i,j)\, x_k(j) \right), \quad i = 0, \ldots, n-1$
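The difference between the two smoothers shows up in which iterate each update reads. A minimal sketch for a dense row-major matrix, with assumed function names:

```c
/* One Jacobi sweep: every update reads only the old iterate x_old,
   so all rows can be processed independently. */
void jacobi_sweep(int n, const double *A, const double *b,
                  const double *x_old, double *x_new) {
    for (int i = 0; i < n; i++) {
        double s = b[i];
        for (int j = 0; j < n; j++)
            if (j != i) s -= A[i * n + j] * x_old[j];
        x_new[i] = s / A[i * n + i];
    }
}

/* One Gauss-Seidel sweep in place: row i already sees the new values
   x[0..i-1], which makes the loop over i inherently sequential. */
void gauss_seidel_sweep(int n, const double *A, const double *b, double *x) {
    for (int i = 0; i < n; i++) {
        double s = b[i];
        for (int j = 0; j < n; j++)
            if (j != i) s -= A[i * n + j] * x[j];
        x[i] = s / A[i * n + i];
    }
}
```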

Parallel Smoothers

Red-Black Gauss-Seidel

Multi-color Gauss-Seidel

Hybrid smoothers

$M = \begin{pmatrix} M_1 & & \\ & \ddots & \\ & & M_p \end{pmatrix}$

Hybrid SOR: $M_k = \frac{1}{\omega_k} D_k + L_k$

Hybrid Symm. Gauss-Seidel: $M_k = (D_k + L_k) D_k^{-1} (D_k + L_k^T)$

Hybrid Gauss-Seidel: $M_k = D_k + L_k$

Can be used for distributed as well as shared parallel computing

Needs to be used with care

Might require smoothing parameter $M_\omega = \omega M$

Corresponding block schemes, Schwarz solvers, ILU, etc.

How is the smoother threaded?

Matrices are distributed across P processors in contiguous blocks of rows: $A = \begin{pmatrix} A_1 \\ A_2 \\ \vdots \\ A_p \end{pmatrix}$

The smoother is threaded such that each thread works on a contiguous subset of the rows: $A_p = \begin{pmatrix} t_1 \\ t_2 \\ t_3 \\ t_4 \end{pmatrix}$

Hybrid: GS within each thread, Jacobi on thread boundaries
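The hybrid scheme can be sketched serially as a loop over row blocks, where each block stands for one thread or process. This is an illustrative sketch under the assumption of a dense row-major matrix and equal-sized contiguous blocks; it is not hypre's implementation. `x_old` and `x` must be distinct arrays.

```c
/* One hybrid Gauss-Seidel sweep with nblocks contiguous row blocks.
   Inside a block, updated values are used at once (Gauss-Seidel); values owned
   by other blocks are read from the snapshot x_old (Jacobi across boundaries). */
void hybrid_gs_sweep(int n, int nblocks, const double *A, const double *b,
                     const double *x_old, double *x) {
    for (int blk = 0; blk < nblocks; blk++) {    /* blocks do not depend on each other */
        int begin = blk * n / nblocks;
        int end = (blk + 1) * n / nblocks;
        for (int i = begin; i < end; i++) {
            double s = b[i];
            for (int j = 0; j < n; j++) {
                if (j == i) continue;
                /* new value only if j is in this block and already updated */
                double xj = (j >= begin && j < i) ? x[j] : x_old[j];
                s -= A[i * n + j] * xj;
            }
            x[i] = s / A[i * n + i];
        }
    }
}
```

With one block the sweep is plain Gauss-Seidel; with one row per block it degenerates to Jacobi, which illustrates why convergence can suffer as the blocks shrink.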

FEM application: 2D Square

Application partitions grid element-wise into nice subdomains for each processor

[Figure: 16 procs (colors indicate element numbering)]

AMG determines thread subdomains by splitting nodes (not elements)

The application's numbering of the elements/nodes becomes relevant! (i.e., threaded domains dependent on colors)

We want to use OpenMP on multicore machines...

Hybrid-GS is not guaranteed to converge, but in practice it is effective for diffusion problems when subdomains are well-defined

Some Options

1. Application needs to order unknowns in processor subdomain nicely

- may be more difficult due to refinement

- may impose non-trivial burden on application

2. AMG needs to reorder unknowns (rows)

- non-trivial, costly

3. Smoothers that don't depend on parallelism

Coarsening algorithms

Original coarsening algorithm

Parallel coarsening algorithms

Choosing the Coarse Grid (Classical AMG)

Add measures (number of strong influences of a point)

Pick a point with maximal measure and make it a C-point

Make its neighbors F-points

Increase measures of neighbors of new F-points by number of new F-neighbors

Repeat until finished

[Figure: sequence of grids showing the point measures being updated as C-points and F-points are selected]
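The steps above can be sketched on a small graph. The code below is a simplified illustration: it assumes a symmetric strength pattern stored as a dense 0/1 array, takes the number of strong neighbors as the initial measure, and breaks ties by lowest index. The names are hypothetical, and a practical AMG code would use sparse structures and further passes.

```c
enum { UNDECIDED = 0, C_POINT = 1, F_POINT = 2 };

/* First-pass coarsening sketch on a graph with n points.
   strong[i*n + j] != 0 means i and j strongly influence each other.
   measure[] and state[] are caller-provided arrays of length n. */
void classical_coarsen(int n, const int *strong, int *measure, int *state) {
    for (int i = 0; i < n; i++) {            /* initial measure: strong neighbors */
        state[i] = UNDECIDED;
        measure[i] = 0;
        for (int j = 0; j < n; j++)
            if (j != i && strong[i * n + j]) measure[i]++;
    }
    for (;;) {
        int c = -1;                          /* undecided point with maximal measure */
        for (int i = 0; i < n; i++)
            if (state[i] == UNDECIDED && (c < 0 || measure[i] > measure[c])) c = i;
        if (c < 0) break;                    /* every point is a C- or F-point */
        state[c] = C_POINT;
        for (int j = 0; j < n; j++) {
            if (j == c || !strong[c * n + j] || state[j] != UNDECIDED) continue;
            state[j] = F_POINT;              /* neighbors of the new C-point become F-points */
            for (int k = 0; k < n; k++)      /* their undecided neighbors gain weight */
                if (k != j && strong[j * n + k] && state[k] == UNDECIDED) measure[k]++;
        }
    }
}
```

The outer loop always picks one global maximum at a time, which is what makes this algorithm hard to parallelize directly.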

Parallel Coarsening Algorithms

Perform sequential algorithm on each processor,

then deal with processor boundaries

PMIS: start

- select C-pt with maximal measure

- select neighbors as F-pts

Measures:

3 5 5 5 5 5 3

5 8 8 8 8 8 5

5 8 8 8 8 8 5

5 8 8 8 8 8 5

5 8 8 8 8 8 5

5 8 8 8 8 8 5

3 5 5 5 5 5 3

PMIS: add random numbers

3.7 5.3 5.0 5.9 5.4 5.3 3.4

5.2 8.0 8.5 8.2 8.6 8.2 5.1

5.9 8.1 8.8 8.9 8.4 8.9 5.9

5.7 8.6 8.3 8.8 8.3 8.1 5.0

5.3 8.7 8.3 8.4 8.3 8.8 5.9

5.0 8.8 8.5 8.6 8.7 8.9 5.3

3.2 5.6 5.8 5.6 5.9 5.9 3.0

PMIS: exchange neighbor information

PMIS: select

- select C-pts with maximal measure locally (here the points with measures 8.8, 8.9, 8.9 and 8.9)

- make neighbors F-pts

PMIS: update 1

- make neighbors F-pts (requires neighbor info)

[Figure: remaining undecided points after the first update]

PMIS: select

- select C-pts with maximal measure locally (here the points with measures 8.6, 5.9 and 8.6)

- make neighbors F-pts

PMIS: update 2

[Figure: remaining undecided points after the second update]

PMIS: final grid

- remove neighbor edges
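The PMIS rounds can be imitated serially. The sketch below is a simplified illustration with hypothetical names: w[i] is the measure plus a random number, the neighbor pattern is a dense symmetric 0/1 array, and ties are broken by index so that every round makes progress. In a parallel code each round would be preceded by an exchange of weights and states across processor boundaries.

```c
enum { PMIS_UNDECIDED = 0, PMIS_C = 1, PMIS_F = 2, PMIS_NEW_C = 3 };

/* PMIS-style coarsening sketch: adj[i*n + j] != 0 marks neighbors,
   w[i] is the weight of point i, state[] receives PMIS_C or PMIS_F. */
void pmis_coarsen(int n, const int *adj, const double *w, int *state) {
    int undecided = n;
    for (int i = 0; i < n; i++) state[i] = PMIS_UNDECIDED;
    while (undecided > 0) {
        /* Select: i is chosen if it beats every neighbor that was undecided
           at the start of this round (ties go to the higher index). */
        for (int i = 0; i < n; i++) {
            if (state[i] != PMIS_UNDECIDED) continue;
            int is_max = 1;
            for (int j = 0; j < n; j++) {
                if (j == i || !adj[i * n + j]) continue;
                int active = state[j] == PMIS_UNDECIDED || state[j] == PMIS_NEW_C;
                if (active && (w[j] > w[i] || (w[j] == w[i] && j > i))) is_max = 0;
            }
            if (is_max) state[i] = PMIS_NEW_C;
        }
        /* Update: new C-points are fixed, their undecided neighbors become F-points. */
        for (int i = 0; i < n; i++) {
            if (state[i] != PMIS_NEW_C) continue;
            state[i] = PMIS_C;
            undecided--;
            for (int j = 0; j < n; j++)
                if (adj[i * n + j] && state[j] == PMIS_UNDECIDED) {
                    state[j] = PMIS_F;
                    undecided--;
                }
        }
    }
}
```

Unlike the sequential algorithm, several C-points are selected per round, and the decision for each point needs only information from its neighbors.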

Interpolation

Nearest neighbor interpolation is straightforward to parallelize

Long range interpolation is harder to parallelize

[Figure: points i, j, k, l]

Communication patterns 128 procs Level 0

Setup Solve

Communication patterns 128 procs Setup

distance 1 vs. distance 2 interpolation

Distance 1 Level 4 Distance 2 Level 4

Communication patterns, 128 procs, solve: distance 1 vs. distance 2 interpolation

[Figure: two panels, Distance 1 (level 4) and Distance 2 (level 4)]


AMG-GMRES(10) on Hera, 7pt 3D Laplace problem on a unit cube, 100x100x100 grid points per node (16 procs per node)

[Figure: two panels, Solve times and Setup times; seconds (0 to 20) versus no. of cores (0 to 3000); curves: Hera-1, Hera-2, BGL-1, BGL-2]
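For readers unfamiliar with the test problem, the following is a minimal sketch of a 7-point 3D Laplace matrix on an n x n x n grid. It assumes Dirichlet boundaries and an unscaled stencil (diagonal 6, off-diagonals -1), and stores the matrix as a plain dictionary of rows; the function name `laplace_7pt` and this storage format are illustrative choices, not what the solver in the tutorial uses.

```python
def laplace_7pt(n):
    """Return the 7-point Laplacian as {row: {col: value}} for an n x n x n grid."""
    def idx(i, j, k):
        # Lexicographic numbering of grid point (i, j, k).
        return (i * n + j) * n + k

    offsets = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))
    rows = {}
    for i in range(n):
        for j in range(n):
            for k in range(n):
                row = {idx(i, j, k): 6.0}
                for di, dj, dk in offsets:
                    a, b, c = i + di, j + dj, k + dk
                    # Neighbors outside the cube are dropped (Dirichlet boundary).
                    if 0 <= a < n and 0 <= b < n and 0 <= c < n:
                        row[idx(a, b, c)] = -1.0
                rows[idx(i, j, k)] = row
    return rows


A = laplace_7pt(4)
nnz = sum(len(row) for row in A.values())
print(len(A), "rows,", nnz, "nonzeros")  # 64 rows, 352 nonzeros
```

Each row has at most seven nonzeros regardless of n, so the work per grid point stays constant as the problem grows.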


Distance 1 vs distance 2 interpolation on different computer architectures

AMG-GMRES(10), 7pt 3D Laplace problem on a unit cube, using 50x50x25 points per processor (100x100x100 per node on Hera)

[Figure: seconds (0 to 30) versus no. of cores (0 to 3000); curves: Hera-1, Hera-2, BGL-1, BGL-2]
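The two problem sizes quoted above describe the same load: 16 processes with 50x50x25 points each hold exactly 100x100x100 points per node. A short check, with the total size at a given core count added as an assumption about how the weak-scaling runs grow (the helper names are hypothetical):

```python
def grid_points(nx, ny, nz):
    """Number of grid points in an nx x ny x nz block."""
    return nx * ny * nz


per_proc = grid_points(50, 50, 25)      # 62,500 points per processor
procs_per_node = 16                     # Hera, as stated on the slide
per_node = per_proc * procs_per_node    # 1,000,000 points per node


def total_points(cores):
    """Global problem size when every core keeps the same local block (weak scaling)."""
    return cores * per_proc


print(per_node == grid_points(100, 100, 100))  # True
print(total_points(1024))                      # 64000000
```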


AMG-GMRES(10) on Hera, 7pt 3D Laplace problem on a unit cube, 100x100x100 grid points per node (16 procs per node)

[Figure: two panels, Solve cycle times and Setup times; seconds versus no. of cores (0 to 10000), with y-axis ranges 0 to 35 and 0 to 0.4; curves: MPI 16x1, H 8x2, H 4x4, H 2x8, OMP 1x16, MCSUP 1x16]
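The curve labels appear to follow a tasks x threads pattern. Assuming "H 8x2" means 8 MPI tasks with 2 OpenMP threads each on a 16-core node (an interpretation, not something the slide states), the possible splits and the resulting points per MPI task can be listed as below; `hybrid_configs` is a hypothetical helper.

```python
def hybrid_configs(cores_per_node):
    """All (MPI tasks, threads per task) pairs that use every core of a node."""
    return [
        (tasks, cores_per_node // tasks)
        for tasks in range(cores_per_node, 0, -1)
        if cores_per_node % tasks == 0
    ]


configs = hybrid_configs(16)
print(configs)  # [(16, 1), (8, 2), (4, 4), (2, 8), (1, 16)]

# With 100x100x100 points per node, fewer MPI tasks means larger local blocks.
points_per_task = {tasks: 100**3 // tasks for tasks, _ in configs}
print(points_per_task[16], points_per_task[1])  # 62500 1000000
```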


AMG-GMRES(10) on Hera, 7pt 3D Laplace problem on a unit cube, 100x100x100 grid points per node (16 procs per node)

[Figure: seconds (0 to 50) versus no. of cores (0 to 12000); curves: MPI 16x1, H 8x2, H 4x4, H 2x8, OMP 1x16, MCSUP 1x16]


Blue Gene/P Solution Intrepid, Argonne National Laboratory

40 racks with 1024 compute nodes each

Quad-core 850 MHz PowerPC 450 Processor

163,840 cores

2GB main memory per node shared by all cores

3D torus network

- isolated dedicated partitions
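The core count follows directly from the rack and node figures, and dividing the node memory by the core count shows how little memory each core has when all four run their own process. A quick check (variable names are illustrative):

```python
racks = 40
nodes_per_rack = 1024
cores_per_node = 4          # quad-core PowerPC 450
node_memory_mb = 2 * 1024   # 2 GB per node, shared by all cores

total_nodes = racks * nodes_per_rack
total_cores = total_nodes * cores_per_node
memory_per_core_mb = node_memory_mb // cores_per_node

print(total_cores)          # 163840
print(memory_per_core_mb)   # 512
```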


AMG-GMRES(10) on Intrepid, 7pt 3D Laplace problem on a unit cube, 50x50x25 grid points per core (4 cores per node)

[Figure: two panels, Setup times and Cycle times; seconds versus no. of cores (0 to 100000), with y-axis ranges 0 to 6 and 0 to 0.3; legend entries: smp, dual, vn, vn-omp, H1x4, H2x2, MPI, H4x1]


AMG-GMRES(10) on Intrepid, 7pt 3D Laplace problem on a unit cube, 50x50x25 grid points per core (4 cores per node)

[Figure: seconds (0 to 16) versus no. of procs (0 to 100000); curves: H1x4, H2x2, MPI, H4x1]

No. of iterations

No. of procs   H1x4   H2x2   MPI
128            17     17     18
1024           20     20     22
8192           25     25     25
27648          29     27     28
65536          30     33     29
128000         37     36     36


Cray XT-5 Jaguar-PF, Oak Ridge National Laboratory

18,688 nodes in 200 cabinets

Two sockets with AMD Opteron Hex-Core processor per node

16 GB main memory per node

Network: 3D torus/mesh with wrap-around links (torus-like) in two dimensions and without such links in the remaining dimension

Compute Node Linux, limited set of services, but reduced noise ratio

PGI's C compilers (v.904) with and without OpenMP

OpenMP settings: -mp=numa (preemptive thread pinning, localized memory allocations)
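From the node description, the machine size and the per-node load of the runs with 50x50x30 grid points per core can be worked out as below. This is a back-of-the-envelope sketch; the total core count is derived here and is not quoted on the slide.

```python
nodes = 18688
sockets_per_node = 2
cores_per_socket = 6        # hex-core AMD Opteron
node_memory_gb = 16

cores_per_node = sockets_per_node * cores_per_socket
total_cores = nodes * cores_per_node
memory_per_core_gb = node_memory_gb / cores_per_node

points_per_core = 50 * 50 * 30
points_per_node = points_per_core * cores_per_node

print(cores_per_node, total_cores)        # 12 224256
print(round(memory_per_core_gb, 2))       # 1.33
print(points_per_core, points_per_node)   # 75000 900000
```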


AMG-GMRES(10) on Jaguar, 7pt 3D Laplace problem on a unit cube, 50x50x30 grid points per core (12 cores per node)

[Figure: two panels, Setup times and Cycle times; seconds versus no. of cores (0 to 200000), with y-axis ranges 0 to 10 and 0 to 0.25; curves: MPI, H12x1, H1x12, H4x3, H2x6]


AMG-GMRES(10) on Jaguar, 7pt 3D Laplace problem on a unit cube, 50x50x30 grid points per core (12 cores per node)

[Figure: seconds (0 to 16) versus no. of cores (0 to 200000); curves: MPI, H1x12, H4x3, H2x6]


Thank You!

This work was performed under the auspices of the U.S. Department of Energy by Lawrence Livermore National Laboratory under Contract DE-AC52-07NA27344.
