LVM Mirror Walking - Flash Read Preferred

Uploaded by liew99

The document describes how to migrate logical volume (LV) data from one storage array to a new storage array with larger LUNs using AIX LVM mirror walking. It involves:

1) Creating mirrors of the LVs on the new storage array with larger LUNs;

2) Synchronizing the mirrors to copy the data;

3) Removing the mirrors on the original storage array once synchronization is complete, leaving only the mirrors on the new storage array with the migrated data.

It also describes how to configure the LV mirrors to use flash storage as the preferred location for reads by making the flash LUNs the primary copies during synchronization. This improves read performance.

AIX LVM Mirror Walking and Flash Storage Preferred Read Deployment

How to walk the LVM data over from one storage array to a new storage array, using a smaller number of larger LUNs.

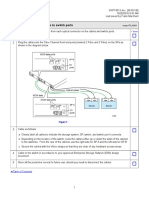

Mirror Walking

All data is available and online throughout the entire process. No downtime is required.

Some impact on server resources (CPU, I/O).

Requirements

The VG must be scalable to accept the higher number of PPs per LUN that the larger LUNs bring.

varyoffvg <vg>; chvg -G <vg>; varyonvg <vg>

This is one place where downtime may be required.

The hdisks within a single VG can be walked over to a smaller number of larger hdisks.

Cannot do this across VGs.
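The conversion sequence above can be sketched as a dry run that only prints the commands, so they can be reviewed before the VG is taken offline. The function name `scalable_vg_plan` is hypothetical; the three commands are the ones from the slide.

```shell
#!/bin/sh
# Print (do not run) the slide's sequence for converting a VG to scalable format.
# scalable_vg_plan is a hypothetical helper name, not an AIX command.
scalable_vg_plan() {
    vg=$1
    if [ -z "$vg" ]; then
        echo "usage: scalable_vg_plan <vg>" >&2
        return 1
    fi
    echo "varyoffvg $vg"   # VG goes offline here
    echo "chvg -G $vg"     # convert to scalable VG format
    echo "varyonvg $vg"    # bring the VG back online
}

scalable_vg_plan vg1
```

The VG is unavailable between the first and last command, which is the downtime the slide warns about.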

[Diagram: vg1, with lv1 through lv4 spread across hdisk1 through hdisk9]

All hdisks and LVs in a single VG can use this technique.

The VG must be scalable and able to accept the PP count of the larger LUN size. A larger LUN may not be able to be added if the VG is not scalable.
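A rough way to see why a larger LUN may not fit is to count its PPs. The sketch below assumes the 256 MB PP size that appears later in the lslv output, a hypothetical 500 GB LUN, and a per-PV limit of 1016 PPs for a non-scalable VG. The 1016 figure is an assumption here; check the MAX PPs per PV value reported by `lsvg <vg>` on the real system.

```shell
#!/bin/sh
# pps_for_lun <lun_size_gb> <pp_size_mb>: number of PPs needed to cover a LUN.
pps_for_lun() {
    echo $(( $1 * 1024 / $2 ))
}

# fits_nonscalable <lun_size_gb> <pp_size_mb> [limit]
# Succeeds if the PP count is within the per-PV limit.
# The default limit of 1016 is an assumption; pass the real value if it differs.
fits_nonscalable() {
    limit=${3:-1016}
    [ "$(pps_for_lun "$1" "$2")" -le "$limit" ]
}

pps_for_lun 500 256    # hypothetical 500 GB LUN with 256 MB PPs
if fits_nonscalable 500 256; then
    echo "fits"
else
    echo "needs a scalable VG (or a larger per-PV limit)"
fi
```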

[Diagram: lv1 through lv4 on hdisk1 through hdisk9, plus the new hdisk10 and hdisk11]

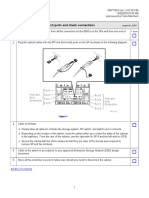

- Create bigger LUNs on the XIV

- Scan for the new devices

xiv_fc_admin -R
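After the scan, the new LUNs should show up as hdisks that belong to no VG. A minimal sketch for picking them out of `lspv` output follows; it assumes the usual lspv column order (name, PVID, VG, state), and the sample text is made up for illustration.

```shell
#!/bin/sh
# unassigned_pvs: read `lspv` output on stdin and print the names of PVs
# whose volume group column is "None".
unassigned_pvs() {
    awk '$3 == "None" { print $1 }'
}

# Hypothetical lspv output; the PVIDs are invented.
sample_lspv='hdisk1          00f62b1d0a1b2c01                    vg1             active
hdisk9          00f62b1d0a1b2c09                    vg1             active
hdisk10         none                                None
hdisk11         none                                None'

printf '%s\n' "$sample_lspv" | unassigned_pvs
```

On a live system the input would come from `lspv | unassigned_pvs`.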


Add hdisks to vg1

extendvg vg1 hdisk10 hdisk11


Start the lv mirroring

mklvcopy lv1 2 hdisk10 hdisk11

mklvcopy lv2 2 hdisk10 hdisk11

mklvcopy lv3 2 hdisk10 hdisk11

mklvcopy lv4 2 hdisk10 hdisk11
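With more than a handful of LVs, the per-LV mklvcopy lines can be generated rather than typed. This is a dry-run sketch; `mklvcopy_plan` is a hypothetical helper name and it only prints the commands.

```shell
#!/bin/sh
# mklvcopy_plan "<lv list>" "<new hdisks>"
# Print one mklvcopy command per LV, placing the second copy on the new hdisks.
mklvcopy_plan() {
    lvs=$1
    targets=$2
    for lv in $lvs; do
        echo "mklvcopy $lv 2 $targets"
    done
}

mklvcopy_plan "lv1 lv2 lv3 lv4" "hdisk10 hdisk11"
```

Once the printed list looks right, it can be piped to `sh` to run it.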

Check lv for pv contents

lslv -l lv1

lvm2lv:/lvm2
PV        COPIES        IN BAND   DISTRIBUTION
hdisk1    004:000:000   100%      000:004:000:000:000
hdisk10   000:000:000   100%      000:000:000:000:000
hdisk2    004:000:000   100%      000:004:000:000:000
hdisk11   000:000:000   100%      000:000:000:000:000
hdisk3    004:000:000   100%      000:004:000:000:000
hdisk4    004:000:000   100%      000:004:000:000:000
hdisk5    004:000:000   100%      000:004:000:000:000
hdisk6    004:000:000   100%      000:004:000:000:000
hdisk7    004:000:000   100%      000:004:000:000:000
hdisk8    004:000:000   100%      000:004:000:000:000
hdisk9    004:000:000   100%      000:004:000:000:000

Note the 2 new LUNs have 0 PPs distributed


All LV mirrors will be stale

lsvg -l vg1

vg1:
LV NAME   TYPE   LPs   PPs   PVs   LV STATE     MOUNT POINT
lv1       jfs2   40    80    12    open/stale   /lvm1
lv2       jfs2   40    80    12    open/stale   /lvm2
lv3       jfs2   40    80    12    open/stale   /lvm3
lv4       jfs2   40    80    12    open/stale   /lvm4

Synchronize the two copies

syncvg -v vg1

Will take time to complete
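Since the sync takes a while, it helps to have a quick check for which LVs are still stale. The sketch below filters `lsvg -l` output on the LV STATE column; `stale_lvs` is a hypothetical helper name and the sample lines are taken from the listing above.

```shell
#!/bin/sh
# stale_lvs: read `lsvg -l <vg>` output on stdin and print the names of LVs
# whose LV STATE column (6th field) contains "stale".
stale_lvs() {
    awk '$6 ~ /stale/ { print $1 }'
}

sample_lsvg='vg1:
LV NAME TYPE LPs PPs PVs LV STATE MOUNT POINT
lv1 jfs2 40 80 12 open/stale /lvm1
lv2 jfs2 40 80 12 open/stale /lvm2'

printf '%s\n' "$sample_lsvg" | stale_lvs

# On a live system, one way to wait for the sync to finish (not run here):
#   while lsvg -l vg1 | stale_lvs | grep -q .; do sleep 60; done
```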


Now LVs are synced

lsvg -l vg1

vg1:
LV NAME   TYPE   LPs   PPs   PVs   LV STATE     MOUNT POINT
lv1       jfs2   40    80    12    open/syncd   /lvm1
lv2       jfs2   40    80    12    open/syncd   /lvm2
lv3       jfs2   40    80    12    open/syncd   /lvm3
lv4       jfs2   40    80    12    open/syncd   /lvm4


Check lv for pv contents and distribution

lslv -l lv1

lvm2lv:/lvm2
PV        COPIES        IN BAND   DISTRIBUTION
hdisk1    004:000:000   100%      000:004:000:000:000
hdisk10   020:000:000   100%      000:020:000:000:000
hdisk2    004:000:000   100%      000:004:000:000:000
hdisk11   020:000:000   100%      000:020:000:000:000
hdisk3    004:000:000   100%      000:004:000:000:000
hdisk4    004:000:000   100%      000:004:000:000:000
hdisk5    004:000:000   100%      000:004:000:000:000
hdisk6    004:000:000   100%      000:004:000:000:000
hdisk7    004:000:000   100%      000:004:000:000:000
hdisk8    004:000:000   100%      000:004:000:000:000
hdisk9    004:000:000   100%      000:004:000:000:000

Note the 2 new LUNs have more PPs than the original LUNs
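The same comparison can be pulled out of the listing with a small filter. This sketch prints each PV with the first field of its COPIES column, which is the number the slide compares between old and new LUNs. `pps_per_pv` is a hypothetical helper name.

```shell
#!/bin/sh
# pps_per_pv: read `lslv -l <lv>` output on stdin and print "<pv> <count>",
# where count is the first field of the COPIES column with leading zeros dropped.
pps_per_pv() {
    awk '$1 ~ /^hdisk/ { split($2, c, ":"); print $1, c[1] + 0 }'
}

sample_lslv_l='lvm2lv:/lvm2
PV COPIES IN BAND DISTRIBUTION
hdisk1 004:000:000 100% 000:004:000:000:000
hdisk10 020:000:000 100% 000:020:000:000:000'

printf '%s\n' "$sample_lslv_l" | pps_per_pv
```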


Remove the old pvs from each lv

rmlvcopy lv1 1 hdisk1 hdisk2 hdisk3 hdisk4 hdisk5 hdisk6 hdisk7 hdisk8 hdisk9

lslv -l lv1

lv1:
PV        COPIES        IN BAND   DISTRIBUTION
hdisk10   020:000:000   100%      000:020:000:000:000
hdisk11   020:000:000   100%      000:020:000:000:000

Migration Done!
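Because rmlvcopy drops the original copy, it is worth refusing to generate the command for any LV that is not yet syncd. The sketch below is one way to do that from `lsvg -l` output; `rmlvcopy_plan` is a hypothetical helper name and it only prints commands.

```shell
#!/bin/sh
# rmlvcopy_plan "<old hdisks>": read `lsvg -l <vg>` output on stdin.
# For each LV line, print an rmlvcopy command if the state ends in "/syncd",
# otherwise print a comment saying the LV was skipped.
rmlvcopy_plan() {
    awk -v old="$1" '
        $6 ~ /\/syncd$/ { print "rmlvcopy " $1 " 1 " old; next }
        $6 ~ /\//       { print "# skip " $1 ": state is " $6 }
    '
}

# Sample input; the stale lv2 line is invented to show the skip path.
sample_states='vg1:
LV NAME TYPE LPs PPs PVs LV STATE MOUNT POINT
lv1 jfs2 40 80 12 open/syncd /lvm1
lv2 jfs2 40 80 12 open/stale /lvm2'

printf '%s\n' "$sample_states" | rmlvcopy_plan "hdisk1 hdisk2"
```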


Flash Storage Read Preferred

To enable Flash storage as a read-preferred copy of the logical volume device pair:

Add in the mirror as discussed on slide 6

mklvcopy <lv> 2 hdisk10 hdisk11

Synchronize the mirrored logical volumes

syncvg -v <vg>

Remove the primary devices from the <lv>

rmlvcopy <lv> 1 hdisk1 hdisk2 hdisk3 hdisk4 hdisk5 hdisk6 hdisk7 hdisk8 hdisk9

Add back the original primary devices and sync. They will now be added in as secondary

mklvcopy <lv> 2 hdisk1 hdisk2 hdisk3 hdisk4 hdisk5 hdisk6 hdisk7 hdisk8 hdisk9

Synchronize the mirrored logical volumes

syncvg -v <vg>
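The five steps above can be laid out as a single dry-run plan per LV. `flash_primary_plan` is a hypothetical helper name; it prints the slide's commands in order and runs nothing.

```shell
#!/bin/sh
# flash_primary_plan <vg> <lv> "<flash hdisks>" "<original hdisks>"
# Print the sequence that leaves the flash hdisks as the first (primary) copy.
flash_primary_plan() {
    vg=$1; lv=$2; flash=$3; orig=$4
    echo "mklvcopy $lv 2 $flash"   # add the flash copy as a mirror
    echo "syncvg -v $vg"           # copy the data onto flash
    echo "rmlvcopy $lv 1 $orig"    # drop the original copy; flash is now the only copy
    echo "mklvcopy $lv 2 $orig"    # re-add the original disks, now as the second copy
    echo "syncvg -v $vg"           # resync the re-added copy
}

flash_primary_plan vg1 lv1 "hdisk10 hdisk11" "hdisk1 hdisk2 hdisk3"
```

Between the third and fifth command the LV has a single good copy, on flash, so the second sync should be allowed to finish before anything else is changed.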

Adjust the write schedule policy

Check which logical volumes are primary, and which are secondary

# lslv -m <lv>

lvm1lv:<lv>
LP     PP1    PV1       PP2    PV2       PP3    PV3
0001   0078   hdisk10   0014   hdisk1
0002   0079   hdisk11   0014   hdisk2
0003   0079   hdisk10   0014   hdisk3
0004   0080   hdisk11   0014   hdisk4
0005   0080   hdisk10   0014   hdisk5
0006   0081   hdisk11   0014   hdisk6
0007   0081   hdisk10   0014   hdisk7
0007   0081   hdisk11   0014   hdisk8
0007   0081   hdisk10   0014   hdisk9

Now the devices in the PV1 column are the primary devices. All reads will be served by the PV1 devices. During boot, the PV1 devices are the primary copy of the mirror and will be used as the sync point.
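Scanning the PV1 column by eye gets tedious on large LVs. The sketch below lists any LP whose PV1 is not one of the flash hdisks; an empty result means every primary copy is on flash. `non_flash_primaries` is a hypothetical helper name, and the second sample row is invented to show a failing case.

```shell
#!/bin/sh
# non_flash_primaries "<flash hdisks>": read `lslv -m <lv>` output on stdin and
# print "<LP> <PV1>" for every LP whose PV1 is not in the flash list.
non_flash_primaries() {
    awk -v flash="$1" '
        BEGIN { n = split(flash, f, " "); for (i = 1; i <= n; i++) ok[f[i]] = 1 }
        $1 ~ /^[0-9]+$/ && !($3 in ok) { print $1, $3 }
    '
}

sample_lslv_m='lvm1lv:/lvm1
LP PP1 PV1 PP2 PV2 PP3 PV3
0001 0078 hdisk10 0014 hdisk1
0002 0014 hdisk2 0079 hdisk11'

printf '%s\n' "$sample_lslv_m" | non_flash_primaries "hdisk10 hdisk11"
```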

Flash Storage Read Preferred

There are 5 write policies for LVM mirroring.

The default is parallel:

- Write operations are done in parallel to all copies of the mirror.

- Read operations are done to the least busy device.

We want parallel write with sequential read:

- Write operations are done in parallel to all copies of the mirror.

- Read operations are ALWAYS performed on the primary copy of the devices in the mirror set.

- During boot, the PV1 devices are the primary copy of the mirror and will be used as the sync source.

Changing the policy requires a short period of downtime for the file system.

Adjust the write schedule policy

Check the write schedule policy of the logical volume

lslv <lv>

LOGICAL VOLUME:     lvm2lv                 VOLUME GROUP:   lvm_test
LV IDENTIFIER:      00f62b1d00004c000000013fd4f00d26.2 PERMISSION:     read/write
VG STATE:           active/complete        LV STATE:       closed/syncd
TYPE:               jfs2                   WRITE VERIFY:   off
MAX LPs:            512                    PP SIZE:        256 megabyte(s)
COPIES:             2                      SCHED POLICY:   parallel
LPs:                40                     PPs:            80
STALE PPs:          0                      BB POLICY:      relocatable
INTER-POLICY:       maximum                RELOCATABLE:    yes
INTRA-POLICY:       middle                 UPPER BOUND:    1024
MOUNT POINT:        /lvm2                  LABEL:          /lvm2
DEVICE UID:         0                      DEVICE GID:     0
DEVICE PERMISSIONS: 432
MIRROR WRITE CONSISTENCY: on/ACTIVE
EACH LP COPY ON A SEPARATE PV ?: yes
Serialize IO ?:     NO
INFINITE RETRY:     no
DEVICESUBTYPE:      DS_LVZ
COPY 1 MIRROR POOL: None
COPY 2 MIRROR POOL: None
COPY 3 MIRROR POOL: None
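Only one field of that listing matters for this step. A small filter like the following pulls out the SCHED POLICY value so it can be checked in a script; `sched_policy` is a hypothetical helper name.

```shell
#!/bin/sh
# sched_policy: read `lslv <lv>` output on stdin and print the SCHED POLICY value.
sched_policy() {
    sed -n 's/.*SCHED POLICY:[[:space:]]*\([^[:space:]]*\).*/\1/p'
}

printf 'COPIES:             2                      SCHED POLICY:   parallel\n' | sched_policy
```

On a live system the input would come from `lslv <lv> | sched_policy`.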

Adjust the write schedule policy

Change the write schedule policy. This must be done with the file system for each of the logical volumes unmounted. Here is where the downtime will occur.

chlv -d ps <lv>
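Since the file system has to be unmounted around the change, the step is really three commands per LV. The dry-run sketch below prints them in order; `sched_policy_plan` is a hypothetical helper name, and the umount and mount lines are an assumption about how the unmounted window is handled, since the slide only shows the chlv command.

```shell
#!/bin/sh
# sched_policy_plan <lv> <mount point>
# Print the unmount, policy change, and remount commands for one LV.
sched_policy_plan() {
    lv=$1; mp=$2
    echo "umount $mp"        # assumption: downtime for this file system starts here
    echo "chlv -d ps $lv"    # parallel write with sequential read
    echo "mount $mp"         # assumption: downtime ends here
}

sched_policy_plan lvm1lv /lvm1
```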

Re-Check the write schedule policy

LOGICAL VOLUME:     lvm1lv                 VOLUME GROUP:   lvm_test
LV IDENTIFIER:      00f62b1d00004c000000013fd4f00d26.1 PERMISSION:     read/write
VG STATE:           active/complete        LV STATE:       opened/stale
TYPE:               jfs2                   WRITE VERIFY:   off
MAX LPs:            512                    PP SIZE:        256 megabyte(s)
COPIES:             2                      SCHED POLICY:   parallel/sequential
LPs:                40                     PPs:            80
STALE PPs:          40                     BB POLICY:      relocatable
INTER-POLICY:       maximum                RELOCATABLE:    yes
INTRA-POLICY:       middle                 UPPER BOUND:    1024
MOUNT POINT:        /lvm1                  LABEL:          /lvm1
DEVICE UID:         0                      DEVICE GID:     0
DEVICE PERMISSIONS: 432
MIRROR WRITE CONSISTENCY: on/ACTIVE
EACH LP COPY ON A SEPARATE PV ?: yes
Serialize IO ?:     NO
INFINITE RETRY:     no
DEVICESUBTYPE:      DS_LVZ
COPY 1 MIRROR POOL: None
COPY 2 MIRROR POOL: None
COPY 3 MIRROR POOL: None
