Sentencing Software

Uploaded by

John Philip Monera

This document discusses the use of sentencing software called COMPAS in courts to assess the recidivism risk of criminal defendants. It notes that an investigation by ProPublica found COMPAS to be biased against black defendants by incorrectly classifying them as having a higher risk of reoffending compared to white defendants. While COMPAS claims its algorithms are not biased, several studies have found COMPAS to be no more accurate than human judges in predicting recidivism and that it has low success rates, especially for predicting violent crime recidivism. There are concerns about ethical issues arising from the use of algorithms to help determine criminal sentences.

Copyright:

© All Rights Reserved

Original Description:

Science, Technology, and Society

SENTENCING SOFTWARE

JOHN KAYLEE JIMENEZ

JOHN PHILIP MONERA

LEONIL OCANA

SENTENCING SOFTWARE

SOFTWARE USED BY JUDGES IN SENTENCING HEARINGS TO ASSESS A DEFENDANT'S RISK OF RECIDIVISM.

COMPAS - CORRECTIONAL OFFENDER MANAGEMENT PROFILING FOR ALTERNATIVE SANCTIONS

SOME ETHICAL DILEMMAS

• In May 2016, the investigative news organization ProPublica claimed that COMPAS is biased against black defendants. Northpointe, the Michigan-based company that created the tool, released its own report questioning ProPublica’s analysis. ProPublica rebutted the rebuttal, academic researchers entered the fray, The Washington Post’s Wonkblog weighed in, and even the Wisconsin Supreme Court cited the controversy in its 2016 ruling that upheld the use of COMPAS in sentencing.

• ProPublica points out that among defendants who ultimately did not reoffend, blacks were nearly twice as likely as whites to be classified as medium or high risk (42 percent vs. 22 percent). Even though these defendants did not go on to commit a crime, they are nonetheless subjected to harsher treatment by the courts. ProPublica argues that a fair algorithm cannot make these serious errors more frequently for one race group than for another.
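The 42 percent vs. 22 percent comparison above is a comparison of false positive rates: the share of people who did not reoffend but were still labeled medium or high risk. A minimal sketch of that arithmetic follows. The counts are hypothetical, chosen only to reproduce the quoted percentages; they are not ProPublica's raw data, and the function name is an assumption made for illustration.

```python
def false_positive_rate(flagged: int, total: int) -> float:
    """Share of non-reoffenders who were labeled medium or high risk."""
    if total <= 0:
        raise ValueError("total must be positive")
    return flagged / total


# Hypothetical counts of defendants who did NOT reoffend.
# Chosen to match the quoted 42% and 22%; not ProPublica's raw data.
non_reoffenders = {
    "black": {"flagged": 420, "total": 1000},
    "white": {"flagged": 220, "total": 1000},
}

rates = {
    group: false_positive_rate(counts["flagged"], counts["total"])
    for group, counts in non_reoffenders.items()
}
ratio = rates["black"] / rates["white"]

for group, rate in rates.items():
    print(f"{group}: {rate:.0%} of non-reoffenders labeled medium or high risk")
print(f"ratio of the two rates: {ratio:.2f}")
```

With these illustrative counts the ratio of the two rates comes out at about 1.91.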

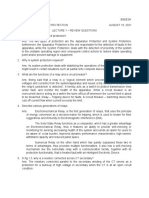

DISTRIBUTION OF DEFENDANTS ACROSS RISK CATEGORIES BY RACE. BLACK DEFENDANTS REOFFENDED AT A HIGHER RATE THAN WHITES, AND ACCORDINGLY, A HIGHER PROPORTION OF BLACK DEFENDANTS ARE DEEMED MEDIUM OR HIGH RISK. AS A RESULT, BLACKS WHO DO NOT REOFFEND ARE ALSO MORE LIKELY TO BE CLASSIFIED HIGHER RISK THAN WHITES WHO DO NOT REOFFEND.
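The reasoning in the caption can be checked with a small worked example. The sketch below assumes a hypothetical two-bucket score in which a given risk label means the same reoffense probability for both groups, and the groups differ only in how many people fall in each bucket. All numbers and names are invented for illustration; this is not the COMPAS model or its data.

```python
# Hypothetical score: the same reoffense probability per bucket for both groups.
REOFFEND_PROB = {"low": 0.2, "high": 0.6}


def summarize(high_share):
    """Return (reoffense base rate, false positive rate) for one group.

    high_share is the fraction of the group placed in the "high" bucket.
    """
    shares = {"low": 1.0 - high_share, "high": high_share}
    base_rate = sum(shares[b] * REOFFEND_PROB[b] for b in shares)
    # Non-reoffenders who were nonetheless placed in the "high" bucket.
    non_reoffend_high = shares["high"] * (1.0 - REOFFEND_PROB["high"])
    # All non-reoffenders, regardless of bucket.
    non_reoffend_all = sum(shares[b] * (1.0 - REOFFEND_PROB[b]) for b in shares)
    return base_rate, non_reoffend_high / non_reoffend_all


group_a = summarize(high_share=0.6)  # group with the higher base rate
group_b = summarize(high_share=0.3)  # group with the lower base rate

for name, (base_rate, fpr) in (("A", group_a), ("B", group_b)):
    print(f"group {name}: base rate {base_rate:.2f}, false positive rate {fpr:.2f}")
```

Under these assumptions the group with the higher reoffense rate also ends up with the higher false positive rate, even though the score treats both groups identically within each bucket.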

• Using AI in investigations and sentencing could potentially help save time and money.

• COMPAS’s algorithms overall have been found to be no more effective than human judgment. A study conducted by a researcher at Dartmouth College determined that humans were able to predict whether a defendant would reoffend just as accurately as COMPAS. Another study by Rutgers University reaffirmed the notably low success rates of COMPAS, especially when predicting the likelihood that someone who committed a violent crime will reoffend.

• COMPAS involves inputting the answers to over 100 questions about a person’s history from a variety of subjects, including offenses, family, and even social life.

• It is meant as a means to guide courts in their sentencing.

• HTTPS://GCN.COM/ARTICLES/2018/01/18/RECIDIVISM-PREDICTION-SOFTWARE-FLAWS.ASPX

• http://si410wiki.sites.uofmhosting.net/index.php/Criminal_sentencing_software

• https://www.washingtonpost.com/news/monkey-cage/wp/2016/10/17/can-an-algorithm-be-racist-our-analysis-is-more-cautious-than-propublicas/?noredirect=on
